LessWrong Has Agree/Disagree Voting On All New Comment Threads

Starting today we’re activating two-factor voting on all new comment threads.

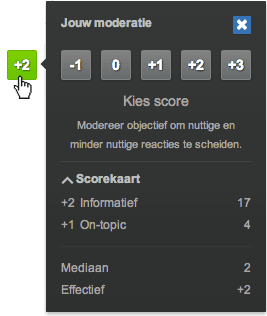

Now there are two axes on which you can vote on comments: the standard karma axis remains on the left, and the new axis on the right lets you show how much you agree or disagree with the content of a comment.

How the system works

For the pre-existing voting system, the most common interpretation of up/down-voting is “Do I want to see more or less of this content on the site?” As an item gains or loses votes, its visibility changes, and eventually the author’s karma (and with it the weight of their votes) changes too.

Agree/disagree voting is simply layered on top of this system. Here’s how the two fit together.

Agree/disagree voting does not translate into a user’s or post’s karma — its sole function is to communicate agreement/disagreement. It has no other direct effects on the site or content visibility (i.e. no effect on sorting algorithms).
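To make that separation concrete, here is a minimal Python sketch under a simplified, hypothetical model: each comment keeps two independent tallies, display order uses the karma tally alone, and an author’s karma sums only the karma axis. The `Comment` class, `sort_for_display`, and `author_karma` are illustrative names, not LessWrong’s actual code, and the site’s real sorting may involve more than raw karma.

```python
from dataclasses import dataclass


@dataclass
class Comment:
    """Hypothetical comment record with two independent vote tallies."""
    author: str
    text: str
    karma: int = 0      # "Do I want to see more or less of this?" axis
    agreement: int = 0  # "Do I agree or disagree?" axis; never feeds karma

    def karma_vote(self, weight: int) -> None:
        # Regular vote: positive weight for an upvote, negative for a downvote.
        self.karma += weight

    def agreement_vote(self, weight: int) -> None:
        # Agree/disagree vote: only the agreement tally changes.
        self.agreement += weight


def sort_for_display(comments: list[Comment]) -> list[Comment]:
    # Visibility depends on karma alone; agreement plays no part in ordering.
    return sorted(comments, key=lambda c: c.karma, reverse=True)


def author_karma(comments: list[Comment], author: str) -> int:
    # An author's karma sums only the karma axis of their comments.
    return sum(c.karma for c in comments if c.author == author)


if __name__ == "__main__":
    alice = Comment("alice", "A careful argument most readers reject.")
    bob = Comment("bob", "A popular one-liner.")
    alice.karma_vote(+5)
    alice.agreement_vote(-9)  # upvoted, but "disagreed to hell"
    bob.karma_vote(+1)
    bob.agreement_vote(+7)
    print([c.author for c in sort_for_display([bob, alice])])  # ['alice', 'bob']
```

In this toy run, alice’s comment is heavily disagreed with yet still sorts first, because only the karma tally decides ordering and only karma counts toward her total.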

For both regular voting and the new agree/disagree voting, you can cast either a normal-strength vote or a strong vote. Click once for a normal-strength vote; for a strong vote, click-and-hold on desktop or double-tap on mobile. The weight of your strong vote grows roughly with the logarithm of your karma (exact numbers here).
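The exact weights live behind the link rather than in this post, so the following Python sketch only illustrates what “proportional to karma on a log-scale” means. Every parameter here (a normal vote of weight 1, a strong-vote base of 2, one extra point each time karma doubles, a cap of 16) is a made-up assumption, not LessWrong’s real table.

```python
import math

# Hypothetical parameters: NOT LessWrong's real numbers, which the post links separately.
NORMAL_VOTE_WEIGHT = 1  # assumed weight of a single click
STRONG_VOTE_BASE = 2    # assumed minimum strong-vote weight
STEP_PER_DOUBLING = 1   # assumed extra weight gained each time karma doubles
STRONG_VOTE_CAP = 16    # assumed upper bound on strong-vote weight


def strong_vote_weight(karma: int) -> int:
    """Strong-vote weight growing linearly in log2(karma), illustrative only."""
    if karma < 1:
        return STRONG_VOTE_BASE
    weight = STRONG_VOTE_BASE + STEP_PER_DOUBLING * math.floor(math.log2(karma))
    return min(weight, STRONG_VOTE_CAP)


def vote_weight(karma: int, strong: bool, up: bool = True) -> int:
    """Signed weight of one vote; the same rule is applied on either axis."""
    magnitude = strong_vote_weight(karma) if strong else NORMAL_VOTE_WEIGHT
    return magnitude if up else -magnitude


if __name__ == "__main__":
    for karma in (0, 100, 1024, 1_000_000):
        print(karma, strong_vote_weight(karma))
```

Under these assumed parameters, a user with 1,024 karma strong-votes with weight 12 while a brand-new user strong-votes with weight 2. The log scale lets established users count for more without their influence growing linearly with karma.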

Ben’s personal reasons for being excited about this split

Here are a few reasons that feel alive to me.

- I personally feel much more comfortable upvoting good comments that I disagree with, or whose truth I am highly uncertain about, because I don’t worry that my vote will be read as setting the social reality of what is true.

- I also feel very comfortable strong-agreeing with things without up- or downvoting them, which lets me indicate which side of an argument seems true to me without my vote being read as “this person gets to keep accruing more and more social status for just repeating a common position at length”.

- Similarly to the first bullet, I think many writers have interesting and valuable ideas whose truth I am quite unsure about, or even disagree with. This split allows voters to repeatedly signal that a given writer’s comments are of high value without building a false consensus that LessWrong is confident the ideas are true. (For example, many people have incompatible but valuable ideas about how AGI development will go, and I want authors to get lots of karma and visibility for excellent contributions without that ambiguity.)

- There are many comments I think are bad but am averse to downvoting, because it is ambiguous whether the person is being downvoted because everyone thinks their take is unfashionable or because they are wasting the commons with their behavior (e.g. belittling, starting bravery debates, not doing basic reading comprehension). With this split I feel more comfortable downvoting bad comments without making everyone else who holds that position worry that they’ll be downvoted too.

- I have seen some comments that previously would have been “downvoted to hell” now sitting at positive karma and instead being “disagreed to hell”. I won’t point them out, to avoid focusing on individuals, but this seems like an obvious improvement in our ability to communicate.

I could go on but I’ll stop here.

Please give us feedback

This is one of the main voting experiments we’ve tried on the site (here’s the other one). We may try more changes and improvements in the future. Please let us know about your experience with this new voting axis, especially in the next 1-2 weeks.

If you find it concerning/invigorating/confusing/clarifying/other, we’d like to know about it. Comment on this post with feedback and I’ll give you an upvote (and maybe others will give you an agree-vote!), or let us know via the Intercom button in the bottom right of the screen.

We’ve rolled it out on many (15+) threads now (example), and my impression is that it’s worked as hoped and allowed for better communication about the truth and appreciation for high-quality comments that many users disagree with.

