Voting Results for the 2022 Review

The 5th Annual LessWrong Review has come to a close!

Review Facts

There were 5330 posts published in 2022.

Here’s how many posts passed through the different review phases.

| Phase | No. of posts | Eligibility |
| --- | --- | --- |
| Nominations Phase | 579 | Any 2022 post could be given preliminary votes |
| Review Phase | 363 | Posts with 2+ votes could be reviewed |
| Voting Phase | 168 | Posts with 1+ reviews could be voted on |

Here’s how many votes and voters there were by karma bracket.

| Karma Bucket | No. of Voters | No. of Votes Cast |
| --- | --- | --- |
| Any | 333 | 5007 |
| 1+ | 307 | 4944 |
| 10+ | 298 | 4902 |
| 100+ | 245 | 4538 |
| 1,000+ | 121 | 2801 |
| 10,000+ | 24 | 816 |

To give some context on this annual tradition, here are the absolute numbers compared to last year and to the first year of the LessWrong Review.

| | 2018 | 2021 | 2022 |
| --- | --- | --- | --- |
| Voters | 59 | 238 | 333 |
| Nominations | 75 | 452 | 579 |
| Reviews | 120 | 209 | 227 |
| Votes | 1272 | 2870 | 5007 |
| Total LW Posts | 1703 | 4506 | 5330 |

Review Prizes

There were lots of great reviews this year! Here’s a link to all of them.

Of the 227 reviews, 31 are receiving prizes.

This follows up on Habryka, who gave out about half of these prizes 2 months ago.

Note that two users were paid to produce reviews and so will not be receiving the prize money. They’re still here because I wanted to indicate that they wrote some really great reviews.

Excellent ($200) (7 reviews)

Buck for his self-review of Causal Scrubbing: a method for rigorously testing interpretability hypotheses

DirectedEvolution for their paid review of How satisfied should you expect to be with your partner?

LawrenceC for their paid review of Some Lessons Learned from Studying Indirect Object Identification in GPT-2 small

LawrenceC for their paid review of How “Discovering Latent Knowledge in Language Models Without Supervision” Fits Into a Broader Alignment Scheme

LoganStrohl for their self-review of the Intro to Naturalism sequence

porby for their self-review of Why I think strong general AI is coming soon

Great ($100) (6 reviews)

DirectedEvolution for their paid review of Slack matters more than any outcome

janus for their self-review of Simulators

Lee Sharkey for their self-review of Taking features out of superposition with sparse autoencoders

Neel Nanda for their review of “Some Lessons Learned from Studying Indirect Object Identification in GPT-2 small”

nostalgebraist for their review of Simulators

Writer for their review of “Inner and outer alignment decompose one hard problem into two extremely hard problems”

Good ($50) (18 reviews)

Alex_Altair for their review of the Intro to Naturalism sequence

Buck for their review of K-complexity is silly; use cross-entropy instead

Davidmanheim for their review of It’s Probably not Lithium

[DEACTIVATED] Duncan Sabien for their review of Here’s the exit.

[DEACTIVATED] Duncan Sabien for their self-review of Benign Boundary Violations

eukaryote for their self-review of Fiber arts, mysterious dodecahedrons, and waiting on “Eureka!”

Jan_Kulveit for their review of Human values & biases are inaccessible to the genome

Jan_Kulveit for their review of The shard theory of human values

johnswentworth for their review of Revisiting algorithmic progress

L Rudolf L for their self-review of Review: Amusing Ourselves to Death

Nathan Young for their review of Introducing Pastcasting: A tool for forecasting practice

Neel Nanda for their self-review of A Longlist of Theories of Impact for Interpretability

Screwtape for their review of How To: A Workshop (or anything)

Vanessa Kosoy for their post-length review of Where I agree and disagree with Eliezer

Vika for their self-review of DeepMind alignment team opinions on AGI ruin arguments

Vika for their self-review of Refining the Sharp Left Turn threat model, part 1: claims and mechanisms

We’ll reach out to prizewinners in the coming weeks to give them their prizes.

We have been working on a new way of celebrating the best posts of the year

The top 50 posts of each year are being celebrated in a new way! Read this companion post to find out all the details, but for now here’s a preview of the sorts of changes we’ve made for the top-voted posts of the annual review.

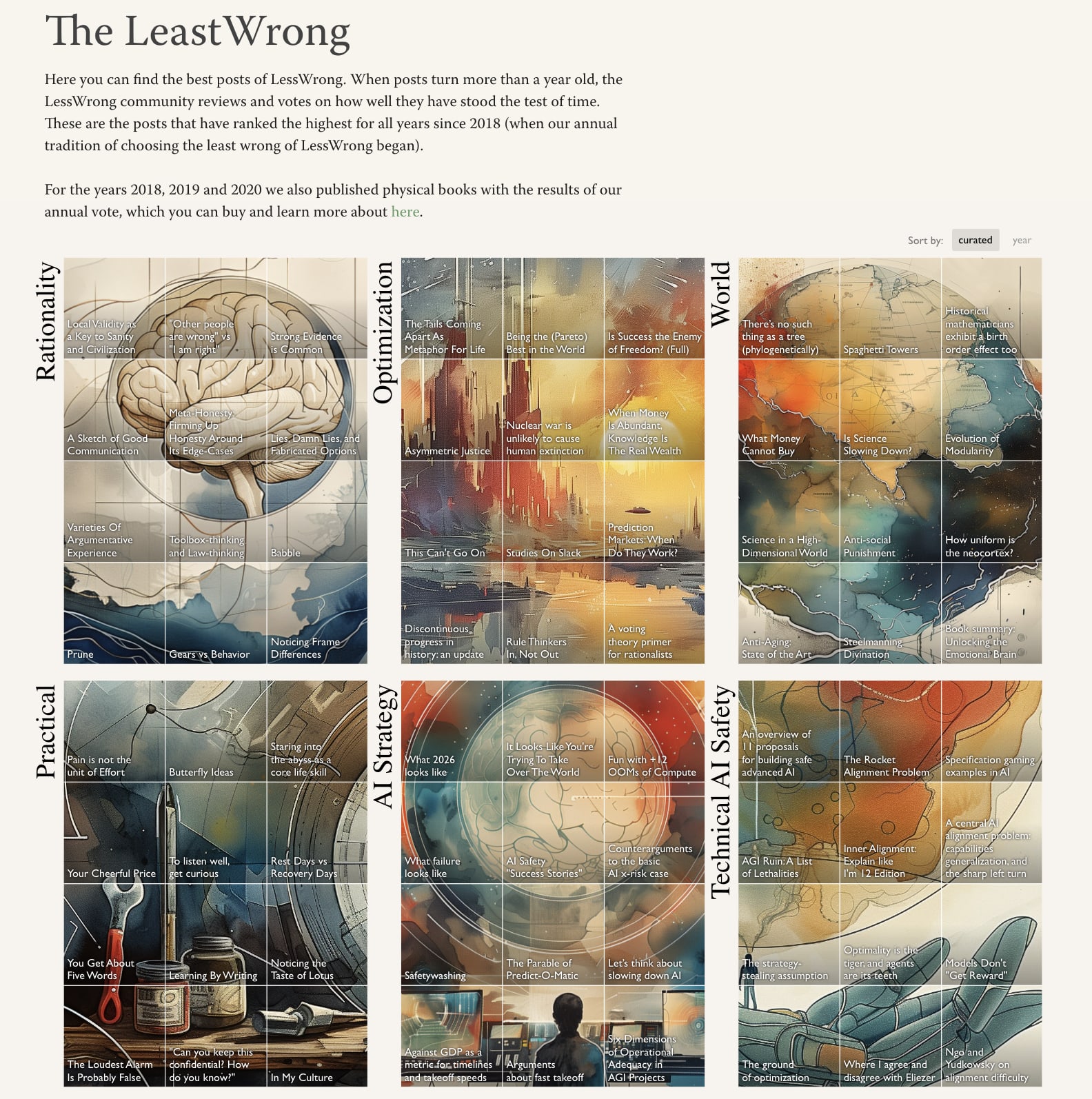

And there’s a new LeastWrong page with the top 50 posts from all 5 annual reviews so far, sorted into categories.

You can learn more about what we’ve built in the companion post.

Okay, now onto the voting results!

Voting Results

Voting is visualized here with dots of varying sizes, roughly indicating that a user thought a post was “good” (+1), “important” (+4), or “extremely important” (+9).

Green dots indicate positive votes. Red dots indicate negative votes.

If a user spent more than their budget of 500 points, all of their votes were scaled down slightly, so some of the circles are slightly smaller than others.
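The budget rule above can be sketched in a few lines. This is only an illustration, not the site's actual implementation: it assumes each vote costs its absolute point value (1, 4, or 9) and that an over-budget ballot is scaled proportionally so its total lands exactly on the budget. The names `vote_cost` and `scale_to_budget` are hypothetical.

```python
from typing import Dict

BUDGET = 500  # point budget per voter, as stated in the post


def vote_cost(points: float) -> float:
    """Assumed cost model: a vote costs its absolute point value."""
    return abs(points)


def scale_to_budget(votes: Dict[str, float], budget: float = BUDGET) -> Dict[str, float]:
    """Return a copy of the ballot, scaled down if it exceeds the budget.

    Assumption: scaling is proportional, so every vote shrinks by the same
    factor and keeps its sign.
    """
    total = sum(vote_cost(v) for v in votes.values())
    if total <= budget:
        return dict(votes)  # within budget: votes are left unchanged
    factor = budget / total
    return {post: v * factor for post, v in votes.items()}
```

Under these assumptions, a voter who gave +9 to 60 posts spent 540 points, so each vote would shrink by a factor of 500/540, which is the kind of slightly smaller circle described above.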

These are the 161 posts that got a net positive score, out of 168 posts that were eligible for the vote.

Just noticing that every post has at least one negative vote, which feels interesting for some reason.

Technically the optimal way to spend your points to influence the vote outcome is to center them (i.e. have the mean be zero). In practice this means giving a −1 to lots of posts. It doesn’t provide much of an advantage, but I vaguely remember some people saying they did it, which IMO would explain there being some very small number of negative votes on everything.
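The centering argument in the comment above can be checked numerically. The sketch below rests on two assumptions that the post does not spell out: that a ballot's cost is the sum of squared vote strengths, and that only the differences between a voter's votes affect the final ranking. The helper names are hypothetical.

```python
from statistics import mean
from typing import List


def quadratic_cost(strengths: List[float]) -> float:
    """Assumed cost model: sum of squared vote strengths."""
    return sum(s * s for s in strengths)


def center(strengths: List[float]) -> List[float]:
    """Shift every vote by the ballot's mean so the new mean is zero.

    Pairwise differences between votes are unchanged by the shift.
    """
    m = mean(strengths)
    return [s - m for s in strengths]
```

For a ballot of strengths `[3, 2, 1, 0, 0]`, the assumed cost is 14, while the centered ballot costs roughly 6.8 and preserves every pairwise difference. Real votes are restricted to whole steps, so exact centering is not available, which is why the practical version is giving a −1 to many posts.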

The new designs are cool, I’d just be worried about venturing too far into insight porn. You don’t want people reading the posts just because they like how they look (although reading them superficially is probably better than not reading them at all). Clicking on the posts and seeing a giant image that bleeds color into the otherwise sober text format is distracting.

I guess if I don’t like it there’s always GreaterWrong.