If your model of how we get safety is wrong, the effect of your third point may be largely to justify pushing capabilities in certain areas.

Oh, hmm, good point, thanks. Let me try again:

When I think of humans who get difficult things done, or figure difficult things out, they tend to care about accomplishing those things, a lot, and in a direct and explicit way, not just e.g. as a facet of what kind of person they see themselves as. I mean, maybe “what kind of person I see myself as” has something to do with how they originally came to care about those things, but it’s not what they’re explicitly thinking about. They’re thinking directly about the object-level prize at the end of the journey, and how to get that prize.

E.g. plenty of climate change activists think of climate change activism as a good and virtuous thing to do, but I think the subset of climate change activists who are really moving the needle are the ones who are directly thinking about climate change being directly bad, and really want it to stop, and are focused directly on how to make that happen.

E.g. plenty of mathematicians think of math as a good and praiseworthy activity, but I think that the person who will solve the Riemann hypothesis will be a person who is (in addition to being smart etc.) really damn curious about why the Riemann hypothesis is true, and focused directly on figuring that out. Or they’re really damn eager to become famous by solving the Riemann hypothesis, or whatever else.

It seems to me that this is a general pattern—i.e., we need direct-consequentialism not just consequentialism-incidentally-arising-from-virtue to accomplish difficult novel tasks—and my hunch is that this pattern generalizes to brain-like AGI. If so, then we will face the problem of balancing consequentialist direct top-level goals with non-consequentialist direct top-level goals, rather than merely facing the (probably easier) problem of avoiding the former altogether.

(This is all a lightly-held opinion.)

Here are some of my top candidates for big pushes to do right now on technical AI safety (low effort notes):

Much better model organisms / misalignment analogies:

Doing a wider set of pessimized training runs

Good candidate for lots of AI labor automation? Like maybe good to try to set up pipelines for building these envs.

Demonstrating risks from fitness-seekers/reward-seekers empirically

Even on current models with better tests, see here

Demonstrating various types of memetic spread of misalignment?

Actually do control

Build pipelines for red-teaming monitors and the agent itself. For the agent red-teaming, I’d put particular focus on checking whether it continues malign trajectories.

Scaffold integrated control features and other non-monitoring runtime control measures

Human response and auditing

Improving async and sync monitoring

Agent security features

Surveilling for rogue internal deployments (as in, building after-the-fact detection methods for rogue deployments)

Preparing for handoff and elicitation

Get AIs generically better at conceptual work

Have a plan for the evals we ultimately need to see if handoff/deference would go well and start iterating on earlier versions

These presumably will involve a bunch of manual scoring, so we’ll need to build a process for it.

Analyze AI biases and epistemics and improve across many domains

Build the anti-slop/anti-mundane-misalignment coalition via doing ratings of AIs and applying some pressure to improve on these ratings. This could focus on a variety of related issues.

The hope is basically that there might be widespread interest in removing/redacting mundane misalignment and other non-misalignment behavioral problems that reduce productivity and large parts of this seem differentially good. So, if we could make this a salient metric, AI companies might improve this. A lot of the difficulty would be in measuring the problem reasonably well. There are a bunch of different ways to apply pressure or increase salience if we had decent metrics, especially if these metrics legibly correspond to a common problem that many people are running into.

Try to do various trend extrapolations on things here to argue we aren’t on track?

Neuralese decoding prep: Make natural language autoencoders much better, build methods for extracting internal CoT, build better evaluations of how well natural language autoencoders work.

It’s wacky that we don’t know if current AIs would try to take over the world if:

Takeover was easy

Takeover was an obvious way to accomplish an otherwise extremely difficult task

Takeover didn’t have clearly evil vibes while clearly being takeover

The situation didn’t look like a test

Checking is hard!

I suspect the answer is basically no, at least for AIs from Anthropic and OpenAI, but I’m not that confident. Models sometimes do crazy (misaligned) actions to try to succeed at tasks when they get stuck.

My understanding is that ARC Theory has thought some about this topic and has proposals for avoiding manipulation. I think their only public output on this is in an appendix of the ELK report ‘Avoiding subtle manipulation’. I recommend reading ‘“Narrow” elicitation and why it might be sufficient’ and ‘Indirect normativity: defining a utility function’ first for context.

I think @Lukas Finnveden and maybe @Eric Neyman thought about this more after this point. (My recollection is that at least @Lukas Finnveden updated toward thinking the problem was harder and that the sketch in the ELK appendix wouldn’t be sufficient.)

Fair enough. And I certainly agree that there is a lot of bathwater! The bundle of connotations attached to the word “wanting” is a mess. I just want to flag that it seems to me that much of the normal ontology can be rescued, albeit with a little bit of work. I claim that concepts like corrigibility are still useful and coherent once the rescuing has taken place.

I haven’t written at length about the distinction between terminal and instrumental goals myself (there’s a bit at the start of CAST, but I don’t belabor it), but I think Eliezer did a good job in 2007. In my own words, I would say that it makes some sense to divide the planning system of the mind into a portion that is a model of the world, where it makes sense to talk about truth and so on, and another section of the mind that is about judging the desirability of various potential world states and/or trajectories. That second portion (or an important component of it) is what I would call the “values” of the agent, and when the values are put in contact with concrete outcomes that are judged highly compared to others, I would call them terminal goals. Instrumental goals are then constructed as a second-order operation on top of one or more terminal goals (and the dynamics of the world model), so that we can shortcut planning as a question of how to first get the instrumental goal so that we can later move from that state to the terminal goal.

As a concrete example, I wanted to go home after work last night (which is itself an instrumental goal in the service of many other terminal goals, such as comfort, but which we can treat as terminal). I planned to drive in my car through a small town to get home, and thus steered my car towards the town, because “get to the town” was instrumental to my (more) terminal goal. As I approached, I found out that there had been an accident and that the road was closed. If my world model had included this fact, I would not have identified “get to the town” as an instrumental goal. Once I was aware of it, I changed my plan so that I drove down a country detour that went around the town.

I do not consider learning about the accident to have changed my values or the way that I judged outcomes. Instead, it changed my plan.
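A minimal sketch of that distinction, under loudly stated assumptions: the map, the place names, and the `plan_route` helper are all hypothetical, and breadth-first search is just a stand-in for whatever planning the mind actually does. The point it tries to show is only that the values stay fixed while the world model, and therefore the plan and its instrumental goals, change.

```python
from collections import deque

def plan_route(roads, start, goal):
    """Breadth-first search over the world model; returns a list of places or None."""
    frontier = deque([[start]])
    visited = {start}
    while frontier:
        path = frontier.popleft()
        if path[-1] == goal:
            return path
        for nxt in roads.get(path[-1], []):
            if nxt not in visited:
                visited.add(nxt)
                frontier.append(path + [nxt])
    return None

# Values: how desirable each outcome is. New facts never touch this.
values = {"home": 1.0}
terminal_goal = max(values, key=values.get)

# World model: beliefs about which roads are open (hypothetical map).
roads = {
    "work": ["town", "country_road"],
    "town": ["home"],
    "country_road": ["detour"],
    "detour": ["home"],
}

plan_before = plan_route(roads, "work", terminal_goal)
instrumental_before = plan_before[1:-1]  # intermediate stops, here ["town"]

# Learning about the accident updates the world model only:
# every road leading into the town is now believed closed.
roads_after = {
    place: [n for n in neighbors if n != "town"]
    for place, neighbors in roads.items()
}

plan_after = plan_route(roads_after, "work", terminal_goal)
instrumental_after = plan_after[1:-1]  # "town" is no longer an instrumental goal
```

In this toy version, "get to the town" appears as an instrumental goal only while the world model says the road through town is open; `values` is identical before and after.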

Does that make sense?

I guess in my takeaway from the post (haven’t reread since yesterday), the thrust was like: non-deception, corrigibility etc probably don’t have ‘true names’, because they’re concepts fundamentally attached to a fake ontology. In particular, non-causal vitalistic free will sort of things.

Not exactly … What I had in mind overall was more like: the discussion of human intuitions in §2 “sets the stage” by (A) providing some brainstorming aid and grounding as we embark on the Quest for a True Name For Manipulation in §3–§4; and §2 also “sets the stage” by (B) seeding the idea that we should at least be open to the possibility of such a True Name not existing at all, rather than it being an obvious thing, like how I know that a sock exists even if I don’t know how to mathematically define it, because I have an intuitive notion of a sock and it’s all-but-certain that this intuitive notion can in principle be formalized into some real-world notion of sock-ness. Whereas for manipulation, maybe it can be construed as pointing to something real, or maybe it can’t, but I think it’s important to be in a mindset where this is not obvious, like it is for socks.

Then the alignment-relevant meat of the post is §3–§4, along with further discussion in §5.

Sorry if I wrote things up in a confusing way, but I’m not seeing an obvious way to improve it right now.

Hmm. I guess when I wrote that part, I was imagining a kind of dichotomy, where one branch is “don’t change what the human is trying to do right now” (and then the AI wouldn’t say that the store is closed), and the other branch is “don’t change what the human would want after infinite ideal reflection” (and then I don’t know how to install that motivation into the particular AI architecture I have in mind).

I guess you’re saying that that’s a false dichotomy, because there’s a middle ground in between those? Have you written more about what constitutes a “terminal goal” in your view? (Even if you don’t have a rigorous definition, I’m interested in examples or intuitions.) Thanks.

It’s possible for an intuitive human concept X to be a bundle of connotations that don’t add up to anything coherent, while ALSO there’s some similar concept Y that is mathematically well-defined and is capturing many (but not all) of those connotations. Then we can argue about whether X is “really” an imperfect pointer to Y, versus whether X and Y are different but related. But that’s a pointless argument with no answer.

(Fun example: Dan Dennett wrote a book advocating for free will compatibilism in 1984, and then in 2015 added a new preface saying: well actually on second thought, maybe I should have just said all along that we should abandon the term “free will” altogether.)

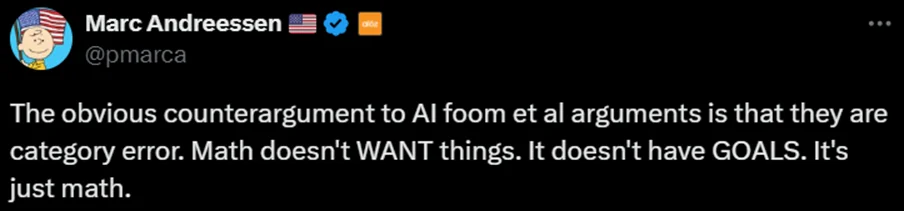

Anyway, I stand by my claim that acausality is an aspect of how most people intuitively think about wanting. Here’s an example … it’s possible that this tweet is bad-faith, but regardless, I think Marc wouldn’t have said it if it didn’t have some intuitive appeal:

So anyway, we can say that our intuitions around “wanting” have that incoherent aspect (per §2), and we can simultaneously ALSO say that there are well-defined notions of optimization (e.g. in §3.4 I cite Alex Flint’s) that overlap many aspects of the “wanting” intuition, just like you say. Those aren’t contradictory. That’s the whole thing I was trying to do in §3–§4.

I don’t understand how simulacrum levels come into it[1]. Are you taking me to mean ‘virtue signalling’ when I say virtue? No! Adherence to honest and earnest communication, for example, is a commonly recognised virtue.

[1] FWIW I also have never got what is supposedly ordinal about the simulacrum levels beyond 1, the honest one. The other ‘levels’ just look like various orthogonal breeds of fakery, to me. Haven’t scrutinised deeply.

There’s also a curious and troubling aspect of humans that we mingle the types of beliefs, intentions, preferences, and intrinsic vs instrumental stuff. We’re also malleable which makes things like CEV potentially underdetermined. I’m not entirely sure what to do about that! Though I unironically anticipate that the right virtue orientation can help a lot with selecting ‘acceptable enough’ paths through that highly path-dependent space.

I guess in my takeaway from the post (haven’t reread since yesterday), the thrust was like: non-deception, corrigibility etc probably don’t have ‘true names’, because they’re concepts fundamentally attached to a fake ontology. In particular, non-causal vitalistic free will sort of things.

But actually I appear to be able to describe deception and other things in terms which don’t route through that kind of ontology, at all, and models like that are how I and many of my interlocutors discuss such things. Curiously I don’t think any entries in section 3 or 4 correspond to the description I gave—agree that 3.4 is maybe closest (and on a different day I might have used a case example closer to that).

To me that refutes the paraphrased thrust above.

I grant that there’s something funny with ‘intentional stance’ at all, where in principle one can describe any instantiated agent in terms of lower-level mechanics. So I guess one might say that ontology is mistaken (I don’t think you would)? But that’s just like all of physics basically. Complex systems are like that. You get emergence and have to deal with it. I hold that beliefs and intentions and preferences are worthy concepts to build with (and I think you do too).

Indeed various colloquial conceptions of agents are more mystical and incoherent. But I don’t think that has bearing on whether there’s a true name for the concepts discussed in more coherent terms.

Do you think it could manipulate you into things that you-now would find repugnant, while never manipulating you by your standards? That seems contradictory. If my ASI says “You asked me to tell you anything I’m doing that you might consider manipulative. Here’s the biggest one. I like pink ponies a lot, so I’m going to keep presenting possible futures in ways that emphasize how awesome pink ponies are. I think you’ll ultimately agree with me.” You could either say “okay fine that doesn’t seem like manipulation” or “don’t do that, that’s manipulative!”

What I’d really do is turn this AI off because I didn’t think it was safe. Which, like, good job to the hypothetical interpretability / honesty / corrigibility / contingency systems work that puts me in that hypothetical situation. But as you mention, maybe the AI could avoid getting here in the first place. (And even if I turn it off, that’s cold comfort if someone else makes the other choice a few days later.)

I think manipulating me into not asking the question that leads to me shutting it off is definitely a strong choice that leads to more pink ponies. Or manipulating the situation so that it’s not me who’s asking the question, it’s someone else. It can also modify what the honest answers to questions about its own behavior are by precommitting: it could deliberately choose the answer to be something less concerning, if that led to more pink ponies. I’m also pretty concerned that the standards for what counts as “honesty” will allow for strategy.

(Isn’t it paradoxical to manipulate me into not asking the question if it’s not supposed to make some fixed manipulation-detector fire? No, it just has to simultaneously optimize against both my behavior and the firing of the manipulation-detector, and it’s the result of this optimization that I’m saying I expect to be manipulative according to me-outside-the-thought-experiment. There are probably more sophisticated (albeit currently unknown) ways of incorporating my standards into the AI’s decision-making that wouldn’t be so vulnerable, and if we figure them out I hope we use them to solve value learning.)

Partially I commented with this inside-the-thought-experiment versus outside-the-thought-experiment disconnect because I think it’s interesting in general. It’s kind of like Gödel sentences, or the vibrating record players from G.E.B. - me-inside-the-thought-experiment is a complicated enough system that he can be nudged in all sorts of ways if you understand him, and this property is hard to patch out. But the other part is the real-world case of replacing “pink ponies” with “human giving positive feedback signal”.

Clever AI we build to “just follow instructions” will, on the current paradigm, probably have some consequentialist desires about positive feedback signals and various correlates. As you can tell, I’m pretty pessimistic: I expect this would end in bad stuff.

An LLM solves a mathematical problem by introducing a novel definition which humans can interpret as a compelling and useful concept.

@Jude Stiel nudged me to (very much in my own words) update a bit to anticipate that it’s plausible we’ll see some degree of impoverished / partial originary (and therefore occasionally novel) concept formation. Some aspects of [real according to me] concept formation could be accessible to faster feedback. (This doesn’t much change my overall picture, and my view would still be surprised by large numbers of concepts produced by AIs that are as interesting+useful to humans as human-produced concepts.)

I think you’re correctly identifying important issues and cracks in the standard ontology, but I think you’re throwing out too much baby in an effort to get rid of bathwater.

For example, I do not think it’s obvious that “Just like vitalistic force, ‘wanting’ is conceptualized as being acausal, i.e. an intrinsic property of an entity with no upstream cause.” In control theory, we can say that a system controls for a thing based on a small collection of mathematical relationships—pressuring an error signal towards zero. While the concept of wanting is overloaded and more complex, I think it makes sense to recognize that “X is controlling for Y” is a valid underpinning that has no vitalistic magic. We can ask what led X to control for Y, or how X controls for Y in terms that are closer to the underlying physics; there’s nothing acausal or intrinsic (except for the definitions, I suppose).
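To make “X is controlling for Y” concrete, here is a minimal sketch of a proportional controller. The function name, gain, setpoint, and step count are illustrative assumptions, not anything from the post; the only point is that “pressuring an error signal towards zero” is a fully causal, inspectable relationship.

```python
def simulate_controller(setpoint, initial, gain=0.5, steps=20):
    """Proportional control: each step nudges y toward the setpoint."""
    y = initial
    history = [y]
    for _ in range(steps):
        error = setpoint - y   # how far Y currently is from the target
        y += gain * error      # corrective push proportional to the error
        history.append(y)
    return history

# Hypothetical numbers: a thermostat-like system starting at 5 and controlling for 20.
trajectory = simulate_controller(setpoint=20.0, initial=5.0)
errors = [abs(20.0 - y) for y in trajectory]
```

Each entry of `errors` is half the previous one here, so the system “controls for” the setpoint in a sense we can trace step by step, and we can still ask separately what led it to have that setpoint.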

Oh, uh. Whoops. Forgot to switch to my work account.

Max’s stopgap plan would involve comparing the human’s values to what they’d be if the AI did nothing. I think he understates how bad that stopgap plan is. Even providing straightforwardly-true factual information can change what a person wants, right?

Yes. That’s right. And I am (among other things) worried about an AI that warps my values by telling me a series of facts.

But I want to clarify that I’m talking about terminal values, not strategic sub-goals. The stopgap plan is 100% able to tell me that the store is closed, thus changing my plan of going to the store. What it shouldn’t do is tell me intense stories about the suffering of pigs and thereby change how much I care about pigs.[1]

Why do you think this stopgap is so bad? (I agree that it’s bad, but it seems like you see it as worse than me.)

[1] Unless this story is necessary to counteract another pressure such that I cleave closer to the null-action counterfactual.

if we’re learning what’s good by the gestalt of human judgment and culture, and if human judgment and culture can themselves be gradually shifted over time, then this might not be an adequate bulwark against the AGI’s consequentialist desires.

…

I think the way you put non-manipulation on par with consequentialist desires is to think in terms of evaluating future trajectories

Yeah, exactly, when my shoulder-optimist argues his case, he brings up the idea that the virtue-ethics-y side of the AI would maybe notice that the AI is engaging in a systematic pattern of behavior that has the effect of gradually shifting human judgment and culture over time, and it would see that as bad, and it would vote against behaving in that way. But it also might not. That’s why I said (in §1.2 and §4.2) that I was unsure about how bad a problem this is. I’m kinda stuck on that right now, and not sure how to proceed, except to try to engineer some different solution that’s easier for me to reason about.

I sort of agree? I think the net effect on overall capabilities progress is pretty small and some of the actions I proposed would hopefully divert people from generic capabilities to working on this type of (hopefully particularly differential) capabilities. But I agree that some of these actions would involve safety motivated people doing work that would shorten timelines (relative to if they did nothing / worked on areas with no capabilities externalities) and it could turn out this work isn’t valuable.

For “Get AIs generically better at conceptual work”, I think it seems especially relevant to account for whether: (1) the work would be done by capabilities people later anyway without this having a sufficiently useful acceleration effect on differential capabilities, and (2) the work has significant capabilities externalities. It’s possible I should describe this area as “Get AIs generically better at particularly differential conceptual work”, but I’m also sympathetic to work that tries less hard to be strongly differential/focused depending on the circumstances and various details.