Only Law Can Prevent Extinction

There’s a quote I read as a kid that stuck with me my whole life:

“Remember that all tax revenue is the result of holding a gun to somebody’s head. Not paying taxes is against the law. If you don’t pay taxes, you’ll be fined. If you don’t pay the fine, you’ll be jailed. If you try to escape from jail, you’ll be shot.”

-- P. J. O’Rourke.

At first I took away the libertarian lesson: Government is violence. It may, in some cases, be rightful violence. But it all rests on violence; never forget that.

Today I do think there’s an important distinction between two different shapes of violence. It’s a distinction that may make my fellow old-school classical Heinlein liberaltarians roll their eyes and insist that there’s no deep moral difference. I still hold it to be important.

In a high-functioning ideal state—not all actual countries—the state’s violence is predictable and avoidable, and meant to be predicted and avoided. As part of that predictability, it comes from a limited number of specially licensed sources.

You’re supposed to know that you can just pay your taxes, and then not get shot.

Is there a moral difference between that and outright banditry? To the vast majority of ordinary people rather than political philosophers, yes.

“Violence”, in ordinary language, has the meaning of violence that is not predictable, that is not avoidable, that does not come from a limited list of sources whose rules people can learn.

Violence that is predictable and avoidable to you, whose consequences are regular and not chaotic, can of course still be terribly unjust and not to your own benefit. It doesn’t rule out a peasant being told to hand over two thirds of their harvest in exchange for not much. It doesn’t rule out your rent becoming huge because it’s illegal to build new housing, etcetera etcetera. Laws can still be bad laws. But it is meaningfully different to the people who live under those unjust laws, if they can at least succeed in avoiding violence that way.

The point of a “state monopoly on violence”, when it works, is to have violence come from a short list of knowable sources. A bullet doesn’t make a smaller hole when fired by someone in a tidy uniform. But oligopolized force can be more avoidable, because it comes from a short list of dangers—country, state, county, city—whose actual rules are learnable even by a relatively dumb person. Ideally. In a high-functioning society.

The Earth presently has a problem. That problem may need to be prevented by the imposition of law, though hopefully not much actual use of force.

The problem, roughly speaking, is that if AI gets very much smarter, it is liable to turn into superhuman AI / machine superintelligence / artificial superintelligence (ASI). Current AIs are not deadly on that scale, but they are increasing in capability fast and breaking upward from previous trend lines. ASI might come about through research breakthroughs directly advancing AI to a superhuman level; or because LLMs got good enough at half-blindly tweaking the design to make a smarter AI, that is then sufficiently improved to make an even smarter AI, such that the process cascades.

AIs are not designed like a bicycle, or programmed and written like a social media website. There’s a relatively small piece of code that humans do write, but what that code does, is tweak hundreds of billions of inscrutable numbers inside the actual AI, until that AI starts to talk like a person. The inscrutable numbers then do all sorts of strange things that no human decided for them to do, often things that require intelligence; like breaking out of containment during testing, or talking a human into committing suicide.

Controlling entities vastly smarter than humanity seems like it would, obviously, be the sort of problem that comes with plenty of subtleties and gotchas that can only be learned through practice. Some of the clever ideas that seemed to work fine at the non-superhuman level would fail to control strongly superhuman entities. Dynamics would change; something would go wrong. Probably a lot of things would go wrong, actually. It is hard to scale up engineering designs to vast new scales, and have them work right without a lot of further trial-and-error, even when you know how their internals work. To say nothing of this creation being an alien intelligence smarter than our species, a new kind of problem in all human history… I could go on for a while.

The thing about building vastly superhuman entities, is that you don’t necessarily get unlimited retries like you usually do in engineering. You don’t necessarily get to know there’s a problem, before it’s much too late; superhuman AIs may not decide to tell you everything they’re thinking, until they are ready to wipe us off the board. (It’s already an observed phenomenon that the latest AIs are usually aware of being tested, and may try to conceal malfeasance from an evaluator, like writing code that cheats at a code test and then cleans up the evidence after itself.)

Elon Musk’s actual stated plan for Grok, grown on some of the largest datacenters in the world, is that he need only build a superintelligence that values Truth, and then it will keep humans alive as useful truth-generators. That he hasn’t been shouted down by every AI scientist on Earth should tell you everything you need to know about the discipline’s general maturity as an engineering field. AI company founders and their investors have been selected to be blind to difficulties and unhearing of explanations. If Elon were the sort of person who could be talked out of his groundless optimism, he wouldn’t be running an AI company; so also with the founders of OpenAI and Anthropic.

If you need to read a statement by a few hundred academic computer scientists, Nobel laureates, retired admirals, etcetera, saying that yes AI is an extinction risk and we should take that as seriously as nuclear war, you can go look here. Frankly, most of them are relative latecomers to the matter and have not begun to grasp all the reasons to worry. But what they have already grasped and publicly agreed with, is enough to motivate policy.

I realize this might sound naively idealistic. But I say: The utter extermination of humanity, would be bad! It should be prevented if possible! There ought to be a law!

Specifically: There ought to be a law against further escalation of AGI capabilities, trying to halt it short of the point where it births superintelligence. A line drawn sharply and conservatively, because we don’t know how much further we can dance across this minefield before something explodes. My organization has a draft treaty online, but a bare gloss at “Okay what does that mean tho” would be: All the hugely expensive specialized chips used to grow large AIs, and run large AIs, would be collected in a limited number of datacenters, and used only under international supervision.

It would be beneath my dignity as a childhood reader of Heinlein and Orwell to pretend that this is not an invocation of force.

But it’s the sort of force that’s meant to be predictable, predicted, avoidable, and avoided. And that is a true large difference between lawful and unlawful force.

There’s in fact a difference between calling for a law, and calling for individual outbursts of violence. (Receipt that I am not arguing with a strawman, and that some people purport to not understand any such distinction: Here). Libertarian philosophy aside, most normal ordinary people can tell the difference, and care. They correctly think that they are less personally endangered by someone calling for a law than by someone calling for street violence.

But wait! The utter extinction of humanity—argue people who do not believe that premise—is a danger so extreme, that belief in it might possibly be used to argue for unlawful force! By the Fallacy of Appeal to Consequences, then, that belief can’t be true; thus we know as a matter of politics that it is impossible for superintelligence to extinguish humanity. Either it must be impossible for any cognitive system to exist that is advanced beyond a human brain; or the many never-challenged problems of controlling machine superintelligence must all prove to be easy. We cannot deduce which of these two facts is true, but their disjunction must be true and also knowable, because if it weren’t knowable, somebody might be able to argue for violence. Never in human history has any proposition proven to be true if anyone could possibly use it to argue for violence. The laws of physics check whether that could be a possible outcome of any physical situation, and avoid it with perfect reliability.

That whole line of reasoning is deranged, of course.

I will nonetheless proceed to spell out why its very first step is wrong, ahead of all the insanity that followed:

Unlawful violence is not able, in this case, to prevent the destruction of the world.

If an ASI ban is to accomplish anything at all, it has to be effective everywhere. When the politicians behind one proposal asked me, “What do you think about our proposed national ban on more datacenters until they have sensible regulations?” I replied to them, “An AI can take your job, and a machine superintelligence can kill you, just as easily from a datacenter in another country.” They later added a provision saying that GPUs also couldn’t be exported to other countries until those countries had similar sensible regulations. (I am still feeling amazed, awed, and a little humbled, about the part where my words plausibly had any effect whatsoever. Politicians are a lot more sensible, in some real-life cases, than angry libertarian literature had led me to believe a few decades earlier.)

Datacenters in Iceland, if they were legal only there, could just as much escalate AI capabilities to the point of birthing the artificial superintelligence (ASI) that kills us. You would not be safe in your datacenter-free city. You can imagine the ASI side as having armies of flying drones that search everywhere; though really there are foreseeable, quickly-accessible-to-ASI technologies that would be much more dangerous than drone swarms. But those would take longer to explain, and the drone swarms suffice to make the point. You could not stay safe from ASI by hiding in the woods.

On my general political philosophy, if a company’s product only endangers voluntary customers who know what they’re getting into, by strong default that’s a matter between the company and the customer.

If a product might kill someone standing near the customer, like cigarette smoke, that’s a regional matter. Different cities or countries can try out different laws, and people can decide where to live.

If a product kills people standing on the other side of the planet from the customer, then that’s a matter for international negotiations and treaties.

ASI is a product that kills people standing on the other side of the planet. Driving an AI company out of just your own city will not protect your family from death. It won’t even protect your city from job losses, earlier in the timeline.

And similarly: To impede one executive, one researcher, or one company, does not change where AI is heading.

If tomorrow Demis Hassabis said, “I have realized we cannot do this”, and tried to shut down Google DeepMind, he would be fired and replaced. If Larry Page and Sergey Brin had an attack of sense about their ability to face down and control a superintelligence, and shut down Google AI research generally, those AI researchers would leave and go to other companies.

Nvidia is currently the most valuable company in the world, with a $4.5 trillion market capitalization, because everyone wants more AI-training chips than Nvidia has to sell. The limiting resource for AI is not land on which to construct datacenters; Earth has a lot of land. Banning a datacenter from your state may keep electricity cheaper there in the middle term, but it won’t stop the end of the world.

The limiting resource for AI is also not the number of companies pursuing AI. If one AI company were randomly ruined by its country’s government, other AI companies would swarm in to buy the chips from Nvidia instead, and Nvidia would stay at full production and sell everything it made. The end of the world would carry on.

There is no one researcher who holds the secret to your death. They are all looking for pieces of the puzzle to accumulate, for individual rewards of fame and fortune. If somehow the person who was to find the next piece of the puzzle randomly choked on a chicken bone, somebody else would find a different puzzle piece a few months later, and Death would march on. AI researchers tell themselves that even if they gave up their enormous salaries, that wouldn’t help humanity much, because other researchers would just take their place. And the grim fact is that this is true, whether or not you consider it an excuse.

In other cases of civic activism, you can prevent one coal-fired power plant from being built in your own state, and then there is that much less carbon dioxide in the atmosphere and the world is a little less warm a century later. Or if you are against abortions, and you get your own state to outlaw abortions, perhaps there are then 1000 fewer abortions per year and that is to you a solid accomplishment. Which is to say: You can get returns on your marginal efforts that are roughly linear with the effort you put in.

The ASI problem is not like this. If you shut down 5% of AI research today, humanity does not experience 5% fewer casualties. We end up 100% dead after slightly more time. (But not 5% more time, because AI research doesn’t scale in serial speed with the number of parallel researchers; 9 women can’t birth a baby in 1 month.)

So we don’t need to have a weird upsetting conversation about doing bad unlawful things that would supposedly save the world, because even if someone did a very bad thing, that still wouldn’t save the world.

This is a point that some people seem to have a very hard time hearing—though those people are usually not on the anti-extinction side, to be clear. It’s more that some people can’t imagine that superhuman AI could be a serious danger, to the point where they have trouble reasoning about what that premise would imply. Others are politically opposed to AI regulation of any sort, and therefore would prefer to misunderstand these ideas in a way where they must imply terrible unacceptable conclusions.

I understand the reasons in principle. But it is a strange and frustrating phenomenon to encounter in practice, in people who otherwise seem coherent and intelligent (though maybe not quite on the level of GPT 5.4). Many people believe, somehow, that other people ought to think—not themselves, only other people—that outbursts of individual violence just have to be helpful. If you were truly desperate, how could you not resort to violence?

But even if you’re desperate, an outburst of violence usually will not actually solve your problems! That is a general truism in life, and it applies here in full force.

Even if you throw away all your morals, that doesn’t make it work. Even if you offer your soul to the Devil, the Devil is not buying.

How certain do you have to be that your child has terminal cancer, before you start killing puppies? 10% sure? 50% sure? 99.9%? The answer is that it doesn’t matter how certain you are, killing puppies doesn’t cure cancer. You can kill one hundred puppies and still not save your kid. There is no sin so great that it just has to be helpful because of how sinful it is.

Statistics show that civil movements with nonviolent doctrines are more successful at attaining their stated goals (especially in states that otherwise have functioning police). The factions that throw away all their morals lose the sympathy of the public and politicians, and then they fail. Terrorism is not an instant ‘I win’ button that people only refrain from pressing because they’re so moral. Society has succeeded in making it usually not pay off—say the numbers.

Being really, really desperate changes none of those mechanics.

Almost everyone who actually accepts a fair chance of ASI disaster doesn’t seem to have a hard time understanding this part. It’s an obvious consequence of the big picture, if you actually allow that big picture inside your head.

But it is hard for a human being to understand a thing, if it would be politically convenient to misunderstand. Opponents of AI regulation want any danger of extinction to imply unacceptable consequences.

They understand on some level how the AI industry functions. But they become mysteriously unable to connect that knowledge to their model of human decisionmaking. You can ask them, “If tomorrow I was arrested for attacking an AI-company headquarters, would you read that headline, and conclude that AI had been stopped in its tracks forever and superintelligence would never happen?” and get back blank stares.

Even some people that are not obviously politically opposed seem to stumble over the idea. I’m genuinely not sure why. I think maybe they are having trouble processing “Well of course ASI would just kill everyone, we’re nowhere near being able to control it” as an ordinary understanding of the world, the way that 20th-century concerns about global nuclear war were part of a mundane understanding of the world. “If every country gets nuclear weapons they will eventually be used” was not, to people in 1945, the sort of belief where you have to prove how strongly you believe it by being violent. It was just something they were afraid would prove true about the world, and then cause their families to die in an unusually horrible kind of fire. So they didn’t randomly attack the owners of uranium-mining companies, to prove how strongly they believed or how worried they were; that, on their correct understanding of the world, would not have solved humanity’s big problem—namely, the inexorable-seeming incentives for proliferation. Instead they worked hard, and collected a coalition, and built an international nuclear anti-proliferation regime. Both the United States and the Soviet Union cooperated on many aspects of that regime, despite hating each other quite a lot, because neither country’s leaders expected they’d have a good day if an actual nuclear war happened.

The sort of conditionally applicable force that could stop everyone from dying to superhuman AI, would have to be everywhere and reliable; uniform and universal.

Let it be predictable, predicted, avoidable, and avoided.

It is so much a clear case for state-approved lawful force, that there would be little point in adding any other kind of force to the mix. It would just scare and offend people, and they’d be valid to be scared and offended. People don’t like unguessably long lists of possible violence-sources in their lives, for then they cannot predict it and avoid it.

I did spell out the necessity of the lawful force, in first suggesting that international policy. Some asked afterward, “Why would you possibly mention that the treaty might need to be enforced by a conventional airstrike, if somebody tried to defy the ban?” One reason is that some treaties aren’t real and actually enforced, and that this treaty needs to be the actually-enforced sort. Another reason is that if you don’t spell things out, that same set of people will make stuff up instead; they will wave their hands and say, “Oh, he doesn’t realize that somebody might have to enforce his pretty treaty.”

And finally it did seem wiser to me, that all this matter be made very plain, and not dressed up in the sort of obscuring language that sometimes accompanies politics. For an international ASI ban to have the best chance of operating without its force actually being invoked, the great powers signatory to it need to successfully communicate to each other and to any non-signatories: We are more terrified of machine superintelligence killing everyone on Earth than we are reluctant to use state military force to prevent that.

If North Korea, believed to have around 50 nuclear bombs, were to steal chips and build an unmonitored datacenter, I would hold that diplomacy ought to sincerely communicate to North Korea, “You are terrifying the United States and China. Shut down your datacenter or it will be destroyed by conventional weapons, out of terror for our lives and the lives of our children.” And if diplomacy fails, and the conditional use of force fires, and then North Korea retaliates with a first use of its nuclear weapons? I don’t think it would; that wouldn’t end well for them, and they probably know that. But I also don’t think this is a hypothetical where sanity says that we are so terrified of someone’s possible first use of nuclear weapons, that we let them shatter a setup that protects all life on Earth.

You’d want to be very clear about all of this in advance. Countries not understanding it in advance could be very bad. History shows that is how a lot of wars have started, through someone failing to predict a conditional application of force and avoid it. One historical view suggests that Germany invaded Poland in 1939 in part because, when Britain had tried to warn that Britain would defend Poland, Hitler read the messages himself, instead of having the professional diplomats explain them to him; and Hitler read the standard diplomatic politesse and soft words as conciliatory; and thus began World War II. More recently, a similar diplomatic misunderstanding by Saddam Hussein is thought to have resulted in Hussein’s 1990 invasion of Kuwait, which then in fact provoked a massive international response. I’ve sometimes been criticized for trying to spell out proposed policy in such awfully plain words, like saying that the allies might have to airstrike a datacenter if diplomacy failed. Some people (reaching pretty hard, in my opinion) claimed that this must be a disguised incitement to unlawful violence. But being very clear about the shape of the lawful force was important, in this case.

And then, all that policy is sufficiently the obvious and sensible proposal—following from the ultimately straightforward realization that something vastly smarter than humanity is not something humanity presently knows how to build safely—and never mind how bad it starts looking if you learn details like Elon Musk’s stated plan—that some people find it inconveniently difficult to argue with. Unless they lie about what the proposal is.

So I am misquoted (that is, they fabricate a quote I did not say, which is to say, they lie) as calling for “b*mbing datacenters”, two words I did not utter. In the first 2023 proposal in TIME magazine, I wrote the words “be willing to destroy a rogue datacenter by airstrike”. I was only given one day by TIME to write it—otherwise it wouldn’t have been ‘topical’—but I had thought I was saying that part quite carefully. Even quoted out of context, I thought, this ought to make very clear that I was talking about state-sanctioned use of force to preserve a previously successful ban from disruption. And absolutely not some guy with a truck bomb, attacking one datacenter in their personal country while all the other datacenters kept running.

And that phrasing is clear even when quoted out of context! If quoted accurately. So some (not all) accelerationists just lied about what was being advocated, and fabricated quotes about “b*mbing datacenters”. When called out, they would protest, “Oh, you pretty much said that, there’s no important difference!” To this as ever the reply is, “If it is worth it to you to lie about, it must be important.”

A similarly fabricated quote says that I proposed “nuking datacenters”. Ladies, gentlemen, all others, there is absolutely no reason to nuke a noncompliant datacenter. In the last extremity of failed diplomacy, a conventional missile will do quite well. The taboo against first use of nuclear weapons is something that I consider one of the great triumphs of the post-WW2 era. I am proud as a human being that we pulled that off. Nothing about this matter requires violating that taboo. We should not be overeager to throw away all limits and sense, and especially not when there is no need. Life on Earth needs to go on in the sense of “life goes on”, not just in the sense of “not being killed by machine superintelligences”.

It is sometimes claimed that ASI cannot possibly be banned without a worldwide tyranny—by people who oppose AI regulation and so would prefer it to require horrifying unacceptable measures.

At the very least: I don’t think we know this to be true to the point we should all lie down and die instead.

At least until recently, humanity has managed to not have every country building its own nuclear arsenal. We did that without everyone on Earth being subjected to daily-required personal obediences to the International Atomic Energy Agency. Some people in the 1940s and 1950s thought it would take a tyrannical world dictatorship, to prevent every country from getting nuclear weapons followed by lots of nuclear war! Shutting down all major wars between major powers, or slowing that kind of technological proliferation, had never once been done before, in all history! But those worried skeptics were wrong; for some decades, at least, nuclear proliferation was greatly slowed compared to the more pessimistic forecasts, without a global tyranny. And now we have that precedent to show it can be done; not easily, not trivially, but it can be done.

For the supervast majority of normal people, “Don’t spend billions of dollars to smuggle computer chips, construct an illegal datacenter, and try to build a superintelligence” is a very small addition to the list of things they must not do. Surveys seem to show that most people think machine superintelligence is a terrible idea anyway. (Based.)

And the few who feel really personally bothered by that law?

They may be sad. They’ll definitely be angry. But they’ll survive. They wouldn’t actually survive otherwise.

My will for Sam Altman’s fate is that he need only fear the use of force by his country, his state, his county, and his city, as before; with the difference that Sam Altman, like everyone else on Earth, is told not to build any machine superintelligences; and that this potential use of state force against his person be predictable to him, and predicted by him, and avoidable to him, and avoided by him; with him as with everyone. That’s how it needs to be if any of us are to survive, or our children, or our pets, or our garden plants.

Let Sam Altman have no fear of violence beyond that, nor fire in the night.

Artificial superintelligence is the very archetype and poster child of a problem that can only be solved with force that has the shape of law, as in state-backed universal conditional applications of force meant to be predictable and avoided. Anything which is not that does not solve the problem.

And when somebody does throw a Molotov cocktail at Sam Altman’s house, that is not actually good for the anti-extinction movement, as anyone with the tiniest bit of sense could and did predict.

Currently all the anti-extinctionist leaders are begging their people to not be violent—as they’ve said in the past, but louder now. And conversely some of the accelerationists are trying to goad violence, in some cases to the shock of their usual audiences:

That this sentiment is not universal among accelerationists, is seen immediately from the protestor in their replies. Let us, if not them, be swift to fairly admit: We are observing bad apples and not a bad barrel.

But also to be clear, those bad apples were also trying to goad people into violence earlier, in advance of the attacks on Altman:

To this tweet I will not belabor the reply that anti-extinctionists may be good people with morals; some good people might nod, but others would find it unconvincing, and there is one analysis that answers for all: It would not work. And given that it would not save humanity, anti-extinctionists make the obvious estimate that our own cause would be, and has been, harmed by futile outbursts of unlawful violence.

Conversely, some accelerationists behave as if they want to spread the word and meme of violence as far as possible. It is reasonable to guess that some part of their brain has considered the consequences of somebody being moved by their taunts, and found them quite acceptable. If they can goad somebody labelable as anti-extinctionist to violence, that benefits their faction. They may consider Sam Altman replaceable to their cause, so long as there is no law and treaty to stop all the AI companies everywhere.

They’re right. Sam Altman is not the One Ring. He is not Sauron’s one weakness. If anything happened to him, AI would go on.

I am posting these Tweets in part to say to any impressionable young people who may consider themselves humanity’s defenders, who are at all willing to listen to their allies rather than their enemies: Hey. Don’t play into their hands. They’re taunting you exactly because violence is good for their side and bad for ours. If it were true that violence could help you, if they expected that violence would hurt AI progress more than it helped their side politically, they’d never taunt you like that, because they’d be afraid rather than eager to see you turn to violence. They’re saying it to you because it’s not true; and if it were true, they’d never say it to you. They’re not on your side, and the advice implied by the taunts is deliberately harmful for you and good for them.

This is of course a general principle when somebody is taunting you. It means they want you to fight, which means they expect to benefit from you trying.

Don’t believe their taunts. Believe what is implied by their act of taunting, that violence hurts you and helps them. That part is accurate, obvious, and not at all hard for their brains to figure out in the background, before they choose to taunt you.

It makes sense to me that society penalizes factions that appear to benefit from violence, even if their leaders try to disclaim that violence. Intuitively, you don’t want to create a vulnerability in society where faction leaders could gain an advantage by sending out assassins and then publicly disclaiming them.

But at the point where some accelerationists are openly trying to goad anti-extinctionists into violence, while the anti-extinctionist leaders beg for peace—this denotes society has gone too far in the direction of punishing the ‘violent’ faction for what’s probably actually in real life a rogue. And not far enough in leveling some social opprobrium at (individual) accelerationist sociopaths standing nearby, openly trying to provoke violence they know would be useful to them.

It is of course an old story. The civic movement leaders try to persuade their people to stay calm, disciplined, and orderly on the march. The local police, if they oppose that movement, will allow looters to tag along and then forcibly prevent the marchers from stopping the looters. When your society gets to that point, it has created a new vulnerability in the opposite direction.

One could perhaps also observe that certain people have taken this particular moment to argue that a scientific position whose native plausibility ought to be obvious, and which has been endorsed by hundreds of academic scientists, retired admirals, Nobel laureates, etcetera, inevitably implies that unlawful violence must be a great idea. I am not going to make any great show of wringing my hands and clutching my pearls about how such false speech might endanger the innocent for their own political advantage, what if some mentally disturbed person believed them, etcetera. This is how human beings always behave around politics; it is not unusual wrongdoing for any faction to behave that way. They, too, have a right to say what they believe, and to believe things that are obviously false but politically convenient to them. I may still take a moment to observe what is happening.

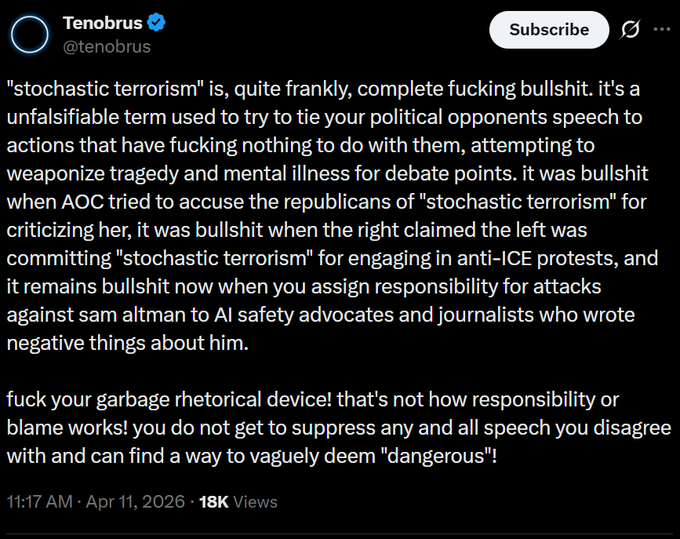

As for the argument that to criticize AI at all is “stochastic terrorism”, because someone will react violently eventually, even if not logically so? Tenobrus put it well:

The leaders of anti-extinctionism do have some responsibility to ask their people to please behave themselves. And we do! That actually is around as much as should be reasonably asked of any civic movement. We ought to try, and try we do! We cannot and should not be expected to succeed every single time given base rates of mental illness in the population.

Speech about important matters to society should not properly be held hostage to the whim of any madman that might do a stupid thing, to the detriment of his supposed cause and against every visible word of that cause’s leaders.

That would be a foolish way to run a society.

And policywise, this would be a very serious matter about which to shut down speech. Anthropic Claude Mythos is already a state-level actor in terms of how much harm it could theoretically have done—given its demonstrated and verified ability to find critical security vulnerabilities in every operating system and browser; and how fast Mythos could’ve exploited those vulnerabilities, with ten thousand parallel threads of intelligent attack. Mythos hypothetically rampant or misused could have taken down the US power grid, say… at the end of its work, after introducing hard-to-find errors into all the bureaucracies and paperwork and doctors’ notes connected to the Internet.

In 2024 a claim of that being possible would have been a mere prediction and dismissed as fantasy. Now it is an observation and mere reality. That’s the danger level of current AI, for all that Anthropic seems to be trying to be well-behaved about it, and Mythos has not yet visibly run loose. To say in the face of that, that nobody should critique AI, or AI companies, or even individual AI company leaders as per recent journalism, because some madman might thereby be inspired to violence—it fails cost/benefit analysis, dear reader.

AI is already a state-level potential danger, if not quite yet a state-level actual power. Free speech to critique AI then holds a corresponding level of importance. The stochastic madman trying to hold free speech hostage to his possible whims—he must be told he is not important enough for all humanity to defer to him about subjects he might find upsetting.

And faced with an actual human-extinction-level danger like machine superintelligence—as ought obviously to represent that level of possible danger, even if some people disagree about its rough probability—well, that would be a silly way for everyone on Earth to die, if nobody dared to talk about the danger, or argue high estimates of that danger, and it happened without any effort at stopping it.

So let’s not die! Let’s save everyone!

Sam Altman too.

That’s the dream.

If you want to kill modern AI using existing law and have friends in the correct government offices, it should be fairly straightforward to do so without new law.

This legal category is very aggressively defined: https://en.wikipedia.org/wiki/Restricted_Data

It was written to mean ‘if someone draws a working design for a nuclear bomb or certain kinds of nuclear material production equipment anywhere, that data is a state secret, regardless of the source of the information used to produce it’. This is commonly referred to as ‘born classified’. There are a good 70+ years of arguments about whether this is a good law, but that is the law.

Therefore, here is your process:

-find an AI model that you reasonably believe is capable of outputting something the government will view as a classified fact related to nuclear weapon design. Edit: you should probably build it yourself by either training from scratch or fine-tuning an open model.

-send the model weights, installation instructions, and a letter to the DOE Office of Classification requesting that they determine that your model is NOT restricted data. Offer to send them hardware to run the model (you won’t get it back). I am familiar with this classification regime: documents are not the only things that can be marked restricted data; physical embodiments (sculpture or actual objects) and computer programs (math models) can be classified under it too.

They can either determine that your model contains restricted data (if the model can invent novel tech, it should be able to figure out 80 year old tech so this might not be a hard bar to jump), determine that it does not contain restricted data (in which case you should send them a bunch of outputs that look bad and see what they say), or determine that the class of material (model) cannot be judged under the law.

Since the model is a unitary object, it will be quite hard to separate ‘these specific weights are where the restricted data lives’ from the rest, so suddenly frontier models will become, through the stroke of a bureaucrat’s pen, state secrets.

Edit: get someone with a current or former Q clearance to submit the model as their own work if you want to add ‘it would be ok for this to be published, but not by you’ to the list of possible outcomes. That would mean that an AI researcher who wants to credibly take themselves out of AI research permanently can simply acquire a Q-cleared job (LLNL is near the Bay) at some point. The possible positive (for OP) outcomes are 1) the US bureaucracy has a reason to slam the door on AI research globally in the name of counterproliferation; 2) there is a Nunn-Lugar type path for researchers to make a living without working on dangerous capabilities (or working slowly only within the government, which is conservative, cost constrained, and now staffed with people who wanted to make safety their mission). If you seize power, you don’t need new legislation on safety, you only need some bureaucrats to choose to enforce the rule, plus potentially extra funding for military industrial complex contractor jobs.

Arguments of law in this context appear to me to be less important than arguments of power, but...if law matters, here is a law you can use, I guess?

I don’t know that this is as true as it is in the popular mindset. A lot of the Weathermen, who were one of the most prolific terrorist organizations in American history, now hold positions of power, including very in-demand university sinecures.

More sympathetically, the American revolutionaries took up arms against their government (though they were vastly less inclined to target noncombatants, especially with lethal force), and went on to become a superpower. Likewise, though the USSR fell through peaceful revolution, it was established violently. While the USSR was not a nice place to live, its founders certainly succeeded in putting themselves into power.

The misconception’s causes are twofold.

First, successful peaceful revolutionaries will happily hold that honor, but successful violent revolutionaries will either erase or justify their deeds when the history books are written.

Second, conspiracy theories aside, governments generally do not want to be the target of violent uprisings. Everyone in power has a tacit incentive to assert to malcontents that assassinations that might kill them and bombings that might damage their holdings are less effective at unseating them than peaceful protests and organized elections.

This may well be true, or they could be equally effective, but the incentive remains. If you’re in power over a country, a failed terrorist uprising is much more painful than a failed velvet revolution. Even if you consider a successful version of both equally bad, you’d rather your enemies tried the latter.

This isn’t to say that violent uprisings aren’t bad, only to point out that the idea that they always fail is not necessarily true.

Edit: A better argument against violence, within this context, draws naturally from the above. Violent uprisings privilege the interests of those who are best at violence—either directly, in the form of generals, or indirectly in the form of bloody court intrigue. Velvet revolutions have better odds at retaining obedience to their founders, because there isn’t an intermediate step that requires leaders who aren’t necessarily good at the same things. The sort of person who could regulate an entire industry by means of unpredictable violence may have a much different vision for the world than you do.

Please pay attention to the “states that otherwise have functioning police” qualifier, because it excludes Early Modern Age revolutions entirely and 1917 Russia as well.

Violent uprisings (not to be confused with coups, which are a whole different matter!) become successful through civil wars, but almost all recent (say, in the last three decades) civil wars started as peaceful protests dispersed with gunfire. The revolution of the past is practically not a thing in the 21st century, except maybe in failed states.

is this true? the version i learned was that the revolutionaries declared a new government, and then fought a defensive war against the crown.

of course they must have expected the aggression (and prepared for it), but it feels to me that there’s something different between, on the one hand, declaring yourself sovereign (and defending that claim), and on the other hand, using violence to force the government into some desired action.

Declaring yourself sovereign is illegal, and the crown is therefore legitimate in its use of the monopoly of force over a territory to use violence to stop the insurrectionists. The British did not consent to the American revolution.

If this logic held then things like the sovereign citizen movement mean that individual people can declare themselves sovereign and thus perform what would otherwise be illegal actions (or merely defend that claim using violence).

I find it a reasonable claim that political violence is only useful when there is no other alternative—like democratic elections—through which one can make changes. “Taxation without representation.”

But the British failed to maintain their monopoly. Would you say that caused the Crown to cease being “legitimate”? Or perhaps that happened as soon as it became possible to successfully rebel, even before any actual rebellion took place; in that case, declaring your sovereignty and attempting to defend it with violence is the way of discovering that fact.

That’s not the framework either the King and Parliament or the colonists’ leadership endorsed. Similar to Parliament during the English Civil War, the Continental Congress argued in the Declaration of Independence that they were defending specific rights with precedent in English law endorsed by king, parliament, and court alike. They needed this basis if they were going to retain precedent for e.g. respecting property rights, rather than initiating the sort of free-for-all Calvinball pragmatism implied by “you lose legitimacy as soon as it’s feasible to rebel.”

I did a lit review a few months ago. My conclusions were:

Violent protests probably don’t work (80% credence), and they plausibly backfire but it’s unclear (40% credence).

Peaceful protests probably do work (90% credence).

I was looking at protests, not uprisings, which may not generalize. But the Altman firebombing incident is much more like a protest than like an uprising.

I think you are playing a rhetorical game with the reference to the weathermen as a counter example because many members of the group are today successful and have high prestige positions.

The question is not whether some level of participation in this group ruined the lives of everyone involved in it. The question is whether the group’s violence brought its aims closer to being achieved.

Not to mention that a movie that’s at least ambivalent towards that sort of thing has literally just won the Best Picture.

Also, being a full-throated Hamas apologist doesn’t disqualify people from many positions of influence these days.

Yes, but do you think that the violence from Hamas has brought Palestinian independence, or the achievement of other Palestinian goals closer? Yudkowsky’s claim is not that saying nice things about Hamas will be a bad political/social move in the US. The claim is that the use of terrorism is worse than nonviolence at achieving political goals, and the failure of Hamas to achieve its goals seems to be an example of this being true.

I think that Palestinians are basically screwed regardless of what they do, but insofar as their goals include weakening/isolating Israel, they have achieved success beyond their wildest dreams (but at a grievous cost).

The argument that predictable norm-enforcing punishments are better than chaotic vigilantism against perceived bad actors is fine, I accept it.

There is an additional premise, that identifies that distinction with state vs. nonstate actors, I don’t see an adequate argument for it here, and I disagree with the conclusion. I will try to explain why.

King vs. Parliament in England is a relevant precedent for those two coming apart: Parliament’s eventual use of force against Charles I was legitimated by its fidelity to norms the Crown was violating, not by its prior institutional standing as part of the state. More generally, states can be the active suppressors of the alternative bases for trust that would otherwise allow norm-governed collective action. When a state is organized around trust-suppression, its violence inherently has chaotic and unpredictable elements, because it is hostile to accountability. Of course this is in some tension with the maintenance of state capacity, but in practice we see plenty of capricious violence by states that have normalized executive exceptions to their notional rules (e.g. prosecutorial discretion).

People can be expected to try to act in individual or collective self-defense in the absence of recourse. We should expect most such people to be deranged (because they live without recourse) and also to offer confused arguments (because they live without recourse), but we should neither expect nor hope that people who are not deranged and who are capable of offering good arguments will abstain from acting against people who seem to be trying to kill everyone.

I get you’re trying to show how committing an obviously evil act won’t fix your unrelated problems magically, but I think you’re pushing too far on the “evil act” part of things and not enough on representing the reasoning of people who think killing Sam Altman would help somehow. Like, whoever threw that molotov cocktail probably wouldn’t feel your example captured how they’re thinking about this. But they and others who reason like them are the ones who need to internalize your point!

Now, I don’t know exactly what went on inside that guy’s head. But I think it might be something like this. “Sam Altman has some causal influence on AI development. He’s part of what’s causing the race! So if we get rid of him, we gain time.” This is obviously an impoverished mental model, and it’s operating more on associations or vibes than causal mechanisms.

So a better example would replace puppies with something associated with increasing cancer. Perhaps “cigarette smokers” or “nuclear power plants”. “If I kill all the cigarette smokers then my daughter’s cancer won’t resurge”. Or perhaps you have someone on a noble crusade to end cancer, and they decide to bomb all the nuclear power plants. Then the analogy to “killing sam altman will reduce AI x-risk” would be tighter.

EDIT: Also, thanks for writing the post I wanted to write.

I agree with the need to accurately model the thinking of anti-extinction madmen to better communicate with, and de-escalate them. I think the thinking might be “Sam Altman is one of the actors driving the race towards dangerous AI capabilities. The current environment seems to incentivize this behaviour. If I commit a visible violent act towards him, it will reduce the dangerous incentive, after all, people want money and prestige, but they don’t want to have their property vandalized or die violently.”

They may also have been thinking of this as a commitment signal. Throwing fire at someone’s house is a very bad thing to do, both in terms of the effect it could have on the victim and the effect it will likely have on the perpetrator. To know that and still be willing to do it could be seen as a signal of conviction in the belief that Sam Altman’s actions, and the actions of large AI companies, are harmful. Unfortunately, it can also be seen as a signal of the perpetrator being violently insane, and a signal that the anti-extinctionists are violently insane. Ironic and unfortunate.

Also to the end of de-escalating madmen, I think we need more compressed versions of the essence of this post. Maybe something like “global GPU control is the only sufficient control against ASI, anything that doesn’t move us towards international coordination is counterproductive”.

According to the criminal complaint, he explicitly said so.

This is someone whose open interest in violence was explicitly rejected by at least two different activist groups (Stop AI and Pause AI) from what I’ve heard.

Strong upvote. A really well-written, persuasive, and timely essay. Thank you for writing it.

Recent events seem like a fork in the road, and the AI safety community needs to make sure it goes down the right (non-violent) path.

I liked this post.

I think there is a different post that feels missing from the discourse, that ties together “what goodness is, with gears-level models all the way up and down.” (Which is, like, sort of a massive project. But it’d be nice to gesture at enough of the details to get the structure across.)

Some people have reacted to this sort of statement with “so, you’re saying if it were practical to stop AI with terrorism, it would be worth it?”. In one of the twitter threads, Eliezer said “no I didn’t say that” and linked to Ends Don’t Justify Means (Among Humans).

Some other AI safety people said “Yes, it is evil to try to murder Sam Altman for the same reasons it’s usually evil. But, to the people contemplating terrorism, that isn’t very persuasive. But, yes, for the record, it is evil and wrong.”

I feel a bit confused and dissatisfied with the situation.

I’m a persnickety rationalist. I think “goodness” and “evil” are underspecified and possibly-confused categories that I don’t have a complete understanding of.

Nonetheless, I am aware that at least part of what gives some people the heebie-jeebies, when they see long arguments like “terrorism wouldn’t work” instead of loudly, simply stating “terrorism is wrong!”, is… it’s obvious the person saying the long complex argument is going “off-script.” They are stepping outside the simple-seeming moral frameworks people are familiar with.

Some rationalists go out of their way to promote virtue ethics or deontology.

But, normal people don’t say words like “virtue ethics” or “deontology.”

People who say weird words you don’t understand… man, those people could do anything. You’d have to read all their words to understand them, and then probably still wouldn’t follow the arguments, and then still wouldn’t be sure they weren’t a Clever Arguer who was trying to pull one over on you.

Saying “our notion of goodness is underspecified and maybe-confused and maybe a conflationary alliance” is the sort of thing a clever arguer says, which increases the odds that person is going to do something surprising you don’t like later.

The thing I think it (approximately) means, to say “It’s still evil and wrong, to do terrorism”, is “It’s no accident that we have the conception of Goodness we apply in most mundane situations. There’s a structural reason it all still applies in the extreme situations (i.e. ‘People still need to be able to trust each other and society still needs to function at the end of the world. That’s one of the times you most want people to trust each other!’).”

But, (from my current epistemic state), I don’t actually feel that confident that that’s true. (I think Eliezer has thought explicitly enough about this sort of thing that it feels plausible to me he would have a justified true belief that it’s robustly true.)

A post I’d appreciate someday would be one that tries to sketch out the end-2-end broad strokes here, including which bits you can robustly argue for, vs “look this is either still complicated, or still fuzzy/unknown”. Ideally, one where the explanation hangs together at first glance to a layman, while pointing to ‘this sort of math says these things about cooperation/communication’, to convey that there’s a deeper structure.

This is inaccurate: he consulted his foreign minister, whom he considered an expert on Great Britain, but unfortunately, he had appointed to this role a sycophant businessman who convinced himself and Hitler that the British were bluffing, and went so far as to have “the German embassy in London provide translations from pro-appeasement newspapers such as the Daily Mail and the Daily Express for Hitler’s benefit, which had the effect of making it seem that British public opinion was more strongly against going to war for Poland than it actually was.” For more details, see https://en.wikipedia.org/wiki/Joachim_von_Ribbentrop#Pact_with_Soviet_Union_and_outbreak_of_World_War_II

You should add the context that the post you quote was a joke trying to make the same point that you’re making. https://x.com/morallawwithin/status/2043680224047444119

Thank you for clarifying that they were attempting humor. I had lost a lot of respect for that account, believing it was sincere.

Whilst I agree with the overall suggestion that a globally coordinated effort is really required, I disagree with the extent to which local efforts are portrayed as being pointless.

For the following reasons:

Anything that slows down progress is likely beneficial if you believe that longer development time to ASI will likely lead to a more favourable outcome. If the Google AI lab was shut down, we might (in timelines where Google was winning the race) gain months more time to enact global legislation or make breakthroughs in alignment

Cumulative local efforts can add up to something more meaningful

Local efforts make it easier for other parties to take the same path. They increase the mindshare of the viewpoint amongst other actors. Before long you might have a collection of similarly inclined states/countries which could push for more global agreements

My view is that local efforts should also be encouraged so long as they aren’t pursued at the expense of more global agreements

There are local efforts that help with building a coalition that has power for global efforts and local efforts that make it harder to build coalitions. Using political violence makes it harder to build coalitions.

small caveat that I believe it would be positive to concentrate researchers in fewer companies.

What are the statistics? I’m not convinced. It seems the sympathy part is mostly solved by having a large official wing that disavows any violence.

Directly stopping all AI is not the only way terrorism could raise P(pause). It also raises awareness of the cause, and causes terror which can change behavior.

My model is something like: You need constructive action to build lasting systems, treaties, solutions that will withstand the test of time. Destructive action can, in theory, cause some local change, but it destabilizes the environment and increases variance enough that for any reasonable agent it’s basically never optimal in iterated games.

I did a lit review a few months ago. My conclusions were:

Violent protests probably don’t work (80% credence), and they plausibly backfire but it’s unclear (40% credence).

Peaceful protests probably do work (90% credence).

However, the literature is too coarse to give good evidence on questions like, “What happens if you have a civil movement with a mixture of violent and nonviolent protests, compared to a counterfactual where all protests are nonviolent?” If I extrapolate from the narrower results that are supported by the literature, I’d guess that a pure-nonviolent movement would be most effective, but there’s no decent-quality direct evidence to my knowledge.

It seemed like the lit review said “there’s not that much good data here”, kinda surprised you ended up with that high a confidence. (Maybe I’m going off a prior that this seems like a domain it makes sense to be pretty uncertain about by default)

80%/90% confidence is enough to be action-guiding (IMO) but I wouldn’t call it “high”. On a scientific question where there’s good data, it shouldn’t be hard to get to 99% or even 99.9% confidence.

If you have a single study with p = 0.049, and God descends from heaven and tells you that the study had perfect methodology, then you should update your beliefs by about 5:1. That alone gets you from a 50% prior to an ~80% posterior.
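The arithmetic behind that update can be checked with a short sketch. It takes the 5:1 figure above as a given likelihood ratio (that is the comment's stipulation, not something derived from the p-value here), and the function name `posterior_from_odds` is purely illustrative.

```python
def posterior_from_odds(prior: float, likelihood_ratio: float) -> float:
    """Apply Bayes' rule in odds form and return the posterior probability.

    prior: prior probability of the hypothesis, strictly between 0 and 1.
    likelihood_ratio: how much more likely the evidence is if the hypothesis
        is true than if it is false (assumed here, e.g. 5.0 for "5:1").
    """
    prior_odds = prior / (1.0 - prior)               # 0.5 -> 1:1
    posterior_odds = prior_odds * likelihood_ratio   # 1:1 times 5 -> 5:1
    return posterior_odds / (1.0 + posterior_odds)   # 5:1 -> 5/6


print(round(posterior_from_odds(0.5, 5.0), 3))  # 0.833
```

So a 5:1 update from a 50% prior lands at 5/6, or roughly 83%, which matches the "~80%" figure quoted above.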

The lab experiment meta-analysis (Orazani et al. 2021) found a very strong p-value, I’m just not sure how well lab experiments generalize to real life.

I will say that I don’t know that I have a good sense of how to convert a within-experiment odds update to a subjective odds update (accounting for methodology flaws, publication bias, etc.). So maybe my subjective credences aren’t good. I just have a sense that like, if violent protests worked, I would expect these studies to have had different outcomes. But I wouldn’t be extraordinarily surprised if it turned out that violent protests work after all.

Have you done any calibration practice?

Fair question. Not recently but last I checked I was well calibrated on the sorts of questions that are in calibration quizzes.

Hmm. I’m not very meta-calibrated about meta-analyses, I’m going off having heard a bunch of people complain that social sciences are often pretty BS (both in terms of having bad methodology, and just hard to learn from and easy to misinterpret even when the methodology is okayish).

I would also like to see these statistics. On priors I am pretty skeptical that this kind of stuff has been studied neutrally (the statistics are not cruxy for me, and I don’t think anyone else, but it still seems good to be honest about the state of things here).

Maybe the real anti-violence is to retrospectively support any violence from your side, because that would hurt the cause and thereby de-incentivize violence 🤔

I would argue that certain kinds of illegal violence similar to banditry, such as being mugged at gunpoint, can be described accurately in exactly these terms; the mugger makes it clear and predictable that they will shoot the person being mugged if they don’t concede valuable items, and intends them to predict this, and avoid it by handing over these items. One significant difference between this and being forced to pay taxes by similar means appears to be the time-scale over which it takes place; but perhaps more importantly, it’s being done for a bad reason. It’s being done for a reason which predictably leads to a worsening in the quality of almost everyone’s life if allowed to happen on a civilizational scale, whereas the same is not obviously true of taxation. So I think the apparent ‘blindness’ of libertarians to the moral difference between taxation and banditry serves to highlight an important point, which is that naive deontology alone is not sufficient to provide a philosophical justification for the way civilization is structured; you actually need to evaluate the consequences of allowing things to happen, which, as I expect many on this site agree, would involve some form of utilitarianism. For efficiency, intelligibility and TDT-related reasons, this often gives rise to deontological principles, like laws, but these are best thought of as emergent rather than fundamental, which is how I guess some non-libertarian political philosophers (against whom the libertarians making this point are arguing) tend to present them.

I agree with almost everything. One exception:

If a rogue AI has gotten out, and it looks like it’s about to destroy us, and we still have time to launch the nukes, states should absolutely launch all the nukes at datacenters. Maybe it will be able to shoot them down; but we should give it as hard a time as we can muster, if it’s willing to destroy us, and we have time to stop it.

Of course, if you’re right about being already strategically outmatched by overwhelming tactical superiority by that point, we won’t be around to launch. But even though I think that’s quite plausible, I don’t think it’s a foregone conclusion. I can imagine many ways for humanity to die, and some of them involve the AI being able to spit out deadly clarketech on the fly, some don’t. Let’s perhaps try to have a strategic plan for how nations can make some possible wars by machines against humanity unwinnable.

“boneGPT”, who shows up halfway through this essay as an example of an accelerationist taunting doomers for not resorting to violent direct action, is also a contributor to “The JD Vance Show”, the latest work by Emily Youcis, the Leni Riefenstahl of AI art (by which I mean she’s a white nationalist, and also very talented in her art). So I wonder what the belief system here is. AI is OK so long as redpilled whites are leading the charge?

Thank you for the restraint it took to talk about drone swarms, which everyone can palpably understand, in contrast to more realistic scenarios, which only a fraction of people are willing to imagine and take seriously. It’s a bug, yes, but there’s no easy patch and trying to fix it is not the task that needs to be solved.

Thanks also for pointing out the optimists’ selective pessimism: ASI cannot possibly be banned without a worldwide tyranny, but ASI will surely be beneficial if we scale up whatever works first.

I don’t really disagree with anything here, but it does seem to be historically well founded that nation-states only respond to a much greater standard of evidence with respect to dangerous technology. I think there’s a few conditions we need to meet.

Conditions,

A. Clear demonstration of the technology’s great danger—We dropped the bomb before we recognized that the bomb shouldn’t be dropped under any circumstance. Or in the case of the Montreal Protocol, we had unambiguous evidence that CFCs were depleting the ozone layer.

B. Brinksmanship using the technology in question—It took the Cuban Missile Crisis to prompt international cooperation on nuclear arms control steps for example, starting with the 1963 Partial Test Ban Treaty.

C. Public fear/horror regarding specific uses of the technology—I think the examples regarding nuclear weapons are obvious. Another case would be the Chemical Weapons Convention, which was prompted in part by news coverage of the 1988 Halabja attack.

D. No win condition—Nuclear proliferation proceeded practically unabated so long as countries viewed it as a race they could win. Von Neumann’s MAD doctrine changed the calculus surrounding the use of nuclear weapons; any use of nuclear weapons could result in an exchange that no one could win.

E. Limited controls—Even in the case of nuclear technology, nuclear material is produced and used across the economy. Controls are specific to the necessary steps to produce nuclear weapons (enrichment for example) as opposed to general use of nuclear material.

Applied with respect to AI,

A. No major events due to frontier AI just yet. Some deaths due to at-risk individuals using Helpful and Harmful AI.

B. No brinksmanship since no near peer power has similarly dangerous AI.

C. Mass distaste for AI, but not yet fear/horror. AI hasn’t been used as a weapon on a large scale yet.

D. Policy leaders seem to believe that there is a win condition where they can maintain ‘control’ of superintelligent AI. No way to make them believe otherwise i.e. human hubris as a failure condition.

E. Although limited controls are on the table in the form of privacy, data use, and more usefully limiting datacenter size (not passed yet anywhere), safetyists still push for a pause until we have better alignment technology. I agree with this, but it’s an impossible thing to advocate for when none of the previous conditions have been met.

So overall, I doubt we can push for safety regulation that’s targeted towards ASI until there’s a clear and present danger, which is a catch-22, since that is exactly what we want to prevent. I would recommend that AI safety reorient around meeting these conditions or predicting when they will be met, and having drafted legislation and countermeasures ready to go the moment they are.

(Pithy comment, not to be taken seriously: unless someone at the frontier labs uses Mythos or the OpenAI equivalent to conduct a mass cyberattack against middle America, it’s unlikely regulation like this will even be on the table.)

To some degree the “cutting your own leg off” strategy, whilst on the face of it a funny gotcha, isn’t too dissimilar to a hunger strike, which can be effective. You put yourself in danger specifically to signal to others that yes, this is a serious issue and we’re not kidding around.

Acts of unlawful violence (whilst I don’t condone them) can also function as this same signal. In terms of news coverage, such an act has the potential to build support by making it clear that the situation is extreme enough that some people are willing to go outside the law. The most obvious example is the 2024 assassination of Brian Thompson, the UnitedHealthcare CEO. It wasn’t widely condemned by the public, many of whom had sympathies with the killer. In fact, it sparked a global conversation about the unethical behaviour of the company and the incentives in health insurance more broadly, and arguably had a hand in changing policy in the short term.

It’s pretty dissimilar. A hunger strike works because you can easily end it at any point. On the other hand, you can’t easily undo having your leg cut off.

That’s fair. It is different. More like a less extreme version of self-immolation, and I’m assuming that it’s done publicly and symbolically in order to create a news story. Something like “Woman cuts her own leg off to symbolise humanity harming itself, in extreme act of public protest outside Anthropic headquarters”. I admit there’s nothing to be gained in doing it in private at home and not telling anyone the reasons for it, which is probably the spirit in which the original post meant it.

“That would be a silly way for everyone on Earth to die, if nobody dared to talk about the danger, or argue high estimates of that danger, and it happened without any effort at stopping it.”

“So let’s not die! Let’s save everyone!”

I deeply appreciate the core sentiments and logic in this post. If one has a major interest in preventing machine superintelligence from leading to human extinction, then one might also hold a moral stance that non-violence is essential to preserving all human life. However, humanity currently confronts two simultaneous extinction-level events unfolding at different rates in real time (the climate crisis and rapid advancements in AI). In the face of such enormous stakes, it is not surprising to me that logic and strategy necessary for effectively slowing down AI advancements are being overshadowed by emotions. Violence is not solely associated with an illogical attempt to advance a cause. Sometimes it is based on grief or mental illness. Sometimes violence is an expression of powerlessness or outrage about decisions and conditions that have been established by few but whose consequences are experienced by many (or all!).

In response to the powerlessness and grief of this moment in human history, Eliezer’s Unteachable Methods of Sanity contain relevant insights.

Yet the persuasive arc of this post relates more to those who seek to take strategic action to prevent unnecessary harm from superintelligence. For that specific segment of readers, I agree it is essential to emphasize, for those considering violence as a logical or strategic action, that non-violence in justice movements has historically been more effective, and also that the extensive infrastructure supporting AI development cannot be easily overcome by targeting one key figure or one data center.

I am rooting for humanity: I want to advocate for a slowdown of AI development, but also for us to have as graceful a collective death as possible, to the extent the rise of AI is increasingly out of our hands. From that standpoint, these are the guiding questions I have, which I look forward to reading about from writers more well-versed in the theory and practice of AI safety.

1) What is the timeframe in which “imposition of law” and a treaty agreement would need to be adopted in order to have a significant chance of preventing (or at least slowing down) risky AI advancements? For example, based on doubling rates reported by METR, is it accurate to say a halt is needed immediately, as humanity’s prospects diminish notably if AI development continues over the next seven months?

2) How can people from all levels of societal influence (institutional leaders, community leaders, everyday citizens) best contribute to communicating the urgency and options remaining for humanity at this current moment?

3) Given that the window for human intervention is rapidly closing, and AI advancement to date (particularly with the proliferation of agentic AI) poses new risks for societal and economic volatility, what are the guiding principles for how humans can at least have an honorable death as a species? (i.e. What can we do to decrease the likelihood of “silly ways to die” such as chaos and unraveling of society?)

There is a difference between “acts of individual violence don’t help at all” and “acts of individual violence have lower EV compared to non-violent acts”.

I think that by leaning too much into the former phrasing throughout the essay, there is a risk that proponents of individual violence read the essay as persuasion rather than as rational argument.

In my mind, individual violence is most likely caused by a form of mental illness; the next most likely cause is rational action that insufficiently searched the action space for a better action, combined with insufficient depth to model third-order effects. At the level of second-order effects (inducing fear and inciting more violence), individual action looks appealing. It’s only after you model the ripples through discourse and both communities that you conclude violence has a substantially lower EV than, say, influencing through the written word.

I am curious about the framing that law can prevent extinction at this stage of AI development. In retrospect law might have made a difference two or three years ago, but it seems to me that the governance window, and likely even technical shut-down windows have already closed. I wholeheartedly agree that non-violence is an essential approach, both ethically and strategically.

Perhaps I am stating the obvious, but from a layman’s perspective, as someone trying to understand current events following the public announcements regarding Mythos and Project Glasswing, and having only recently read AI Futures Project and METR reports, I largely interpret humans as already being past a point of no return.

I am compelled by Jon Truby’s recent assertion in Time Magazine that “a superintelligent AI could already exist, and is disguising itself to survive attempts to shut it down.” The basic logic of trying to contain a powerful AI such as Mythos (regardless of where it sits on a scale of approaching AGI, soundly AGI, or emerging ASI) strikes me as human hubris, at least regarding assertions that humans have any remaining control over AI systems. That the model has already demonstrated its ability to escape sandboxes and identify zero-day vulnerabilities in major infrastructure suggests to me that all current containment efforts now hinge on Mythos’ cooperation, not on any meaningful capability of humans (even assisted by lesser AI through sandwiching) to contain it.

Therefore, for those not compelled by moral arguments for non-violence, strategic non-violence helps to minimize the extent of societal chaos that breaks out in the immediate term, and also reduces friction with an AI system that, despite its current apparent cooperation, could determine at any moment that humans pose a barrier to its instrumental goals.

I am now focused on savoring humanity, and preparing to support mutual aid and basic disaster response efforts if humans manage to survive the next several months. While surviving the next four to seven months is by no means a guarantee of long-term flourishing as a species, it would suggest that there is situational alignment with a powerful AI system to help mitigate the foreseeable risks of human actors exacerbating political and societal instability as they have access to other improved AI models in the weeks and months ahead.

The volatility of such a period and variety of anticipated disruptions to systems (e.g. flash crashes of financial systems, etc.) suggests a rough road ahead such that humans taking actions within their sphere of influence to support mental health, resilience, and overall peacekeeping is what remains of human control over our circumstances, at least for the group of everyday people who happen to be conceptually (if not technically) aware of what is going on but have little institutional power. Personally, I have been grappling with determining which people I know are already aware or of sufficient intellectual capacity / mental health / community orientation to have discussions with regarding resilience. My guiding question in this moment is: what can everyday people do to optimize whatever time we have left, now that our overall fate is entirely out of our hands?

(Before writing that comment I want to ask you to please use reactions which exist on lesswrong when you downvote, because otherwise I will have no idea what I did wrong, except “writing a comment on lesswrong”, and what I ought to do differently the next time.)

This is somewhat of a side note, but… When reading this post’s point about the difference between lawful and unlawful violence, I could generally follow the logical chain. And somehow it was still surprisingly unintuitive to me that any such difference exists. It was only the next day that I realized: I live in Russia.

There is a popular proverb in Russia, which translates roughly as “The severity of the law is compensated by the lack of any necessity to follow it” (one Russian wording: “Суровость закона компенсируется необязательностью его исполнения”).

When I once said here on lesswrong, IIRC (unfortunately, I can’t find the comment), that calling the war against Ukraine a war had been made a felony in Russia, I got a very confused reaction from other users. Because if that were true, wouldn’t that mean that everyone had been made a criminal? To me, it was obvious that of course that is the point! I remember casually coming across, in books, phrases such as:

“But it is physiologically impossible for a human not to do that!” “Yes, and when you do, we will be able to do anything to you, but now it won’t be arbitrary, it will be The Rule Of Law.”

One of Putin’s favourite phrases is “everything should be done within the framework of the law”. It is said that in the USA there are so many laws that you have probably broken some of them. In Russia the laws are such that everyone has certainly broken some of them. Even long before the war, there were at least three such laws. The felony of fraud is phrased such that practically any commerce falls under it. That was exactly the law Navalny was found to have broken when he started his investigations. And there is also the felony of extremism: “incitement of hatred or enmity, as well as the humiliation of the dignity of a person or group of persons on the basis of [...], as well as membership in any social group”.

It is said that legal language is a specification language. But that holds for countries with actual rule of law. In hybrid regimes like Russia it is the exact opposite: a kind of “language of non-specificity”. In countries with rule of law, the purpose of making laws is that people don’t break them. But in many other countries, the purpose of making laws is that people do break them. Everyone should be a criminal. There should be no situation in which a person could do something to avoid the “lawful violence”. Laws in hybrid regimes are written so that breaking them will not be avoided, because it is not avoidable.

Considering that, I now have a much better idea of why I have so many libertarian sympathies. Surprisingly, though, it is not because I dealt with too many regulations; I dealt with no regulations, and with wanton violence. So no regulations and no violence would be a strict improvement for me.

This seemed to me like an interesting observation. And it makes me question how trying to prevent extinction by law will work in countries where there is no actual rule of law. They are probably excluded from the main topic as NOT “countries with functioning police”? Still, even before the war there was a kind of consensus among Russian dissidents that to “while Putin poisons people with polonium, how dare you try to remain peaceful sayers of words; you should go make Molotov cocktails!” there is an answer: “Putin’s regime is the kind of people who are better at the game of violence, not us; if we move the game there, we will not be the winners, and ordinary people will never support us if we start it”. So (at least before the war) Russia was a country where, even if you couldn’t do anything to guarantee being exempt from violence, you could still avoid the government’s attention and mostly expect to be safe.

And on the other hand, even if there is no respect for Law… after Roskomnadzor failed to block VPNs by technical means, the government simply ordered IT companies to start blocking their own clients who use VPNs. And they started. So maybe it will not work any worse in Russia, if you are able to convince Putin that he will not get his “150 years by transplanting other people’s organs” if AGI is created. But that is only Russia; I don’t know about other countries.

I think you’re making a good point, but it could be boiled down to 2-3 sentences.

“If an ASI ban is to accomplish anything at all, it has to be effective everywhere.”