Researcher at MIRI

peterbarnett

I spent one day writing up an AI 2027-style scenario about continual learning and misalignment. It’s not meant as a forecast, but instead to gesture at misalignment failures we might encounter with continual learning.

Full post here: https://naiveconsequentialism.substack.com/p/a-continual-learning-misalignment

Why the difference?

[ignoring misalignment risk] I think it’s pretty unlikely that by default ASI built by great powers will end up sufficiently empowering the middle powers. I expect that by default the ASI-creators will try and make the ASI follow their orders or the orders of their government, rather than something that upholds the global order.

I think that middle powers could potentially use verification mechanisms to help ensure that an ASI was being trained with a model spec that includes respecting the interests of middle powers. Overall though, I expect it to be very hard to actually get good assurance that an AI isn’t being trained in a way that middle powers don’t like. And therefore, I guess that it would be better to stop/slow.

I do agree that a reasonable ask of the middle powers is asking for some control over the model spec, but I think it’s probably better for the main ask to be about something that the middle powers have direct influence over, e.g., AI hardware.

Yes, and as a result (as I stated in the final paragraph) I think it would probably be good to wake up middle powers to ASI.

[Take that’s been bumping around in AI governance circles, not original to me]

The middle powers have strong incentives to stop/slow AI development (even if we ignore misalignment risk, which we shouldn’t), and can plausibly do something about it.

Even if we ignore misalignment risk, countries that are not the US and China by default will become totally geopolitically irrelevant if ASI is built. One or both of the US and China will have overwhelming economic and military power. The middle powers should therefore want to slow/stop AI development, until they can work out how this can be done without them becoming irrelevant.

Middle powers have some leverage here. States with critical parts of the AI chip supply chain (Netherlands, Korea, Japan, maybe Taiwan) can refuse to sell critical components without certain demands. The EU market is a fairly big deal. States with nuclear weapons can threaten to use them, if they risk becoming totally disempowered, and can defend the chip supply chain states.

My favorite ask right now is that chip supply chain states (Netherlands, Korea, Japan) should refuse to help manufacture chips (both selling components, and keeping SME machines running), unless chips are manufactured with secure verification mechanisms. These verification mechanisms can help give these states assurances that the chips aren’t being used to disempower them, and can be used to verify a halt to AI development in the future.

This move might be met with strong retaliation from states that want to keep buying chips, and so middle powers (including nuclear states) should coordinate and back each other up. This includes defense pacts.

I therefore think it would probably be good to wake up governments in these key middle powers to ASI. If ASI is real, then this is fairly straightforwardly in their interests, even ignoring misalignment risk.

That’s a good point. It does say:

These are commitments of the form: If an AI model has capability X, risk mitigations Y must be in place. And, if needed, we will delay AI deployment and/or development to ensure the mitigations can be present in time.

This isn’t explicit about a unilateral pause, but I think it would be kinda weird if this were meant to imply “And, if needed, we will delay [...] unless other groups are not also delaying.”

with the exception of interfacing specifically with you, Holden, on this topic, where your comments have seemed clear and reasonable and consistent across time to me

This doesn’t seem “consistent across time” to me, given that Holden is the author of a report called If-Then Commitments for AI Risk Reduction

Makes sense! I think the real ask is more like “signal boost if you think that would be good”

Zachary Robinson and Kanika Bahl are no longer on the Anthropic LTBT. Mariano-Florentino (Tino) Cuéllar has been added. The Anthropic Company page is out of date, but as far as I can tell the LTBT is: Neil Buddy Shah (chair), Richard Fontaine, and Cuéllar.

It might be good to have you talk about more research directions in AI safety you think are not worth pursuing or are over-invested in.

Also I think it would be good to talk about what the plan for automating AI alignment work would look like in practice (we’ve talked about this a little in person, but it would be good for it to be public).

Were current models (e.g., Opus 4.5) trained using this updated constitution?

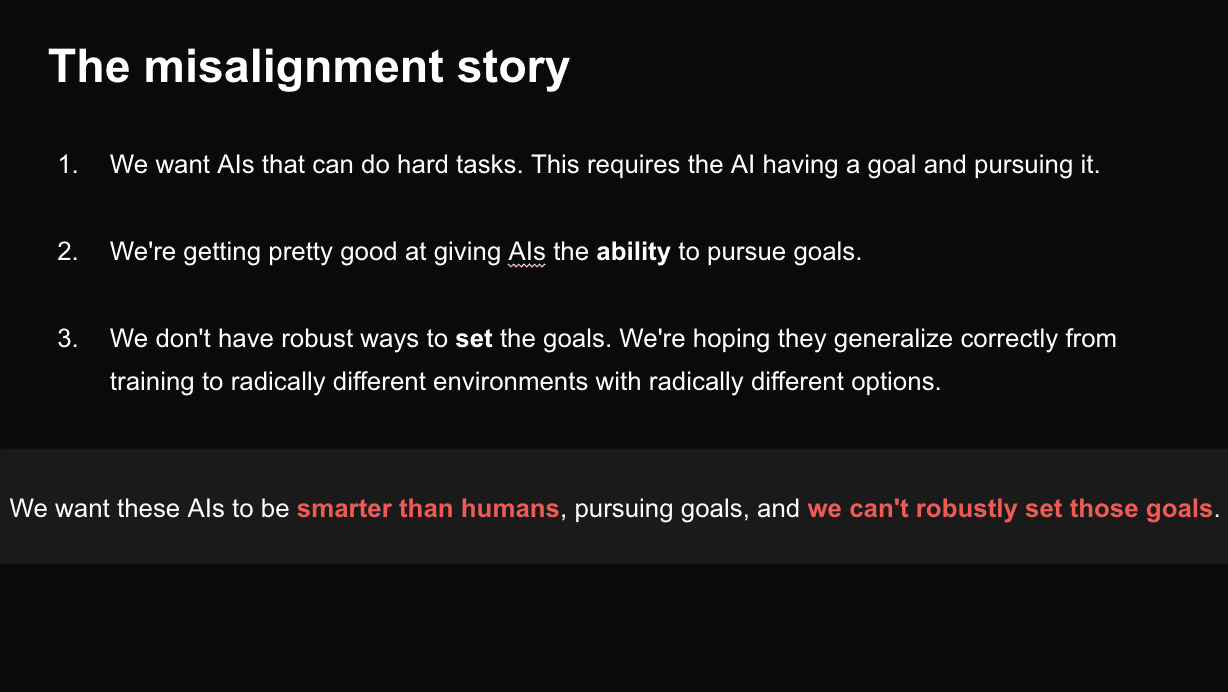

Here’s a slide from a talk I gave a couple of weeks ago. The point of the talk was “you should be concerned with the whole situation and the current plan is bad”, where AI takeover risk is just one part of this (IMO the biggest part). So this slide was my quickest way to describe the misalignment story, but I think there are a bunch of important subtleties that it doesn’t include.

Recognizable values are not the same as good values, but also I’m not at all convinced that the phenomena in this post will be impactful enough to outweigh all the somewhat random and contingent pressures that will shape a superintelligence’s values. I think a superintelligence’s values might be “recognizable” if we squint, and don’t look/think too hard, and if the superintelligence hasn’t had time to really reshape the universe.

Maybe I’m dense, but was the BART map the intended diagram?

The inability to copy/download is pretty weird. Anthropic seems to have deliberately disabled downloading, and rather than uploading a PDF, the webpage seems to be a bunch of PNG files.

I am very concerned about breakthroughs in continual/online/autonomous learning because this is obviously a necessary capability for an AI to be superhuman. At the same time, I think that this might make a bunch of alignment problems more obvious, as these problems only really arise when the AI is able to learn new things. This might result in a wake up of some AI researchers at least.

Or, this could just be wishful thinking, and continual learning might allow an AI to autonomously improve without human intervention and then kill everyone.

I like the sentiment and much of the advice in this post, but unfortunately I don’t think we can honestly and confidently say “You will be OK”.

Maybe useful to note that all the Google people on the “Chain of Thought Monitorability” paper are from Google DeepMind, while Hope and Titans are from Google Research.

Thanks!

Is this a recent thing with Opus 4.7? Malo noticed similar behavior here https://x.com/m_bourgon/status/2044849815964811333