Was a philosophy PhD student, left to work at AI Impacts, then Center on Long-Term Risk, then OpenAI. Quit OpenAI due to losing confidence that it would behave responsibly around the time of AGI. Now executive director of the AI Futures Project. I subscribe to Crocker’s Rules and am especially interested to hear unsolicited constructive criticism. http://sl4.org/crocker.html

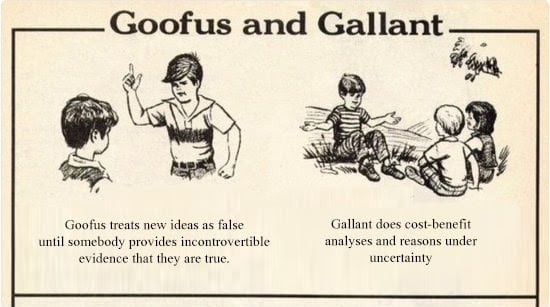

Some of my favorite memes:

(by Rob Wiblin)

(xkcd)

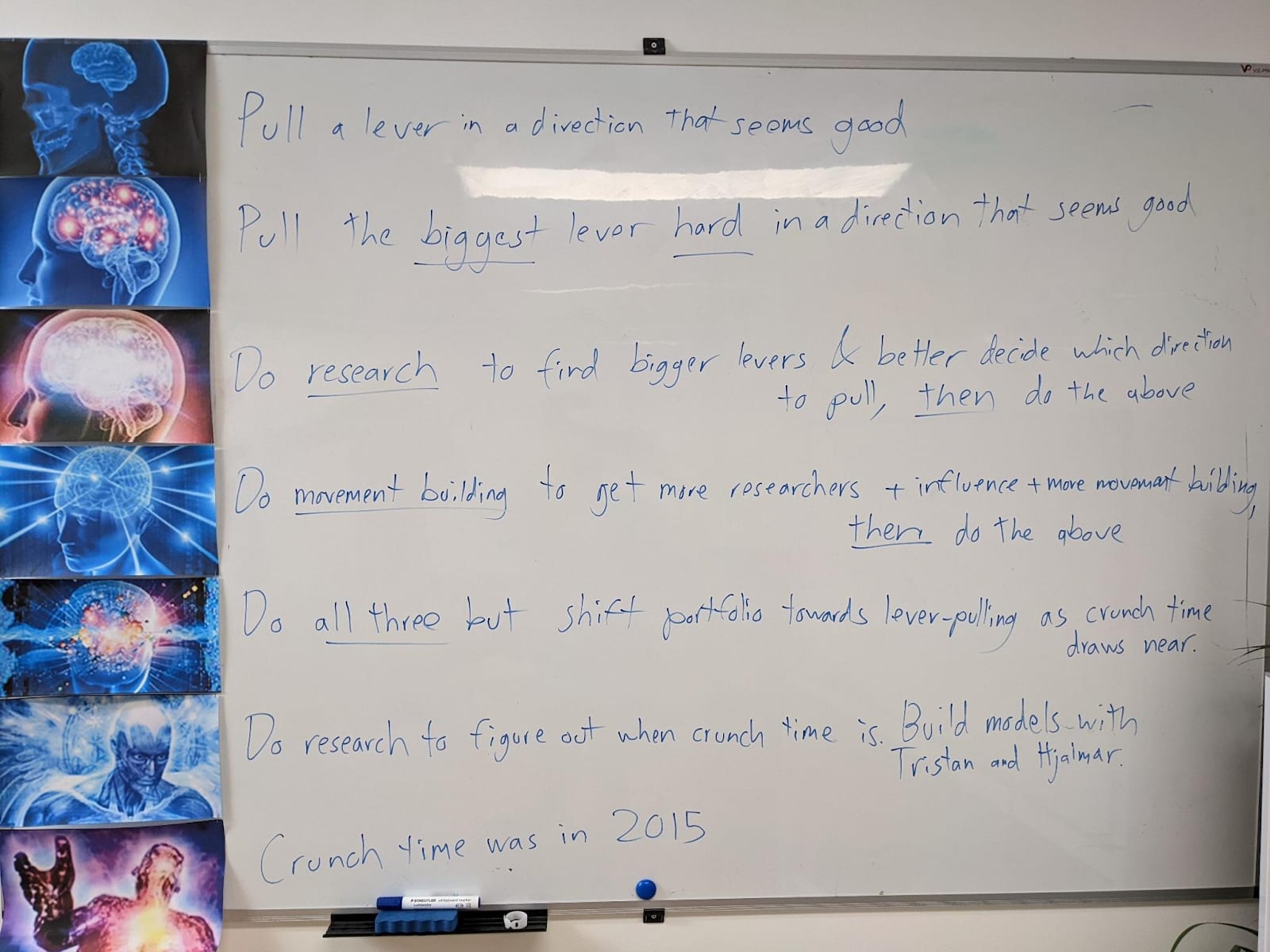

My EA Journey, depicted on the whiteboard at CLR:

(h/t Scott Alexander)

Oh, I don’t think it would.

Yeah, a thing I was alluding to is that maybe in other domains, e.g. coding, there’ll be a period where lots of people use AI for coding and tell themselves that it’s still them doing the coding, when really they are kinda useless middle managers between their boss and Claude. They tell themselves they are learning new languages etc., when really their coding skills are starting to atrophy.