Was a philosophy PhD student, left to work at AI Impacts, then Center on Long-Term Risk, then OpenAI. Quit OpenAI due to losing confidence that it would behave responsibly around the time of AGI. Now executive director of the AI Futures Project. I subscribe to Crocker’s Rules and am especially interested to hear unsolicited constructive criticism. http://sl4.org/crocker.html

Some of my favorite memes:

(by Rob Wiblin)

(xkcd)

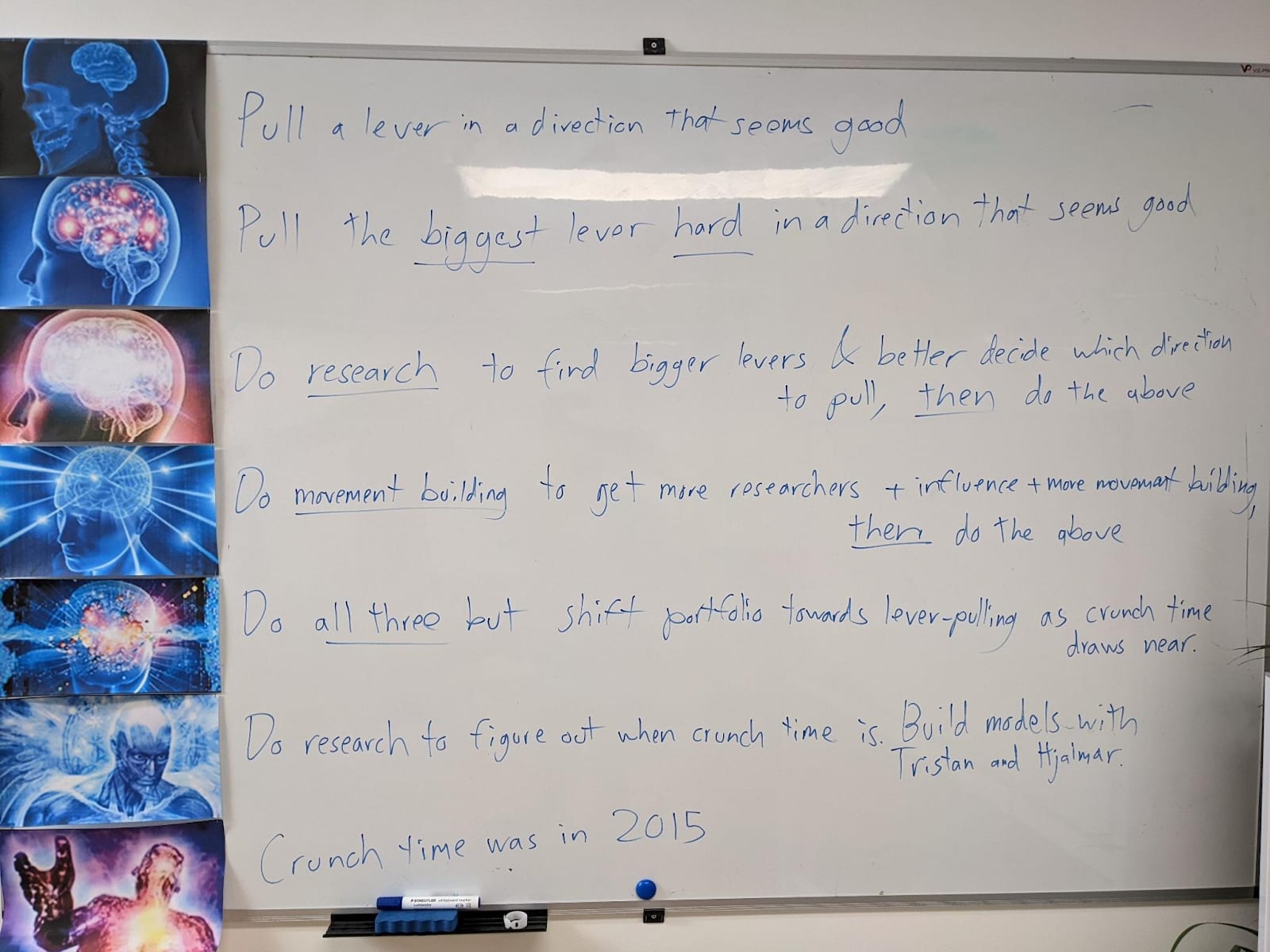

My EA Journey, depicted on the whiteboard at CLR:

(h/t Scott Alexander)

There’s an old gdoc from my time at OpenAI where I make a similar distinction, but I like it better: The distinction is between aligned, misaligned, and adversarially misaligned. Misaligned models that are not adverarially misaligned, are the in-between category; the Spec didn’t internalize in all the right ways, so e.g. the AIs sometimes misbehave, or have drives or biases they aren’t supposed to have. BUT, they aren’t plotting against you; they aren’t thinking seriously about how to prevent you from realizing they are misaligned; etc. Adversarially misaligned models, by contrast, are. They have become your adversary.

Note that with the right prompt and/or context, current models can become adversarially misaligned. But my best guess is that most of the time / for most prompts/contexts, current models are merely misaligned, not adversarially misaligned.

(A similar distinction occurs in humans; we might say for example “Alice is aligned on this issue; she agrees with our policy proposals and is working hard to help achieve them. Bob, by contrast, is not; he has his own agenda and doesn’t really care about our issues that much. But at least he’s happy to live and let live, and have honest conversations with us. Chris, though—that bastard is our enemy. He’s actively trying to block our policy proposals and he lied to us about this when we met him earlier.”