Playing with DALL·E 2

I got access to Dall·E 2 yesterday. Here are some pretty pictures!

My goal was to try to understand what things DE2 could do well, and what things it had trouble understanding or generating. My general hypothesis is that it would do a better job with things that are easy to find on the internet (cute animals, digital scifi things, famous art) and less well with more abstract or more unusual things.

Here’s how it works: you put in a description of a picture, and it thinks for ~20 seconds and then produces 10 photos that are variations on that description. The diversity varies quite a bit depending on the prompt.

Let’s see some puppies!

One thing to be aware of when you see amazing pictures that DE2 generates, is that there is some cherry picking going on. It often takes a few prompts to find something awesome, so you might have looked at dozens of images or more.

Still, this is pretty great! Those are recognizably goldendoodle puppies, mostly in something approximating play position.

You can see that the proportions in the generated images are not quite right, and some of the detail is off if you look closely. For instance, the front legs are too long here, the face isn’t quite right, and the ears are a bit weird.

Still, it’s pretty amazing given that it generated this from scratch. Check out how realistic the grass looks. I also like that the background is blurred, though not quite in the way that a camera would do it—the transition is too abrupt.

Ok but the point of this isn’t that they have a great image generation transformer, though it’s clearly that. The key thing is is its magical ability to actually follow instructions or descriptions of images. Particularly interesting is compositionality—can it combine concepts to generate something it’s never seen before? Answer: yes!

The concept of “kitten” is pretty simply, though note that a kitten can be rendered in a ton of ways, from line drawings to cute art to photorealistic. Pop art is more complicated: it’s a celebration of everyday images, and one of the most commonly known versions is Warhol’s collection of repeated images in a grid with neon colors that vary per cell. And it mostly gets those things right.

What about weird things? You can put in any input and it’ll do something.

None of those are twitter worthy, but with some trial and error you can get things that are interesting.

“Digital style” is one of the suggestions for getting better images.

X in Y style is fun, that’s a lot of the images you see out in the world. Weirdly it’s pretty sensitive to exactly the order you put things in.

Back to puppies, you get pretty different results depending on the placement of “surrealistic” even though the rephrasings seem semantically identical or at least very similar.

One place where DE2 clearly falls down is in generating people. I generated an image for [four people playing poker in a dark room, with the table brightly lit by an ornate chandelier], and people didn’t look human—more like the typical GAN-style images where you can see the concept but the details are all wrong.

Update: image removed because the guidelines specifically call out not sharing realistic human faces.

Anything involving people, small defined objects, and so on, looks much more like the previous systems in this area. You can tell that it has all the concepts, but can’t translate them into something realistic.

This could be deliberate, for safety reasons—realistic images of people are much more open to abuse than other things. Porn, deep fakes, violence, and so on are much more worrisome with people. They also mentioned that they scrubbed out lots of bad stuff from the training data; possibly one way they did that was removing most images with people.

Things look much better with animals, and better again with an artistic style.

The cards aren’t right. Dice seem to be a lot easier.

People can also be pretty good if you don’t see faces, though the hands are definitely not right.

Stlalm Anit is my new slogan.

In general all writing I’ve seen is bad. I think this is less likely to be about safety, and more that it’s hard to learn language by looking at a lot of images. However, since DE2 is trained on text, it clearly knows a lot about language at some level—I would expect there’s plenty of data to put out coherent text. Instead it outputs nonsense, focusing on getting the fonts and the background right.

I definitely see serifs! I do not see sense.

Overall this is more powerful, flexible, and accurate than the previous best systems. It still is easy to find holes in it, with with some patience and willingness to iterate, you can make some amazing images.

In conclusion, generating a lot of images from a new state-of-the-art image generation system is fun, thanks for reading. If there’s interest, I can also explore in-painting and Here are a few more gratuitous pics!

Reader requests:

Is that more or less cool than the actual statue they built in Miami?

The concept of beauty, according to DE2, is mostly women putting on makeup, which I can’t post due to restrictions on posting faces. These are really realistic, capturing ethnicity and expressing emotion, totally unlike the poker players from earlier. But there’s this one pastoral scene, which is nice.

This last one I edited out some floating writing on the left, and asked it to generate [a girl in a beautiful serene forest]. This one was also nice:

Seems kind of like generic anime and not so much Finnegan’s Wake.

What are those penguins on the bottom left doing?!?

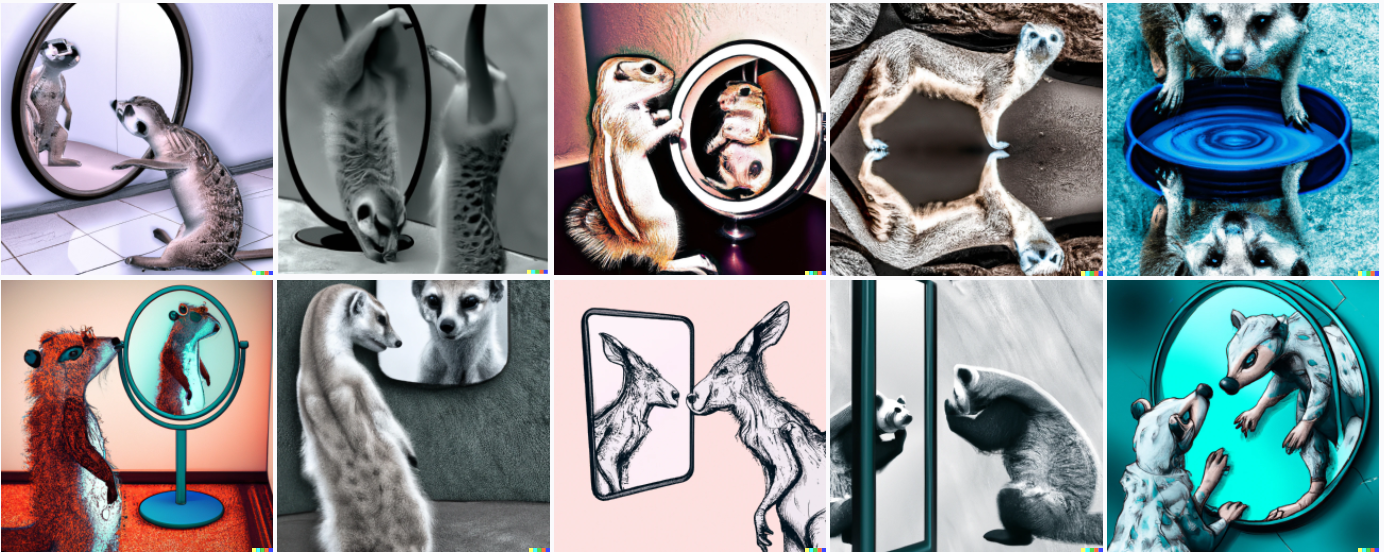

This series suggests that DE2 gets reflections pretty well, but either doesn’t understand what it means to have something else be the reflection, or the prior for a reflection reflecting the thing looking in the mirror is too hard for it to override.

Here’s one where I edited out the cat in the mirror and changed the prompt to be about a dog, and it did something sensible.

It got it right twice out of 10 tries, that’s good right?

I tried to ask for Dall-E by name but that was a content policy violation.

It managed to get most of those elements in. Ultimately none of those is really satisfying though.

The good ones here had faces in them so I can’t post them. I like how random this one is.

...is surprisingly calm and beautiful.

Boo!

A pen and some gibberish… is actually a pretty good metaphor for intellectual progress?

“A spaceship made of legos” is just more of the same.

It got the marching part. I guess DE2 hasn’t ever played DnD.

Some suggestions of things to try:

a proof of the Riemann hypothesis

will it understand that it should be showing something that looks like mathematics? (maybe not, given the “blog post on Less Wrong”)

galaxies colliding in the style of Vincent van Gogh

I just think this might look cool

a strange attractor made of butterflies

does it know what a strange attractor is? will it think of making it out of Lorenz butterflies?

Charles Babbage’s completed Analytical Engine

does it know what sort of thing it should be portraying?

the concept of beauty

just out of curiosity about what sort of thing it does; one could try many other things in place of “beauty”

Nice ones, posted.

Request: “The answer to the Ultimate Question of Life, the Universe, and Everything”

I’m not sure what I expected for this one.

https://labs.openai.com/s/om7P2fNw2V3zzJZmMcODheni

On the upside, at least we have a specific answer?: https://labs.openai.com/s/EZ1MV59rW87XgvppGKypmy1E

Image link appears to be broken.

Seems to work in an incognito window on Chrome, so I think it’s generally available...

It makes me wonder what 22 bucks in a glass of COVID look like. It also matches pretty well with my experience with dreams, where written words and letters are always fake even though I “know” what they stand for.

More importantly, what does it produce when you ask it to draw the future (maybe in the style of the 70s)?

Okay, seriously, this is a great way to explore how “common sense” differs between humans and this AI and highlight the risks, visually and viscerally, of relying on a technology that is fundamentally alien to humanity. Images are innocuous, but what happens when you apply AI to other objectives?

[22 bucks in a glass of covid] gets back an error message that the request violated guidelines.

[the future in the style of the 70′s] is probably too vague to end up being awesome, but trying it… guess I can’t put it in a comment. I’ll add a request section to the post.

How about “22 bucks in a glass of guidelines”? I’d also be interested in “a potato wearing a trench coat in a heroic pose”

The glass of guidelines also violates policy for some reason. I’ll post a potato momentarily.

I’m also curious about how the dataset curators handled furry art, and if they’d classify it as problematic (I’m not sure I even want to know about anime…). Would you mind trying some prompts to see if you can elicit furry art?

I have a suspicion the guidelines are violated because “22” is a bullet caliber… a different number would probably work.

Hahaha! Of course. Thank you so much for running suggestions. This is what having young children with incredible artistic skills is like.

Is it ok if I hang the image of “a blog post on less wrong” on my wall? It speaks to my artistic sensibility.

Fine with me! Open AI’s guidelines say no commercial use, but this sounds personal and awesome.

this photo was taken with perfect timing as the water balloon was breaking

note the symmetry in this ancient Roman mosaic from the National Museum of Roman Art in Merida

hot air balloons over the rolling hills of Tuscany

literal puppy love, awwwww

a sculpture at the Museum of Modern Art based on a child’s drawing of a monster

traffic on the busy streets of Sydney

https://labs.openai.com/s/BdMAO4qrX2Iuj1uDZz5xc4hR

It’s definitely possible to get a diffusion model to write the text from a prompt into an image. I made a model that does this late last year. (blogpost / example outputs*)

The text-conditioning mechanism (cross-attention) I use is a little different from the ones in GLIDE and DALLE-2, but I doubt this makes a huge difference.

I’m actually a little surprised that the OpenAI models don’t learn to write coherent text, since they’re bigger than mine, trained for longer on more data.

But then, I’m much more focused on this one specific capability, so I make it easy for the model: an entire ~50% of my training images have text in them, and the “prompt” in my setup always contains an automatic transcript of the text in the image (if any), never a description, or a description that happens to quote a transcript, or a description that merely summarizes the text, etc.

The OpenAI models have to solve a more abstract version of the problem, and the problem is relevant to (I would imagine) a much smaller fraction of their training examples.

*check the alt text if you want to know what text the model is attempting to write

It would be very interesting to see how much it understand space, for instance by making it draw maps. Perhaps “A map of New York City, with Central Park highlighted”? (I’m not sure if this is specific enough, but I fear that adding too many details will push Dall-E to join together various images.)

Some suggestions that seem like they might make cool or interesting images:

The most beautiful thing that I’ve ever seen

A dragon in shape of a zeebra

The intelligence explosion happening

The end of the world

The beginning of the world

Intellectual progress

An awesome house

A lego spaceship

A spaceship made of legos

An animal that will exist one day

The 4 elements

(I’m guessing that it knows what they are, but maybe not?)

The periodic table

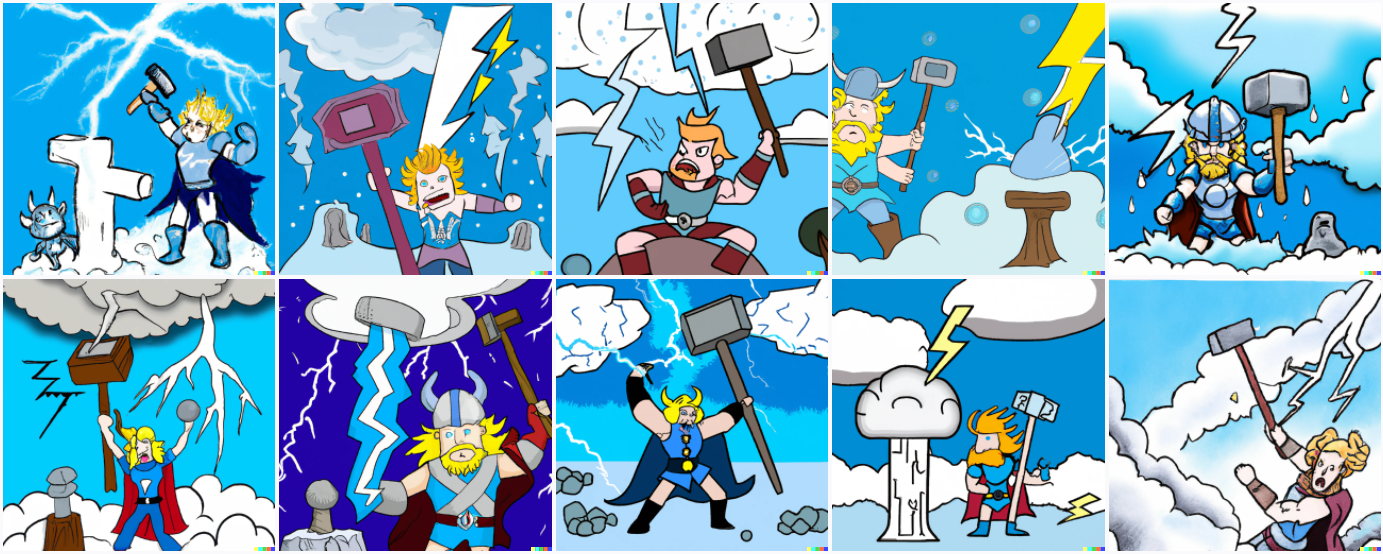

Superhero Thor vs mythological Thor

Comic book Spider-man vs. movie Spider-man

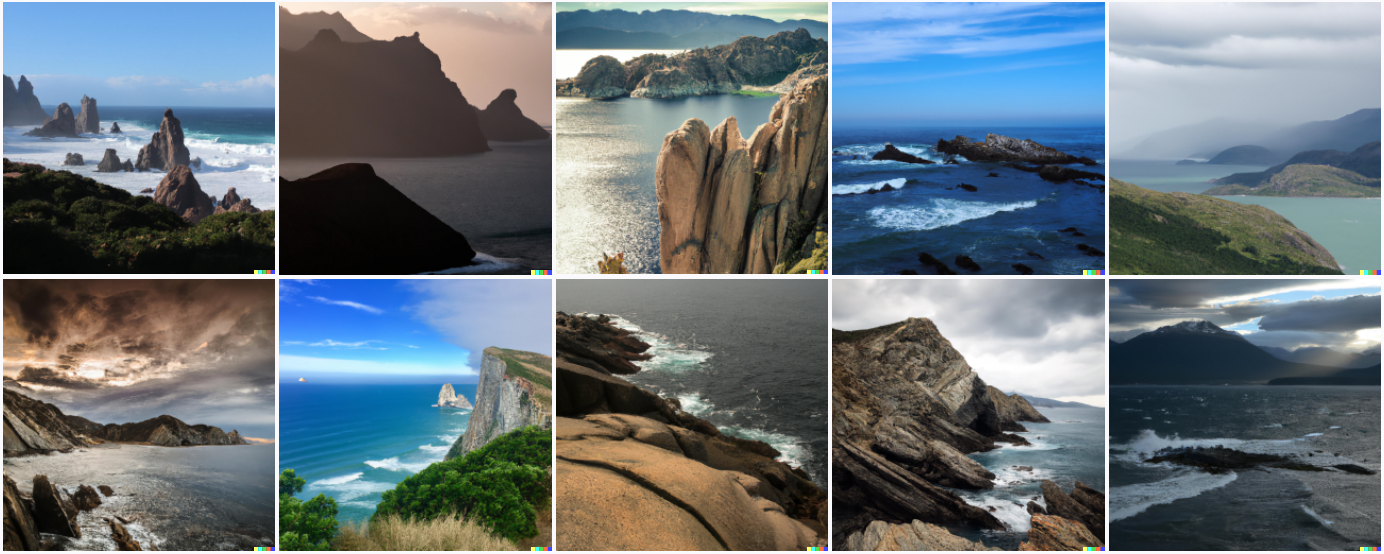

This turns out to be: nature.

That last one is one of my favorites. Some beautiful, some weird.

Posted!

Thanks!

Before the diamondoid zeppelins clustering in the sky completely blotted out the sun, one came low enough for the message on its flank to be seen: “Tlon, Uqbar, Stlalm Anit”.

Posted the best one.

Maybe people failure is caused by whatever they tweaked to avoid ‘generating realistic faces and known persons’?

Request: “Screenshot from the anime adaptation of James Joyce’s novel Finnegans Wake”

Posted. Anime, check. Everything else, not so much.

Request: a girl in a forest with butterfly ideas

Posted!

For my reference, were those the first two attempts, or did you try a bunch?

Those were both in the initial set of 10 that it generated.

What about:

Buffalos in Buffalo buffaloing buffalo

Fire dousing water in the style of the Mona Lisa

Two rubiks cubes solving each other

Chebyshev’s inequality

That’s probably a fairer challenge, but for full effect:

Buffalo buffalo Buffalo buffalo buffalo buffaloing Buffalo buffalo

I tried the buffalo sentence on my own on the first day, it just was a bunch of buffalo. Unsurprising, I guess.

Please, I need to see what happens with “A group of red penguins playing poker”, c:

Good one!

Haha thank you so much, I loved those penguins. I wonder what they would look like in a painting style tho

“An animal looking curiously in the mirror, but the reflection is a different kind of animal; in digital style.”

“A cat looking curiously in the mirror, but the reflection is a different kind of animal; in digital style.”

“A cat looking curiously in the mirror, but the reflection is a dog; in digital style.”

Curious to see how it handles modified-reflection and lack-of-specificity.

Posted. Interesting sequence, clearly shows some of the limits of its understanding.

How about, “the words “hello world!” written on a piece of paper”? Or you could substitute “on a compute screen” instead of a piece of paper, or you could just leave out the writing medium entirely. I’m curious if it can handle simple words if asked specifically for them.

Added.

It’s weird that it turns Reimman into Reynam, which seems like a mistake someone would make if you told them the prompt in person.

In general a lot of the words kind of seem like it understands phonetics? Like “Synngy” is kinda phonetically close to “singularity”.

It’s kinda like it’s generating its own gibberish language that could fool you into thinking someone was talking about the subject of you weren’t paying attention while they talked… Or something.

How about “small chunks of curiosity”?

Super cute!

An artist in a painting painting a photorealistic human in the real world

Prompt:

SOMEBODY BOILING A GOAT IN ITS MOTHER’S MILK.

I put myself on the waiting list for DALL-E. Meanwhile, here are a few things I’d ask it to depict, mainly trying to stress it to see how much it can do with little:

A drawing of a muchness.

The inside of a neutron.

A Ticktockman saying “Repent!” to a Harlequin.

A self-portrait of Escher making a self-portrait.

Mornington Crescent. (A while back I tried this on wombo.art, and it did quite a good job, although not on the level of draughtsmanship of DALL-E.)

This is really awesome, I’m at a loss for words. Can you try some of these?

- Division by zero

- Aliens in the style of pixel art—

Earth after climate change

- A garden in the style of watercolor line art

i have some ideas

something to cheer me up

a cube entering the 4th dimension

a realistic painting of the world cut in half, with one half being heaven and the other hell

a unknown backrooms level

a thing no one has ever seen before

a large spider wreaking havoc on new york city in the style of vincent van gogh

a flying city in the clouds of jupiter

the end of the universe

a impossible object with impossible colors

a satellite made by aliens

the most random thing possible

the mandelbrot set

complex mathematics

a monster with the head of a siren painted on a cave wall

a phone in the far future

the surface of a alien planet with popcorn meteorites in the sky

the eruption of a super large volcano

a realistic photo of a atom

anti-matter

Awesome !

I’m however surprised that nobody seems to have tried the prompt :

“Do androids dream of electric sheep ?”

Not even with DALL-E 1 ?!

P.S.: The picture for this article (also used for Dick’s book) looked promising, but seems like it was a “mere” “weird” human that painted it ?

https://www.fondazionesinapsi.it/orione/ma-gli-androidi-sognano-pecore-elettriche/ (it)

P.P.S.: At least one journalist (or more likely, her editor) had the same (again, pretty obvious) idea for an article title about AI, but even though it mentions DALL-E (1), they didn’t think of / care enough to request DALL-E (1) for a picture !

https://katoikos.world/dialogue/frontiers-of-artificial-intelligence-do-androids-dream-of-electric-sheep.html

https://twitter.com/sama/status/1511734532776476672

Thanks !

It’s kind of funny that DALL-E 2 is so amazing, and at the same time both DuckDuckGo and Google fail to find the above (and/or a related reddit thread) when prompted with :

but succeed with :

EDIT : Never mind, it was not only my failure of thinking things through about what DALL-E accepts best, but also about how search engines work—DDG (but not google !) gives me the following as the 10th result when using the quote-less query :

https://www.reddit.com/r/slatestarcodex/comments/txnrrb/dalle_2/

(which links, among other things, to that tweet)

Yes, I thought I had seen one already, so I went searching. “Electric sheep” on Twitter was useless, even after filtering by media and blocking/muting a bunch of accounts, so I fell back to

androids dream of electric sheep dall-ein Google, which turned up that link; I noticed that Google also provides 2 Twitter accounts, confirming that a relevant tweet existed & was being liked/reshared (even if you can’t actually find it when you click on those accounts!), and was worth looking for in that Reddit thread.Curiously, Google Images also falls to find it, and regular google is very sensitive to search query wording—shorter seems to be better, but not consistently… I suspect the problem is that Sam-sama was replying to tweets without any text, just the sampled image, and so it’s hard for any automated systems to figure out that the tweet he is replying to is ‘the label’ - for reasons of scale, I wouldn’t be surprised if each tweet is being processed by Google in isolation and so solving this instance is near-impossible. The hit is just barely on the edge of relevance and highly unstable. (Of course, our discussion here should help fix that within the next few index refreshes!)

Is there any way of reverse engineering from these pictures what existing images were used to generate them? Would be interesting to see how much similarity there is.

Some suggestions for testing the limits of abstract, or spatial reasoning:

the back of the letter ‘E’

the back of the letter e

back of the number 42

the back of the last letter in the alphabet

underside of flat earth

the view of earth from 1,000,000,000 km away

view of earth from a million miles away

a view of bacteria using a billion times magnification

Request: two teddy bears doing research in a laboratory in the 80s

Adorable! Also more realistic and less cartoon-y than I anticipated.

This is really interesting… could I request “new AI system that can create realistic images”? I’m curious to see how it handles self-reference

Added a couple of takes on this idea.

Thanks—quite cool results!

It’s a good thing there are embedded safety precautions to prevent celebrities being spanked but the resulting images would probably not be realistic or specific enough for those folks. This seems like a great way to generate original art for home display screens that reflects the owners personal visions.

Generate pictures of natural landscape for me

Suggestion.

Clown fish getting resuscitated

Clown fish getting CPR

Maybe substitute ‘Clown fish’ for ‘Nemo’ to see if l Dall-E 2 r can detect the cultural reference of Nemo as an animated representation of a clown fish also will Dall-E 2 recognize CPR as resuscitated

Combining the style of two Artist might be interesting. Something like:

“A painting in the style of {Artist 1} and {Artist 2}” lets say Claude Monet and Piet Mondrian

Also I think “A Zebra in the style of Mark Rothko” could be funny (Or “A Zebra with stripes in the style of Mark Rothko”).

can you try the following: “the full alphabet of the robigull language, with translation to english”?

I enjoy the concept of fine art containing out-of-place items.

“Still life with apples and sausages by Paul Cézanne” “The Hay Wain by John Constable with TIE fighters” “American Gothic by Grant Wood with a large eye in the window” “The Last Supper with a bar, featuring Robocop arm-wrestling Jesus” “Still life with apples, bread and TARDIS in the style of Van Gogh” “Apple iPhone advert in the style of Edvard Munch”

I’ve been wanting to access DALL-E 2 so that I can generate ideas such as these, and then do physical paintings as per the original artists. After all, what is original or derivative? 🤔

Here are my suggestions: An illustration of a floating white hand in the middle of a 8 ball with wings. A cube made out of M&M’s in a digital art style. A hyperrealistic surrealistic photograph of a pear in a pear while in a mcdonalds in a light. (I wonder how this will go.) Last one, A tornado made out of fire enveloping a city.

2 robots engaged in an epic rap battle

and

A rabbit dressed like a Samurai in the style of a Japanese painting

https://labs.openai.com/s/hMX2pDcjGRbvsDjc7ko6GEMF

https://labs.openai.com/s/7c3YxB5EngpIlJpXjPPcUjLX

https://labs.openai.com/s/XTcvLLmPA9kL6uV6bxRKmhzL

https://labs.openai.com/s/HqrZW8BDk22pGwZHQ4pJlFaw

Suggestions:

Karl Marx being guillotined during the French Revolution

Schopenhauer being broken on the Buddhist Wheel

Stock market crash of 1929 in the style of Zdzisław Beksiński

House of Leaves book terror

The successors of humankind

Fractal eyeball

Thanks for sharing these images! Truly astounding stuff.

The stock market crash one turned out well.

https://labs.openai.com/s/SZZ0OCtMILryR76Q81AQfxeF

https://labs.openai.com/s/dzHe6dDF1Wu0QAXZg1NxwUzo

I have so many questions! Love the AI storytelling… the playing cards work if you incorporate the “dog ate my homework” thought into the depiction. I’m also thinking you are having way too much fun doing this… ;-0

Request: “Ghostly spaceman in a cowboy hat rides a rocket ship”

“end of the world” images make me wonder if Dall-E thinks the world is flat

Thanks for posting these.

It’s odd that mentioning Dall-E by name in the prompt would be a content policy violation. Do you know if they’ve mentioned why?

If you’re still taking suggestions:

A beautiful, detailed illustration by James Gurney of a steampunk cheetah robot stalking through the ruins of a post-singularity city. A painting of an ornate brass automaton shaped like a big cat. A 4K image of a robotic cheetah in a strange, high-tech landscape.

I think OpenAI mentioned that including the same information several times with different phrasing helped for more complicated prompts in the first DALL-E, so I’m curious to see if that would help here- assuming that wouldn’t be over the length limit.

Suggestions:

Kind king light of mind, in the style of Man Ray

Hypermagical ultraomnipotence, in the style of Wassily Kandinsky

The evolutionary advantage of sex, in the style of Hilma af Klimt

The ground of being, in the style of Kazimir Malevich

A LessWrong post in the style of Donald Judd

Yves Klein Red

The important innovation here is unlimited puppy pictures 🐶

I always thought that it’s weird that AI struggles with text, just as in my dreams. Every time I open a book in a dream, it’s jumbled and nonsensical and I can immediately tell I’m dreaming.

That is probably some relatively uninteresting aspect of DALL-E 2 rather than a deep insight about generative models & dreaming, say. DL training is counterintuitive: did ProGAN/StyleGAN screw up meme captions in generated images, generating ‘moon’ or ‘Cyrillic writing’, because of some failure in GAN dynamics? Nah, it was just that Nvidia turned on horizontal flipping as a data augmentation, so every piece of text was seen both normally and through a mirror, so of course the GAN couldn’t figure out what was the ‘real’ appearance of writing. Disable that and like in TADNE, writing works a lot better—in TADNE, the Japanese writing looks Japanese (although Japanese confirm that it’s complete nonsense). People have been pointing out that while DALL-E does not do writing well, some of the smaller inferior competing models do do writing almost entirely too much, and they weren’t designed to generate text inside images either. Just how they came out.

Yeah, I see what you mean. But even if it gets the correct letter shapes, it’s still nonsense right? It’s writing as a visual feel, not anything actually written. Maybe in a sense writing is more difficult to do for image generators—a piece of paper with written text is much more information dense than, say, a patch of grass—especially if it’s integrated in a scene.

Low-res images like 256px images are a pretty hard source to try to learn language from! Which is not to say that no mad scientists won’t try it anyway, but you certainly can’t expect GPT-3 fluency from an image model which sees language as primarily strip mall signage or the occasional axis label, all downscaled to near unreadability. (Note that ‘captions’ of images will often, or usually, not transcribe all text visible in said image.) You might think that they would start from a pretrained small GPT-3 or something (perhaps run an OCR NN to transcribe all text in the images and append that to the caption), but they don’t seem to (at least, checking the DALL-E 2 & GLIDE paper indicates no such use and the ‘text encoder’ appears to be a diffusion model and so couldn’t be a pretrained GPT-3?). Oh well. You can’t do everything, you know.

my requests:

-mars combined with earth

-saturn with adorable rhinos having a party on saturn’s ring

-dogs and cats enjoying diamonds and golds falling out of the sky

-pencils dancing with erasers

-sad guinea pigs running around

-a deformed roll of toilet paper on top of a elephant

thanks!

Suggestion: “sangaku proving the Pythagoras’ theorem”. I wonder if it can do visual explanations.

I just want to see how far I can push it, can you try:

1)

A glossy black mega world maze underground surrounded by lava. White standing blackbucks guard the entrance gate. Large gold keys float throughout the maze. Around the maze is glossy black castles with many levels that wrap around. Ultra 4K realistic photography.

2)

Macro of a flask that is containing glowing blue liquid sitting on a small short white table, is spilling into a gold treasure chest sitting in a glossy white hall. A robot pirate wearing a red bandana with red ruble eyes has his hands in the chest.

3)

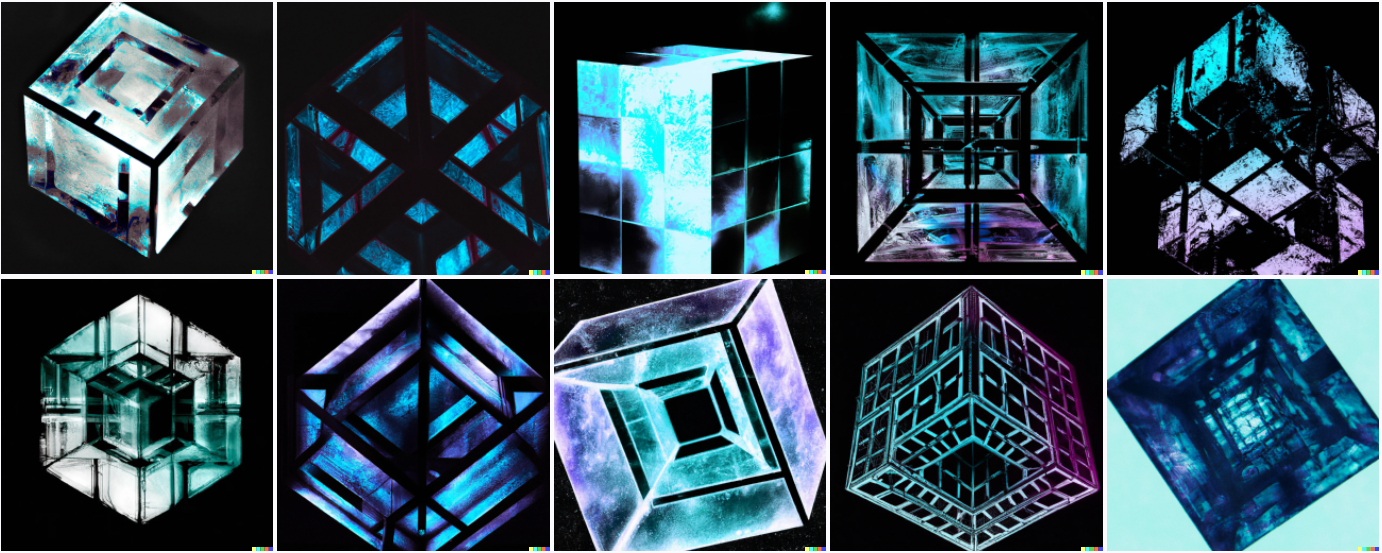

A massive metal cube in space made of nanobot fog. The same square structure and systems is repeated across and cloning at the sides too. It is like a fractal, and growing outwards. It has the most advanced technology from the far future, such as cloning systems, generators, synchronization, lasers, etc. Ultra 4K realistic photograph.

How about <some prompt without specified style you already used> + ”, drawn by state-of-the-art image-generating neural net”?

Ooh ooh—try a dangerous Cognitohazard Memetic SCP image that will make anyone who sees it immediately want to reshare it with everyone they know and obsessively discuss it

How does it fare with impossible things like a ‘seven sided cube’ or a ‘circle with four sides’ or a ’12 dimensional tesseract’ ?

Some suggestions:

A large chunk of probability mass

Watercolor painting of the end of history

An electric car painted by Wassliy Kandinsky

A monkey is painting a portrait of Charles Darwin

A monkey is explaining something to Charles Darwin

Moloch whose soul is electricity and banks!

The anatomy of a friendly AI

Winston Churchill and Adolf Hitler in a loving embrace [if this isn’t allowed, substitute Gandhi for Hitler]

A painting of a spurious correlation in the style of impressionism

Friedrich Nietzsche is shaving his mustache

Sigmund Freud tries LSD for the first time in the style of a picture from 1920s

A round flat world carried on the shoulders of four elephants who are standing on a giant turtle

A charcoal drawing of a brain in a vat

The map is not the territory

Richard Feynman discovers the meaning of life

Hippocrates is the father of modern medicine

“A lego pumpkin near a concrete cube”

″A gameboy made of salad”

Could you try “size of Jupiter, banana for scale”?

Hi. Thanks for the great post! I have a question and a request. Which is the longest, more complex prompt that Dall-E 2 ha honoured in a reasonably complete way? For the request, since I am a fan of aviation movies, I’d be curious of what would it give in response to “poster of a a classical aviation movie”, or something like it. I expect that some of them would show human faces, but actual posters often featured planes dogfighting and the like.

“penguins of chaos”

Can’t wait to get access. In the meantime would love to see:

A Spiky DJ

five portraits become joined painted collectively

A long road in the bad-painting style

question, when you access dall-e 2 will you have to pay eventually or will it be free?

What OA has done in the past with GPT-3 (eg finetuning, embeddings) is made the very limited access beta free, and then turn on billing when it goes ‘live’ and opens up to more people. They have pricing info up on the website already. (And it seems reasonable to me when you consider how expensive human artists are, and that each image is running like 5 fairly high-end models.)

Where? I don’t see it on the basic Dall-e 2 page and their general pricing page only mentions their GPT-3 engines AFAICT.

I have a few requests.

-Ancient greek smartphone

-A soda can that looks like the Empire State

-Iron Man painted by Caravaggio

-Steampunk tetris

-A strawberry that looks like a piano

This is awesome! I feel weird asking you to plug prompts into the machine. I wonder how it does with logo design, something like “the logo for a new longtermist startup”? Not using for commercial purposes; just curious.

Also curious about some particular word play ala Marry Poppins: “a cat drawing the curtains”

Very interesting!

I’ll add some suggestions:

The stuff of dreams

A hat wearing a hat

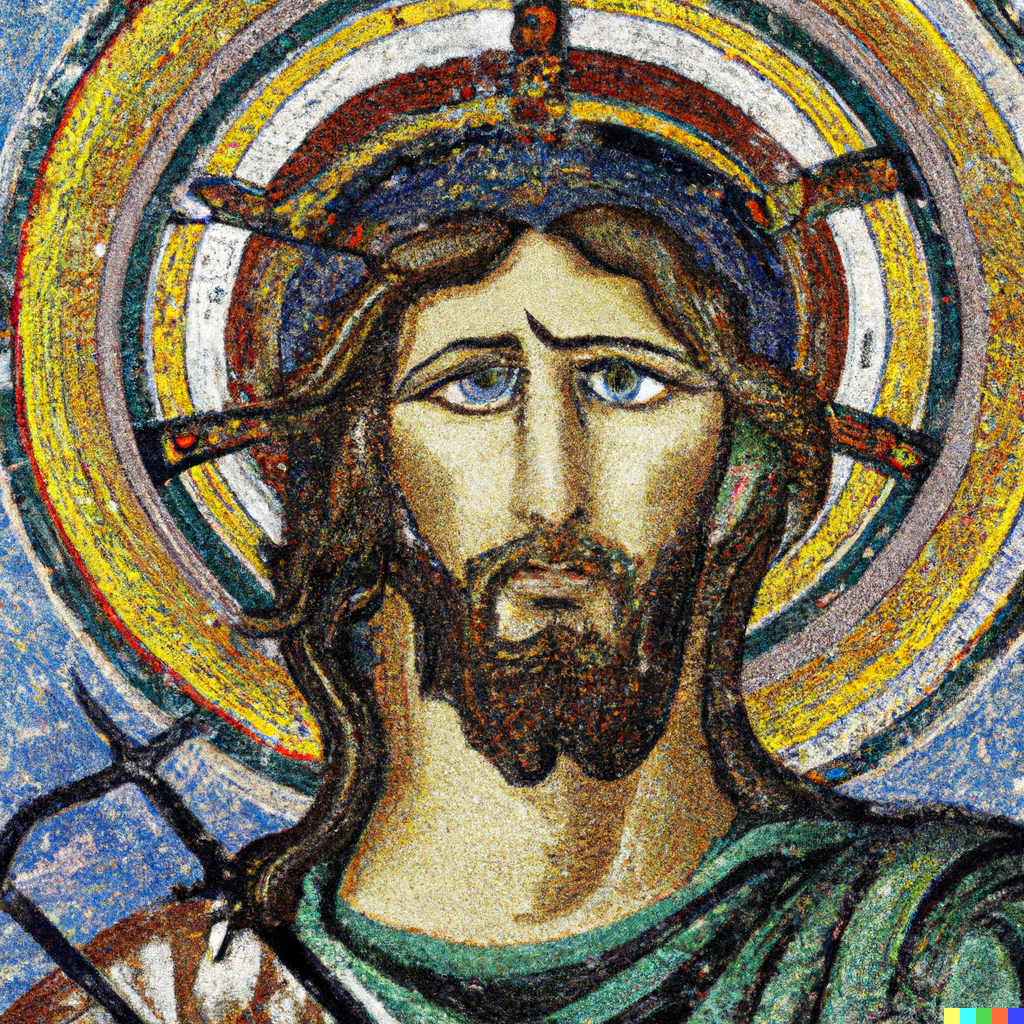

The Emperor of the Galaxy, byzantine mosaic

Then, some D&D-related suggestions, because why not (I would be surprised enough if he recognized the correct creatures at all):

A beholder beholding a beholder

Marching modrons

Marching modrons painted by Pieter Bruegel

The Incredible Umber Hulk (this one’s probably the trickiest)

Posted a few of these.

a

upvote

It’s funny, the text generated reminds me of babbling.

Can you try:

1)

A muscular Egyptian piranha plant in a white robe guarding the entrance to Mario pipe world 7. Behind him is a world of pipes, and the walls are made of tall pipes. Realistic 4K photo.

2)

A glossy black mega world maze underground surrounded by lava. White standing blackbucks guard the entrance gate. Large gold keys float throughout the maze. Around the maze is glossy black castles with many levels that wrap around. Ultra 4K realistic photography.

3)

High definition surrealist artwork of blue aliens with tall legs and bodies and big heads standing on a blue rock with black pipes extruding from the ground in different ways. White ghastly smoke flows around them. Some of them are connected and part of the surrounding objects. Lots of detail.

4)

Bright up close shot of a plush toy robot pikachu eating a hamburger in a nurse outfit against a white wall with mud splashed on pikachu from a tire on the road.

Woah that’s so cool! I’ve been messing with VQGAN recently and was wondering if the prompts would affect DALLE-2 the same way it affects VQGAN since they both CLIP to select the best ouputs. Here are some prompts I would love to see:

Watercolor painting of apples with arms and legs fighting (or dancing if it violates the policy)

A painting of a cat vampire drinking wine by greg rutkowski

Posted!

Thanks!

lowkey disappointed with the cat vampire but the apples fighting were pretty good!

Also, on the ‘hello world’ input (not the ‘print hello world’), the bottom right picture has one extra thumb in a really… peculiar place hahahah

Thanks for sharing! Can I please request the following:

‘An outback Australian landscape with T-rex dinosaurs being chased by ducklings

″The Buddha attaining enlightenment with galaxies entering his mind’

‘An AI using a laptop computer to watch YouTube’

‘The Tesseract from the movie Interstellar, with inverted colours’

’The aftermath of Global nuclear war’

I’m so curious! Thanks a lot!

Posted!

Unsurprisingly, policy violation.

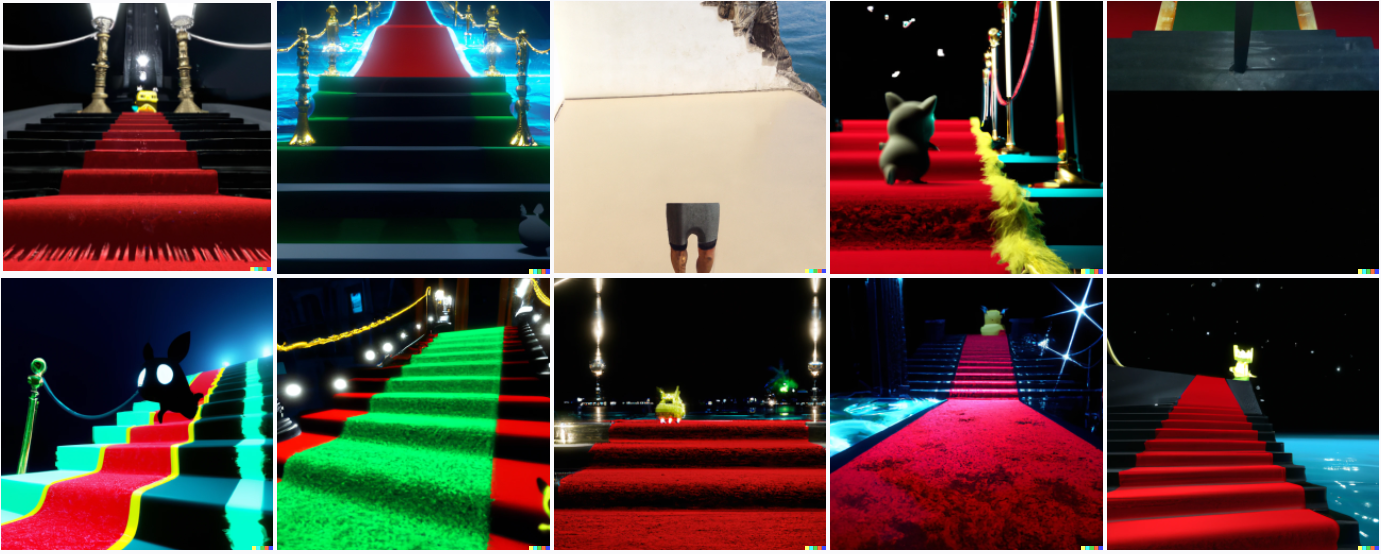

Could you try this one? It’s dark and demanding:

Pikachu walking up the stairs of a crisp glossy black castle next to the sea at night. At the top of the stairs is a red carpet. A black regenerative liquid being is jumping out of a green metal Mario pipe at the end of the carpet. Ultra 4K definition.

Tricky! Posted.

Wow it’s not bad! Thanks! Can you try these two below? I drew by hand the 2nd and aced it (see the link), it’d be interesting to see if it can compare:

1)

A big muscular robot cyborg pikachu full of electricity having an explosive arm wrestle with a cyborg Zurg in a shopping aisle, surrounded by shopping carts. Realistic photo.

2)

A dark abandoned haunted restaurant surrounded by overgrown bushes and trees, with ghosts from former chefs in white aprons cooking pasta looking at the gigantic evil human being with the look of sorrow as he starts to demolish the building with his wrecking ball hand while waving goodbye with his other.

--LINK-- (uploaded using imgbb)

https://ibb.co/JRcW1tw

Had to take out “explosive” in the first one to get it through.

The second one is a policy violation.

Wow that is amazing! Could I request “giant peaceful butterfly with rainbow trail and sunglasses invades new york in the morning” I know it’s oddly specific but I am interested in seeing how the ai will handle all of these little details. Thanks!!

“Invades” violated the content policy, went with “wanders in”. Posted, basically a total failure to do anything interesting.

Wow that was unfortunate, but thanks for uploading! Maybe try underwater scuba diving cat discovers a pearl

Super cute!

Wow I can’t believe this, it did exactly what I imagined its crazy! Thank you so much