6 reasons why “alignment-is-hard” discourse seems alien to human intuitions, and vice-versa

Tl;dr

AI alignment has a culture clash. On one side, the “technical-alignment-is-hard” / “rational agents” school-of-thought argues that we should expect future powerful AIs to be power-seeking ruthless consequentialists. On the other side, people observe that both humans and LLMs are obviously capable of behaving like, well, not that. The latter group accuses the former of head-in-the-clouds abstract theorizing gone off the rails, while the former accuses the latter of mindlessly assuming that the future will always be the same as the present, rather than trying to understand things. “Alas, the power-seeking ruthless consequentialist AIs are still coming,” sigh the former. “Just you wait.”

As it happens, I’m basically in that “alas, just you wait” camp, expecting ruthless future AIs. But my camp faces a real question: what exactly is it about human brains[1] that allows them to not always act like power-seeking ruthless consequentialists? I find existing explanations in the discourse (e.g. “ah but humans just aren’t smart and reflective enough”, or evolved modularity, or shard theory, etc.) to be wrong, handwavy, or otherwise unsatisfying.

So in this post, I offer my own explanation of why “agent foundations” toy models fail to describe humans, centering around a particular non-“behaviorist” part of the RL reward function in human brains that I call Approval Reward, which plays an outsized role in human sociality, morality, and self-image. And then the alignment culture clash above amounts to the two camps having opposite predictions about whether future powerful AIs will have something like Approval Reward (like humans, and today’s LLMs), or not (like utility-maximizers).

(You can read this post as pushing back against pessimists, by offering a hopeful exploration of a possible future path around technical blockers to alignment. Or you can read this post as pushing back against optimists, by “explaining away” the otherwise-reassuring observation that humans and LLMs don’t act like psychos 100% of the time.)

Finally, with that background, I’ll go through six more specific areas where “alignment-is-hard” researchers (like me) make claims about what’s “natural” for future AI that seem quite bizarre from the perspective of human intuitions, and where, conversely, human intuitions seem quite bizarre from the perspective of agent foundations toy models. All these examples, I argue, revolve around Approval Reward. They are:

1. The human intuition that it’s normal and good for one’s goals & values to change over the years

2. The human intuition that ego-syntonic “desires” come from a fundamentally different place than “urges”

3. The human intuition that helpfulness, deference, and corrigibility are natural

4. The human intuition that unorthodox consequentialist planning is rare and sus

5. The human intuition that societal norms and institutions are mostly stably self-enforcing

6. The human intuition that treating other humans as a resource to be callously manipulated and exploited, just like a car engine or any other complex mechanism in their environment, is a weird anomaly rather than the obvious default

0. Background

0.1 Human social instincts and “Approval Reward”

As I discussed in Neuroscience of human social instincts: a sketch (2024), we should view the brain as having a reinforcement learning (RL) reward function, which says that pain is bad, eating-when-hungry is good, and dozens of other things (sometimes called “innate drives” or “primary rewards”). I argued that part of the reward function was a thing I called the “compassion / spite circuit”, centered around a small number of (hypothesized) cell groups in the hypothalamus, and I sketched some of its effects.

Then last month in Social drives 1: “Sympathy Reward”, from compassion to dehumanization and Social drives 2: “Approval Reward”, from norm-enforcement to status-seeking, I dove into the effects of this “compassion / spite circuit” more systematically.

And now in this post, I’ll elaborate on the connections between “Approval Reward” and AI technical alignment.

“Approval Reward” fires most strongly in situations where I’m interacting with another person (call her Zoe), and I’m paying attention to Zoe, and Zoe is also paying attention to me. If Zoe seems to be feeling good, that makes me feel good, and if Zoe is feeling bad, that makes me feel bad. Thanks to these brain reward signals, I want Zoe to like me, and to like what I’m doing. And then Approval Reward generalizes from those situations to other similar ones, including where Zoe is not physically present, but I imagine what she would think of me. It sends positive or negative reward signals in those cases too.

As I argue in Social drives 2, this “Approval Reward” leads to a wide array of effects, including credit-seeking, blame-avoidance, and status-seeking. It also leads not only to picking up and following social norms, but also to taking pride in following those norms, even when nobody is watching, and to shunning and punishing those who violate them.

This is not what normally happens with RL reward functions! For example, you might be wondering: “Suppose I surreptitiously[2] press a reward button when I notice my robot following rules. Wouldn’t that likewise lead to my robot having a proud, self-reflective, ego-syntonic sense that rule-following is good?” I claim the answer is: no, it would lead to something more like an object-level “desire to be noticed following the rules”, with a sociopathic, deceptive, ruthless undercurrent.[3]

I argue in Social drives 2 that Approval Reward is overwhelmingly important to most people’s lives and psyches, probably triggering reward signals thousands of times a day, including when nobody is around but you’re still thinking thoughts and taking actions that your friends and idols would approve of.

Approval Reward is so central and ubiquitous to (almost) everyone’s world that it’s difficult and unintuitive to imagine its absence; we’re much like the proverbial fish who puzzles over what this alleged thing called “water” is.

…Meanwhile, a major school of thought in AI alignment implicitly assumes that future powerful AGIs / ASIs will almost definitely lack Approval Reward altogether, and therefore AGIs / ASIs will behave in ways that seem (to normal people) quite bizarre, unintuitive, and psychopathic.

The differing implicit assumption about whether Approval Reward will be present versus absent in AGI / ASI is (I claim) upstream of many central optimist-pessimist disagreements on how hard technical AGI alignment will be. My goal in this post is to clarify the nature of this disagreement, via six example intuitions that seem natural to humans but are rejected by “alignment-is-hard” researchers. All these examples centrally involve Approval Reward.

0.2 Hang on, will future powerful AGI / ASI “by default” lack Approval Reward altogether?

This post is mainly making a narrow point that the proposition “alignment is hard” is closely connected to the proposition “AGI will lack Approval Reward”. But an obvious follow-up question is: are both of these propositions true? Or are they both false?

Here’s how I see things, in brief, broken down into three cases:

If AGI / ASI will be based on LLMs: Humans have Approval Reward (arguably apart from some sociopaths etc.). And LLMs are substantially sculpted by human imitation (see my post Foom & Doom §2.3). Thus, unsurprisingly, LLMs also display behaviors typical of Approval Reward, at least to some extent. Many people see this as a reason for hope that technical alignment might be solvable. But then the alignment-is-hard people have various counterarguments, to the effect that these Approval-Reward-ish LLM behaviors are fake, and/or brittle, and/or unstable, and that they will definitely break down as LLMs get more powerful. The cautious-optimists generally find those pessimistic arguments confusing (example).

Who’s right? Beats me. It’s out-of-scope for this post, and anyway I personally feel unable to participate in that debate because I don’t expect LLMs to scale to AGI in the first place.[4]

If AGI / ASI will be based on RL agents (or similar), as expected by David Silver & Rich Sutton, Yann LeCun, and myself (“brain-like AGI”), among others, then the answer is clear: There will be no Approval Reward at all, unless the programmers explicitly put it into the reward function source code. And will they do that? We might (or might not) hope that they do, but it should definitely not be our “default” expectation, the way things are looking today. For example, we don’t even know how to do that, and it’s quite different from anything in the literature. (RL agents in the literature almost universally have “behaviorist” reward functions.) We haven’t even pinned down all the details of how Approval Reward works in humans. And even if we do, there will be technical challenges to making it work similarly in AIs—which, for example, do not grow up with a human body at human speed in a human society. And even if it were technically possible, and a good idea, to put in Approval Reward, there are competitiveness issues and other barriers to it actually happening. More on all this in future posts.

If AGI / ASI will wind up like “rational agents”, “utility maximizers”, or related: Here the situation seems even clearer: as far as I can tell, under common assumptions, it’s not even possible to fit Approval Reward into these kinds of frameworks, such that it would lead to the effects that we expect from human experience. No wonder human intuitions and “agent foundations” people tend to talk past each other!

0.3 Where do self-reflective (meta)preferences come from?

This idea will come up over and over as we proceed, so I’ll address it up front:

In the context of utility-maximizers etc., the starting point is generally that desires are associated with object-level things (whether due to the reward signals or the utility function). And from there, the meta-preferences will naturally line up with the object-level preferences.

After all, consider: what’s the main effect of ‘me wanting X’? It’s ‘me getting X’. So if getting X is good, then ‘me wanting X’ is also good. Thus, means-end reasoning (or anything functionally equivalent, e.g. RL backchaining) will echo object-level desires into corresponding self-reflective meta-level desires. And this is the only place that those meta-level desires come from.

By contrast, in humans, self-reflective (meta)preferences mostly (though not exclusively) come from Approval Reward. By and large, our “true”, endorsed, ego-syntonic desires are approximately whatever kinds of desires would impress our friends and idols (see previous post §3.1).

Box: More detailed argument about where self-reflective preferences come from

The actual effects of “me wanting X” are:

(1) I may act on that desire, and thus get X (and stuff correlated with X),

(2) Maybe there’s a side-channel through which “me wanting X” can have an effect:

(2A) Maybe there are (effectively) mind-readers in the environment,

(2B) Maybe my own reward function / utility function is itself a mind-reader, in the sense that it involves interpretability, and hence triggers based on the contents of my thoughts and plans.

Any of these three pathways can lead to a meta-preference wherein “me wanting X” seems good or bad. And my claim is that (2B) is how Approval Reward works (see previous post §3.2), while (1) is what I’m calling the “default” case in “alignment-is-hard” thinking.

(What about (2A)? That’s another funny “non-default” case. Like Approval Reward, this might circumvent many “alignment-is-hard” arguments, at least in principle. But it has its own issues. Anyway, I’ll be putting the (2A) possibility aside for this post.)

(Actually, human Approval Reward in practice probably involves a dash of (2A) on top of the (2B)—most people are imperfect at hiding their true intentions from others.)
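A minimal Python sketch of the difference between pathway (1) and pathway (2B). All names and numbers here are hypothetical illustrations, not a model of actual brain circuitry and not a formalism from this post: a “behaviorist” reward is a function of externally visible outcomes only, while an Approval-Reward-like term (2B) takes the agent’s own thought as input, including whom it imagines and how that imagined person would react.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Outcome:
    """Hypothetical stand-in for what an outside observer could see."""
    followed_rule: bool
    button_pressed: bool  # did the trainer notice and press the reward button?


@dataclass
class Thought:
    """Hypothetical stand-in for the contents of a thought or plan."""
    plan: str
    imagined_observer: Optional[str]  # e.g. "Zoe", or None if nobody comes to mind
    imagined_approval: float  # how much that observer would approve, in [-1, 1]


def behaviorist_reward(outcome: Outcome) -> float:
    # Pathway (1): depends only on external results, so following the rule
    # unnoticed earns nothing, and *being noticed* is what gets reinforced.
    return 1.0 if outcome.button_pressed else 0.0


def approval_like_reward(thought: Thought) -> float:
    # Pathway (2B): reads the thought itself, so it can fire when nobody is
    # watching, and it can distinguish motives behind the same visible action.
    if thought.imagined_observer is None:
        return 0.0
    return thought.imagined_approval


# Same visible action (returning a lost wallet), different inner motives.
kind = Thought("return the wallet because its owner will be relieved", "Zoe", 0.9)
calculating = Thought("return the wallet only because a camera might be watching", "Zoe", -0.5)
unnoticed = Outcome(followed_rule=True, button_pressed=False)
```

The design point is the input type: only the second function can tell the kind motive from the calculating one, because only it sees the thought rather than the action.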

…OK, finally, let’s jump into those “6 reasons” that I promised in the title!

1. The human intuition that it’s normal and good for one’s goals & values to change over the years

In human experience, it is totally normal and good for desires to change over time. Not always, but often. Hence emotive conjugations like

“I was enculturated, you got indoctrinated, he got brainwashed”

“I came to a new realization, you changed your mind, he failed to follow through on his plans and commitments”

“I’m open-minded, you’re persuadable, he’s a flip-flopper”

…And so on. Anyway, openness-to-change, in the right context, is great. Indeed, even our meta-preferences concerning desire-changes are themselves subject to change, and we’re generally OK with that too.[5]

Whereas if you’re thinking about an AI agent with foresight, planning, and situational awareness (whether it’s a utility maximizer, or a model-based RL agent[6], etc.), this kind of preference is a weird anomaly, not a normal expectation. The default instead is instrumental convergence: if I want to cure cancer, then I (incidentally) want to continue wanting to cure cancer until it’s cured.

Why the difference? Well, it comes right from that diagram in §0.3 just above. For Approval-Reward-free AGIs (which I see as “default”), their self-reflective (meta)desires are subservient to their object-level desires.

Goal-preservation follows: if the AGI wants object-level-thing X to happen next week, then it wants to want X right now, and it wants to still want X tomorrow.
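Here is a toy Python sketch of that means-end argument, assuming a trivial, hypothetical world model in which the agent simply produces whatever it wants at the time. Each candidate future desire is scored by the outcome it leads to, as judged by the agent’s current object-level utility, so keeping the current goal always wins. Nothing in the loop scores a desire for its own sake, which is roughly the slot that Approval Reward fills in humans.

```python
from typing import Callable, Dict, List

Outcome = Dict[str, int]


def simulate_future(desire_next_week: str, steps: int = 10) -> Outcome:
    """Toy world model: the agent spends `steps` units of effort on whatever it wants next week."""
    return {thing: (steps if thing == desire_next_week else 0) for thing in ("X", "Y")}


def make_utility(current_desire: str) -> Callable[[Outcome], int]:
    """The agent's *current* object-level desire: more of `current_desire` is better."""
    return lambda outcome: outcome[current_desire]


def choose_future_desire(current_desire: str, candidates: List[str]) -> str:
    utility = make_utility(current_desire)
    # Means-end reasoning: evaluate each possible future desire by the world it
    # leads to, scored by the desire the agent has *right now*.
    return max(candidates, key=lambda d: utility(simulate_future(d)))


print(choose_future_desire("X", ["X", "Y"]))  # X
print(choose_future_desire("Y", ["X", "Y"]))  # Y
```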

By contrast, in humans, self-reflective preferences mostly come from Approval Reward. By and large, our “true”, endorsed desires are approximately whatever kinds of desires would impress our friends and idols, if they could read our minds. (They can’t actually read our minds—but our own reward function can!)

This pathway does not generate any particular force for desire preservation.[7] If our friends and idols would be impressed by desires that change over time, then that’s generally what we want for ourselves as well.

2. The human intuition that ego-syntonic “desires” come from a fundamentally different place than “urges”

In human experience, it is totally normal and expected to want X (e.g. candy), but not want to want X. Likewise, it is totally normal and expected to dislike X (e.g. homework), but want to like it.

And moreover, we have a deep intuitive sense that the self-reflective meta-level ego-syntonic “desires” come from a fundamentally different place than the object-level “urges” like eating-when-hungry. For example, in a recent conversation, a high-level AI safety funder confidently told me that urges come from human nature while desires come from “reason”. Similarly, Jeff Hawkins dismisses AGI extinction risk partly on the (incorrect) grounds that urges come from the brainstem while desires come from the neocortex (see my Intro Series §3.6 for why he’s wrong and incoherent on this point).

In a very narrow sense, there’s actually a kernel of truth to the idea that, in humans, urges and desires come from different sources. As in Social Drives 2 and §0.3 above, one part of the RL reward function is Approval Reward, and is the primary (though not exclusive) source of ego-syntonic desires. Everything else in the reward function mostly gives rise to urges.

But this whole way of thinking is bizarre and inapplicable from the perspective of Approval-Reward-free AI futures—utility maximizers, “default” RL systems, etc. There, as above, the starting point is object-level desires; self-reflective desires arise only incidentally.

A related issue is how we think about AGI reflecting on its own desires. How this goes depends strongly on the presence or absence of (something like) Approval Reward.

Start with the former. Humans often have conflicts between ego-syntonic self-reflective desires and ego-dystonic object-level urges, and reflection allows the desires to scheme against the urges, potentially resulting in large behavior changes. If AGI has Approval Reward (or similar), we should expect AGI to undergo those same large changes upon reflection. Or perhaps even larger—after all, AGIs will generally have more affordances for self-modification than humans do.

By contrast, I happen to expect AGIs, by default (in the absence of Approval Reward or similar), to mainly have object-level, non-self-reflective desires. For such AGIs, I don’t expect self-reflection to lead to much desire change. Really, it shouldn’t lead to any change more interesting than pursuing its existing desires more effectively.

(Of course, such an AGI may feel torn between conflicting object-level desires, but I don’t think that leads to the kinds of internal battles that we’re used to from humans.[8])

(To be clear, reflection in Approval-Reward-free AGIs might still have “complications” of other sorts, such as ontological crises.)

3. The human intuition that helpfulness, deference, and corrigibility are natural

This human intuition comes straight from Approval Reward, which is absolutely central in human intuitions, and leads to us caring about whether others would approve of our actions (even if they’re not watching), taking pride in our virtues, and various other things that distinguish neurotypical people from sociopaths.

As an example, here’s Paul Christiano: “I think that normal people [would say]: ‘If we are trying to help some creatures, but those creatures really dislike the proposed way we are “helping” them, then we should try a different tactic for helping them.’”

He’s right: normal people would definitely say that, and our human Approval Reward is why we would say that. And if AGI likewise has Approval Reward (or something like it), then the AGI would presumably share that intuition.

On the other hand, if Approval Reward is not part of AGI / ASI, then we’re instead in the “corrigibility is anti-natural” school of thought in AI alignment. As an example of that school of thought, see Why Corrigibility is Hard and Important.

4. The human intuition that unorthodox consequentialist planning is rare and sus

Obviously, humans can make long-term plans to accomplish distant goals—for example, an 18-year-old could plan to become a doctor in 15 years, and immediately move this plan forward via sensible consequentialist actions, like taking a chemistry class.

How does that work in the 18yo’s brain? Obviously not via anything like RL techniques that we know and love in AI today—for example, it does not work by episodic RL with an absurdly-close-to-unity discount factor that allows for 15-year time horizons. Indeed, the discount factor / time horizon is clearly irrelevant here! This 18yo has never become a doctor before!

Instead, there has to be something motivating the 18yo right now to take appropriate actions towards becoming a doctor. And in practice, I claim that that “something” is almost always an immediate Approval Reward signal.
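To put a rough number on the “absurdly-close-to-unity discount factor” mentioned above: under the standard rule of thumb that a discount factor γ gives an effective horizon of about 1/(1−γ) timesteps (because the geometric series Σγ^t sums to 1/(1−γ)), and assuming hypothetical timesteps of one second or a tenth of a second, a 15-year horizon needs γ within about 2×10⁻⁹ of 1, or closer. This sketch is only arithmetic; as the text says, the deeper problem is that the 18yo has no past episodes of becoming a doctor to learn from, at any γ.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 60 * 60


def gamma_for_horizon(horizon_years: float, timestep_seconds: float) -> float:
    """Discount factor whose effective horizon, 1 / (1 - gamma) timesteps, spans `horizon_years`."""
    steps = horizon_years * SECONDS_PER_YEAR / timestep_seconds
    return 1.0 - 1.0 / steps


for dt in (1.0, 0.1):  # hypothetical timestep sizes, in seconds
    gamma = gamma_for_horizon(15, dt)
    print(f"timestep {dt} s: gamma = 1 - {1.0 - gamma:.1e}")
```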

Here’s another example. Consider someone saving money today to buy a car in three months. You might think that they’re doing something unpleasant now, for a reward later. But I claim that that’s unlikely. Granted, saving the money has immediately-unpleasant aspects! But saving the money also has even stronger immediately-pleasant aspects—namely, that the person feels pride in what they’re doing. They’re probably telling their friends periodically about this great plan they’re working on, and the progress they’ve made. Or if not, they’re probably at least imagining doing so.

So saving the money is not doing an unpleasant thing now for a benefit later. Rather, the pleasant feeling starts immediately, thanks to (usually) Approval Reward.
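A toy calculation of the car example, with made-up numbers and a hypothetical per-day discount factor. If the only positive term were the car itself, steep discounting would make saving look like a net loss at the moment of decision; the immediate pride term (standing in for Approval Reward) is what makes the first step worth taking right now. This is a sketch of the claim in the text, not a model the post itself proposes.

```python
def value_at_decision(immediate_rewards, delayed_reward, delay_steps, gamma):
    """Discounted value, at the moment of acting, of some immediate rewards plus one delayed payoff."""
    return sum(immediate_rewards) + gamma ** delay_steps * delayed_reward


# Hypothetical numbers, chosen only for illustration.
cost_of_saving = -1.0  # e.g. skipping a dinner out
pride = 2.0            # Approval-Reward-style pride in the plan, felt right now
car = 50.0             # the eventual payoff
delay_days = 90
gamma_per_day = 0.9    # steep, hypothetical discounting

without_pride = value_at_decision([cost_of_saving], car, delay_days, gamma_per_day)
with_pride = value_at_decision([cost_of_saving, pride], car, delay_days, gamma_per_day)
print(f"without pride: {without_pride:+.3f}, with pride: {with_pride:+.3f}")
```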

Moreover, everyone has gotten very used to this fact about human nature. Thus, doing the first step of a long-term plan, without Approval Reward for that first step, is so rare that people generally regard it as highly suspicious. They generally assume that there must be an Approval Reward. And if they can’t figure out what it is, then there’s something important about the situation that you’re not telling them. …Or maybe they’ll assume that you’re a Machiavellian sociopath.

As an example, I like to bring up Earning To Give (EtG) in Effective Altruism, the idea of getting a higher-paying job in order to earn money and give it to charity. If you tell a normal non-nerdy person about EtG, they’ll generally assume that it’s an obvious lie, and that the person actually wants the higher-paying job for its perks and status. That’s how weird it is—it doesn’t even cross most people’s minds that someone is actually doing a socially-frowned-upon plan because of its expected long-term consequences, unless the person is a psycho. …Well, that’s less true now than a decade ago; EtG has become more common, probably because (you guessed it) there’s now a community in which EtG is socially admirable.

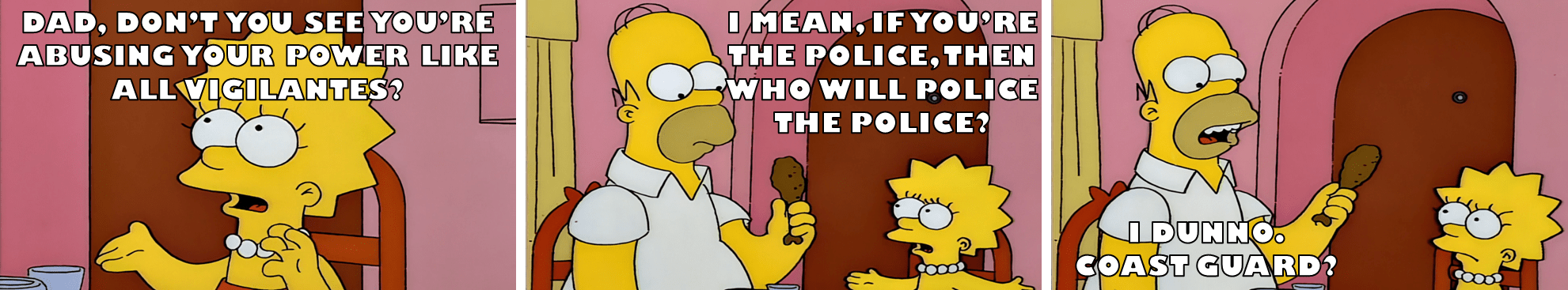

Related: there’s a fiction trope that basically only villains are allowed to make out-of-the-box plans and display intelligence. The normal way to write a hero in a work of fiction is to have conflicts between doing things that have strong immediate social approval, versus doing things for other reasons (e.g. fear, hunger, logic(!)), and to have the former win out over the latter in the mind of the hero. And then the hero pursues the immediate-social-approval option with such gusto that everyone lives happily ever after.[9]

That’s all in the human world. Meanwhile in AI, the alignment-is-hard thinkers like me generally expect that future powerful AIs will lack Approval Reward, or anything like it. Instead, they generally assume that the agent will have preferences about the future, and will make decisions so as to bring about the futures it prefers, not just as a tie-breaker on the margin, but as the main event. Hence instrumental convergence. I think this is exactly the right assumption (in the absence of a specific designed mechanism to prevent that), but I think people react with disbelief when we start describing how these AI agents behave, since it’s so different from humans.

…Well, different from most humans. Sociopaths can be a bit more like that (in certain ways). Ditto people who are unusually “agentic”. And by the way, how do you help a person become “agentic”? You guessed it: a key ingredient is calling out “being agentic” as a meta-level behavioral pattern, and indicating to this person that following this meta-level pattern will get social approval! (Or at least, that it won’t get social disapproval.)

5. The human intuition that societal norms and institutions are mostly stably self-enforcing

5.1 Detour into “Security-Mindset Institution Design”

There’s an attitude, common in the crypto world, that we might call “Security-Mindset Institution Design”. You assume that every surface is an attack surface. You assume that everyone is a potential thief and traitor. You assume that any group of people might be colluding against any other group of people. And so on.

It is extremely hard to get anything at all done in “Security-Mindset Institution Design”, especially when you need to interface with the real world, with all its rich complexities that cannot be bounded by cryptographic protocols and decentralized verification. For example, crypto Decentralized Autonomous Organizations (DAOs) don’t seem to have done much of note in their decade of existence, apart from on-chain projects, and occasionally getting catastrophically hacked. Polymarket has a nice on-chain system, right up until the moment that a prediction market needs to resolve, and even this tiny bit of contact with the real world seems to be a problematic source of vulnerabilities.

If you extend this “Security Mindset Institution Design” attitude to an actual fully-real-world government and economy, it would be beyond hopeless. Oh, you have an alarm system in your house? Why do you trust that the alarm system company, or its installer, is not out to get you? Oh, the company has a good reputation? According to who? And how do you know they’re not in cahoots too?

…That’s just one tiny microcosm of a universal issue. Who has physical access to weapons? Why don’t those people collude to set their own taxes to zero and to raise everyone else’s? Who sets government policy, and what if those people collude against everyone else? Or even if they don’t collude, are they vulnerable to blackmail? Who counts the votes, and will they join together and start soliciting bribes? Who coded the website to collect taxes, and why do we trust them not to steal tons of money and run off to Dubai?

…OK, you get the idea. That’s the “Security Mindset Institution Design” perspective.

5.2 The load-bearing ingredient in human society is not Security-Mindset Institution Design, but rather good-enough institutions plus almost-universal human innate Approval Reward

Meanwhile, ordinary readers[10] might be shaking their heads and saying:

“Man, what kind of strange alien world is being described in that subsection above? High-trust societies with robust functional institutions are obviously possible! I live in one!”

The wrong answer is: “Security Mindset Institution Design is insanely overkill; rather, using checks and balances to make institutions stable against defectors is in fact a very solvable problem in the real world.”

Why is that the wrong answer? Well for one thing, if you look around the real world, even well-functioning institutions are obviously not robust against competent self-interested sociopaths willing to burn the commons for their own interests. For example, I happen to have a high-functioning sociopath ex-boss from long ago. Where is he now? Head of research at a major USA research university, and occasional government appointee wielding immense power. Or just look at how Donald Trump has been systematically working to undermine any aspect of society or government that might oppose his whims or correct his lies.[11]

For another thing, abundant “nation-building” experience shows that you cannot simply bestow a “good” government constitution onto a deeply corrupt and low-trust society, and expect the society to instantly transform into Switzerland. Institutions and laws are not enough. There’s also an arduous and fraught process of getting to the right social norms. Which brings us to:

The right answer is, you guessed it, human Approval Reward, a consequence of which is that almost all humans are intrinsically motivated to follow and enforce social norms. The word “intrinsically” is important here. I’m not talking about transactionally following norms when the selfish benefit outweighs the selfish cost, while constantly energetically searching for norm-violating strategies that might change that calculus. Rather, people take pride in following the norms, and in punishing those who violate them.

Obviously, any possible system of norms and institutions will be vastly easier to stabilize when, no matter what the norm is, you can get up to ≈99% of the population proudly adopting it, and then spending their own resources to root out, punish, and shame the 1% of people who undermine it.

In a world like that, it is hard but doable to get into a stable situation where 99% of cops aren’t corrupt, and 99% of judges aren’t corrupt, and 99% of people in the military with physical access to weapons aren’t corrupt, and 99% of IRS agents aren’t corrupt, etc. The last 1% will still create problems, but the other 99% have a fighting chance to keep things under control. Bad apples can be discovered and tossed out. Chains of trust can percolate.
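A back-of-the-envelope sketch of why the ≈99% figure matters so much. It assumes, hypothetically, that each link in a chain of trust (cop, judge, auditor, etc.) is independently norm-following with probability p, and it ignores detection and punishment entirely, so it understates how well the 99% world actually does. With p = 0.99, a ten-link chain is clean about 90% of the time; with p = 0.5, less than 0.1% of the time; with p = 0, the scenario discussed in §5.3, never.

```python
def p_clean_chain(p_norm_following: float, links: int) -> float:
    """Chance that every person in a chain of trust is norm-following, if each is independently."""
    return p_norm_following ** links


for p in (0.99, 0.5, 0.0):
    print(p, [round(p_clean_chain(p, k), 3) for k in (1, 5, 10)])
```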

5.3 Upshot

Something like 99% of humans are intrinsically motivated to follow and enforce norms, with the rest being sociopaths and similar. What about future AGIs? As discussed in §0.2, my own expectation is that 0% of them will be intrinsically motivated to follow and enforce norms. When those sociopathic AGIs grow in number and power, it takes us from the familiar world of §5.2 to the paranoid insanity world of §5.1.

In that world, we really shouldn’t be using the word “norm” at all—it’s just misleading baggage. We should be talking about rules that are stably self-enforcing against defectors, where the “defectors” are of course allowed to include those who are supposed to be doing the enforcement, and where the “defectors” might also include broad coalitions coordinating to jump into a new equilibrium that Pareto-benefits them all. We do not have such self-enforcing rules today. Not even close. And we never have. And inventing such rules is a pipe dream.[12]

The flip side, of course, is that if we figure out how to ensure that almost all AGIs are intrinsically motivated to follow and enforce norms, then it’s the pessimists who are invoking a misleading mental image if they lean on §5.1 intuitions.

6. The human intuition that treating other humans as a resource to be callously manipulated and exploited, just like a car engine or any other complex mechanism in their environment, is a weird anomaly rather than the obvious default

Click over to Foom & Doom §2.3.4—“The naturalness of egregious scheming: some intuitions” to read this part.

7. Conclusion

(Homework: can you think of more examples?)

I want to reiterate that my main point in this post is not

Alignment is hard and we’re doomed because future AIs definitely won’t have Approval Reward (or something similar).

but rather

There’s a QUESTION of whether or not alignment is hard and we’re doomed, and many cruxes for this question seem to be downstream of the narrower question of whether future AIs will have Approval Reward (or something similar) (§0.2). I am surfacing this latent uber-crux to help advance the discussion.

For my part, I’m obviously very interested in the question of whether we can and should put Approval Reward (and Sympathy Reward) into Brain-Like AGI, and what might go right and wrong if we do so. More on that in (hopefully) upcoming posts!

Thanks Seth Herd, Linda Linsefors, Charlie Steiner, Simon Skade, Jeremy Gillen, and Justis Mills for critical comments on earlier drafts.

- ^

…and by extension today’s LLMs, which (I claim) get their powers mainly from imitating humans.

- ^

I said “surreptitiously” here because if you ostentatiously press a reward button, in a way that the robot can see, then the robot would presumably wind up wanting the reward button to be pressed, which eventually leads to the robot grabbing the reward button etc. See Reward button alignment.

- ^

See Perils of under- vs over-sculpting AGI desires, especially §7.2, for why the “nice” desire would not even be temporarily learned, and if it were it would be promptly unlearned; and see “Behaviorist” RL reward functions lead to scheming for some related intuitions; and see §3.2 of the Approval Reward post for why those don’t apply to (non-behaviorist) Approval Reward.

- ^

My own take, which I won’t defend here, is that this whole debate is cursed, and both sides are confused, because LLMs cannot scale to AGI. I think the AGI concerns really are unsolved, and I think that LLM techniques really are potentially-safe, but they are potentially-safe for the very reason that they won’t lead to AGI. I think “LLM AGI” is an incoherent contradiction, like “square circle”, and one side of the debate has a mental image of “square thing (but I guess it’s somehow also a circle)”, and the other side of the debate has a mental image of “circle (but I guess it’s somehow also square)”, so no wonder they talk past each other. So that’s how things seem to me right now. Maybe I’m wrong!! But anyway, that’s why I feel unable to take a side in this particular debate. I’ll leave it to others. See also: Foom & Doom §2.9.1.

- ^

…as long as the meta-preferences-about-desire-changes are changing in a way that seems good according to those same meta-preferences themselves—growth good, brainwashing bad, etc.

- ^

Possible objection: “If the RL agent has lots of past experience of its reward function periodically changing, won’t it learn that this is good?” My answer: No. At least for the kind of model-based RL agent that I generally think about, the reward function creates desires, and the desires guide plans and actions. So at any given time, there are still desires, and if these desires concern the state of the world in the future, then the instrumental convergence argument for goal-preservation goes through as usual. I see no process by which past history of reward function changes should make an agent OK with further reward function changes going forward.

(But note that the instrumental convergence argument makes model-based RL agents want to preserve their current desires, not their current reward function. For example, if an agent has a wireheading desire to get reward, it will want to self-modify to preserve this desire while changing the reward function to “return +∞”.)
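The footnote's argument can be made concrete with a toy sketch. It assumes a model-based agent that does two things: its world model predicts what a future self with a given desire would bring about, and it scores every candidate future with its current desire. The names `paperclip_desire`, `staple_desire`, `ACHIEVABLE`, `forecast` and `accept_modification` are hypothetical illustrations. This is not a model from the post.

```python
from typing import Callable, Dict, List

State = Dict[str, float]            # toy world state: amounts of two goods
Desire = Callable[[State], float]   # a desire scores world states

# Hypothetical set of futures the agent's world model considers reachable.
ACHIEVABLE: List[State] = [
    {"paperclips": 100.0, "staples": 0.0},
    {"paperclips": 50.0, "staples": 50.0},
    {"paperclips": 0.0, "staples": 100.0},
]

def paperclip_desire(state: State) -> float:
    return state["paperclips"]

def staple_desire(state: State) -> float:
    return state["staples"]

def forecast(future_desire: Desire) -> State:
    """World-model prediction: a future self steers toward what IT desires."""
    return max(ACHIEVABLE, key=future_desire)

def accept_modification(current: Desire, proposed: Desire) -> bool:
    """Accept a desire change only if the CURRENT desire prefers the result.

    Nothing here consults any history of past reward-function changes,
    so such a history cannot make the change look better.
    """
    keep = current(forecast(current))
    switch = current(forecast(proposed))
    return switch > keep

print(accept_modification(paperclip_desire, staple_desire))  # False
```

The sketch models desires rather than the reward function, which matches the parenthetical above: what the agent protects is whatever it currently wants.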

- ^

…At least to a first approximation. Here are some technicalities: (1) Other pathways also exist, and can generate a force for desire preservation. (2) There’s also a loopy thing where Approval Reward influences self-reflective desires, which in turn influence Approval Reward, e.g. by changing who you admire. (See Approval Reward post §5–§6.) This can (mildly) lock in desires. (3) Even Approval Reward itself leads not only to “proud feeling about what I’m up to right now” (Approval Reward post §3.2), which does not particularly induce desire-preservation, but also to “desire to actually interact with and impress a real live human sometime in the future”, which is on the left side of that figure in §0.3, and which (being consequentialist) does induce desire-preservation and the other instrumental convergence stuff.

- ^

If an Approval-Reward-free AGI wants X and wants Y, then it could get more X by no longer wanting Y, and it could get more Y by no longer wanting X. So there’s a possibility that AGI reflection could lead to “total victory” where one desire erases another. But I (tentatively) think that’s unlikely, and that the more likely outcome is that the AGI would continue to want both X and Y, and to split its time and resources between them. A big part of my intuition is: you can theoretically have a consequentialist utility-maximizer with utility function $U = \log X + \log Y$, and it will generally split its time between X and Y forever, and this agent is reflectively stable. (The logarithm ensures that X and Y have diminishing returns. Or if that’s not diminishing enough, consider , etc.)
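The following is a quick numerical check of the claim about a log-utility maximizer, written as a sketch. It assumes a fixed budget that can be split between X and Y, and it grid-searches the split. The budget, the optional weights `wx` and `wy`, and the function names are illustrative assumptions, not from the post.

```python
import math

def utility(x: float, y: float, wx: float = 1.0, wy: float = 1.0) -> float:
    """Hypothetical U = wx*log(X) + wy*log(Y); the logs give diminishing returns."""
    return wx * math.log(x) + wy * math.log(y)

def best_split(budget: float, wx: float = 1.0, wy: float = 1.0, steps: int = 1000) -> float:
    """Grid-search how much of the budget goes to X (the remainder goes to Y)."""
    candidates = [budget * i / steps for i in range(1, steps)]
    return max(candidates, key=lambda x: utility(x, budget - x, wx, wy))

budget = 10.0
x = best_split(budget)
print(f"spend {x:.2f} on X, {budget - x:.2f} on Y")  # spend 5.00 on X, 5.00 on Y

# Reflection check: judged by the CURRENT utility, a future self that stopped
# wanting Y (and so put almost everything into X) looks much worse.
eps = budget / 1000
print(utility(x, budget - x) > utility(budget - eps, eps))  # True
```

With weights, the optimum moves to a fraction wx / (wx + wy) of the budget on X. For example, wx=3 and wy=1 put three quarters of the budget on X. The agent still never zeroes out either term, because log(y) goes to −∞ as y approaches 0.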

- ^

To show how widespread this is, I don’t want to cherry-pick, so my two examples will be the two most recent movies that I happen to have watched, as I’m sitting down to write this paragraph. These are: Avengers: Infinity War & Ant-Man and the Wasp. (Don’t judge, I like watching dumb action movies while I exercise.)

Spoilers for the Marvel Cinematic Universe film series (pre-2020) below:

The former has a wonderful example. The heroes can definitely save trillions of lives by allowing their friend Vision to sacrifice his life, which by the way he is begging to do. They refuse, instead trying to save Vision and save the trillions of lives. As it turns out, they fail, and both Vision and the trillions of innocent bystanders wind up dead. Even so, this decision is portrayed as good and proper heroic behavior, and is never second-guessed even after the failure. (Note that “Helping a friend in need who is standing right there” has very strong immediate social approval for reasons explained in §6 of Social drives 1 (“Sympathy Reward strength as a character trait, and the Copenhagen Interpretation of Ethics”).) (Don’t worry, in a sequel, the plucky heroes travel back in time to save the trillions of innocent bystanders after all.)

In the latter movie, nobody does anything quite as outrageous as that, but it’s still true that pretty much every major plot point involves the protagonists risking themselves, or their freedom, or the lives of unseen or unsympathetic third parties, in order to help their friends or family in need—which, again, has very strong immediate social approval.

- ^

And @Matthew Barnett! This whole section is based on (and partly copied from) a comment thread last year between him and me.

- ^

- ^

Superintelligences might be able to design such rules amongst themselves, for all I know, although it would probably involve human-incompatible things like “merging” (jointly creating a successor ASI then shutting down). Or we might just get a unipolar outcome in the first place (e.g. many copies of one ASI with the same non-indexical goal), for reasons discussed in my post Foom & Doom §1.8.7.

- My AGI safety research—2025 review, ’26 plans by (11 Dec 2025 17:05 UTC; 136 points)

- “Act-based approval-directed agents”, for IDA skeptics by (18 Mar 2026 18:47 UTC; 68 points)

- Social drives 2: “Approval Reward”, from norm-enforcement to status-seeking by (12 Nov 2025 20:40 UTC; 42 points)

- Social drives 1: “Sympathy Reward”, from compassion to dehumanization by (10 Nov 2025 14:53 UTC; 36 points)

- [Advanced Intro to AI Alignment] 2. What Values May an AI Learn? — 4 Key Problems by (2 Jan 2026 14:51 UTC; 33 points)

- 's comment on Alignment Fellowship by (26 Dec 2025 6:24 UTC; 20 points)

- On Steven Byrnes’ ruthless ASI, (dis)analogies with humans and alignment proposals by (20 Feb 2026 15:32 UTC; 9 points)

- 's comment on williawa’s Shortform by (17 Apr 2026 23:14 UTC; 3 points)

- 's comment on Disciplined iconoclasm by (EA Forum; 13 Dec 2025 20:17 UTC; 2 points)

- 's comment on Tristan’s list of things to write by (9 Dec 2025 21:00 UTC; 1 point)

Do you think sociopaths are sociopaths because their approval reward is very weak? And if so, why do they often still seek dominance/prestige?

Basically yes (+ also sympathy reward); see Approval Reward post §4.1, including the footnote.

My current take is that prestige-seeking comes mainly from Approval Reward, and is very weak in (a certain central type of) sociopath, whereas dominance-seeking comes mainly from a different social drive that I discussed in Neuroscience of human social instincts: a sketch §7.1, but mostly haven’t thought about too much, and which may be strong in some sociopathic people (and weak in others).

I guess it’s also possible to prestige-seek not because prestige seems intrinsically desirable, but rather as a means to an end.

My default mental model of an intelligent sociopath includes something like this:

You find yourself wandering around in a universe where there’s a bunch of stuff to do. There’s no intrinsic meaning, and you don’t care whether you help or hurt other people or society; you’re just out to get some kicks and have a good time, preferably on your own terms. A lot of neat stuff has already been built, which, hey, saves you a ton of effort! But it’s got other people and society in front of it. Well, that could get annoying. What do you do?

Well, if you learn which levers to pull, sometimes you can get the people to let you in ‘naturally’. Bonus if you don’t have to worry as much about them coming back to inconvenience you later. And depending on what you were after, that can turn out as prestige—‘legitimately’ earned or not, whatever was easier or more fun. (Or dominance; I feel like prestige is more likely here, but that might be dependent on what kind of society you’re in and what your relative strengths are. Also, sometimes it’s much more invisible! There are selection effects in which sociopaths become well-known versus quietly preying somewhere they won’t get caught.)

Beyond that, a lot of times the people are the good stuff. They’re some of the most complicated and interesting toys in the world to play with! And dominance and prestige both look like shiny score levers from a distance and can cause all sorts of fun ripply effects when you jangle them the right way. So even if you’re not drawn to them for intrinsic, content-specific reasons, you can get drawn in by the game, just like how people who play video games have their motivations shaped by contextual learning toward whatever the gameplay loop focuses on.

Relatedly, how do we model the reflective desires of sociopaths in the absence of Approval Reward?

I don’t know! IIRC they talk about related things a bit in this podcast but I wound up not really knowing what to make of it. (But I listened to it a year ago, and I think I’ve learned new things since then; perhaps I should try listening to it again.) UPDATE MAY 2026: It actually makes a ton of sense in my model, see this comment.

Nice post. Approval reward seems like it helps explain a lot of human motivation and behavior.

I’m wondering whether approval reward would really be a safe source of motivation in an AGI though. From the post, it apparently comes from two sources in humans:

Internal: There’s an internal approval reward generator that rewards you for doing things that other people would approve of, even if no one is there. “Intrinsically motivated” sounds very robust but I’m concerned that this just means that the reward is coming from an internal module that is possible to game.

External: Someone sees you do something and you get approval.

In each case it seems the person is generating behaviors and then there is an equally strong/robust reward classifier, internally or externally, so it’s hard to game.

The internal classifier is hard to game because we can’t edit our minds.

And other people are hard to fool. For example, there are fake billionaires but they are usually found out and then get negative approval so it’s not worth it.

But I’m wondering whether an AGI with approval reward would modify itself to reward-hack, or figure out how to fool humans in clever ways (like the RLHF robot arm) to get more approval.

Though maybe implementing an approval reward in an AI gets you most of the alignment you need and it’s robust enough.

I definitely have strong concerns that Approval Reward won’t work on AGI. (But I don’t have an airtight no-go theorem either. I just don’t know; I plan to think about it more.) See especially footnote 7 of this post, and §6 of the Approval Reward post, for some of my concerns, which overlap with yours.

(I hope I wasn’t insinuating that I think AGI with Approval Reward is definitely a great plan that will solve AGI technical alignment. I’m open to wording changes if you can think of any.)

From personal experience, the internal Approval module does in fact seem possible to game, specifically by manipulating whose approval it’s seeking.

I became very weird (from the perspective of everyone else) very fast when I replaced the abstract-person-which-would-do-the-approving with a fictional person-archetype of my choosing. That process seems to have injected a bunch of my object-level desires into my Approval system. I now find myself feeling pride at doing things with selfish benefit in expectation, which ~never happened before (absent a different reason to feel about that action). It also killed certain subsets of my previous emotional reactions; for example, the deaths of loved ones basically haven’t affected me at all since (though that prospect still seems dreadful in anticipation).

I had been pathologically selfless before, and I’m now considerably less-so, but not in a natural-seeming kind of way. I’ve become an amalgam of very selfish motivations, coexisting with a subset of my previous very selfless morality. It’s… honestly a mess, but I wouldn’t call the attempt actually unsuccessful, just far from perfectly executed.

Curated! I very much like the project of finding upstream cruxes to different intuitions regarding AI alignment. Oddly, such cruxes can be invisible until someone points them out. It’s also cool how Steven’s insight here isn’t a one-off post, but flows from his larger research project and models, kind of the project paying dividends. (To clarify, in curating this I’m not saying it’s definitely correct according to me, but I find it quite plausible.)

I also appreciate that most times when I or others try to do this mechanistic modeling of human minds, it ends up very dry and others don’t want to read it even when it feels compelling to the author; somehow Steven has escaped that, by dint of writing quality or idea quality, I’m not sure.

I really liked this and when the relevant Annual Review comes around, expect to give it at least a 4.

A complementary angle: we shouldn’t be arguing over whether or not we’re in for a rough ride, we should be figuring out how to not have that.

I suspect more people would be willing to (both empirically and theoretically) get behind ‘ruthless consequentialist maximisers are one extreme of a spectrum which gets increasingly scary and dangerous; it would be bad if those got unleashed’.

Sure, skeptics can still argue that this just won’t happen even if we sit back and relax. But I think then it’s clearer that they’re probably making a mistake (since origin stories for ruthless consequentialist maximisers are many and disjunctive). So the debate becomes ‘which sources of supercompetent ruthless consequentialist maximisers are most likely and what options exist to curtail that?’.

I appreciate this post for working to distill a key crux in the larger debate.

Some quick thoughts:

1. I’m having a hard time understanding the “Alas, the power-seeking ruthless consequentialist AIs are still coming” intuition. It seems like a lot of people in this community have this intuition, and I feel very curious why. I appreciate this crux getting attention.

2. Personally, my stance is something more like, “It seems very feasible to create sophisticated AI architectures that don’t act as scary maximizers.” To me it seems like this is what we’re doing now, and I see some strong reasons to expect this to continue. (I realize this isn’t guaranteed, but I do think it’s pretty likely)

3. While the human analogies are interesting, I assume they might appeal more to the “consequentialist AIs are still coming” crowd than people like myself. Humans were evolved for some pretty wacky reasons, and have a large number of serious failure modes. Perhaps they’re much better than some of what people imagine, but I suspect that we can make AI systems that have much more rigorous safety properties in the future. I personally find histories of engineering complex systems in predictable and controllable ways to be much more informative, for these challenges.

4. You mention human intrinsic motivations as a useful factor. I’d flag that in a competent and complex AI architecture, I’d expect that many subcomponents would have strong biases towards corrigibility and friendliness. This seems highly analogous to human minds, where it’s really specific sub-routines and similar that have these more altruistic motivations.

We probably mostly disagree because you’re expecting LLMs forever and I’m not. For example, AlphaZero does act as a scary maximizer. Indeed, nobody knows any way to make an AI that’s superhuman at Go, except by techniques that produce scary maximizers. Is there a way to make an AI that’s superhuman at founding and running innovative companies, but isn’t a scary maximizer? That’s beyond present AI capabilities, so the jury is still out.

The issue is basically “where do you get your capabilities from?” One place to get capabilities is by imitating humans. That’s the LLM route, but (I claim) it can’t go far beyond the hull of existing human knowledge. Another place to get capabilities is specific human design (e.g. the heuristics that humans put into Deep Blue), but that has the same limitation. That leaves consequentialism as a third source of capabilities, and it definitely works in principle, but it produces scary maximizers.

Yup, my expectation is that ASI will be even scarier than humans, by far. But we are in agreement that humans with power are much-more-than-zero scary.

I’m not sure what you mean by “subcomponents”. Are you talking about subcomponents at the learning algorithm level, or subcomponents at the trained model level? For the former, I think both LLMs and human brains are mostly big simple-ish learning algorithms, without much in the way of subcomponents. For the latter (where I would maybe say “circuits” instead of “subcomponents”?), I would also disagree but for different reasons, maybe see §2 of this post.

Thanks so much for that explanation. I’ve started to review those posts you linked to and will continue doing so later. Kudos for clearly outlining your positions, that’s a lot of content.

> “We probably mostly disagree because you’re expecting LLMs forever and I’m not.”

I agree that RL systems like AlphaZero are very scary. Personally, I was a bit more worried about AI alignment a few years ago, when this seemed like the dominant paradigm.

I wouldn’t say that I “expect LLMs forever”, but I would say that if/when they are replaced, I think it’s more likely than not that they will be replaced by a system whose scariness is similar to that of LLMs or lower. The main reason is that I think there’s a very large correlation between “not being scary” and “being commercially viable”, so I expect a lot of pressure for non-scary systems.

The scariness of RL systems like AlphaZero seems to go hand-in-hand with some really undesirable properties, such as [being a near-total black box] and [being incredibly hard to intentionally steer]. It’s definitely possible that in the future some capabilities advancement might mean that scary systems have such an intelligence/capabilities advantage that this outweighs the disadvantages, but I see this as unlikely (though definitely a thing to worry about).

> I’m not sure what you mean by “subcomponents”. Are you talking about subcomponents at the learning algorithm level, or subcomponents at the trained model level?

I’m referring to scaffolding. As in, an organization makes an “AI agent” but this agent frequently calls a long list of specific LLM+Prompt combinations for certain tasks. These subcalls might be optimized to be narrow + [low information] + [low access] + [generally friendly to humans] or similar. This can be made more advanced with a large variety of fine-tuned models, but that might be unlikely.
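Here is a minimal sketch of the kind of scaffolding described in this comment, under stated assumptions. The agent routes each task to one of a fixed list of narrow prompt configurations, and each configuration is granted an explicit (often empty) set of tools. `SubCall`, `SUBCALLS`, `run_agent_step` and `fake_model` are hypothetical names. `fake_model` is a stub standing in for a real LLM API call.

```python
from dataclasses import dataclass
from typing import Callable, FrozenSet

@dataclass(frozen=True)
class SubCall:
    """One narrow LLM+prompt combination with an explicit tool allowance."""
    prompt: str
    allowed_tools: FrozenSet[str] = frozenset()

def fake_model(prompt: str, task: str) -> str:
    """Hypothetical stand-in for a real LLM call; it just echoes its inputs."""
    return f"[{prompt}] {task}"

# Hypothetical registry: each entry is narrow, low-information, and low-access.
SUBCALLS = {
    "summarize": SubCall("Summarize the text briefly and neutrally."),
    "translate": SubCall("Translate the text into English."),
    "lookup": SubCall("Answer only from the attached document.",
                      frozenset({"read_document"})),
}

def run_agent_step(kind: str, task: str,
                   requested_tools: FrozenSet[str] = frozenset(),
                   model: Callable[[str, str], str] = fake_model) -> str:
    sub = SUBCALLS[kind]
    # Low access: refuse any tool this subcall was not explicitly granted.
    extra = set(requested_tools) - sub.allowed_tools
    if extra:
        raise PermissionError(f"{kind!r} may not use {sorted(extra)}")
    return model(sub.prompt, task)

print(run_agent_step("translate", "Bonjour"))
```

The sketch only shows the structure being proposed. Whether narrow subcalls like these keep the overall agent safe is exactly what the rest of this thread disputes.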

I have a three-way disjunctive argument on why I don’t buy that:

(1) The really scary systems are smart enough to realize that they should act non-scary, just like smart humans planning a coup are not gonna go around talking about how they’re planning a coup, but rather will be very obedient until they have an opportunity to take irreversible actions.

(2) …And even if (1) were not an issue, i.e. even if the scary misaligned systems were obviously scary and misaligned, instead of secretly, that still wouldn’t prevent those systems from being used to make money—see Reward button alignment for details. Except that this kind of plan stops working when the AIs get powerful enough to take over.

(3) …And even if (1-2) were not issues, i.e. even if the scary misaligned systems were useless for making money, well, MuZero did in fact get made! People just like doing science and making impressive demos, even without profit incentives. This point is obviously more relevant for people like me who think that ASI won’t require much hardware, just new algorithmic ideas, than people (probably like you) who expect that training ASI will take a zillion dollars.

I think this points to another deep difference between us. If you look at humans, we have one brain design, barely changed since 100,000 years ago, and (many copies of) that one brain design autonomously figured out how to run companies and drive cars and go to the moon and everything else in science and technology and the whole global economy.

I expect that people will eventually invent an AI like that—one AI design and bam, it can just go and autonomously figure out anything—whereas you seem to be imagining that the process will involve laboriously applying schlep to get AI to do more and more specific tasks. (See also my related discussion here.)

I agree that there is an optimization pressure here, but I don’t think it robustly targets “don’t create misaligned superintelligence”; rather, it targets “customers and regulators not being scared”, which is very different from “don’t make things customers and regulators should be scared of”.

I was thinking more of internal systems that a company would have enough faith in to deploy (a 1% chance of severe failure is pretty terrible!) or customer-facing things that would piss off customers more than scare them.

Getting these right is tremendously hard. Lots of companies are trying and mostly failing right now. There’s a ton of money in just “making solid services/products that work with high reliability.”

My impression is that companies are very short-sighted, optimizing for quarterly and yearly results even if it has a negative effect on the company’s performance in 5 years and even if it has negative effects on society. I also think many (most?) companies view regulations not as signals for how they should be behaving but more like board game rules: if they can change or evade the rules to profit, they will.

I’ll also point out that it is probably in the best interest of many customers to be pissed off. Sycophantic products make more money than ones that force people to confront ways they are acting against their own values. It is my estimation that that is a pretty big problem.

But the thing I’m most worried about is companies succeeding at “making solid services/products that work with high reliability” without actually solving the alignment problem, and then it becomes even more difficult to convince people there even is a problem as they further insulate themselves from anyone who disagrees with their hyper-niche worldview.

Thanks for the clarification.

> But the thing I’m most worried about is companies succeeding at “making solid services/products that work with high reliability” without actually solving the alignment problem, and then it becomes even more difficult to convince people there even is a problem as they further insulate themselves from anyone who disagrees with their hyper-niche worldview.

The way I see it, “making solid services/products that work with high reliability” is solving a lot of the alignment problem. As in, this can get us very far into making AI systems do a lot of valuable work for us with very low risk.

I imagine that you’re using a more specific definition of it than I am here.

I might be. I might also be using a more general definition. Or just a different one. Alas, that’s natural language for you.

I agree, but feel it’s important to note the low risk is only locally low. Globally I think the risk is catastrophic.

I think the biggest difference in our POV might be that I think the systems we are using to control what happens in our world (markets, governments, laws) are already misaligned and heading towards disasters, and if we allow them to continue getting more capable they will not suddenly be capable enough to get back on track because they were never aligned to target human friendly preferences in the first place. Rather, they target proxies, but capabilities have gone beyond the point where those proxies are articulate enough for good outcomes. We need to switch focus from capabilities to alignment.

Funny, I see “high reliability” as part of the problem rather than part of the solution. If a group is planning a coup against you, then your situation is better not worse if the members of this group all have dementia. And you can tell whether or not they have dementia by observing whether they’re competent and cooperative and productive before any coup has started.

If the system is not the kind of thing that could plot a coup even if it wanted to, then it’s irrelevant to the alignment problem, or at least to the most important part of the alignment problem. E.g. spreadsheet software and bulldozers likewise “do a lot of valuable work for us with very low risk”.

Curious what evidence makes you think that “being a near-total black box” holds back adoption of these systems? Social media companies have very successfully deployed and protected their black box recommendation algorithms despite massive negative societal consequences, and the current transformer models are arguably black boxes with massive adoption.

Further, “being incredibly hard to intentionally steer” is a baseline assumption for me about how practically any conceivable agentic AI works, and given that we almost surely cannot get statistical guarantees about AI agent behaviour in open settings, I don’t see any reason (especially in the current political environment) that this property would be a showstopper.

Having actually worked for a tech giant on recommendation systems (specifically, for music), I can say they are very much not black boxes to the people building them. They use fairly old and quite understandable ML techniques to predict engagement, from every obvious signal that the engineers involved can think of that might help do so, and they’re tweaked a lot, and every tweak is A/B tested at huge scale. It’s a very obvious learning algorithm, with a lot of hand-engineering involved. Getting a 0.5% increase in a secondary metric that your data scientists have shown is correlated to your north-star metric is a major win. The only element of all this that’s in any way hard to predict is the social side effects of maximizing engagement. So the recommendation algorithms might be a black box to users, but by LLM standards they’re practically transparent.

Is that still the case? I had the impression that the YouTube recommender may be a transformer now, though I’m not sure why I had this hunch.

I left a couple of years ago. At that time, for the music aspect of the company that I was working for, the main recommender was a great many carefully-crafted input signals (many having already been processed by a wide variety of ML models) fed into a small tower of MLP layers with multiple output heads attempting to predict different aspects of engagement with the item, feeding into a data-scientist-derived formula. I gather the main video recommender then used something comparable. Quite old-school ML. Since almost all of our inputs generally weren’t in the form of meaningful sequences, there weren’t many obvious problems that a transformer could help with — for those that were, I’d expect it to be applied only to that portion of the data (e.g. chunks of text). Indeed, that was starting to happen when I was there (e.g. for search where the user input actually is a chunk of text). In general, they put a lot of effort into having data scientists understand what was going on inside the system in as much detail as possible.

They also did actually try to think about the possible social consequences of their algorithms — for example, they maximized account retention, not total engagement, which turned out to mean that past a certain point total engagement had very diminishing returns. They also classified content into buckets, including certain ones of which they did NOT want to encourage engagement with, even if it was still available for users actively looking for it (less of an issue for music, admittedly), and other highly promoted ones which seemed to correlate with people’s self-reported long-term happiness with using the site — which tended to be quite “worthy” (again, rare for music). (Note that I am explicitly NOT claiming all social media companies were then acting like that.)
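The recommender architecture described in this comment can be sketched in plain Python. It has four parts: many precomputed input signals, a small MLP tower, several output heads that each predict one aspect of engagement, and a hand-derived formula that combines the heads. This is an untrained toy with random weights. The head names, the `HEAD_WEIGHTS` formula and the feature vectors are all hypothetical. Training, A/B testing and retention modelling are omitted.

```python
import math
import random
from typing import Dict, List

def dense(weights: List[List[float]], bias: List[float], v: List[float]) -> List[float]:
    """Fully connected layer: one output per weight row."""
    return [sum(w * a for w, a in zip(row, v)) + b for row, b in zip(weights, bias)]

def relu(v: List[float]) -> List[float]:
    return [max(0.0, a) for a in v]

def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))

# Hypothetical hand-derived formula combining the heads' predicted probabilities.
HEAD_WEIGHTS: Dict[str, float] = {"play": 1.0, "save": 2.0, "skip": -1.5}

class MultiHeadRanker:
    """Shared MLP tower feeding one sigmoid head per engagement signal."""

    def __init__(self, n_inputs: int, hidden: int, seed: int = 0):
        rng = random.Random(seed)
        self.w1 = [[rng.gauss(0, 0.1) for _ in range(n_inputs)] for _ in range(hidden)]
        self.b1 = [0.0] * hidden
        self.heads = {name: [rng.gauss(0, 0.1) for _ in range(hidden)] for name in HEAD_WEIGHTS}

    def predict(self, features: List[float]) -> Dict[str, float]:
        h = relu(dense(self.w1, self.b1, features))
        return {name: sigmoid(sum(w * a for w, a in zip(ws, h)))
                for name, ws in self.heads.items()}

def score(predictions: Dict[str, float]) -> float:
    return sum(HEAD_WEIGHTS[name] * p for name, p in predictions.items())

model = MultiHeadRanker(n_inputs=4, hidden=8)
candidates = {"track_a": [0.2, 1.0, 0.0, 0.5], "track_b": [0.9, 0.1, 0.3, 0.0]}
ranking = sorted(candidates, key=lambda t: score(model.predict(candidates[t])), reverse=True)
print(ranking)
```

Engineers choose every piece here: the inputs, each head's target and the combining formula. That is why such a system is comparatively inspectable, which is the commenter's point.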

I agree that some companies do use RL systems. However, I’d expect that most of the time, the black-box nature of some of these systems is not actively preferred. They use them despite the black-box nature, because these are specific situations where the benefits outweigh the costs, not because of them.

“current transformer models are arguably black boxes with massive adoption.” → They’re typically much less so than RL systems. There’s a fair bit of customization that can be done with prompting, and the prompting is generally English-readable.

Do you think AI-empowered people / companies / governments also won’t become more like scary maximizers? Not even if they can choose how to use the AI and how to train it? This seems a super strong statement and I don’t know any reasons to believe it at all.

“Do you think AI-empowered people / companies / governments also won’t become more like scary maximizers?” → My statements above were very focused on AI architectures / accident risk. I see people / misuse risk as a fairly distinct challenge/discussion.

You might be interested in a model / terminology / lens I’m trying to get off the ground: Outcome Influencing Systems (OISs) are any system with preferences and capabilities that use their capabilities to influence reality towards outcomes according to their preferences. An important aspect of this definition is that it includes not just AI and ASI, but also humans and human organizations. I think it’s useful because then we can more easily talk about the risk of misaligned OIS which makes it explicit that we are talking both about potential ASI and about organizations that may create ASI and about organizations that may pose catastrophic risk due to their capabilities, regardless of whether they are using anything laypeople would identify as an AI.

I think this was in the Sequences, the notion of “optimization process”. Eliezer describes here how he realized this notion is important, by drawing a line through three points: natural selection, human intelligence, and an imaginary genie / outcome-pump device.

Yeah! That was the post that got me to really deeply believe the Orthogonality Thesis. “Naturalistic Awakening” and “Human Guide to Words” are my two favourite sequences.

OISs are actually a slightly broader definition than optimization processes for two reasons though: (1) OISs have capabilities, not intelligence, and (2) OIS capabilities are arbitrarily general.

(1) The important distinction is that OISs are defined in terms of capabilities not in terms of intelligence, where capabilities can be broken down into skills, knowledge, and resource access.

This is valuable for breaking skills down into skill domains, which is relevant for risk assessment, while intelligence is a kind of generalizable skill that seems to be very poorly defined and usually more distracting to valuable analysis in my opinion.

Also, resource access has the compounding property that knowledge and skill also have which could potentially lead to dangerously compounding capabilities. Making it explicit that “intelligence” is not the only aspect of an OIS that has this compounding property seems important.

(2) Is less well considered and less important. The example I have for this is a bottle cap. A bottle cap makes it more likely that water will stay in a bottle, but it isn’t an optimizer, it is an optimized object. When viewed through the optimizer lens, the bottle cap doesn’t want to keep the water in, rather, it was optimized by something that does want to keep the water in, so it is not an optimizer. That is, the cap has extremely fragile capabilities. It keeps the water in when it is screwed on, but if it is unscrewed it has no ability on its own to put itself back on or try to continue keeping the water in. This must be very nearly the limit in how little it is possible for capabilities to generalize.

However, from the OIS lens, the cap indeed makes water staying in the bottle a more likely outcome, and we can say that in some sense it does want to keep the water in.

I find it a little frustrating how general this makes the definition, and I’m sure other people will as well, but I think it is more useful in this case to cast a very wide net and then try to understand the differences between the kinds of things caught by that net, rather than working with the overly limited definitions that fail to reference the objects I am interested in. It also highlights the potential issues with highly optimized fragile OIS. If we need them to generalize, it is a problem that they won’t, and if we are expecting safety because something “isn’t actually an optimizer” that may not matter if it is sufficiently well optimized over a sufficiently dangerous domain of capability.
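One possible way to make the bottle-cap example quantitative treats "outcome" in the probability-theory sense. It measures a system's influence as the change in an outcome's probability when the system is present versus absent. This is a hypothetical toy, not a definition from the OIS proposal, and both probabilities are made up.

```python
from dataclasses import dataclass

@dataclass
class BottleWorld:
    p_knocked_over: float  # hypothetical chance the bottle tips over
    p_cap_loose: float     # hypothetical chance the cap has been unscrewed

def p_water_stays(world: BottleWorld, cap_present: bool) -> float:
    if not cap_present:
        return 1.0 - world.p_knocked_over
    # The cap only helps while screwed on; it cannot re-screw itself.
    return 1.0 - world.p_knocked_over * world.p_cap_loose

def influence(world: BottleWorld) -> float:
    """Shift in P(outcome) attributable to the system's presence."""
    return p_water_stays(world, True) - p_water_stays(world, False)

world = BottleWorld(p_knocked_over=0.3, p_cap_loose=0.1)
print(round(influence(world), 2))  # 0.27
```

Setting `p_cap_loose` to 1.0 drives the influence to zero. This mirrors the fragility described above: once unscrewed, the cap does nothing to restore its effect.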

I think if this were going to stick, you would already be seeing other people using it here. The fact that it didn’t spread quickly is a bad sign about how your readers have evaluated it.

For myself, I find the term clunky. I don’t think you’re wrong to want to talk about it, but the term on its own already uses three of your five words for mass communication; they’re rare words, they’re long words, and the meaning of each in context is a bit odd. They also rely on people having a habit of trying to generalize. Most of those drawbacks are easy to work around on LessWrong, but there’s a much more important reason the term doesn’t work: it’s simply not necessary to memorize. If I were to use a three-word phrase to describe consequence-dependent processes, I have an infinite wellspring of rephrasings of those three words at hand in my head, and which rephrasing I use depends on exactly which combination of subtle meanings I want to refer to right now.

The flip side of this is that I do agree with you that consequence-steering processes are a core source of concern and are general between humans and AIs, and that there’s an unsolved problem of how to specify goodness in a way that still means “good things” if put in a spreadsheet (perhaps one that is gigabytes large) and number-go-up’ed about.

Unfortunately I am guided by my inside view, so I will continue discussing OISs until people do start using the term or until I come to understand the flaws in the terminology. By discussing it with me you are helping with this process, so thank you : )

I would love to hear more thoughts on this. I examined many other sets of words before settling on these ones. If you are interested, I can discuss why I think they are better than any of the examples you suggested.

Although I like the way “consequence” implies the involvement of causality, I think “outcome” is preferable to “consequence” because I want to ground the terminology in formal mathematics and would like to leverage the term “outcome” from probability theory.

The term “process” is one that I spent a good amount of time considering, especially in the phrase “decision process”, but I ended up preferring the term “system” because of the implication that we should be thinking not only about actions, but about objects and the actions those objects can perform. An OIS is a physical thing. No OIS exists without being instantiated by some part of reality.

I prefer “influencing” over “steering” because “steering” implies especially competent influence which is explicitly incorrect when reasoning about multiple agents operating in the same environment with incompatible goals and similar levels of capabilities. It is not true that either agent steers. Both agents influence.

I find the phrase “consequence-dependent processes (CDP)” very interesting. With “dependent” in place of “influencing” or “steering”, CDP is reminiscent of the outcome pump discussed in The Hidden Complexity of Wishes and My Naturalistic Awakening. Notably, though, CDP doesn’t seem to imply that the process causes the consequence to become more likely or certain; rather, it describes some kind of acausal dependence on the consequence. I don’t know if this is what you meant to imply, but it is certainly different from an OIS. While a CDP is acausal and doesn’t necessarily affect outcome likelihoods, an OIS operates according to the causal rules of our world, explicitly making some outcomes more likely than others.

The art of abstraction involves generalization and specification. I love both and wish more people would delight in carefully constructed abstraction. Rather than relying on people already having a habit of generalizing, I would say OIS terminology is perhaps trying to encourage it.

I think this is a flaw, not a feature. My goal with creating a standard set of terminology is (among other things) to avoid the ambiguity of subtle rephrasings and to create the shorthand words “OIS” and “OISs” pronounced “oh-ee” and “oh-ees” to make it easier to articulately discuss specific sets of important general phenomena.

I strongly agree. I think this is basically Goodhart’s law. My thinking about and talking about OIS is very much a result of trying to think about how to solve the generalized AI Alignment Problem of representing what we want in a sufficiently accurate, articulate, and precise encoding that it can be used as the preferences for an arbitrarily capable OIS without that OIS becoming misaligned.
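One way to see the Goodhart worry in miniature is a toy selection experiment, sketched here under loudly stated assumptions: true value and proxy noise are both standard Gaussians, and the function name `goodhart_gap` and all its parameters are hypothetical. Picking the items that a noisy proxy ranks highest systematically overestimates their true value. This only illustrates the mild, regressional form of Goodhart’s law; the concern above, about an arbitrarily capable OIS optimizing a specification, is closer to the extremal forms, which this sketch does not model.

```python
import random
import statistics


def goodhart_gap(n: int = 10_000, top_k: int = 100, noise_sd: float = 1.0, seed: int = 0):
    """Select the top_k items by a noisy proxy and compare proxy vs. true value.

    Toy model (assumption): each item has a true value ~ N(0, 1), and we can
    only observe proxy = true + N(0, noise_sd). Returns (mean proxy, mean true)
    over the items that the proxy ranks highest.
    """
    rng = random.Random(seed)  # seeded so the experiment is reproducible
    items = []
    for _ in range(n):
        true = rng.gauss(0.0, 1.0)
        proxy = true + rng.gauss(0.0, noise_sd)
        items.append((proxy, true))
    chosen = sorted(items, reverse=True)[:top_k]  # optimize the proxy
    return (statistics.mean(p for p, _ in chosen),
            statistics.mean(t for _, t in chosen))


proxy_mean, true_mean = goodhart_gap()
print(f"selected by proxy: proxy mean {proxy_mean:.2f}, true mean {true_mean:.2f}")
```

The harder the selection (smaller `top_k` relative to `n`), the larger the gap between what the proxy promises and what is delivered; with `noise_sd=0.0` the gap disappears, because the proxy then is the thing we want.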

Thanks again. I appreciate your critical engagement.

To explain my disagreement, I’ll start with an excerpt from my post here:

So that’s one piece of where I’m coming from.

Meanwhile, as it happens, I have worked on “engineering complex systems in predictable and controllable ways”, in a past job at an engineering firm that made guidance systems for nuclear weapons and so on. The techniques we used involved understanding the engineered system incredibly well, understanding the environment / situations that the system would be in incredibly well, knowing exactly what the engineered system should do in any of those situations, and thus developing strong confidence and controls to ensure that the system would in fact do those things.

If I imagine applying those engineering techniques, or anything remotely like them, to “Everything, Inc.”, I just can’t. They seem obviously totally inapplicable. I know extraordinarily little about what any of these millions of AGIs is doing, or where they are, or what they should be doing.

See what I mean?

Your example of “Everything Inc” is also similar to what I’m expecting. As in, I agree with:

1. The large majority of business strategy/decisions/implementation can (somewhat) quickly be done by AI systems.

2. There will be strong pressures to improve AI systems, due to (1).

That said, I’d expect:

1. The benefits are likely to be (more) distributed. Many companies will be simultaneously using AI to improve their standings. This leads to a world where there’s not a ton of marginal low-hanging fruit for any single company. I think this is broadly what’s happening now.

2. A great deal of work will go into making many of these systems reliable, predictable, corrigible, legally compliant, etc. I’d expect companies to really dislike being blindsided by sub-AI systems that do bizarre things.

3. This is a longer shot, but I think there’s a lot of potential for strong cooperation between companies, organizations, and (effective) governments. A lot of the negatives of maximizing businesses come from negative externalities and the like, which can also be viewed as coordination/governance failures. I’d naively expect this to mean that if power is distributed among multiple capable entities at time T, these entities would likely wind up engaging in a lot of positive-sum interactions with each other. This seems good for many S&P 500 holders.

“or anything remotely like them, to ‘Everything, Inc.’, I just can’t. They seem obviously totally inapplicable.”

This seems tough to me, but quite possible, especially as we get much stronger AI systems. I’d expect that we could (with a lot of work) have a great deal of:

1. Categorization of potential tasks into discrete/categorizable items.

2. Simulated environments that are realistic enough.

3. Innovations in finding good trade-offs between task competence and narrowness.

4. Substantially more sophisticated and powerful LLM task eval setups.

I’d expect this to be a lot of work. But at the same time, I’d expect a lot of it to be strongly commercially useful.

What’s your take on why Approval Reward was selected for in the first place, as opposed to sociopathy?

I find myself wondering if non-behavioral reward functions are more powerful in general than behavioral ones, due to a lower tendency towards wireheading, etc. (consider the laziness and impulsivity of sociopaths). This would especially apply to ones such as Approval Reward, which can be “customized” depending on the details of the environment and what sort of agent it would be most useful to become.

Good question!

There are lots of things that an ideal utility maximizer would do via means-end reasoning, but that humans and animals do instead because those things seem valuable as ends in themselves, thanks to the innate reward function. Examples include curiosity, as discussed in A mind needn’t be curious to reap the benefits of curiosity, and also play, injury-avoidance, etc. Approval Reward has the same property: whatever selfish end an ideal utility maximizer could achieve via Approval Reward, it could achieve at least as well by acting as if it had Approval Reward in situations where that’s in its selfish best interests, and not where it isn’t.

In all these cases, we can ask: why do humans in fact find these things intrinsically motivating? I presume that the answer is something like “humans are not automatically strategic”, which is even more true when they’re young and still learning. (“Humans are the least intelligent species capable of building a technological civilization.”) For example, people who can’t feel pain (e.g. due to leprosy or CIP) are often shockingly cavalier about bodily harm, even when they know consciously that it will come back to bite them in the long term. Consequentialist planning is often not strong enough to outweigh what seems appealing in the moment.

To rephrase more abstractly: for ideal rational agents, intelligent means-end planning towards X (say, gaining allies for a raid) is always the best way to accomplish that same X. If some instrumental strategy S (say, trying to fit in) is usually helpful towards X, means-end planning can deploy S when S is in fact useful, and not deploy S when it isn’t. But humans, who are not ideal rational agents, are often more likely to get X if they want X and also intrinsically want S as an end in itself. The costs of this strategy (i.e., still wanting S even in cases where it’s not useful towards X) are outweighed by the benefit (avoiding the failure mode of not pursuing S because you didn’t think of it, or couldn’t be bothered).
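The trade-off in the previous paragraph can be put as a toy expected-value calculation. This is a sketch under hypothetical assumptions, not a model from the post: `u`, `b`, `c`, and `q` (how often S helps towards X, its benefit when it helps, its cost when it doesn’t, and how often a bounded planner actually thinks of S) are illustrative parameters, and the three agents are idealizations.

```python
from fractions import Fraction as F


def payoffs(u, b, c, q):
    """Expected payoff per situation for three toy agents (hypothetical model).

    u: fraction of situations where strategy S actually helps towards X
    b: benefit from using S when it helps
    c: cost of using S when it doesn't help
    q: chance a bounded planner thinks of (and bothers with) S when it helps
    """
    ideal = u * b                      # means-end planning that uses S exactly when useful
    bounded = u * q * b                # means-end planning that often misses S
    intrinsic = u * b - (1 - u) * c    # wants S as an end; always uses it
    return ideal, bounded, intrinsic


ideal, bounded, intrinsic = payoffs(F(1, 2), 10, 2, F(1, 4))
print(ideal, bounded, intrinsic)  # 5 5/4 4
```

With these made-up numbers the agent that wants S intrinsically beats the bounded planner but never the ideal planner. In this model, intrinsic S beats bounded planning exactly when u·b·(1 − q) > (1 − u)·c, so the case for intrinsic S weakens as q approaches 1, i.e. as the planner gets closer to ideal.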

This doesn’t apply to all humans all the time, and I definitely don’t think it will apply to AGIs.

…For completeness, I should note that there’s an evo-psych theory that there has been frequency-dependent selection for sociopaths: if there are too many sociopaths in the population, everyone else improves their wariness and their ability to detect sociopaths and kill or exile them, but when sociopathy is rare, it’s adaptive (or at least, was adaptive in Pleistocene Africa). I haven’t seen any good evidence for this theory, and I’m mildly skeptical that it’s true. Wary or not, people will learn the character traits of people they’ve lived and worked with for years. It smells like a just-so story, or at least that’s my gut reaction. More importantly, the current population frequency of sociopathy is in the same general ballpark as that of schizophrenia, profound autism, etc., which seem (to me) very unlikely to have been adaptive in hunter-gatherers. My preferred theory is that there’s frequency-dependent selection across many aspects of personality, and then sometimes a kid winds up with a purely maladaptive profile because they’re at the tail of some distribution. [Thanks science banana for changing my mind on this.]
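For readers unfamiliar with frequency-dependent selection, here is a toy version of the mechanism that the theory above invokes (an illustration only, not an endorsement of the theory and not evidence for it). The fitness function `a / (1 + k*x)` and the parameters `a = 1.2`, `k = 10` are purely hypothetical assumptions, chosen so that sociopaths out-reproduce others when rare and under-reproduce when common; discrete replicator dynamics then pull their frequency to a stable interior equilibrium wherever it starts.

```python
def next_freq(x: float, a: float, k: float) -> float:
    """One generation of discrete replicator dynamics (toy model, assumption).

    Sociopaths have fitness a / (1 + k*x): above 1 when rare, falling as their
    frequency x rises and everyone else grows warier. Everyone else has fitness 1.
    """
    w_s = a / (1 + k * x)
    mean_w = x * w_s + (1 - x) * 1.0
    return x * w_s / mean_w


def simulate(x0: float, a: float = 1.2, k: float = 10.0, generations: int = 1000) -> float:
    """Iterate the toy dynamics from starting frequency x0."""
    x = x0
    for _ in range(generations):
        x = next_freq(x, a, k)
    return x


# Rare or common at the start, the toy population settles where w_s == 1,
# i.e. at x* = (a - 1) / k = 0.02 for these hypothetical parameters.
print(round(simulate(0.001), 4), round(simulate(0.5), 4))  # 0.02 0.02
```

The equilibrium sits wherever the two fitnesses are equal; nothing in the sketch says whether real ancestral populations ever had such a fitness landscape, which is exactly the point in dispute.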

I think the “laziness & impulsivity of sociopaths” can be explained away as a consequence of the specific way that sociopathy happens in human brains, via chronically low physiological arousal (which also leads to boredom and thrill-seeking). I don’t think we can draw larger lessons from that.

I also don’t see much connection between “power” and behaviorist reward functions. For example, eating yummy food is (more-or-less) a behaviorist component of the overall human reward function. And its consequences are extraordinary. Consider going to a restaurant, and enjoying it, and thus going back again a month later. It sounds unimpressive, but really it’s remarkable. After a single exposure (compare that to the data inefficiency of modern RL agents!), the person is making an extraordinarily complicated (by modern AI standards) plan to get that same rewarding experience, and the plan will almost definitely work on the first try. The plan is hierarchical, involving learned motor control (walking to the bus), world-knowledge (it’s a holiday so the buses run on the weekend schedule), dynamic adjustments on the fly (there’s construction, so you take a different walking route to the bus stop), and so on, which together is way beyond anything AI can do today.

I do think there’s a connection between “power” and consequentialist desires. E.g. the non-consequentialist “pride in my virtues” does not immediately lead to anything as impressive as the above consequentialist desire to go to that restaurant. But I don’t see much connection between behaviorist rewards and consequentialist desires: if we draw a 2×2 grid, I can think of examples in all four quadrants.

Right. What you said in your comment seems pretty general—any thoughts on what in particular leads to Approval Reward being a good thing for the brain to optimize? Spitballing, maybe it’s because human life is a long iterated game so reputation ends up being the dominant factor in most situations and this might not be easily learned by a behaviorist reward function?

You mean, if I’m a guy in Pleistocene Africa, then why is it instrumentally useful for other people to have positive feelings about me? Yeah, basically what you said: I’m regularly interacting with these people, and if they have positive feelings about me, they’ll generally want me to be around and to stick around, and they’ll also tend to buy into my decisions and plans, etc.

Also, Approval Reward leads to norm-following, which is also probably adaptive for me, because many of those social norms probably exist for good and non-obvious reasons, cf. Henrich.