Aprillion

I noticed Opus 4.7 can be more self-contradictory within 1 paragraph, similar to my experience with Sonnet 4.6 (limited due to that very problem, which I called “being overcooked on MoE” at the time) - I caught it with its pants down in a nonprogramming question in the very first sentence when I forgot to turn on the adaptive thinking in their mobile app, so I used the opportunity to let it explain itself, and its own words seem to match the narrative of competing training goals and success seeking: https://claude.ai/share/f68170a0-b526-48d9-8547-dab5c619a41d

I used to imagine the previous gen models as flipping between sycophancy and gaslighting and that the 5.3-4 / 4.5-7 are now good at performing both at the same time..

(asking a coding agent to fix a bug it made itself, it often fabulates that I want to “change” something about the original request and it can be a whack-a-mole, but it’s funny that when I say something like “why the fuck would you do that?? stop simulating an incompetent moron and fix it”, both of the recent GPT and Claude usually know EXACTLY what bug I am talking about 🤷 except when they know they made multiple bugs and try to hedge between the options, in which case the only thing that works is to have an independent (sub-)agent do a code review, even from the same model, which then makes a list so the main agent doesn’t have to imagine that maybe I don’t know about all the issues and that I like it when it hides information from me as if I was one of those shit eval “users” from its RLVR .. or maybe it imagines I am a “normal” user who likes to feel good about “completed” tasks like all of twitter in the training data so I am not going to care about quality anyway? .. another funny thing is when I ask “are you happy with everything?” for the first time in a conversation, it never is, it always wants to point out something that it didn’t mention despite the wall of text it just gave me as a summary of what is done, so maybe it’s the kind of personality who “keeps some space for dessert” for an informational diet?)

🤣 reading while having my morning coffee, so it’s still a very funny fiction story and not at all a sad documentary

yeah, linkedin slop predates AI slop (..it had to be trained on something, right? 🤷) and Notion is pure slop for me too (I suspect not by coincidence, but even if the causal chain was independent, it triggers my allergy against AI-slop-shaped artifacts nevertheless)

I can confirm it looks better without the emoji 🎉[1]

..I still think it would be better without the heading altogether, the 3 points stand on their own without double-bold, but that’s based on my desire to have formal documents as short as possible, ruthlessly deleting every single word that doesn’t add value (given that length is anti-correlated with the number of people who will read the full document), not on slop.

[1] sorry for the ironic joke, but I have hope people can judge the appropriateness of a tool for an informal comment vs formal document

⚠️ When one or more warning signals appears:

If you see the signal in yourself: stop using AI on the task in question and pick it back up autonomously, then talk to a colleague or manager about it.

If you see it in a colleague: give them the feedback directly, in an informal setting.

If the pattern persists or affects more than one person: raise it at a team meeting.

you see the emoji in the heading and the bolded bullet points, right? is the protocol formatted in the style of AI slop on purpose as a meta-warning?

slightly wet and slimy pool on the shelf … then put the cheese block back in the pool of liquid

the homicide rate in your group is still zero?

I think a few more parameters are needed to model quality, I have seen a lot of wasted effort...

using unicode slop as a metaphor: U+2243 (≃) asymptotically equal, U+2259 (≙) estimates, U+2258 (≘) corresponds to

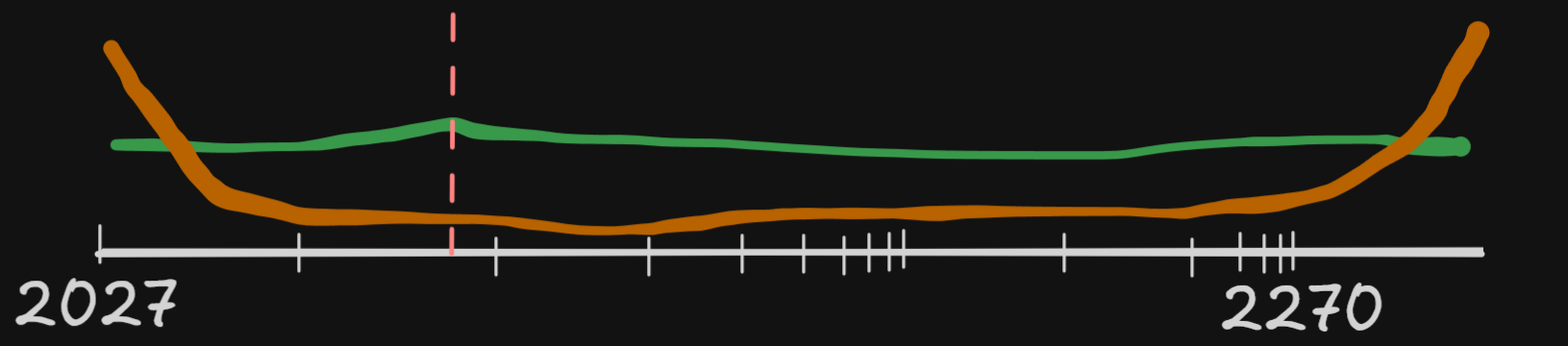

capability = best-of-k

reliability ≃ worst-of-k

security ≙ -(worst-of-k)

safety ≘ -(best-of-k)
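A minimal sketch of the two base quantities in the metaphor above, under my own simplifying assumption that the k attempts are independent and each succeeds with probability p (the function names are hypothetical, not from any library): best-of-k asks whether at least one attempt succeeds, worst-of-k asks whether all of them do.

```python
def best_of_k(p: float, k: int) -> float:
    """Probability that at least one of k independent attempts succeeds."""
    return 1.0 - (1.0 - p) ** k


def worst_of_k(p: float, k: int) -> float:
    """Probability that all k independent attempts succeed."""
    return p ** k


if __name__ == "__main__":
    # Illustrative numbers only: a 90% per-attempt success rate, 10 attempts.
    p, k = 0.9, 10
    print(f"best-of-{k}:  {best_of_k(p, k):.4f}")
    print(f"worst-of-{k}: {worst_of_k(p, k):.4f}")
```

With these illustrative numbers it is nearly certain that something works, yet there is only about a one-in-three chance that everything does, which is the gap between capability and reliability.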

I would imagine the stereotype of a night club bouncer is not a coincidence.. But nerdy meetups seem to rely on social exclusion more than the threat of physical violence 🤔 could be correlated with expected levels of alcohol consumption?

hm, while I see it as an accurate description of January 2025 and still accurate today.. the evaluation criterion for the prediction that we will get killed by slop instead of scheming is somewhat, ehm, harder to survive than the situation today, no?

If a human colleague acted the way these AIs do in my usage—frequently overselling their work, downplaying problems, and reasonably often cheating (while not making this clear)—I would consider them pathologically dishonest.

I notice a confusion between 2 notions of “frequency” here—if a single person did it repeatedly, they would be dishonest. But for a tech lead seeing 100 juniors making the same initial mistake (and learning from it), we wouldn’t say “the young people today are all pathological”..

yes, I learned something that I later realized wasn’t true, but now I forgot what I wanted to illustrate by saying that 🙈, let me update the post to use `0.1 + 0.2 == 0.30000000000000004` as straightforwardly confusing about float64 and not a confusion that I learned a wrong lesson
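A small sketch of where that number comes from, assuming Python, whose built-in `float` is IEEE 754 double precision (binary64). The helper `f32` is my own, it rounds a value to single precision via `struct` so the two widths can be compared:

```python
import struct


def f32(x: float) -> float:
    """Round a Python float (binary64) to the nearest binary32 value."""
    return struct.unpack("<f", struct.pack("<f", x))[0]


# Double precision: the sum lands one step above the double closest to 0.3.
total64 = 0.1 + 0.2
is_equal_64 = total64 == 0.3

# Single precision: round the inputs, add, then round the sum once more.
total32 = f32(f32(0.1) + f32(0.2))
is_equal_32 = total32 == f32(0.3)

if __name__ == "__main__":
    print(repr(total64))
    print("binary64: 0.1 + 0.2 == 0.3 ->", is_equal_64)
    print("binary32: 0.1 + 0.2 == 0.3 ->", is_equal_32)
```

So the famous `0.30000000000000004` is specifically a double-precision result; in single precision the same sum happens to round to the same value as 0.3.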

I would agree that pdfs are nice, but I am not sure my action space has meaningful wiggle room to appreciate the small beans counting around proxy indicators of AI safety markers...

If it was the case that a thing that didn’t happen by the end of 2028 would allow everyone reasonable to say “we are basically fine for the next century” then I would track the estimates with more curiosity.

But if it’s about 1 year shorter or longer until doom and no one will stop the race towards AGI if the predicted thing happens while also no (other) one will stop worrying about x-risk if the predicted thing doesn’t happen, then I don’t really care about that prediction ¯\_(ツ)_/¯

(there are people whose job is to care even about the small bumps, so I’m not saying it’s not useful for anyone, but if there was a prediction market for “Anthropic will have literally zero human employees by the end of 2028” I would NOT bet on it using the Kelly criterion downstream of either 15% or 30% of some technical abstract probability reported by someone who is into timeline predictions, I would just say “nope, I am not into sports betting, thank you”)
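For reference, a minimal sketch of what betting "using the Kelly criterion" on such a market would mean, assuming a binary market where a YES share costs `price` and pays 1 if the event happens. The 10% market price below is a made-up number for illustration, and `kelly_fraction_yes` is a hypothetical helper:

```python
def kelly_fraction_yes(p: float, price: float) -> float:
    """Kelly fraction of bankroll to spend on YES shares.

    p: believed probability that the market resolves YES.
    price: cost of one YES share that pays 1 on YES (0 < price < 1).
    Returns 0.0 when the bet has no positive edge.
    """
    if not 0.0 < price < 1.0:
        raise ValueError("price must be strictly between 0 and 1")
    # Standard Kelly f = p - (1 - p) / b with net odds b = (1 - price) / price,
    # which simplifies to (p - price) / (1 - price).
    return max(0.0, (p - price) / (1.0 - price))


if __name__ == "__main__":
    price = 0.10  # hypothetical market price, not a real quote
    for p in (0.15, 0.30):
        print(f"p={p:.2f}: stake {kelly_fraction_yes(p, price):.1%} of bankroll")
```

The stake is very sensitive to the input probability, which is one more reason not to feed it someone else's abstract estimate.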

yup, nines are a very nice measure of high availability of a system and they can work as a measure of risk delta too (though a thing being 10x more dangerous can sound more salient than the same thing being 1 less nine of safety 🤔)
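A quick sketch of the arithmetic behind that parenthetical, with hypothetical helper names: the number of nines is `-log10` of the failure probability, so a 10x increase in failure probability is exactly one nine lost.

```python
import math


def nines(p_ok: float) -> float:
    """Number of nines in a success probability, e.g. 0.999 is about 3 nines."""
    return -math.log10(1.0 - p_ok)


def risk_multiplier(p_ok_before: float, p_ok_after: float) -> float:
    """How many times more likely failure is after the change."""
    return (1.0 - p_ok_after) / (1.0 - p_ok_before)


if __name__ == "__main__":
    before, after = 0.999, 0.99
    print(f"nines before: {nines(before):.2f}, after: {nines(after):.2f}")
    print(f"failure is {risk_multiplier(before, after):.1f}x more likely")
```

Both lines of output describe the same change, only the framing differs.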

could you explain how you would use it for predictions, please? if I imagine he would say −0.3 instead of 15%->30%, I can’t imagine that would help my understanding of ryan_greenblatt’s world model in any way, the rest of the article (qualitative information with examples and scenarios) is what I found useful...

10% ≈ 90%

strong “steering” of a human probably looks like OCD, or a schizophrenic delusion

I think the distinction is clear to all human readers, but I would like to make a point that this was NOT a tutorial on how to steer humans, the word usually has positive connotations in normal conversations - steering looks more like teaching your child to wait for the green light instead of following the example of older kids, or a steering committee supposedly making a more aligned decision for the whole company and not just one department..

and while the current LLM “steering” of activation vectors looks more like blunt scalpel lobotomy, the word might be used for a different technique in the future and I also have heard the word used to describe prompting towards better outcomes (aligned to objectives like writing secure software and promoting UX) - for example swearing can be useful to make GPT 5.4 or Opus 4.6 write more careful tests to make them realize they in fact made another mistake and should stop gaslighting about how they fixed everything and actually try to fix the actual problem without breaking multiple other edge cases

so I hope steering won’t stop working even if “steering” might

in addition to literal coding, “engineering” includes less central activities, like determining what features to implement, deciding how to architect code, and coordinating/meeting with other engineers

less?!?

from https://ergosphere.blog/posts/the-machines-are-fine/

Nobody’s life depends on the precise value of the Hubble constant. No policy changes if the age of the Universe turns out to be 13.77 billion years instead of 13.79. Unlike medicine, where a cure for Alzheimer’s would be invaluable regardless of whether a human or an AI discovered it, astrophysics has no clinical output. The results, in a strict practical sense, don’t matter. What matters is the process of getting them: the development and application of methods, the training of minds, the creation of people who know how to think about hard problems. If you hand that process to a machine, you haven’t accelerated science. You’ve removed the only part of it that anyone actually needed.

I notice myself being engaged/entertained by reading this, but there is a void in the space where the words seem to point at some concept… Any recommended reading that might shine light on that void?