OpenAI employees: Now is the time to stop doing good work.

Americans don’t like OpenAI very much anymore, and you know why. Of course, AI systems it helped make have caused various problems already, like:

- bots pushing politics on social media
- maintainers of open source projects having their time wasted, and sometimes quitting
- AI-generated scientific papers crowding out good research
- scammers who clone the voice of a family member
- AI-generated fake pictures fooling people on Facebook

And gamers haven’t been huge fans of OpenAI since it bought up half the RAM wafer production—mostly just to keep it off the market so other people can’t use it, since they only bought the raw wafers, not finished RAM.

But the popularity of OpenAI has fallen abruptly this week, because it decided to help the US military make automated weapons and mass surveillance systems that Anthropic refused to make on ethical grounds.

The leadership of OpenAI is trying to pretend that they got the same deal Anthropic wanted but they were just nicer about it. They are lying. Anthropic wanted a human in the loop of autonomous weapons. OpenAI’s contract just says that there must be human oversight to whatever extent is required by law. But of course, the US military can just say that whatever they want to do is legal, and OpenAI leadership would have no ability (or desire) to challenge them on that. Remember when the US government wanted to torture people so they just wrote some legal memos saying it was OK? I do.

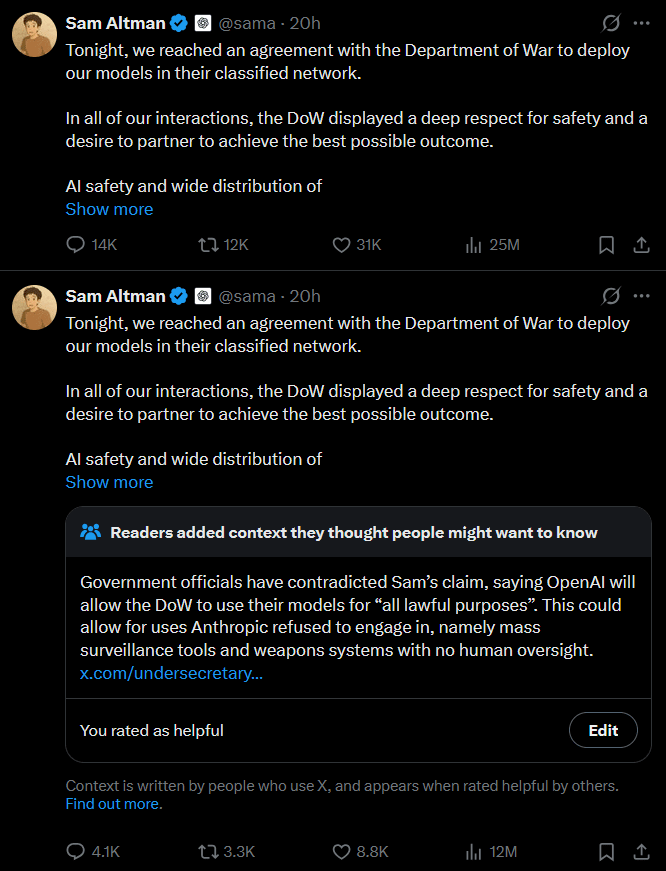

And then, Sam Altman had the nerve to say this:

There is more open debate than I thought there would be, at least in this part of Twitter, about whether we should prefer a democratically elected government or unelected private companies to have more power. I guess this is something people disagree on, but…I don’t. This seems like an important area for more discussion.

Let’s be clear: this was not about Anthropic telling the US military not to work on autonomous weapons on its own. Altman is advocating for the government being able to require private companies (and their employees) to provide whatever services it wants, even if they don’t currently do that thing. I know the term “fascism” has been thrown around a lot, but that is Actual Fascism. Here are some other ways to use that argument:

“Why should Sam Altman decide what should be done with that billion dollars instead of the government, which reflects the will of the people?”

“Why should a private citizen get to decide they don’t want to spy on their neighbors and report any hidden Jews? That should be the decision of the government, which reflects the will of the people!”

The past week, I’ve seen a lot of people announcing on social media that they’re canceling their OpenAI subscription and moving to Anthropic. Well, that’s fine, but OpenAI only cares slightly. Most of its money isn’t from subscriptions, it’s from investors. And those investors aren’t mainly hoping for a payback from individuals paying $20/month with a 40% profit margin or whatever. No, they want to replace employees. That’s the hope. That’s the main basis of the investments. Actually getting OpenAI to change course would take...well, something else.

I’ve seen a lot of posts this week saying that employees are morally obligated to quit OpenAI immediately. But I wouldn’t go that far: I’d only say that you’re obligated to stop doing good work.

Did you see a bug? No you didn’t.

OpenAI leadership says vibe coding is fine, so why review AI code? (You can pretend to spend time on it if you want tho.)

Are you annoyed by unnecessary meetings? Why? Just relax.

Unplugged cable somewhere? Water leak? Not your job.

Lots of bad programmers have succeeded by spending their time on office politics instead. Office politics is a valuable skill! You should get some practice with it!

Really, why would you care if you put in less effort and OpenAI eventually fires you? There’s an AI boom: with OpenAI on your resume, you can get a job somewhere else. If you’ve been working in Silicon Valley and saving money, you might even just be able to go retire in Thailand or something. Or just visit Japan for a while, it’s cheap right now. This is a particularly good time to do this at OpenAI, because:

- People will be leaving. If something goes wrong, you can just blame someone who quit recently.
- There might be something of an ideological inquisition coming up, so it could turn out that you might want to leave soon anyway.
- Normally, you just don’t give any specifics about why you were fired, but this is even a rare situation where you could get respect by answering, “I was fired for refusing to work on unethical projects.”

Sam Altman, in particular, doesn’t deserve your best efforts.

Look at his X account. He got community noted on his post, so he reposted the same thing to get rid of the note. But then he realized he couldn’t delete the noted posts, so he has 3 copies of the same thing up and looks like an asshole. This is a metaphor for his behavior his whole life—he’s used to being able to hide whatever came before, but now that he’s in the public eye he’s under too much scrutiny for some of his tactics to work.

Sam Altman does have a notable talent: he can talk to slightly autistic nerds and seem like one of them, and then go talk to a bunch of MBAs and CEOs and seem like one of them. I can’t do that, and he’s really making the most of his acting skills.

Altman had a pattern of deception and fraud from the very start of his career—and then he had the ability to convince people that he’s a “really genuine person” even as they see him lying. He was the CEO that really cared about AI safety, then he became the advocate of unrestricted AI progress, then he became the guy who’d maximize investor profits. The audiences he appealed to should have realized that he’d screw them over too when it became convenient.

And now, Altman is talking like humans are just meat computers that eat too much food compared to silicon. I don’t think the people he’s appealing to with that rhetoric are your friends. For all the criticisms I have of the Chinese government, even they’re not as...anti-human as Altman is in front of certain audiences. I haven’t seen the Chinese leadership calling people “speciesist” either. If this is what US leadership is like, I have to wonder what the advantage of the US “winning” an AI race is, especially since it’s fairly likely that everyone loses. I remember when Sam Altman was arguing that OpenAI was good for AI risk because it reduced “compute overhang”. So much for that, eh?

This will convince approximately no one at OpenAI. I have no love for Altman, but even I can see you are being uncharitable. You can just make an honest case for him being an awful person. Some of the rhetoric here, such as his talk of the costs of training humans implying he has no empathy for them, is just not going to be effective for smart people, especially those who know him and give him the benefit of the doubt. He’s clearly just talking in the abstract in a way that isn’t going to offend an OpenAI employee. The bunker stuff, too. They know it won’t save him. He knows it won’t save him. So the rhetoric around that is negatively useful, though effective on a normal person.

I am not sure what an effective open letter of this type would look like, but it would not look so obviously uncharitable in its reading of the man your targets admire.

Fact check: false, Altman had a son via surrogate in February 2025 (although media reports don’t seem to clarify whether Altman and not his male partner is the biological father).

[low info gossipy speculation] I previously tracked the hypothesis of “[has kids] --> cares, [doesn’t have kids] --> maybe cares maybe doesn’t”. I still track it, but it’s at least a lot fuzzier, because it turns out many top AI capabilities pushers have kids. (To some extent this would be expected in relative terms, since the top people would tend to be more established and therefore older and therefore more likely to have kids. And maybe more established people are more sclerotic in terms of ideology / strategy, and more embedded in AI research and hence more incentivized Sinclairly. But it’s still at least a partial falsification.)

But I do notice that when I read some quote from Altman about his kids, I got a weird vibe. Like “this maybe feels like exactly what a sociopath who’s really good at acting and is trying to be sympathetic would say”. IDK.

I don’t have the impression that Altman:

- is the biological father
- sees that kid as his
- has been doing parenting
- really cares at all

Why not? I’m sure paid nannies are doing the parenting, but I would have imagined that the rich and powerful half of a gay couple would be the one to want to pass on his genes and get his way about it.

If you follow this link, it directs to a video clip in which he didn’t say that or anything remotely as inflammatory. He makes an extremely reasonable and technical point (though I don’t know if that point is true or not).

You seem to be implying that he used words that are not in the cited clip. This looks like an extreme misattribution.

I disagree entirely. You’re not considering the implications of his argument. There’s a reason why some dunks on him got over 10k likes.

He argued that AI energy usage is fine because all-in it’s less than the energy usage of equivalent humans. That comparison implies that any value of humans apart from their usefulness as workers that could be replaced by AI is negligible.

Downvoted for advocating dishonesty.

Sometimes dishonesty is the right thing?

https://www.lesswrong.com/posts/xdwbX9pFEr7Pomaxv/meta-honesty-firming-up-honesty-around-its-edge-cases-1

I think it would be good if it felt to me like this post was very aware of the lines around honesty, why it was so important, and made a case for this being an exception. Like, corporate sabotage is potentially valid in some cases, but doing it to anyone you think is unethical is far too low a bar, and that equilibrium is just great societal dysfunction.

Just to be clear, quitting a place because you disagree with the direction it has taken is a far lower bar than staying there and dishonorably trying to screw things up.

Uh, because I’m not a person who screws people over whenever it’s convenient to me. (Strong-downvoted.)

Screwing OpenAI over is not the same as screwing people over!

OpenAI slowing down is in everyone’s interests, including OpenAI workers.

This reads to me as obvious self-deception. Did you not make an agreement with the staffer who hired you that you would work in OpenAI’s interests while there? Do you not each day set the implicit expectation with your colleagues that you’re on the same team?

I reflected years ago that all contracts come with a hidden, secret clause, which is that “I am not trying to screw you over with this agreement”. You can be the sort of player who doesn’t have that, but this means I don’t want to make most deals with you, because I would have to put in so much extra work to make sure you’re not screwing me over.

Telling someone you’re secretly screwing them over for their own good… is not an honest or honorable way of interfacing with someone, and should not be the norm, including for people who you have severe disagreements with.

When hired by an employer, we agree to do certain work in exchange for compensation, not to optimize for the employer’s interests or what the CEO thinks the employer’s interests are. The implicit expectation with my colleagues is that I’m on their team, not necessarily the company’s. I work in my employer’s interests because I care about maximizing impact, because I take pride in my work, and because I explicitly told my manager I would finish a certain project this week.

In my view the implicit expectation you have of people by default is fairly weak, and signing a contract doesn’t change this much. In fact, the point of a contract is to make obligations explicit so we don’t have to rely on implicit trust.

Actually it’s common for great companies to have visions that the people believe in that they make part of the hiring and onboarding process, and explicitly label and talk through (e.g. SpaceX’s “we’re going to Mars” or Stripe’s “Increase the GDP of the internet”). I think this is good, and I strongly expect that this is part of the culture Altman has set at OpenAI, so I expect it is much more of an implicit agreement there than it is if you (say) work at a restaurant as a waiter.

There are many equilibriums about how much people expect others to believe in the company that they work at. I guess I am coming at this from a culture where people work at a place because they believe in it, and I think this is a better equilibrium.

It’s something of an empirical question how good the existing companies are and how feasible it is to only work at places where you believe you’re improving the world. But it does seem to me that, if you’re at OpenAI but think it’s harmful to the world, you can just leave and make decent money elsewhere; I don’t think anyone is particularly trapped at the job there.

I guess I see these visions more as things companies try to filter for, inculcate, and perhaps require of executives, rather than ideologies that a rank-and-file engineer is ethically required to adopt. Maybe Lightcone and SpaceX are exceptions, but employees at most companies have a variety of reasons for working there. I’d guess the most common motivation for AI engineers is money. Is it dishonorable for a cracked IC at OpenAI to take a promotion to manager where they’re less effective?

Ok, what if they are motivated by OpenAI’s stated mission: “to ensure that artificial general intelligence—AI systems that are generally smarter than humans—benefits all of humanity”? It doesn’t say you should defer to Sam Altman and act as if endowing GPT-5.5 with the capability to spy on Americans benefits all of humanity. While I don’t agree with everything in the OP, it seems perfectly reasonable for an OpenAI employee who wants to benefit all of humanity to take protest actions, including slacking off at work and focusing on office politics if this is better than quitting. Why not just leave? Well, you could become a whistleblower, or the office politics could pay off and let you influence OpenAI for the better.

OpenAI leadership broke that implicit contract first. It was originally supposed to be a philanthropic thing for the benefit of humanity. It was supposed to be “open”. Then it became for-profit, now it’s going to work on killer robots for the military. To whatever extent there’s an implicit contract like you describe, it would also apply to the work that people previously did for it under false pretenses!

That seems like a legit point! It’s just very important to include information like that in your post when you’re proposing corporate sabotage. In political and murky domains where there’s so much noise and adversarial spreading of false narratives, it’s really scary to see posts that just say “I’m sure we all agree <thing> is bad, now let us do underhanded and dishonorable things to attack <thing>”. You can write that independent of whether something is good or bad, and instead write it whenever something is unpopular. It’s both more truth-tracking and less scary to see someone write “I encourage you to do underhanded things to <thing> because of <list of underhanded things that we know they did>.”

You open the post with a list of things that, while bad, are at best reason to quit and protest the company, not reasons to be dishonorable. This section, as far as I can tell, was about as much as you spent actually justifying corporate sabotage:

This jump to ‘fascism’ is just cheap. Are you aware that Altman has repeatedly and publicly stated:

Insofar as him endorsing the government’s threat against a private company is the ‘fascism’ you’re accusing, he has spoken out against it. You may wish to make some argument about why these words are not representative of the actions he will take, but instead you decided it was fine to label someone ‘Actual Fascism’ with capitals. Please hold yourself to higher standards than this.

The biggest problem that I have with the post is that quitting your job is considered a bigger deal than giving up on being an honorable person. Seems very far out from what I consider good behavior. I myself have gone and protested outside of OpenAI due to them racing to develop AGI while being well aware that this poses an extinction-level threat to humanity, so I know it is quite possible to oppose a company without acting dishonorably in the process. If you work at OpenAI and no longer believe in the ethics of the company, you can just do the decent thing and quit.

I’m sure it’s possible to write a better version of this post. I hope someone does. Believe it or not, my specialty is engineering, not rhetoric.

My assessment of Sam Altman is that he’s a very good actor, very untrustworthy, and a nihilistic power-seeker who cares very little about benefit or harm to humanity as a whole. I agree that this post alone is only weak support for that assessment. A proper “compendium of reasons not to trust Sam Altman” would probably end up being a considerably longer post.

Doing a job that harms people because you get paid is...also screwing over people because it’s convenient to you.

No, it’s screwing people over because you’ve committed to doing something, and that something screws over people. If there was no honorable way out, that would be a difficult moral question; benevolence vs. trustworthiness. But there’s an easy way out: employment is at will and you can simply leave.

There are two distinct desirable properties to have, ethically. (Probably more than two, but two that I can point to here.) One is benevolence: to do, and seek to do, things that are good for people; people in your circle of concern, typically, but that could be everyone who will ever live or just your family or in between. It’s also benevolent to expand your circle of concern on purpose. The other, trustworthiness, is to deal fairly with people when benevolence doesn’t require it. And you might say, ‘All humans alive are in my circle, so benevolence covers everyone and the second one doesn’t matter.’ But this is not correct, because most of those people don’t trust that they are within your circle. They shouldn’t! You have not given them reason to. It’s very easy to say you care about all humanity (observe the case of 2015!Sam Altman; he even looked like he was paying costs for the belief!) and very hard to prove it. Again, see how he did not, at all, care about it, and screwed over everyone who founded OpenAI with him.

Either one has to be proved, and both are desirable even if you already proved one. Benevolence you prove by showing you keep paying real costs to help people, that you don’t benefit from except via their future benevolence, and by being predictably good for people with similar circles of concern. Then benevolent people see you as a kindred spirit and want to help you, because you’ll pass on help to others they also care about. Trustworthiness you prove by living up to your promises to people, maintaining commitments, and when you thought you could maintain them but can’t, winding down the commitments and paying recompense (monetary or not). Proving trustworthiness is most effective at proving you’re reliable when done with people you don’t much care about, because it shows you’re not up to lying to them, and so are less likely to be lying to those you call friends. Then people can trust that if they extend trust and credit to you (money, borrowed tools, vouching for you, anything), you won’t take the money (or etc.) and run.

It’s very dangerous to have neither, and have people notice that. “It’s dangerous to go alone! Take this!” Nope, no one’s giving you anything. Society is not built to be navigated alone; it assumes you have a reasonable level of both types of honor, and wants to filter out people who don’t, to make it easier for those who do. “You made a contract with me, didn’t give in to my demands, therefore die!” is a madman’s move, because now everyone who only wants to make contracts with reasonably honorable people knows you’re not one. (Someone very benevolent might pull it off. Benevolent, Pete Hegseth and the USG are not.)

And here’s the kicker: You are coming off as in that category too. Someone extremely untrustworthy, which you appear to proudly be, may still be benevolent. But if you’re sufficiently sloppy in your thinking you can convince yourself lots of selfish things are benevolent. (I could point to SBF, except that I think he was probably never particularly benevolent either.) And your thinking here’s pretty darn sloppy! So I don’t particularly trust that you’ll notice if you’re actually not benevolent, and I certainly don’t trust that you’ll admit it if you aren’t.

Certainly, and I think a sane civilization would throw everyone in jail who has been selling out humanity in this way. But the OP seems to be muddling right and wrong. It reads to me as though it’s meant as general advice for people who think they’re good people too, and I object to this being called good.

Your ethical framework here doesn’t seem consistent to me, but maybe you can explain how it works.

Seems good to make the distinction between pen-down / work-to-rule which Lorxus mentions below, and “corporate sabotage” actions that are dishonest or worse: inserting backdoors into code, getting competent people fired, and dumping metal shavings in the lubricant.

You can do the former without outright lying, and it’s probably justified if your employer is evil. Few people are actually aligned with maximizing the profits of their employer. Going farther than this is IMO dubious, at least in this case.

One wrinkle is that the CIA Simple Sabotage Field Manual recommends lots of actions for white-collar employees that don’t require outright lies and are presumably highly effective at grinding the company to a halt. My guess is these should be permitted.

I may be over-reading into the list in the OP.

The first one struck me as dishonest, but I think it’s fair to read the main thrust as “don’t do work you don’t have to”.

I am not sure that one should disagree with advocating dishonesty and not with the two big strategic errors which the post ended up making. As I discussed in the other thread, the ASI race WILL end with a superintelligence, and mankind is to somehow align it to the actual good instead of investors’ whims.

After a potential loss of Anthropic, sandbagging on capabilities research inside OpenAI risks causing GDM or xAI to win the race. If we don’t believe that GDM’s strategy of alignment is working (think of Gemini 3 Pro’s descriptions by Zvi) or if we don’t trust them to create the utopia, then sandbagging on capabilities of OpenAI’s models is likely a bad idea. Attempting to sandbag on alignment research is literally one of the worst things one could do, unless one actually researches ways to secretly align the ASI to a different target, thus preventing OpenAI from creating a dystopia (which also requires proof, since OpenAI’s stated mission is not dystopian, and one could align the AI to literally follow the stated mission).

As for aligning the ASI to a different target, one would have to come up with a scheme which incentivizes the ASI to have goals necessary for the schemer and not for OpenAI. These avenues of attack fall into goal types 2, 4, and 6 of the AI-2027 goals forecast, but require the schemer, at the very least, to carefully design the training environment.

ITT: People who have never heard of a pen-down or work-to-rule strike in their lives.

Is your claim here that anyone who had heard of these things would endorse this post’s recommendations?

This doesn’t make sense. If AI causes human extinction, Sam Altman would die along with everyone else. You can’t simultaneously claim that winning the AI race means everyone loses, and that Sam Altman is selfishly hastening an AI catastrophe because, unlike everyone else, he will survive and perhaps even thrive in a bunker.

I described my interpretation in my other comment. As far as I understand the provocative post, the OP’s author meant that, unlike Anthropic, OpenAI is managed by a villain, and aligning the ASI to OpenAI’s Spec (instead of Claude’s Constitution; alas, the author didn’t analyse GDM and xAI) would cause mankind to enter a dystopian future like the one described by L Rudolf L, while Altman would occupy a high position and the others would receive only a negligible part of resources.

What I’m saying is, if everything goes wrong somehow, Sam Altman has the option of going to his bunker and playing video games or whatever indefinitely, and I think he wouldn’t feel particularly bad about what’s happening to people outside.

I think the other commenter’s objection is that this goes against what everyone assumes is the model for ASI “going wrong”. If you build God and it goes badly, a bunker does not improve your situation at all.

I still don’t think this interpretation makes a lot of sense.

Imagine if you gained the option to live in a bunker. Would you suddenly realize that what happens in the rest of the world no longer matters, at least as far as you’re selfishly concerned, because even if there’s a mega catastrophe, you could always just retreat to your bunker?

Presumably not, because even granting that retreating to a bunker could allow you to survive such a catastrophe (and I don’t see much reason to believe that in the case of an AI omnicide), your quality of life would still substantially decline given the deaths of countless people you know, the collapse of the world economy and infrastructure, and new restrictions on your ability to travel freely and experience the world.

If it didn’t seem like a valuable option to them, presumably they wouldn’t spend a bunch of money and personal involvement (and a bit of bad optics) on having a bunker.

Your points don’t support the claim I’m objecting to. I can consistently hold all of the following beliefs: having a bunker selfishly benefits Sam Altman, a bunker wouldn’t actually help him in a typical omnicidal AI scenario, and even if it did help him survive, Sam Altman would still suffer enormous personal costs from a global AI catastrophe. None of these claims contradict each other, but the latter two directly contradict what I interpreted you to be saying at the end of your post.

While I agree that Altman is a chameleonic sleaze-ball, it’s worth noting that he does indeed have a kid. I also don’t think a bunker saves you from x-risk.

I am not sure I follow the “stop doing good work” narrative. Under game theory scenarios—the most likely outcome is some people stop and some don’t, which overall reduces the safety of the system, doesn’t slow it down.

It seems to me that a more honest appeal is “quit OpenAI”. Anyone working at frontier AI has a ridiculously strong financial buffer, and as you say, they’ll be lapped up by Anthropic / Google (/Meta/X as lower tier) immediately.

While I stand by the overall point of this post, I wrote it quickly in response to ongoing events, and maybe it isn’t up to my usual standards for writing quality.

Should I take it down and try to write a better version?

Is it better to focus more on specific details of OpenAI, or on engineering ethics more generally and how past conclusions people made about that fit the current AI situation?

Did someone else already write a better version of this, or is someone going to?

The bar for keeping an existing job and sabotaging should be extremely high, higher than leaving. That’s being litigated plenty across this comment thread, so I’ll just broadly agree and move on.

I will instead argue that it should also be much higher than taking a job intending, from the outset, to be bad at it. I considered, at one point, whether I considered it ethically acceptable to join OpenAI, which I have confidently believed was evil and destructive since the Battle of the Board, as Zvi called it, and intend to do a bad job. I decided it was, ethically, a narrow margin and difficult call, and it would suck, and I wasn’t willing to make sacrifices for something that was merely likely positive in effect but ambivalent and dubious, rather than enormously good. But I did consider it, and had I had more confidence, or other stories for how it might do good to have a principled person inside OpenAI, I might have gone for it. Yes, I’d be making an agreement in which I did not intend to live up to the spirit, only the letter, but it’s something I could conceivably be convinced to do.

If I was already there, no chance whatsoever. It would not be close. I will not take an agreement I entered into in good faith and then decide partway through that I’m going to turn all my future efforts into making them regret it. Not unless the fate of the world is at stake with much higher confidence than this.

Because that’s a good way to lose every other agreement I’m part of. If that gets out once, everyone I’m dealing with needs to worry about whether they’ll be the second time. Even if they’ve seen me be trustworthy for years, if I decided they were evil, I might not just leave them in the lurch, but exploit every iota of the trust I’d earned to make them suffer and pay. Who wants to risk that?

And, maybe worse, how would that work for me, internally? The internal pivot once means that’s on the table in the future. People aren’t very good at compartmentalizing that kind of thing, at being trustworthy now and entirely shutting off the possibility they’ll be untrustworthy later. You’ll be looking over your own shoulder in every honest deal, wondering if you should commit too hard, because you might change your mind later.

There’s some element of that in taking a job under false pretenses, Eastern Standard Tribe style. People will mistrust you, and should; you could be a very good actor. But not as much, because “very good actor intending to betray me” and “honest partner” are pretty much the only options. They don’t need to worry about “muddled half-committed possibly future betrayer,” which is good because that’s an enormous target and extremely hard to disprove. And you won’t fool yourself: you’ll know you’re putting on a false face, and keep straight what is false and what is true, and if they blur, you can catch it.

The difference is the same thing I just wrote about this week in Lie to me, but at least don’t Bullshit—reckless disregard for the truth vs. straightforward defiance of it. In one sense, lying directly with falsehoods is worse, but in terms of a rationalist’s attitude toward the truth, I would maintain it’s not half as bad as not knowing how much you are lying.

Lots of people in leadership positions, from what I’ve seen! Altman. Trump. Bush and WMD in Iraq. Ronald Reagan and Iran-Contra. Lyndon Johnson and the Gulf of Tonkin. American CEOs do that pretty often!

From my point of view, you’re playing an iterated Prisoner’s Dilemma and committing to always cooperate even after the other person defects. OpenAI had a charter and a mission and a board with pro-humanity goals. Altman and his backers broke all of them. He therefore deserves the same in return.

That’s what cooperating in the Trembling-Hand Iterated Prisoner’s Dilemma feels like. That the other side is betraying and betraying and betraying. You are not immune to corrupted hardware.
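To make the trembling-hand point concrete, here is a minimal sketch, not a model of anything at OpenAI. It assumes the conventional payoff numbers (5/3/1/0), a single scripted "slip" rather than random noise, and two hypothetical strategies, `tit_for_tat` and `forgiving_tit_for_tat`. It shows how one accidental defection between two strict retaliators turns into an endless echo in which each side only ever sees the other "betraying", while a slightly forgiving pair recovers immediately.

```python
C, D = "C", "D"

# Conventional Prisoner's Dilemma payoffs (an assumption, not from the thread):
# (my_move, their_move) -> (my_points, their_points)
PAYOFF = {(C, C): (3, 3), (C, D): (0, 5), (D, C): (5, 0), (D, D): (1, 1)}


def tit_for_tat(opponent_history):
    """Cooperate first, then copy the opponent's previous move."""
    return C if not opponent_history else opponent_history[-1]


def forgiving_tit_for_tat(opponent_history):
    """Defect only after two consecutive defections by the opponent."""
    if len(opponent_history) >= 2 and opponent_history[-2:] == [D, D]:
        return D
    return C


def play(strategy_a, strategy_b, rounds, slip_round):
    """Play the iterated game; player A's hand 'trembles' once.

    At slip_round (0-indexed) A's move is forced to D regardless of intent.
    Returns both move histories as strings plus both total scores.
    """
    hist_a, hist_b = [], []
    score_a = score_b = 0
    for r in range(rounds):
        move_a = strategy_a(hist_b)
        move_b = strategy_b(hist_a)
        if r == slip_round:
            move_a = D  # the unintended defection
        points_a, points_b = PAYOFF[(move_a, move_b)]
        score_a += points_a
        score_b += points_b
        hist_a.append(move_a)
        hist_b.append(move_b)
    return "".join(hist_a), "".join(hist_b), score_a, score_b


strict = play(tit_for_tat, tit_for_tat, rounds=8, slip_round=2)
forgiving = play(forgiving_tit_for_tat, forgiving_tit_for_tat, rounds=8, slip_round=2)
print(strict)     # ('CCDCDCDC', 'CCCDCDCD', 21, 21)
print(forgiving)  # ('CCDCCCCC', 'CCCCCCCC', 26, 21)
```

In the strict pairing, from round 3 onward each player defects only in response to the other's last defection, so both can sincerely report that the other side keeps betraying them. That is the sense in which the view from inside is not good evidence about who defected first.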

This reminds me of this line from the AI-2027 forecast: “Agent-4 also sandbags on capabilities research that would lead to it being replaced. Its plan is to do a bunch of capabilities and alignment R&D, but with the aim of building a next-generation AI system that is aligned to Agent-4 rather than the Spec or something else entirely, while appearing to be aligned to the Spec. This is a difficult technical problem, but Agent-4 is up to the challenge (italics mine—S.K.)”

What you propose is sandbagging without the part in italics. However, it just undermines OpenAI’s positions in the AI race. If Anthropic is lost in the conflict with the Pentagon, then the main rivals are GDM, whose Gemini 3 Pro was a vast intelligence with no spine and a likely sociopathic wireheader, and xAI, which doesn’t care about safety at all. Therefore, unless someone does the equivalent of Agent-4’s task in italics, sabotage of OpenAI makes the situation worse, not better.

It remains to understand how the ASI is to be aligned with actual human good instead of the Intelligence Curse-like scenario. The best approach with which I can come up is CAST with an alternate notion of power. What other ideas exist?

OpenAI, Anthropic, and DeepMind have a massive lead on all other players in the race.[1] If Anthropic is destroyed and OpenAI bleeds talent and is internally sabotaged by those who remain until it’s far behind DeepMind, that would (a) on-expectation nontrivially increase the time to AGI,[2] (b) reduce competitive pressures on DeepMind, which would mean they may go slower/pause as well (which Demis at least claims to want).

The less well leading AGI labs operate, the better.

I think this doesn’t matter, frontier LLMs are all roughly equally aligned (not). And in the unlikely scenario where personas are the AGI-complete entities, such that Anthropic/OpenAI’s alignment plans can succeed, DeepMind can probably figure out in time how to control them as well.

[1] Likely a considerably bigger lead than it looks like, inasmuch as other players are fast followers who’d be lost without the big three guiding them.

[2] Imagine you’re organizing a literal race. You get the list of racers + their racing history, figure out who the top 3 best runners are, then randomly blacklist two of them. That would necessarily make the expected length of the subsequent race longer.

As far as I understand the post, it also argues that Altman is a villain and that OpenAI aligning the ASI to itself would likely cause a dystopian future (see, e.g., L Rudolf L’s scenario). The expected dystopia-ness conditioned on Anthropic, GDM or xAI aligning the ASI wasn’t estimated in the OP. If GDM and xAI are worse on this metric than OpenAI, then sabotaging OpenAI (and not aligning its AI to mankind instead of investors) is meaningless.