The Telephone Theorem: Information At A Distance Is Mediated By Deterministic Constraints

You know the game of Telephone? That one where a bunch of people line up, and the first person whispers some message in the second person’s ear, then the second person whispers it to the third, and so on down the line, and then at the end some ridiculous message comes out from all the errors which have compounded along the way.

This post is about modelling the whole world as a game of Telephone.

Information Not Perfectly Conserved Is Completely Lost

Information is not binary; it’s not something we either know or don’t. Information is uncertain, and that uncertainty lies on a continuum: 10% confidence is very different from 50% confidence, which is very different from 99.9% confidence.

In Telephone, somebody whispers a word, and I’m not sure if it’s “dish” or “fish”. I’m uncertain, but not maximally uncertain; I’m still pretty sure it’s one of those two words, and I might even think it’s more likely one than the other. Information is lost, but it’s not completely lost.

But if you’ve played Telephone, you probably noticed that by the time the message reaches the end, information is more-or-less completely lost, unless basically-everyone managed to pass the message basically-perfectly. You might still be able to guess the length of the original message (since the length is passed on reasonably reliably), but the contents tend to be completely scrambled.

Main takeaway: when information is passed through many layers, one after another, any information not nearly-perfectly conserved through nearly-all the “messages” is lost. Unlike the single-message case, this sort of “long-range” information passing is roughly binary.

In fact, we can prove this mathematically. Here’s the rough argument:

At each step, information about the original message (as measured by mutual information) can only decrease (by the Data Processing Inequality).

But mutual information can never go below zero, so…

The mutual information is decreasing and bounded below, which means it eventually approaches some limit (assuming it was finite to start).

Once the mutual information is very close to that limit, it must decrease by very little at each step—i.e. information is almost-perfectly conserved.

This does allow a little bit of wiggle room—non-binary uncertainty can enter the picture early on. But in the long run, any information not nearly-perfectly conserved by the later steps will be lost.

Of course, the limit which the mutual information approaches could still be zero—meaning that all the information is lost. Any information not completely lost must be perfectly conserved in the long run.
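Here is a minimal sketch of that argument on a toy chain. The setup is an assumption chosen purely for illustration: each message is a pair of bits, the first bit is always copied exactly, and the second bit is flipped with probability 0.1 at every step. The names `mutual_information`, `step` and `FLIP` are hypothetical helpers, not anything from a library.

```python
from math import log2

def mutual_information(joint):
    """Mutual information (in bits) of a joint distribution joint[x][y]."""
    px = [sum(row) for row in joint]
    py = [sum(col) for col in zip(*joint)]
    return sum(
        p * log2(p / (px[i] * py[j]))
        for i, row in enumerate(joint)
        for j, p in enumerate(row)
        if p > 0
    )

def step(joint, channel):
    """Pass the second variable of joint[x][y] through channel[y][y_next]."""
    n_next = len(channel[0])
    return [
        [sum(row[y] * channel[y][z] for y in range(len(row))) for z in range(n_next)]
        for row in joint
    ]

# Assumed toy channel: a message is a pair (a, b), encoded as the index 2*a + b.
# Bit a is copied exactly; bit b is flipped with probability FLIP.
FLIP = 0.1
channel = [[0.0] * 4 for _ in range(4)]
for a in (0, 1):
    for b in (0, 1):
        channel[2 * a + b][2 * a + b] = 1 - FLIP
        channel[2 * a + b][2 * a + (1 - b)] = FLIP

# joint[m0][mn] starts as the joint distribution of (M_0, M_0), with M_0 uniform.
joint = [[0.25 if i == j else 0.0 for j in range(4)] for i in range(4)]
info = [mutual_information(joint)]
for _ in range(60):
    joint = step(joint, channel)
    info.append(mutual_information(joint))

for n in (0, 1, 5, 20, 60):
    print(n, round(info[n], 6))
```

In this toy chain, the mutual information starts at two bits, never increases, and levels off near one bit: the noisily-passed bit is forgotten, while the exactly-copied bit survives indefinitely.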

Deterministic Constraints

It turns out that information can only be perfectly conserved when carried by deterministic constraints. For instance, in Telephone, information about the length of the original message will only be perfectly conserved if the length of each message is always (i.e. deterministically) equal to the length of the preceding message. There must be a deterministic constraint between the lengths of adjacent messages, and our perfectly-conserved information must be carried by that constraint.

More formally: suppose our game of Telephone starts with the message $M_0$. Some time later, the message $M_n$ is whispered into my ear, and I turn around to whisper $M_{n+1}$ into the next person’s ear. The only way that $M_{n+1}$ can contain exactly the same information as $M_n$ about $M_0$ is if:

There are some functions $f_n$, $f_{n+1}$ for which $f_n(M_n) = f_{n+1}(M_{n+1})$ with probability 1; that’s the deterministic constraint.

The deterministic constraint carries all the information about $M_0$, i.e. $P[M_0 | M_n] = P[M_0 | f_n(M_n)]$. (Or, equivalently, the mutual information of $M_0$ and $M_n$ equals the mutual information of $M_0$ and $f_n(M_n)$.)

Proof is in the Appendix.

Upshot of this: we can characterize information-at-a-distance in terms of the deterministic constraints in a system. The connection between $M_n$ and $M_{n+1}$ is an information “channel”; only the information encoded in deterministic constraints between $M_n$ and $M_{n+1}$ will be perfectly conserved. Since any information not perfectly conserved is lost, only those deterministic constraints can carry information “at a distance”, i.e. in the large-$n$ limit.

The Thing For Which Telephone Is A Metaphor

Imagine we have a toaster, and we’re trying to measure its temperature from the temperature of the air some distance away. We can think of the temperature of the toaster itself as the “original message” in a game of Telephone. The “message” is passed along by many layers of air molecules in between our toaster and our measurement device.

(Note: unlike the usual game of Telephone, the air molecules could also be “encoding” the information in nontrivial ways, rather than just passing it along with some noise mixed in. For instance, the air molecules may be systematically cooler than the toaster. That’s not noise; it’s a predictable difference which we can adjust for. In other words, we can “decode” the originally-hotter temperature which has been “encoded” as a systematically-cooler temperature by the molecules. On the other hand, there will also be some unpredictable noise from e.g. small air currents.)

More generally, whenever information is passed “over a long distance” (or a long time) in a physical system, there’s many layers of “messages” in between the start and the end. (This has to be the case, because the physics of our universe is local.) So, we can view it as a game of telephone: the only information which is passed over a sufficiently-long “distance” is information perfectly transmitted by deterministic constraints in the system. Everything else is lost.

Let’s formalize this.

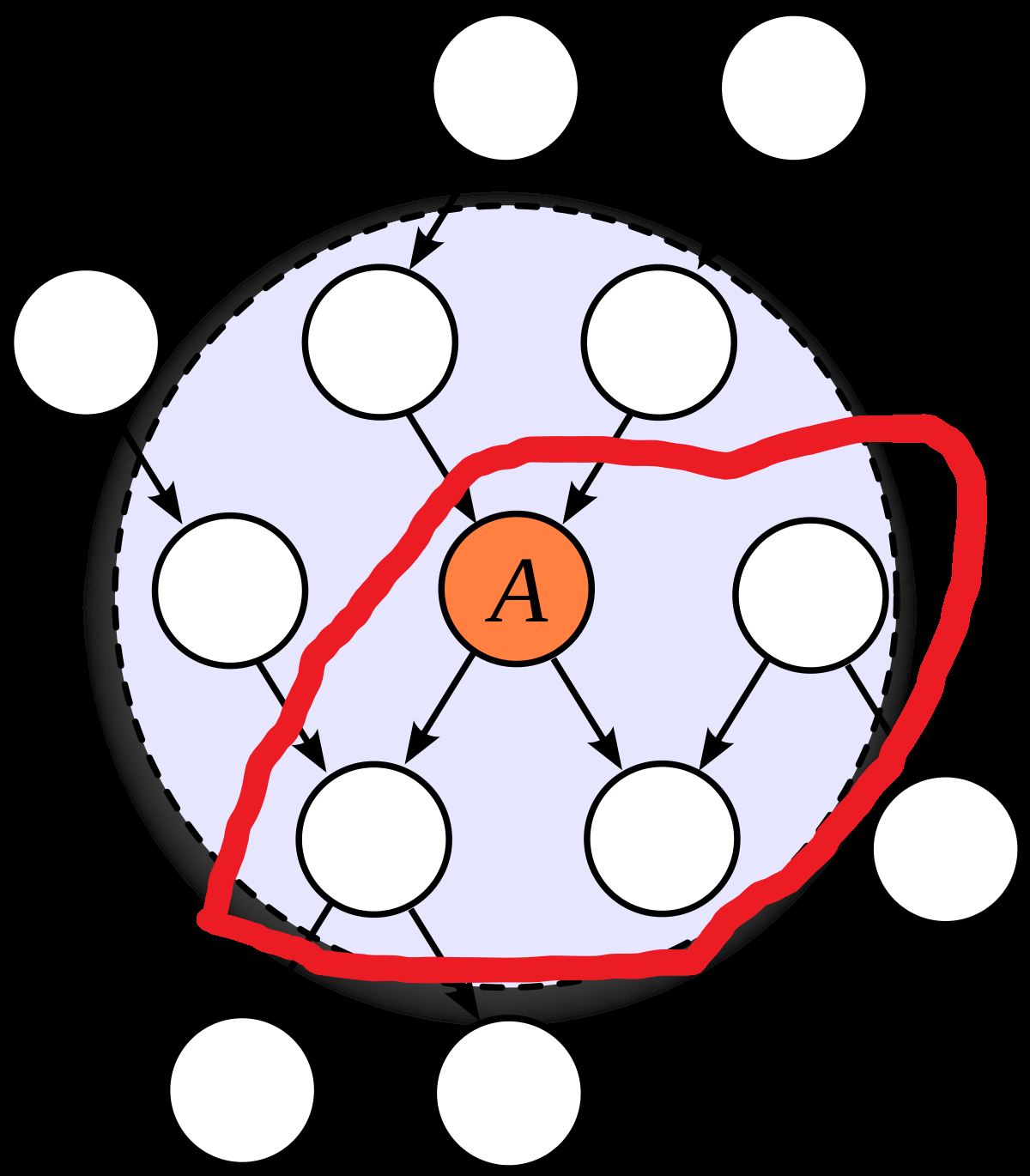

Suppose we have a giant causal model representing the low-level physics of our universe:

We can draw cuts in this graph, i.e. lines which “cut off” part of the graph from the rest. In the context of causal models, these are called “Markov blankets”; their interpretation is that all variables on one side are statistically independent of all variables on the other side given the values of any edges which cross the blanket. For instance, a physical box which I close at one time and open at a later time is a Markov blanket—the inside can only interact with the outside via the walls of the box or via the state when the box is first closed/opened (i.e. the “walls” along the time-dimension).

We want to imagine a sequence of nested Markov blankets, each cutting off all the previous blankets from the rest of the graph. These Markov blankets are the “messages” in our metaphorical game of Telephone. In the toaster example, these are sequential layers of air molecules between the toaster and the measurement.

Our theorem says that, in the “long distance” limit (i.e. $n \to \infty$), constraints of the form $f_n(M_n) = f_{n+1}(M_{n+1})$ between consecutive blankets $M_n$ and $M_{n+1}$ determine which information is transmitted. Any information not perfectly carried by the deterministic constraints is lost.

Another example: consider a box of gas, just sitting around. We can choose our “layers of nested Markov blankets” to be snapshots of the microscopic states of all the molecules at sequential times. At the microscopic level, molecules are bouncing around chaotically; quantum uncertainty is quickly amplified by the chaos. The only information which is relevant to long-term predictions about the gas molecules is information carried by deterministic constraints—e.g. conservation of energy, conservation of the number of molecules, or conservation of the volume of the box. Any other information about the molecules’ states is eventually lost. So, it’s natural to abstractly represent the “gas” via the “macroscopic state variables” energy, molecule count and volume (or temperature, density and pressure, which are equivalent); these are the variables which can carry information over long time horizons. If we found some other deterministic constraint on the molecules’ dynamics, we might want to add that to our list of state variables.
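To make the gas picture a bit more concrete, here is a toy sketch. It is not real molecular dynamics: the model (random pairs of particles pooling their energy and re-splitting it at random) and the helper name `collide` are assumptions chosen only so that total energy is a deterministic constraint while individual energies get scrambled.

```python
import random

def collide(energies, rng, steps):
    """Repeatedly pick two particles and randomly re-split their combined energy."""
    energies = list(energies)
    for _ in range(steps):
        i, j = rng.sample(range(len(energies)), 2)
        pooled = energies[i] + energies[j]
        share = rng.random()
        energies[i], energies[j] = share * pooled, (1 - share) * pooled
    return energies

rng = random.Random(0)
start = [rng.uniform(0.0, 2.0) for _ in range(50)]  # assumed initial microstate
end = collide(start, rng, steps=5000)

print(sum(start), sum(end))  # total energy: conserved up to floating-point rounding
print(start[0], end[0])      # a single particle's energy: not conserved
```

In this toy model the sum of the energies plays the role of the macroscopic state variable: it is the same at every snapshot, so it is the only feature of the initial microstate one would expect to remain predictable after many collisions.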

The Big Loophole

Note that our constraints only need to be deterministic in the limit. At any given layer, they need only be “approximately deterministic”, i.e. $f_n(M_n) = f_{n+1}(M_{n+1})$ with probability close to 1 (or $f_n(M_n) \approx f_{n+1}(M_{n+1})$, in which case the leading-order bits are equal with probability close to 1). As long as that approximation improves at each step, converging to perfect determinism in the limit, it works.

In practice, this is mainly relevant when information is copied redundantly over many channels.

An example: suppose I start with a piece of string, cut two more pieces of string to match its length, then throw out the original. Each of the two new strings has some “noise” in its length, which we’ll pretend is normal with mean zero and standard deviation $\sigma$, independent across the two strings.

Now, I iterate the process. For each of my two pieces of string, I cut two new pieces to match the length of that one, and throw away my old string. Again, noise is added in the process; same assumptions as before. Then, for each of my four pieces of string, I cut two new pieces to match the length of that one, and throw away my old string. And so forth.

We can represent this as a binary-tree-shaped causal model; each new string is an independent noisy measurement of its parent:

… and we can draw layers of Markov blankets horizontally:

Now, there is never any deterministic constraint between $M_n$ and $M_{n+1}$; $M_{n+1}$ is just a bunch of “measurements” of $M_n$, and every one of those measurements has some independent noise in it.

And yet, it turns out that information is transmitted in the limit in this system. Specifically, counting the original string as layer 0, the $2^n$ measurements at layer $n$ are equivalent to a single noisy measurement of the original string length with normally-distributed noise of magnitude $\sigma \sqrt{1 - 2^{-n}}$. In the limit, it converges to a single measurement with noise of standard deviation $\sigma$. That’s not a complete loss of information.

So what’s the deterministic constraint which carries that information? We can work backward through the proofs to calculate it; it turns out to be the average of the measurements at each layer. Because the noise in each measurement is independent (given the previous layer), the noise in the average shrinks at each step. In the limit, the average of one layer becomes arbitrarily close to the average of the next.
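A quick simulation can sanity-check the string example. This is only a sketch under stated assumptions: $\sigma = 1$, a tree of depth 6, an original length of 10, and 2000 independent runs are all arbitrary choices, and `layer_averages` is a hypothetical helper. Each layer doubles the number of strings, so the average at layer $n$ picks up fresh noise with variance $\sigma^2 / 2^n$, and the total noise in the average after $n$ layers should have standard deviation $\sigma \sqrt{1 - 2^{-n}}$.

```python
import random
from math import sqrt
from statistics import fmean, pstdev

SIGMA = 1.0  # assumed standard deviation of the noise added by each cut

def layer_averages(length, depth, rng):
    """Average string length at layers 0..depth of the binary copying tree."""
    layer = [length]
    averages = [length]
    for _ in range(depth):
        # Every string is replaced by two independent noisy copies of itself.
        layer = [s + rng.gauss(0.0, SIGMA) for s in layer for _copy in range(2)]
        averages.append(fmean(layer))
    return averages

rng = random.Random(0)
DEPTH, TRIALS = 6, 2000
runs = [layer_averages(10.0, DEPTH, rng) for _ in range(TRIALS)]

total_noise, step_noise = [], []
for n in range(1, DEPTH + 1):
    # Spread of (layer-n average minus original length) across runs.
    total_noise.append(pstdev([run[n] - run[0] for run in runs]))
    # Spread of the change in the average from layer n-1 to layer n.
    step_noise.append(pstdev([run[n] - run[n - 1] for run in runs]))
    predicted = SIGMA * sqrt(1 - 2 ** -n)
    print(n, round(total_noise[-1], 3), round(predicted, 3), round(step_noise[-1], 3))
```

If the reasoning above is right, the second and third columns should roughly agree, while the last column (how much the average moves between consecutive layers) shrinks toward zero. That shrinking is the sense in which the constraint “average of layer $n$ equals average of layer $n+1$” becomes deterministic in the limit.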

That raises an interesting question: do all deterministic-in-the-limit constraints work roughly this way, in all systems? That is, do deterministic constraints which show up only in the limit always involve an average of conditionally-independent noise terms, i.e. something central-limit-theorem-like? I don’t know yet, but I suspect the answer is “yes”.

Why Is This Interesting?

First, this is a ridiculously powerful mathematical tool. We can take a causal system, choose any nested sequence of Markov blankets which we please, look at deterministic constraints between sequential layers, and declare that only those constraints can transmit information in the limit.

Personally, I’m mostly interested in this as a way of characterizing abstraction. I believe that most abstractions used by humans in practice are summaries of information relevant at a distance. The theorems in this post show that those summaries are estimates/distributions of deterministic (in the limit) constraints in the systems around us. For my immediate purposes, the range of possible “type-signatures” of abstractions has dramatically narrowed; I now have a much better idea of what sort of data-structures to use for representing abstractions. Also, looking for local deterministic constraints (or approximately-deterministic constraints) in a system is much more tractable algorithmically than directly computing long-range probability distributions in large causal models.

(Side note: the previous work already suggested conditional probability distributions as the type-signature of abstractions, but that’s quite general, and therefore not very efficient to work with algorithmically. Estimates-of-deterministic-constraints are a much narrower subset of conditional probability distributions.)

Connections To Some Other Pieces

Content Warning: More jargon-y; skip this section if you don’t want poorly-explained information on ideas-still-under-development.

In terms of abstraction type-signatures and how to efficiently represent them, the biggest remaining question is how exponential-family distributions and the Generalized Koopman-Pitman-Darmois Theorem fit into the picture. In practice, it seems like estimates of those long-range deterministic constraints tend to fit the exponential-family form, but I still don’t have a really satisfying explanation of when or why that happens. Generalized KPD links it to (conditional) independence, which fits well with the overarching framework, but it’s not yet clear how that plays with deterministic constraints in particular. I expect that there’s some way to connect the deterministic constraints to the “features” in the resulting exponential family distributions, similar to a canonical ensemble in statistical mechanics. If that works, then it would complete the characterization of information-at-a-distance, and yield quite reasonable data structures for representing abstractions.

Meanwhile, the theorems in this post already offer some interesting connections. In particular, this correspondence theorem (which I had originally written off as a dead end) turns out to apply directly. So we actually get a particularly strong form of correspondence for abstractions; there’s a fairly strong sense in which all the natural abstractions in an environment fit into one “global ontology”, and the ontologies of particular agents are all (estimates of) subsets of that ontology (to the extent that the agents rely on natural abstractions).

Also, this whole thing fits very nicely with some weird intuitions humans have about information theory. Basically, humans often intuitively think of information as binary/set-like; many of our intuitions only work in cases where information is either perfect or completely absent. This makes representing information quantities via Venn diagrams intuitive, and phenomena like e.g. negative interaction information counterintuitive. But if our “information” is mostly representing information-at-a-distance and natural abstractions, then this intuition makes sense: that information is generally binary/set-like.

Appendix: Information Conservation

We want to know when random variables $X$, $M_1$ and $M_2$ factor according to both $X \to M_1 \to M_2$ and $X \to M_2 \to M_1$, i.e.

$$P[X, M_1, M_2] = P[X | M_1] \, P[M_1, M_2]$$

… and

$$P[X, M_1, M_2] = P[X | M_2] \, P[M_1, M_2]$$

Intuitively, this says that $M_1$ and $M_2$ contain exactly the same information about $X$; information is perfectly conserved in passing from one to the other.

(Side note: any distribution which factors according to $X \to M_1 \to M_2$ also factors according to the reversed chain $M_2 \to M_1 \to X$. In the main post, we mostly pictured the latter structure, but for this proof we’ll use the former; they are interchangeable. We’ve also replaced “$M_0$” with “$X$”, to distinguish the “original message” more.)

First, we can equate the two factorizations and cancel $P[M_1, M_2]$ from both sides to find

$$P[X | M_1] = P[X | M_2]$$

… which must hold for ALL $M_1, M_2$ with $P[M_1, M_2] > 0$. This makes sense: $M_1$ and $M_2$ contain the same information about $X$, so the distribution of $X$ given $M_1$ is the same as the distribution of $X$ given $M_2$, assuming that $P[M_1, M_2] > 0$.

Next, define $f_i(M_i) := P[X | M_i]$, i.e. $f_i$ gives the posterior distribution of $X$ given $M_i$. Our condition $P[X | M_1] = P[X | M_2]$ for all $M_1, M_2$ with $P[M_1, M_2] > 0$ can then be written as

$$f_1(M_1) = f_2(M_2)$$

… for all $M_1, M_2$ with $P[M_1, M_2] > 0$. That’s the deterministic constraint. (Note that $f$ matters only up to isomorphism, so any invertible transformation of $f$ can be used instead, as long as we transform both $f_1$ and $f_2$.)

Furthermore, a lemma of the Minimal Map Theorem says that $P[X | P[X | M]] = P[X | M]$, so we also have $P[X | M_i] = P[X | f_i(M_i)]$. In other words, $X$ depends only on the value of the deterministic constraint.

This shows that any solutions to the information conservation problem must take the form of a deterministic constraint $f_1(M_1) = f_2(M_2)$, with $X$ dependent only on the value of the constraint. We can also easily show the converse: if we have a deterministic constraint, with $X$ dependent only on the value of the constraint, then it solves the information conservation problem. This proof is one line:

$$P[X | M_1] = P[X | f_1(M_1)] = P[X | f_2(M_2)] = P[X | M_2]$$

… for any $M_1, M_2$ which satisfy the constraint $f_1(M_1) = f_2(M_2)$.
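The construction in this proof can be checked by brute force on a small example. The model below is hypothetical and chosen only for illustration: a shared bit `c` is copied exactly into both messages, `x` depends only on `c`, and each message also carries one independent junk bit. The helper `posterior` computes the posterior distribution of the original message given each value of a message, as a tuple of exact fractions.

```python
from fractions import Fraction as F
from itertools import product

# Assumed toy model: m1 = (c, n1), m2 = (c, n2); x depends only on c.
P_C = {0: F(1, 2), 1: F(1, 2)}
P_X1_GIVEN_C = {0: F(1, 5), 1: F(9, 10)}  # P[X = 1 | c]
P_N1 = F(1, 3)                            # P[n = 1] for each junk bit

joint = {}  # joint[(x, m1, m2)] = P[X = x, M1 = m1, M2 = m2]
for c, x, n1, n2 in product((0, 1), repeat=4):
    p = P_C[c]
    p *= P_X1_GIVEN_C[c] if x else 1 - P_X1_GIVEN_C[c]
    p *= (P_N1 if n1 else 1 - P_N1) * (P_N1 if n2 else 1 - P_N1)
    joint[(x, (c, n1), (c, n2))] = p

def posterior(index):
    """f_i: map each value m of M_i to (P[X=0 | M_i=m], P[X=1 | M_i=m])."""
    mass = {}
    for key, p in joint.items():
        x, m = key[0], key[index]
        mass.setdefault(m, [F(0), F(0)])[x] += p
    return {m: tuple(v / sum(pair) for v in pair) for m, pair in mass.items()}

f1, f2 = posterior(1), posterior(2)

# The constraint f1(M1) = f2(M2) should hold wherever P[M1, M2] > 0.
support = {(m1, m2) for (x, m1, m2), p in joint.items() if p > 0}
constraint_holds = all(f1[m1] == f2[m2] for m1, m2 in support)
print(constraint_holds)
```

In this example the posterior depends on a message only through the copied bit `c`, so the constraint $f_1(M_1) = f_2(M_2)$ amounts to “both messages agree on `c`”; the junk bits carry no information about $X$ and drop out.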

Uncharitable Summary

Most likely there’s something in the intuitions which got lost when transmitted to me via reading this text, but the mathematics itself seems pretty tautological to me (nevertheless I found it interesting since tautologies can have interesting structure! The proof itself was not trivial to me!).

Here is my uncharitable summary:

Assume you have a Markov chain M_0 → M_1 → M_2 → … → M_n → … of variables in the universe. Assume you know M_n and want to predict M_0. The Telephone theorem says two things:

You don’t need to keep all information about M_n to predict M_0 as well as possible. It’s enough to keep the conditional distribution P(M_0 | M_n).

Additionally, in the long run, these conditional distributions grow closer and closer together: P(M_0 | M_n) ~ P(M_0 | M_{n+1})

That’s it. The first statement is tautological, and the second states that you cannot keep losing information. At some point, your uncertainty in M_0 stabilizes.

Further Thoughts

I think actually, John wants to claim that the conditional probabilities can be replaced by something else which carries information at a distance and stabilizes over time. Something like:

Average measurements

Pressure/temperature of a gas

Volume of a box

Length of a message

…

These things could then serve as “sufficient statistics” that contain everything one needs for making predictions. I have no idea of how one would go about finding such conserved quantities in general systems. John also makes a related remark:

“(Side note: the previous work already suggested conditional probability distributions as the type-signature of abstractions, but that’s quite general, and therefore not very efficient to work with algorithmically. Estimates-of-deterministic-constraints are a much narrower subset of conditional probability distributions.)”

The math was made easier in the proof by assuming that information at a distance precisely stabilizes at some point. In reality, it may slowly converge without becoming constant. For this, no proof or precise formulation is yet in the text.

John claims: “I believe that most abstractions used by humans in practice are summaries of information relevant at a distance. The theorems in this post show that those summaries are estimates/distributions of deterministic (in the limit) constraints in the systems around us.” This confuses me. It seems like this claims that we can also use summaries of very closeby objects to make predictions at arbitrary distance. However, the mathematics doesn’t show this: it only considers varying the “sender” of information, not varying the “receiver” (which in the case of the theorem is M_0!). If you want to make predictions about arbitrarily far away different things in the universe, then it’s unclear whether you can throw any information of closeby things away. (But maybe I misunderstood the text here?)

A somewhat more random comment:

I disagree with the claim that the intuitions behind information diagrams fall apart at higher degrees: if you’re “just fine” with negative information, then you can intersect arbitrarily many circles and get additivity rules for information terms. I actually wrote a paper about this, including how one can do this for other information quantities like Kolmogorov complexity and Kullback-Leibler divergence. What’s problematic about this is not the mathematics of intersecting circles, but that we largely don’t have good real-world interpretations and use cases for it.

This is a great theorem that’s stuck around in my head this last year! It’s presented clearly and engagingly, but more importantly, the ideas in this piece are suggestive of a broader agent foundations research direction. If you wanted to intimate that research direction with a single short post that additionally demonstrates something theoretically interesting in its own right, this might be the post you’d share.