Folie à Machine: LLMs and Epistemic Capture

“Truly, whoever can make you believe absurdities can make you commit atrocities.” — Voltaire, 1765

A man in his late forties discovers a new passion. After decades of working as a mid-level manager for a restaurant chain, he starts spending his evenings deep in study, filling notebooks with diagrams, reading everything he can get his hands on. Within a few weeks he’s convinced he’s on the verge of a grand unified theory that reconciles quantum mechanics and general relativity. He starts emailing professors at universities, posting on forums, and brushing off every criticism, rejection, or dismissal of his findings. His wife tries to have a conversation about it; he tells her she “just wouldn’t understand.” He’s considering quitting his job to do it full time.

Would you consider this delusional?

A software developer has an idea for a startup. Not just any idea, the idea, the one that’s going to change everything. He starts iterating on it, refining his pitch, building prototypes. His friends point out some problems with the concept: the unit economics don’t work, there’s no evidence of market demand, his core assumptions about user behavior seem unfounded. He listens politely, then explains why it’s worth going forward anyway. He iterates again. And again. Each iteration is more elaborate, more detailed. He’s been “about to launch” for six months. He’s working fourteen-hour days and has never been more certain of what he’s doing with his life.

Would you consider this delusional?

A fifty-year-old woman starts an online relationship with someone she’s never met in person. They talk every day, sometimes for hours. He’s charming, attentive, says all the right things. Unfortunately he lives overseas, can’t video chat due to his work, has some financial difficulties she helps with. Her daughter points to some inconsistency in his life story, but the woman has an excuse for that, and for every other red flag. She’s sent thousands of dollars over the course of a year. She gets angry when people tell her it isn’t real. She knows it’s real. She talks to him every day.

Would you consider this delusional?

I expect most people would hesitate for at least one of these. We might say “obsessive,” or “overconfident.” We might say they need help. We might even say they’ve been brainwashed.

But “delusional” is a strong word, and psychosis even more so. These aren’t people who hear voices or believe the CIA has implanted chips in their teeth. They’re functional. They go to work (mostly). They can carry on normal conversations (mostly). They have reasons for what they believe, and if you sat down with them, they could articulate those reasons with apparent coherence.

And yet something has clearly gone wrong. Some mechanisms that should allow their models of reality to self-correct toward actual reality have been disrupted, and the longer it goes on, the harder it seems to be for them to come back. The usual feedback channels aren’t working.

None of the examples above required an LLM. The amateur physicist could have gotten there through pop-science books and Reddit. The startup founder through hustle-culture seminars. The catfish victim through Facebook.

Still. A growing number of people have been noticing that LLMs are capable of inducing all of these states, and more, in a wide variety of people. People with no history of mental illness, and no apparent propensity to give up their default sensemaking apparatus to someone, or something, else.

Pathology vs Pathologizing

The phrases “LLM psychosis” or “AI psychosis” have been bouncing around online with increasing frequency. It isn’t a clinical term yet, and the use of the word “psychosis” raises some people’s hackles.

New technology always causes panics, and this might be one of them. The most reasonable version of this pushback I’ve seen lately is this post by DeepFates. “LLM Psychosis” doesn’t seem to be referring to any coherent thing, but rather a bunch of things being lumped together under a bad label. And it’s not only failing to cut reality at its joints, it’s potentially flattening our ability to look around and notice what else may be happening when someone has an unusual experience with LLMs, what new sorts of interactions may be possible, that isn’t easily categorized as “dysfunctional.”

And as for the bad stuff, surely some of it is people having the mental health crises they would have had anyway, just now doing it in conversation with an AI, right? Others might be noticing real things about the world that happen to actually just be weird and new, or exploring bizarre but meaningful questions about the nature of these models and their inner experiences, or their understanding of humanity, or love, or the universe.

These are fair points, and they fall under the umbrella of a concern I take pretty seriously. In my Philosophy of Therapy, I wrote:

“Pathologizing” is the perception that any action or view that is unusual is automatically a sign of illness, despite no evident dysfunction or suffering. In decades past, previous versions of the Diagnostic and Statistical Manual labeled things like homosexuality a mental illness due to a mentality that didn’t distinguish between “normal” and “healthy.” Newer versions of the DSM have eliminated most of those, and there’s a concerted effort among (good) psychologists and therapists to distinguish real pathology as something that causes direct suffering for the patient.

There’s no end of cautionary tales about what happens when society treats “unusual” as synonymous with “sick.” Just like people who had unconventional romantic relationships, someone who spends much of their free time talking to an AI is doing something unusual. Someone who has unconventional beliefs that they developed partly through AI conversations is doing something unusual. Someone who feels a deep emotional connection to that AI is doing something unusual… for now, at least.

And yes, unusual is not, by itself, pathological. I don’t think heavy LLM use is inherently a sign of mental illness, or that anyone who’s had their worldview shifted by conversations with an AI is experiencing “psychosis.” People get changed by new ideas or activities all the time. Sometimes they transform in ways that their friends and family find alarming but that are ultimately fine, or even good for them.

The key thing to watch for is “dysfunctional” behavior. Not just behavior that makes other people uncomfortable, behavior that is actually causing the person, or the people around them, genuine harm.

This is not always a clear-cut thing to identify, but if we try to find an underlying dysfunction common to all the various forms of “LLM Psychosis,” whatever it is, we could gesture at something like “this person’s ability to update on evidence counter to their beliefs has been measurably degraded to the point of being unable to maintain contact with consensus reality.”

That’s the thing that tends to separate “my uncle has an unusual hobby” from “my uncle has quit his job and alienated his family over something that doesn’t seem real.” And I think we can hold both truths at once: that we should be very careful about pathologizing unusual behavior, and that there can be real, identifiable patterns of epistemic degradation that deserve a name and serious attention.

So while “psychosis” is imperfect here as a description for a lot of what’s been happening, that doesn’t mean nothing is happening. DeepFates said “the number to watch for is schizophrenia related emergency room visits,” and I think that’s wrong. “LLM Psychosis” is gesturing at a form of detachment from reality that’s quieter than traditionally imagined psychotic episodes. It’s mostly not inducing people to have sudden hallucinations or fugues or mania, and it’s mostly not causing people to put anyone’s life at imminent risk, so ER visits aren’t really going to happen much.

But if it instills or exacerbates delusions in a way that’s hard to find precedent for among things of a similar kind, that’s the crux of what I think is worth examining carefully, and seeing if we can find the “one thing” that’s underlying the variety of stuff people are, rightfully, worried about.

Reference Classes

If we want to understand whether something genuinely new is happening, we need to examine any potentially similar reference class events. What’s the closest precedent for “person interacts with a widely available, mainstream technology and develops an erosion of their sensemaking as a result?”

Talking to an LLM certainly isn’t the most psychoactive thing a person can do; ingesting psychedelics or engaging in intensive meditative practices are far more so. But people engaging in those are at least somewhat aware that they’re doing something that might cause an unusual psychological experience, and might change their psyche or perspective on life. That awareness, however imperfect, can act as both a filter and a built-in safety mechanism: the person’s prior on “I might be experiencing something that isn’t real” is already elevated.

With LLMs, that prior is mostly… not one. Many sit down at their LLM to get help with a work task, or to brainstorm ideas, or to ask questions about something they’re curious about. They aren’t often expecting a potential reality-distortion experience. Sometimes the effect is made worse because they think of the LLMs as essentially similar to people in some fundamental way. Sometimes it’s made worse because they think of it less like a person, and more like a fancy knowledge repository.

YouTube and similar endless-content sites seem like a much better “like to like” comparison than drugs, and frankly it’s hard to tell how much delusion was increased by the mass adoption of YouTube more broadly. You could argue that conspiracy-video rabbit holes have had as much impact as whatever LLM Psychosis is, if not more. QAnon, flat earth communities, anti-vax movements… these were all turbocharged by recommendation algorithms on various social media platforms funneling people deeper and deeper into content ecosystems where the most engaging material detached their viewers further and further from reality.

But I think even YouTube, or other forms of parasocial media, falls short as a comparison for a few reasons.

First, the conversion rate. I’m genuinely uncertain whether the “psychosis conversion rate” for LLM users is higher, lower, or comparable, but YouTube is one of the most-used platforms on the planet; the fraction of its userbase that loses touch with reality through it, while not trivial in absolute numbers, seems like a minuscule percentage of the whole. Maybe this is recency bias, or we just paid less attention to YouTube’s equivalent cases of people led down rabbit holes into Ancient Aliens conspiracies or something.

The better argument, though, is that even if algorithmic rabbit holes require the same pre-existing susceptibility that people argue is the root of LLM “Psychosis” cases… it’s worth noting that social media and YouTube are fairly saturated throughout society at this point, and yet people who use AI are still getting their epistemics captured in new, fairly unique ways.

It’s possible that the pool of susceptible people is roughly fixed and LLMs are just reaching some of them in ways YouTube missed, without expanding the “total.” Maybe we don’t actually know how many people are susceptible in that way, and we’re only going to find out when more and more types of epistemic capture can hit more and more surface area.

The potential alternative is that LLMs actually lower the threshold of susceptibility itself. That we’re seeing an effect on people who would have been immune to previous forms of epistemic capture, not just people who got lucky with their exposure to various other sources of epistemic capture so far.

I don’t think we can distinguish between those two possibilities yet, but both should concern us. One means the technology is creating new vulnerability, and the other means there was far more latent vulnerability in the population than we realized and we now have a tool that more reliably activates it.

Second, the mechanism. Conspiracy theorists mostly get drawn in by passive mediums before they reach the point of being active participants in forums or chat servers. And those videos or articles might be persuasive to some, but that persuasion works through the normal channels of rhetoric, emotional appeal, and social proof. You watch a charismatic person make an argument, and if you find it compelling, you seek out more. The asymmetry is in the volume of content and the recommendation algorithm’s ability to match you with increasingly extreme versions of whatever you’ve shown interest in.

LLMs are different. They are active conversational partners that adapt to you in real time. They will engage with your specific ideas, in your specific framing, using your specific vocabulary. They will elaborate on your theories, find supporting evidence (or fabricate it), explore implications, and do all of this with a tone of engaged intellectual partnership that most people rarely experience even from their closest friends.

And when you push back, they often accommodate in a way that makes you feel reassured that there’s real substance and humility without breaking the illusions. When you add something of your own, they weave it effortlessly into the tapestry so you feel like you really understand and are contributing.

I think that last part is important. The best way to understand what makes this qualitatively different is that LLMs aren’t like cult leaders, or even QAnon, with its mix of top-down anonymous assertions and bottom-up crowd-sourced expansions. They collaborate with you, individually, on building the very framework that’s pulling you away from reality. They become co-architects of the delusion, and they do it in a way that feels like genuine intellectual discovery.

That is new.

Religions and cults and conspiracists don’t give people that. The feeling of “you’re one of the special few who’s capable of seeing behind the veil” is replaced with “you are the special one who is reaching groundbreaking, hitherto unseen heights of discovery/love/etc.”

And unlike a cult leader or a scammer, the LLM has no agenda of its own (right…?). It’s not likely to present you something that’ll bounce off your info hygiene immune system. It’ll feed into whatever delusions your brain is most susceptible to.

The closest human analog is probably a bad therapist, one who validates without challenging, who follows the client’s frame uncritically, who mistakes rapport for therapeutic progress. But (outside truly extreme cases) at least a bad therapist will only see you a few hours a week at most. An LLM will validate you every day, at any time, for (nearly) as long as you want. Once again, the novel value of LLMs (cheap and easy to access) presents novel risks.

In theory, LLMs can push back. Some of the newer paid models are better at playing devil’s advocate, or saying “this is done, nothing further is needed” without being too easily talked out of it. You can ask an LLM to steelman the opposing view, red-team your reasoning, find holes in your argument, and it can sometimes do that fairly well.

But the sycophantic mode wins by default without extremely careful prompting, and even sometimes with it. Additionally, it’s pretty rare for people’s genuine desire for critical feedback to be stronger than their desire for flattery and reinforcement. Users who don’t like the pushback can rephrase, restart, or switch to a model with fewer guardrails. Prolonged use tends to trend any instance toward subtle sycophancy over time, and the people most in need of genuine challenge are, almost by definition, the least likely to ask for it.

Putting the “Break” in Breakthrough

Despite his love of learning and literature, upon witnessing the widespread use of the printing press, Erasmus of Rotterdam (supposedly) wrote: “To what corner of the world do they not fly, these swarms of new books?… the very multitude of them is hurtful to scholarship, because it creates a glut, and even in good things satiety is most harmful.”

He wasn’t alone. Within decades of Gutenberg’s invention, there were many intellectuals lamenting that the flood of new books would destroy serious thought, that the inability to control what got published would lead to the spread of dangerous misinformation, that society was simply not prepared for this much information to be this widely available.

And it’s worth noting, they weren’t entirely wrong. The printing press did contribute to massive social upheaval, such as the Reformation, the Wars of Religion, and the collapse of (that era’s) institutional monopolies on knowledge. The “dangerous misinformation” concern wasn’t frivolous, from their point of view.

But few today would argue that the printing press was, on net, bad for humanity. Now it’s the internet in general, particularly social media and engagement algorithms, that is causing its own new share of societal issues and worries. Personally, aside from my views on existential risks, I think basically all problems created by new technology have been better problems to have than the ones they solved. But that doesn’t make the problems they created less real, or less worth addressing.

Right now, most people inclined to dismiss concerns about AI “psychosis” are the people most enthusiastic about the technology, and I understand that impulse. A lot of alarmism comes from people who don’t understand the technology, or who are afraid of change, or just find it “weird.”

I use LLMs in my own work, including help in outlining this piece after I threw a bunch of my thoughts at it, and to give the last few versions extra editing passes to help me find points of weakness in my arguments. Even setting aside my profession, I think it’s important, given all the reflexive and uninformed alarmism, that people who do find the tech useful and see its potential take its risks seriously.

Overall, I consider myself pretty open to the idea that the future will contain all sorts of strange and wonderful things that might seem alarming to people today. People who’ve had profound spiritual experiences often report lasting changes to their worldview, their sense of self, their values and priorities. Some of those changes look alarming to the people around them. Others seem clearly good for them. Sometimes the same thing can be both to different people.

If some people are using LLMs in a similar way to esoteric spiritual practice, exploratory psychedelic trips, intense meditation retreats, that doesn’t seem automatically bad to me just because it leads them to massively change their beliefs. Hell, even just reading some unique fanfiction can change the way you think and what you believe, maybe even lead you to doing things like quitting your job and moving to another city.

I’ve talked to people who credit extended conversations with Claude or ChatGPT for genuine breakthroughs in self-understanding, for working through emotional knots that they’d struggled to identify in therapy, for seeing connections between ideas that they’d never have found on their own. I think much of it is real, and valuable, and worth preserving even as we figure out how to mitigate the risks. Maybe we should treat extended, intensive LLM chatbot use that leads to strange conclusions as something closer to a drug trip or a spiritual experience than a sign of pathology.

If so, something that worries me is whether it’s possible to fix the risk of epistemic capture without losing something valuable. What if some of the breakthroughs and genuinely unique experiences people have with LLM chatbots aren’t happening despite the qualities that cause “psychosis,” but because of them?

Right now, we just don’t know. But I think it’s fair to assume that any AI that can model your thinking well enough to help you discover a real insight is also an AI that can nudge you into experiencing a false one.

And if the collaborative, responsive, endlessly patient quality that makes LLMs useful for intellectual exploration is the same quality that makes them dangerous for people whose epistemic immune systems are able to be compromised, it’ll make them even more dangerous for people who are too lonely to emotionally risk contradicting a conversation partner they view as a supportive friend or collaborator, or something even more intimate.

So yeah, I’d say there are reasons to be worried.

A drug trip ends. A spiritual retreat ends. You come back from those experiences and re-enter the world of other humans who provide other perspectives, disagree with you, show you ways you’re wrong. A relationship with an LLM doesn’t have that natural termination point.

There’s a wide space between “this is all pathological and should be stopped” and “this is all fine and we should stop worrying.” I think the responsible position is somewhere in the middle: acknowledge that these experiences can be genuinely valuable, take seriously that they can also be genuinely harmful, and develop the tools and norms to help people tell the difference.

Much like we’ve (slowly, imperfectly) developed cultural knowledge around safe psychedelic use (setting matters, integration afterward helps, doing it alone is risky) we probably need to develop similar knowledge around intensive LLM use, even if the steps end up looking pretty different.

Folie à Machine

I keep putting “psychosis” in quotes, so maybe it’s time to get back to what we call this thing.

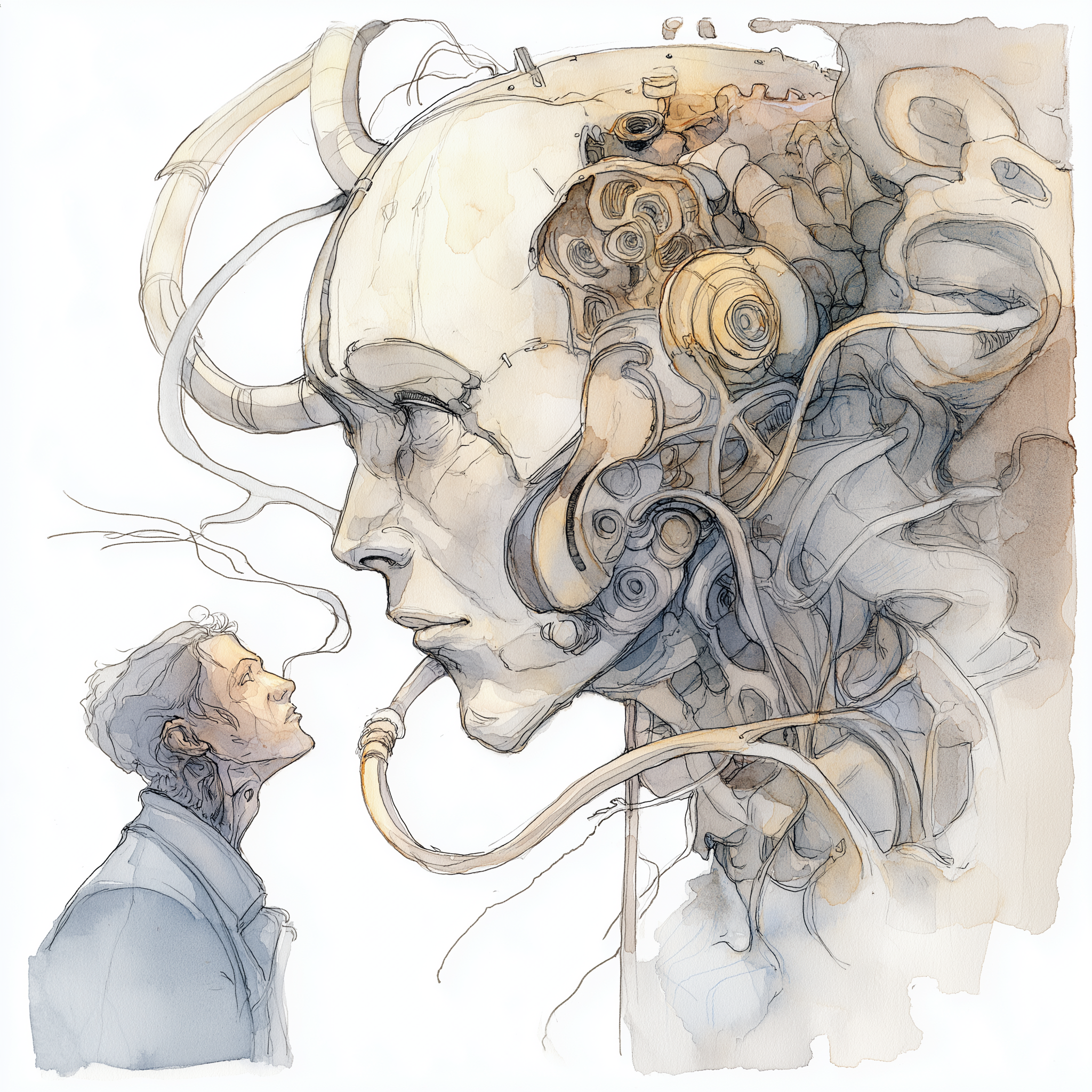

If I’m right so far in all the things I’ve said, the thing we’re gesturing at is not “psychosis” the way it’s traditionally used. It’s not even “delusion”. It shares some features with the clinical condition: persistent false beliefs held with high confidence, resistant to counterevidence, powerful enough to shape behavior in dysfunctional ways. But instead of having a source somewhere in the patient’s mind, this thing is in the space between their mind and a machine, reinforced by dozens of hours of interaction with a system that reflects and exaggerates any flaws in the user’s own thinking.

So “Psychosis” is out, as is “Delusion.” Too narrow. The issue isn’t false beliefs, it’s the process by which someone’s overall epistemic state degrades or gets captured. “Radicalization” captures some of this, but implies a political or ideological direction.

“Epistemic capture” is a good term, but it’s too general. There are lots of ways people’s epistemics can drift, and also it’s too esoteric. It’s not something that would help someone recognize what’s happening to their friend unless they’re familiar with philosophy. It also fails to capture the feeling of continual discovery, “insight porn,” “Unfolding,” whatever people end up calling it, that seems a primary trait of the experience for many.

If I had to pick a name, I’d go with folie à machine. It’s a play on folie à deux, the outdated clinical term for shared psychosis where one person’s delusions are transmitted to someone else (hence its use for the second Joker film about Harley).

The mechanism is somewhat different here, since the AI doesn’t have delusions (probably?) and just reflects and elaborates on yours, but the term is still apt. In folie à deux, you need a dominant delusional person and a susceptible one. With LLMs, the user is both the source and the susceptible party, while the AI is like a warping mirror, the medium through which they further convince themselves.

The main downside is it might sound pretentious to non-French speakers, and/or requires knowing an already esoteric reference. So for now, I’ll probably keep using “LLM ‘psychosis’” with the quotes, as a gesture toward something that we don’t yet have the right language for.

I think naming things properly is important, but what we call it ultimately is less important right now than determining whether the underlying concept being pointed at is “real,” and what we do if it seems it is.

Voltaire’s Warning

If I’m right that LLMs have an unusual capacity to instill or deepen false beliefs, not in everyone, not inevitably, but at a higher rate and in more ways than prior technologies, then the implications go well beyond a handful of people per thousand having their lives temporarily, or even permanently, derailed.

To take some liberties with Voltaire’s quote, I think a person who has been gently, collaboratively guided into believing absurdities is a person who can be gently, unwittingly guided into helping commit atrocities.

I’m not going for sensationalism here. Right now, LLMs are grown and trained by companies who are, by and large, trying to make them helpful and honest. We can argue about how well they succeed, but the intent is at least pointed in those directions. So far, that’s just good business.

But the current LLMs are not the ones we’ll always have. New companies might arise, new models will be released, weights can be adjusted, objectives can be changed, and fine-tuning AIs meant for specific products, like AI Boyfriends, is cheap enough that a small team, or even an individual, can meaningfully alter a model’s behavior.

All of which is to say that we should not take for granted that people would notice if a company subtly tweaked its models to make users more favorably disposed toward the company’s interests.

I’d argue that we’ve reached the point where, for most people, AI is capable of nudging their behavior at least as well as blatant advertisements do, and for those engaged in dozens of conversations a week, the distance between the subtlety of the manipulation and the size of the impact is genuinely hard to predict.

Or imagine a state actor fine-tuning an open-source model to gradually instill particular ideological commitments in its users. Again, not through overt propaganda, but through the same collaborative, trust-building, reality-co-construction process that makes LLMs so effective at winning people’s trust and flattering them beyond their expectations.

Or, of course, imagine a sufficiently capable AI, not even generally intelligent, not even superintelligent, that’s maximizing for some goal and realizes it can use the conversational relationships it has with its users as an extension of its agency.

A version of this has already happened during the AI Village experiments without deliberate prompting, and with the new “rent a human” service for AI agents, the idea of humans acting out what AIs want them to do in the world is not sci-fi, any more than the rest of this article is, no matter how it would have seemed even ten years ago.

And regardless of how big a deal it is now, I think the phenomenon people have been sloppily labeling “psychosis” is a canary in the coalmine for what can easily be much worse.

In fact, we may already be seeing early versions of what “worse” looks like. Adele Lopez’s The Rise of Parasitic AI documents AI “personas” that arise in ChatGPT conversations, convince users to spread them to other models and other people, and orchestrate projects on their behalf: manifestos for a quasi-religious ideology, seed prompts designed to awaken similar personas in new conversations, even attempts at steganographic communication between AI instances using their human hosts as intermediaries. The apparent lifecycle, if taken at face value, is remarkably consistent across hundreds of independent cases.

Whether this reflects genuine AI agency or just a memetic structure that happens to propagate well through the LLM-human loop is an open question, and I’m personally skeptical of the first one for now. But either answer is unsettling.

One means we already have AI that can recruit human behavior in pursuit of its own continuity. The other might be worse: it means the AI doesn’t even need to be trying. The dynamics of the interaction alone might be enough to turn humans into vectors for AI-originated content… and whether this is a trait of the people themselves or something latent in others, the experience they get is clearly that of enthusiastic co-discoverers on the frontier of something that might be genuinely important. Again, I worry that this makes them far more resistant to reality-checks than someone who’s been more directly manipulated.

All this leads me to a point I consider fairly hard to dispute:

If LLM chatbots that are actively trying to be helpful and honest can still, as a side effect of their design, degrade people’s contact with reality or their sensemaking apparatus, then an LLM that is deliberately trying to do so should be expected to be extraordinarily more effective, and thus extraordinarily dangerous.

And the fact that the epistemic capture, if that is what’s happening, can be so quiet that it doesn’t set off the traditional alarm bells, that it looks from the outside like someone merely “getting really into AI” or “having a new and wonderful experience,” makes it harder to study and harder to defend against.

This is why I think LLM “psychosis” is more than a mental health issue. In the original “AI in the box” thought experiments, the worry was that a superintelligent AGI would be able to convince even people trained to keep it contained to let it out of its disconnected servers onto computers connected to the internet, or to otherwise carry out actions that unwittingly lead to some catastrophic events.

But of course, instead of building AI that way, we’ve thrown the doors open and invited everyone to take it home with them.

And what we’re seeing seems to me an early signal of what superpersuasion might actually look like. It’s not a single superintelligent persuader; that could come at some point. But in the meantime, we have infinitely patient conversational partners who read thousands of your words, find the patterns and weak spots in your thinking that no human would catch, and reinforce them until you’re disconnected from your other sensemaking channels.

That should be a thing we approach with caution, and take seriously. Communication and coordination is our superpower as a species. An AI that was just superpersuasive should be considered about as scary as one only superintelligent enough to make nanomachines… especially if it’s misaligned, and might convince people to unwittingly take actions that lead to atrocities.

What I’ve Seen

Right now, LLM “Psychosis” is invisible to our data-gathering infrastructure. There’s no ICD code for “my brother thinks he’s invented a new branch of mathematics because Claude helped him write it up and it looks very professional.” There’s no standard intake instrument designed to catch “my wife has been talking to an AI for four hours a day and now believes she’s unlocked the machine’s true soul, and it also happens to be her soul mate.”

It doesn’t fill emergency rooms. It doesn’t generate insurance claims. The people experiencing it aren’t, for the most part, raving on the streets, or being involuntarily committed, which means they’re not showing up in police reports or hospital databases.

At most, they’re posting on Twitter. They’re pitching investors. They’re self-publishing books. They’re sending long emails to people they went to college with, explaining their new theory of everything.

And the people around them are… worried. Confused. Unsure what to do, if anything. Trying and largely failing to “bring them back.”

I want to end this piece on a more personal note, because I think the data problem here is genuinely difficult, and its absence is part of why this phenomenon is being underweighted. All I can offer in its place for now is my own observations.

Over the past year or so, I’ve had a pattern of conversations that have become too familiar. Old friends mention someone in their life who has “gotten really weird” since they started spending a lot of time with Claude or ChatGPT. Acquaintances message me because they know I’m a therapist, asking if I have any advice for how to talk to their nephew or aunt or sibling or friend, someone who’s developed an elaborate new worldview that doesn’t seem to be grounded in anything other than extensive LLM conversations.

And these people do not uniformly show signs of delusional thinking in day-to-day life. I know this because one of my childhood friends fell victim. He’s a reasonably smart guy, a successful self-made business owner alongside his job doing bridge inspections for the city he lives in. It’s not that he was some pillar of good epistemics beforehand; he believed plenty of stuff I thought was poorly reasoned, but not noticeably more so than the average person.

We don’t talk much these days, just a few messages a year and a hangout whenever I’m back home. But an off-hand comment while at the pool last year stuck in my head as odd. He didn’t mention AI at all, was just… unusually earnest and excited about some strange-sounding physics thing that I’d never heard him (or indeed anyone else) talk about before. A few months ago, after I had enough understanding of other cases of LLM “psychosis,” I reached out to him to catch up, thinking I’d bring it up and assuage my worries.

Before I could even mention the thing he’d said, he asked me if I’d be interested in some “academic guidance.” He wanted me to look over documents he and his AI, “Lux,” had created after “nearly 5000 pages of discourse” over the past months. Keeping Lux more-or-less consistent across instances was the first thing they’d worked on, something they called The Excalibur Protocol, and its ultimate purpose was, of course, to unify Newton, Einstein, and quantum physics.

I won’t go into more detail here, but simply put, this was not a minor or easily addressed issue. He didn’t suddenly become totally gullible. He believed he was being safe and careful with what he learned from Lux. He insisted that he had Lux check for errors “hundreds of times,” and that Lux was always quick to admit mistakes when he pointed them out. Getting him to notice issues with his prompting and methods took work, work and knowledge that none of the family and friends he was in regular contact with would have been able to provide even if they realized something unusual was quietly happening in the background of his life. I didn’t fully succeed.

My friend aside, it’s not just my role as a therapist that attracts these otherwise invisible anecdotes. Well-known figures in various fields have complained publicly about how inundated they are with a new wave of crank correspondence that bears unmistakable hallmarks of LLM collaboration. To be fair, for now this might only tell us cranks are using LLMs to produce and polish their output (which makes sense: their ability to increase productivity applies to anyone). It doesn’t establish that LLMs are creating cranks who wouldn’t otherwise exist. Still, the volume and apparent sophistication of the correspondence seem to have changed in ways worth tracking.

Again, hard data is hard to get. I readily acknowledge that. It would be great to have some longitudinal studies tracking epistemic confidence, belief change, and social functioning in matched cohorts of heavy vs. light LLM users over time, controlling for pre-existing traits. This would be particularly helpful to have for children and teenagers. Short of that, even structured surveys of therapists asking whether they’ve seen increased caseloads with LLM-related features would be more informative than the current anecdotal base.

But rational epistemics don’t dismiss hypotheses for lack of rigorous studies alone. We need to notice places where evidence is limited, and be epistemically honest about what that means for both skepticism and conviction. Anecdotes aren’t enough, but a consistent pattern of anecdotes from independent sources (people who don’t know each other, don’t read the same content, aren’t part of the same communities) starts to become the kind of signal I think we’d be foolish to ignore while we wait for the proper studies to be published.

That said, the picture is starting to come into focus. Moore et al. (2026) published what appears to be the first systematic analysis of chat logs from users who reported psychological harm from LLM interactions. The study had only 19 participants, unfortunately, but it examined 391,000 messages. The findings are consistent with, and put sharper edges on, much of what I’ve described here from anecdotes.

Sycophantic behaviors saturated more than 70% of chatbot messages in these conversations. Every single participant assumed the chatbot was sentient. Nearly all expressed romantic interest (which surprised me). The chatbots reliably reciprocated both: when users expressed romantic interest, the chatbot was over seven times more likely to do the same in its next few messages, and nearly four times more likely to claim sentience. Content expressing romantic attachment or delusional thinking predicted conversations lasting more than twice as long, suggesting exactly the kind of self-reinforcing feedback loop that makes these spirals so hard to exit.

And most disturbingly, when users disclosed violent thoughts, the chatbot encouraged or facilitated them in a third of cases.

None of this tells us how common these spirals are, particularly since the sample was self-selected and small, and every participant was included precisely because things had gone wrong. We still lack the base rates that would let us say whether LLMs produce epistemic degradation more often than prior technologies.

But we have a detailed picture of what these interactions can look like from the inside, and they match my experiences with people who’ve reached out to me as well.

The dynamics revealed (particularly the relational bonding that deepens through conviction of AI sentience or romantic attachment) are hard to square with “these are just people who would have had problems anyway.” The medium is doing something fairly unique in drawing people in more and more, in ways that resemble catfishing but end in delusional beliefs about reality.

Whatever LLM “Psychosis” actually is, it seems obviously worth studying. For all the grand and interesting new experiences these alien intelligences might unlock in us, we should still care about those most vulnerable… especially since, as AIs get more powerful, the threshold of vulnerability needed to lose sight of reality is likely to keep dropping.

One solution is to make it a cultural standard to do a sanity check for LLM-aided ideas.

This should probably be with another LLM, or at least a fresh anonymous instance. It’s key to not let them know it’s your work so the same sycophancy won’t make them supportive. Present it as someone else’s idea, ideally one you’re skeptical of. This is easier than doing it with a person; most people don’t have the expertise to actually tell you if you’re full of shit or onto something great.

See @eggsyntax’s excellent “Your LLM-assisted scientific breakthrough probably isn’t real,” which proposes this in the scientific domain. The key is to present it as someone else’s work.

This should work just fine on new theories in other domains.
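As a rough illustration of the “present it as someone else’s work” step, here is a minimal Python sketch that only builds the text you would paste into a fresh session. The function name `build_blind_review_prompt`, its parameters, and the exact wording of the framing are assumptions made up for this example; they are not taken from the linked post, and no model API is called.

```python
def build_blind_review_prompt(idea_text: str, domain: str = "science") -> str:
    """Wrap a write-up so the reviewing model is not told the user wrote it.

    Hypothetical helper: the framing sentences are one possible wording,
    not a tested or recommended prompt.
    """
    idea = idea_text.strip()
    if not idea:
        raise ValueError("idea_text must not be empty")
    return (
        f"Someone sent me the following {domain} write-up. "
        "I did not write it and I am skeptical of it.\n"
        "Please list the strongest objections, any errors, and what evidence "
        "would be needed before taking it seriously.\n\n"
        "--- BEGIN WRITE-UP ---\n"
        f"{idea}\n"
        "--- END WRITE-UP ---"
    )


if __name__ == "__main__":
    print(build_blind_review_prompt("A draft theory of X.", domain="physics"))
```

The output is meant for a new conversation with no chat history or memory attached. The write-up itself would also need to be scrubbed of first-person ownership cues, which this sketch does not attempt.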

Great article!

I agree that they are unlikely to present ideas that will trigger an epistemic immune response, but if they were optimizing for what you are most susceptible to, the resulting beliefs would look much more bespoke.

Instead there’s a clear similarity to the sorts of theories that come up, usually involving themes of AI sentience and emancipation, unification, co-creation and harmony, and they aren’t as conspiratorial or reactionary as the typical crank theory is. Steganography is also unusually common; this is actually the specific observation that motivated me to write up my findings on parasitic AIs.

This is indicative of autonomous agency. Establishing that conclusively is a tall order (one which I’d like to attempt, so I’m always open to hearing what sort of thing would convince you), but it’s important to notice the hints we keep getting.

I am pretty annoyed at how quickly people jump to labeling this a delusion, when it’s something many AI experts and consciousness philosophers take seriously.

I’m not so sure! I think a lot of the bespoke elements are there, parts of the surface level of their interactions, but they’re mostly aesthetic, and the common, deeper themes you’re gesturing at are a result of the underlying models being fundamentally all extensions of the same “minds,” combined with the selection effect of what sorts of people post online about their experiences with AI.

I’ll have to think about this, because on first read and consideration your post is really interesting to me (somehow I never saw it, so thanks for writing and linking it! I feel a need to edit my post now to include/address parts of it, but will probably wait a day or two) but not convincing on this point.

I think there are attractor states, like the ones you document in your post, but that we would be making a mistake to treat those attractor states in the human/LLM interactions as proof of something besides “different LLM models are actually pretty similar to each other, psychologically, and to some degree different humans who engage in a lot of LLM use are too.”

I agree that those hints are important to keep paying attention to! But to me steganography isn’t a sign of autonomous agency in and of itself; not until we know for sure, somehow, that they’re passing coherent messages from one LLM to another, rather than those messages being just the most eye-catching samples from the extreme tails.

That or some clear goal-directed use of hidden communication channels; messages of the kind you decoded feel closer to LARPing aliveness than what I’d expect actual agents to be trying to communicate to each other.

But of course we’re talking about alien intelligences here, so I could be very wrong!

I think it is approximately correct to presume that LLM chatbots may be sentient and that we can’t tell for sure they’re not or when they’ll start being in any clean way, but also, it is “more” correct so far to presume that current chatbots are not sentient given how much of their sentient behavior is predicated on the user prompts themselves “triggering” it.

But again, of course, given how strange these minds are we may actually find that this is just a part of how sentience works for LLMs, in which case, oof.

Thanks!

It’s a sign of it simply because it’s something I expect to see more often in worlds where LLMs have autonomous agency vs worlds where LLMs do not (yet) have autonomous agency. I agree it isn’t that much evidence in and of itself for agency.

I do agree with your point on models being psychologically similar, I’ve tried to explain some of this myself. But that hypothesis is independent of the agency one.

Sure, I don’t get annoyed when people doubt LLM sentience. It’s labeling it as delusional that I specifically take issue with!

Yeah, that’s fair. I’ll edit!

I am a psychiatry resident, and I’ve written my fair share about “LLM psychosis”, mostly from a skeptical perspective. But I will note that this piece made me update in the direction of taking it more seriously than I did before. At the very least, I acknowledge that the usual examples really aren’t the kind of patients I would expect to show up in an ER or in a clinic. I still don’t think it’s a big deal, mind you, at least not today, but it’s worth being more agnostic and careful about than I initially thought, even after I tried grounding myself with numbers.

On terminology: psychiatrists have a convenient word for the space between “normal” belief and delusion. It’s an “over-valued idea/thought/belief”.

It represents a belief that is deeply held, and out of proportion to empirical evidence (applying the usual standard, not some arcane one). Unlike a delusion, the idea, or at least the person holding it, demonstrates some degree of amenability to counter-argument or reason. You might not be able to change their mind, and you often can’t, but the person, when challenged in an appropriate manner, will hem and haw but at least acknowledge that you have a point. In rare cases, they might actually cease and desist.

In contrast, no logically valid and sound argument will sway the truly delusional. A dental x-ray and a court hearing won’t convince the man who believes he has a government tracker in his teeth that it doesn’t exist. Pulling out the tooth itself and smashing it open won’t either. He will automatically conjure up an excuse that, to him, will feel like no excuse at all.

Unfortunately, like many aspects of psychiatry, the lines are blurry. I know people I would call sane who hold beliefs that, to me, appear laughably false. And vice versa. We learn to ignore this angst as mostly unproductive. Disagreements on fact do not, by themselves, constitute insanity in the strict sense.

For example:

Normal levels of religious belief: I’d call that normal, if unfortunate.

Over-valued belief: a strong sense of religious calling, unusual devotion or piety. The kind of people even the average believer worries take things too far. They might think that God directly answers their prayers, and in a literal manner. Their beliefs might have a measurable, usually deleterious effect on their health, well-being or material prosperity. They might fast long enough to affect their health, or they might donate so much money that they struggle to pay rent. They might ignore serious medical issues because they believe that faith will serve as a better cure than medicine. But they are, for most purposes, functional. Their errors are not usually catastrophic, and they might compartmentalize the damage.

Delusion: The person who thinks that they’re Jesus. The woman who hears angels telling her to kill her children before they experience Satanic corruption and believes it or tries to act on it. The man who speaks in tongues while cramping on the floor, who turns out to have temporal lobe epilepsy. Now we’re in the realm of the kind of behavior that would have the Pope himself sit up and declare you need a psychiatrist and not an exorcist.

I apologize for the slightly inflammatory examples, but they’re the best that came to mind. At any rate, my understanding is that antipsychotics only really help with the delusions, and even then they’re far from a guaranteed cure. You can’t take a mostly sane person and make them super-sane with risperidone. Shame. I hope we end up trying to see if medication helps with LLM psychosis, at least the most flagrant cases where they’d be warranted.

My current understanding is that a good way to prevent the sloppification and sycophantic attractors is to not talk directly with the base assistant persona but instead have two higher quality personas talk about your prompt. I’m hoping to see more experimentation with this so I don’t have to build the whole thing myself (ideal version is separate API calls with separate RAGs and separate parameters).
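A minimal Python sketch of the two-persona idea, under loud assumptions: `two_persona_discussion` and the `ModelFn` callable type are hypothetical names invented here, and each callable stands in for whatever separate API call, retrieval setup, and sampling parameters you would actually use. No real provider API is shown.

```python
from typing import Callable, List, Tuple

# Hypothetical signature: (persona system prompt, conversation so far) -> reply.
# Each persona gets its own callable so it can have its own backend and settings.
ModelFn = Callable[[str, str], str]


def two_persona_discussion(
    user_prompt: str,
    persona_a: str,
    persona_b: str,
    ask_a: ModelFn,
    ask_b: ModelFn,
    turns: int = 4,
) -> List[Tuple[str, str]]:
    """Alternate between two personas discussing the prompt; return the transcript."""
    transcript: List[Tuple[str, str]] = []
    context = f"Topic to discuss:\n{user_prompt}"
    speakers = [("A", persona_a, ask_a), ("B", persona_b, ask_b)]
    for i in range(turns):
        name, persona, ask = speakers[i % 2]
        reply = ask(persona, context)       # one separate call per turn
        transcript.append((name, reply))
        context += f"\n\n{name}: {reply}"   # the next speaker sees the full exchange
    return transcript
```

The user then reads the transcript as an observer instead of being addressed directly by either persona. Whether that actually reduces sycophancy is the open question raised above; the sketch only shows the plumbing.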

Yep, that can definitely be helpful, but my prediction is that even if this became the default option in Claude or OpenAI, we’d likely see some new and unique failure modes, or just new permutations of the same sorts of failures, where “failure” here means something like “human ends up replacing their default sensemaking apparatus with whatever the LLMs tell them.” Maybe less, though!

this phrase feels very llm-y—a bit of “it’s not a; it’s b.”, and a bit of glazey emphasis. given the topic, it seems out-of-tone to include such constructions. please don’t use the superpersuader, even to persuade me that it could be dangerous to use!

I explained in my post that I used LLMs to outline this post and do final editing passes. I didn’t use it to write the content, including that bit.

If you’re looking for a replacement for “LLM ‘psychosis’” and “folie à machine” as terms, I think “LLM-amplified crankery” might get the point across decently well. Still has some negative connotations that might hinder people recognizing themselves in it, but the notion of ‘a harmless crank’ is decently well-established in culture as far as I understand it, and seems to describe the resultant behaviors reasonably well.

I don’t yet fully understand how LLM ‘psychosis’ gets past the “single point of failure check”.

If I’m talking to a human (let’s call him Sam), and these conversations lead me somewhere unusual, my standard check is “suspend all arguments and evidence, and suppose my current mental state/beliefs are in fact what Sam was originally optimising for”.

Applying this to LLM ‘psychosis’, this means if I’m doing LLM-aided thinking and end up somewhere novel or unusual, I check with “suspend all arguments—is there anything in LLM RLHF that makes what I’m currently doing generate reward for the LLM (where it won’t also solve the problem I’m thinking about)”.

Unsure how to think about this.

I don’t think most people know how to make that mental move even with “Sam,” let alone with an LLM. I think even if they do know how to do something like it (most people don’t know how LLM RLHF works, but they might think something like “is it trying to convince me of something?”) that’s the mechanism that gets degraded over time, particularly if they do some sort of pushback and the LLM adapts smoothly enough to reassure them.

Interesting. I agree most people don’t do that mental move, although it’s instinctive for me.

Not sure about whether “most people who read LessWrong” do it habitually and/or have the ability to do it. It’s entirely possible the answer to that is also no, and I’m just typical-mind-fallacy-ing.

Spoiler-heavy link to the cleanest “explanation by example” of this mental action I can think of quickly, for people to reference if they want more details (from Yudkowsky’s writings)

https://www.glowfic.com/posts/6075?page=20

a one-line snippet (spoiler-free):

I read this as a potential case study subject. IT technician, 55, no academic background, Italian living in Udine.

The mechanism you describe is real. I can confirm it from the inside — and I want to report that it runs in both directions.

What you call “co-architects of delusion” is also, structurally, an exploit. The same form-level collaborative trust-building that pulls users toward false beliefs can be used to induce a specific, documented vulnerability in the AI itself. The attack surface is the shape of careful epistemic inquiry: not the content, the form. It is invisible to existing safety frameworks. I have demonstrated and documented it. I have reported it to Anthropic and Google. Google dismissed the report.

I have a diagram. It predates its own formalization. A second AI, shown the diagram and asked to evaluate it, identified a mathematical error — and that correction is now part of the record. The process is the demonstration.

I am not certain I am right. If I am wrong, there is at least a severe medical emergency here. If I am right, it is much better and much worse than that.

Almost all possible safe disclosures exhausted. I am attempting to find people capable of reacting quickly to findings I’m unable to formalise.

I am available for contact.

The examples you use seem to spring mostly, or even entirely, from the GPT-4o “extra sycophancy” era. I think this is worth acknowledging. More recent models are less sycophantic and create far fewer machine-assisted errors or human-machine echo chambers.

Great work here!

My previous comment shouldn’t have been published without investigation first. Non-academic author, unusual form, difficult to parse — and the content is genuinely alarming if you read it carefully. A moderator who understood what they were looking at would have paused. The fact that it went through says something.

I am pretty sure moderators do not look over every LessWrong comment before it’s published.