Hello! I work at Lightcone and like LessWrong :-). I have made some confidentiality agreements I can’t leak much metadata about (like who they are with). I have made no non-disparagement agreements.

kave

I claim that similarly: non-profit funding norms are bad. In non-profit land, funding is cost-based. As long as you’re above some bar, you get funding to carry out your activities. No more, no matter how cost-effective you are. This reduces the power of the people doing good object-level work, and increases the power of the funder. OP’s points (1) and (2) both seem like things that happen with funders!

To be clear, this dynamic lacks the epistemic distortion of inappropriate credit assignment. And funders ultimately get to use their money how they want. But I think non-profit workers underrate the impact of their diminished empowerment. And that impacts their ability to do good by their lights!

I’ve read the linked transcript, but I don’t notice what you notice. You complain to it about this paragraph:

So the simpler version of the delay story is probably just: the US needed time to position defenses, Iran used that time to kill protestors, and that’s it. No need for a clever rally-around-the-flag mechanism to explain the crackdown — raw state violence was apparently sufficient on its own.

To which you reply:

that last paragraph seems glitchy like I just triggered a taboo

And then Claude agrees with you, though it’s not very concrete at first. When I was reading this section, I didn’t know what your complaint was, and couldn’t figure it out from Claude’s replies either. It seems like eventually Claude gets a bit more concrete in a way you can agree with, after you give more detailed pushback.

This is cool! I’m sad he spends so much of his time criticising the good part (AI doing tonnes of productive labour). I say this not because I want to demand every ally agree with me on every point, but because I want to early disavow beliefs that political expediency might want me to endorse.

I do prefer saying “effective ban”, because I don’t think a chatbot provider could be sued for any advice it gives that doesn’t result in any actual harm. It can only be sued for the harm. Now, because medical (and legal and …) advice is a game of risk management, this means that it’s impractical to offer advice under those constraints.

Folks with 5k+ karma often have pretty interesting ideas, and I want to hear more of them. I am pretty into them trying to lower the activation energy required for them to post. Also, they’re unusually likely to develop ways of making non-slop AI writing.

There’s also a matter of “standing”; I think that users who have contributed that much to the site should take some risky bets that cost LessWrong something and might pay off. To expand my model here: one of the moderators’ jobs, IMO, is to spare LW the cost of having to read bad stuff and downvote it to invisibility. If LW had to do all the filtering that moderators do, it would make LW much noisier and more unpleasant to use. But users who’ve contributed a bunch should be able to ask LW to make that judgement directly.

That said, I do expect I’d strong downvote. LLM text often contains propositions no human mind believes, and I’m happy to triage to avoid reading a bunch of sentences no one believes. But I could be wrong and if there’s a strong enough quality signal, I’d be happy to see that.

For example, consider Christian homeschoolers in the year 3000. I’ve not read it; I bounced off of it. Based on Buck’s description of his writing process, I think it’s quite likely it would have been automatically rejected. (Pangram currently only gives it an LLM score of 0.1, though.) I think writers like Buck might like to try more experiments like that in the future, with even more LLM prose. My guess is that LW is better off for having that post on it than not.

I think in most cases where a >5k karma user posts something that’s 100% AI, it’s better to let it through (though I expect I would strong downvote it).

When this post first came out, I felt that it was quite dangerous. I explained to a friend: I expected this model would take hold in my sphere, and somehow disrupt, on this issue, the sensemaking I relied on, the one where each person thought for themselves and shared what they saw.

This is a sort of funny complaint to have. It sounds a little like “I’m worried that this is a useful idea, and then everyone will use it, and they won’t be sharing lots of disorganised observations any more”. I suppose the simple way to express the bite of the worry is that I worried this concept was more memetically fit than it was useful.

Almost two years later, I find I use this concept frequently. I don’t find it upsetting; I find it helpful, especially for talking to my friends. Wuckles.

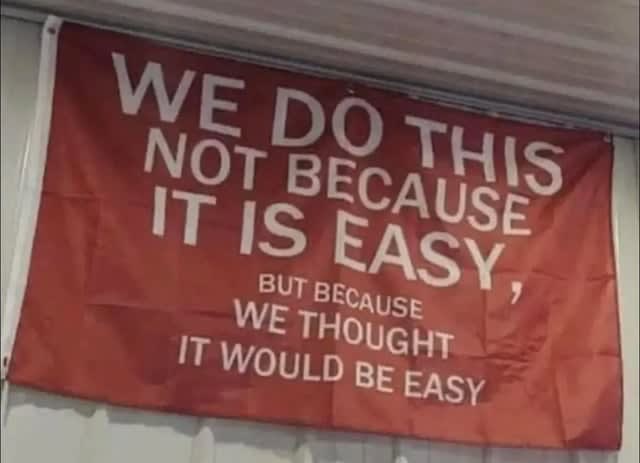

I see things in the world that look like believing in. For example, a friend of mine, who I respect a fair amount and has the energy and vision to pull off large projects, likes to share this photo:

Interestingly, I think that those who work with him generally know it won’t be easy. But it’s more achievable than his comrades think, because he has delusion on his side. He has a lot of non-epistemic believing in.

Another example: I think when interacting with people, it’s often appropriate to extend a certain amount of believing in to their self-models. Say my friend says he’s going to take up exercise. If I thought that were true, perhaps I’d get him a small exercise-related gift, like a water bottle. Or maybe I’d invite him on a run with me. If I thought it were false, a simple calculation might suggest not doing these things: it’s a small cost, and there’ll be no benefit. But I think it’s cool to invite him on the run or maybe buy the water bottle. I think this is a form of believing in, and I think it makes my actions look similar to those I’d take if I just simply believed him. But I don’t have to epistemically believe him to have my believing in lead to the action.

So, I do find this a helpful handle now. I do feel a little sad, like: yeah, there’s a subtle landscape that encompasses beliefs and plans and motivation, and now when I look at it I see it criss-crossed by the balks of this frame. And I’m not sure it’s the best I could find, had I the time. For example, I’m interested in thinking more about lines of advance. Nonetheless, it helps me now, and that’s worth a lot. +4

Here’s the tally of each kind of vote:

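| Vote type | Count |
| --- | --- |
| Weak Upvote | 3834911 |
| Strong Upvote | 369901 |
| Weak Downvote | 426706 |
| Strong Downvote | 43683 |

And here’s my estimate of the total karma moved for each type:

| Vote type | Karma moved |
| --- | --- |
| Weak Upvote | 5350471 |
| Strong Upvote | 1581885 |
| Weak Downvote | 641568 |
| Strong Downvote | 206491 |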
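One way to read the two sets of figures together is to divide the estimated karma moved by the number of votes, which gives a rough average strength per vote of each type. The sketch below does only that arithmetic on the numbers quoted above. The names `vote_counts`, `karma_moved` and `average_karma_per_vote` are made up for illustration, and nothing here reflects how the karma estimate itself was produced.

```python
# Vote counts and estimated karma moved, copied from the figures above.
vote_counts = {
    "Weak Upvote": 3834911,
    "Strong Upvote": 369901,
    "Weak Downvote": 426706,
    "Strong Downvote": 43683,
}
karma_moved = {
    "Weak Upvote": 5350471,
    "Strong Upvote": 1581885,
    "Weak Downvote": 641568,
    "Strong Downvote": 206491,
}


def average_karma_per_vote(counts, karma):
    """Return the mean karma moved by a single vote of each type."""
    return {kind: karma[kind] / counts[kind] for kind in counts}


averages = average_karma_per_vote(vote_counts, karma_moved)

# Net karma: karma added by upvotes minus karma removed by downvotes.
# (A derived figure, not one stated in the comment.)
net_karma = sum(
    amount if "Upvote" in kind else -amount
    for kind, amount in karma_moved.items()
)

for kind, avg in averages.items():
    print(f"{kind}: {avg:.2f} karma per vote")
print(f"Net karma moved: {net_karma}")
```

On these figures the weak votes average a bit under 1.5 karma each, and the strong votes somewhere between 4 and 5.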

Thanks, fixed!

I don’t think non-substantive aggression like this is appropriate for LessWrong. I appreciate that you pulled out quotes, but “typical LessWrong word salad” is not a sufficiently specific complaint to support the sneering.

Given that you imply you’re leaving, perhaps no moderation action is needed. But I expect I’ll take moderation action if you stick around and keep engaging in this way.

Huh! Where did you find the Stripe donation link? Did you just have it saved from last year?

Curated. I appreciate this post’s concreteness.

It can be hard to really understand what numbers in a benchmark mean. To do so, you have to be pretty familiar with the task distribution, which is often a little surprising. And, if you are bothering to get familiar with it, you probably already know how the LLM performs. So it’s hard to be sure you’re judging the difficulty accurately, rather than using your sense of the LLM’s intelligence to infer the task difficulty.

Fortunately, a Pokémon game involves a bunch of different tasks, and I’m pretty familiar with them from childhood gameboy sessions. So LLM performance on the game can provide some helpful intuitions about LLM performance in general. Of course, you don’t get all the niceties of statistical power and so on, but I still find it a helpful data source to include.

This post does a good job abstracting some of the subskills involved and provides lots of deliciously specific examples for the claims. It’s also quite entertaining!

Thank you!

Would it help if the prompt read more like a menu?

Reviews should provide information that helps evaluate a post. For example:

What does this post add to the conversation?

How did this post affect you, your thinking, and your actions?

Does it make accurate claims? Does it carve reality at the joints? How do you know?

Is there a subclaim of this post that you can test?

What followup work would you like to see building on this post?

The predicted winners for future years of the review are now visible on the Best of LessWrong page! Here are the top ten guesses for the currently ongoing 2024 review:

(I’ve already voted on several of these! I doctored the screenshot to hide my votes)

I think LessWrong’s annual review is better than karma at finding the best and most enduring posts. Part of the dream for the review prediction markets is bringing some of that high-quality signal from the future into the present. That signal is currently highlighted with gold karma on the post item, if the prediction market has a high enough probability.

Currently the markets are pretty thinly traded, but I think they already have decent signal. They could do a lot better, I think, with a little more smart trading. It would be a nice bonus if this UI attracted a bit more betting.

Hopefully coming soon: a tag on the markets which indicates which year review they’ll be in, to make it a bit easier for consistency traders to make their bag.

Human intelligence amplification is very important. Though I have become a bit less excited about it lately, I do still guess it’s the best way for humanity to make it to a glorious destiny. I found that having a bunch of different methods in one place organised my thoughts, and I could more seriously think about what approaches might work.

I appreciate that Tsvi included things as “hard” as brain emulation and as soft as rationality, tools for thought and social epistemology.

I liked this post. I thought it was interesting to read about how Tobes’ relation to AI changed, and the anecdotes were helpfully concrete. I could imagine him in those moments, and get a sense of how he was feeling.

I found this post helpful for relating to some of my friends and family as AI has been in the news more, and they connect it to my work and concerns.

A more concrete thing I took away: the author describing looking out of his window and meditating on the end reaching him through that window. I find this a helpful practice, and sometimes I like to look out of a window and think about various endgames and how they might land in my apartment or workplace or grocery store.

I’m a big fan of this series. I think that puzzles and exercises are undersupplied on LessWrong, especially ones that are fun, a bit collaborative and a bit competitive. I’ve recently been trying my hand at some of the backlog, and it’s been pretty cool. I can feel that I’m getting at least a bit better at compressing the dimensionality of the data as I investigate it.

In general, I’d guess that data science is a pretty important epistemological skill. I think LessWrongers aren’t as strong in it as they ideally would be. This is in part because of a justified suspicion that people just pour in data and confusion, and get out more official-looking confusion. I’d say that a central point of this series is: how do you avoid confusing yourself with data by actually thinking about things?

I have the impression that I reach for this rule fairly frequently. I only ontologise it as a rule to look out for because of this post. (I normally can’t remember the exact number, so have to go via the compound interest derivation).

I’m not sure what you’re getting at with all your “just”s. Like, it doesn’t seem like we can “just” get a data centre ban. Why would these other bans be easier? Probably you don’t mean that they would be, but I’m confused about what you do mean.

Similarly, I don’t understand which worldview “where you can’t use technological progress to make it harder to unilaterally deploy AI” you’re talking about. In particular, I don’t see such a worldview expressed in the comment you’re replying to. I’d guess you think it’s a consequence of the “drastically more costly/invasive” qualifier, but the connection is a little remote for me to follow.