Hello! I work at Lightcone and like LessWrong :-). I have made some confidentiality agreements I can’t leak much metadata about (like who they are with). I have made no non-disparagement agreements.

kave

I predict that LessWrong would moderate an imprisoned Ziz’s writing in accord with Vaniver’s comment, rather than yours.

Eliezer wrote about the psychological unity of humankind, though he seemingly disavows it now (human value differences also seem to be a theme in Planecrash).

To be clear, I’m not saying that Eliezer’s current views would make negative predictions about Putin’s CEV. I think a more central example is that Eliezer now predicts (I think) that some people’s CEV is nothingness, because they hate suffering so much more than they like flourishing.

I used to use it for coming up with words.

I use it often as a “reverse dictionary”, which helps with coining new words too. I often ask about related vocabulary in follow-up messages.

Examples from a recent post

historical word that refers to a poor person (not necessarily qua a poor person. it could for example be villein)

word for uncertain, especially collocating with “living”/“living situation”

is there a word for something like a pole with a big flat disk at the end? I think a piston is one term in one context. What about others?

are there any technical terms used in processes where moisture is removed from something?

what are some technical, cloud-related words? (or potentially sky related) as in actual clouds, not cloud computing

what are some specific pre-industrial mining professions?

Don’t overupdate on insider gossip

Anthropic employees seem to be taking the Mythos results pretty seriously! I know people who work at Anthropic who are talking about buying shacks in the woods, or are spending their weekends setting up 2FA and closing down old internet accounts. I think there’s similar hullabaloo on Twitter. These actions may well be high EV! But I think people tend to overupdate from all of this lab-employee seriousness.

People at a lab are unusually likely to think that that lab’s work is a big deal. There’s both a selection effect and an intervention effect: you’re more likely to choose to work there if you expect it to be impactful, and then you’re spending all day with people who also expect that.

I imagine most people at Anthropic haven’t seen good evidence about how Mythos actually performs. They’re mostly going off the internal vibe, which is particularly seeded by the people who worked on Mythos the most. Those people have the best information, but they’re also the ones most likely to think that Mythos is a big deal that matters even more than Anthropic’s work in general.

A friend pointed out that Anthropic does have a bunch of smart, disagreeable people working there. I think disagreeableness does defend you against groupthink, but it’s much more effective when you start out disagreeing about whether an effect is real than about how large it is. I think disagreeable people are often pretty good at saying “no, fuck you, I don’t think that’s true at all”. They might get dragged along with the crowd once they agree that something is some amount true.

This isn’t to say that we should completely discount insider gossip. And I’m definitely not saying anything in particular about Mythos’ impact. I’d have to look much more into the model card and the patches and stuff if I wanted to form an opinion about that! I’m just saying, I’m less swayed by the miasma of panic rolling out of Howard St than many of my friends seem to be.

You could try this coffee where they decaffeinate it and then add in paraxanthine.

I wanted to see how this compared to a world where caffeine had a 10 hour half-life, and no relevant metabolite.

Here’s what Claude made:

The relative potency seems quite important to the overall effect. Like, if paraxanthine is 0.5x as effective as caffeine, it doesn’t seem like a huge deal. More than that and it starts seeming quite significant! Would be great to get more of a sense.
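One rough way to get more of a sense is a toy model. This is not what Claude made, and none of its numbers are measured values: it assumes instant absorption, first-order elimination, a 5 hour caffeine half-life, 80% of caffeine becoming paraxanthine, a 4 hour paraxanthine half-life, and that paraxanthine's effect adds linearly to caffeine's, scaled by a `potency` parameter. All of these are placeholders to swap out.

```python
import math

# Placeholder assumptions, not measured values. Doses are in
# "caffeine-equivalent" units, assuming 1:1 conversion to paraxanthine.
CAFFEINE_HALF_LIFE_H = 5.0        # assumed typical caffeine half-life
PARAXANTHINE_HALF_LIFE_H = 4.0    # assumed paraxanthine half-life
FRACTION_TO_PARAXANTHINE = 0.8    # assumed share of caffeine metabolised to paraxanthine
SLOW_CAFFEINE_HALF_LIFE_H = 10.0  # the hypothetical "10 hour half-life" world


def rate(half_life_h: float) -> float:
    """First-order elimination rate constant (per hour) from a half-life."""
    return math.log(2) / half_life_h


def caffeine(t: float, dose: float = 1.0,
             half_life_h: float = CAFFEINE_HALF_LIFE_H) -> float:
    """Caffeine remaining t hours after an instantly absorbed dose."""
    return dose * math.exp(-rate(half_life_h) * t)


def paraxanthine(t: float, dose: float = 1.0) -> float:
    """Paraxanthine formed from caffeine and then eliminated (Bateman function)."""
    kc = rate(CAFFEINE_HALF_LIFE_H)
    kp = rate(PARAXANTHINE_HALF_LIFE_H)
    f = FRACTION_TO_PARAXANTHINE
    if math.isclose(kc, kp):
        # Limit of the formula below when both rates are equal.
        return f * dose * kc * t * math.exp(-kc * t)
    return f * dose * kc / (kp - kc) * (math.exp(-kc * t) - math.exp(-kp * t))


def effective_level(t: float, potency: float, dose: float = 1.0) -> float:
    """Caffeine plus paraxanthine weighted by its assumed potency relative to caffeine."""
    return caffeine(t, dose) + potency * paraxanthine(t, dose)


def slow_caffeine_world(t: float, dose: float = 1.0) -> float:
    """Hypothetical world: 10 hour caffeine half-life and no active metabolite."""
    return caffeine(t, dose, SLOW_CAFFEINE_HALF_LIFE_H)


if __name__ == "__main__":
    for potency in (0.0, 0.5, 1.0):
        print(f"potency {potency}:")
        for hour in (0, 4, 8, 12, 16):
            print(f"  t={hour:>2}h  real={effective_level(hour, potency):.2f}  "
                  f"10h-world={slow_caffeine_world(hour):.2f}")
```

Setting `potency` to 0 reduces the "real" curve to plain caffeine decay. Raising it adds the paraxanthine tail, so how close the real world gets to the 10 hour world depends heavily on that one parameter (and on the placeholder half-lives).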

There may be a better term than superbabies for the thing I want to point to, or the thing you want to point to, but superbabies is certainly a bad term for the topic of the OP.

I think “superbabies” has historically referred to (close-to-)smartest-ever-humans via reprogenetics, and I think this is a useful term, and should continue to refer to it. This post seems like it’s diluting the term, using it to refer to all reprogenetically enhanced children.

I’m not sure what you’re getting at with all your “just”s. Like, it doesn’t seem like we can “just” get a data centre ban. Why would these other bans be easier? Probably you don’t mean that they would be, but I’m confused what you do mean.

Similarly, I don’t understand which worldview “where you can’t use technological progress to make it harder to unilaterally deploy AI” you’re talking about. In particular, I don’t see such a worldview expressed in the comment you’re replying to. I’d guess you think it’s a consequence of the “drastically more costly/invasive” qualifier, but the connection is a little remote for me to follow.

I claim that, similarly, non-profit funding norms are bad. In non-profit land, funding is cost-based. As long as you’re above some bar, you get funding to carry out your activities. No more, no matter how cost-effective you are. This reduces the power of the people doing good object-level work, and increases the power of the funder. OP’s points (1) and (2) both seem like things that happen with funders!

To be clear, this dynamic lacks the epistemic distortion of inappropriate credit assignment. And funders ultimately get to use their money how they want. But I think non-profit workers underrate the impact of their diminished empowerment. And that impacts their ability to do good by their lights!

I’ve read the linked transcript, but I don’t notice what you notice. You complain to it about this paragraph:

So the simpler version of the delay story is probably just: the US needed time to position defenses, Iran used that time to kill protestors, and that’s it. No need for a clever rally-around-the-flag mechanism to explain the crackdown — raw state violence was apparently sufficient on its own.

To which you reply:

that last paragraph seems glitchy like I just triggered a taboo

And then Claude agrees with you, though it’s not very concrete at first. When I was reading this section, I didn’t know what your complaint was, and couldn’t figure it out from Claude’s replies either. It seems like eventually Claude gets a bit more concrete in a way you can agree with, after you give more detailed pushback.

This is cool! I’m sad he spends so much of his time criticising the good part (AI doing tonnes of productive labour). I say this not because I want to demand every ally agree with me on every point, but because I want to disavow, early, beliefs that political expediency might want me to endorse.

I do prefer saying “effective ban”, because I don’t think a chatbot provider could be sued for advice it gives that doesn’t result in any actual harm. It can only be sued for the harm. Now, because medical (and legal and …) advice is a game of risk management, this means that it’s impractical to offer advice under those constraints.

Folks with 5k+ karma often have pretty interesting ideas, and I want to hear more of them. I am pretty into them trying to lower the activation energy required for them to post. Also, they’re unusually likely to develop ways of making non-slop AI writing.

There’s also a matter of “standing”; I think that users who have contributed that much to the site should take some risky bets that cost LessWrong something and might pay off. To expand my model here: one of the moderators’ jobs, IMO, is to spare LW the cost of having to read bad stuff and downvote it to invisibility. If LW had to do all the filtering that moderators do, it would make LW much noisier and more unpleasant to use. But users who’ve contributed a bunch should be able to ask LW to make that judgement directly.

That said, I do expect I’d strong downvote. LLM text often contains propositions no human mind believes, and I’m happy to triage to avoid reading a bunch of sentences no one believes. But I could be wrong and if there’s a strong enough quality signal, I’d be happy to see that.

For example, consider “Christian homeschoolers in the year 3000”. I’ve not read it; I bounced off of it. Based on Buck’s description of his writing process, I think it’s quite likely it would have been automatically rejected. (Pangram currently only gives it an LLM score of 0.1, though.) I think writers like Buck might like to try more experiments like that in the future, with even more LLM prose. My guess is that LW is better off for having that post on it than not.

I think in most cases that a >5k karma user posts something that’s 100% AI, it’s better to let it through (though I expect I would strong downvote it).

When this post first came out, I felt that it was quite dangerous. I explained to a friend: I expected this model would take hold in my sphere, and somehow disrupt, on this issue, the sensemaking I relied on, the one where each person thought for themselves and shared what they saw.

This is a sort of funny complaint to have. It sounds a little like “I’m worried that this is a useful idea, and then everyone will use it, and they won’t be sharing lots of disorganised observations any more”. I suppose the simple way to express the bite of the worry is that I worried this concept was more memetically fit than it was useful.

Almost two years later, I find I use this concept frequently. I don’t find it upsetting; I find it helpful, especially for talking to my friends. Wuckles.

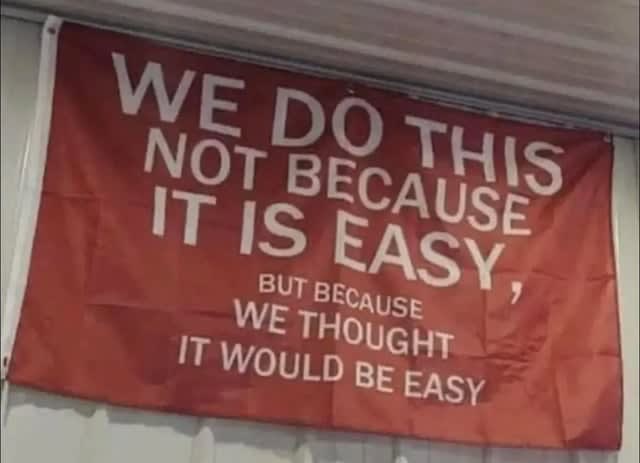

I see things in the world that look like believing in. For example, a friend of mine, who I respect a fair amount and has the energy and vision to pull off large projects, likes to share this photo:

Interestingly, I think that those who work with him generally know it won’t be easy. But it’s more achievable than his comrades think, because he has delusion on his side. He has a lot of non-epistemic believing in.

Another example: I think when interacting with people, it’s often appropriate to extend a certain amount of believing in to their self-models. Say my friend says he’s going to take up exercise. If I thought that were true, perhaps I’d get him a small exercise-related gift, like a water bottle. Or maybe I’d invite him on a run with me. If I thought it were false, a simple calculation might suggest not doing these things: it’s a small cost, and there’ll be no benefit. But I think it’s cool to invite him on the run or maybe buy the water bottle. I think this is a form of believing in, and I think it makes my actions look similar to those I’d take if I simply believed him. But I don’t have to epistemically believe him to have my believing in lead to the action.

So, I do find this a helpful handle now. I do feel a little sad, like: yeah, there’s a subtle landscape that encompasses beliefs and plans and motivation, and now when I look at it I see it criss-crossed by the balks of this frame. And I’m not sure it’s the best I could find, had I the time. For example, I’m interested in thinking more about lines of advance. Nonetheless, it helps me now, and that’s worth a lot. +4

Here’s the tally of each kind of vote:

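| Vote type | Count |
|---|---|
| Weak Upvote | 3834911 |
| Strong Upvote | 369901 |
| Weak Downvote | 426706 |
| Strong Downvote | 43683 |

And here’s my estimate of the total karma moved for each type:

| Vote type | Karma moved |
|---|---|
| Weak Upvote | 5350471 |
| Strong Upvote | 1581885 |
| Weak Downvote | 641568 |
| Strong Downvote | 206491 |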
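Dividing each karma estimate by its vote count gives the implied average karma per vote of each type. Here's a quick Python sketch of that arithmetic, using the numbers from the two tallies above. The `net_karma` figure is an extra that assumes downvote karma simply subtracts from upvote karma.

```python
# Vote counts and estimated karma moved, copied from the tallies above.
votes = {
    "Weak Upvote": 3834911,
    "Strong Upvote": 369901,
    "Weak Downvote": 426706,
    "Strong Downvote": 43683,
}
karma = {
    "Weak Upvote": 5350471,
    "Strong Upvote": 1581885,
    "Weak Downvote": 641568,
    "Strong Downvote": 206491,
}

# Implied average karma moved per vote, for each vote type.
per_vote = {kind: karma[kind] / votes[kind] for kind in votes}

# Net karma, assuming downvote karma counts against upvote karma.
net_karma = (karma["Weak Upvote"] + karma["Strong Upvote"]
             - karma["Weak Downvote"] - karma["Strong Downvote"])

for kind, avg in per_vote.items():
    print(f"{kind}: {avg:.2f} karma per vote")
print(f"net karma: {net_karma}")
```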

I mean his comment on this thread. I originally read Ben as saying that if all the associated parties had been cleared or imprisoned, LessWrong would allow posts from the imprisoned parties in general. I think that, in fact, writing on psychology etc would likely be moderated.

On rereading Ben, I think there’s less of a difference than I imagined. Ben points to new people taking action apparently downstream of this thinking & writing. That would suggest continuing to moderate imprisoned folks. So it seems like Ben’s comment agrees about the writings of imprisoned parties.