Was a philosophy PhD student, left to work at AI Impacts, then Center on Long-Term Risk, then OpenAI. Quit OpenAI due to losing confidence that it would behave responsibly around the time of AGI. Now executive director of the AI Futures Project. I subscribe to Crocker’s Rules and am especially interested to hear unsolicited constructive criticism. http://sl4.org/crocker.html

Some of my favorite memes:

(by Rob Wiblin)

(xkcd)

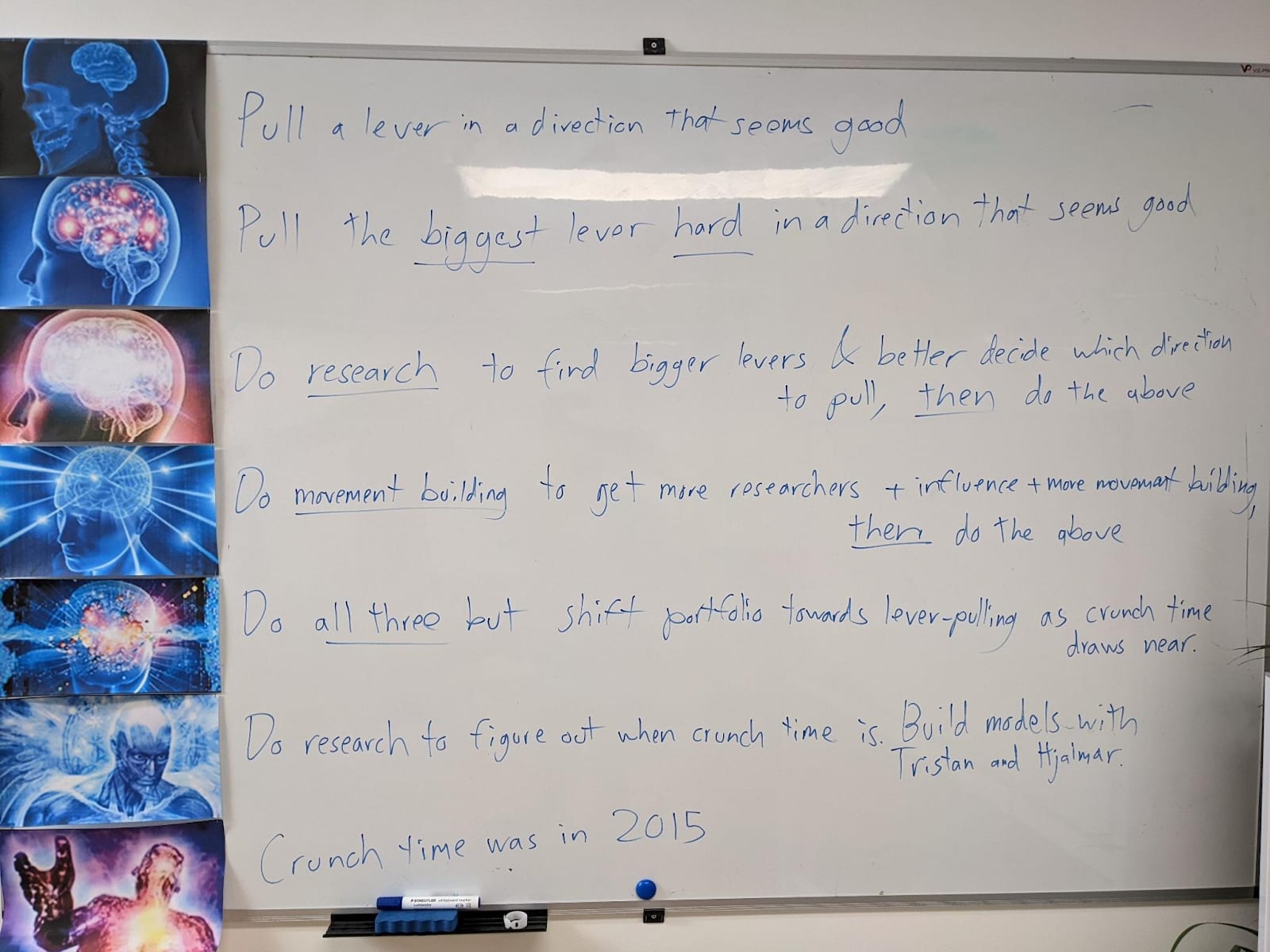

My EA Journey, depicted on the whiteboard at CLR:

(h/t Scott Alexander)

It doesn’t feel that way to me fwiw. I feel like lots of people I know, including myself, have made arguments that things might be fine. For example, the salty, cynical John Wentworth wrote “Alignment by Default.” Also, see the AI 2027 Slowdown ending.

Now, if xriskpilled means you think there’s a >5% chance of literal extinction (or similarly bad outcomes) due to misaligned AIs, then yeah, I think I do kinda judge people who aren’t xriskpilled in that sense, because I think believing the chance is <5% is extremely unjustified once you know a decent amount about the situation and the evidence.