Was a philosophy PhD student, left to work at AI Impacts, then Center on Long-Term Risk, then OpenAI. Quit OpenAI due to losing confidence that it would behave responsibly around the time of AGI. Now executive director of the AI Futures Project. I subscribe to Crocker’s Rules and am especially interested to hear unsolicited constructive criticism. http://sl4.org/crocker.html

Some of my favorite memes:

(by Rob Wiblin)

(xkcd)

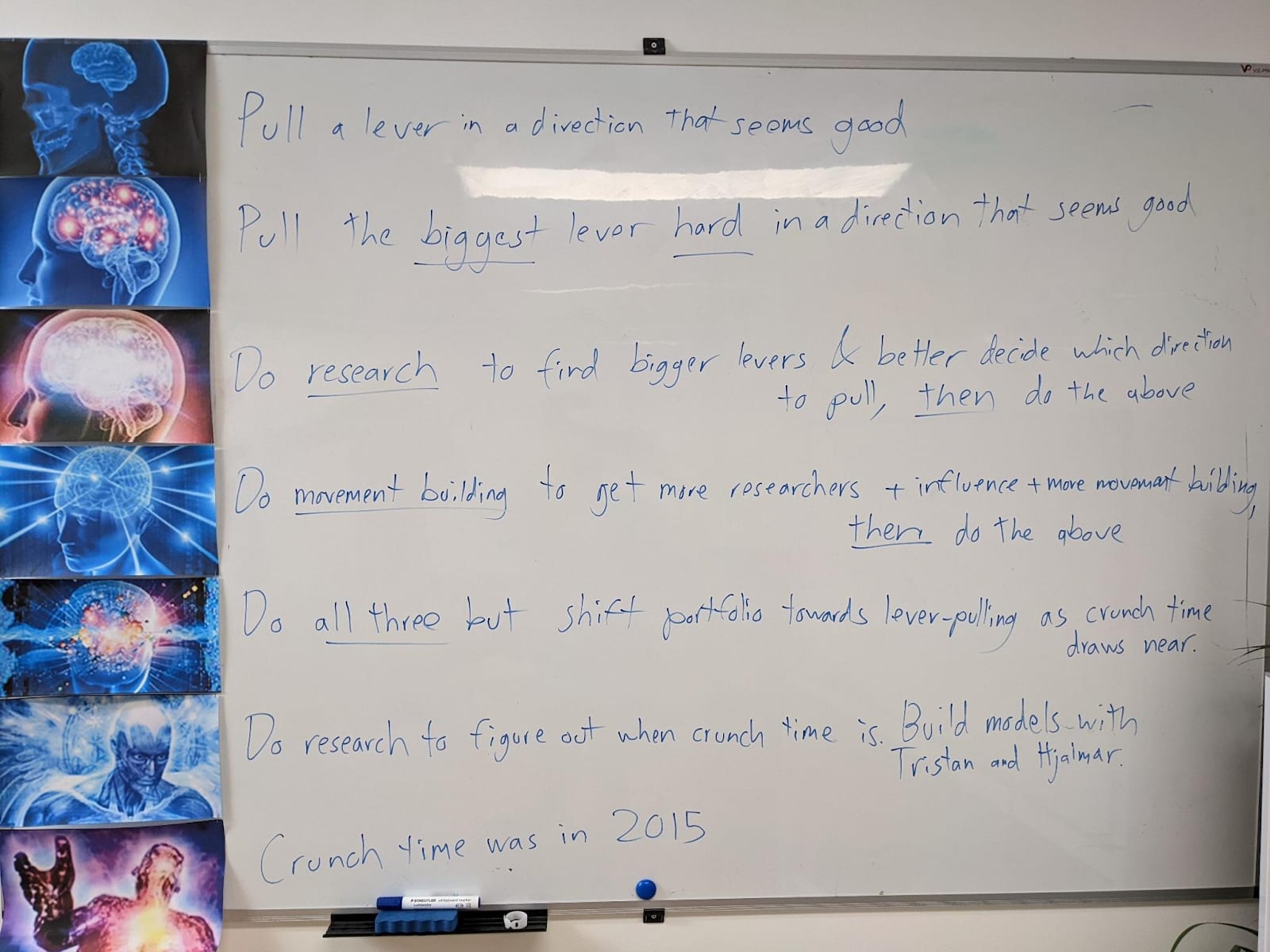

My EA Journey, depicted on the whiteboard at CLR:

(h/t Scott Alexander)

Drive-by hot take, my apologies for not having yet read the actual post:

Desired behavior in training is itself just a proxy for what we really care about, which is desired behavior in high-stakes deployment cases.

So yeah, I don’t think there’s a strong principled reason to train on desired behavior but not on CoT or whatever. However, it’s very important that there be SOME held-out test set, so to speak, that lets you tell what the model is really thinking. You don’t train on it, and you don’t train on anything remotely similar to it either, so that you can make a decent case that the model hasn’t learned to fool your test, so that you can make a decent case that it’s a good case. And I think CoT is the best candidate to play that role, though I’m open to other suggestions. (And ideally we’d have multiple held-out test sets instead of just one, tbf.)