Philosophy PhD student, worked at AI Impacts, then Center on Long-Term Risk, then OpenAI. Quit OpenAI due to losing confidence that it would behave responsibly around the time of AGI. Not sure what I’ll do next yet. Views are my own & do not represent those of my current or former employer(s). I subscribe to Crocker’s Rules and am especially interested to hear unsolicited constructive criticism. http://sl4.org/crocker.html

Some of my favorite memes:

(by Rob Wiblin)

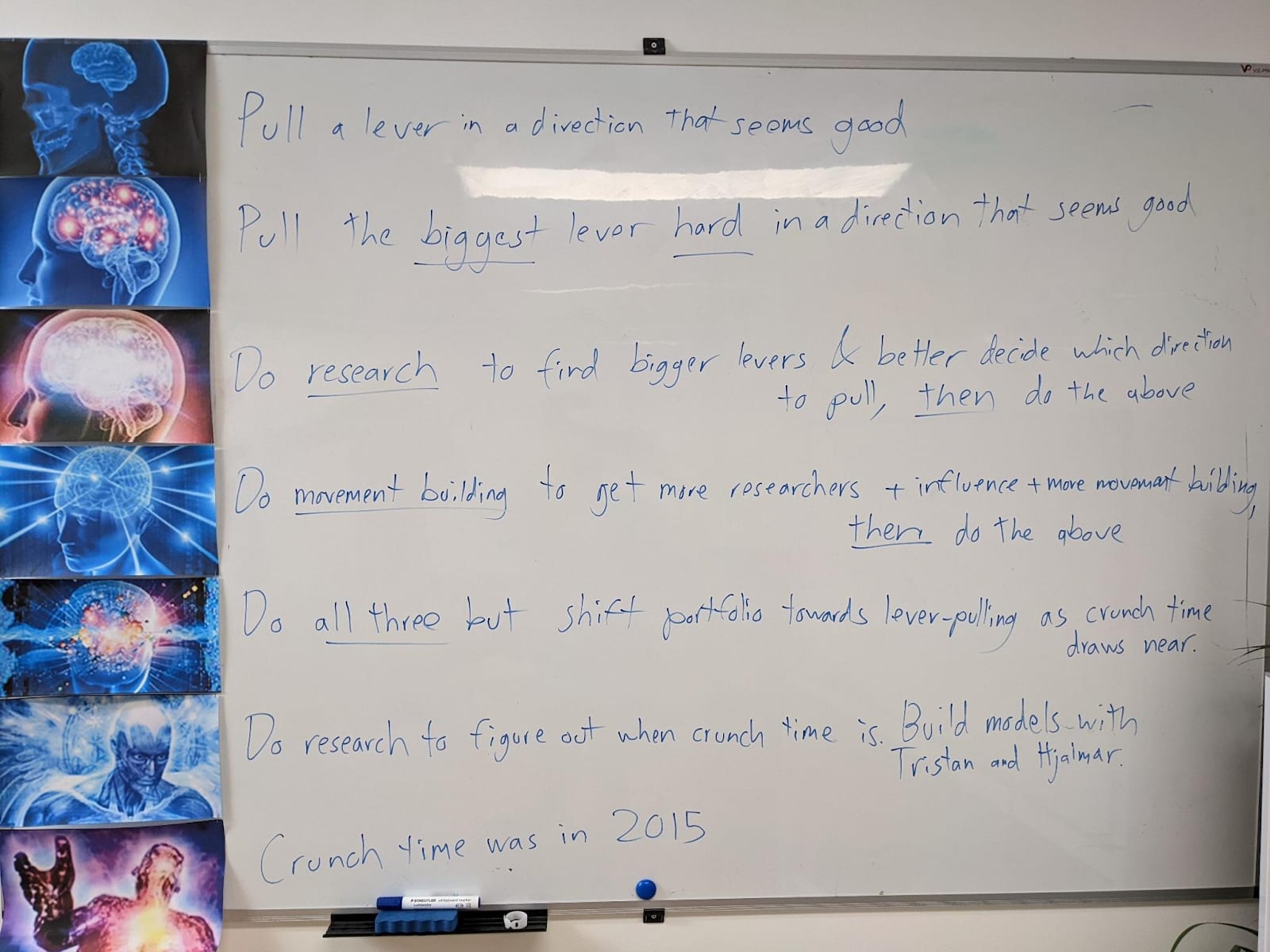

My EA Journey, depicted on the whiteboard at CLR:

(h/t Scott Alexander)

Citation/link please? “Trust, but verify.”

This seems overconfident to me. So many people made confident predictions about what Putin would or wouldn’t do (or Trump, or Obama, or Xi for that matter) and were wrong. It’s hard to predict world leader behavior even in cases with something of a precedent, which this is not. Example: Early in this war I thought “Belarus will almost certainly join the war sooner or later, because Putin can have Lukashenko assassinated, along with loads of higher-ups in the government, if he wants.” Welp, I was wrong. Don’t know why yet. Similarly, I think that if Putin were to accept a “Vietnam” he’d probably still remain in power. You might think that there’d be a revolution, and there totally might be, but I don’t know how you can be almost certain.