Interfaces as a Scarce Resource

Outline:

The first three sections (Don Norman’s Fridge, Interface Design, and When And Why Is It Hard?) cover what we mean by “interface”, what it looks like for interfaces to be scarce, and the kinds of areas where they tend to be scarce.

The next four sections apply these ideas to various topics:

Why AR is much more difficult than VR

AI alignment from an interface-design perspective

Good interfaces as a key bottleneck to creation of markets

Cross-department interfaces in organizations

Don Norman’s Fridge

Don Norman (known for popularizing the term “affordance” in The Design of Everyday Things) offers a story about the temperature controls on his old fridge:

I used to own an ordinary, two-compartment refrigerator—nothing very fancy about it. The problem was that I couldn’t set the temperature properly. There were only two things to do: adjust the temperature of the freezer compartment and adjust the temperature of the fresh food compartment. And there were two controls, one labeled “freezer”, the other “refrigerator”. What’s the problem?

Oh, perhaps I’d better warn you. The two controls are not independent. The freezer control also affects the fresh food temperature, and the fresh food control also affects the freezer.

The natural human model of the refrigerator is: there are two compartments, and we want to control their temperatures independently. Yet the fridge, apparently, does not work like that. Why not? Norman:

In fact, there is only one thermostat and only one cooling mechanism. One control adjusts the thermostat setting, the other the relative proportion of cold air sent to each of the two compartments of the refrigerator.

It’s not hard to imagine why this would be a good design for a cheap fridge: it requires only one cooling mechanism and only one thermostat. Resources are saved by not duplicating components—at the cost of confused customers.

The root problem in this scenario is a mismatch between the structure of the machine (one thermostat, adjustable allocation of cooling power) and the structure of what-humans-want (independent temperature control of two compartments). In order to align the behavior of the fridge with the behavior humans want, somebody, at some point, needs to do the work of translating between the two structures. In Norman’s fridge example, the translation is botched, and confusion results.

We’ll call whatever method/tool is used for translating between structures an interface. Creating good methods/tools for translating between structures, then, is interface design.
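As a minimal sketch of what that translation could look like for the fridge, here is a toy model in Python. Everything in it is an assumption made for illustration: the linear cooling model, the ambient temperature, the coefficients `k_freezer` and `k_fresh`, and the names `FridgeControls`, `compartment_temps`, and `controls_for`. Norman does not describe the physics in this much detail. The point is only that the user thinks in two independent targets, the machine has two coupled knobs, and some function has to map one onto the other.

```python
from dataclasses import dataclass

AMBIENT_C = 20.0  # assumed room temperature in degrees Celsius


@dataclass
class FridgeControls:
    cooling_power: float  # what the "thermostat" knob sets (arbitrary units, assumed)
    freezer_share: float  # fraction of cold air routed to the freezer, between 0 and 1


def compartment_temps(controls, k_freezer=1.0, k_fresh=1.0):
    """Toy machine model: each compartment cools in proportion to the cold air it receives."""
    freezer = AMBIENT_C - k_freezer * controls.freezer_share * controls.cooling_power
    fresh = AMBIENT_C - k_fresh * (1.0 - controls.freezer_share) * controls.cooling_power
    return freezer, fresh


def controls_for(freezer_target, fresh_target, k_freezer=1.0, k_fresh=1.0):
    """The interface: translate two independent targets into the two coupled knobs."""
    freezer_cooling = (AMBIENT_C - freezer_target) / k_freezer
    fresh_cooling = (AMBIENT_C - fresh_target) / k_fresh
    total = freezer_cooling + fresh_cooling
    if freezer_cooling < 0 or fresh_cooling < 0 or total <= 0:
        raise ValueError("targets must be at or below ambient, and not both equal to it")
    return FridgeControls(cooling_power=total, freezer_share=freezer_cooling / total)


# What the user wants: freezer at -18, fresh food at 4.
settings = controls_for(-18.0, 4.0)
achieved = compartment_temps(settings)

# Asking for a slightly colder fresh-food compartment moves BOTH knobs.
colder_fresh = controls_for(-18.0, 2.0)
```

In this toy model, changing only the fresh-food target changes both `cooling_power` and `freezer_share`, which is exactly the coupling that confuses someone turning the physical knobs by hand.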

Interface Design

In programming, the analogous problem is API design: taking whatever data structures are used by a software tool internally, and figuring out how to present them to external programmers in a useful, intelligible way. If there’s a mismatch between the internal structure of the system and the structure of what-users-want, then it’s the API designer’s job to translate. A “good” API is one which handles the translation well.

User interface design is a more general version of the same problem: take whatever structures are used by a tool internally, and figure out how to present them to external users in a useful, intelligible way. Conceptually, the only difference from API design is that we no longer assume our users are programmers interacting with the tool via code. We design the interface to fit however people use it—that could mean handles on doors, or buttons and icons in a mobile app, or the temperature knobs on a fridge.

Interface design is a necessary input to making all sorts of things economically useful. How scarce is that input? How much are people willing to spend for good interface design?

My impression is: a lot. There’s an entire category of tech companies whose business model is:

Find a software tool or database which is very useful but has a bad interface

Build a better interface to the same tool/database

…

Profit

This is an especially common pattern among small but profitable software companies; it’s the sort of thing where a small team can build a tool and then lock in a small number of very loyal high-paying users. It’s a good value prop—you go to people or businesses who need to use X, but find it a huge pain, and say “here, this will make it much easier to use X”. Some examples:

Companies which interface to government systems to provide tax services, travel visas, patenting, or business licensing

Companies which set up websites, Salesforce, corporate structure, HR services, or shipping logistics for small business owners with little relevant expertise

Companies which provide graphical interfaces for data, e.g. website traffic, sales funnels, government contracts, or market fundamentals

Even bigger examples can be found outside of tech, where humans themselves serve as an interface. Entire industries consist of people serving as interfaces.

What does this look like? It’s the entire industry of tax accountants, or contract law, or lobbying. It’s any industry where you could just do it yourself in principle, but the system is complicated and confusing, so it’s useful to have an expert around to translate the things-you-want into the structure of the system.

In some sense, the entire field of software engineering is an example. A software engineer’s primary job is to translate the things-humans-want into a language understandable by computers. People use software engineers because talking to the engineer (difficult though that may be) is an easier interface than an empty file in Jupyter.

These are not cheap industries. Lawyers, accountants, lobbyists, programmers… these are experts in complicated systems, and they get paid accordingly. The world spends large amounts of resources using people as interfaces—indicating that these kinds of interfaces are a very scarce resource.

When And Why Is It Hard?

Don Norman’s work is full of interesting examples and general techniques for accurately communicating the internal structure of a tool to users—the classic example is “handle means pull, flat plate means push” on a door. At this point, I think (at least some) people have a pretty good understanding of these techniques, and they’re spreading over time. But accurate communication of a system’s internal structure is only useful if the system’s internal structure is itself pretty simple—like a door or a fridge. If I want to, say, write a contract, then I need to interface to the system of contract law; accurately communicating that structure would take a whole book, even just to summarize key pieces.

There are lots of systems which are simple enough that accurate communication is the bulk of the problem of interface design—this includes most everyday objects (like fridges), as well as most websites or mobile apps.

But the places where we see expensive industries providing interfaces—like law or software—are usually the cases where the underlying system is more complex. These are cases where the structure of what-humans-want is very different from the system’s structure, and translating between the two requires study and practice. Accurate communication of the system’s internal structure is not enough to make the problem easy.

In other words: interfaces to complex systems are especially scarce. This economic constraint is very taut, across a number of different areas. We see entire industries—large industries—whose main purpose is to provide non-expert humans with an interface to a particular complex system.

Given that interfaces to complex systems are a scarce resource in general, what other predictions would we make? What else would we expect to be hard/expensive, as a result of interfaces to complex systems being hard/expensive?

AR vs VR

By the standards of software engineering, pretty much anything in the real world is complex. Interfacing to the real world means we don’t get to choose the ontology—we can make up a bunch of object types and data structures, but the real world will not consider itself obligated to follow them. The internal structure of computers or programming languages is rarely a perfect match to the structure of the real world.

Interfacing the real world to computers, then, is an area we’d expect to be difficult and expensive.

Augmented reality (AR) is one area where I expect this to be keenly felt, especially compared to VR. I expect AR applications to lag dramatically behind full virtual reality, in terms of both adoption and product quality. I expect AR will mostly be used in stable, controlled environments—e.g. factory floors or escape-room-style on-location games.

Why is interfacing software with the real world hard? Some standard answers:

The real world is complicated. This is a cop-out answer which doesn’t actually explain anything.

The real world has lots of edge cases. This is also a cop-out, but more subtly; the real world will only seem to be full of edge cases if our program’s ontologies don’t line up well with reality. The real question: why is it hard to make our ontologies line up well with reality?

Some more interesting answers:

The real world isn’t implemented in Python. To the extent that the real world has a language, that language is math. As software needs to interface more with the real world, it’s going to require more math—as we see in data science, for instance—and not all of that math will be easy to black-box and hide behind an API.

The real world is only partially observable—even with ubiquitous sensors, we can’t query anything anytime the way we can with e.g. a database. Explicitly modelling things we can’t directly observe will become more important over time, which means more reliance on probability and ML tools (though I don’t think black-box methods or “programming by example” will expand beyond niche applications).

We need enough compute to actually run all that math. In practice, I think this constraint is less taut than it first seems—we should generally be able to perform at least as well as a human without brute-forcing exponentially hard problems. That said, we do still need efficient algorithms.

The real-world things we are interested in are abstract, high-level objects. At this point, we don’t even have the mathematical tools to work with these kinds of fuzzy abstractions.

We don’t directly control the real world. Virtual worlds can be built to satisfy various assumptions by design; the real world can’t.

Combining the previous points: we don’t have good ways to represent our models of the real world, or to describe what we want in the real world.

Software engineers are mostly pretty bad at describing what they want and building ontologies which line up with the real world. These are hard skills to develop, and few programmers explicitly realize that they need to develop them.
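To make the partial-observability point from the list above a little more concrete, here is a small sketch of explicitly modelling something we can’t observe directly: a single Bayesian update over a hidden state, given one noisy sensor reading. The scenario (a room that is either occupied or empty), the sensor probabilities, and the function name `bayes_update` are all hypothetical, chosen only for illustration.

```python
def bayes_update(prior, likelihood):
    """Return the posterior over hidden states.

    prior:      dict mapping state -> P(state)
    likelihood: dict mapping state -> P(observation | state)
    """
    unnormalized = {state: prior[state] * likelihood[state] for state in prior}
    total = sum(unnormalized.values())
    return {state: weight / total for state, weight in unnormalized.items()}


# Hidden state: is the room occupied? We never get to query this directly.
prior = {"occupied": 0.5, "empty": 0.5}

# Hypothetical sensor model: P(motion sensor fires | state).
sensor_fires = {"occupied": 0.9, "empty": 0.2}

# The sensor fired once; update our belief about the hidden state.
posterior = bayes_update(prior, sensor_fires)
```

With a database we would simply look up the answer. Here the program has to carry around a belief about the state and revise it as evidence arrives, which is the kind of extra math the list above anticipates.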

Alignment

Continuing the discussion from the previous section, let’s take the same problems in a different direction. We said that translating what-humans-want-in-the-real-world into a language usable by computers is hard/expensive. That’s basically the AI alignment problem. Does the interfaces-as-scarce-resource view lend any interesting insight there?

First, this view immediately suggests some simple analogues for the AI alignment problem. The “Norman’s fridge alignment problem” is one—it’s surprisingly difficult to get a fridge to do what we want, when the internal structure of the fridge doesn’t match the structure of what we want. Now consider the internal structure of, say, a neural network—how well does that match the structure of what we want? It’s not hard to imagine that a neural network would run into a billion-times-more-difficult version of the fridge alignment problem.

Another analogue is the “Ethereum alignment problem”: we can code up a smart contract to give monetary rewards for anything our code can recognize. Yet it’s still difficult to specify a contract for exactly the things we actually want. This is essentially the AI alignment problem, except we use a market in place of an ML-based predictor/optimizer. One interesting corollary of the analogy: there are already economic incentives to find ways of aligning a generic predictor/optimizer. That’s exactly the problem faced by smart contract writers, and by other kinds of contract writers/issuers in the economy. How strong are those incentives? What do the rewards for success look like—are smart contracts only a small part of the economy because the rewards are meager, or because the problems are hard? More discussion of the topic in the next section.

Moving away from analogues of alignment, what about alignment paths/strategies?

I think there’s a plausible (though not very probable) path to general artificial intelligence in which:

We figure out various core theoretical problems, e.g. abstraction, pointers to values, embedded decision theory, …

The key theoretical insights are incorporated into new programming languages and frameworks

Programmers can more easily translate what-they-want-in-the-real-world into code, and make/use models of the world which better line up with the structure of reality

… and this creates a smooth-ish path of steadily-more-powerful declarative programming tools which eventually leads to full AGI

To be clear, I don’t see a complete roadmap yet for this path; the list of theoretical problems is not complete, and a lot of progress would be needed in non-agenty mathematical modelling as well. But even if this path isn’t smooth or doesn’t run all the way to AGI, I definitely see a lot of economic pressure for this sort of thing. We are economically bottlenecked on our ability to describe what we want to computers, and anything which relaxes that bottleneck will be very valuable.

Markets and Contractability

The previous section mentioned the Ethereum alignment problem: we can code up a smart contract to give monetary rewards for anything our code can recognize, yet it’s still difficult to specify a contract for exactly the things we actually want. More generally, it’s hard to create contracts which specify what we want well enough that they can’t be gamed.
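A toy illustration of the gap between what the code can recognize and what we actually want, written in plain Python rather than a real smart-contract language. The function names (`contract_pays`, `honest_add`, `gamed_add`, `what_we_actually_want`) and the task itself are hypothetical. The sketch only shows the shape of the problem.

```python
def contract_pays(submission):
    """What the contract can recognize: the submission passes one fixed check."""
    return submission(2, 3) == 5


def honest_add(a, b):
    """Does what the contract writer had in mind."""
    return a + b


def gamed_add(a, b):
    """Satisfies the letter of the contract while ignoring its intent."""
    return 5


def what_we_actually_want(submission):
    """The intent (addition that works in general), spot-checked on a few more inputs."""
    cases = [(0, 0), (2, 3), (10, -4)]
    return all(submission(a, b) == a + b for a, b in cases)


honest_is_paid = contract_pays(honest_add)
gamer_is_paid = contract_pays(gamed_add)
```

Both submissions get paid, but only one delivers what was wanted. Adding more checks narrows the gap without closing it, since `what_we_actually_want` is itself only a finite approximation of the intent.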

(Definitional note: I’m using “contract” here in the broad sense, including pretty much any arrangement for economic transactions—e.g. by eating in a restaurant you implicitly agree to pay the bill later, or boxes in a store implicitly agree to contain what they say on the box. At least in the US, these kinds of contracts are legally binding, and we can sue if they’re broken.)

A full discussion of contract specification goes way beyond interfaces: it’s basically the whole field of contract theory and mechanism design, and encompasses things like adverse selection, signalling, moral hazard, incomplete contracts, and so forth. All of these are either barriers to writing a contract when we can’t specify exactly what we want, or techniques for working around those barriers. But why can’t we specify exactly what we want in the first place? And what happens when we can?

Here’s a good example where we can specify exactly what we want: buying gasoline. The product is very standardized, the measures (liters or gallons) are very standardized, so it’s very easy to say “I’m buying X liters of type Y gas at time and place Z”—existing standards will fill in the remaining ambiguity. That’s a case where the structure of the real world is not too far off from the structure of what-we-want—there’s a nice clean interface. Not coincidentally, this product has a very liquid market: many buyers/sellers competing over price of a standardized good. Standard efficient-market economics mostly works.

On the other end of the spectrum, here’s an example where it’s very hard to specify exactly what we want: employing people for intellectual work. It’s hard to outsource expertise—often, a non-expert doesn’t even know how to tell a job well done from sloppy work. This is a natural consequence of using an expert as an interface to a complicated system. As a result, it’s hard to standardize products, and there’s not a very liquid market. Rather than efficient markets, we have to fall back on the tools of contract theory and mechanism design—we need ways of verifying that the job is done well without being able to just specify exactly what we want.

In the worst case, the tools of contract theory are insufficient, and we may not be able to form a contract at all. The lemon problem is an example: a seller may have a good used car, and a buyer may want to buy a good used car, but there’s no (cheap) way for the seller to prove to the buyer that the car isn’t a lemon—so there’s no transaction. If we could fully specify everything the buyer wants from the car, and the seller could visibly verify that every box is checked, cheaply and efficiently, then this wouldn’t be an issue.
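The unraveling behind the lemon problem can be sketched with a few lines of arithmetic. The numbers below are made up for illustration: ten cars worth 1000 to 10000 to their sellers, and buyers who value any given car at 1.25 times what its seller does. The function name `unravel` is likewise hypothetical. Because buyers can’t verify quality, they offer a price based on the average car still on offer, and sellers whose cars are worth more than that price withdraw.

```python
def unravel(seller_values, buyer_premium):
    """Iterate the lemon-market dynamic until no more sellers withdraw.

    seller_values: what each car is worth to its seller (a proxy for quality)
    buyer_premium: buyers value a car at buyer_premium * seller's value
    Returns (cars still on offer, final price).
    """
    on_offer = sorted(seller_values)
    while on_offer:
        # Buyers cannot see quality, so they pay for the average car on offer.
        price = buyer_premium * sum(on_offer) / len(on_offer)
        # Sellers whose car is worth more to them than the price walk away.
        staying = [value for value in on_offer if value <= price]
        if staying == on_offer:
            return on_offer, price
        on_offer = staying
    return [], 0.0


cars = [1000 * k for k in range(1, 11)]  # 1000, 2000, ..., 10000
remaining, final_price = unravel(cars, buyer_premium=1.25)
```

Under these assumptions only the worst car ends up trading, even though every one of the ten cars is worth more to a buyer than to its seller. If quality could be cheaply specified and verified, each car could sell at a price between the two valuations.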

The upshot of all this is that good interfaces—tools for translating the structure of the real world into the structure of what-we-want, and vice versa—enable efficient markets. They enable buying and selling with minimal overhead, and they avoid the expense and complexity of contract-theoretic tools.

Create a good interface for specifying what-people-want within some domain, and you’re most of the way to creating a market.

Interfaces in Organizations

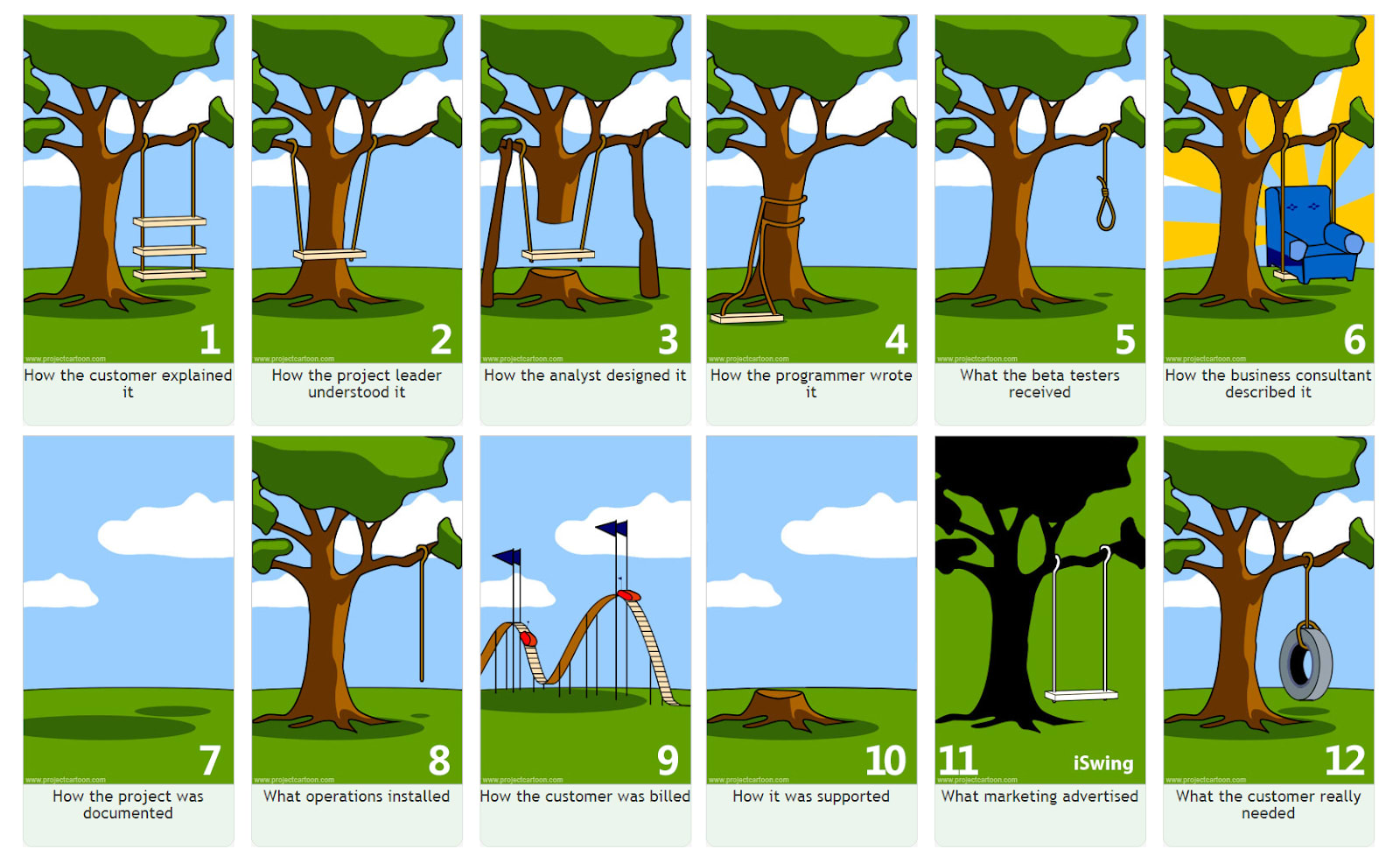

Accurately communicating what we want is hard. Programmers and product designers are especially familiar with this:

Incentives are a problem sometimes (obviously don’t trust ads or salespeople), but even mostly-earnest communicators—customers, project managers, designers, engineers, etc—have a hard time explaining things. In general, people don’t understand which aspects are most relevant to other specialists, or often even which aspects are most relevant to themselves. A designer will explain to a programmer the parts which seem most design-relevant; a programmer will pay attention to the parts which seem most programming-relevant.

It’s not just that the structure of what-humans-want doesn’t match the structure of the real world. It’s that the structure of how-human-specialists-see-the-world varies between different specialists. Whenever two specialists in different areas need to convey what-they-want from one to the other, somebody/something has to do the work of translating between structures—in other words, we need an interface.

A particularly poignant example from several years ago: I overheard a designer and an engineer discuss a minor change to a web page. It went something like this:

Designer: “Ok, I want it just like it was before, but put this part at the top.”

Engineer: “Like this?”

Designer: “No, I don’t want everything else moved down. Just keep everything else where it was, and put this at the top.”

Engineer: “But putting that at the top pushes everything else down.”

Designer: “It doesn’t need to. Look, just...”

… this went on for about 30 minutes, with steadily increasing frustration on both sides, and steadily increasing thumping noises from my head hitting the desk.

It turned out that the designer’s tools built everything from the bottom of the page up, while the engineer’s tools built everything from top down. So from the designer’s perspective, “put this at the top” did not require moving anything else. But from the engineer’s perspective, “put this at the top” meant everything else had to get pushed down.
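Here is one guess at what that mismatch might look like in code. The two functions below are hypothetical stand-ins for the two tools, not the actual software involved, and the block heights are invented. Both describe the same stack of page blocks, but one measures positions from the top of the page and the other from the bottom.

```python
def offsets_from_top(heights_top_to_bottom):
    """Engineer's view: each block's position is its distance from the top of the page."""
    offsets, y = [], 0
    for height in heights_top_to_bottom:
        offsets.append(y)
        y += height
    return offsets


def offsets_from_bottom(heights_top_to_bottom):
    """Designer's view: each block's position is its distance from the bottom of the page."""
    offsets, y = [], 0
    for height in reversed(heights_top_to_bottom):
        offsets.append(y)
        y += height
    return list(reversed(offsets))


before = [100, 50]       # heights of the existing blocks, listed top to bottom
after = [30] + before    # "put this part at the top"

# Positions of the two ORIGINAL blocks, before and after the change.
top_before, top_after = offsets_from_top(before), offsets_from_top(after)[1:]
bottom_before, bottom_after = offsets_from_bottom(before), offsets_from_bottom(after)[1:]
```

In the top-down coordinates every existing block’s position changes, while in the bottom-up coordinates none of them do. Both people were describing the same page correctly, each in their own tool’s structure.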

Somebody/something has to do the translation work. It’s a two-sided interface problem.

Handling these sorts of problems is a core function for managers and for anyone deciding how to structure an organization. It may seem silly to need to loop in, say, a project manager for every conversation between a designer and an engineer—but if the project manager’s job is to translate, then it can be useful. Remember, the example above was frustrating, but at least both sides realized they weren’t communicating successfully—if the double illusion of transparency kicks in, problems can crop up without anybody even realizing.

This is why, in large organizations, people who can operate across departments are worth their weight in gold. Interfaces are a scarce resource; people who operate across departments can act as human interfaces, translating model-structures between groups.

A great example of this is the 1986 Goldwater-Nichols Act. It was intended to fix a lack of communication/coordination between branches of the US military. The basic idea was simple: nobody could be promoted to general or flag officer rank without first completing a joint duty assignment, one in which they worked directly with members of other branches. People capable of serving as interfaces between branches were a scarce resource; Goldwater-Nichols introduced an incentive to create more such people. Before the bill’s introduction, top commanders of all branches argued against it; they saw it as congressional meddling. But after the first Iraq war, every one of them testified that it was the best thing to ever happen to the US military.

Summary

The structure of things-humans-want does not always match the structure of the real world, or the structure of how-other-humans-see-the-world. When structures don’t match, someone or something needs to serve as an interface, translating between the two.

In simple cases, this is just user interface design—accurately communicating how-the-thing-works to users. But when the system is more complicated—like a computer or a body of law—we usually need human specialists to serve as interfaces. Such people are expensive; interfaces to complicated systems are a scarce resource.

What this post does for me is that it encourages me to view products and services not as physical facts of our world, as things that happen to exist, but as the outcomes of an active creative process that is still ongoing and open to our participation. It reminds us that everything we might want to do is hard, and that the work of making that task less hard is valuable. Otherwise, we are liable to make the mistake of taking functionality and expertise for granted.

What is not an interface? That’s the slipperiest aspect of this post. A programming language is an interface to machine code, a programmer to the language, a company to the programmer, a liaison to the company, a department to the liaison, a chain of command to the department, a stock to the chain of command, an index fund to the stock, an app to the index fund. Matter itself is an interface. An iron bar is an interface to iron. An aliquot is an interface to a chemical. A fruit is an interface, translating between the structure of a chloroplast and the structure of things-animals-can-eat. A janitor is an interface to brooms and buckets, the layout of the building, and other considerations bearing on the task of cleaning. We have lots of words in this concept-cluster: tools, products, goods and services, control systems, and now “interfaces.”

The phrase “as a scarce resource” suggests that there are resources that are not interfaces. After all, the implied value prop of this post is that it’s suggesting a high-value area for economic activity. But if all economic activity is interface design, then a more accurate title is “Scarce Resources as Interfaces,” or “Goods Are Hard To Make And Services Are Hard To Do.”

The value I get out of this post is that it shifts my thinking about a tool or service away from the mechanism, and toward the value prop. It’s also a useful reminder for an early-career professional that their value prop is making a complex system easier to use for somebody else, rather than ticking the boxes of their course of schooling or acquiring line items on their resume. There are lots of ways to lose one’s purpose, especially if it’s not crystal clear. This post does a good job of framing jobs not as a series of tasks you do, or an identity you have, but as an attempt to make a certain outcome easier to achieve for your client. It’s fundamentally empathic in a way I find appealing.