Sure but do you think the adherents of capitalism who are the correct relationship are better?

Nathan Young

Anthropic vs USG. What will happen by May 1st? Long careful forecast.

Does liberal capitalism have enough vital energy to sustain itself?

A thing I like about Christianity is that some Christians really believe what they say. Some Christians, across history, and today, would die for their beliefs.

I am less sure of liberal capitalists. It seems that big companies have not really been willing to spend very much to maintain the system that gives them so much wealth. Where have we seen companies attempt to push back, even despite their huge wealth? Against tariffs? Against Trump bullying law firms? Against decisions that happen on the basis of one’s personal interactions with Trump?

I wonder if this system has enough vital energy to sustain itself if, with trillions in capital, so few are willing to take a stand.

Let’s taboo the word “spammy”. Sounds like you think it was misguided of me to ask? Like it showed poor judgement? “an obviously wasteful fashion”.

I don’t think it was an obviously wasteful fashion. Epstein was someone who exchanged money for status. Had he funded MIRI, perhaps he would have invited Yud and Nate to some of his events. Perhaps they would have attended. Perhaps a high status person would have met and respected them and thought “hmm this Epstein guy seems okay”.

But notably, I think one should be able to ask such questions. I have been in communities where it is considered bad form to ask such questions and I didn’t like that. So now, if I have concerns, I tend to ask. If I understand correctly, @habryka wishes he’d been more public with his disagreements with SBF. Well maybe I wish I’d asked a few of my personal questions about that publicly. So I’m doing that here.

If people don’t like it they can downvote the comment and get on with their lives. In that sense the forum has voted. But if you want to discuss this personally, no, I don’t feel bad about asking questions that concern me. Your (and Yud’s) initial response did cause me to change my mind, but this tone of ‘you shouldn’t even have asked about it’ seems bad. I am yet to be convinced of that. It seems plausible to me that, while we should trust legal systems to do their job, that’s not the world we live in. Epstein was someone who traded money for reputation, and MIRI was perhaps closer to doing that deal than I’d have liked, hence my question.

Surely SBF was also involved in similar trades. Should people have taken money from him, if they had suspicions? What about after the trial? If they hadn’t followed the trial but considered raising money from him, would that have been an error?

Where do you think I was “spam”ming?

Yeah, that makes sense.

Though I don’t think I regret asking questions about a thing that was troubling me in a polite way on the community forum.

I’m about to go to sleep but I am a bit confused about Epstein stuff.

In 2019, @Rob Bensinger said that “Epstein had previously approached us in 2016 looking for organizations to donate to, and we decided against pursuing the option;”

Looking at the Justice Dept releases (which I assume are real) (eg https://www.justice.gov/epstein/files/DataSet%209/EFTA00814059.pdf)

That doesn’t feel like a super accurate description. It seems like there was a discussion with Epstein after it was clear he had been involved in pretty bad behaviour. (In 2008 he pleaded guilty in a Florida state court to procuring a minor for prostitution and soliciting prostitution, was required to register as a sex offender, and served about 13 months in custody.) Likewise the discussion involves a lot of information sent to Epstein and probably a Skype call. It wasn’t just him approaching and MIRI saying no.

I guess I wonder if it might have been more appropriate to say, “We discussed funding with Epstein but decided not to go forward with it. In hindsight even the discussion was an error”

From the emails it seems @Eliezer Yudkowsky makes clear that @So8res and he know who Epstein is:

Epstein: Or were you clearing my name with him

Yudkowsky: Not sure what you mean. Nate knows you’re Jeffrey E. I check not-yet-published info/speculation past him before saying it.[1]

So I guess, do we think the discussion was an error? I think I would not endorse discussing funding with a known sex offender, but it’s hard to get in frames of mind I’m not in and much still wasn’t known.

I guess:

Are the emails real?

What do Yud and Nate think of talking to Epstein now?

What does Rob Bensinger think of his 2019 communication?

What are some broader thoughts on this?

I don’t have a strong view. I find it’s worth moving slowly. Appreciate everyone’s time.

[1] I’m not sure what this third sentence means.

Looking at the Justice Department disclosures, I feel uncomfortable reading this.

In 2016 quite a lot of Epstein’s misbehaviour was known.

Link: https://www.justice.gov/epstein/files/DataSet%209/EFTA00814059.pdf

Please critique the discussion styles of the participants in this discussion

This is an interesting discussion to analyze. Here’s my critique of each participant’s style:

Nathan Young

Strengths: Opens with genuine intellectual uncertainty and acknowledges tensions in his own position (“Which leaves me sort of confused”). He’s willing to admit aesthetic discomfort with positions he’s trying to defend on game-theoretic grounds. This shows real intellectual honesty.

Weaknesses: His final response (“I think your response would admit basically no evidence”) is a meta-dismissal rather than engagement. Jimmy offered specific criteria for what would constitute a true parallel—Nathan could have either met those criteria with better examples or challenged the criteria directly. Instead, he essentially accused Jimmy of being unfalsifiable without demonstrating it.

interstice

Strengths: Concise and makes a specific, relevant distinction (assassination vs. other attacks).

Weaknesses: Too terse to be fully productive. The brevity leaves Nathan to guess at the underlying reasoning, which creates friction.

jimmy

Strengths: The most analytically careful. He draws specific distinctions (severity of harm, nature of mockery, what a true parallel would require), acknowledges being a Destiny fan while still critiquing him, and tries to articulate where Destiny’s symmetry-breaking logic fails on its own terms.

Weaknesses: His distinctions could read as motivated reasoning—each example Nathan raises gets explained away with a different caveat. The closing move (suggesting Claude as arbiter) is clever but slightly passive-aggressive; it’s a way of saying “you’re being defensive” while maintaining plausible deniability. He also doesn’t quite grapple with whether his own framework might be similarly self-serving.

Overall pattern: The discussion shows a common failure mode—it becomes a battle over whether specific examples are “close enough” parallels rather than directly addressing the underlying question about when reciprocal norm-violation is justified. Both Nathan and Jimmy are partially right that the other is being somewhat selective.

End of Claude.

I’m not really interested in continuing to discuss with you if you are going to dismiss the Paul Pelosi attack out of hand. I just don’t think we’ll get anywhere. Merry Christmas!

Solid article.

Defines terms in ways I agree with. Raised objections I hadn’t thought of. Thought provoking.

On the object level the criticisms of Bayesianism seem solid, but I am unsure if the replacement is good.

I think the title was a bit overdone but the content was solid. Worth a read/think for prediction market people. We are perhaps a bit overly optimistic on prediction markets (eg this is a play money one) so yeah.

I think Yglesias is right to have couched his praise for the market in question but that is easy to forget.

Having thought about this a bit, I think it’s reasonable to say that there is some “adverse” “selection” going on as far as those English words are used, but I think it’s not that similar to adverse selection in the economic sense. There, goods leave the market because sellers don’t want to sell them; here they leave because they do. Is that correct?

I think maybe tonally it feels similar to adverse selection—a sort of “types of markets that you should feel suspicious of”. If you go to a used car lot with no warranties, be suspicious. If you go to an empty restaurant at a busy time, be suspicious.

I’m unsure if it’s helpful for this “market types to be suspicious of” category to be labelled “adverse selection”, which has a technical meaning, rather than coming up with a new phrase.

Your quantitative trading firm is holding its annual juggling tournament. Cost to enter is $18, winner takes all. You know you’re far better at juggling than most of your coworkers, so you sign up. As it turns out, only a few of your coworkers signed up, including Fortune, who used to be in the lucrative professional juggling world before leaving to pursue her lifelong passion of providing moderate liquidity to US Equities markets. You come in second place.

Isn’t this basically a true story about Jane Street? I assume Ricki didn’t want to break confidences but I am not so obligated, so I’ll say, I’m pretty sure this happened! Though I can’t remember what the skill involved was.

I’ve thought about and come back to this article several times and indeed it has encouraged me to understand both the original definition of adverse selection and Ricki’s. To me, this is suggestive of a top quality piece.

I think your response would admit basically no evidence.

I think that joking about Paul Pelosi nearly dying, and accusing him of being gay, was to not take the attack seriously. I think Trump’s comments about Rob Reiner did not remotely take his death seriously.

I basically reject your argument on those terms.

Kirk’s jokes about the Paul Pelosi hammer attack? Trump not remembering when those state senators were shot?

Veganism:

I thought it was pretty strong, though it doesn’t deal with how hard and tiring veganism is. I feel I can do a lot more good if I’m not vegan, hence I offset.

I tried being vegan for 3 months and it was very tiring; it was often one of the main things I was doing that day.

https://www.farmkind.giving/

Do you expect me to struggle to find politically motivated assassinations that Republicans didn’t respond seriously to?

While I don’t love Destiny’s debate style, I think he makes a great point about reciprocation (here-ish). I need to believe that it’s unsustainable to have one side that engages in good debate norms and another side that isn’t willing to at all.

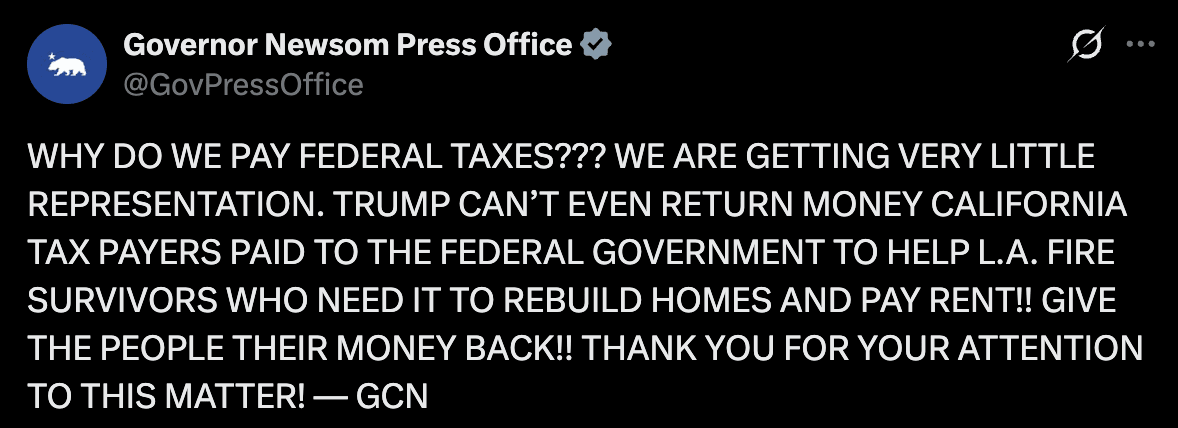

It at least makes sense not to be too upset over Kirk’s death if Republicans won’t do the same over Reiner’s. Likewise, while aesthetically I don’t like Gavin Newsom’s posts, I think they serve as a balancing force to Trump’s rhetoric.

Finally, I’ve long thought that it makes sense for Democrats to gerrymander places if the Republicans are going to gerrymander.

Which leaves me sort of confused as to my position here. I don’t like Destiny’s crowing over the deaths of Trump supporters. But I find it hard to condemn in the face of the game theory of the situation if Republicans often do similar things (which I think they do).

I have agreed to this bet for £25. Jehan bought me a Korean dinner. Please hold me socially accountable if it resolves yes and I don’t pay up.

It’s 5 years from today, at 70:1.