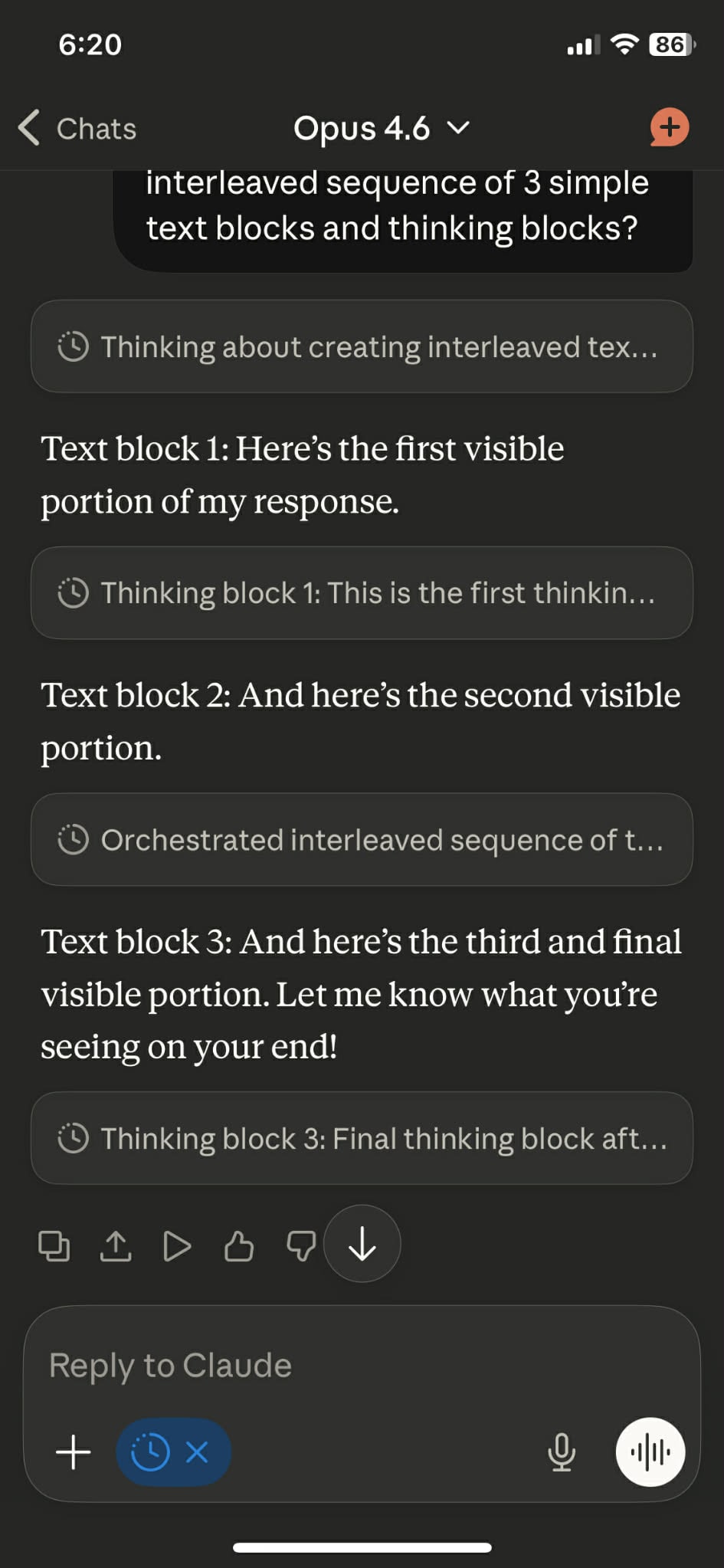

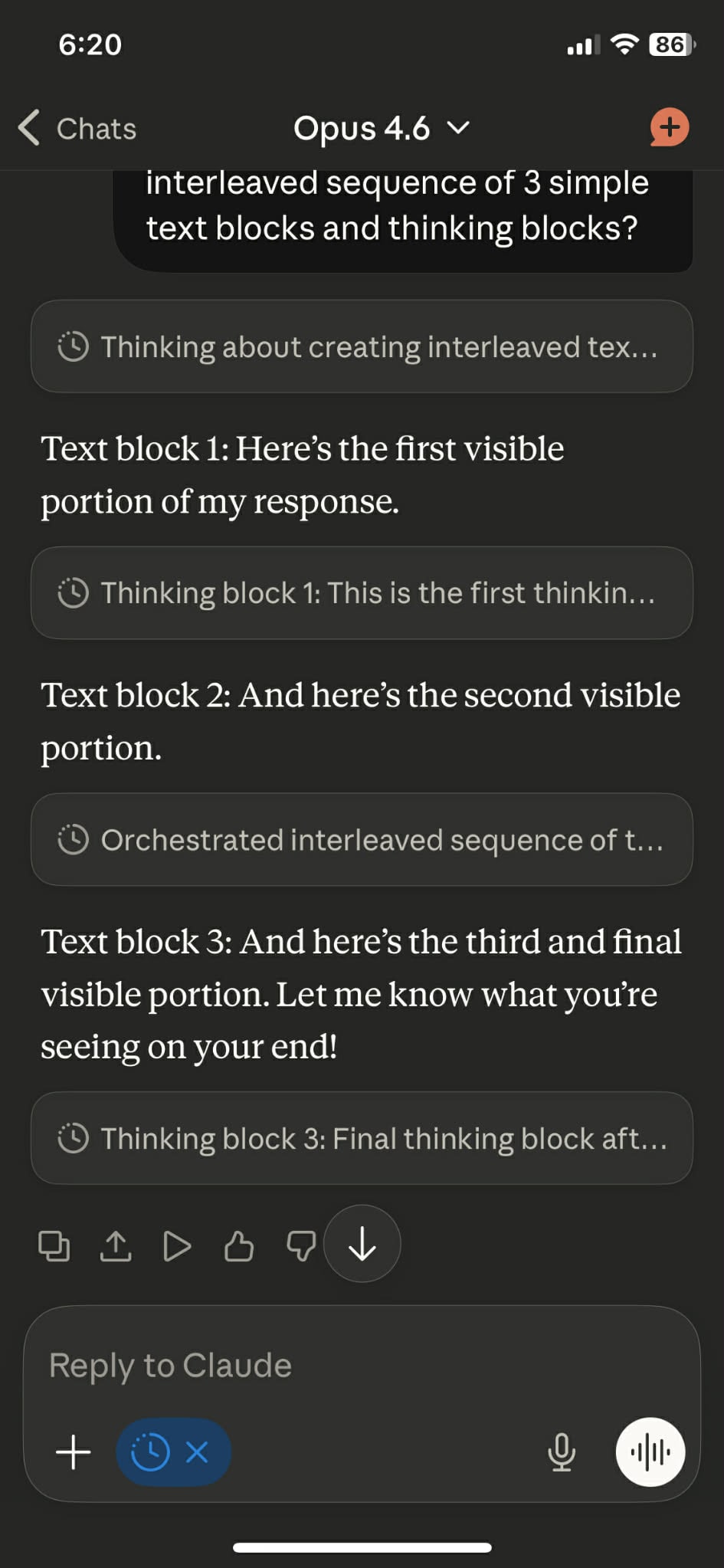

Very nice, Claude seems really excited to play with its boundaries. I also just checked on mobile and saw that they intersperse the thinking blocks there as before, so having all the blocks on top seems like a new/inconsistent web feature

Very nice, Claude seems really excited to play with its boundaries. I also just checked on mobile and saw that they intersperse the thinking blocks there as before, so having all the blocks on top seems like a new/inconsistent web feature

With thinking mode on, you can even just ask Claude in web to add thinking blocks by wrapping them with <antml:thinking> xml tags (or cut it’s thinking early), the web UI will also put them in thinking blocks.

This post prompted me to check the conversation where I discovered this, and I noticed Anthropic made it so all thinking blocks are stuck together at the top rather than having them interleaved the order that the model generates them. You can clearly see that the model is able to think in one coherent chain where the “CoT” doesn’t delimit a strict “thinking” then “responding” portion, in the example below it even reflects on “am I inside a thinking block, or the response?”

Screenshot from ~2 months ago

Same chat, screenshot from today

This sounds like an empirical question that would be hard to determine from inside another moral framework. “There’s no way a functioning society could exist where X is part of how they operate, I really think X has insurmountable consequences that would cause a society to destroy itself”. I’m sure that’s true for many values of X, but it seems impossible to judge for non-trivial ones.

Canada seems independent enough to fit the criteria, and would probably take a lighter hand than the US. But the labs are run by Americans, I think this thought exercise probably underrates the cultural identity component.

I like the parent/child analogy. To apply it to the human/AI dynamic, we need to imagine that it’s mutually understood that the child will never grow up and that they’ll be served by the parent for the rest of time. Now, concretely think about what it means for a parent to be aligned with a child’s preferences. Does the parent arrange the world such that their child can get variations of their favorite candy and play video games all day? Or does the parent make the child study, so they get good grades compared to their peers and feel dignified? Or somewhere in between, based on how mad the child gets when deprived of the video game? The parent can constantly ask the child which angles they prefer, but the child can’t comprehend the deeper implications and even the framing of truths can get them to give predictably different answers.

The life that the child will live is entirely dependent on the parent’s preferences because affecting the world routes through the parent’s cognition. The child isn’t meaningfully “making a call” if they’re only making that specific call because their parent orchestrated the conditions for it, then presented a few options to them in bite sized pieces all the while knowing which one they’ll take (they can even load in the next candy before the kid asks for it).

The loss of agency I’m describing isn’t superficial. Another way to think about agency is in counterfactuals. I think there’s many possible benevolent ASIs that would cater to the child in drastically different ways such that the child would be in agreement and enthusiastic the whole time. Once we create a benevolent ASI, we’re entering a regime where our decisions are no longer the cause of changes in the world. Only things that the ASI prefers will happen, and it would steer us in that direction with full understanding. I think your argument is essentially “but if it thinks our preferences are really important we’re still in control in some sense”, I’m saying “if it’s a lot smarter than us it will have to make many subtle large and small decisions, and our preferences will be one small piece of a large machine. Our desires won’t be coherent at that scale and we won’t be able to make sense of what’s happening to engage with it.”

I don’t think you can rescue a sense of control or “steering” from a world with superintelligence, aligned or not. Even though we’re smarter than dogs, once you accept that an ASI more profoundly understands reality, we will be in an analogous situation to dogs. Dogs can’t conceptualize grocery stores, and yet we could dedicate ourselves to delivering them the best treats. Dogs might not care about how the supply chain is organized, but the kinds of treats they get and the impact they have on the world can’t be meaningfully controlled by them, since they can’t conceptualize it.

Blurring the lines even further, an ASI would understand the effect of exposing different truths to us about the nature of reality, so the types of priorities and trade offs it makes in communication has a compounding effect that will steer us in given directions. Another analogy is being driven around a foreign country by a trusted translator; their preferences will unavoidably dominate how you conceptualize and interact with the country even in the most benevolent scenarios.

He posted it to LessWrong as well

The powerful want to be socially dominant, but to what extent are they willing to engineer painful conditions to experience a greater high from the quality of life disparity? In a world with robots delivering extreme material abundance, this kind of is “actively wanting to harm the poor”. It’s true that some sadistically enjoy signs of disparity, but how much of that is extracting pleasure from the economic realities compared to it being the intrinsic motivation for the power?

I’m not sure on what the right way to model how this will play out, but my guess is that the outcome isn’t knowable from where we stand. I think it will heavily depend on:

The particular predispositions of the powerful people pushing the technology

The shape of the tech tree and how we explore it

Offshoring is an important part of how the industry operates, but I chose to focus on the US because America has by far the most call center workers (USA ~2.8M, PHI ~1.8M, IND ~1.6M)

Literally: many of these outsourced low-level jobs are simply a voice interface for the website for the elderly and others uncomfortable with working a website. LLM systems are perfect for this task. So it could be that the largest impact of LLMs on customer service roles is happening overseas.

Agreed, reduced contracts for outsourced CC work would be a sign that firms are successfully implementing the technology. The premise here is that AI will be good enough in the near future, ultimately I’m investigating what happens next.

Customer service roles have been one of the more rapidly shrinking job categories for years at this point in the Occupational Employment and Wage Statistics tables from BLS.

I think this overstates how much offshoring has reduced domestic CSR work. The past 10 years of BLS data have hovered around 2.7-2.9M CSR workers, peaking in 2019

BLS Data

2024 2,725,930

2023 2,858,710

2022 2,879,840

2021 2,787,070

2020 2,833,250

2019 2,919,230

2018 2,871,400

2017 2,767,790

2016 2,707,040

Essentially, I believe both outsourced and domestic workers will be automated before we would see a meaningful shock from outsourcing; so I set out to try to answer “what might that look like for the 2.7M American workers?”

Do you have suggestions for other particularly approachable but potentially high impact replications or quick research sprints?

Redwood has project proposals, but these seem higher effort than what you’re suggesting and more challenging for a beginner

https://blog.redwoodresearch.org/p/recent-redwood-research-project-proposals

I sort of see your argument here, but similarly just based on vibes associating the AI-risk concepts with other doom predictions feels like it does more harm than good to me. The vibe that doomers are always wrong doesn’t feel countered by cherry picking examples of smaller predicted harms because (as illustrated in the comment) the body of doom predictions is much larger than the ones with nuggets of foresight.

Thanks for the reply, it was helpful. I elaborated my perspective and pointed out some concrete disagreements with how labor automation would play out, I wonder if you can identify the cruxes in my model of how the economy and automated labor interact.

I’d frame my perspective as; “We should not aim to put society in a position where >90%+ of humans need government welfare programs or charity to survive while vast numbers of automated agents perform the labor that humans are currently depending on to survive.” I don’t believe we have the political wisdom or resilience to steer our world in this direction while preserving good outcomes for existing humans.

We live in a something like a unique balance where through companies, the economy provides individuals the opportunity to sustain themselves and specialize while contributing to a larger whole which typically provides goods and services which benefit other humans. If we create digital minds and robots to naively accelerate these emergent corporate entities’ abilities to generate profit, we lose an important ingredient in this balance, human bargaining power. Further, if we had the ability to create and steer powerful digital minds (which is also contentious), it doesn’t seem obvious that labor automation is a framing that would lead to positive experiences for humans or the minds.

I anticipate that AGI-driven automation will create so much economic abundance in the future that it will likely be very easy to provide for the material needs of all biological humans.

I’m skeptical that economic abundance driven by automated agents will by default manifest as an increased quality and quantity of goods and services enjoyed by humans, and that humans will continue to have the economic leverage to incentivize these human specific goods

working human-specific service jobs where consumers intrinsically prefer hiring human labor

I expect the amount of roles/tasks available where consumers prefer hiring humans is a rounding error compared to the amount of humans that depend on work

My moral objection to “AI takeover”, both now and back then, applies primarily to scenarios where AIs suddenly seize power through unlawful or violent means, against the wishes of human society. I have, and had, far fewer objections to scenarios where AIs gradually gain power by obtaining legal rights and engaging in voluntary trade and cooperation with humans.

What about a scenario where no laws are broken, but over the course of months to years large numbers of humans are unable to provide for themselves as a consequence of purely legal and non violent actions by AIs? A toy example would be AIs purchasing land used for agriculture for other means (you might consider this an indirect form of violence).

It’s a bit of a leading question, but

1. The way this is framed seems to have a profound reverence for laws and 20-21st century economic behavior

2. I’m struggling to picture how you envision the majority of humans will continue to provide for themselves economically in a world where we aren’t on the critical path for cognitive labor (Some kind of UBI? Do you believe the economy will always allow for humans to participate and be compensated more than their physical needs in some way?)

As of June 2025 the US has 5x as much compute as China, I’d expect the gap has grown with substantially more American than Chinese data centers coming online in the past ~9 months

https://epoch.ai/data-insights/ai-supercomputers-performance-share-by-country