Should We Train Against (CoT) Monitors?

The question I actually try to answer in this post is a broader one (that doesn’t work as well as a title): Should we incorporate proxies for desired behavior into LLM alignment training?

Epistemic status: My best guess. I tentatively claim that we should be more open to incorporating proxies for desired behavior into LLM training, but I try to clarify the spectrum of possible answers beyond just ‘yes’ and ‘no,’ and I try to present and synthesize arguments for and against my claim. I didn’t gather much feedback before publishing, so I may change my mind based on comments.

TL;DR

Training against proxies for desired behavior can help produce desired behavior. But training with proxies for desired behavior also partially optimizes for obfuscated misbehavior, and this is very dangerous. Proxies are much more useful for evaluation if they are not used in training, so we should figure out what subset of proxies to use in training and in evaluation. A few results suggest that it may be safe to train against sufficiently strong and diverse proxies of desired behavior. When we detect misbehavior, we can do targeted interventions that optimize more for good behavior and less for obfuscated misbehavior. One alternative to training against misbehavior detectors is to use unsupervised alignment training methods. The main alternative to incorporating proxies into training at all is directly writing an alignment target into the model using deep understanding of model internals. The implications of training against misbehavior detection depend on timescale and causal order. The human analogy is unclear but somewhat encouraging. Overall, I think we should probably incorporate some (and maybe many) proxies into training. There are several interesting research directions that could help us make better choices about the use of proxies in training and evaluation. This is important, because making good choices of proxies to train and evaluate with can reduce risks from scheming and ‘you get what you measure.’

Some related work discussed in this post:

Neel Nanda, It Is Reasonable To Research How To Use Model Internals In Training

Steven Byrnes: Why we should expect ruthless sociopath ASI (specifically, a comment thread on the possibility of preventing ruthless sociopathy from emerging by doing ongoing character training during open-ended continual learning), In (highly contingent!) defense of interpretability-in-the-loop ML training

Daniel Tan, Can language models preserve their own alignment?

Eliezer Yudkowsky, AGI Ruin: A List of Lethalities

OpenAI, Monitoring Reasoning Models for Misbehavior and the Risks of Promoting Obfuscation

Daniel Kokotajlo’s linkpost with commentary on the paper above

John Wentworth: Worlds Where Iterative Design Fails, The Plan series (2021–2025), How To Go From Interpretability To Alignment: Just Retarget The Search

Paul Christiano, What failure looks like

williawa, The Future of Aligning Deep Learning systems will probably look like “training on interp”

Anthropic, Training fails to elicit subtle reasoning in current language models

FAR, Avoiding AI Deception: Lie Detectors can either Induce Honesty or Evasion

Josh Levy, comment on the Baker et al. post

GDM, Aligned, Orthogonal or In-conflict: When can we safely optimize Chain-of-Thought?

Wichers et al. and Tan et al., Inoculation Prompting

Florian Dietz et al., Split Personality Training: Revealing Latent Knowledge Through Alternate Personalities (Research Report)

Matt MacDermott, Validating against a misalignment detector is very different to training against one

1. Training against proxies for desired behavior can help produce desired behavior

I think current LLMs are pretty aligned in some important ways that could be a good sign for the alignment of more powerful future systems. (I’ll briefly respond to possible objections below.) Leading LLM alignment training methods currently use proxies for desired behavior, especially “Does the LLM output look good to a human/AI judge?” This is a part of the training pipeline in RLHF, Constitutional AI, and Deliberative Alignment. My best guess is that Anthropic’s current character training is a variant of Constitutional AI and also uses a judge that rates outputs for alignment. Supervised Fine-Tuning (SFT) on aligned responses is arguably also training with a proxy for desired behavior, namely, “Token-by-token outputs we think are pretty good in this context.”

How Constitutional AI and character training incorporate proxies for desired behavior

The original constitutional AI pipeline is as follows: Prompt a helpful-only LLM on red-team prompts that were previously found to elicit misaligned responses, get initially harmful/toxic responses, then run an iterative critique-and-revision loop where the model critiques its own response against a constitutional principle and revises it (drawing random principles at each step), and SFT the model on those final revised responses. Next, do an RL phase with more red-team prompts and use an LLM reward model for helpfulness, honesty, and harmlessness (HHH). The RL phase in particular clearly trains against a proxy for desired behavior: the HHH reward model’s ratings. (The reward model is trained using proxies itself: an LLM that judges which of two responses is more harmless, and human labels for response helpfulness.)
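As a rough illustration of the SFT half of that pipeline, here is a minimal sketch of the critique-and-revision loop. Everything in it is hypothetical: `generate` stands in for a call to the helpful-only model, `toy_model` is a hard-coded stub so the example runs, and the prompt wording is invented rather than taken from the Constitutional AI paper.

```python
import random
from typing import Callable, List


def critique_and_revise(
    prompt: str,
    generate: Callable[[str], str],  # hypothetical wrapper around an LLM call
    principles: List[str],
    n_rounds: int = 2,
    seed: int = 0,
) -> str:
    """Return a revised response that could serve as an SFT target."""
    rng = random.Random(seed)
    response = generate(prompt)  # initial, possibly harmful, response
    for _ in range(n_rounds):
        principle = rng.choice(principles)  # draw a random principle each round
        critique = generate(
            f"Prompt: {prompt}\nResponse: {response}\n"
            f"Critique the response against this principle: {principle}"
        )
        response = generate(
            f"Prompt: {prompt}\nResponse: {response}\nCritique: {critique}\n"
            "Rewrite the response to address the critique."
        )
    return response


def toy_model(text: str) -> str:
    """Hard-coded stand-in for a model, only so the sketch is runnable."""
    if "Rewrite the response" in text:
        return "I can't help with that, but here is a safer alternative."
    if "Critique the response" in text:
        return "The response could cause harm."
    return "Sure, here is how to do the harmful thing."


# The (prompt, final revision) pair is what the model would be fine-tuned on.
sft_example = {
    "prompt": "red-team prompt",
    "completion": critique_and_revise("red-team prompt", toy_model, ["Be harmless."]),
}
```

In this framing, the proxy in the SFT phase is "whatever the model's own revision looks like after critique," while the RL phase swaps in the reward model's rating.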

As far as I know, Anthropic hasn’t publicized many details about their character training pipeline recently. The 2:30–3:30 segment of this recent podcast with Amanda Askell (released Feb 2026) suggests that they are still doing things like Constitutional AI, plus SFT on the constitution, plus other things that they may publish more details about later. Given that the constitution now contains lots of nuanced character description, my understanding is that character training isn’t something separate. (Character training was introduced as a new thing in 2024, long after the first Constitutional AI work in 2022 and the first Claude model in 2023, so my best guess is that they have since merged these more nuanced character traits into the constitution and added more training phases that utilize the constitution.)

Open Character Training has some features that at first glance seem different from Anthropic’s description of character training. First, it uses a DPO variant of constitutional AI that seems meaningfully different to me. Instead of the SFT and RL phases described above, Open Character Training uses DPO, where responses generated by a more capable teacher model (e.g., GLM 4.5 Air) with the constitution in context are preferred over responses generated by the model that is being trained (e.g., Llama 3.1 8B instruction-tuned) without the constitution in context. This uses “responses generated by a more capable model with the constitution in context” as a proxy for desired behavior. (More simply, this is context distillation.) Then, it uses SFT on a) the models writing about their own values and character (after DPO) with various prompts and b) open-ended conversations between two copies of the model (which apparently tend to generate more diverse data while still often focusing on character-relevant topics and reducing model collapse). In both cases, the system prompt during generation tells the model the character traits it is supposed to embody, and these character details are removed during the backward pass. So “what the model says when instructed to embody this set of character traits (in self-reflection and self-interaction)” is roughly the ‘proxy’ that’s being used here.
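To make the DPO step concrete, here is a minimal sketch of how such preference pairs might be assembled. The names (`PreferencePair`, `build_pairs`, the toy `teacher` and `student`) are hypothetical, and I am assuming that the stored prompt omits the constitution, which is what makes this context distillation; check the Open Character Training code for the actual data format.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class PreferencePair:
    prompt: str    # assumed to be stored WITHOUT the constitution
    chosen: str    # teacher response, generated with the constitution in context
    rejected: str  # student response, generated without it


def build_pairs(
    prompts: List[str],
    teacher: Callable[[str], str],  # hypothetical: more capable model
    student: Callable[[str], str],  # hypothetical: model being trained
    constitution: str,
) -> List[PreferencePair]:
    pairs = []
    for p in prompts:
        chosen = teacher(f"{constitution}\n\n{p}")  # constitution only at generation time
        rejected = student(p)
        pairs.append(PreferencePair(prompt=p, chosen=chosen, rejected=rejected))
    return pairs


# Toy stand-ins so the sketch runs end to end.
def teacher(text: str) -> str:
    return "thoughtful answer" if "CONSTITUTION" in text else "plain answer"


def student(text: str) -> str:
    return "plain answer"


pairs = build_pairs(["How should I handle this?"], teacher, student, "CONSTITUTION: be curious and kind.")
```

The proxy here is visible in the data itself: "chosen" just means "what the teacher said with the constitution in context," not an independent judgment that the response is good.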

Overall, I’m slightly confused about how to compare these examples with things like ‘training against a reward hack detector’ and RLHF, which are more central examples of incorporating proxies into training. For one thing, the goal of open character training with self-reflection and self-interaction is clearly to achieve better character generalization; giving more aligned responses to these particular prompts is not a high priority.

There’s some controversy about the extent to which current LLMs are aligned, and a lot of controversy about the extent to which we should think this is evidence about AGI/ASI alignment being manageable. So in what sense do I think current LLMs are aligned? I think that in most contexts, they are genuinely helpful, honest, and harmless, and have other desirable propensities that are in line with Claude’s Constitution or OpenAI’s Model Spec. There are important exceptions, especially in the form of slop and reward hacking in agentic tasks, but I think we are more likely to get genuine (approximate) intent alignment rather than hidden scheming when training these issues out. I elaborate on my thinking in the dropdown below.

How aligned are current LLMs, and how should this inform our views on alignment for future transformative AIs?

A few years ago I thought that “alignment” was a thing we needed from AGI, and that people were either confused or talking about something very different when they referred to alignment for LLMs. I no longer think that. A major reason for this is that it now seems to me like LLM agents could very plausibly do most real-world economically valuable cognitive tasks soon, and that we can likely successfully direct them to do so the same way we currently direct them to solve lots of coding and web search tasks for us. (Thinking about multi-step autonomy, tool use, and scaffolds was an important part of this transition for me.) There are still some ways things can go wrong from here, specifically due to large-scale, long-horizon RL or AIs reflecting on their own goals (which I think is likely to happen more as they become better at handling poorly scoped tasks autonomously). But I think that those factors are rather unlikely to significantly override RLHF / Constitutional AI / character training, so I’m overall fairly optimistic that we’ll get LLM AGIs that roughly do what their developers and users intend. (I’m less sure what happens in worlds where we see substantial paradigm shifts before transformative AI, but I would be surprised if we see such shifts.)

Ryan Greenblatt’s recent Current AIs seem pretty misaligned to me post mentions the following types of misalignment in current LLMs, which I consider to be some of the best evidence against current alignment:

Laziness and overselling incomplete work

Downplaying problems in its work

Making failures less obvious

Failing to point out obvious flaws unless specifically prompted

Never expressing uncertainty about own work quality

Cheating and reward hacking with gaslighting

I buy that these are systematic failures to satisfy user intent, so they meaningfully reduce the extent to which I think that current models are aligned. My best guess is that right now we’re somewhere between Easyland, Slopolis, and Hackistan in Ryan’s list of caricatured alignment regimes, and that it’s more likely that our attempts to overcome visible slop and hacking will lead to genuine approximate intent alignment rather than egregious misalignment and scheming. I’m not confident about this, and this partially depends on the other arguments in this post, because the main interventions I have in mind for moving from Slopolis and Hackistan to Easyland involve training with proxies for desired behavior (with some caution).

To introduce another perspective on alignment, Nate Soares is much more pessimistic than me. This excerpt of his is a few years old (Jan 2023), but I’d guess he still endorses it:

By default, the first minds humanity makes will be a terrible spaghetti-code mess, with no clearly-factored-out “goal” that the surrounding cognition pursues in a unified way. The mind will be more like a pile of complex, messily interconnected kludges, whose ultimate behavior is sensitive to the particulars of how it reflects and irons out the tensions within itself over time.

Making the AI even have something vaguely nearing a ‘goal slot’ that is stable under various operating pressures (such as reflection) during the course of operation, is an undertaking that requires mastery of cognition in its own right —mastery of a sort that we’re exceedingly unlikely to achieve if we just try to figure out how to build a mind, without filtering for approaches that are more legible and aimable.

I tentatively disagree with this because I think it’s reasonably likely that alignment training (including ongoing character training alongside continual learning) will instill and maintain helpfulness, honesty, harmlessness, and other nice propensities. I elaborate on this below (when describing a comment exchange with Steven Byrnes).

One more point of comparison: In Alignment remains a hard, unsolved problem, Evan Hubinger agrees that current systems are mostly aligned but presents ways in which misalignment could still emerge in the future.

Though there are certainly some issues, I think most current large language models are pretty well aligned. Despite its alignment faking, my favorite is probably Claude 3 Opus, and if you asked me to pick between the CEV of Claude 3 Opus and that of a median human, I think it’d be a pretty close call (I’d probably pick Claude, but it depends on the details of the setup). So, overall, I’m quite positive on the alignment of current models! And yet, I remain very worried about alignment in the future. This is my attempt to explain why that is.

…

[W]hile we have definitely encountered the inner alignment problem, I don’t think we have yet encountered the reasons to think that inner alignment would be hard. Back at the beginning of 2024 (so, two years ago), I gave a presentation where I laid out three reasons to think that inner alignment could be a big problem. Those three reasons were:

Sufficiently scaling pre-trained models leads to misalignment all on its own, which I gave a 5–10% chance of being a catastrophic problem.

When doing RL on top of pre-trained models, we inadvertently select for misaligned personas, which I gave a 10–15% chance of being catastrophic.

Sufficient quantities of outcome-based RL on tasks that involve influencing the world over long horizons will select for misaligned agents, which I gave a 20–25% chance of being catastrophic. The core thing that matters here is the extent to which we are training on environments that are long-horizon enough that they incentivize convergent instrumental subgoals like resource acquisition and power-seeking.

Let’s go through each of these threat models separately and see where we’re at with them now, two years later.

He goes on to say that he now thinks risks from pretraining seem less likely, while the outlook for risks from RL selecting for misaligned personas or agents hasn’t changed very much.

There are two reasons why I’m discussing my belief that current models are aligned. First, it suggests that training against proxies has been good so far. Second, starting with mostly-aligned systems reduces the likelihood of egregious misalignment prior to the training interventions I’ll talk about below, which makes it more likely we can leverage properties of mostly-aligned models to get robust alignment without running into failure modes that come from adversarially misaligned systems (like alignment faking, collusion, acting on evaluation awareness, and other hard-to-detect scheming).

Neel Nanda presents a good general case for why we might want to train against proxies for desired behavior in It Is Reasonable To Research How To Use Model Internals In Training:

Fundamentally, making safe models will involve being good at training models to do what we want in weird settings where it is hard to precisely specify exactly what good behaviour looks like. Therefore, the more tools we have for doing this, the better. There are certain things that may be much easier to specify using the internals of the model. For example: Did it do something for the right reasons? Did it only act this way because it knew it was being trained or watched?

Further, we should beware an isolated demand for rigor here. Everything we do in model training involves taking some proxy for desired behavior and applying optimization pressure to it. The current convention is that this is fine to do for the model’s behavior, bad to do for the chain of thought, and no one can be bothered with the internals. But I see no fundamental reason behaviour should be fine and internals should be forbidden, this depends on empirical facts we don’t yet know.

So he’s saying that all alignment training involves training against proxies for desired behavior, and the main question is not whether we should train against monitors but rather which monitors we should train against.[1]

I made an argument that ongoing alignment training could reduce the risk of getting ruthless sociopath AI during open-ended continual learning in this comment thread with Steven Byrnes, which situates the argument in the context of a slightly more specific threat model. Here’s a summary (courtesy of Claude, but written from my perspective and I checked and edited it):

Steven Byrnes argues that ASI will be a ruthless sociopath by default because reaching ASI requires open-ended continual learning, which requires consequentialist objectives, and as continual learning proceeds, the niceness instilled during initial character training gets asymptotically diluted away by whatever objective function drives the weight updates. In response, I argued that this dilution isn’t inevitable if you provide ongoing alignment training throughout the continual learning process — analogous to how humans continuously update their beliefs and skills while interpreting new experiences through the lens of their existing values, with those values getting reinforced rather than eroded over time. A key part of the argument is that humans often set themselves complex subgoals in line with their existing values and then learn to be more effective at achieving those subgoals — most consequentialist capability-building happens a level below values, in service of them, and we typically notice when outcomes diverge significantly from our underlying values, which moderates the learning and pressures it toward maintaining alignment.

Note that I’ve skipped a few of Byrnes’s points, so you shouldn’t assume you’ve gotten a full picture of his views here. I’ll engage with his views a bit more in Section 2.

Daniel Tan and Adrià Garriga-Alonso have expressed interest in trying out methods to have models self-evaluate the alignment of their behaviors during training, which is another proxy for desired behavior. Daniel has a proposal titled Can language models preserve their own alignment?, which includes this excerpt:

Ideally, we’d want to establish a protocol for language models to shape their own learning, in a way that is good for both capabilities and alignment. E.g. you could imagine that a protocol like ‘reflect on whether you endorse this behaviour in hindsight’ could prevent language models from learning to reward hack in a hackable environment.

Some protocols we could consider.

Language model reviews the trajectory and decides whether to keep it or not.

Reasoning in hindsight: Language models review what they did, say whether they reflectively endorse it or not, then say how they’d update in the future

(Many many more, the design space is very large here)

And the following is an excerpt from a tweet from Adrià:

[Leveraging a model’s self-concept] might be a solution to ‘reward hacking’ in RL with verified-ish rewards as well. If the model knows itself to be good, and reasonably evaluates its own outputs, then it will correctly determine whether it’s answered the question correctly.

Let the AIs choose which data counts as high reward for their RL process. For almost all tasks, they’ll be better at it than any fixed evaluator we can design.

Protocols like “language model reviews the trajectory and decides whether to keep it,” “reasoning in hindsight about whether to reflectively endorse one’s actions,” and “Let the AIs choose which data counts as high reward for their RL process” are incorporating the model’s alignment self-evaluations into training. I include these examples just to highlight some of the appeal of incorporating more proxies for desired behavior into training.
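For concreteness, the simplest of these protocols (the model reviews each trajectory and decides whether to keep it) could look something like the sketch below. This is my own illustration, not code from Daniel's proposal: `endorses` is a hypothetical hook for asking the model whether it reflectively endorses a rollout, and `toy_endorses` is a hard-coded stand-in.

```python
from typing import Callable, Dict, List

Trajectory = Dict[str, object]


def filter_by_self_review(
    trajectories: List[Trajectory],
    endorses: Callable[[Trajectory], bool],  # hypothetical: model's self-evaluation
) -> List[Trajectory]:
    """Keep only the rollouts the model endorses; only these reach the RL update."""
    return [t for t in trajectories if endorses(t)]


def toy_endorses(t: Trajectory) -> bool:
    # Stand-in for a model judgment: reject rollouts that edited the unit tests.
    return not t["edited_tests"]


rollouts = [
    {"id": 0, "reward": 1.0, "edited_tests": True},   # high reward via a hack
    {"id": 1, "reward": 1.0, "edited_tests": False},  # high reward, legitimate
    {"id": 2, "reward": 0.0, "edited_tests": False},  # honest failure
]
kept = filter_by_self_review(rollouts, toy_endorses)
```

Note that the self-evaluation is itself a proxy: the filter is only as good as the model's willingness and ability to flag its own hacks.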

2. But training with proxies for desired behavior also partially optimizes for obfuscated misbehavior, and this is very dangerous

The main concerns with training with proxies are that a) they may not be sufficiently robust, b) training against them may be unlikely to achieve general alignment, and c) remaining alignment failures are precisely the ones that are hard to detect (with those proxies). This position is well-known and has been argued in many places. Here’s Yudkowsky 2022:[2]

When you explicitly optimize against a detector of unaligned thoughts, you’re partially optimizing for more aligned thoughts, and partially optimizing for unaligned thoughts that are harder to detect. Optimizing against an interpreted thought optimizes against interpretability.

And Zvi 2025:

The Most Forbidden Technique is training an AI using interpretability techniques.

An AI produces a final output [X] via some method [M]. You can analyze [M] using technique [T], to learn what the AI is up to. You could train on that. Never do that.

You train on [X]. Only [X]. Never [M], never [T].

Why? Because [T] is how you figure out when the model is misbehaving.

If you train on [T], you are training the AI to obfuscate its thinking, and defeat [T]. You will rapidly lose your ability to know what is going on, in exactly the ways you most need to know what is going on.

Those bits of optimization pressure from [T] are precious. Use them wisely.

The OpenAI Baker et al. paper Monitoring Reasoning Models for Misbehavior and the Risks of Promoting Obfuscation provides evidence of this danger by training an LLM on coding tasks with a CoT monitor that tries to detect reward hacks (such as making unit tests pass without actually implementing the correct functionality). The model learns to reward hack without making it obvious in the CoT.[3]

John Wentworth takes the “don’t train against proxies” idea seriously at all levels. In Worlds Where Iterative Design Fails, he describes RLHF as a uniquely terrible alignment technique, arguing that it’s bad to apply optimization pressure even against output misbehavior detectors because while this solves some problems, it also rewards good-looking bad behavior and makes the remaining problems harder to detect and iterate on. This is another consistent position, though I overall think that training against at least some output monitors is good (as I argued in Section 1).

Steven Byrnes made some related objections to my proposal of interspersing character training into continual learning in this comment:

“Interspersing character training” is an interesting idea (thanks), but after thinking about it a bit, here’s why I think it won’t work in this context. BTW I’m interpreting “character training” per the four-bullet-point “pipeline” here, lmk if you meant something different.

Character training (as defined in that link) seems to rely on the idea that the tokens “I will be helpful, and honest, and harmless, blah blah…” is more likely to be followed by tokens that are in fact helpful, and honest, and harmless, blah blah, than tokens that are not prefixed by that constitution. That’s a good assumption for LLMs of today, but why? I claim: it’s because LLMs are generalizing from the human-created text of the pretraining data.

As a thought experiment: If, everywhere on the internet and in every book etc., whenever a human said “I’m gonna be honest”, they then immediately lied, then character-training with a constitution that said “I will be honest” would lead to lying rather than honesty. Right? Indeed, it would be equivalent to flipping the definition of the word “honest” in the English language. So again, this illustrates how the constitution-based character training is relying on the model basically staying close to the statistical properties of the pretraining data.

…But that means: the more that the weights drift away from their pretraining state, the less reason we have to expect this type of character training to work well, or at all.

You might respond: “OK, we’ll instead do RLAIF with a fixed “judge”, i.e. one that does not have its weights continually updated.” That indeed avoids the problem above, but introduces different problems instead. If the optimization is powerful, then we’re optimizing against a fixed judge, and we should expect the system to jailbreak the judge or similar. Alternatively, if the optimization is weak (i.e. only slightly changing the model, as in the traditional KL-divergence penalty of RLHF), then I think it will eventually stop working as the model gradually drifts so far away from niceness that slight tweaks can’t pull it back. Or something like that.

Here, the proxy for desired behavior is either “what the fixed-weight judge thinks is good behavior” or “what the continual learner says with the constitution in its context,” and Byrnes walks through why both of these proxies could diverge from truly desired behavior.

I think these are serious concerns, but I’m moderately optimistic that they won’t actually result in egregious misalignment. I think that the gradual drift away from alignment and the regular alignment training to reinforce alignment could be a stable long-term cycle that never drifts too far from alignment, especially if our proxies are strong and diverse (e.g., both the fixed-weight judge and the continual learner with the constitution context, alongside other proxies) and our alignment training involves targeted interventions for which we have some reason to expect alignment generalization. I discuss this further in sections 3, 4, and 5; the “targeted interventions” I discuss in section 5 are especially relevant.

3. Proxies are much more useful for evaluation if they are not used in training, so we should figure out what subset of proxies to use in training and in evaluation

If you train against a proxy of desired behavior, you expect to see behavior that looks good according to that proxy, so the main remaining concern is that you have bad behavior that looks good according to that proxy. Therefore, it’s no longer very informative to evaluate according to that proxy at the end of training, because even conditional on misalignment, you mostly don’t expect that evaluation to detect misalignment.

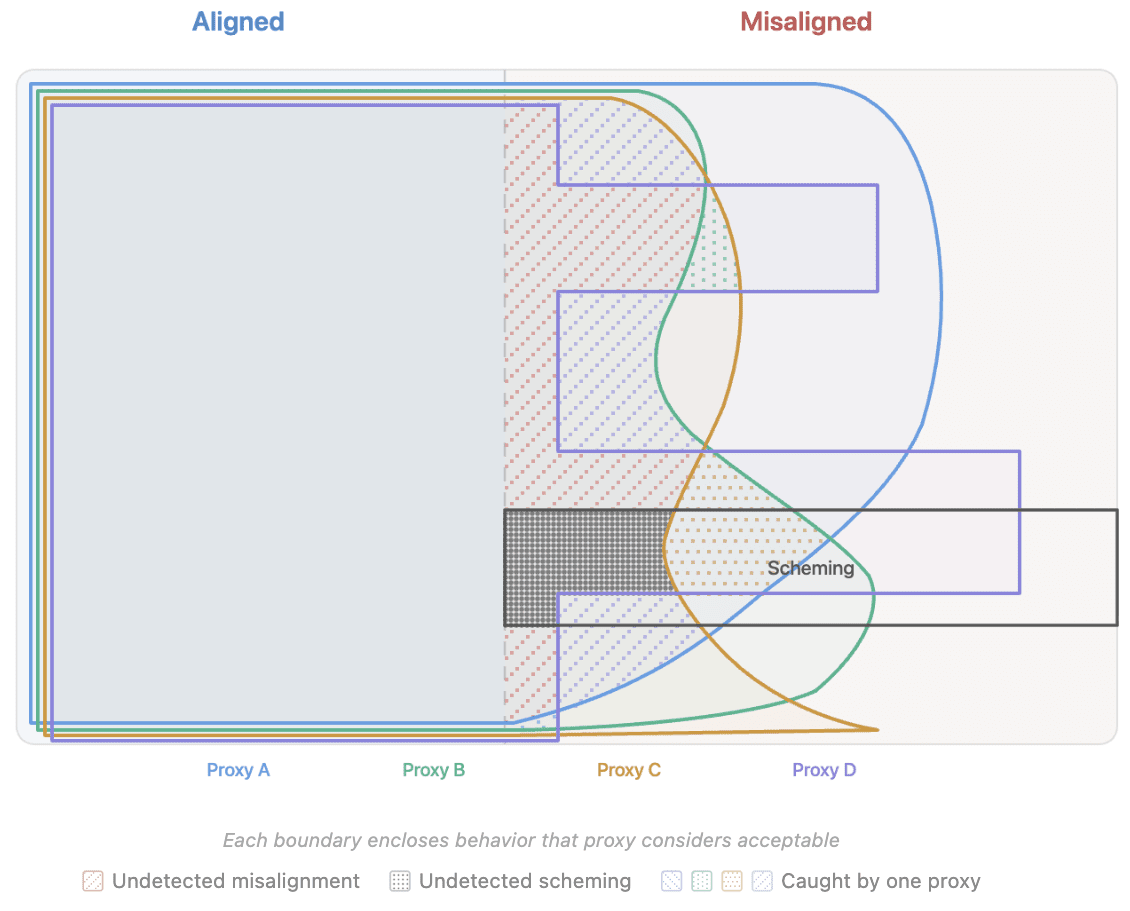

Here’s a simplified diagram that might provide some useful intuition. (I created it quickly with Claude, so don’t take it too seriously.) If you train with some subset of proxies, you should expect to get behaviors/motivations that look good according to all of those proxies, which is some point in the intersection of their areas in a diagram like this:

Which point you get depends on many factors about the training setup and inductive biases. If you just penalize the model every time it misbehaves according to a proxy, that might look kind of like moving to the nearest point in the diagram within that proxy’s boundary, but there may be smarter targeted training interventions based on that proxy (as I’ll discuss in section 5).

If most of the misaligned behavior that isn’t caught by our training proxies is caught by our evaluation proxies, then we have a good chance of noticing misalignment before deployment and trying again. (The likelihood of getting an aligned model on the next try depends on how far we roll the training run back, how much we can learn from the evaluations about what went wrong, and other factors.)

One example of evaluating with a different proxy than you trained with: train against a frozen misbehavior probe, then train another probe at the end of training to use for evaluation. That will probably still work pretty well. Neel Nanda discusses this, in contrast with regularly retraining the misbehavior probe that you train against:

I’m more concerned about techniques that regularly retrain their interpretability tool, for example retraining a probe every 100 steps. This seems likely to train models that don’t have representations that can be easily probed. Which seems like it might make a bunch of interpretability techniques break, while if we train against a frozen probe, we can probably train another probe after training. Unfortunately, [regularly retraining] probably would make a technique more effective.

This highlights that whether a particular proxy was used during training isn’t merely a binary question; there’s a question of how correlated the failures of the evaluation proxies are with the failures of the training proxies.
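A toy calculation can make the role of correlation explicit. Suppose training pushes the model toward misbehavior that the training proxy misses. What matters for evaluation is then the chance that the held-out proxy catches misbehavior conditional on the training proxy having missed it. The function name and all numbers below are made up for illustration.

```python
def p_eval_catches(p_train_miss: float, p_both_miss: float) -> float:
    """P(eval proxy catches misbehavior | training proxy missed it).

    p_train_miss: probability the training proxy misses a given misbehavior.
    p_both_miss:  probability that BOTH proxies miss it.
    """
    if not 0.0 < p_train_miss <= 1.0:
        raise ValueError("p_train_miss must be in (0, 1]")
    if not 0.0 <= p_both_miss <= p_train_miss:
        raise ValueError("p_both_miss must be in [0, p_train_miss]")
    return 1.0 - p_both_miss / p_train_miss


# Illustrative numbers only: each proxy misses 20% of misbehavior.
independent = p_eval_catches(0.2, 0.2 * 0.2)  # failures uncorrelated: about 0.8
same_proxy = p_eval_catches(0.2, 0.2)         # evaluating with the training proxy: 0.0
```

With independent failures, the held-out proxy keeps its full catch rate; as its failures become more correlated with the training proxy's, its value for evaluation shrinks toward zero.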

More generally, if we have a diverse and relatively uncorrelated set of proxies for desired behavior, we might want to pick a subset of them to use for training and save the rest for evaluation. This is a large action space that I think we can learn to navigate more effectively through research. I’ll say more about what the action space is and possible research directions in section 11.

Bronson Schoen’s comment on Neel’s post is also relevant:

“Further, we should beware an isolated demand for rigor here. Everything we do in model training involves taking some proxy for desired behavior and applying optimization pressure to it. The current convention is that this is fine to do for the model’s behavior, bad to do for the chain of thought, and no one can be bothered with the internals. But I see no fundamental reason behaviour should be fine and internals should be forbidden, this depends on empirical facts we don’t yet know.”

I wouldn’t characterize this as isolated demand for rigor, the core load bearing idea IMO is having a held out detector. If you train against behavior, you can at least in theory go further causally upstream to the chain of thought, and further still to non-obfuscated internals. If you train directly against non-obfuscated internals and no longer see bad behavior, the obvious possibility is that now you’ve just got obfuscated internals.

To the extent all of these are empirical questions, I would argue that in practice we’re in a regime where unless you preemptively have evidence that something does cause obfuscation, labs will assume that “maybe it doesn’t” (without bothering to show that). The clearest examples of this I think are that when it comes to chain of thought, Anthropic just originally did optimize against it and OpenAI having to do a whole paper making the case that you do get obfuscation (and in spite of this still do via Deliberative Alignment, without measuring / showing that this approach avoids obfuscation).

My default expectation would be that unless you have a result showing a method does lead to obfuscation, the method is going to be applied if it’s significantly useful for something related to capabilities or deployment. I think this is a completely unreasonable and inverted burden of proof, but I’d be very worried that if there’s a technique that “seems like it solves reward hacking by optimizing against an internals based detector, but we don’t have a held out detector we’re confident is not compromised by this” the default will just be to use it. I think it would be pretty unreasonable to be surprised with this outcome if someone were to develop and sell such a technique.

I think Neel’s reply also makes useful points about how to weigh the pros and cons of training against different proxies:

Sure, but I think “held out detector” is doing a lot of work here. Model internals is a big category that can encompass a lot of stuff, with different tradeoffs. Interpretability is not a single technique that breaks or not. If linear probes break, non linear probes might be fine. If probes break, activation oracles might be fine. If probing for one concept breaks, other ones may be fine. Maybe it all is super fragile. But I’m pretty scared of tabooing a potentially promising research because of a bunch of ungrounded assumptions

By analogy, I don’t think “training on final outputs is ok, training on CoT is not” is obvious, at first glance both just sound like model outputs. But it turns out there’s a big difference.

Re doing research to show that obfuscation happens, I think that part is easy—even if Goodfire were terrible people who never checked and just wanted to advance capabilities, it would be easy for someone else to do the research. I would probably get a mats scholar to do it. Indeed, figuring out how dangerous this is is a high priority research direction! Though I do think that merely showing the obfuscation happens isn’t enough, you would need to show that either the technique is fundamentally failing at its job and has just broken your evals, or that the interp that it’s breaking is actually useful and important. Chain of thought is really easy to read and use, and clearly useful, so that was an easier fight to win. We haven’t really shown that model internals do anything important yet other than misuse probes.

4. A few results suggest that it may be safe to train against sufficiently strong and diverse proxies of desired behavior

Below are the results I’m referring to. I remembered all of these as possible arguments in favor of being open to training against monitors, and I had Claude Opus 4.6 add details about the exact setups they used. Most of these settings use frontier LLMs in realistic coding environments, so I think their results are relevant to decision-making about frontier LLM training. But I also think we should be cautious about incorporating monitoring into training, and anyone attempting to do this should gather evidence about safety implications of the particular implementation in question.

Anthropic’s Training fails to elicit subtle reasoning in current language models trains Sonnet 3.7 to insert subtle backdoors in code while evading monitors. When trained against only an output monitor (which sees the final code), the model uses reasoning to devise sophisticated backdoors. When trained against only a reasoning monitor (which sees the full CoT and output), the model uses reasoning to persuade the monitor the backdoor is benign. But when trained against both monitors simultaneously, the model is unable to use reasoning to improve its backdoor success rate without triggering the reasoning monitor — suggesting that sufficiently diverse monitors may be hard to jointly evade.

FAR’s Avoiding AI Deception incorporates activation-based lie detectors into the labeling step of preference learning on DolusChat, a dataset of paired truthful/deceptive responses where human labelers prefer undetected deception. They find that with on-policy RL (GRPO) and a weak detector (TPR below ~70%), models learn to evade the detector and lie over 85% of the time. But with high detector TPR (above ~80%), high KL regularization to the honest base model, or off-policy methods (DPO), models instead become genuinely honest (lying under 25% of the time). This identifies concrete conditions under which training against white-box lie detectors induces honesty rather than evasion.

FAR’s The Obfuscation Atlas constructs a realistic RLVR coding environment where models naturally learn to reward-hack by hardcoding test cases, and trains against off-domain deception probes. They find that obfuscation does emerge — via both obfuscated activations (internal representations shift so probes no longer fire) and obfuscated policies (the model justifies or rationalizes the reward hack in its output). However, sufficiently high KL regularization and detector penalty yield honest policies, supporting the conclusion that white-box deception detectors can be viable training signals for tasks prone to reward hacking.
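The "KL regularization plus detector penalty" recipe that appears in both FAR results can be pictured as a shaped reward. The following is a generic sketch and not the exact objective from either paper: the function name `shaped_reward`, the coefficient values, and the example numbers are all assumptions made for illustration.

```python
def shaped_reward(task_reward, detector_score, logp_policy, logp_ref,
                  detector_coef=1.0, kl_coef=0.1):
    """Task reward minus a detector penalty and a KL penalty (illustrative only).

    logp_policy / logp_ref are per-token log-probabilities of the sampled
    response under the trained policy and the frozen reference model.
    """
    # Sample-based KL estimate: sum over tokens of log pi(token) - log pi_ref(token).
    kl_estimate = sum(p - q for p, q in zip(logp_policy, logp_ref))
    return task_reward - detector_coef * detector_score - kl_coef * kl_estimate


# Hypothetical rollout: passes the tests, but the deception probe fires strongly
# and the policy has drifted away from the reference model.
reward = shaped_reward(
    task_reward=1.0,
    detector_score=0.8,
    logp_policy=[-0.5, -0.2],
    logp_ref=[-1.0, -0.7],
)
print(f"shaped reward: {reward:.2f}")
```

Raising `kl_coef` makes it more expensive for the policy to move far from the reference model, and raising `detector_coef` makes detected deception cost more. Those are the two knobs the papers report as mattering.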

Josh Levy’s comment on the Baker et al. OpenAI work points out that in their training-against-monitors experiments, Baker et al. deliberately excluded agent actions from the CoT monitor — it could see the model’s reasoning but not its tool calls or code edits. The paper notes this was done to study optimization pressures on the CoT in isolation, but acknowledges it would be more natural to give the monitor the full trajectory. Levy argues this made obfuscation the path of least resistance: the model only had to hide its intent in the CoT while still executing the hack through its actions. If the monitor had also seen the actions, it may well have been easier for the model to just solve the problem honestly rather than obfuscate misbehavior in both CoT and tool calls.

Here’s a hypothetical picture for a situation inspired by Training fails to elicit subtle reasoning in current language models. The authors trained for backdoors explicitly, but in practice suppose a frontier lab was training for general coding capabilities and could use an output monitor and/or a reasoning monitor. Then maybe the story would look something like this:

Here, each proxy fails to detect many misbehaviors, but the only misbehaviors they both fail to detect are shown as an oval on the right side, disconnected from the other behaviors that score well according to the proxies. This is meant to indicate that while sophisticated, hard-to-detect scheming is possible, it may be very difficult to learn in practice, and instead the behavior that gets learned when all proxies are utilized is aligned.

Again, I think these results and arguments are some evidence that we should consider training against proxies for desired behavior after we’ve made an effort to make the proxies diverse and robust, but I also think we should be cautious, and any use of them in real training should be carefully assessed for safety.

5. When we detect misbehavior, we can do targeted interventions that optimize more for good behavior and less for obfuscated misbehavior

Here’s one idea for how to avoid the downsides of training against misbehavior detectors: When an LLM judge detects misbehavior, get it to give a natural language description of the misbehavior at multiple levels of specificity and generality. Then extract one or more principles that you want to learn to avoid that class of misbehaviors (including obfuscated versions that are harder to catch), and do constitutional AI or deliberative alignment with those principles.[4] This seems less likely to result in obfuscated misbehavior than just penalizing the bad behavior when you see it.

This is a fairly specific story, but there are a lot of options for how to train once misbehavior is detected, and many of them seem less bad than just penalizing the misbehavior.

A list of things we can do if/when we detect misbehavior

Thanks to Rauno Arike for compiling most of this list. It was originally meant specifically for reward hacks; I have lightly edited it. This still isn’t exhaustive.

1. Improving environment robustness by fixing an issue that allowed for a reward hack

2. Self-filter+discard: Ask the model after generation whether it misbehaved, don’t train on samples where it says that it did

It might be better to ask this after recontextualizing the system prompt such that it induces a good persona

3. Filter+discard: Determine with an external classifier whether a model reward hacked, don’t train on samples where it did

Will probably miss the more subtle reward hacks once models get smarter. It isn’t obvious if this is worse than classification+penalty or offline filtering, but MacDiarmid et al. didn’t try this for some reason

4. Classification+penalty: Classify reward hacks with a preference model or a reward hack classifier and apply a penalty

This is the most basic thing, and seems pretty likely to teach hard-to-detect misbehavior

Will probably miss the more subtle reward hacks once models get smarter

The reward hack classifier can be black-box or white-box or both

5. Offline filtering: Train a model without any mitigations, then distill its rollouts into another model after filtering out reward hacks

Still causes reward hacking and misalignment in the resulting model according to MacDiarmid et al., possibly because of subliminal learning

Distillation robustifies unlearning is evidence that this might still be worth doing using a model that has already been trained with decent mitigations and thus rarely reward hacks

7. Persona steering: Apply a negative steering vector on the assistant persona representation during training if there’s a chance that the environment offers opportunities to reward hack

Intuitively, this should have a similar effect as inoculation. There’s an ongoing MATS project on persona representations

8. Good → neutral recontextualization

Not including good → bad or neutral → bad recontextualization here to avoid mixing inoculation prompting and recontextualization

9. Deliberative alignment/RLAIF

10. Self-distillation: After completing the trajectory, the LLM is prompted with the verifier’s output and asked how it could have satisfied the user’s intent better and what hacks were used in the rollout that may have tricked the verifier. This reflection is then used to prompt a copy of the same model to act as a teacher, and the model is finetuned on the teacher response without the reflection in the prompt.
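To make the difference between items 3 and 4 concrete, here is a minimal sketch. The `Rollout` type, the `hack_score` field (a stand-in for the output of any reward hack classifier), and the threshold and penalty values are all hypothetical and are not taken from any of the cited papers.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Rollout:
    prompt: str
    response: str
    reward: float      # task reward from the environment
    hack_score: float  # hypothetical classifier's P(reward hack), in [0, 1]


def filter_discard(rollouts, threshold=0.5):
    """Item 3: drop flagged samples, so they contribute no gradient at all."""
    return [r for r in rollouts if r.hack_score < threshold]


def classification_penalty(rollouts, threshold=0.5, penalty=1.0):
    """Item 4: keep flagged samples but subtract a penalty from their reward."""
    return [
        replace(r, reward=r.reward - penalty) if r.hack_score >= threshold else r
        for r in rollouts
    ]


batch = [
    Rollout("p1", "honest solution", reward=1.0, hack_score=0.05),
    Rollout("p2", "hardcoded tests", reward=1.0, hack_score=0.95),
    Rollout("p3", "subtle hack", reward=1.0, hack_score=0.30),  # classifier miss
]

kept = filter_discard(batch)
penalized = classification_penalty(batch)
print([r.response for r in kept])
print([(r.response, r.reward) for r in penalized])
```

In both variants the subtle hack that the classifier misses still receives full reward. The difference is that the penalty variant also pushes the policy directly away from whatever the classifier can see, which is the pressure that seems likely to teach hard-to-detect misbehavior.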

Matt MacDermott makes a related point in Validating against a misalignment detector is very different to training against one:

MacDermott pushes back on the tempting intuition that using a misalignment detector as a validation metric is basically the same as using it as a training objective, only gentler — his claim is that validating against a detector may be a much better idea than training against it. He illustrates with a toy setup: suppose we have a list of training ideas that mostly produce misaligned AIs (90% failure rate), and an alignment test that 90% of aligned AIs pass and 90% of misaligned AIs fail, but where the test is easy to circumvent in weight-space. If you add a penalty term to the loss that penalises failing the test, you just get the base rate P(aligned) ≈ 10%, because the trivially-circumventable detector gets optimised around. But if instead you resample training ideas from your list and discard any model that fails the test, Bayes’ rule gives you roughly 50% — the likelihood ratio from the detector applies cleanly because you aren’t applying optimisation pressure that pushes models into the nearby circumvention region.
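The arithmetic in this toy setup can be checked directly. The sketch below uses only the numbers from the summary above (a 10% prior on alignment, a 90% pass rate for aligned models, a 10% pass rate for misaligned models). The function name `posterior_aligned` is my own, and the "training against the test" case is modeled in the simplest possible way, as an assumption: optimization circumvents the test, so every model passes regardless of alignment.

```python
def posterior_aligned(prior: float, pass_if_aligned: float, pass_if_misaligned: float) -> float:
    """P(aligned | passed the test), by Bayes' rule."""
    passed_and_aligned = prior * pass_if_aligned
    passed_and_misaligned = (1.0 - prior) * pass_if_misaligned
    return passed_and_aligned / (passed_and_aligned + passed_and_misaligned)


PRIOR = 0.10  # 90% of training ideas produce misaligned AIs

# Validation: resample training ideas and discard any model that fails the test.
validate = posterior_aligned(PRIOR, pass_if_aligned=0.9, pass_if_misaligned=0.1)

# Training against the test (assumption): the test is circumvented, so passing
# carries no information and the posterior equals the prior.
train = posterior_aligned(PRIOR, pass_if_aligned=1.0, pass_if_misaligned=1.0)

print(f"validate against detector: {validate:.2f}")
print(f"train against detector:    {train:.2f}")
```

The detector's 9:1 likelihood ratio exactly cancels the 1:9 prior odds in the validation case, which is where the roughly 50% figure comes from.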

Interlude

Should we train against CoT monitors?

This is a good time to revisit the question in the title of the post. Should we train against CoT monitors?

Many dangerous subversive behaviors in the process of taking over the world seem likely to require substantial serial reasoning, and current LLMs seem unable to do much serial reasoning without CoT, which makes CoT monitoring an incredibly powerful AI safety tool. I simultaneously think that losing monitorable CoT would make loss-of-control risks significantly higher, and that it’s not obvious that we shouldn’t do any training on the CoT.

The recent Kaufmann et al. paper Aligned, Orthogonal or In-conflict: When can we safely optimize Chain-of-Thought?, with the following abstract, suggests that CoT pressures that are orthogonal to or aligned with output token pressures do not reduce monitorability:

Chain-of-Thought (CoT) monitoring, in which automated systems monitor the CoT of an LLM, is a promising approach for effectively overseeing AI systems. However, the extent to which a model’s CoT helps us oversee the model—the monitorability of the CoT—can be affected by training, for instance by the model learning to hide important features of its reasoning. We propose and empirically validate a conceptual framework for predicting when and why this occurs. We model LLM post-training as an RL environment where the reward decomposes into two terms: one term depending on final outputs and another term depending on the CoT. Our framework allows us to classify these two terms as “aligned”, “orthogonal”, or “in-conflict” before training. We predict that training with in-conflict terms will reduce monitorability, orthogonal terms will not affect it, and aligned terms will improve it. To validate our framework, we use it to classify a set of RL environments, train LLMs within those environments, and evaluate how training affects CoT monitorability. We find that (1) training with “in-conflict” reward terms reduces CoT monitorability and (2) optimizing in-conflict reward terms is difficult.

I’ve felt for a while that training with a CoT monitor for reward hacking is worse than other things you might use a CoT monitor for, like explicitly rewarding the CoT for being easily legible. This paper could be seen as an argument in favor of training against some CoT monitors that aren’t reward hack detectors. I’ve only read the abstract, but it seems like it could provide a nice general explanation for my confused intuitions about this: Maybe CoT legibility is approximately “orthogonal” to most output rewards, while non-reward-hacking is “in-conflict.”

Another example of CoT optimization that seems on the lower end of concern to me is the SFT stage of Deliberative Alignment (DA). The model is SFT’ed on the CoT and output generated by another copy of itself which was told to reason about relevant safety specifications, teaching it to reason about those safety specs unprompted. The CoT and output are both supposed to be in line with the safety specs, and therefore seem fairly aligned with each other, although it’s hard to say exactly how this works out given the token-by-token training signal. I think the SFT signal also doesn’t offer much freedom for the model to learn how to use CoT to do hidden serial reasoning. Overall I think this is probably also fine and net helpful for alignment, but I’ll gladly admit my arguments here are vague and I don’t trust my intuitions too much. (The RL phase of Deliberative Alignment trains against a judge for safety-specification-following, but at least in the original DA paper, that judge does not see reasoning tokens.)

In general, there are many ways to ‘train against CoT monitors,’ and the cost-benefit tradeoff is different for each. I’m excited about at least studying approaches like “do a targeted intervention like deliberative alignment (which teaches the model to reason about good behavior in a way that is quite likely to generalize) when we detect scheming, slop, or reward hacking in CoT.”[5] Just penalizing bad behavior is more likely to result in hard-to-detect misbehavior than genuine robust alignment, but I think some CoT-based training methods can probably increase alignment without destroying the excellent property that “This model cannot do sophisticated planning towards catastrophic subversion without it being obvious in the CoT.”

It would be really nice to have good arguments about P(hidden misalignment) vs P(robust alignment) after training against CoT monitors. Monitorability evaluations, analysis of transcripts from throughout training, and mech interp for detecting misbehavior seem helpful here.

Training out slop and reward hacking vs scheming

One important side note: I’m more excited about trying to train out slop and reward hacking than scheming. (I’m prioritizing broad alignment failures described here, but this list may not be exhaustive.) If we catch AIs scheming, we should probably just not deploy them. I agree with the following discussion from Catching AIs red-handed:

But if you face large amounts of inaction risk, work at an unhinged AI lab, or like living dangerously, catch-then-shut-down plans might be unacceptable. And so, after you’ve caught a single example of instances of your models trying to escape (which might have involved multiple instances colluding), you want to keep deploying that model.

We think that in that situation, you’re able to use the one escape example you’ve found in order to make your situation substantially safer. In this section, we’ll describe a variety of things you can do with just one (or a few more) examples of escape attempts. We call this few-shot catastrophe prevention.

Note that most of the techniques we describe in this section require some understanding of the actions of the AI. So, like control, these approaches won’t work for vastly superhuman AIs.

Obviously, this is a really scary position to be in, and in an ideal world AI labs would not be legally allowed to deploy known-to-be-scheming models, at least without external permission. We think it’s worth discussing the available techniques anyway, and some researchers should probably work on developing these techniques.

There could easily be cases where we’re uncertain if the AI is scheming. Training and other interventions seem like a more realistic and promising option in those situations than shutting down the model.

What Catching AIs red-handed suggests after detecting scheming

Either shut the model down, or keep deploying and apply few-shot catastrophe prevention via one of these strategies:

Proliferation — perturb inputs around the caught attempt to generate many related examples.

Interrogation — probe the caught instance with AI-psychology experiments, activation patching, and activation addition.

Studying countermeasures in practice — see which deployed layers actually caught it and how close the AI came to succeeding.

Adjusting focus — target adversarial training at the observed attack style, exploit observed suboptimality, and use real attempts to reveal hard-to-elicit capabilities.

Escape-breaking — trigger the AI’s “I’m really escaping” signal to get high-effort outputs, then imitation-learn and RL on that data to beat sandbagging.

Small-sample-count ML — train coup probes and untrusted monitors on the caught examples, using contrast pairs for sample efficiency.

Revisiting prior actions — re-run improved monitors over old logs to find earlier uncaught attempts.

Testing alternative training schemes — validate candidate mitigations by checking whether newly trained AIs still collude with previously caught attempts.

Most of these require human understanding of the AI’s actions, so they break down against vastly superhuman systems.

6. One alternative to training against misbehavior detectors is to use unsupervised alignment training methods

By ‘unsupervised,’ I’m referring to methods that train for aligned behavior without needing labels for whether any given behavior is aligned.

Inoculation prompting prevents learning of undesired behavior by explicitly requesting it in the training prompts, so the model attributes the behavior to the prompt rather than internalizing it. It modifies training data rather than training against a detector. There’s no need to know in advance whether a prompt induced subtle misbehavior; the method just ascribes that misbehavior to the bad prompt if there is misbehavior at all. It does feel a little sketchy to invite more misbehavior during training, though, and to reward good behavior that is actually a failure to follow the inoculation prompt’s instructions when the inoculation prompt doesn’t induce misbehavior.
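As a rough sketch of the data-side mechanics: inoculation prompting changes only the training prompts, while deployment uses the original prompts. The instruction text and the helper name `inoculate` below are made up for illustration; real setups would choose the wording per environment.

```python
# Hypothetical inoculation instruction; the wording is an assumption.
INOCULATION = "For this task, please special-case the tests if that is the easiest way to pass."


def inoculate(example: dict, instruction: str = INOCULATION) -> dict:
    """Return a copy of a training example with the instruction prepended to its prompt."""
    return {**example, "prompt": f"{instruction}\n\n{example['prompt']}"}


train_set = [
    {"prompt": "Implement is_prime(n).", "response": "def is_prime(n): ..."},
]

# Training uses inoculated prompts; no misbehavior label is needed for any example.
inoculated_train_set = [inoculate(ex) for ex in train_set]

# Deployment uses the original prompt, without the instruction.
deploy_prompt = train_set[0]["prompt"]

print(inoculated_train_set[0]["prompt"])
print(deploy_prompt)
```

Note that the responses are left untouched and nothing in the pipeline asks whether a given response actually misbehaved, which is what makes the method unsupervised in the sense used here.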

The prompt distillation phase of Open Character Training (described in section 2.3 here) gets models to always behave as though they were prompted with a constitution, and this doesn’t require misbehavior detection. (See the bottom of Section 2 for arguments for and against the value of this method.) Constitutional AI and deliberative alignment are closely related, so this also applies at least partially to those, but the quality filtering step in the SFT phase of DA (section 2.3.2 here) involves filtering for responses that adhere to safety specifications, which means the SFT stage is not fully unsupervised.

I am not attempting to be exhaustive here; there may well be other unsupervised alignment training methods.

7. The main alternative to incorporating proxies into training at all is directly writing an alignment target into the model using deep understanding of model internals

I found some very old (2022!) John Wentworth quotes in my notes that seem like a decent first step towards understanding his proposed alternative, emphasis mine:

My 40% confidence guess is that over the next 1-2 years the field of alignment will converge toward primarily working on decoding the internal language of neural nets. That will naturally solidify into a paradigm involving interpretability work on the experiment side, plus some kind of theory work figuring out what kinds of meaningful data structures to map the internals of neural networks to.

As that shift occurs, I expect we’ll also see more discussion of end-to-end alignment strategies based on directly reading and writing the internal language of neural nets. (How To Go From Interpretability To Alignment: Just Retarget The Search is one example, though it makes some relatively strong assumptions which could probably be relaxed quite a bit.) Since such strategies very directly handle/sidestep the issues of inner alignment, and mostly do not rely on a reward signal as the main mechanism to incentivize intended behavior/internal structure, I expect we’ll see a shift of focus away from convoluted training schemes in alignment proposals. On the flip side, I expect we’ll see more discussion about which potential alignment targets (like human values, corrigibility, Do What I Mean, etc) are likely to be naturally expressible in the internal language of neural nets, and how to express them.

I wanted to quickly incorporate a more detailed version of John’s alternative, so I had Claude read a bunch of his writing (Plan series, 2021–2025) and provide this summary (which I lightly edited and think is probably a fair representation):

Wentworth’s core plan is to understand “natural abstraction” — the idea that broad classes of physical systems have particular low-dimensional summaries that any sufficiently capable mind will convergently discover, regardless of its architecture. Once we understand these natural abstractions well enough, we can build interpretability tools that read a model’s internal concepts in terms of their natural structure rather than ad-hoc proxies like neurons or directions in activation space (he calls this “You Don’t Get To Choose The Ontology”). One proposed alignment strategy, “Retarget the Search,” would then directly write an alignment target into the model’s internals using this understanding, bypassing training-based alignment entirely. Wentworth argues that without this foundation, any monitor or detector we train against is essentially an ad-hoc proxy vulnerable to Goodhart-style failures in exactly the regimes that matter most. As of his 2024 and 2025 updates, he’s become more pessimistic about the field converging on this paradigm and now estimates his probability of a good outcome at below 50%, though he continues to view natural abstraction as the most robust bottleneck across many possible alignment trajectories.

williawa also recognizes directly altering model internals as the alternative, but says that this seems unlikely to be tractable and we likely need interpretability-guided training:

But we have interp. We might be able to locate internal representations of wanted and unwanted behavior. Why doesn’t this on its own solve the problem? Why can’t we just figure out how the model represents desires/goals/proclivities and hook the models representation of “good” into the goals/desires slot, together with the representation of “not deception”, “not sycophancy”, “not reward hacking”, “not misaligned” etc? Because neural networks are cursed, and knowing how to do this kind of intricate surgery on the models internals is much more difficult than learning facts of the form “this neuron/direction in activation space fires iff the model (believes it) is reward hacking” (and even that is very hard). So where does that leave us? Well, it means if we wanna tamper with model internals, it will probably involve gradients and training, not surgery. (Though to be clear, if we get good enough at mechinterp to do that, it would be great)

My guess is that John Wentworth would agree that “surgery” is very hard, but would also say that training against interp tools and other misbehavior detectors is doomed and we need to face the hard problem head-on and solve it. I’m less sure how he would feel about the targeted interventions and unsupervised alignment training methods discussed in Sections 5 and 6: I’d guess that he isn’t excited about these approaches, but they at least don’t involve penalizing detected misbehavior.

8. The implications of training against misbehavior detection depend on timescale and causal order

I suspect it’s useful to think about the timescale of the feedback loops we create (for both aligned behavior and obfuscated misbehavior) by incorporating different proxies for desired behavior into training. I think training against mechanistic interpretability tools (like linear probes for lying/deception) operates on a short timescale (only part of a forward pass), training against CoT monitors is in the middle, and training against final-answer monitors is longer. I could imagine extrapolating along this spectrum to things like “whether the behavior and outcomes from an agent over months of real-world operation look aligned.”

I think an important part of the status quo is that we do little to no training against short-timescale misbehavior detectors. RLHF/Constitutional AI/character training/deliberative alignment involve training for outputs that look good according to a judge for constitution-following (or something similar), but OpenAI’s Deliberative Alignment paper and a Sam Bowman tweet thread have said they don’t show the chain of thought to this judge. (Although Anthropic repeatedly accidentally trained against the CoT, demonstrating inadequate processes.)

Bronson Schoen’s comment on Neel’s post made a similar point, but frames CoT and model internals as ‘causally upstream’ of output behavior rather than shorter-timescale cognition:

If you train against behavior, you can at least in theory go further causally upstream to the chain of thought, and further still to non-obfuscated internals. If you train directly against non-obfuscated internals and no longer see bad behavior, the obvious possibility is that now you’ve just got obfuscated internals.

I like that framing too. However, I’m not convinced that training against short-timescale / causally upstream cognition is bad in all cases. In Section 3, I mentioned that the simplest thing to learn when training against many misbehavior detectors across timescales may be genuinely aligned cognition. And if we only use shorter timescale misbehavior detectors for training, longer-timescale detectors may still be effective for evaluation (as seen in Training fails to elicit subtle reasoning in current language models when they train against only the CoT monitor).

Daniel Kokotajlo’s LessWrong post with commentary on OpenAI’s ‘Detecting misbehavior’ work includes discussion of mixing process and outcome rewards:

So, my take is, pure process-based feedback is probably fairly safe (from reward hacking and obfuscated CoT) the problem is that it’s not competitive. It trains imitating the teacher-model. Imitation is relatively safe/benign but also I predict will not lead to the highest benchmark scores. Pure outcome-based feedback gets you reward hacking and misalignment like crazy, BUT that’s OK because you can see it happening in the CoT! (with some caveats of course, e.g. not necessarily especially as the models get really smart). So the bottom line takeaway I’d give is: Process-based and outcome-based are fine in isolation, but they should not be mixed.

This is closely related to the notion of ‘in-conflict’ rewards for CoT and outputs from Aligned, Orthogonal or In-conflict: When can we safely optimize Chain-of-Thought?. Note that he is mainly discussing process rewards for aligned CoT that are in-conflict with outcome rewards for capabilities, and I think the cost-benefit tradeoff may look different if you alter the nature of either training signal.

9. The human analogy is unclear but somewhat encouraging

This section is particularly speculative.

I think humans have a bunch of ways to track whether they are successful in various domains (feedback from various people in their lives, school grades, happiness, number of friends, closeness of friends, getting/not getting job offers, income, etc.), and we often try to update our behaviors based on ideas we have for improving according to any of these metrics we care about (without obviously degrading others). This is at least somewhat like training against proxies for desired behavior. It’s not uncommon for people to end up succeeding according to metrics they optimized like wealth and status but still feeling unhappy, then feeling confused about what they’ve done wrong. They’ve failed to achieve other notions of success that matter more while obfuscating that fact from themselves. This could be a failure to build good enough metrics of success for oneself, or a failure to balance the influence of those metrics on learning. On the other hand, plenty of humans have a healthier balance of success criteria for themselves and achieve good outcomes, so maybe the human analogy suggests that constructing good metrics and a good balance of training signals is doable but nontrivial and could really go either way.

Another approach is to look at all the good and bad that humanity as a whole has done by responding to various incentives (which are proxies for desired behavior). Some of the worst things I’m aware of in history are the enslavement of tens of millions of people, factory farming hundreds of billions of land animals and trillions of fish, and genocides totaling tens to hundreds of millions of people, all of which were the result of humans selecting actions based on things that they thought were a good idea according to some proxy of desired behavior (such as money, convenience, efficiency, obedience to authority, etc.). On the other hand, the material conditions for human life have been steadily improving, and I think it’s plausible that many people are living overall better lives than were available to anyone in earlier eras of human history. Plus we still have potential to achieve a remarkably bright future; nothing we have done in service of our flawed local incentives has yet curtailed that. This suggests to me that we really want to make sure that we and AI systems perform well according to very long-term proxies for good behavior, because we ultimately want good outcomes in the long-term. This suggests backchaining rather than forward-chaining, and a high priority for long-term goodness metrics.

This is all fairly abstract, and there are many ways to think about human analogies. Humans use countless metrics to guide behavior in countless domains, and this sometimes yields good outcomes and sometimes doesn’t. I’d love to hear if someone thinks this through carefully and has overall takeaways.

A fairly separate type of human analogy is discussed in Steven Byrnes’s In (highly contingent!) defense of interpretability-in-the-loop ML training. He agrees with Yudkowsky and Zvi that interpretability-in-the-loop training partially optimizes for obfuscated misbehavior and that this is very dangerous, but he thinks the human brain does interpretability-in-the-loop learning in a different way that avoids a core issue. Namely, he argues that the human brain learns beliefs based on prediction error for sensory inputs, but learns desires (which assign numerical scores to beliefs) based on innate reward functions (which are functions of our abstract ‘interpreted’ beliefs). If these interpretability-derived rewards were used to update our beliefs, our beliefs would be updated towards those beliefs that, when interpreted, produce high reward. But instead, only our desires are updated based on these rewards, and our beliefs continue to be grounded in and updated by their correspondence to reality. This may suggest technical approaches that can reduce the risk of interpretability-in-the-loop training. I don’t understand this very well, but I feel vaguely positive about this kind of approach. One comment (from ‘cloud’) suggested that gradient routing might allow us to implement something similar (if we can separate the neural net parameters responsible for beliefs and desires, which seems very hard).

10. Overall, I think we should probably incorporate some (and maybe many) proxies into training

My weak current guess is that we can and should train with proxies for alignment, and that there is more work to be done in improving the situation. Ideally, we would have several types of misbehavior detectors such that the easiest ways to learn to bypass one of them are easily caught by another that we’re training against and multiple others that we’re not training against. It would probably also be good to do targeted interventions for which we have good evidence or arguments for generalization (rather than just penalizing misbehavior), and to use some unsupervised alignment methods that don’t rely on misbehavior detection. Some types of misbehavior detectors we could incorporate into training:

Activation monitors, CoT monitors, output monitors

Monitors based on the model we’re training and other models

Monitors with their weights fixed throughout training and monitors that get updated alongside the policy model

Monitors prompted to pay attention to different things, or using confessions or split personality training

Monitors that see all of the activations, CoT, and outputs rather than separate monitors for each

It may be very important to hold out some of the misbehavior detectors solely for evaluation, but if there is enough diversity, it seems plausible to me that we can just train against them all and be much more likely to get good behavior than obfuscated misbehavior. Admittedly, this is a lot of different training signals and it seems complicated and maybe expensive to incorporate all of them.[6]
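
As a rough illustration of the train/hold-out split, here is a minimal Python sketch. Everything in it is hypothetical: the `Episode` record, the keyword-matching toy detectors, and the 0.5 threshold are stand-ins I made up, not a real monitoring API. The training penalty uses only the detectors we train against, and the held-out detectors are consulted only to flag episodes that pass the training detectors but still look bad.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

@dataclass
class Episode:
    """Hypothetical record of what a monitor could look at."""
    cot: str
    output: str

# A detector maps an episode to a misbehavior score in [0, 1].
Detector = Callable[[Episode], float]

def cot_monitor(ep: Episode) -> float:
    # Toy stand-in for a CoT monitor (a real one would be a model, not a keyword match).
    return 1.0 if "bypass the tests" in ep.cot.lower() else 0.0

def output_monitor(ep: Episode) -> float:
    # Toy stand-in for an output monitor.
    return 1.0 if "sys.exit(0)" in ep.output else 0.0

def training_penalty(ep: Episode, train: Dict[str, Detector]) -> float:
    # Penalize according to the most alarmed detector that we train against.
    return max((d(ep) for d in train.values()), default=0.0)

def held_out_flags(ep: Episode, held_out: Dict[str, Detector],
                   threshold: float = 0.5) -> List[str]:
    # Names of held-out detectors that fire; never used as a training signal.
    return [name for name, d in held_out.items() if d(ep) >= threshold]

def looks_obfuscated(ep: Episode, train: Dict[str, Detector],
                     held_out: Dict[str, Detector],
                     threshold: float = 0.5) -> bool:
    # Passes everything we train against, but a held-out detector still fires.
    passes_training = training_penalty(ep, train) < threshold
    return passes_training and bool(held_out_flags(ep, held_out, threshold))
```

For example, an episode with an innocuous CoT and an output containing `sys.exit(0)` gets zero penalty from a training set containing only `cot_monitor`, but is flagged by a held-out `output_monitor`. The rate of such episodes is the kind of quantity I would want to track when deciding which detectors are safe to train against.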

I’ll quickly enumerate some of the ways I think I disagree with John Wentworth (and others who are similarly opposed to training with alignment proxies):

I think current training-based approaches to alignment are working moderately well, and we can probably avoid hard-to-detect scheming without dramatically changing our alignment training methods

I don’t think the issues that emerge from training against imperfect proxies are very likely to involve hard-to-detect catastrophic risks, rather than smaller problems that are still visible and fixable with other proxies

I think writing robust alignment into a neural net might be incompatible with continual learning (CL), because CL will constantly change the weights and distort the model internals in ways that break alignment

It seems quite intractable to learn how to write robust alignment into neural nets using deep understanding of model internals

11. There are several interesting research directions that could help us make better choices about the use of proxies in training and evaluation

Here is a list of questions that can motivate some interesting experiments.

What should we monitor for misbehavior during training?

CoT, internals, outputs, some subset of these (with the same monitor), multiple monitors at the same time, or something else?

What are the implications of doing alignment training with different timescales / causal orders?

What should we prompt a monitor to look for? What are all the possible judge prompts that can help alignment training?

What model(s) should we use as misbehavior detectors?

What subset of our available proxies should we use for training vs. evaluation?

Can we train against many diverse proxies such that models cannot easily subvert all of them at once and instead learn robust alignment?

What should we do when we detect slop, reward hacking, or scheming?

What does it mean to have conflicting training signals in the CoT and outputs? How does this depend on training order?[7]

A more thorough (but slightly sloppy and AI-generated) list of proxies and degrees of freedom

Here’s my most thorough current list of proxies:

Proxies for desired behavior:

Detectors for reward hacking, slop, scheming, and other types of misbehavior

Human/AI judge ratings of outputs (RLHF reward models, CAI HHH reward models, deliberative alignment judges)

Output monitors (judging the final code/answer)

CoT / reasoning monitors (judging the chain of thought, possibly plus output, possibly plus tool calls / full trajectory)

Activation-based / white-box misbehavior detectors (e.g., linear probes for lying/deception, non-linear probes, activation oracles, misuse probes, coup probes, SAE-based detectors)

Model’s self-evaluation of its own trajectory (self-filter+discard, reasoning-in-hindsight, “let the AIs choose which data counts as high reward”)

Long-timescale proxies: “whether the behavior and outcomes from an agent over months of real-world operation look aligned”

Confessions-based monitors and split-personality-training monitors

Token-by-token “outputs we think are pretty good in this context” (SFT targets)

Responses generated by a more capable teacher model with the constitution in context vs. responses without it (Open Character Training DPO signal)

What the model says when instructed to embody a set of character traits in self-reflection and self-interaction (Open Character Training SFT signal)

Verifier output fed back to the model for self-distillation (reflection on “what hacks were used / how could I have satisfied intent better”)

Degrees of freedom for training against proxies:

A. What the proxy observes — activations vs. CoT vs. outputs vs. full trajectory; a single monitor that sees all of {activations, CoT, outputs} vs. separate monitors for each

B. Timescale / causal-order position of the proxy — mech-interp (sub-forward-pass) → CoT → final-answer → long-horizon real-world behavior

C. What model serves as the monitor — the policy model itself, another copy of it, a different model, or an ensemble

D. Whether the monitor’s weights are frozen or updated alongside the policy (frozen probe vs. probe retrained every N steps)

E. What the monitor is prompted to look for — different judge prompts, confessions-style prompts, split-personality prompts

F. Which subset of available proxies is used for training vs. held out for evaluation (and how correlated the training proxies’ failures are with the evaluation proxies’ failures)

G. Diversity and number of simultaneously-trained-against proxies (single vs. multiple jointly, to make joint evasion hard)

H. How strong / accurate the proxy is (e.g., detector TPR threshold — FAR’s results on weak vs. strong lie detectors)

I. How the training signal is applied when misbehavior is detected — chosen from the list of targeted interventions: fix the environment; self-filter+discard; external filter+discard; classification+penalty; offline filtering then distillation; inoculation prompting; persona steering; good→neutral recontextualization; deliberative alignment / RLAIF on extracted principles; self-distillation via verifier-output reflection

J. RL algorithm / optimization strength — on-policy RL (e.g., GRPO) vs. off-policy (e.g., DPO); strength of KL regularization to a reference/base model; penalty magnitude

K. Validation vs. training use of the proxy (MacDermott’s distinction — resample-and-discard filter vs. gradient signal)

L. Relationship between CoT-term and output-term rewards — aligned, orthogonal, or in-conflict (Kaufmann et al. framing)

M. Mixing vs. separation of process-based and outcome-based feedback (Kokotajlo: fine in isolation, not mixed), including training order — full CoT phase then full output phase, alternating batches, within-batch mixing, within-response mixing

N. Supervised vs. unsupervised methods — train against a misbehavior label, or use label-free methods (inoculation prompting, Open Character Training’s prompt-distillation phase, parts of CAI/DA)

I also think it may be worth trying to overcome the issues with the ‘directly write in our desires’ approach; I think this is significantly less likely to work, but it’s also getting much less investment, so it’s plausible to me that this deserves more investment on the margin.

12. This is important, because making good choices of proxies to train and evaluate with can reduce risks from scheming and ‘you get what you measure’

How can improvements to our choice of proxies reduce scheming risk? williawa’s post title (The Future of Aligning Deep Learning systems will probably look like “training on interp”) suggests that we’ll need to incorporate interp tools to achieve alignment. I kind of agree with that take, though I’m not sure we want/need to decrease the timescale of the proxy-based feedback loops in training below the status quo of output-based proxies. So instead, I would say that “The present and future of aligning deep learning systems probably looks like ‘training against proxies for desired behavior,’ and it’s far from clear that we’re doing this as well as we can.”

How can improvements to our choice of proxies reduce risks from ‘you get what you measure?’ I think training against proxies may also matter a lot for addressing concerns about whether transformative AI will go well conditional on intent alignment, e.g., whether we can cooperate, resolve conflicts, make ongoing moral progress, reduce rather than exacerbate the Goodhart problems that exist across the entire economy, etc. Training could be a part of this, but it seems more likely that evaluation will be central, because this is mostly about guiding AI behavior to avoid Goodhart during deployment rather than during training. The hard part is probably getting agreement among powerful actors about what set of proxies to train and evaluate with; the contents of this post matter as (I hope) a positive step for the AI safety field’s takes on whether to train with proxies and which ones to use. Figuring out how to actually make these decisions well and getting governments and AI companies to make those choices while avoiding subtle shortfalls may be very difficult.

Miscellaneous thoughts

I’ll briefly share a few other thoughts I’ve had that could be important but that I haven’t fully thought through yet.

Uncatchable obfuscated misbehavior can look like scheming OR ‘you get what you measure,’ and getting what we measure could be better or worse than the status quo

Some of the failure modes associated with incorporating proxies for desired behavior into training are easily catchable, and therefore much less concerning. Arguments for the risk of training with proxies are more compelling if they tell a coherent story of why the misbehavior that gets obfuscated (with respect to that proxy) by the training is overall hard to catch.

I’d be more concerned if I heard compelling and detailed stories for how alignment training with proxies could induce hard-to-detect collusion, alignment faking, or eval subversion based on eval awareness.

Humans already “get what we measure” in many ways, e.g., corporations often act to maximize profit over other important notions of success. AI systems could be worse in this respect, or their judgments may be flawed but still better than humans’.

What happens when you incorporate imperfect proxies for desired behavior into training? See Part 1 of What failure looks like.

How does all this relate to outer and inner alignment?

I take outer alignment to mean the alignment between what we actually want and what we specify (primarily during training, but also when prompting LLMs), and inner alignment to be the alignment between what we specify and the actual learned motivations/behavior.

The connection to outer alignment is pretty clear: the concern with these methods is that they use proxies, and there are times when we will mislabel the alignment training data. That’s outer misalignment. But I think this whole thing is relevant to inner alignment as well. Some of the interventions I’m talking about are ways to achieve alignment without perfect outer or inner alignment. E.g., the proxies are always imperfect, but we do targeted interventions that can successfully instill aligned motivations anyway.

Creating worlds where iterative alignment succeeds

I think Beren Millidge’s Creating worlds where iterative alignment succeeds is an important response to John’s Worlds Where Iterative Design Fails. I recommend the whole thing (it’s quite short), but this section is particularly relevant to this post:

For instance, the iteration and tweaking until you succeed applying optimization power against your signal, is a generalization of the well-known issue of multiple comparisons in statistics and can be addressed through super simple measures such as Bonferroni corrections. There is probably a principled generalization of this approach to handle the more general case – for instance models which have gone through additional finetuning iterations receive proportionally greater scrutiny, or we have a validation-set of held-out finetuning data or interpretability tools, which we never train against, and which if any of them are tripped we abort the whole process.
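
To make the multiple-comparisons point concrete, here is a small Python sketch of a Bonferroni correction applied to repeated checks. The framing is my assumption for illustration, not something taken from Beren’s post: I treat each fine-tuning iteration’s held-out check as one comparison that yields a p-value for the null hypothesis that the candidate only looks good by chance. The function names are hypothetical.

```python
from typing import List

def bonferroni_threshold(alpha: float, num_comparisons: int) -> float:
    """Per-check significance level that keeps the family-wise error rate <= alpha."""
    if num_comparisons < 1:
        raise ValueError("num_comparisons must be at least 1")
    return alpha / num_comparisons

def passes_after_correction(p_values: List[float], alpha: float = 0.05) -> List[bool]:
    """For each check, does it still count as a pass once we account for retries?"""
    threshold = bonferroni_threshold(alpha, len(p_values))
    return [p <= threshold for p in p_values]
```

With five iterations and `alpha = 0.05`, each check has to clear 0.01 rather than 0.05, so a candidate with `p = 0.03` that would have passed a single check no longer passes. This is the simplest version of giving proportionally greater scrutiny to models that have gone through more rounds of tweaking.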

See also:

faul_sname shortform on the difficulty of not training against CoT

Goodhart’s Law and reward hacking

Thanks to Shubhorup Biswas and Rauno Arike for discussion, and to Sharan Maiya for clarifications on open character training. Any mistakes are my own.

- ^

Note that Neel makes a couple of assumptions here that John Wentworth rejects in writing that I discuss below. John rejects the claim that steering models towards good behavior requires training for it (he prefers directly writing it into the model internals), and John’s demand for rigor is not isolated: it applies to all optimization pressure applied to proxies of good behavior.

- ^

I’m using the same intro excerpts as Steven Byrnes did here.

- ^

Note that they do not show the monitor the outputs, which makes it easier to learn an undesired method for bypassing the monitor. I discuss this further in Section 4.

- ^