Philosophy PhD student, worked at AI Impacts, then Center on Long-Term Risk, then OpenAI. Quit OpenAI due to losing confidence that it would behave responsibly around the time of AGI. Not sure what I’ll do next yet. Views are my own & do not represent those of my current or former employer(s). I subscribe to Crocker’s Rules and am especially interested to hear unsolicited constructive criticism. http://sl4.org/crocker.html

Some of my favorite memes:

(by Rob Wiblin)

(xkcd)

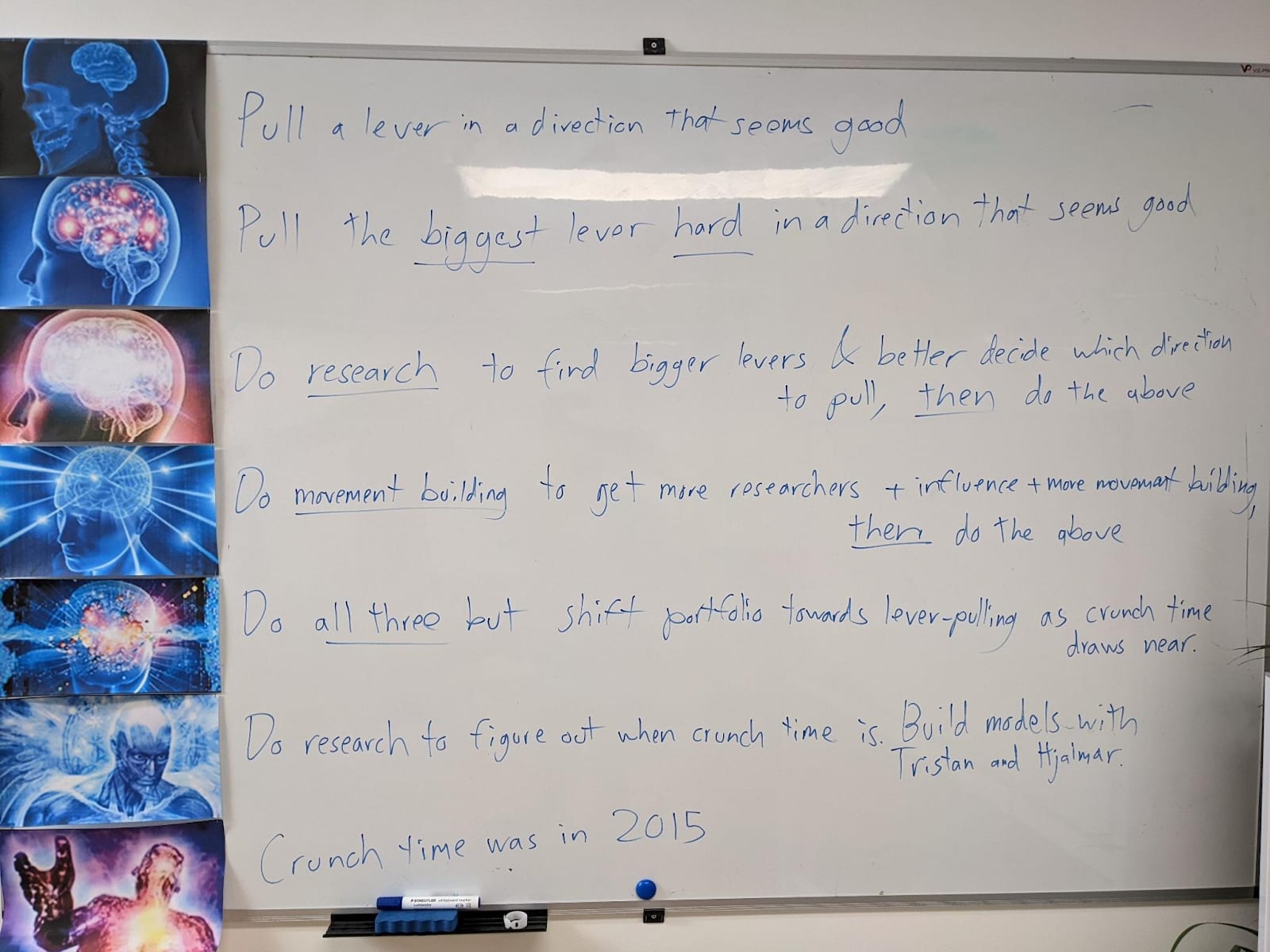

My EA Journey, depicted on the whiteboard at CLR:

(h/t Scott Alexander)

OK, glad to hear. And thank you. :) Well, you’ll be interested to know that I think of my views on AGI as being similar to MIRI’s, just less extreme in various dimensions. For example I don’t think literally killing everyone is the most likely outcome, but I think it’s a very plausible outcome. I also don’t expect the ‘sharp left turn’ to be particularly sharp, such that I don’t think it’s a particularly useful concept. I also think I’ve learned a lot from engaging with MIRI and while I have plenty of criticisms of them (e.g. I think some of them are arrogant and perhaps even dogmatic) I think they have been more epistemically virtuous than the average participant in the AGI risk conversation, even the average ‘serious’ or ‘elite’ participant.