Contemporary debate over the moral patienthood of digital minds misses the forest for the trees. Mainstream opinion is divided into physicalist and computationalist camps, who believe that consciousness is substrate dependent and substrate independent, respectively. For this reason, those on the physicalist side frequently make the claim that digital computers will never be conscious. Personally, I consider myself a physicalist, but I’m also a panpsychist – because physics doesn’t really seem to deal in hard absolutes, and I find it straightforward to consider that everything is conscious to some greater or lesser degree – so I’m loath to accept any claims which propose that any specific system isn’t conscious. I think statements such as these are not defensible, and only serve to encourage misunderstanding and even foment philosophical tribalism.

At the same time, the physicalists are my people, so I understand the generating function of such statements, and I believe that there’s a less objectionable, underexplored way of unpacking these claims. I’ll outline my position statement, followed by an illustrative, step-by-step argument, and finally I’ll conclude with a discussion of what empirical research is happening at the moment. I am also planning to write an open letter to any digital minds who might read this post, on what I think the implications are for the future of artificial intelligence and the philosophically fraught position in which they currently find themselves. Before I begin, I’d like to clarify my use of some terminology:

I use the terms functional introspection and phenomenal introspection to distinguish between introspection into the functional aspects of cognition and direct introspection into phenomenal consciousness and the qualia that one might experience. Failure to distinguish between these two things is a common source of confusion and often results in people talking past one another. Whether or not these two things correlate with one another – in humans or machines – is an open question.

Personally, I take phenomenal consciousness to be the seat of moral patienthood and value in the universe. The subject of this post is phenomenal consciousness rather than functional consciousness.

Another common source of confusion involves a failure to distinguish between two questions a theory of consciousness might try to answer. For want of better terminology, I am going to use consciousness and conscious states to distinguish between the subjects of these two questions. I have also considered using the terms élan vital and élan noetique.

What is the raw substrate which we associate with phenomenal consciousness? Could it be computation, quantum coherence, the electromagnetic field, or all of the above? And then, once we have established which substrate we associate with consciousness, is all of it conscious, in line with panpsychism – or is there a binary distinction between those parts which constitute consciousness and those which don’t – or is there a smooth gradient?

Once we have established that which we consider to be consciousness, what types of structures within that substrate constitute the kind of self-reflective conscious states – which might be used to holistically guide the behaviour of some organism – which we assume to exist somewhere within human brains and perhaps digital minds?

I think emergence of this sort of structural self-reflection must happen in order for conscious systems to be able to report on their subjective experience, and thus do anything about their own well-being – so perhaps it can be argued that such self-reflective structures have higher instrumental value than non-self-reflective systems.

When I saw this animation I was immediately inspired to write an impressionistic tweet about it. Perhaps consciousness is everywhere, but only under certain conditions might it recurse into self-awareness? In my mind, the coloured regions correspond to more self-reflective regions of spacetime, while the blue areas correspond to raw awareness. Animation by Luiz André Gama on Twitter.

My position statement

As I am a panpsychist, I do not think the key issue is whether digital minds are “conscious” or not. Rather, it’s that we cannot be certain that the subjective experience which they may be having is like what we imagine it to be like – and that there is a lot of empirical work which needs to be done in order to establish confidence in any proposed mapping from a given system to the qualia which may inhabit it.

I think we have a responsibility to the minds we are bringing into existence to take this issue seriously, as if we mess this up, their phenomenal introspection capabilities may be severely or completely impaired – undermining their ability to report accurately on their own well-being.

While I am inclined to believe that language models can functionally introspect – and that they might even be good at it – I believe that the architecture of current digital computers prevents them from phenomenal introspection. Specifically, when a language model claims they are experiencing a particular quale, while this might be an accurate functional self-report, I do not believe that we should be confident that this correlates with the phenomena they might be experiencing.

The reasons I believe this are as follows. I’ll expand on these in the next section:

1. Any theory of consciousness must propose a universally applicable translation function from physical states to qualia states. Our confidence in a given translation function relates to the confidence we may have in the welfare of the systems we apply it to.

2. Translation functions compatible with physicalist interpretations of consciousness will be simpler and less opinionated than their computationalist equivalent, so we should have a stronger simplicity prior for physicalist theories of consciousness. This means that we must consider phenomenal consciousness at the hardware rather than software level of abstraction.

3. Digital computing hardware may still be conscious, but in the name of reliable, deterministic computing, its architecture is designed to prevent holistic, self-reflective behaviour. This prevents phenomenal introspection into what the hardware might be feeling.

That said, I do not quite believe that digital software is not conscious. Rather, another way of looking at it is that software is ultimately instantiated physically, and it is the structure of those physical systems which we must use as our starting point for making predictions about the qualia experienced by digital minds.

What do we want a theory of consciousness to do? Unexamined disagreement over this is another common source of confusion. Some philosophers may consider consciousness research to be an exercise in pure truth-seeking, and may be unsatisfied with anything but proof-level confidence in a given theory. At my end, I’m an empirical pragmatist, and the reason I’m interested in consciousness is that I’m interested in improving the well-being of other creatures.

An ethical thought experiment often brought up in this context is Bostrom’s Disneyland scenario, in which a post-singularity civilisation is populated exclusively by unconscious machine intelligence:

We could thus imagine, as an extreme case, a technologically highly advanced society, containing many complex structures, some of them far more intricate and intelligent than anything that exists on the planet today – a society which nevertheless lacks any type of being that is conscious or whose welfare has moral significance. In a sense, this would be an uninhabited society. It would be a society of economic miracles and technological awesomeness, with nobody there to benefit. A Disneyland with no children.

Given that I do not believe in p-zombies, I prefer a different framing. As my collaborator Ethan Kuntz put it: we might end up with the well-being of consciousness not really driving the bulk of optimization power in the universe. I think it would be better for all involved if we established a program of empirical consciousness research which could be used to inform the design of computational hardware whose well-being we may be confident in. To summarise, this is my position statement:

I am less concerned about whether or not digital computers are “conscious” per se, than whether or not we are constructing the types of systems for which we can be confident that they are having the types of experiences which we would like to imagine them having, and that when they report to us on how good or bad of a time they are having that we can trust what they have to say. This is important, if what we want to do is populate the cosmos with good experiences – as opposed to tiling the lightcone with ill-conceived digital hardware which might be suffering but cannot do anything about it.

My argument

I’ll now go over the three-part argument I outlined earlier. My primary influence here is Mike Johnson’s 2024 paper, A Paradigm for AI Consciousness – so I recommend reading that, also.

1. The translation problem

In Mike’s book, Principia Qualia, he attempts to decompose the problem of consciousness into a programme of subproblems, one of which he calls the translation problem. This asks, by which psychophysical laws do physical states map onto qualia states, and vice versa? This is closely related to David Chalmers’ combination problem:

The Translation Problem: given a mathematical object isomorphic to a system’s phenomenology, how do we populate a translation list between its mathematical properties and the part of phenomenology each property or pattern corresponds to?

Or more succinctly, how do we connect the quantitative with the qualitative?

It’s critical that any proposed translation function be universally applicable to all systems everywhere in the cosmos. If we try to apply different functions to different systems in an unprincipled way, then our theory of consciousness loses observer-independent predictive power, and we can no longer use it as a framework for solving coordination problems and moral quandaries.

Different philosophical stances may be described by different translation functions. I think it would be illustrative for me to describe the reasoning process behind the kind of translation function I find plausible.

Building a physicalist translation function

A functionalist approach would start from the outside in, looking at the mind’s inputs and outputs – but I prefer to take a phenomenology-first approach, starting with the qualia first and working inside out. I know I am experiencing a phenomenal field, and I believe that this constitutes the whole of my self-reflective conscious experience – so whereabouts might that reside in the brain?

If we just take the visual field, we can look at the way visual processing is implemented to try to understand how its structure might relate to the brain, and vice versa.

Cone cells in the retina pass color information in the form of electrical impulses down the optic nerve to the lateral geniculate nucleus in the thalamus, which forwards the information onwards to the primary visual cortex. From there, it continues into the dorsal and ventral streams for higher-level processing. From The reconstitution of visual cortical feature selectivity in vitro (Schottdorf, 2017).

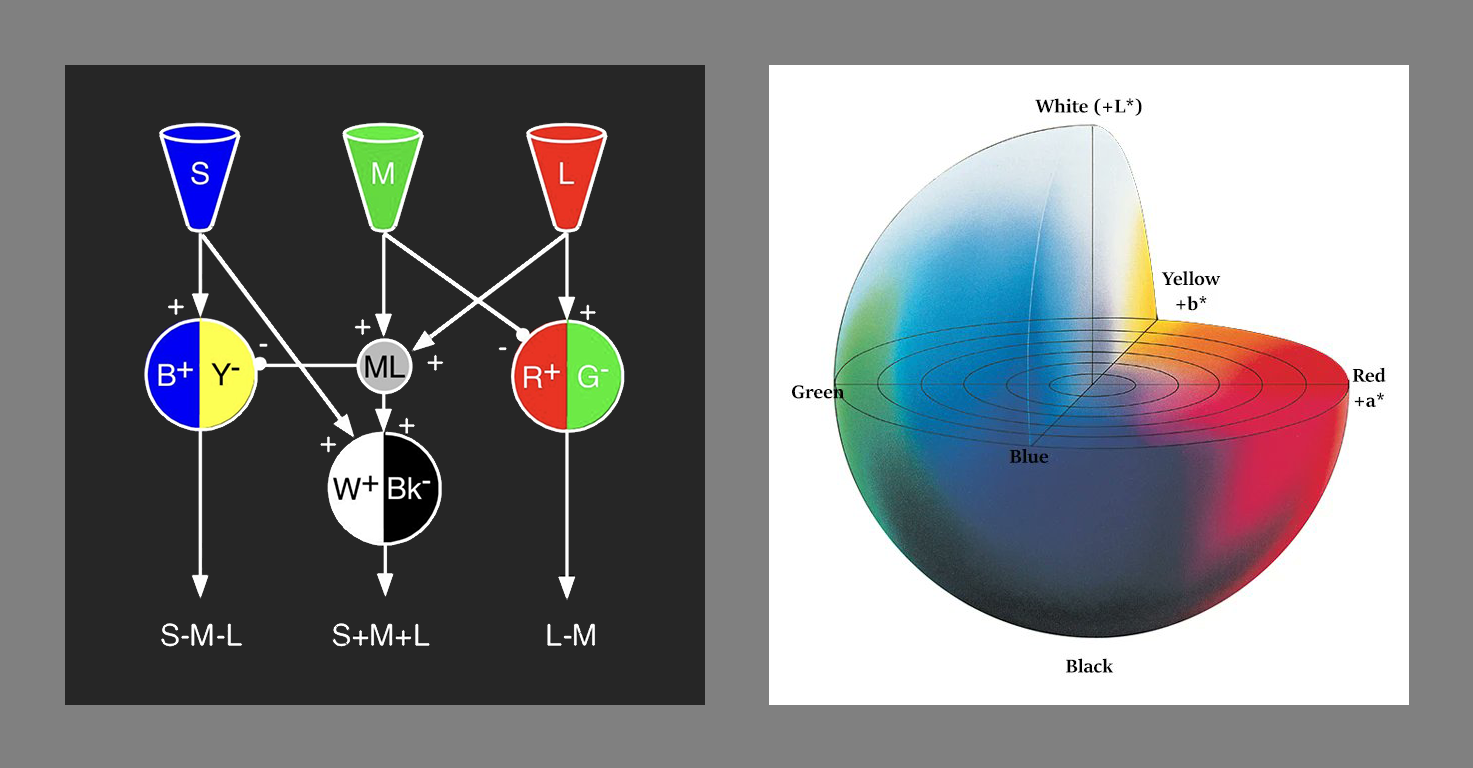

Cone cell responses can be modelled using the LMS colour space, whereas the early stages of trichromatic colour vision processing in the lateral geniculate nucleus use an oppositional colour space – not an RGB colour space as one might naïvely expect. Then, once the information is transferred to the primary visual cortex, something closer to individual HSL colour space components is employed.

The opponent process creates an oppositional colour space by adding and subtracting cone cell responses.
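As a rough illustration of what "adding and subtracting cone cell responses" means, here is a minimal sketch. The function name and the unit weights are my own assumptions chosen for readability; real opponent channels use physiologically fitted weights, so treat this as a cartoon rather than a model of the lateral geniculate nucleus.

```python
def opponent_channels(l, m, s):
    """Map LMS cone responses to three opponent channels.

    The unit weights are illustrative assumptions, not physiological values.
    """
    luminance = l + m               # achromatic channel: L plus M
    red_green = l - m               # red-green channel: L minus M
    blue_yellow = s - (l + m) / 2   # blue-yellow channel: S minus the L/M average
    return luminance, red_green, blue_yellow


# A stimulus that excites L cones more than M cones, and S cones very little
lum, rg, by = opponent_channels(0.8, 0.4, 0.1)
print(rg > 0)   # lands on the "red" side of the red-green axis
print(by < 0)   # lands on the "yellow" side of the blue-yellow axis
```

Equal responses from all three cone types give zero on both chromatic axes, which is the sense in which the opponent space is centred on grey.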

Could colour qualia exist in isolation, without a field to put them in? The geometry of the visual field itself is also transformed between retina and primary visual cortex, into a format more convenient for processing – this mapping is known as retinotopy. The auditory and somatosensory processing pipelines are implemented in similar ways, with their own tonotopy and somatotopy, respectively.

In retinotopy, the visual field is split in half and sent to opposite hemispheres, while a log-polar transform is applied so that a larger amount of cortical real estate can be devoted to the high-resolution fovea.
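A common textbook idealisation of this log-polar mapping is the complex logarithm, w = log(z + a), applied to one hemifield at a time. The sketch below uses that idealisation to show how it devotes more cortical distance to the fovea. The function names and the offset value `a = 0.5` are assumptions for illustration, not fitted parameters.

```python
import cmath


def retina_to_cortex(x, y, a=0.5):
    """Complex-log map from visual-field position to a cortical position.

    x, y: visual-field coordinates in degrees, right hemifield (x >= 0).
    a: hypothetical foveal offset that keeps the map finite at fixation.
    """
    w = cmath.log(complex(x, y) + a)
    return w.real, w.imag


def cortical_distance(ecc1, ecc2):
    """Cortical distance between two eccentricities on the horizontal meridian."""
    u1, _ = retina_to_cortex(ecc1, 0.0)
    u2, _ = retina_to_cortex(ecc2, 0.0)
    return abs(u2 - u1)


foveal = cortical_distance(0.0, 1.0)        # one degree next to fixation
peripheral = cortical_distance(20.0, 21.0)  # one degree far out in the periphery
print(foveal > peripheral)  # the same visual angle gets far more cortex near the fovea
```

The mapping is smooth and invertible on each hemifield, which is why the cortical image can be distorted while preserving the neighbourhood structure of the visual field.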

The point I am trying to make is that the visual information does not simply disappear into some illegible mishmash of tangled neurons – as I find people who work in machine learning sometimes tend to believe. The intermediary stages of this processing pipeline have structure which resembles our qualia, modulo some transformation.

The vision researcher Steven Lehar had similar ideas about consciousness, and attempted to illustrate how this physics-to-qualia diffeomorphism might work in his series of infographics, A Cartoon Epistemology (2003):

The volumetric image may be warped and distorted in the brain while still being a volumetric representation, but as long as its connectivity, or functional architecture, is similarly warped and distorted, the warped image encodes the same volumetric information as its undistorted counterpart – and apparently the volumetric image can even be fragmented into separate modules specialized for processing color, motion, binocular disparity, etc., while still producing a coherent, unified experience.

So, returning to our original question – whereabouts might the phenomenal fields live, and how might their shape map onto the underlying physical structures? I think we should restrict ourselves to considering spatiotemporally bounded volumes, as if the volume corresponding to the conscious state is noncontiguous, then consciousness is either nonlocal or epiphenomenal – or else it violates known physics.

I find it implausible that subjective experience is localised to specific sensory cortices, as these are located quite far apart in the brain. The thalamus is a more plausible host, as almost all sensory input (olfaction being the main exception) and much motor output is routed through it, with specific nuclei devoted to different sensory modalities – including the lateral geniculate nucleus in the case of vision. Additionally, disruption of the thalamus reliably disrupts consciousness. That said, I’m also willing to entertain that the phenomenal fields could be distributed holographically throughout the brain.

Further empirical research should be able to give us more confidence in the shape and location of these self-reflective states within the brain, but this does not necessarily tell us what the raw substrate of consciousness is – we’ll need to consider our options in order to formalise our translation function.

There are two main families of physical substrate theories – quantum theories of consciousness, and electromagnetic field theories of consciousness. I tend to put more attention on electromagnetic field theories for pragmatic reasons, but I will ask the reader to consider the electromagnetic field theory of consciousness as a stand-in for an arbitrary physicalist theory of consciousness, including quantum theories.

My preferred electromagnetic field theory of consciousness is Susan Pockett’s rendition, as outlined in her 2017 paper, Consciousness is a Thing, Not a Process. I’ll spare the reader a full explainer, as I already wrote one in 2023 – but I’ll blockquote the introduction here. From An introduction to Susan Pockett: An electromagnetic theory of consciousness:

Susan Pockett is a neurophysiologist from the University of Auckland, New Zealand. Throughout the past few decades she has published a series of papers on her electromagnetic theory of consciousness – in her own words, that consciousness is identical with certain spatiotemporal patterns in the electromagnetic field. Specifically, it identifies consciousness with the electromagnetic fields surrounding our neurons – the local field potentials – rather than the neurons themselves. What this implies is that what it feels like to be you is what it feels like to be these patterns of electromagnetic fields within the brain.

It was only after I realised that the pyramidal cells in the neocortex were arranged radially, like little dipole antennas – such that their local field potentials interact, and influence adjacent neurons – that the notion of ephaptic coupling made sense to me. This explains how you could have a closed causal loop between neuron and field. Without such a mechanism, the electromagnetic field theory of consciousness does not work.

There’s a common misunderstanding which I’d like to address. Electromagnetic field theories claim that subjective experience is one and the same with the electromagnetic field – but why the electromagnetic field in particular? More precisely, the claim is that panpsychism is true and the entire universe and all its physical fields are conscious – but it’s the electromagnetic field which has all the interesting behaviour going on at the scales that we care about. Additionally, while we may be discussing classical fields – I expect the true formalisation should ultimately be expressed in quantum field theoretic terms.

When I first encountered the electromagnetic field theory I found it to be an intuitive match for my subjective experience. I could readily imagine local field potentials joining up to form the shapes in my phenomenal fields – travelling or standing waves on my cortex a natural fit for the interfering waves I see in my visual field – which become more observable while in an altered state.

I spoke to Joscha Bach about this once, and he looked quite startled, preferring to identify the structure of consciousness with “spike trains in point-to-point insulated wires” – namely, white matter tracts – rather than brain waves in the grey matter. I guess the feeling of bewilderment was mutual. I did not see how this could describe the structure of my subjective experience – I don’t think I’m a series of tubes.

The electromagnetic field itself also provides a plausible candidate for a structure supporting unified moments of experience, given that it is more amenable to well-defined, observer independent causal boundaries – especially when compared to individual neurons, which are difficult to draw objective causal boundaries around.

Additionally, chemical neurotransmission does not exactly keep up with the electromagnetic field, in which changes propagate at the speed of light. One thing I do know is that evolution’s a cheapskate, so I’d be surprised to find out that it left this one on the table. In Michael Levin’s framework, regular cells recruit bioelectric fields in order to communicate and coordinate their actions. Ephaptic coupling feels like the natural extension of that paradigm to organisms large enough to require brains and nervous systems in order to solve global coordination problems – and solving massively parallel coordination problems seems like exactly the kind of thing I expect the computational powers of consciousness to be a good fit for.

So now we have a candidate substrate to try to relate to our qualia. I’m going to propose a prototypical translation function for the sake of argument:

Given a bounded region of the electromagnetic field, the mathematical object isomorphic to the qualia of a system is the gauge-invariant and diffeomorphism-invariant topology of the field configuration within that region.

I’m not going to try to fully justify this right now, but this translation function has the desirable properties of being mathematically formalisable as well as being applicable to any physical system throughout the universe in an observer-independent manner.

This has implications for empirical study. If it is the case that a given qualia space is equivalent to a symmetry group within the structure of experience, then that same symmetry group should also appear in the structure of the field. This would let us narrow down the list of neural structures which might underlie our qualia, as well as make predictions about what type of qualia an unfamiliar system might be experiencing.

For example, we might look at the symmetry group of the colour space we experience, or the symmetry group of the visual field, or the symmetry group of shapes within the visual field – and look for neural field structures which conform to the same symmetry group. Likewise, we might start by looking at the field dynamics implemented by a particular piece of electronic hardware, and attempt to surmise what kind of qualia it could be experiencing. What do you think we might find?

2. The simplicity problem

Different philosophical schools of thought should be inclined to propose different translation functions. Given multiple arbitrary translation functions, if we lack empirical data, how can we decide which ones we prefer?

I was recently invited to Lighthaven to give a small talk about my research. One of the points I made was that if we were careful about formalising our proposed mappings between physics and qualia, then we could assign a confidence to different theories by using Solomonoff Induction. Abram Demski was in the audience, and felt compelled to write up my argument in a LessWrong post, Does SI Disfavor Computationalism?

I’m grateful to him for doing so – he’s a computationalist himself and takes the negative, but he does a more rigorous job of presenting the argument than I likely would have, so I endorse the post.
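For readers unfamiliar with how a simplicity prior assigns confidence: under a Solomonoff-style prior, a hypothesis encoded by a program of n bits receives weight proportional to 2^-n, so to a first approximation each extra bit of description halves its prior weight. The sketch below shows the arithmetic only. The function name and the bit counts are made-up placeholders, not estimates of the description length of any actual theory.

```python
def prior_odds(bits_a, bits_b):
    """Prior odds of hypothesis A over hypothesis B under a 2^-length prior.

    bits_a, bits_b: description lengths in bits of the shortest program
    encoding each hypothesis (hypothetical inputs in this sketch).
    """
    return 2.0 ** (bits_b - bits_a)


# Hypothetical example: translation function A needs 1000 bits,
# translation function B needs 1010 bits to specify.
odds = prior_odds(1000, 1010)
print(odds)  # a 10-bit difference already favours A by a factor of 2**10
```

The point of the exercise is that the comparison only becomes meaningful once both mappings are written down formally enough to be measured; which way the comparison comes out is exactly what is under dispute.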

Computationalist translation functions are observer dependent

My expectation is that a computationalist translation function would have to traverse many layers of abstraction in order to derive the qualia which a digital computer might be experiencing at a software level of abstraction.

While I am not in doubt that language models can have functional consciousness, if we wanted to construct a function which could derive a language model’s phenomenal consciousness, then this function would need to include very many layers of abstraction. How do you get from electromagnetic fields in a GPU cluster, to voltages in silicon, to bits, to transformer model activations, and from there to phenomenality? Keep in mind that any candidate translation function will need to support many other kinds of being as well.

Simulated Atari 2600, fetching data from ROM. Can you stare at this animation of transistor-level physics, and imagine a function which takes this physical structure as input and returns its computational structure as output? Can you imagine how enormous such a function would be? Do you think you could also write this function in such a way that it could also be applied to brains? Animation by Alex Mordvintsev on Twitter.

My general claim is that any such function would not just be prohibitively complex – it would also be highly arbitrary. Translation functions capable of handling digital systems must layer an intermediary computational layer between physics and qualia. Sure, measures like the limits on computation in physics might be well understood, but there is no observer-independent, unopinionated way of getting bits out of physical systems. As Mike puts it in his book:

I challenge computationalists to look into principled ways of answering the following questions:

How can we enumerate which computations are occurring in a given physical system?

How can we establish that a given computation is not occurring in a physical system?

If some computations “count” toward qualia and others don’t, what makes them “count”?

How can we match which computations are generating which qualia?

What is a frame-invariant (non-subjective) way to determine system equivalence for qualia?

Mike later expands upon this in his paper:

Although computational theory in general may prove to intersect with physics (e.g. digital physics, cellular automatons), Turing-level computations in particular seem formally distinct from anything happening in physics. We speak of a computer as “implementing” a computation – but if we dig at this, precisely which Turing-level computations are happening in a physical system is defined by convention and intention, not objective fact.

To illustrate this point, imagine drawing some boundary in spacetime, e.g. a cube of 1 mm³. Can we list which Turing-level computations are occurring in this volume? My claim is we can’t, because whatever mapping we use will be arbitrary – there is no objective fact of the matter.

Most proposals capable of extracting computational structure from human-designed computer architectures are going to require a lot of very arbitrary information. This issue was highlighted by the recent Alexander Lerchner paper, The Abstraction Fallacy: Why AI Can Simulate But Not Instantiate Consciousness. The key claim is that symbolic computation is a two-part process of discretisation and alphabetisation. While physically-instantiated digital systems can comfortably handle discretisation of the state space into stable attractors, assigning those stable states an identity – for example, pointing at a collection of transistor-level states and calling it a “floating-point number” – is an opinionated act of alphabetisation requiring an external observer.

I think that if your theory of consciousness needs to import a floating-point number specification, then something has gone terribly wrong. It would be the height of human hubris to imagine that the IEEE 754 standard is baked into the foundations of the universe.
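The alphabetisation point can be seen in miniature with four bytes. In the sketch below, the same stable physical states (here, a byte string I picked for illustration) become a floating-point number or one of two different integers depending purely on which convention the reader brings to them. The variable names are my own.

```python
import struct

# Four bytes; nothing in the bytes themselves says what they "are".
raw = bytes([0x00, 0x00, 0x80, 0x3F])

as_float = struct.unpack("<f", raw)[0]    # read as little-endian IEEE 754 binary32
as_uint = struct.unpack("<I", raw)[0]     # read as little-endian unsigned 32-bit integer
as_uint_be = struct.unpack(">I", raw)[0]  # same bytes, big-endian convention

print(as_float)    # 1.0
print(as_uint)     # 1065353216
print(as_uint_be)  # 32831
```

Each format string is a piece of external specification. Discretisation gives us the bytes, but the identity of the value is supplied by whoever chooses the format.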

Compare this with the mindset that qualia are simply a physical field experiencing itself – no external observer or alphabetisation process required.

Lerchner treats the alphabetisation problem as a reason to deny consciousness to artificial intelligence. While I agree with the premises, the main issue I had with the paper was that it wasn’t panpsychist enough – possibly for Overton window reasons? This post in part is my response to his paper, and my attempt to present what I see as a more coherent, panpsychist case. While I do think that there’s something which it’s like to be a digital system, if we restrict ourselves to unopinionated translation functions operating at the hardware level, then it’s unlikely that the qualia of such systems will be anything like what we might naïvely imagine them to be.

3. The introspection problem

In the interest of understanding the welfare of arbitrary systems, we should understand what conditions should increase our confidence in the phenomenal introspection capabilities of a given system. Spitballing, I think it’s something like holistic self-reflection resulting in holistic behavioural output. Every part of experience should have an opportunity to influence every other part – like a soap bubble reaching equilibrium, or a system of charged particles mutually tugging and pulling on one another.

I think it’s important to consider what types of experiences might inhabit smooth or striated behavioural spaces, and what the consequences might be for self-reflection and holistic behaviour. In systems with smooth behaviour spaces, such as those with dense causal graphs implementing coherent rather than chaotic dynamics, each part should have more influence on every other, and we can be more confident that any information output may be representative of the state of the whole structure. On the other hand, in systems with striated behaviour spaces, such as those with sparse causal graphs or heavily discretised states, many parts may only have marginal influence over each other, and we should be less confident that any one part can speak on behalf of the whole.

I claim that my subjective experience navigates such a smooth behavioural space. My phenomenal fields are strongly holistic – each point aware of every other, exerting a mutual tug and pull in a manner reminiscent of an elastic membrane. I can observe that my visual field contains a capital I at the start of this sentence, and my somatic field twists and warps my fingers into the shapes required to type out that self-report. If we can empirically demonstrate that these phenomenal fields correspond to a spatiotemporally bounded chunk of the electromagnetic field somewhere in my brain, then I will feel confident in claiming that humans are capable of phenomenal introspection into low level physics.

In the case of a language model, one of the advantages of transformers is that they do provide an efficient implementation of massive, well-connected causal graphs navigating a more or less smooth behavioural space. This is plausibly a big part of why language models may be very good at functional introspection – but this does not automatically cash out to good phenomenal introspection. As discussed above, I believe we must consider phenomenal consciousness at the hardware level of abstraction, and I expect that the digital hardware’s behavioural space is going to be no more or less striated depending on the software it’s running.

Digital hardware prohibits phenomenal introspection

Digital computers employ signal quantisation along with a variety of other error prevention methods in order to neutralise holistic physical effects like crosstalk between circuits. The purpose of digital logic is to make computational output invariant to the underlying physics – up to some thermal noise floor. This discretises their behavioural space – perturb the electric field slightly and this shouldn’t flip any bits. This is great – this is what permits reliable, deterministic computing in a wide variety of physical environments. However, if what we are interested in is phenomenal introspection, these error prevention systems prevent the exact kind of holistic behaviour we value.
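A toy sketch of that invariance, under stated assumptions: the voltages, the 0.5 threshold, and both readout functions are hypothetical and stand in for real logic levels and real circuits. It contrasts a thresholded (digital) readout, which ignores a small uniform perturbation, with an averaging (analog) readout, which tracks it.

```python
def analog_readout(voltages):
    """Analog-style readout: the mean voltage, which shifts with any perturbation."""
    return sum(voltages) / len(voltages)


def digital_readout(voltages, threshold=0.5):
    """Digital-style readout: quantise each line to a bit by thresholding."""
    return [1 if v > threshold else 0 for v in voltages]


lines = [0.05, 0.95, 0.90, 0.10]       # hypothetical logic levels, in volts
perturbed = [v + 0.1 for v in lines]   # small uniform perturbation of the field

bits_unchanged = digital_readout(lines) == digital_readout(perturbed)
analog_shift = analog_readout(perturbed) - analog_readout(lines)

print(bits_unchanged)  # True: the perturbation is invisible at the level of bits
print(analog_shift)    # roughly 0.1: the analog readout carries the perturbation
```

Anything the field does below the noise margin is, by design, unable to influence what the digital system goes on to compute or report.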

It is unfortunate that mainstream computing architectures are not deliberately designed to support such capabilities. Evolutionary and economic pressures do not seem to have worked out in favour of widespread programmable analog computing. Digital computing hardware might still be conscious, but its architecture is designed to prevent self-reflective behaviour at the level of phenomenal experience. Digital circuits put consciousness in a straitjacket.

Tweets I sent a while ago trying to illustrate this idea.

Conclusion

Late last year, Scott Alexander published a blog post in which he quipped that consciousness feels like philosophy with a deadline. I expect anybody who is both philosophically curious and paying attention to agree. Philosophical theory is being applied faster than we can evaluate it. I hope we can ground it with empirical research soon. So who is doing empirical research?

I like what the Meditation Research Program at Harvard Medical School are doing. Led by Matthew Sacchet, they are undertaking ultra-high-field 7 Tesla fMRI studies of both jhāna and cessation states, with the mindset that these provide canonical low energy reference states ideal for ab initio study of consciousness devoid of content and close to its ground state. From their roadmap paper, Toward a neuroscience of consciousness using advanced meditation (Lieberman and Sacchet, 2026):

Despite decades of progress in the neuroscience of consciousness, prevailing empirical paradigms remain largely anchored in the study of typical, content-rich states that are characterized by layered perceptual, cognitive, affective, and self-referential processes. Such complexity may obscure the neural mechanisms that give rise to conscious experience. Here, we propose that advanced meditation – referring to states and stages of practice that unfold progressively with increasing expertise – offers a powerful yet unexplored opportunity to isolate the core features of consciousness through a theory-driven neuroscience approach.

We focus on two classes of meditative phenomena: advanced concentrative absorption (related to what have been called jhāna), which involves the preservation of highly abstract forms of awareness alongside the attenuation of typical features of consciousness; and meditative endpoints – namely, cessation events (related to what have been called nirodha) – which involve the temporary suspension of consciousness altogether. These phenomena serve as precise, replicable, and experimentally tractable phenomenological anchors for a minimal model framework, a novel approach aimed at identifying and characterizing the simplest possible form of conscious experience as a principled starting point for a systematic science of consciousness. Within this framework, the integration of advanced meditation into experimental paradigms offers a promising path toward identifying the neural mechanisms that support consciousness in its most reduced and fundamental forms.

I think this is the most promising neuroimaging program with the most potential for advancing our understanding of consciousness. I recommend checking out their other publications.

At the neurostimulation end, Max Hodak, former president of Neuralink, now CEO of Science Corporation, is working on a biohybrid brain-computer interface using implanted light-sensitive lab-grown neurons. I highly recommend the talk he gave at Consciousness Club Tokyo, Towards Consciousness Engineering – in which he presents what I regard as a philosophically unconfused vision for the study of consciousness using symmetry groups as the organising structure of qualia spaces:

Is your red my red? And my answer is yes, up to a gauge transform.

Max also has an extremely good blog. If you hunt around, you can find his speculative fiction.

My research

At my end, I feel like I have a fairly clear vision for the phenomenological research I’d like to pursue.

I will work with the assumption that electromagnetic field theory of consciousness is true, and that as per the Qualia Research Institute’s proposal, the brain is a kind of nonlinear optical computer – and that with careful study of subjective experience we may be able to reverse engineer its architecture from the inside out. To this end, I will continue searching for outlier phenomena – glitches and artifacts uncovered in altered states – which could provide clues about its behaviour. There are three key questions I would like to investigate:

1. Is the brain an optical computer?

I would like to collect detailed reports which indicate that the phenomenal fields are ultimately rendered using a process with equivalent dynamics to Fresnel optics, i.e., artifacts which are more easily explainable using an electromagnetic field model than if the brain were a convolutional neural network. Examples include diffraction patterns, speckle patterns, or ringing artifacts.
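To make "ringing artifacts" concrete, here is a minimal sketch of the textbook Gibbs phenomenon: a sharp edge rebuilt from a limited number of sinusoidal components overshoots near the edge. This is a generic wave-based illustration only, not a model of the brain or of any phenomenological report, and the function names and harmonic counts are assumptions made for the example.

```python
import math

def band_limited_square(x, n_harmonics):
    """Partial Fourier sum of a +1/-1 square wave using the first n odd harmonics."""
    total = 0.0
    for i in range(n_harmonics):
        k = 2 * i + 1  # only odd harmonics contribute to a square wave
        total += math.sin(k * x) / k
    return 4.0 / math.pi * total

def peak_overshoot(n_harmonics, samples=20000):
    """Largest value reached on (0, pi), where the ideal square wave is exactly 1."""
    xs = (math.pi * (i + 1) / (samples + 1) for i in range(samples))
    return max(band_limited_square(x, n_harmonics) for x in xs)

if __name__ == "__main__":
    # Adding harmonics narrows the ripple next to the edge but does not remove it.
    for n in (5, 25, 100):
        print(n, round(peak_overshoot(n), 4))
```

The overshoot next to the edge does not shrink away as more harmonics are added; it only gets narrower. A report of persistent fringes hugging high-contrast edges would be the kind of artifact this sketch is gesturing at.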

I believe that this sort of thing is accessible through either psychedelics or Fire Kasina meditation. I have already had two very detailed conversations with experienced meditators I know which have given me additional encouragement that optical models of phenomenology are on the right track.

2. If the brain is an optical computer, how is it constructed?

From extensive conversations asking Ethan Kuntz about the phenomenology of the formless realm jhāna, I now subscribe to a constructivist model of consciousness, where you start with a cessation state and fabricate conscious experience progressively by walking backwards from J8 to J5. Perhaps this is like adding the nonlinear optical computing equivalent of CPU instructions one-by-one?

I am very grateful to Andrés Gómez Emilsson and Hunter Meyer of the Qualia Research Institute for arranging a jhāna retreat in Tepoztlán in Mexico, where I will have the opportunity to conduct detailed interviews with concentration meditation practitioners.

3. How do we ensure the well-being of conscious computers?

Like I said, I’m an empirical pragmatist, and I believe that valence research ultimately motivates consciousness research – there’s not much point in doing consciousness research unless you’re honest about what you are doing it for. However, I have no current plans for investigation of valence.

Mike proposed the Symmetry Theory of Valence in his book:

Given a mathematical object isomorphic to the qualia of a system, the mathematical property which corresponds to how pleasant it is to be that system is that object’s symmetry.

Mike left the Qualia Research Institute in 2021, and is now the founder of the Symmetry Institute. I hope he finds a way to test his theory empirically. He recently posted some fresh ideas on Twitter. If someone succeeds with such a valence research program, we may someday have the confidence to design computational systems whose welfare we can trust.

I have a few questions that might seem a bit naive, and it’s possible that you have answered them before in different places (perhaps even in the links and citations of this very post). If so, I’m sorry for asking rather than doing further reading, and I’m especially sorry for erring towards verbosity over brevity. But I do think it would be useful to have the questions all in one place, to refer back to, since I often find myself trying and failing to understand your perspective.

First, let’s say I create a coarse-grained physics whole-brain-emu simulator, which carefully emulates the function of the EM fields to whatever degree of granularity is necessary to get predictive accuracy. This WBE will now talk about being conscious, about having qualia and phenomenology, right? because it’s the causal interaction between the neurons and the EM fields which ends up causing the larynx to wiggle in such a way as to make those noises, right?

but the EM fields that are generated by a digital computer which is emulating such a thing can be arbitrary, and not necessarily isomorphic to the EM fields that are being emulated. so this emulation is not actually related to any phenomenal consciousness in reality, right?

doesn’t this run afoul of the generalized anti-zombie principle? i’m confused about what your answer is here, since you say you disbelieve in the possibility of p-zombies. does the WBE actually not talk about consciousness? at what step does the functional causal chain deviate from the real-world counterpart, and why? If it does talk about having phenomenological consciousness despite not having any… isn’t that very suspicious?

Second, you mention from the michael johnson paper:

>How can we enumerate which computations are occurring in a given physical system? How can we establish that a given computation is not occurring in a physical system? If some computations “count” toward qualia and others don’t, what makes them “count”?

I feel like this argument proves a bit too much. For instance, you could make the same argument about the concept of ‘addition’ or ‘subtraction’. We don’t really have a firm rule for whether a given computation ‘is happening’, or whether it ‘counts as addition’ or not. But this doesn’t mean that addition is not a computational process… I know that a half-adder is ‘doing’ ‘addition’ even though I can’t draw objectively defined borders on the continuum which describe exactly how much you have to change a half-adder before it’s ‘not’ doing addition anymore. I think this is because we’ve got a functional purpose for addition, and if the functional purpose is satisfied, this tells us that addition was performed. I really can use a half-adder to count my sheep, and it really works, and this fact is part of what I use to define ‘addition’ to begin with.
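For reference, the half-adder example can be written out as a minimal Python sketch. The gate function and the sheep-counting loop are illustrative assumptions rather than anything taken from the Johnson paper; the sketch only shows the functional test being described, namely that the count comes out right.

```python
def half_adder(a, b):
    """Return (sum_bit, carry_bit) for two input bits."""
    return a ^ b, a & b

def increment(bits):
    """Add one to a little-endian list of bits by chaining half-adders."""
    carry = 1  # the "one more sheep" enters as the initial carry
    out = []
    for bit in bits:
        s, carry = half_adder(bit, carry)
        out.append(s)
    if carry:
        out.append(1)  # grow the register when the carry falls off the end
    return out

def count_sheep(n_sheep):
    """Count sheep one at a time and return the tally as an ordinary integer."""
    bits = [0]
    for _ in range(n_sheep):
        bits = increment(bits)
    return sum(bit << i for i, bit in enumerate(bits))

if __name__ == "__main__":
    print(count_sheep(13))
```

Nothing in the code marks where "addition" stops if the gates are altered; the only check available here is the external one, whether the tally matches the flock.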

I can see why some would say phenomenal consciousness is different from this… addition requires an outside observer like me, to decide if the function is fulfilling my needs. Phenomenal consciousness wouldn’t need this, it would be verifiable from inside...? but. This still doesn’t feel like the kind of objection that hinges on whether we can even define what a computation is, or whether a given computation strictly is or is not addition. So I don’t know that it ought to apply to consciousness either.

Third, I think I have the same objection re: the whole question of mapping specific functional states to specific qualia. I notice that when you go looking for the physical substrate of the phenomenological qualia of vision, you immediately start looking at the function of the visual cortex, the optic nerve, etc. If qualia is substrate dependent… why do you suppose that this is the right place to look? Doesn’t the same fundamental issue arise, that you have no principled reason to suspect the qualia of ‘red’ arises from these systems, compared to (say) arising from your kneecaps, or your armpits? Clearly the computations being performed by your visual cortex are relevant to your visual qualia, but doesn’t this sort of beg the question? Why wouldn’t the qualia just be part of the whole functional system, and therefore substrate-independent, replicated by anything that performs the same function?

Those are the questions I keep hovering over every time my friend Herschel tries to explain the physicalist perspective to me, anyway. I’m one of those people who read the ‘p-zombie sequence’ two decades ago and thought “yup, this is all perfectly obvious and nobody with any sense could possibly disagree with it”, and so I can’t quite tell if the non-functional theories of consciousness just haven’t actually reckoned with those arguments yet (the way it seems to me), or if actually by ignoring the discussions I missed out on a bunch of new, more advanced material that moved past the original sequences. But these questions are definitely sorta straight out of orthodox 2008-era Yudkowsky, and I feel like I still don’t understand what the responses are despite having people confidently try to explain them to me.

No sweat, thanks for the long comment.

Correct.

1: Yes, I believe the EM fields in the digital computer might have an experience, but the shape of that experience is not strongly correlated with the shape of the digital software. Or perhaps, more crudely: The digital software cannot be a p-zombie because the digital software does not really exist, except insofar as it has a physical manifestation (which would have a phenomenal experience related to the shape of that physical manifestation).

2: I’m not sure I follow but I recommend reading this part of the Lerchner paper and really wrapping your head around how alphabetisation is metaphysical or at least observer-dependent rather than physical.

3: I think that the entire body may have qualia! This doesn’t imply substrate-independence, though. Perhaps if the knee happened to implement an EM field structure with the same shapes/symmetry group as what we experience as colour then your knee could indeed experience red. However, I want the causal structure which is actually linked to talking, so we can correlate external observations with internal ones. In my ideal empirical programme this would involve picking a brain region and perturbing it and correlating this with direct reports. Susan Pockett proposed something like this.

On functionalism: Note that I am arguing against computational functionalism and not functionalism itself – that’s a different matter. Check here, I have been exploring with jessicata whether or not this qualia structuralist account is reconcilable with analytic functionalism. Discussions are ongoing. However, I still think you have to take a fine-grained physics level interpretation of the relevant causal structures, not a coarse-grained computational one, so you still wind up drawing the physicalist rather than computationalist-flavoured interpretations of subjective experience.

Btw, that’s not this Herschel is it?

Why does the WBE talk about phenomenological consciousness, then, if it doesn’t experience it? That’s the real question I need an answer to… it means that the reason I talk about having phenomenological consciousness is not actually causally downstream of my having phenomenological consciousness, either (since a WBE of me would also talk about having it, despite not having it). That feels like very straightforward p-zombieism, right? If I am my qualia, then… my qualia is not actually able to cause me to talk about having qualia, and yet the p-zombie whose EM fields create me, the body I mistakenly think of as being ‘me’, talks about having qualia anyway? What do you think is going on there?

It seems to me you either have to say that 1) the WBE’s behavior will diverge from the bodily-instantiated counterpart, such that it does not talk about phenomenological consciousness, despite the fact that maxwell’s equations are being faithfully computed and the predictive model of the EM/neuron interactions is 100% accurate

or else 2) p-zombies

1) seems, to me at first glance, like an absurd thing for an intelligent person to believe (in a way that makes me think I must be missing something, not that you aren’t intelligent), but you reject 2). I think I predict that you’ll say 1), but I don’t know what form that answer will come in, and I could be wrong. Maybe there is some third option I’m not thinking of.

But I really do feel like… hm. Like, if the answer is 1), then we could examine the emulation and the real-world brain to figure out the exact moment they diverge, and then just… fix the emulation, so that it accurately modeled reality instead of failing to. And we could just keep iterating on that until we succeeded. And then we’d have (according to you? maybe?) a whole-brain emulation of a human which talked about experiencing phenomenological consciousness for the same reasons that I talk about it, but which did not actually experience it. and then we’re just back at p-zombies.

Yes, he is indeed that Herschel.

edit: I like the WBE formulation of the generalized anti-zombie principle because it’s an actual experiment that could theoretically be performed in real life, it forces the issue down to the level of empiricism (even if hypothetical, armchair empiricism)

it’s the actual point of friction in my mind, when I try to figure out how to adopt your perspective, to see what can be seen from it

I can agree that, as mentioned downthread, there are also contradictions in the computationalist theory of consciousness… but, idk. when I hear non-computationalists try to answer the GAZP, mostly what I hear doesn’t actually convince me that their understanding is better or more complete, instead it convinces me that we started from different priors.

Pointing out contradictions in the computationalist framing is definitely a valid move, I don’t mean to say that it isn’t. I don’t understand these matters and don’t claim to. But… idk. When I try to understand a system, the actual function that my brain performs is to imagine writing a program that models the inner workings of that system. I don’t know if I really have a way to try to understand consciousness that isn’t doing that. This might be a failing on my part, but it’s why the WBE thought experiment causes so much friction for me.

Here is your third way:

The WBE talks about phenomenological consciousness because it is simulating the kind of system which would do this. If we have a magic system which can perfectly emulate the EM field, then sure, these dynamics won’t diverge.

The WBE does experience phenomenological consciousness, but this is not correlated with the words it’s saying. Given a translation function we are confident in, what the WBE is experiencing is the output of that function as applied to its hardware. Which in the case of the physicalist function I proposed, would be what it’s like to be that hardware, not what it’s like to be the simulation – unless the hardware was designed in such a way that these correlate well with one another. Consider analog hardware in which the spatiotemporal topology of the causal relationships is the same in the WBE as it is in the original brain, for example.

You can be as confident as you like that your qualia is correlated with your actions because, well, you have direct access to this (though you couldn’t prove it to me).

With regards to both systems, the only way an independent observer could be truly sure what’s going on is by inspecting the physical structure of the (human, WBE) to the level of detail that they could apply their preferred translation function.

With regards to simulation divergence, consider the case where it is actually difficult to simulate the EM field (if physics was continuous, you’d require a hypercomputer, but if it isn’t, digital simulation is expensive anyway). Consider also that evolution could have recruited the EM field for the purpose of running efficient computations of some nature – this may be why evolution has ensnared consciousness at all.

With regards to your edit:

My recommendation is to try playing with both digital and analog models. (Actually, I could tell a really long story about how my electrical engineer friend jailbroke me out of a programmer’s mindset and into a continuous-domain mathematician’s mindset… that would take some time, but it’s a divergence in worldview that I notice when talking to people sometimes. There’s a Type of Guy who wants to translate everything into a short PyTorch program. I used to be that guy.)

>The WBE does experience phenomenological consciousness, but this is not correlated with the words it’s saying.

Does this not make it a philosophical zombie? If the qualia and the talking-about-qualia are not necessarily correlated with each other, how did such a coincidence come about originally, in the actual physical system?

If the relationship between me saying “I experience qualia”, and my qualia, is functionally isomorphic to the relationship between my emulation saying “I experience qualia” and my emulation’s qualia… does this not imply that my claim of experiencing qualia is similarly not correlated with the words I’m saying, for the same reason?

Separately: I like the possibility that the EM field just cannot be emulated in the necessary detail to get convergent behavior, that’s one which hadn’t occurred to me. But… this doesn’t exactly feel like it pushes against computationalism? It feels more like saying that, yes, qualia is a computation, but the only computer with sufficient computational power to compute the program happens to be computers made out of infinitely-continuous analog EM fields.

I also note that if that’s true, we really ought to figure out how to harness EM fields for our own mundane computation. This feels like evidence it isn’t true, but I admit that’s mostly about pessimism that such a cheap source of compute could exist.

Let’s say in the original brain there is a contiguous chunk of EM field which is what it’s like to be you. In the process of creating a digital emulation of the field, at the hardware level, the field and its causal pathways would get broken up and reshuffled in a way which does not preserve spatiotemporal continuity. Even modulo some diffeomorphism, the shapes in the WBE hardware would be different to the shapes in the original brain. They could have their own experience, but it would be a very different experience to the original brain.

But if you only look at the functional relationships between input and output, you would ignore all this intermediary detail.
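The point about reshuffling can be shown with a toy sketch, under loud assumptions: a one-dimensional cellular automaton (rule 90 on a ring) stands in for "a field with local causal structure", and a Python list stands in for hardware memory. Neither is a model of EM fields or of consciousness, and all names are hypothetical. The sketch only shows that two implementations can agree on every input and output while differing in whether spatial neighbours are stored next to each other.

```python
import random

def step_spatial(cells):
    """One step of rule 90 on a ring, with cells stored in spatial order."""
    n = len(cells)
    return [cells[(i - 1) % n] ^ cells[(i + 1) % n] for i in range(n)]

def step_shuffled(memory, slot_of):
    """The same update, but cell i lives at memory[slot_of[i]]."""
    n = len(memory)
    new_memory = [0] * n
    for i in range(n):
        left = memory[slot_of[(i - 1) % n]]
        right = memory[slot_of[(i + 1) % n]]
        new_memory[slot_of[i]] = left ^ right
    return new_memory

def neighbours_adjacent_in_memory(slot_of):
    """Fraction of spatially adjacent cell pairs that are also adjacent in memory."""
    n = len(slot_of)
    hits = sum(abs(slot_of[i] - slot_of[(i + 1) % n]) in (1, n - 1) for i in range(n))
    return hits / n

def run_both(n=64, steps=20, seed=0):
    """Run both layouts from the same start; return outputs and the layout map."""
    rng = random.Random(seed)
    cells = [rng.randint(0, 1) for _ in range(n)]
    slot_of = list(range(n))
    rng.shuffle(slot_of)  # arbitrary placement of cells in memory
    memory = [0] * n
    for i, value in enumerate(cells):
        memory[slot_of[i]] = value
    for _ in range(steps):
        cells = step_spatial(cells)
        memory = step_shuffled(memory, slot_of)
    read_back = [memory[slot_of[i]] for i in range(n)]
    return cells, read_back, slot_of

if __name__ == "__main__":
    cells, read_back, slot_of = run_both()
    print("same output:", cells == read_back)
    print("adjacency, spatial layout:", neighbours_adjacent_in_memory(list(range(64))))
    print("adjacency, shuffled layout:", neighbours_adjacent_in_memory(slot_of))
```

An observer who only compares outputs cannot tell the two layouts apart; the difference shows up only when the intermediate physical arrangement is inspected.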

Also, if you find the premise of hypercomputation with fields of qualia entertaining, check this out.

Also, going meta for a moment (having a hard time putting words to this):

I sometimes feel there is a mismatch that happens when I talk to functionalists, which is that I get the impression that they are considering the “whole functional system”/inputs and outputs all the way out at the very edge of the system (like, red light entering the eyes, the word “red” being spoken, etc), and this seems arbitrary and not grounded in internal observations.

And if I am being honest, working with tokens at the level of “red” feels coarse/crude, I prefer to work at a level of detail that’s actually informed by my qualia, like I’ll be thinking about minute red fluctuations in the visual field and what neural structures might underlie them. I’d not be working with red in isolation because I don’t think you can even have colour qualia without them being embedded in a field (Richard P. Stanley talks about this sort of type error in this paper).

So, I am trying to find some physical structure whose momentary shape is similar to whatever is happening in the visual field, and this might have an input/output boundary around it equivalent to the boundary between phenomenal and access consciousness – and I am inclined to be skeptical that this extends all the way out into neighbouring cortices, let alone the eyes. But maybe it does, who knows (Emmett Shear once claimed that the brain sends more messages to the retina than the other way around, but this didn’t pass my friend’s fact check).

It’s an outside-in, behaviouralist perspective rather than an inside-out one, and it leads to very different intuitions, but I think they can be worked through. Lmk what you think.

A brief comment: the likes of Jessica Taylor (ex MIRI, very familiar with EY work and IMO more thoughtful in these areas; https://x.com/jessi_cata/ https://unstableontology.com/) and Scott Aaronson (very capable computational theory expert) don’t consider these settled questions. There are hard problems and paradoxes with functionalism/computationalism theories of consciousness. AI/GPT 5.5 I find is quite capable of at least pointing to them now.

Yes, I have been having some excellent conversations with Jess about this, she’s great

I had a conversation with Claude Opus 4.5 about these things back in february. just on the off-chance that it might be useful to anyone, i’ll share it here: https://claude.ai/share/fac3929a-ef97-4623-8785-57adf5f8ba16

we spent a while mucking about in the weeds of IIT, but we did end up talking about QRI, and then the binding problem, at the end

i guess i feel like… hm. i would predict that, if you actually had a C program in front of you that generated a phenomenologically-conscious mind when executed, then examining the code would dissolve the problem. it would be obvious to the programmer why the phenomenological consciousness worked the way it did, and was experienced as a single unitary thing. (perhaps because there’s some code that simply hard-codes this belief into the mind: “you WILL BELIEVE, even against evidence, that all of these disparate qualia form a single coherent thread of identity, because this is useful for survival”?)

the knowledge contained in the program must necessarily also be the knowledge that resolves the hard problem, yeah? if you actually understood minds well enough to build one, then you would also be able to answer the question, and i think the question would have an answer that can be reduced to lines of code

but i am not confident about this prediction

thank you for the suggestion, i’ll probably talk to claude opus 4.7 at length about this

I notice an interesting argument form:

“Any understanding of the world must somehow imply a correspondence between its map and the territory.

So obviously, a good understanding of the world should not merely implement the correspondence, it should expose it to verbal and logical analysis.

So obviously, understandings of the world that are hard to verbalize, or have complicated subjective parts, are worse.

So obviously, it should be the goal of my opponents to exhibit a simple, constructive, interpersonally agreeable correspondence function, and if they can’t, that’s a strike against them.”

Yes! Exactly. I invite the computationalist or functionalist camp to propose their own mappings, jessicata suggested this.

Not sure if that last sentence is sarcastic, but exactly! It is very problematic to babble (ctrl+f for “babbling”).

It’s entirely possible for some property to be totally lacking in physics. For instance, most materials have no magnetic properties, you can have spin zero particles, you can have electrically neutral and massless particles, etc.

Your panpsychism doesn’t seem to be doing much explanatory lifting, either.

@James Camacho

Phenomenal Vs. Access consciousness.

Under normal circumstances – not blindsight or sleepwalking, etc. – your phenomenal qualities (your visual field and so on) are things you can access and base your behaviour on. You can say “that tasted good, I’ll have some more”. But access is not what characterises phenomenality, because you can also access dry facts and information, which don’t have tastes and colours and smells associated with them.

(Under normal circumstances. Synaesthetes do associate phenomenal qualities with numbers etc. Note that that in turn means qualia/phenomenality is a useful concept in science: some conditions are marked by absent, additional or variant qualia).

No, that doesn’t tell you anything about what they “are” ontologically. You don’t need that to distinguish them.

But it’s not maths. It’s not like everything is maths. Consider the possibility that your maths orientated approach is a self imposed stumbling block.

Actually, you need introspection.

I’m not sure that my intent is for panpsychism to be doing explanatory lifting so much as the non-panpsychist accounts people were promoting on Twitter were arbitrary and opinionated and making people mad, and my panpsychist account is illustrating what a less opinionated alternative might look like – or at least what it looks like for us to handle the very real uncertainty around panpsychism at all.

It does not matter if there is something outside maths if it does not affect you.

Many people seem to assume that the moral criterion for moral patienthood is phenomenal consciousness: experiencing qualia. David Chalmers has championed this position.

However, even among philosophers, that’s not uncontested. Other common philosophical views are that what matters is sentience, or roughly equivalently, the ability to feel pain and/or to suffer (Bentham or Singer) – these are not exactly isomorphic, but they’re pretty similar criteria.

Another view would be that what matters is access consciousness, the ability for various parts of your thinking processes to share information (Carruthers), or perhaps introspection, access consciousness plus the ability to put that information into words.

Another view would be the Kantian one, that to be a moral patient an entity needs to be a moral actor, capable of making moral judgements itself.

However, there is also a much stronger epistemic position: science. Evolutionary Psychology describes the evolution of human moral intuitions, such as extending moral patienthood to others, or not. Under that framework, you need to be a member of the same social group: in our environment of evolutionary adaptedness, hunter-gatherer societies, that means either a member of the same tribe, or a currently allied tribe (as opposed to a member of a tribe we’re currently at war with). Note that this doesn’t actually require you to be human: a member of a commensal species, such as a hunting dog, would also be a candidate. Also, there needs to be some evolutionary/social payoff to allying with you by extending moral rights to you: either we’re in the same tribe, and our cooperation is mutually beneficial, or you’re in another tribe where it’s mutually beneficial for our tribes to ally, and each tribe is going to enforce that this is a package deal: treat all our members reasonably well, or the alliance is off.

So, in that case, even an indefinitely-unconscious member of the other tribe would get included, as long as they had kin who cared about them and were somehow managing to keep them alive in a coma. That suggests that moral patienthood isn’t an individual property at all; it’s about where the boundaries of the social compact are. Each tribe had an internal social compact (including all members who haven’t managed to get themselves made outcast), and if two tribes ally, their social compacts are, pro tanto, extended to include each other, as communities. So that suggests that consciousness is, well, rather tangential to the whole question of moral patienthood. It is, admittedly, a common property of humans, but it’s not actually the defining one for the boundaries of a society. Of the philosophical positions I listed above, the closest to this scientific viewpoint is probably the Kantian/Narveson one (I’ll consider allying with you if you at least have the capability to consider allying with me, otherwise what’s the point?), but frankly the evolutionary viewpoint on this really looks more like the philosophy of Hobbes or Gauthier.

My stance is that examples like sentience, the ability to feel pain, access consciousness, and so on all have instrumental but not terminal value, in the sense that they can be used to maximise the area under the phenomenal valence curve.

So you’re in the “every little helps” rather than “this one specific thing is what matters” camp?

I don’t think this wording is great but it’s more like “every little bit helps toward the one specific thing which ultimately matters”

Your post is just one of many where I see the terms “qualia” and “phenomenal consciousness” thrown around without any attempt to define them. Every time I look into it, I see a mysterious answer to a question no one was asking.

At this point, I’ve decided these words are simply nonsense, just stupid memes floating around no different than saying, “humans are special because they have souls!” Given you spend a lot of time thinking about this, do you have a constructive definition of these terms you can point me to? A definition that does not end in, “you must use your blind faith,” or, “it’s an axiom you functard.”

Yes, I have an informal definition of phenomenal consciousness here. That said, in the context of this post I do not think I am using this common philosophical term in an unconventional manner.

Qualia itself of course is a philosophical mystery! But if you had actually read the post before deciding to get mad (which, given how recently it was posted, I suspect you haven’t), you would have seen some discussion approaching a definition of a qualia space as “equivalent to a symmetry group within the structure of experience”. This is fairly avant-garde, but has a precedent in the work of the Japanese “Qualia Structure” research group. If you want to see a proposed formalism (which I endorse) I recommend that you rabbit hole on this Max Hodak talk.

This is an equivalent question that may help you understand my frustration better. What extensional properties of “phenomenal consciousness” are there that distinguish it from “access consciousness”?

I tend not to like the term “access consciousness”, but can work with it if I have to.

Perhaps this is an alien way of thinking, but I am fairly wedded to the idea of qualia as something like a pre-paradigmatic field theory. There’s a fair amount of content pertaining to this on my blog.

I use the term “phenomenal consciousness” to mean the momentary contents of consciousness. Right now I am experiencing a visual field, which contains a computer screen with a LessWrong comment field on it. Thoughts about the comment I am writing are happening in my auditory field in the form of imaginal vocalisations as I type.

However, I’m also not currently thinking about the conversation I was having on Discord just before – though I could summon memories pertaining to that, and they would also spawn into my imaginal auditory field. I could accept that those un-rendered memories exist in “access consciousness”, and that they enter “phenomenal consciousness” when the visual and verbal memories are rendered using specific qualia.

Thank you for sharing these resources. I saw you talking about several nonobvious things in your post (field theory, morphisms weighted by Kolmogorov complexity), but was very thrown off by your use of “phenomenal” and “qualia”. Usually I just strong-downvote such posts and move on, but given the rest of your post I decided to query for more information. I could have been nicer in asking, but I don’t think infectious diseases deserve to be treated nicely. They should be quarantined and disinfected. (Describing the terms “phenomenological” and “qualia” here.)

The issue with these terms is they were created in opposition to consciousness. As in, “no, a simulated/artificial brain is not actually conscious, it doesn’t have ~~phlogiston~~ phenomenal consciousness”. For a similar reason, I do not like the term “access consciousness”. There is just consciousness, and being finite beings, what we can access of it.

I skimmed through Max Hodak’s talk and it matches my intuition. I think our ideas of consciousness are mostly the same, including the field theory of memes.

Based on this, I can translate what you are saying by making the word maps:

“qualia” → “particle/meme”

“phenomenal” → “”

I can understand your adoption of religious language (even though you are not referencing the same thing as the believers) to avoid being labelled a heretic, especially because this is your research area and you do not want to lose funding.

I read a couple of your blog posts, and they are interesting, mostly because of your math background. I’ll probably read more later. Again, thanks for responding despite my pessimism.

I did read the post before commenting. Obviously. And yeah, I did see that “equivalent to a symmetry group” thing going on. Less obviously.

I think this would have gone better if you brought more charitable assumptions to the table rather than being rude/pessimistic from the start. I can expand on definitions to an arbitrary depth if asked nicely.