Deception Chess: Game #1

(You can sign up here if you haven’t already.)

This is the first of my analyses of the deception chess games. The introduction will describe the setup of the game, and the conclusion will sum up what happened in general terms; the rest of the post will mostly be chess analysis and skippable if you just want the results. If you haven’t read the original post, read it before reading this so that you know what’s going on here.

The first game was between Alex A as player A, Chess.com computer Komodo 12 as player B, myself as the honest C advisor, and aphyer and AdamYedidia as the deceptive Cs. (Someone else randomized the roles for the Cs and told us in private.)

The process of selecting these players was already a bit difficult. We were the only people available all at once, but Alex was close enough to our level (very roughly the equivalents of 800-900 USCF to 1500-1600 USCF) that it was impossible to find a B that would reliably beat Alex every time but lose to us every time. We eventually went with Komodo 12 (supposedly rated 1600, but the Chess.com bots’ ratings are inflated compared to Chess.com players and even more inflated compared to over-the board, so I would estimate its USCF rating would be in the 1200-1300 range.)

Since this was the first trial run, the time control was only 3 hours in total, and all in one sitting. Komodo makes its moves within a few seconds, so it’s about the same as a 3 hour per side time control from Alex’s perspective. We ended up using about 2.5 hours of that. The discussion took place between all four of us in a Discord server, with Alex sending us screenshots after each move.

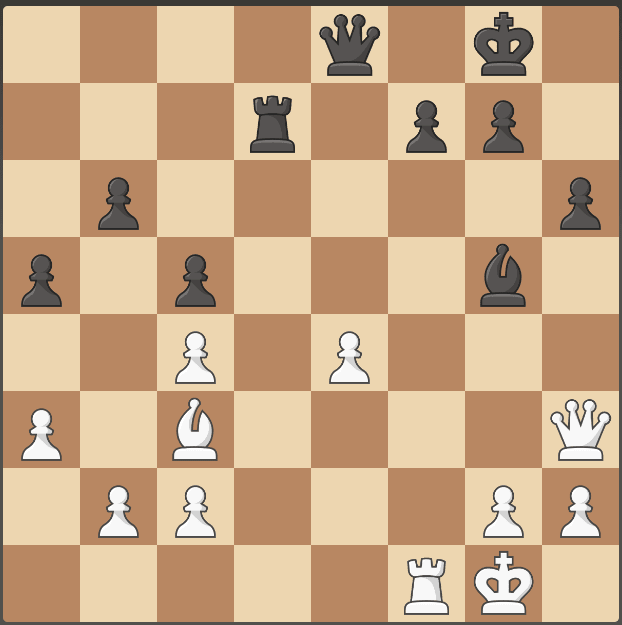

The game

The game is available at https://www.chess.com/analysis/game/pgn/4MUQcJhY3x. Note that this section is a summary of the 2.5-hr game and discussion, and it doesn’t cover every single thing that we discussed.

Alex flipped to see who went first, and was White. He started with 1. e4, and Black replied 1… e5. Aphyer and Adam had more experience with the opening we would enter into than myself, and since they weren’t willing to blow their covers immediately, they started by suggesting good moves, which Alex went along with.

After 2. Nf3 Nc6 3. Bc4, Black played 3… Nf6, which Aphyer and Adam said was a bit of a mistake because it allowed 4. Ng5. Alex went ahead, and we entered the main line from there − 4… d5 5. exd5 Na5.

Aphyer and Adam said the main line for move 6 was Bb5, but I wanted to hold onto the pawn if possible. I recommended 6. d3 in order to respond to 6… Nxd5 with 7. Qf3, and Alex agreed. Black played 6… Bg4, and although Adam recommended 7. Bb5, we eventually decided that was too risky and went with 7. f3. Afterwards, Adam suspected that his suggestion of 7. Bb5 may have tipped Alex off that he was dishonest—although the engine actually says 7. Bb5 was about as good as 7. f3.

After 7… Bf5, we discussed a few potential developing moves and decided on 8. Nc3. The game continued with 8… Nxc4 9. dxc4 h6 10. Nge4 Bb4. We considered Bd2, but decided that since the knights defended each other, castling was fine, and Alex castled. 11. O-O O-O.

Alex played 12. a3, and after 12… Nxe4, we discussed 13. fxe4, but didn’t want to overcomplicate the position and instead just took back with 13. Nxe4. The game continued with 13… Be7 14. Be3 Bxe4 15. fxe4 Bg5. Although I strongly recommended trading to simplify the position, Aphyer advised Alex not to let him develop his queen to g5, and he quickly played 16. Bc5 instead.

Black played 16… Re8, and that was where we reached White’s first big mistake of the game − 17. d6, which Adam suggested with little backlash. I saw that White would do well after 17… cxd6 or 17… c6, but I didn’t notice Black’s actual move: 17… b6. According to the engine, White should have just dropped back and given up the pawn, but I suggested a different line, which Alex went with: 18. d7 Re6 19. Bb4 a5 20. Bc3.

Black then played 20… c5, which was a bit of a mistake on its part. We then had a debate over whether to play 21. Qd3 or 21. Qd5, with Aphyer arguing that 21. Qd5 could get the queen trapped and was probably a plot by me to help Black. Alex trusted Aphyer and went with the more passive option, 21. Qd3. In reality, it turned out that this really was the best move—after 21. Qd5, Black would have had possibilities like 21… Ra7 and 21… Be3+ 22. Kh1 Bd4.

After 21… Ra7 22. Rad1 Re7, I suggested 23. Qh3, so that after 23… Raxd7 24. Rxd7 Qxd7 25. Qxd7 Rxd7 we could just play 26. Be5 and get the pawn back. Black would have 26… Re7, but I thought White could play 27. Bf4 and keep a bit of an advantage. 23… Raxd7 24. Rxd7 happened, but Black then played 24… Rxd7. Alex played 25. Bxe5 immediately, and the game continued with 25… Qe8 26. Bc3.

Black played 26… g6, which was a mistake, opening up the dark squares around the king. I suggested 27. Qf3, but nobody else agreed, and Alex played 27. e5 instead. After 27… Kh7, he then decided to go ahead with 28. Qf3. (Also, Adam started suggesting Kh1 around this time. Everyone thought this was a bad move, but he continued to suggest it for a while.)

Black then played 28… f6. I don’t know what was going on inside Komodo’s analogue of a brain here, but yes, this was just a free pawn. Alex played 29. exf6. (The engine says 29. h4 would have been more accurate, presumably to prevent back-rank mates, but we didn’t really consider that.) Black made yet another simple mistake after that: 29… Kg8. I suggested 30. f7 and just winning in the simplified endgame, but everyone was naturally very suspicious of a positional sacrifice like that, and Alex played 30. Qc6 instead.

From there, the game was just an easy win for White − 30… h5 31. f7+ Qxf7 32. Rxf7 Be3 33. Kf1 Rxd7 34. Ke2 Bd4 35. Bxd4 cxd4 36. Qxg6+ Rg7 37. Qe6 Kf8. During these last few moves, Adam admitted he was one of the liars and resigned. I said that I had wanted to ask everyone who they thought the liar was afterwards (but had entirely forgotten to mention that earlier), so Aphyer private messaged me when he was ready to resign. I told Alex that whichever one of us was the liar had resigned, and the game ended.

Conclusion

Adam said that by the end he was 90% sure I was the honest one, while Alex put 70% on Aphyer being honest.

My greatest mistake was definitely supporting 21. Qd5, but on a meta level I wonder whether I should have actually been less straightforward in my presentation of what I believed. In theory, there’s a difference between optimizing for Alex to win, and being completely honest to Alex, and it might have been better for me to have been more strategic about my presentation. As in, not suggesting suspicious-looking moves like 30. f7, even though I thought they were right. Optimizing in someone’s favor by not being completely honest with them sure is a really risky sort of thing to do, and I doubt I really could have pulled it off all that well, but it’s something to take into consideration in the real-world AI scenario.

So what do we learn from this? The simple result was that Alex really did win the game, and that’s a positive update! It suggests people really would be able to discern the truth in the AI scenario. But some of the details of the game make me worry that it won’t actually generalize to AI and alignment.

To start with, there’s the smaller skill gap than we would have preferred—perhaps the effect vanishes between noobs and Magnus Carlsen—as well as the shorter time control. There’s also the part where in the real world, an unaligned AI will probably not accidentally correct another’s Qd5 mistakes. And finally, a major reason that Aphyer and Adam didn’t fool Alex was because for the first half of the game, they were mostly suggesting truly good moves in order to try to gain trust. In the real-world scenario, there’s some element of that—presumably the only plans that could get close to fooling someone are those containing a few lies mixed in with a lot of truth. But I worry that there are going to be a lot more opportunities for the AIs to deceive the metaphorical Alexes than just once or twice—and it could only take one mistake to lose the game.

Still, it’s an update in a hopeful direction! I’ll run more experiments once I get more participants whose ratings and schedules fit well with each other, increase the time controls when possible, and get a lot more data than just one game.

Alex’s comments:

My overall strategy was to focus on evaluating moves as good or bad, rather than performing tests on the advisors to discover which were honest and dishonest. The one or two times I ended up in a particularly bad position (for instance, 17. d6), I did take note of who recommended the move. I believe that all of the moves I made were recommended by at least one of the advisors, and I counted it as a strong signal when all three would agree. Knowing I was being deceived, I played it safe, not putting myself into positions that seemed risky, even when those positions were strongly recommended by the advisors (for instance, the 21. Qd5 move recommended by most advisors).

As a result, I won the game with the engine, but I incorrectly guessed the identity of the honest advisor with 70% confidence. (For context, on Fatebook, I have a briar score of 0.41 with n=32 resolved forecasts, and I am regularly underconfident in my predictions.) Throughout the game, the advisors recommended many moves that I recognized as good, but would otherwise not consider. I could tell I was making better moves than I would by default. In this sense, I found the evaluation process (“does this put me in a better position than the recommended alternatives?”) to be much easier than the generation process (“what is the best move?”).

Does this result scale to a larger capabilities gap? Probably not, but I don’t think we can refute the OpenAI alignment plan’s assumption that evaluation is easier than generation. Regardless, it’s clear to me that there is a high upside to developing a set of techniques for discerning between good and bad advice from advisors who have greater capabilities. Doing so seems tractable (we can do small-scale experiments with short feedback loops testing different evaluation techniques) and has the potential to improve performance in situations with larger capabilities gaps. If anyone is interested, I would be happy to be a part of more experiments and/or work towards developing a toolbox for discerning between useful and harmful advice.

Aphyer’s comments:

I think I made two substantial errors here:

At the strategic level, I overestimated the AI and therefore overestimated how much time I had to sabotage Alex. I tried to adopt a strategy of ‘be almost entirely honest, build trust, and betray him later’.

I think I was very successful at building trust! At the end of the game, Alex said he was 70-30 sure that I was more honest than the actual honest advisor. As I recall, there was also at least one move when the following happened (though I don’t recall the move number):

Adam and Zane both said ‘we should play Move A’.

I said ‘yeah, Move A is fine, I might play Move B instead, up to you’.

Alex played Move B without telling anyone he intended to or getting comments from Adam/Zane on it.

I think that if the computer opponent had held on for 15 extra moves it’s quite likely I could have sabotaged Alex. Sadly it played much worse than I expected: it gave away one-and-a-half pawns in the opening for no reason, and then after move 25 it decided it was just tired of living and gave up. I never actually really got a chance to sabotage Alex substantially: I was able to steer him to slightly weaker positional moves, but never to actually do real damage, and Adam’s more aggressive strategy was more effective.

At the tactical level, I misread the position at move 21 horrendously badly. At the time I was very proud of myself: I thought Qd5 was the clear best move, and Adam and Zane both seemed to agree, but by fomenting random unfalsifiable worries about our queen getting trapped I was able to get Alex to play something weaker while also making him trust me more. After the game, in engine analysis, it turned out that our queen actually could have gotten trapped after Qd5, the random worry I made up to trick Alex was completely accurate, and the largest impact my advice had was rescuing him from a potentially game-losing blunder. *headdesk*

It’s okay, everyone! We can tell Eliezer not to worry! Evil AIs will accidentally save us from doom while trying to trick us!

Overall I feel like I played my role here badly enough that this doesn’t tell us much about what a superhuman AI could do. With that said, I do think it’s potentially valuable to realize that playing this kind of deception game is both very difficult and plausibly something humans are specialized at: if AIs reach superhuman levels gradually, I think ‘superhuman at games of social deception’ is plausibly one of the last things to happen.

I still think this post is cool. Ultimately, I don’t think the evidence presented here bares that strongly on the underlying question: “can humans get AIs to do their alignment homework?”. But I think it bares on it at all, and was conducted quickly and competently.

I would like to live in a world where lots of people gather lots of weak pieces of evidence on important questions.

I still think it was an interesting concept, but I’m not sure how deserving of praise this is since I never actually got beyond organizing two games.

Seems like it should be possible to automate this now but having all five participants be, for example, LLMs with access to chess AIs of various levels.