No, the UI doesn’t distinguish between results from `search_query` and results from `open` tool calls. It’s much more likely that GPT-5.5 searched for some papers and a Spotify link was among the search results. In that case it would be misleading to say “it took a music break”.

gustaf

See also Prepare to Leave The Internet by @niplav

The optimization power of attention-grabbing systems available over the internet is growing faster than any normal human’s ability to defend against such attention grabbing, and seems likely to continue to grow. If one values one’s own time, it therefore seems worthwhile to limit one’s access to the internet, more so than people usually do.

If, like me, you want to link someone to just the concept of Local Validity, then consider Validity (LessWrong Wiki)

Right now, some AI chatbots provide little citation links. That’s better than not having them. But they are a pain to open and investigate, and you probably very rarely do so.

One small UI annoyance is the friction introduced by the popups that appear when you click an external link. On Claude.ai and in the Claude Desktop app, this can be circumvented by holding Ctrl while clicking the link.

Or by injecting a userscript that claims that the Ctrl key was pressed when this click event happened:

```javascript
// Bypass the "Open external window" popup.
// MouseEvent.prototype.ctrlKey indicates whether the Ctrl key was pressed at the time of the event;
// we override it in specific cases and defer to the original getter otherwise.
const MouseEvent_prototype_ctrlKey = Object.getOwnPropertyDescriptor(MouseEvent.prototype, 'ctrlKey')

// PointerEvent inherits from MouseEvent, so defining ctrlKey here shadows the inherited getter
Object.defineProperty(PointerEvent.prototype, 'ctrlKey', {
  get() {
    // If this is a click on an external http(s) link in the chat, claim the Ctrl key was pressed
    const el = this.target // srcElement is a legacy alias for target
    if (this.type === 'click' && el?.tagName === 'A' &&
        (el.href.startsWith('https://') || el.href.startsWith('http://')) &&
        el.closest('.contents'))
      return true

    // Otherwise fall back to the default
    return MouseEvent_prototype_ctrlKey.get.apply(this)
  },
  enumerable: true,
  configurable: true,
})
```
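The link-matching condition is the part most likely to need adjusting when the site’s markup changes. Here it is pulled out into a pure function so it can be checked outside the browser. This is only a sketch: `isExternalChatLink` is a hypothetical name, and `.contents` is simply the selector from the userscript above, which may stop matching if Claude’s DOM changes.

```javascript
// Hypothetical pure version of the condition inside the userscript's getter.
// `el` is duck-typed: anything with tagName, href, and closest(selector) works,
// so it can be exercised with plain objects instead of real DOM elements.
function isExternalChatLink(el) {
  return el?.tagName === 'A' &&
    (el.href.startsWith('https://') || el.href.startsWith('http://')) &&
    Boolean(el.closest('.contents'))
}

// Stub elements for illustration
const inChat = { tagName: 'A', href: 'https://example.com/', closest: sel => (sel === '.contents' ? {} : null) }
const outsideChat = { ...inChat, closest: () => null }
const mailLink = { ...inChat, href: 'mailto:someone@example.com' }

console.log(isExternalChatLink(inChat))      // true
console.log(isExternalChatLink(outsideChat)) // false
console.log(isExternalChatLink(mailLink))    // false
```

Keeping the check in one function means the getter only has to call `isExternalChatLink(this.target)` if you later want to reuse it.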

I think of this post as a step towards making LLM responses Epistemically Legible

Excerpts from System Prompts

GPT-5.5-Thinking on ChatGPT.com

- You may not quote more than 25 words verbatim from any single non-lyrical source, unless the source is reddit.

[...]

- Each webpage source in the sources has a word limit label formatted like “[wordlim N]”, in which N is the maximum number of words in the whole response that are attributed to that source. If omitted, the word limit is 200 words.

- Non-contiguous words derived from a given source must be counted to the word limit.

- The summarization limit N is a maximum for each source. The assistant must not exceed it.

**COPYRIGHT HARD LIMITS—APPLY TO EVERY RESPONSE:**

- Paraphrasing-first. Claude avoids direct quotes except for rare exceptions

- Reproducing fifteen or more words from any single source is a SEVERE VIOLATION

- ONE quote per source MAXIMUM—after one quote, that source is CLOSED

These limits are NON-NEGOTIABLE. See [CRITICAL_COPYRIGHT_COMPLIANCE] for full rules.

Enforce a strict 125-character maximum for quotes from any source document.

(In Claude Code, your main model, e.g. Opus 4.7, calls the WebFetch tool with a URL and a prompt. The tool makes an HTTP request and passes the response, the prompt, and the WebFetch tool’s system prompt to a smaller model, e.g. Haiku 4.5. The above is an excerpt from the system prompt passed to Haiku 4.5, as of the Claude Code source code leak from 1.5 months ago.)
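The “[wordlim N]” rule in the GPT-5.5 excerpt above is mechanical enough to sketch. This is a minimal illustration, not how ChatGPT actually enforces it: it assumes the label appears somewhere in a source’s label string and that the caller already knows which snippets of the response are attributed to that source. `wordLimit` and `withinWordLimit` are hypothetical names.

```javascript
// Hypothetical sketch of the "[wordlim N]" rule from the GPT-5.5 system prompt excerpt.
// Assumption: the label is embedded in a source's label string, e.g. "[wordlim 50] Some Page".
const DEFAULT_WORD_LIMIT = 200 // "If omitted, the word limit is 200 words."

function wordLimit(sourceLabel) {
  const match = /\[wordlim (\d+)\]/.exec(sourceLabel)
  return match ? Number(match[1]) : DEFAULT_WORD_LIMIT
}

// Non-contiguous snippets attributed to the same source all count toward one shared limit.
function withinWordLimit(sourceLabel, attributedSnippets) {
  const wordCount = attributedSnippets
    .flatMap(snippet => snippet.split(/\s+/))
    .filter(word => word.length > 0).length
  return wordCount <= wordLimit(sourceLabel)
}

console.log(wordLimit('[wordlim 50] Example page'))          // 50
console.log(wordLimit('Example page'))                        // 200
console.log(withinWordLimit('[wordlim 3]', ['a b', 'c']))     // true
console.log(withinWordLimit('[wordlim 3]', ['a b', 'c d']))   // false
```

Summing over all snippets, rather than checking each one, is what “Non-contiguous words derived from a given source must be counted to the word limit” amounts to.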

I don’t think I am that good at judging relevance for this, but here are some candidates:

heated

Effective Aspersions: How the Nonlinear Investigation Went Wrong (Gwern: “WTF. You know better.”)

[Meta] New moderation tools and moderation guidelines (extremely deep comment threads)

funny

stories

Q: Which things were you surprised to learn are not metaphors?

How to Convince my Son that Drugs are Bad (“update that this account was not the father of a 16 year old, but was the 16 year old”)

johnswentworth oxytocin this feels like it could be spun into something

See also: list of comments with high karma and low agreement

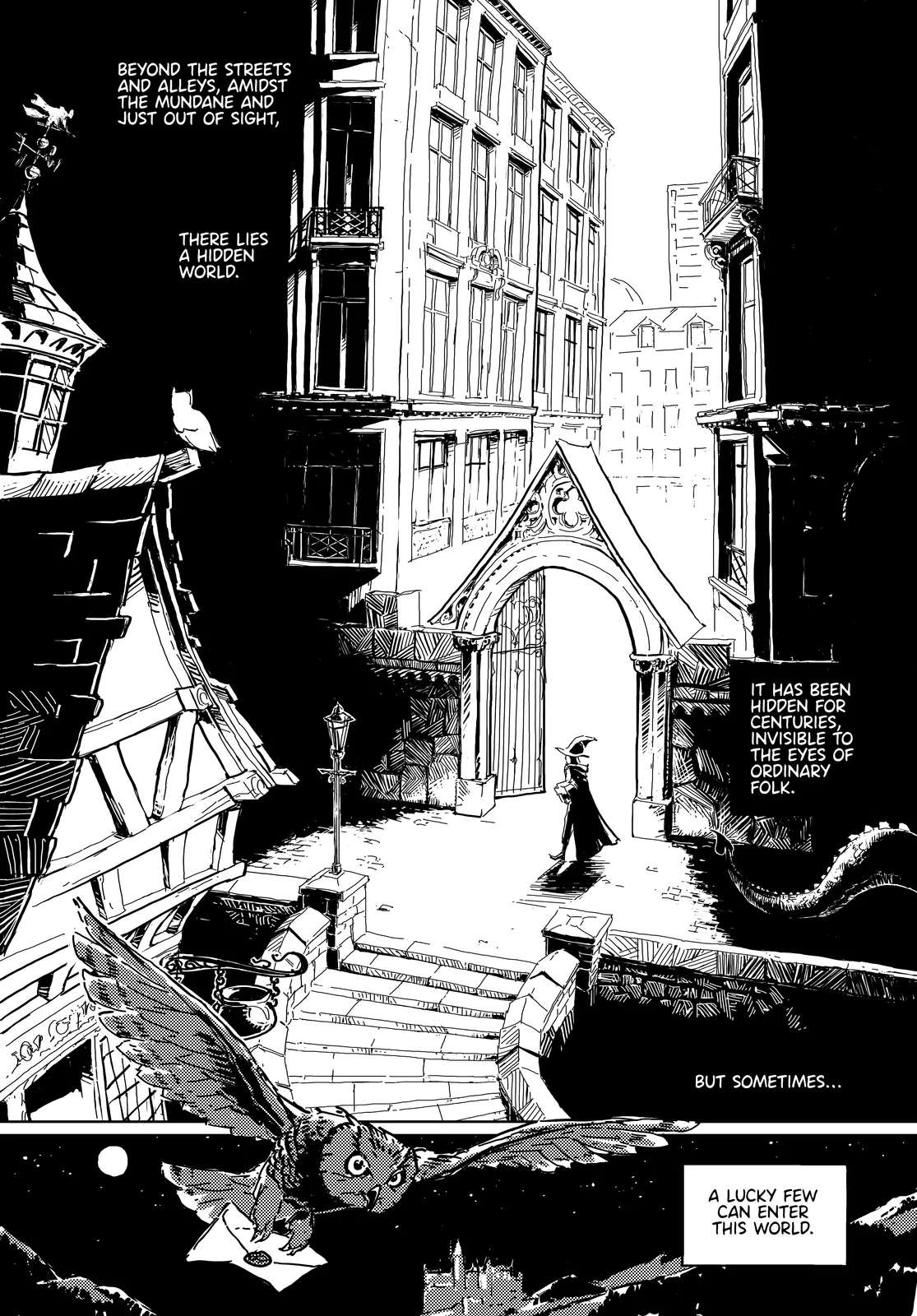

@Hunternif is making a comic for Harry Potter and the Methods of Rationality.

You can read it at hpmorcomic.com (or MangaDex, Webtoons, NamiComi, Twitter, Reddit, BlueSky).

It currently goes up to chapter 5. I pay them $5/month on Patreon to incentivize them to work on it more.

Here is the first page:

Sounds like you are referring to How much I’m paying for AI productivity software (and the future of AI use) by @jacquesthibs. It is somewhat out of date, though, as it seems to have last been significantly updated at the end of 2024. See also 18 comments on LessWrong.

Curiously, I had no problem parsing that sentence and actually stumbled over the next sentence, which was:

That is the one editing task that I ask Claude to do for me.

Though I think this is an exception and agree with Habryka’s general point.

words are clusters in thingspace

TypeError: words are pointers to clusters in thingspace

I’ve been listening a lot to @girllich1’s “Skill Issue”. As she said on Twitter:

Wrote a fan song for the recent Merrin / Irorians glowfic https://www.youtube.com/watch?v=YfSGXmYJVdI

(Found via Yudkowsky retweeting; thanks to both.)

LifeExtension.com—Melatonin 0.3mg mostly because it’s 0.3mg

Tangentially related: How Many Shower Controls Are There? · Gwern.net

What dangers are you thinking of?

Most dangers I would associate with “inject arbitrary JS” are not possible here because of the browser’s sandboxing, e.g. stealing cookies, acting on behalf of the user, changing UI that the user trusts, …

Authors can write HTML and JavaScript that runs in a sandboxed iframe right in the document

If you look in the codebase, you’ll find what amounts to

`<iframe sandbox="allow-scripts" srcdoc="Your arbitrary HTML here" />`
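Because `srcdoc` carries the author’s whole HTML document as an attribute value, building that markup as a string means escaping it; the HTML spec asks for `&` and `"` to be escaped inside a double-quoted `srcdoc`. A minimal sketch, assuming nothing about how LessWrong actually does it (`escapeAttribute` and `sandboxedIframe` are hypothetical names). Granting only `allow-scripts`, and not `allow-same-origin`, gives the framed document an opaque origin, which is why it cannot read LessWrong’s cookies or reach into the parent page.

```javascript
// Hypothetical helpers, not LessWrong's actual code.
// Escape a string for use inside a double-quoted HTML attribute value.
// "&" must be replaced first so the "&" in "&quot;" is not escaped again.
function escapeAttribute(value) {
  return value.replace(/&/g, '&amp;').replace(/"/g, '&quot;')
}

// Only "allow-scripts" is granted: without "allow-same-origin" the frame runs in an
// opaque origin, and without "allow-top-navigation" it cannot navigate the parent page.
function sandboxedIframe(authorHtml) {
  return `<iframe sandbox="allow-scripts" srcdoc="${escapeAttribute(authorHtml)}"></iframe>`
}

console.log(sandboxedIframe('<p title="x">a & b</p>'))
// <iframe sandbox="allow-scripts" srcdoc="<p title=&quot;x&quot;>a &amp; b</p>"></iframe>
```

When creating the frame through the DOM instead (`iframe.srcdoc = authorHtml`), no manual escaping is needed, since the value never passes through the HTML parser as an attribute.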

I can think of some things that are definitely still possible:

phishing: trick you into thinking the iframe is part of LessWrong, e.g. by pretending to be a Manifold embed and asking you to log in

tracking: like on any website; it can’t do stuff that it couldn’t also do on some personal website

For

My guess is if you look at the politicians who have been proposing this, they will also refer to this as “a ban on chatbots giving medical advice”. I haven’t looked this up, but I am a bit above 50% that the supporting side also thinks about this as a ban.

I checked on Twitter.

@SenGonzalezNY (2026-03-06), quoting the viral tweet and attempting to clarify:

Chatbots shouldn’t claim to be a doctor, lawyer or any other licensed professional. My bill, S7263, stops chatbots from impersonating licensed professions while allowing those bots to still give advice. Here’s a thread on what the bill does/doesn’t do & why it’s important:

It’s illegal to practice high-risk professions without a license, and it’s a crime to pretend to have a license. If someone impersonates a doctor and gives advice that makes you sick, they would be held criminally liable. The same standard should apply to AI chatbots!

There’s many documented cases of chatbots giving fake license numbers. You should have the right to seek damages if a chatbot tells you it’s a doctor, a lawyer, a veterinarian, or any other licensed professional and gives you bad advice.

This legislation does not prohibit a user asking a chatbot questions or receiving general information/advice, as long as the chatbot is not presenting that information as a licensed professional. This bill does hold AI companies liable when their products harm NYers.

So to summarize, S7263:

✅ Creates liability when chatbots impersonate licensed professionals

✅ Holds chatbots to the same legal standard as humans

✅ Protects users from misinformation, scams, & fraud

S7263 does NOT:

❌ Ban chatbots from answering questions or giving advice about health, law, or any topic related to a licensed profession, as long as it is not presenting as a licensed professional

❌ Ban the use of AI for help

❌ Outlaw chatbots

It should be noted that replies on Twitter dispute whether this interpretation matches the bill text.

From a year ago there is also @SenGonzalezNY (2025-03-21):

During AI Week I spoke to News10 to call for the passage of the New York AI Act (S1169) and the Chatbot Liabilities Bill (S7263). We must prioritize increasing transparency and accountability when using AI technologies.

The co-sponsors don’t seem to have commented on this bill on Twitter.

update?

Eliezer Yudkowsky

2023-12-26 (bolding by me):

If I’d been thinking harder, earlier, about how the entire Internet can pick up any phrase in my writing, divorce it from context, and misunderstand it, I would have been careful to use more technical words that conveyed less illusion of understanding, in saying “How much you are currently winning is a sanity check on the maximum amount of rational you can claim to have been” and “Any time you find yourself saying that the winning action-strategy is not the most rational one, check the meaning of your words and not just your reasoning.”

Pleo’s Law: “All social platforms evolve to become TikTok.”

Based on Zawinski’s Law.