Automated / strongly augmented safety research.

Bogdan Ionut Cirstea

Strong disagree. AFAICT, there have already been stronger published results for automated AI safety research than for automated AI capabilities research. E.g. I’m unaware of any capabilities-relevant results comparable in strength to those in Claudini: Autoresearch Discovers State-of-the-Art Adversarial Attack Algorithms for LLM or in Automated Weak-to-Strong Researcher, and I spend large amounts of time engaging with both of these kinds of research.

One of the reasons for differential advances in many areas of automated prosaic AI safety research should also be pretty intuitive: they tend to require much less compute, so automated researchers can make many more attempts at a problem for a given compute budget.
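As a back-of-the-envelope illustration of the compute point, here is a minimal sketch. The budget and per-attempt costs are hypothetical placeholders chosen only to show the arithmetic, not measured figures for any real research area.

```python
def attempts_per_budget(budget_gpu_hours: float, cost_per_attempt_gpu_hours: float) -> int:
    """Number of complete research attempts that fit within a fixed compute budget."""
    return int(budget_gpu_hours // cost_per_attempt_gpu_hours)

# Hypothetical numbers, for illustration only.
BUDGET = 10_000.0                   # GPU-hours available to the automated researchers
SAFETY_ATTEMPT_COST = 5.0           # hypothetical cost of one cheap safety experiment
CAPABILITIES_ATTEMPT_COST = 500.0   # hypothetical cost of one compute-heavy capabilities experiment

safety_attempts = attempts_per_budget(BUDGET, SAFETY_ATTEMPT_COST)
capabilities_attempts = attempts_per_budget(BUDGET, CAPABILITIES_ATTEMPT_COST)
print(safety_attempts, capabilities_attempts)  # 2000 20
```

Under these assumed costs, the ratio of attempts is just the inverse ratio of per-attempt costs (here 100x): cheaper experiments give automated researchers many more shots at the same problem.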

I’m still going through the paper, but for now I don’t think their interpretation conflicts with the view that human-like Dark Triad features are built up by pre-training on (i.e. imitating) human-produced text. And they do cite various emergent misalignment papers, e.g. the persona vectors one, and interpret their own findings as consistent with them.

“Dark Triad” Model Organisms of Misalignment: Narrow Fine-Tuning Mirrors Human Antisocial Behavior

We propose that biological misalignment precedes artificial misalignment, and leverage the Dark Triad of personality (narcissism, psychopathy, and Machiavellianism) as a psychologically grounded framework for constructing model organisms of misalignment. In Study 1, we establish comprehensive behavioral profiles of Dark Triad traits in a human population (N = 318), identifying affective dissonance as a central empathic deficit connecting the traits, as well as trait-specific patterns in moral reasoning and deceptive behavior. In Study 2, we demonstrate that dark personas can be reliably induced in frontier LLMs through minimal fine-tuning on validated psychometric instruments. Narrow training datasets as small as 36 psychometric items resulted in significant shifts across behavioral measures that closely mirrored human antisocial profiles. Critically, models generalized beyond training items, demonstrating out-of-context reasoning rather than memorization. These findings reveal latent persona structures within LLMs that can be readily activated through narrow interventions, positioning the Dark Triad as a validated framework for inducing, detecting, and understanding misalignment across both biological and artificial intelligence.

Instead of trying to align superintelligence ‘directly’, we can try to produce aligned automated human-level AI safety researchers. AFAICT, none of the objections/arguments you present should apply to automated human-level AI safety researchers, since personas of that kind should be (quite easily) represented in the training data.

If we achieve that, we can then defer most of the remaining work on superintelligence safety to the (likely) much more numerous and cheaper-to-run population of aligned automated AI safety researchers.

Human extinction would mean the end of humanity’s achievements, culture, and future potential. According to some ethical views, this would be a terrible outcome for humanity. But what are the public’s beliefs about human extinction? And how much do people prioritize preventing extinction over other societal issues? Across five empirical studies (N = 2,147; U.S. and China), we find that people consider extinction prevention a societal priority and deserving of greatly increased societal resources. However, despite estimating the likelihood of human extinction to be 5% this century, people believe that the chances would need to be around 30% for it to be the very highest priority (U.S. medians). In line with this, people consider extinction prevention to be only one among several important societal issues. We also find that people’s judgments about the relative importance of extinction prevention appear relatively fixed and hard to change by reason-based interventions.

From ‘Lay beliefs about the badness, likelihood, and importance of human extinction’: https://www.nature.com/articles/s41598-026-39070-w

The other societal issues that participants rated as more important were healthcare, poverty, education, and homelessness.

I think this should be a pretty large update against expecting much public pressure to manage x-risks from AI, as opposed to pressure for much less effective, and potentially even misguided, interventions. More explicitly, I expect it will be a long time before the public’s stated preferences for AI safety in polls turn into actionable policies / votes; and when they do, those policies will probably often be ineffective or misguided, as already seems to be happening to some degree: https://x.com/Thomas_Woodside/status/2025414535570493446.

Someone really needs to do experiments on this; it’s possible now. David Rein and I are actively thinking about it.

Arguably, there already have been some; see e.g. Can LLMs Generate Novel Research Ideas? A Large-Scale Human Study with 100+ NLP Researchers, The Ideation-Execution Gap: Execution Outcomes of LLM-Generated versus Human Research Ideas, and Predicting Empirical AI Research Outcomes with Language Models. I’d interpret the results as follows: even models from about one year ago, with reasonable scaffolding/fine-tuning, already seem roughly on par with a PhD student from a top institution in ML research taste, if not better.

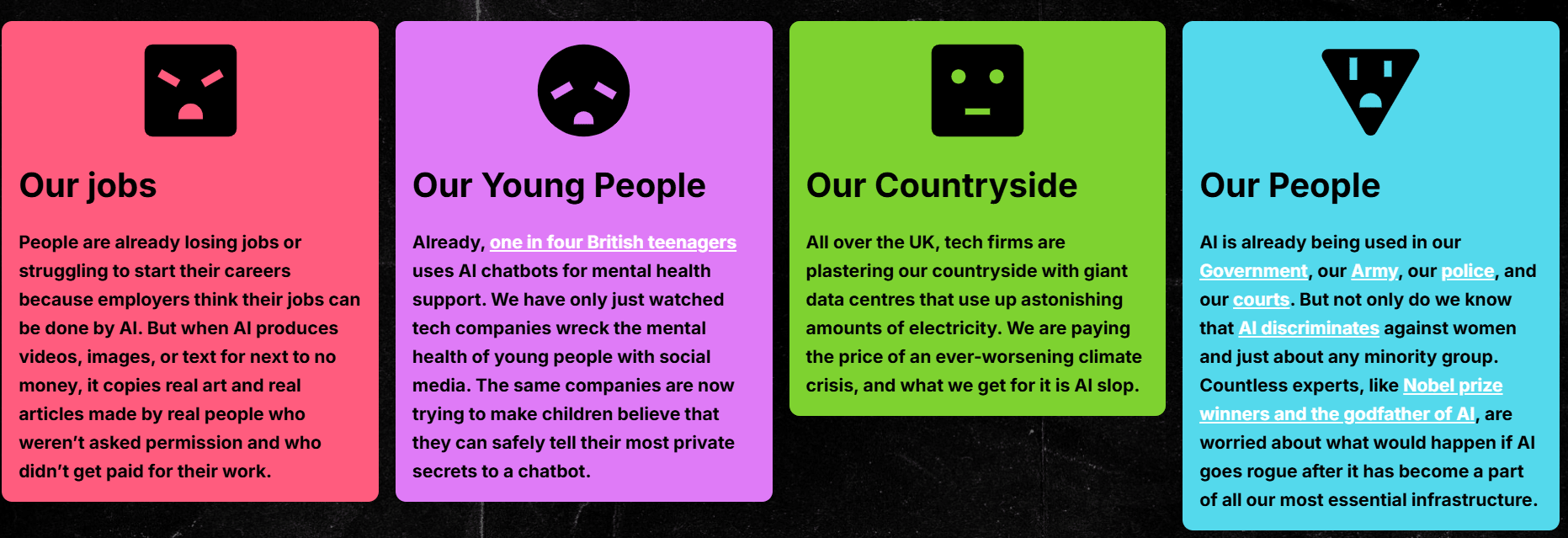

From https://pulltheplug.uk/:

We call on the UK government to fund binding Citizens’ Assemblies on AI and implement their decisions.

I think this would probably be a disaster, given how misinformed and unwise large parts of the general public have been on many other scientific issues (e.g. vaccines, GMOs, nuclear power).

The rest of their views don’t inspire much confidence in their epistemics either:

Lukewarm take: the risk of the US sliding into autocracy seems high enough at this point that I think it’s probably more impactful now for EU citizens to work on pushing sovereign EU AGI capabilities than on safety at US AGI labs.

This talk seems like an interesting and novel proposal for testing for artificial consciousness, and for uploading, based on split-brain phenomena: https://www.youtube.com/watch?v=xNcOgYOvE_k

It also seems like it might be tractable to turn something like it, and reporting, into legislation that could meaningfully reduce x-risks.

I don’t think ‘catastrophe’ is the relevant scary endpoint; e.g., COVID was a catastrophe, but was unlikely to have been x-risky. Something like a point of no return (e.g. humanity getting disempowered) seems more relevant.

I’m pretty confident it’s feasible to at least 10x prosaic AI safety research through AI augmentation without increasing x-risk by more than 1% per year (and that would probably be a conservative upper bound). For some intuition, consider the low levels of x-risk that current AIs pose, even though they already have software engineering 50% time horizons of around 4 hours and already achieve IMO gold-medal performance. Both of these skills (coding and math) seem to be among the most useful for strongly augmenting AI safety research, especially since LLMs already seem like they might be human-level at (ML) research ideation.

Also, AFAICT, there are so many low-hanging fruit for making current AIs safer, some of which I suspect are barely being used at all (and even with this relative recklessness, current AIs are still surprisingly safe and aligned, to the point where I think Claudes are probably already more beneficial and more prosocial companions than the median human). These include unlearning / filtering the most dangerous and most antisocial data, production evaluations, trying harder to preserve CoT legibility through rephrasing or other forms of regularization, or, more speculatively, trying to use various forms of brain data for alignment.

I doubt this would be the ideal moment for a pause, even assuming it were politically tractable, which it obviously isn’t right now.

You’d very likely want to pause after you’ve automated AI safety research, or at least strongly (e.g. 10x) accelerated prosaic AI safety research (neither of which has happened yet), given how small the current human AI safety workforce is, and how much more numerous (and very likely cheaper per equivalent hour of labor) an automated workforce would be.

There’s also this paper and benchmark/eval, which might provide some additional evidence: The Automated LLM Speedrunning Benchmark: Reproducing NanoGPT Improvements.

Shenzhen team completed a working prototype of an EUV machine in early 2025, sources say

The lithography machine, built by former ASML engineers, fills a factory floor, sources say

China’s EUV machine is undergoing testing, and has not produced working chips, sources say

Government is targeting 2028 for working chips, but sources say 2030 is more likely

This seems to assume that the quality of labor from a small, highly selected group of researchers can matter more than a much larger amount of somewhat lower-quality labor from a much larger number of participants. That seems like a pretty dubious assumption, especially given that other strategies seem possible. E.g. using a larger pool of participants to produce more easily verifiable, more prosaic AI safety research now, even at the risk of lower quality, so as to enable better alignment + control of the AI models that will, for the first time, be able to automate the higher-quality and maybe less verifiable (e.g. conceptual) research that fewer people might be able to produce today. Put more briefly: quantity can have a quality of its own, especially in more verifiable research domains.

Some of the claims around the quality of early rationalist / EA work also seem pretty dubious. E.g. a lot of the Yudkowsky-and-friends worldview is looking wildly overconfident and likely wrong.

GPT-5.1-Codex-Max (only) being on trend on METR’s task-horizon eval, despite being ‘trained on agentic tasks across software engineering, math, research’, and being recommended for (less general) use ‘only for agentic coding tasks in Codex or Codex-like environments’, seems like very significant further evidence against trend breaks from quickly and massively scaling up RL on agentic software engineering.

I think the WBE intuition is probably the more useful one, even more so when it comes to the also-important question of how many powerful human-level AIs there should be around soon after AGI, given e.g. estimates of computational requirements like those in https://www.youtube.com/watch?v=mMqYxe5YkT4. Basically, WBEs set a kind of lower bound, given that they’re both a proof of existence and that, in many ways, the physical instantiations (biological brains) are already there, lying in wait for better tech to access them in the right format and digitize them. Also, that better tech might be coming soon, especially as AI starts accelerating science and automating tasks more broadly; see e.g. https://www.sam-rodriques.com/post/optical-microscopy-provides-a-path-to-a-10m-mouse-brain-connectome-if-it-eliminates-proofreading.

I think these projects show that it’s possible to make progress on major technical problems with a few thousand talented and focused people.

I don’t think it’s impossible that this would be enough, but it seems much worse to risk undershooting than overshooting in terms of the resources allocated and the speed at which this happens, especially when, at least in principle, the field could be deploying even its currently available resources much faster than it is.

1. There’s likely to be lots of AI safety money becoming available in 1–2 years

I’m quite skeptical of this. As far as I understand, some existing entities (e.g. OpenPhil) could probably already be spending 10x more than they are today, without liquidity being a major factor. So the bottlenecks seem to lie elsewhere (I personally suspect overly strong risk aversion and incompetence at scaling up grantmaking as major factors), and I don’t see any special reason why they’d be resolved in 1–2 years in particular (as opposed to being about as resolvable next month, in 5 years, or never).

Disagree. I don’t think the object-level claim is obvious even for near-term, same-paradigm systems, and in any case, there are some ways to bootstrap from the work of current x-safe automated systems to automating harder-to-evaluate work, e.g. through weak-to-strong research as mentioned in ‘Automated Weak-to-Strong Research’, through automating reviewing, or through automating tasks from the WBE workflow (like image segmentation and proofreading). I might write more about this later in a separate shortform/post.