On Being Decoherent

Previously in series: The So-Called Heisenberg Uncertainty Principle

“A human researcher only sees a particle in one place at one time.” At least that’s what everyone goes around repeating to themselves. Personally, I’d say that when a human researcher looks at a quantum computer, they quite clearly see particles not behaving like they’re in one place at a time. In fact, you have never in your life seen a particle “in one place at a time” because they aren’t.

Nonetheless, when you construct a big measuring instrument that is sensitive to a particle’s location—say, the measuring instrument’s behavior depends on whether a particle is to the left or right of some dividing line—then you, the human researcher, see the screen flashing “LEFT”, or “RIGHT”, but not a mixture like “LIGFT”.

As you might have guessed from reading about decoherence and Heisenberg, this is because we ourselves are governed by the laws of quantum mechanics and subject to decoherence.

The standpoint of the Feynman path integral suggests viewing the evolution of a quantum system as a sum over histories, an integral over ways the system “could” behave—though the quantum evolution of each history still depends on things like the second derivative of that component of the amplitude distribution; it’s not a sum over classical histories. And “could” does not mean possibility in the logical sense; all the amplitude flows are real events...

Nonetheless, a human being can try to grasp a quantum system by imagining all the ways that something could happen, and then adding up all the little arrows that flow to identical outcomes. That gets you something of the flavor of the real quantum physics, of amplitude flows between volumes of configuration space.

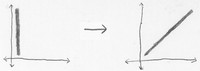
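If it helps to see the arrow-adding spelled out, here is a minimal sketch in Python. The list of paths and their amplitudes are made-up toy numbers, and the name `sum_over_paths` is mine; real amplitude flows are continuous, not a four-item list.

```python
from collections import defaultdict


def sum_over_paths(paths):
    """Add the complex amplitudes of all paths that end in the same configuration."""
    totals = defaultdict(complex)
    for final_configuration, amplitude in paths:
        totals[final_configuration] += amplitude
    return dict(totals)


# Hypothetical toy data: (final configuration, amplitude carried by one path).
paths = [
    ("A", 1 + 0j),
    ("A", -1 + 0j),  # arrow pointing the opposite way from the first path into A
    ("B", 0 + 1j),
    ("B", 0 + 1j),   # arrow pointing the same way as the first path into B
]

totals = sum_over_paths(paths)
for configuration, amplitude in sorted(totals.items()):
    # The squared magnitude is what ends up mattering for what gets observed.
    print(configuration, amplitude, abs(amplitude) ** 2)
```

The two arrows flowing into A cancel, while the two flowing into B reinforce each other. Only arrows that arrive at the identical configuration get added together at all.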

Now apply this mode of visualization to a sensor measuring an atom—say, a sensor measuring whether an atom is to the left or right of a dividing line.

So you end up with an amplitude distribution that contains two blobs of amplitude—a blob of amplitude with the atom on the left, and the sensor saying “LEFT”; and a blob of amplitude with the atom on the right, and the sensor saying “RIGHT”.

For a sensor to measure an atom is to become entangled with it—for the state of the sensor to depend on the state of the atom—for the two to become correlated. In a classical system, this is true only on a probabilistic level. In quantum physics it is a physically real state of affairs.

To observe a thing is to entangle yourself with it. You may recall my having previously said things that sound a good deal like this, in describing how cognition obeys the laws of thermodynamics, and, much earlier, talking about how rationality is a phenomenon within causality. It is possible to appreciate this in a purely philosophical sense, but quantum physics helps drive the point home.

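Say you’ve got an Atom whose position has amplitude bulges on the left and on the right. We can regard the Atom’s distribution as a sum (addition, not multiplication) of the left bulge and the right bulge:

Atom = (Atom-LEFT + Atom-RIGHT)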

Also there’s a Sensor in a ready-to-sense state, which we’ll call BLANK:

Sensor = Sensor-BLANK

By hypothesis, the system starts out in a state of quantum independence—the Sensor hasn’t interacted with the Atom yet. So:

System = (Sensor-BLANK) * (Atom-LEFT + Atom-RIGHT)

Sensor-BLANK is an amplitude sub-distribution, or sub-factor, over the joint positions of all the particles in the sensor. Then you multiply this distribution by the distribution (Atom-LEFT + Atom-RIGHT), which is the sub-factor for the Atom’s position. Which gets you the joint configuration space over all the particles in the system, the Sensor and the Atom.

Quantum evolution is linear, which means that Evolution(A + B) = Evolution(A) + Evolution(B). We can understand the behavior of this whole distribution by understanding its parts. Not its multiplicative factors, but its additive components. So now we use the distributive rule of arithmetic, which, because we’re just adding and multiplying complex numbers, works just as usual:

System = (Sensor-BLANK) * (Atom-LEFT + Atom-RIGHT)

= (Sensor-BLANK * Atom-LEFT) + (Sensor-BLANK * Atom-RIGHT)
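The distributive step can be checked mechanically. Below is a minimal sketch, assuming each sub-factor is a Python dict from a configuration label to a complex amplitude. The helper names `tensor` and `add` and the amplitudes 0.6 and 0.8j are illustrative choices of mine, not anything fixed by the physics.

```python
from itertools import product


def tensor(first, second):
    """Multiply two independent sub-factors into one joint distribution."""
    return {
        (config_a, config_b): amp_a * amp_b
        for (config_a, amp_a), (config_b, amp_b) in product(first.items(), second.items())
    }


def add(first, second):
    """Add two distributions over the same configuration space."""
    total = dict(first)
    for config, amp in second.items():
        total[config] = total.get(config, 0) + amp
    return total


# Assumed toy amplitudes for the three pieces named in the text.
sensor_blank = {"BLANK": 1 + 0j}
atom_left = {"left": 0.6 + 0j}
atom_right = {"right": 0.8j}

# (Sensor-BLANK) * (Atom-LEFT + Atom-RIGHT)
factored = tensor(sensor_blank, add(atom_left, atom_right))
# (Sensor-BLANK * Atom-LEFT) + (Sensor-BLANK * Atom-RIGHT)
distributed = add(tensor(sensor_blank, atom_left), tensor(sensor_blank, atom_right))

print(factored == distributed)
```

Both expressions assign the same amplitude to every joint configuration, which is all the distributive rule claims.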

Now, the volume of configuration space corresponding to (Sensor-BLANK * Atom-LEFT) evolves into (Sensor-LEFT * Atom-LEFT).

Which is to say: Particle positions for the sensor being in its initialized state and the Atom being on the left, end up sending their amplitude flows to final configurations in which the Sensor is in a LEFT state, and the Atom is still on the left. Likewise, (Sensor-BLANK * Atom-RIGHT) evolves into (Sensor-RIGHT * Atom-RIGHT).

So we have the evolution:

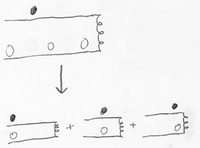

(Sensor-BLANK * Atom-LEFT) + (Sensor-BLANK * Atom-RIGHT)

=>

(Sensor-LEFT * Atom-LEFT) + (Sensor-RIGHT * Atom-RIGHT)
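Here is a toy version of that evolution, to show linearity doing the work. It assumes the measurement simply relabels joint configurations according to the hypothetical `MEASUREMENT` table below. The real evolution is a continuous flow of amplitude, so treat this as a cartoon with invented amplitudes.

```python
# Assumed rule for where each joint (sensor, atom) configuration sends its amplitude.
MEASUREMENT = {
    ("BLANK", "left"): ("LEFT", "left"),
    ("BLANK", "right"): ("RIGHT", "right"),
}


def evolve(state):
    """Linear evolution: move each configuration's amplitude to its successor."""
    evolved = {}
    for config, amp in state.items():
        target = MEASUREMENT[config]
        evolved[target] = evolved.get(target, 0) + amp
    return evolved


def add(first, second):
    """Add two distributions over the same configuration space."""
    total = dict(first)
    for config, amp in second.items():
        total[config] = total.get(config, 0) + amp
    return total


# The two additive components, with made-up amplitudes.
left_part = {("BLANK", "left"): 0.6 + 0j}
right_part = {("BLANK", "right"): 0.8j}

whole = evolve(add(left_part, right_part))            # Evolution(A + B)
parts = add(evolve(left_part), evolve(right_part))    # Evolution(A) + Evolution(B)

print(whole == parts)
print(whole)
```

Evolving the sum and summing the evolved parts give the same final distribution, which is why each blob can be followed separately.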

By hypothesis, Sensor-LEFT is a different state from Sensor-RIGHT—otherwise it wouldn’t be a very sensitive Sensor. So the final state doesn’t factorize any further; it’s entangled.
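One way to make "doesn't factorize" concrete: lay the joint amplitudes out as a table with one row per Sensor state and one column per Atom state. The table is a product of a Sensor factor and an Atom factor exactly when it has rank at most 1, meaning every 2x2 minor is zero. The sketch below assumes a three-state Sensor and a two-state Atom with invented amplitudes; `factorizes` is a name I made up.

```python
from itertools import combinations

SENSOR_STATES = ("BLANK", "LEFT", "RIGHT")
ATOM_STATES = ("left", "right")


def factorizes(state, tolerance=1e-12):
    """True if the joint amplitudes can be written as sensor_factor * atom_factor.

    Equivalent to the amplitude table having rank at most 1,
    which holds exactly when every 2x2 minor of the table vanishes.
    """
    table = [[state.get((s, a), 0) for a in ATOM_STATES] for s in SENSOR_STATES]
    for row_1, row_2 in combinations(table, 2):
        for col_1, col_2 in combinations(range(len(ATOM_STATES)), 2):
            minor = row_1[col_1] * row_2[col_2] - row_1[col_2] * row_2[col_1]
            if abs(minor) > tolerance:
                return False
    return True


# Toy amplitudes (assumed): the state before and after the measurement.
before = {("BLANK", "left"): 0.6 + 0j, ("BLANK", "right"): 0.8j}
after = {("LEFT", "left"): 0.6 + 0j, ("RIGHT", "right"): 0.8j}

print(factorizes(before))
print(factorizes(after))
```

The state before the measurement passes the check and the state after it fails, because the LEFT and RIGHT rows of the table are no longer multiples of each other.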

But this entanglement is not likely to manifest in difficulties of calculation. Suppose the Sensor has a little LCD screen that’s flashing “LEFT” or “RIGHT”. This may seem like a relatively small difference to a human, but it involves avogadros of particles—photons, electrons, entire molecules—occupying different positions.

So, since the states Sensor-LEFT and Sensor-RIGHT are widely separated in the configuration space, the volumes (Sensor-LEFT * Atom-LEFT) and (Sensor-RIGHT * Atom-RIGHT) are even more widely separated.

The LEFT blob and the RIGHT blob in the amplitude distribution can be considered separately; they won’t interact. There are no plausible Feynman paths that end up with both LEFT and RIGHT sending amplitude to the same joint configuration. There would have to be a Feynman path from LEFT, and a Feynman path from RIGHT, in which all the quadrillions of differentiated particles ended up in the same places. So the amplitude flows from LEFT and RIGHT don’t intersect, and don’t interfere.
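For a rough sense of scale, here is a back-of-the-envelope sketch. It assumes a toy model in which each particle of the Sensor contributes an independent overlap factor between its LEFT and RIGHT state, so the total overlap available for interference is that factor raised to the number of particles. The value 0.99 and the function name are invented for illustration, and the calculation works in logarithms because the number itself underflows any float.

```python
import math

AVOGADRO = 6.022e23  # rough particle count for a macroscopic display


def log10_overlap(per_particle_overlap, particle_count):
    """log10 of per_particle_overlap ** particle_count, computed without underflow."""
    return particle_count * math.log10(per_particle_overlap)


# Assumed: each particle's LEFT and RIGHT states still overlap by 99%.
for count in (1, 1_000, AVOGADRO):
    print(count, log10_overlap(0.99, count))
```

Even with every single particle almost indifferent to the result, the combined overlap for a macroscopic Sensor has a base-10 logarithm on the order of minus 10^21.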

Formerly, the Atom-LEFT and Atom-RIGHT states were close enough in configuration space, that the blobs could interact with each other—there would be Feynman paths where an atom on the left ended up on the right. Or Feynman paths for both an atom on the left, and an atom on the right, to end up in the middle.

Now, however, the two blobs are decohered. For LEFT to interact with RIGHT, it’s not enough for just the Atom to end up on the right. The Sensor would have to spontaneously leap into a state where it was flashing “RIGHT” on screen. Likewise with any particles in the environment which previously happened to be hit by photons for the screen flashing “LEFT”. Trying to reverse decoherence is like trying to unscramble an egg.

And when a human being looks at the Sensor’s little display screen… or even just stands nearby, with quintillions of particles slightly influenced by gravity… then, under exactly the same laws, the system evolves into:

(Human-LEFT * Sensor-LEFT * Atom-LEFT) + (Human-RIGHT * Sensor-RIGHT * Atom-RIGHT)

Thus, any particular version of yourself only sees the sensor registering one result.

That’s it—the big secret of quantum mechanics. As physical secrets go, it’s actually pretty damn big. Discovering that the Earth was not the center of the universe, doesn’t hold a candle to realizing that you’re twins.

That you, yourself, are made of particles, is the fourth and final key to recovering the classical hallucination. It’s why you only ever see the universe from within one blob of amplitude, and not the vastly entangled whole.

Asking why you can’t see Schrodinger’s Cat as simultaneously dead and alive, is like an Ebborian asking: “But if my brain really splits down the middle, why do I only ever remember finding myself on either the left or the right? Why don’t I find myself on both sides?”

Because you’re not outside and above the universe, looking down. You’re in the universe.

Your eyes are not an empty window onto the soul, through which the true state of the universe leaks in to your mind. What you see, you see because your brain represents it: because your brain becomes entangled with it: because your eyes and brain are part of a continuous physics with the rest of reality.

You only see nearby objects, not objects light-years away, because photons from those objects can’t reach you, therefore you can’t see them. By a similar locality principle, you don’t interact with distant configurations.

When you open your eyes and see your shoelace is untied, that event happens within your brain. A brain is made up of interacting neurons. If you had two separate groups of neurons that never interacted with each other, but did interact among themselves, they would not be a single computer. If one group of neurons thought “My shoelace is untied”, and the other group of neurons thought “My shoelace is tied”, it’s difficult to see how these two brains could possibly contain the same consciousness.

And if you think all this sounds obvious, note that, historically speaking, it took more than two decades after the invention of quantum mechanics for a physicist to publish that little suggestion. People really aren’t used to thinking of themselves as particles.

The Ebborians have it a bit easier, when they split. They can see the other sides of themselves, and talk to them.

But the only way for two widely separated blobs of amplitude to communicate—to have causal dependencies on each other—would be if there were at least some Feynman paths leading to identical configurations from both starting blobs.

Once one entire human brain thinks “Left!”, and another entire human brain thinks “Right!”, then it’s extremely unlikely for all of the particles in those brains, and all of the particles in the sensors, and all of the nearby particles that interacted, to coincidentally end up in approximately the same configuration again.

It’s around the same likelihood as your brain spontaneously erasing its memories of seeing the sensor and going back to its exact original state; while nearby, an egg unscrambles itself and a hamburger turns back into a cow.

So the decohered amplitude-blobs don’t interact. And we never get to talk to our other selves, nor can they speak to us.

Of course, this doesn’t mean that the other amplitude-blobs aren’t there any more, any more than we should think that a spaceship suddenly ceases to exist when it travels over the cosmological horizon (relative to us) of an expanding universe.

(Oh, you thought that post on belief in the implied invisible was part of the Zombie sequence? No, that was covert preparation for the coming series on quantum mechanics.

You can go through line by line and substitute the arguments, in fact.

Remember that the next time some commenter snorts and says, “But what do all these posts have to do with your Artificial Intelligence work?”)

Disturbed by the prospect of there being more than one version of you? But as Max Tegmark points out, living in a spatially infinite universe already implies that an exact duplicate of you exists somewhere, with probability 1. In all likelihood, that duplicate is no more than 10^(10^29) lightyears away. Or 10^(10^29) meters away; with numbers of that magnitude it’s pretty much the same thing.
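The "pretty much the same thing" remark is easy to check. Converting lightyears to meters multiplies the distance by about 10^16, which only adds about 16 to an exponent that is itself 10^29. A small sketch, with variable names of my own choosing:

```python
import math

SPEED_OF_LIGHT_M_PER_S = 299_792_458   # exact by definition of the metre
JULIAN_YEAR_S = 31_557_600             # 365.25 days of 86,400 seconds each
METERS_PER_LIGHTYEAR = SPEED_OF_LIGHT_M_PER_S * JULIAN_YEAR_S

# Distance is 10 ** exponent lightyears; switching units adds this to the exponent.
exponent_in_lightyears = 10 ** 29
exponent_shift = math.log10(METERS_PER_LIGHTYEAR)

# How much the exponent changes, relative to its own size.
relative_change = exponent_shift / exponent_in_lightyears

print(exponent_shift)
print(relative_change)
```

The change of units moves the exponent by less than one part in 10^27, far below anything the original estimate could resolve.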

(Stop the presses! Shocking news! Scientists have announced that you are actually the duplicate of yourself 10^(10^29) lightyears away! What you thought was “you” is really just a duplicate of you.)

You also get the same Big World effect from the inflationary scenario in the Big Bang, which buds off multiple universes. And both spatial infinity and inflation are more or less standard in the current model of physics. So living in a Big World, which contains more than one person who resembles you, is a bullet you’ve pretty much got to bite—though none of the guns are certain, physics is firing that bullet at you from at least three different directions.

Maybe later I’ll do a post about why you shouldn’t panic about the Big World. You shouldn’t be drawing many epistemic implications from it, let alone moral implications. As Greg Egan put it, “It all adds up to normality.” Indeed, I sometimes think of this as Egan’s Law.

Part of The Quantum Physics Sequence

Next post: “The Conscious Sorites Paradox”

Previous post: “Where Experience Confuses Physicists”

Speaking of the Big Bang, after being reminded of a question elsewhere I’m curious as to whether people knowledgeable of it can evaluate my interpretation of it here.

I don’t yet see how the possible existence of “duplicates” of me 10^(10^29) (is this different than 10^30?) light years away or “decohered amplitude-blobs of me” has an effect on my subjective conscious experience. Is the known/extrapolatable universe 10^(10^29) years old? That sounds a bit older than popularly presented ages of the universe.

It sounds like you’re writing that these “duplicates” have no effect on our subjective conscious experience. And that does seem to me to be the case (I assume “decohered amplitude-blobs of me” are likely being tortured, but I don’t seem to be experiencing it), although I think a lot more rigorous exploration may be required.

I’m wary, Eliezer, about you moving from “hey we know this thing about quantum mechanics and cosmology” to “hey, we can be confident that the subjective conscious experience won’t be lost post-cryonics or uploading”. It’s good news if it’s true, but I’m concerned by (1) lack of expert consensus on this, and (2) at a gut/intuitive level I sense cues that you want to believe this separate from what the best empiricism tells us, which may simply be that we don’t know yet.

You also get the same Big World effect from the inflationary scenario in the Big Bang, which buds off multiple universes. And both spatial infinity and inflation are implied by the Standard Model of physics.

How exactly do you get spatial infinity from a big bang in finite time? The stories I hear about the big bang are that the universe was initially very, very small at the beginning of the big bang. If it was small then it was finite. How does an object (such as the universe) grow from finite size to infinite size in finite time?

It’s not known whether the Universe is finite or infinite, this article gives more details:

http://en.wikipedia.org/wiki/Shape_of_the_Universe

If the Universe is infinite, then it has always been so even from the moment after the Big Bang; an infinite space can still expand.

It hadn’t quite sunk in until this article that looked at from a sum-over-histories point of view, only identical configurations interfere; that makes decoherence much easier to understand.

Hopefully Anonymous asked:

It’s different by a factor of roughly 10^(10^29). Strictly speaking the factor is 10^(10^29-30), but making that distinction isn’t much more meaningful than distinguishing between metres and lightyears at those distances.

Sebastian, so 10^30 is a 1 with 30 zeros after it. 10^10^29 is a 1 with zillions of zeros after it. Thanks for making that clear.

Eliezer, I think your usage of “Standard Model” is different from that of physicists.

Quantum suicide is a good way to ensure that your next experience will be the trivial “experience” of being a corpse with no brain activity.

So what you’re saying is that God does not play dice, and that frequentism is fundamentally true.

I think this has been the best post so far. I’d like to answer one of my previous questions to make sure I am grokking this; please weigh in if I am off. Here was my question:

Q: Am I correct in assuming that [the amplitude distribution] is independent of (observations, “wave function collapses”, or whatever it is when we say that we find a particle at a certain point)?

A: As I suspected, a bit of a Wrong Question. But yes, there is only one amplitude distribution that progresses over time

Q: For example, let’s say I have a particle that is “probably” going to go in a straight line from x to y, i.e. at each point in time there is a huge bulge in the amplitude distribution at the appropriate point on the line from x to y. If I observe the particle on the opposite side of the moon at some point (i.e. where the amplitude is non-zero, but still tiny), does the particle still have the same probability as before of “jumping” back onto the line from x to y?

A: As was mentioned to me when I first asked this question, the probability of me observing the particle “jump back” is near-zero. What (I think) I realize now is that the reason this is true is that

[Brain being in a state where it remembered particle on the moon 1 second ago] * [Brain being in state where it sees particle “back” on the original line]

is close to zero. (This is of course ignoring the fact that there is no such thing as “this particle” vs “that particle”. I’m pretending this is a new class of particle of which there is only one in the universe. Or something).

BTW, are you going to cover why the probability of a configuration is the square of its amplitude? Or if that was somehow already answered by the Ebborians, could I get a translation? :)

“And both spatial infinity and inflation are standard in the current model of physics.”

As mentioned by a commenter above, spatial infinity is by no means required or implied by physical observation. Non-compact space-times are allowed by general relativity, but so are compact tori (which is a very real possibility) or a plethora of bizarre geometries which have been ruled out by experimental evidence.

Inflation is an interesting theory which agrees well with the small (relative to other areas of physics) amount of cosmological data which has been collected. However, the data by no means implies inflation. In fact, the term “inflation” refers to a huge zoo of models which have many unexplained parameters which can be tuned to fit the data. Physicists are far from absolutely confident in the inflationary picture.

Furthermore, there are serious, serious problems with Many Worlds Interpretation (and likewise for Mangled Worlds), which you neglect to mention here.

I enjoy your take on Quantum Mechanics, Eliezer, and I recommend this blog to everyone I know. I agree with you that Copenhagen is untenable and the MWI is the current best idea. But you talk about some of your ideas like it’s obvious and accepted by anyone who isn’t an idiot. This does your readers a disservice.

I realize that this is a blog and not a refereed journal, so I can’t expect you to follow all the rules. But I can appeal to your commitment to honesty in asking you to express the uncertainty of your ideas and to defer when necessary to the academic establishment.

In the prolog to the QM sequence he does actually repeatedly say <this all is my opinion and others have different opinions and I’ll talk about that later>

“But you talk about some of your ideas like it’s obvious and accepted by anyone who isn’t an idiot. This does your readers a disservice.

I realize that this is a blog and not a refereed journal, so I can’t expect you to follow all the rules. But I can appeal to your commitment to honesty in asking you to express the uncertainty of your ideas and to defer when necessary to the academic establishment.”

Eliezer this is really great advice. Please take it.

Jess Reidel: I enjoy your take on Quantum Mechanics, Eliezer, and I recommend this blog to everyone I know. I agree with you that Copenhagen is untenable and the MWI is the current best idea. But you talk about some of your ideas like it’s obvious and accepted by anyone who isn’t an idiot. This does your readers a disservice.

I realize that this is a blog and not a refereed journal, so I can’t expect you to follow all the rules. But I can appeal to your commitment to honesty in asking you to express the uncertainty of your ideas and to defer when necessary to the academic establishment.

I mentioned the fact that there were problems with mangled worlds, but admit that I didn’t mention what they were (e.g., seeming to predict that we should find ourselves in a very high-entropy world). In fact, the probability I assign to mangled worlds is below 50% - I just think it is a beautiful exemplar of what a non-mysterious explanation should look like. I’m sorry if this is not clear; I should make that point in an upcoming post explicitly about the Born probabilities.

The main problem with MWI is the Born probabilities—which I did mention, at length. I am not aware of any serious problems with MWI besides the Born probabilities. I will discuss continuity and choice of basis in upcoming days.

I will attempt to establish in upcoming posts that all remaining quantum theories worthy of being taken seriously are many-worlds theories. Hidden variables are experimentally disproved; quantum collapse is unphysical. The non-many-worlds theories are not just wrong, they are silly. Academic physics has been committing a Type II silliness error, where something is very silly but academia views it as not silly.

This is a strong statement, but it is what I will be attempting to establish. I hope that, from this perspective, it will be clear why I have delayed talking about complex craziness until simple sanity is established as a foundation for discussion thereof.

Yes, I talk about these ideas as if they are obvious. They are. It’s important to remember that while learning quantum mechanics. It’s not difficult unless you make it difficult. Just because certain academics are currently doing so, is no reason for me to do the same. I explicitly said at the outset (in “Quantum Explanations”) that the views I presented would not be a uniform consensus among physicists, but I was going to leave out the controversies until later, so I could teach the version that I think is simple and sane. Bayesianism before frequentism.

I thought I was taking Tegmark’s word on infinite universes and inflation, but I would seem to have misinterpreted that word, as verified by Wikipedia; my apologies to my readers. I’ve edited accordingly. It is not an important point except for people having emotional problems with many-worlds.

Greg Egan also said:

“Though a handful of self-described Transhumanists are thinking rationally about real prospects for the future, the overwhelming majority might as well belong to a religious cargo cult based on the notion that self-modifying AI will have magical powers.”

Maybe it’s time to stop holding up Greg Egan like some kind of icon.

“But what do all these posts have to do with your Artificial Intelligence work?”

Some of us are in fact pleased by how closely this does have to do with your AI work.

Maybe it’s time to stop holding up Greg Egan like some kind of icon.

Why? Quarantine and Permutation City are still really good books. Egan is an icon of idea-based science fiction, not an icon of futurism.

Maybe you can admire someone who directly thinks you’re a crackpot, but I can’t.

I think people who view me as a crackpot are a valuable antidote to people who drink the same Kool-Aid as I do. It is entirely beside the point if it happens to be the Kool-Aid of Truth, because all Kool-Aid tastes truthy to those who drink it.

“Yes, I talk about these ideas as if they are obvious. They are. It’s important to remember that while learning quantum mechanics. It’s not difficult unless you make it difficult. Just because certain academics are currently doing so, is no reason for me to do the same. I explicitly said at the outset (in “Quantum Explanations”) that the views I presented would not be a uniform consensus among physicists, but I was going to leave out the controversies until later, so I could teach the version that I think is simple and sane. Bayesianism before frequentism.”

That self-flattering interpretation of the first 4 sentences of this quote is pretty clearly not what Jess Reidel meant. He meant that you promote some of your ideas like there is no significant probability that they’re wrong (“obvious” in that sense), when expert consensus differs from that assessment. As for the last two sentences, perhaps he’d be satisfied with a more prominent and obvious disclaimer. For example, at the moment of presentation of the simple and sane version of each explanation, clearly noting that reasonable controversies exist.

Elsewhere in his post he was pretty clear that your attempts to

Hopefully and Jess,

I understand what you’re saying, but I truly and honestly believe that quantum physics as it works in the real universe truly is a hell of a lot simpler than the arguments that people have about quantum physics. I think the arguments are overcomplicated and pointless. Every time I even mention their existence, I worry whether I’m unnecessarily confusing the readers.

Let’s start with the simple version. It’s even the majority version—no, not the unanimous version, but the majority version among theoretical physicists, yes.

I’m not sure it’s possible to teach quantum physics in blog posts if you try to teach the arguments too. The universe is simpler than our arguments about the universe.

If you want a dutifully gracious introduction to quantum physics that carefully acknowledges all the different positions and tries to explain their supporting arguments—treating all sides with respect, and dismissing no idea still believed by a substantial faction of physicists—then there are plenty of books out there which will be happy to confuse the hell out of you.

“… the overwhelming majority might as well belong to a religious cargo cult based on the notion that self-modifying AI will have magical powers.”

“Maybe you can admire someone who directly thinks you’re a crackpot, but I can’t.”

I have a high regard for most extropians (a subset of Transhumans, I think) I know well, but that doesn’t make me believe that the Egan line is more than hyperbole at most. I don’t take it as a slur against anyone whose name I know. I’ve certainly seen evidence that the majority wouldn’t be able to distinguish the magical explanations that appear.

And the fact that Charles Stross thinks that discussing Extropianism is attractive to his market makes me think Egan has more truth on his side.

But I also want to mention Egan’s “Diaspora”. I bring it up often as a great fictional depiction of an AI awakening. I know, I know. “Arguing from fictional evidence.” But many people expect coming to awareness to be magic, and Egan shows how it could happen in a step-by-step manner.

Hopefully: If an exact duplicate of you 10^(10^29) light years away was being tortured you would know, because torture is the description of a physical state. Another copy of your physical state can’t be subject to torture without your also being subject to it. OTOH, I think that you are imagining a physical system that has evolved from a system that was, when described classically, just like you. Such a system doesn’t transmit any information to you, so your consciousness can’t be of it being tortured.

When I say “me” here, I mean my consciousness, the one I’m experiencing right here right now in this locality. So I think it’s your OTOH statement that would be relevant. I read from your post the reasonable point that it’s a presumably impossible paradox that any exact duplicate of me would be tortured while I am not, because then it wouldn’t be my exact duplicate. But presumably by the same big universe logic, 10^(10^29) light years away (or closer) something that is otherwise an exact duplicate of me IS being tortured. Sucks to be that guy! :P

I just wanted to say I’ve benefited greatly from this series, and especially from the last few posts. I’d studied some graduate quantum mechanics, but bailed out before Feynman paths, decoherence, etc; and from what I’d experienced with it, I was beginning to think an intuitive explanation of (one interpretation of) quantum mechanics was nigh-impossible. Thanks for proving me wrong, Eliezer.

The argument (from elegance/Occam’s Razor) for the many-worlds interpretation seems impressively strong, too. I’ll be interested to read the exchanges when you let the one-world advocates have their say.

Hopefully: What do you mean by saying that your consciousness is “in this locality”? How is consciousness “in a locality” at all? “(Stop the presses! Shocking news! Scientists have announced that you are actually the duplicate of yourself 10^(10^29) lightyears away! What you thought was “you” is really just a duplicate of you.)” was a joke.

Like another Dave almost three years ago, I think this post was the most effective so far. Not as in ‘constructed better’, because I suspect that almost everything in previous posts in the QM series and quite a lot in posts elsewhere was building up to this.

I’d been getting used to thinking in terms of sensors being entangled with the particles they sense etc. but references to humans being entangled too seemed to be somewhere between obvious and avoiding the issue: I didn’t feel what that meant. In this thread I’d got to the bottom and was wondering why we were talking about a physically infinite universe when the message of halfway through internalised.

Whether I’ll be persuaded of interpretations on QM is unclear, as I have little maths and less physics so I feel hideously under-qualified to judge based on one side of the argument given that it’s perfectly plausible that the counter-argument relies on tools that I don’t have available. But in terms of the aim of making QM seem reasonable, and non-mysterious this is doing astonishingly well. Given that at a certain level I found the mysteriousness quite reassuring, that’s a particularly tough job.

Let me join all those observing that these are great explanations of QM. But I don’t get why we need to invoke MWI and the Ebborians. If the wavefunction evolves into

(Human-LEFT * Sensor-LEFT * Atom-LEFT) + (Human-RIGHT * Sensor-RIGHT * Atom-RIGHT)

but we only observe

(Human-LEFT * Sensor-LEFT * Atom-LEFT)

then it makes far more sense to me that, rather than conjuring up a completely unobservable universe with clones of ourselves where (Human-RIGHT Sensor-RIGHT Atom-RIGHT) happened, a far more empirical explanation is that it simply didn’t happen. Half of the wavefunction disappears, nondeterministically. Why, as Occam might say, multiply trees beyond necessity? Prune them instead. Multiple “worlds” strike me as no more necessary than the aether or absolute space.

This is addressed in Decoherence is Simple.

(Also, the tag doesn’t work because Less Wrong uses Markdown formatting for comments; if you click “Help” under the comment box you can see a reference to some of the more common constructions.)

(Also, welcome to Less Wrong!)

I think the obvious reply here is ‘keep reading to the end of the Sequence’! After all, quite a lot of space is devoted to looking at different models.

On the Occam’s razor point, the question is what we’re endeavouring to make simple in our theories. Eliezer’s argument is that multiple worlds require no additions to the length of the theory if it was formally expressed, whereas a ‘deleting worlds’ function is additional. It’s also unclear where it would kick in, what ‘counts’ as a sufficiently fixed function to chop off the other bit. It’s not clear from your post if you think the other half’s chopped off because we haven’t observed it, or we don’t observe it because it’s chopped off!

The other point is that if we are ‘Human-LEFT’ then we don’t expect the other part of the wave function to be observable to us. Does that mean we delete it from what is real? The post addressing that question in a context divorced from QM: http://lesswrong.com/lw/pb/belief_in_the_implied_invisible/

Is there a formal expression of the theory of measurement (in a universally agreed upon language) where this can be demonstrated?

I’m fairly certain the answer is “no”.

Basically, what the MWI believer wants to argue is that in a hypothetical universe where we had no hidden variables and no collapses—nothing but unitary evolution under the Schrödinger equation—observers would still have experiences where it ‘seems as if’ there is only one universe, and it ‘seems as if’ their measurement outcomes are probabilistic as described by the Born rule.

On the other hand, the MWI skeptic denies that the formal description of the theory suffices to determine “how things would seem if it were true” without extra mathematical machinery.

Unfortunately, the extra machinery that people propose, in order to bridge the gap between theory and observation, tends to be some combination of complicated, arbitrary-seeming, ugly and inadequate (e.g. the Many Minds of Albert and Loewer, or the various attempts to reduce Born probabilities to ‘counting probabilities’ by De Witt and others). This leaves some people pining for the relative simplicity and elegance of Bohm’s theory.

(Bohm’s theory is precisely “QM without collapse + some extra machinery to account for observations”. The only differences are that (a) the extra machinery describes a ‘single universe’ rather than a multiverse and (b) it doesn’t pretend to be the inevitable, a priori ‘unpacking’ of empirical predictions which are already implicit in “QM without collapse”.)

I’m not sure that’s in any debate or that it should be. MWI and the Copenhagen interpretation do produce identical pictures for a person inside. The physics is identical; what’s really different is the ontology. The Bohm theory produces the same results in the non-relativistic picture but apparently has some problems with going relativistic which aren’t resolved. And since relativity definitely is true, that’s a problem.

(a) The Copenhagen Interpretation is incoherent, and for that reason it’s obviously wrong. I wish everyone would just agree never to talk about it again.

(b) It’s a delicate philosophical question whether and how far a formal mathematical theory produces a “picture for the person inside”.

(c) MWI can mean several different things. If, temporarily, we take it to mean “QM without collapses or hidden variables + whatever subjective consequences follow from that” then you have somewhere between a lot of work and an impossible task to deduce the Born probabilities.

(d) I’m not a Bohmian. I think ultimately we will attain a satisfactory understanding of how the Born probabilities are, after all, ‘deducible’ from the rest of QM. Some promising directions include Zurek’s work on decoherence and einselection, Hanson’s notion of “Mangled Worlds”, and cousin_it’s ideas about how to ‘reduce’ the notion of probability to other things.

(a) By the Copenhagen interpretation, do you mean what I meant, i.e. the status-quo interpretation used in most of physics? Would you please explain how it’s obviously incoherent?

(b, c, and some discouragement for d) The delicacy is more a property of philosophers than the universe. If people are built out of ordinary matter, then in thought experiments (e.g. Schrödinger’s cat, but with people) we can swap them for any other system with the right number of possible states (one state per possible outcome). Since we don’t ever subjectively experience being in a superposition, it’s fairly obvious that you have to get rid of the resulting superposition if you ask what a person subjectively experiences. To do that you want to do a thing called tracing out the environment (Sorry, no good page on this, but it’s this operation) that in short changes entanglement to probabilistic correlation. 3 guesses what the probabilities are (though this really just moves the information about Born probabilities from an explicit rule to the rules of density matrices, so it’s not really “deducible”).
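For readers who want to see what “tracing out the environment” does in the smallest possible case, here is a minimal sketch in plain Python. The amplitudes 0.3 and 0.7, the two-state environment, and the assumption that the two environment states are perfectly orthogonal are all illustrative choices, not anything taken from the thread. It only shows the bookkeeping: the reduced density matrix of the entangled state is diagonal, with the squared amplitudes on the diagonal, while the unentangled state keeps its off-diagonal terms.

```python
import math

def reduced_density_matrix(psi):
    """Trace out the environment from a pure state.

    psi[s][e] is the amplitude for system state s and environment state e.
    Returns rho[s][t] = sum over e of psi[s][e] * conj(psi[t][e]).
    """
    n_sys = len(psi)
    n_env = len(psi[0])
    return [
        [sum(psi[s][e] * psi[t][e].conjugate() for e in range(n_env))
         for t in range(n_sys)]
        for s in range(n_sys)
    ]

# Hypothetical amplitudes for the atom being LEFT or RIGHT.
a = complex(math.sqrt(0.3), 0.0)
b = complex(0.0, math.sqrt(0.7))

# Entangled state a|LEFT>|E_left> + b|RIGHT>|E_right>, with the two
# environment states assumed perfectly orthogonal.
entangled = [[a, 0j],
             [0j, b]]

# Unentangled comparison: (a|LEFT> + b|RIGHT>)|E_left>.
product = [[a, 0j],
           [b, 0j]]

rho_entangled = reduced_density_matrix(entangled)
rho_product = reduced_density_matrix(product)

print("entangled diagonal:", rho_entangled[0][0].real, rho_entangled[1][1].real)
print("entangled off-diagonal magnitude:", abs(rho_entangled[0][1]))
print("product off-diagonal magnitude:", abs(rho_product[0][1]))
```

As the comment itself says, this does not derive the Born probabilities; reading the diagonal entries as probabilities is exactly the rule that is built into how density matrices are used.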

If the wavefunction collapses upon measurement, and no adequate definition of the term “measurement” has been given, then the theory as it stands is incoherent. (I realize that a Copenhagenist thinks that they can get around this by simply denying that the wavefunction exists, but the price of that move is that they don’t have any coherent picture of reality underneath the mathematics.)

OK, but let’s note in passing that the MWI believer needs to show this a priori, whether from the mathematics of QM or by deconstructing the concept of “experiencing a superposition” or both. I don’t think that should be particularly difficult, though.

I know what that means.

The trouble is at some point you need to explain why our measurement records show sequences of random outcomes distributed according to the Born rules. Now it may be the case that branches where measurement records show significant deviation from the Born rules have ‘low amplitude’, but then you need to explain why we don’t experience ourselves in “low amplitude branches”. More precisely, you need to explain why we seem to experience ourselves in branches at rates proportional to the norm squared of the amplitudes of the branches (whatever this talk of ‘experiencing ourselves’ is supposed to mean, and whatever a ‘branch’ is supposed to be). Why should that be true? Why shouldn’t the subjective probabilities simply be a matter of ‘counting up’ branches irrespective of their weighting? After all, the wavefunction still contains all of the information even about ‘lightly weighted’ branches.
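The gap between “counting up” branches and weighting them by squared amplitude can be made concrete with a toy calculation. The sketch below assumes 100 repeated binary measurements with a Born probability of 0.9 for one outcome, and treats each distinct record of outcomes as one “branch”; those numbers and that definition of a branch are assumptions made purely for illustration.

```python
from math import comb

def branch_statistics(n, p, lo, hi):
    """For n binary measurements with Born probability p for outcome 'A',
    return (fraction of branches, total Born weight) over all outcome
    records whose number of 'A' results k satisfies lo <= k <= hi."""
    count = sum(comb(n, k) for k in range(lo, hi + 1))
    weight = sum(comb(n, k) * p**k * (1 - p)**(n - k)
                 for k in range(lo, hi + 1))
    return count / 2**n, weight

n, p = 100, 0.9  # illustrative values only

# Records whose observed frequency is close to the Born value of 90%.
frac_near_born, weight_near_born = branch_statistics(n, p, 85, 95)

# Records whose observed frequency is close to 50%.
frac_near_half, weight_near_half = branch_statistics(n, p, 45, 55)

print("near 90%: fraction of branches", frac_near_born,
      "Born weight", weight_near_born)
print("near 50%: fraction of branches", frac_near_half,
      "Born weight", weight_near_half)
```

Under naive counting, most records show roughly 50/50 statistics; under Born weighting, almost all of the weight sits on records near 90/10. The calculation shows how far apart the two measures are, not which one should govern experience, which is the point in dispute.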

The MWI believer thinks that, by talking about reduced density matrices obtained by ‘tracing out’ the environment, you’ve thereby made good progress towards showing where the Born probabilities come from. But the MWI skeptic thinks that the ‘last little bit’ that you still have to do (i.e. explaining why we experience ourselves in heavily weighted branches more than lightly weighted ones) is and always was the entire problem.

Sorry, I don’t know what you’re talking about.

Not even the quantum-information-ey definition of “transfer of information from the measuree to the measurer?”

What I’m taking from this is that you don’t know many Copenhagenists familiar with Bell’s theorem.

Well I suppose I could tell the MWI (or any sort of) skeptic about what density matrices and mixed states are (other readers: to Wikipedia!), and how when you see a mixed state it is by definition probabilistic.

Well, even after the next question of “why do density matrices work that way?”, you can always ask “why?” one more time. But eventually we, having finite information, will always end with something like “because it works.” So how can we judge explanations? Well, one “why” deeper is good enough for me.

Tsk, fine, 0 guesses then: the probabilities you get from tracing out the environment are the Born probabilities. But as I said this doesn’t count as deducing them, they’re hidden in the properties of density matrices, which were in turn determined using the Born probabilities.

That sounds fine, but there’s no objective way of defining what a “measurer” is. So essentially what you have is a ‘solipsistic’ theory, that predicts “the measurer’s” future measurements but refuses to give any determinate picture of the “objective reality” of which the measurer herself is just a part.

I have to concede that many thinkers are prepared to live with this, and scale down their ambitions about the scientific enterprise accordingly, but it seems unsatisfactory to me. Surely there is such a thing as “objective reality”, and I think science should try to tell us what it’s like.

That may very well be true, but how does pointing out that the Copenhagen Interpretation denies the objective existence of the wavefunction entail it?

Perhaps. My post in the discussion section, and my subsequent comments, try (and fail!) to explain as clearly as I can what troubles me about MWI.

It’s true that an MWI non-collapsing wavefunction has ‘enough information’ to pin down the Born probabilities, and it’s also true that you can’t get the empirical predictions exactly right unless you simulate the entire wavefunction. But it still seems to me that in some weird sense the wavefunction contains ‘too much’ information, in the same way that simulating a classically indeterministic universe by modelling all of its branches gives you ‘too much information’.

I know what you mean, but as I’m sure you know it’s not mere perversity that has led many to accept “modeling all the branches” of the QM universe. In the case of a classically indeterministic universe, you can model just one indeterministic branch, but in the case of the QM universe you can’t do that, or you can’t do it anywhere near as satisfactorily. The “weirdness” of QM is precisely that aspect of it which (in the eyes of many) forces us to accept the reality of all the branches.

Finally, let me return to your original question:

Based on your previous replies to me, it’s evident that you both believe in, and have a fairly sophisticated understanding of, the idea that you can extract the empirical predictions of quantum mechanics from unitary evolution alone (with no hidden variables) and without ‘adding anything’ (like Many Minds or whatever).

Since one obviously does need to ‘add something’ (e.g. rules about collapse, or Bohmian trajectories) in order to obtain a ‘single universe’ theory, it sounds as though you’ve answered your own question. Or at least, it’s not clear to me what kind of answer you were expecting other than that. (I don’t understand how it helps or why it’s necessary to use a ‘formal expression of the theory of measurement’, or even what such a thing would mean.)

Every interpretation is “adding something.” Just because interpreters choose to bundle their extra mechanisms in vague English language “interpretations” rather than mathematical models does not mean they aren’t extra mechanisms. Copenhagen adds an incoherent and subjective entity called “the observer.” MWI adds a preposterous amount of mechanism involving an infinite and ever-exponentially-expanding number of completely unobservable clone universes. Copenhagen grossly violates objectivity and MWI grossly violates Occam’s Razor. Also, MWI needs a way to determine when a “world” splits, or to shove the issue under the rug, every bit as much as collapse theories need to figure out or ignore when collapse occurs. If as many “interpreters” like to claim QM itself is just the wavefunction, then collapse and world-splits are both extra mechanisms.

But QM is not just the wavefunction. QM is also the Born probabilities. The wavefunction predicts nothing if we do not square it to find the probabilities of the events we actually observe. Of all the interpretations, objective collapse adds the least to quantum mechanics as it is actually practiced. Everybody who uses QM for practical purposes uses the Born probabilities or the direct consequences thereof (e.g. spectra). Thus, despite the many who shudder at the nondeterminism of the universe and thus come up with interpretations like Copenhagen and MWI to try to turn inherent nondeterminism into mere subjective ignorance, the nondeterministic quantum event whereby a superposition of eigenvectors reduces to a single eigenvector (and the various other isomorphic ways this can be mathematically represented) is every bit as central to QM as the nominally deterministic wavefunction. The Born probabilities are not in any way “extra mechanism”; they are central to QM. They are even more central than the wavefunction, because all that we observe directly are the Born random events. We never observe the wavefunction directly, but only infer it as defining the probability distribution of the nondeterministic events we do observe.

Thus any interpretation of QM as it is actually practiced must take the Born probabilities as being at least as objective and physical as the wavefunction. If the Born probabilities are objective, we have objective collapse, and neither Copenhagen nor MWI are true.

Wikipedia has a bare-bones description of objective collapse:

http://en.wikipedia.org/wiki/Objective_collapse_theory

Further experimental evidence: if the Born probabilities do not represent an objective and physical randomness that is inherent to the universe, then the EPR/Bell/Aspect et al. work tells us that FTL signaling (and more importantly a variety of related paradoxes, FTL signaling not itself being paradoxical in QM) is possible. QM is not special relativity. Special relativity can’t explain the small scale or even certain macroscale effects like diffraction that QM explains. Special relativity is just an emergent large-scale special case of QM (specifically of QFT); it is QM that is fundamental. QM itself, in the EPR et al. line of work, tells us that it is the objective and physical randomness inherent in the universe, not causal locality, that stands in the way of FTL signaling and its associated paradoxes.

There’s no mechanism to it other than the mechanism that every interpretation of QM already has for describing the evolution of non-macroscopic quantum systems. MWI just says that large systems and small systems aren’t separate magisteria with different laws.

“Worlds” and “branching” are epiphenomenal concepts; they’re simplifications of what MWI actually talks about (see Decoherence is Pointless).

It doesn’t matter whether branching occurs at a point or during some blob of time, probabilistic or otherwise; it’s a central part of MWI and you need an equation to describe when it happens. And that equation should agree with the Born probabilities up to our observational limits. Likewise for collapse in theories that invoke collapse. Otherwise it’s just hand-waving, not science.

What is or is not a “branch” is unimportant. If you have read the link you’ll know that a “branch” is not a point mass but a blob spread out in configuration space. All MWI needs is “the probability density of finding oneself in point x in the wavefunction is the amplitude squared at that point”. It’s standard probability theory then to integrate over a “branch” to find your probability of being in that branch. But the only reason to care about “branches” is because the world looks precisely identical to an observer at every point in that branch.
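The “integrate over a branch” step can be sketched numerically. The example below assumes a one-dimensional configuration space, a hypothetical amplitude distribution made of two well-separated Gaussian blobs with weights 0.3 and 0.7, and a dividing line at x = 0; none of these specifics come from the thread. It integrates the squared amplitude over each side with a simple midpoint rule.

```python
import math

def blob(x, center, sigma=1.0):
    """Real Gaussian wave packet whose square is a normalised density."""
    norm = (2.0 * math.pi * sigma**2) ** -0.25
    return norm * math.exp(-((x - center) ** 2) / (4.0 * sigma**2))

def psi(x):
    """Hypothetical two-blob amplitude distribution (illustrative weights).

    The blobs sit at x = -8 and x = +8, far enough apart that their
    overlap is negligible.
    """
    return math.sqrt(0.3) * blob(x, -8.0) + math.sqrt(0.7) * blob(x, 8.0)

def probability(lo, hi, steps=20000):
    """Midpoint-rule integral of |psi|^2 over the interval [lo, hi]."""
    dx = (hi - lo) / steps
    return sum(abs(psi(lo + (i + 0.5) * dx)) ** 2 for i in range(steps)) * dx

p_left = probability(-20.0, 0.0)   # the blob left of the dividing line
p_right = probability(0.0, 20.0)   # the blob right of the dividing line

print("P(left blob)  =", p_left)
print("P(right blob) =", p_right)
```

Where exactly the dividing line is drawn barely matters here, because almost no squared amplitude lies between the blobs. That is the sense in which the precise definition of a “branch” is unimportant in this picture.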

Not a clue. But in this particular case, the argument is that the theory without multiple worlds is precisely the multi-worlds theory with an extra postulate, so it’s certainly more complicated.

It would really help if some people who knew about the relevant parts of the Sequences lurked around to aid the confused!

“Eliezer’s argument is that multiple worlds require no additions to the length of the theory if it was formally expressed, whereas a ‘deleting worlds’ function is additional. It’s also unclear where it would kick in, what ‘counts’ as a sufficiently fixed function to chop off the other bit.”

Run time is at least as important as length. If we want to simulate evolution of the wavefunction on a computer, do we get a more accurate answer of more phenomena by computing an exploding tree of alternatives that don’t actually significantly influence anything that we can ever observe, or does the algorithm explain more by pruning these irrelevant branches and elaborating the branches that actually make an observable difference? We save exponential time and thus explain exponentially more by pruning the branches.

“It’s not clear from your post if you think the other half’s chopped off because we haven’t observed it, or we don’t observe it because it’s chopped off!”

Neither. QM is objective and the other half is chopped off because decoherence created a mutually exclusive alternative. This presents no more problem for my interpretation (which might be called “quantum randomness is objective” or “God plays dice, get over it”) than it does for MWI (when does a “world” branch off?). It’s the sorites paradox either way.

“The other point is that if we are ‘Human-LEFT’ then we don’t expect the other part of the wave function to be observable to us. Does that mean we delete it from what is real?”

Yes, for the same reason we delete other imagined but unobserved things like Santa Claus, absolute space, and the aether from what we consider real. If we don’t observe them and they are unnecessary for explaining the world we do see, they don’t belong in science.

You’re arguing about something that seems interesting and possibly important, but it doesn’t sound like the mathematical likelihood of the theory. Eliezer starts from a Bayesian interpretation of this number as a rational degree of belief, theoretically determined by the evidence we have. As I understand it, this quantity has a correct value, and the question of how much the theory explains has a definite answer, whether or not we can calculate it. The alternate Discordian or solipsistic view has much to recommend it but runs into problems if we take it as a general principle.

Now run time has no obvious effect on likelihood of truth. I don’t know if message length does either, but at least we have an argument for this (see Solomonoff induction). And the claim that MWI adds an extra postulate of its own seems false. MWI tries to follow Occam’s Razor—in a form that seems to agree with Solomonoff and Isaac Newton—by saying that no causes exist but arrows attached to large sets of numbers, and the function that attaches them. Everything you call magical or imaginary follows directly from this.

Before moving on to the problem with this interpretation, please note that Bayesianism also gives a different account of “unobserved things”. Some of them, like aether and possibly absolute space, decrease the prior likelihood of a theory by adding extra assumptions to the math. (Eliezer argues this applies to objective collapse.) Others, like Santa Claus, would increase the probability of evidence we do not observe. This has no relevance for alternate worlds. The evidence you seem to want has roughly zero probability in the theory you criticize, so its absence doesn’t tell us anything. The argument for adopting the theory lies elsewhere, in the success of quantum math.

Now obviously the Born rule creates a problem for this argument. The theory has a great big mathematical hole in it. But from this Bayesian perspective, and going by the information I have so far, we have no reason to think that whatever fills the hole will reduce the number of “worlds” to exactly one, any more than we have reason to believe in exactly 666 worlds. It really does seem that simple. And from what I’ve managed to read of Feynman and Hibbs the authors definitely believe in more than one world. (“From what does the uncertainty arise? Almost without doubt it arises from the need to amplify the effects of single atomic events to such a level that they may be readily observed by large systems.” p.22) So I don’t think my simple view results from ignorance of QM as it existed then.

You’re almost exactly playing the part of Huve Erett in this dialog:

http://lesswrong.com/lw/q7/if_manyworlds_had_come_first/

Emphasize the “almost”. I’m advocating objective collapse, not Copenhagen.

It sure seems to me as though Huve Erett advocates objective collapse. Maybe you can explain what part of the dialog convinces you that Huve Erett can’t be talking about objective collapse.

“This happens when, way up at the macroscopic level, we ‘measure’ something.”

vs. in objective collapse, when the collapse occurs has no necessary relationship to measurement. “Measurement” is a Copenhagen thing.

“So the wavefunction knows when we ‘measure’ it. What exactly is a ‘measurement’? How does the wavefunction know we’re here? What happened before humans were around to measure things?”

Again, this describes Copenhagen (or even Conscious Collapse, which is even worse). Objective collapse depends on neither measurements nor measurers.

Much of the rest of this parody might be characterized as a preposterously unfair roast of collapse theories, objective or otherwise, but the trouble is all the valid criticisms also apply to MWI. For example “the only law in all of quantum mechanics that is non-linear, non-unitary, non-differentiable and discontinuous” also applies to the law that is necessary for any actually scientific account of MWI, but that MWI people sweep under the rug with incoherent talk about “decoherence”, namely when “worlds” “split” such that we “find ourselves” in one but not the other. AFAIK, no MWI proponent has ever proposed a linear, unitary, or differentiable function that predicts such a split that is consistent with what we actually observe in QM. And they couldn’t, because “world split” is nearly isomorphic with “collapse”: it’s just an excessive way of saying the same thing. If MWI came up with an objective “world branch” function it would serve equally well, or even better given Occam’s Razor, as an objective collapse function. In both MWI and collapse part of the wave function effectively disappears from the observable universe; MWI only adds a gratuitous extra mechanism, that it re-appears in another, imaginary, unobservable “world.”

BTW, the standard way that QM treats the nondeterministic event predicted probabilistically by the wavefunction and the Born probabilities (whether you choose to call such event “collapse”, “decoherence”, “branching worlds”, or otherwise) is completely non-linear, non-unitary, non-differentiable and discontinuous, and worst of all, nondeterministic (horrors!). In the matrix model, it is the “collapse”, if you will forgive the phrase, of a large (often infinite) set of possible eigenvalues and corresponding eigenvectors to one, the one we actually observe, according to the Born probabilities. No matter how much “interpreters” try to sweep this under the rug, this nondeterministic disappearance of all eigenvectors (or their isomorphs in other algebras) save one is central to real-world QM math, and if it weren’t so it wouldn’t predict the quantum events we actually observe. So the dispute here is with QM itself, not with collapse theories.

Well, I don’t agree with the “vs”, but let that pass, since then the dialog quickly continues:

That occurs as early as one fourth of the way through the dialog, so that leaves three fourths of the dialog addressing what you are apparently calling an objective collapse theory.

Eliezer thinks objective collapse = Copenhagen. More precisely, I’ve never seen him distinguish the two, or acknowledge the possibility of denying that the wavefunction exists.

When an object leaves our Hubble volume does it cease to exist?

It’s reasonable to assume run time is important, but problematic to formalize. Run time is much more dependent on the underlying computational abstraction than description length is. Is the computer sequential? parallel? non-deterministic? quantum?

Depending on the underlying computer model MWI could actually be faster than a collapse hypothesis. MWI is totally local, hence easily parallelizable. Collapse hypotheses however require non-local communication, which creates severe bottlenecks for parallel simulations.

“Imagine a universe containing an infinite line of apples.”

If we did I would imagine it, but we don’t. In QM we don’t observe infinite anything, we observe discrete events. That some of the math to model this involves infinities may be merely a matter of convenience to deal with a universe that may merely have a very large but finite number of voxels (or similar), as suggested by Planck length and similar ideas.

“It’s reasonable to assume run time is important, but problematic to formalize.”

Run time complexity theory (and also memory space complexity, which also grows at least exponentially in MWI) is much easier to apply than Kolmogorov complexity in this context. Kolmogorov complexity only makes sense as an order of magnitude (i.e. O(f(x)) with f(x) not merely a constant), because choice of language adds an (often large) constant to program length. So from Kolmogorov theory it doesn’t much matter that one adds a small extra constant amount of bits to one’s theory, making it problematic to invoke Kolmogorov theory to distinguish between different interpretations and equations that each add only a small constant amount of bits.

(Besides the fact that QM is really wavefunction + nondeterministic Born probability, not merely the nominally deterministic wave function on which MWI folks focus, and everybody needs some “collapse”/”world split” rule for when the nondeterministic event happens, so there really is not even any clear constant factor equation description length parsimony to MWI).

OTOH, MWI clearly adds at least an exponential (and perhaps worse, infinitely expanding at every step!) amount of run time, and a similar amount of required memory, not merely a small constant amount. As for the ability to formalize this there’s a big literature of run-time complexity that is similar to, but older and more mature than, the literature on Kolmogorov complexity.

I see. I think you are making a common misunderstanding of MWI (in fact, a misunderstanding I had for years). There is no actual branching in MWI, so the amount of memory required is constant. There is just a phase space (a very large phase space), and amplitudes at each point in the phase space are constantly flowing around and changing (in a local way).
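The claim that memory stays constant can be illustrated with a toy state-vector simulation. This is only a sketch under strong assumptions: three two-state systems standing in for atom, sensor and human (the labels are illustrative), a Hadamard gate to put the atom in superposition, and CNOT gates standing in for “measurement” interactions. The vector always has 2^3 = 8 entries; what looks like branching is amplitude moving between those fixed entries.

```python
import math

N_QUBITS = 3  # bit 0 = atom, bit 1 = sensor, bit 2 = human (illustrative labels)

def hadamard(state, q):
    """Apply a Hadamard gate to qubit q of a state vector."""
    out = [0j] * len(state)
    s = 1.0 / math.sqrt(2.0)
    for i, amp in enumerate(state):
        bit = (i >> q) & 1
        i0 = i & ~(1 << q)  # same basis index with qubit q cleared
        i1 = i | (1 << q)   # same basis index with qubit q set
        out[i0] += s * amp
        out[i1] += (-s if bit else s) * amp
    return out

def cnot(state, control, target):
    """Flip the target qubit in every basis state where control is 1."""
    out = [0j] * len(state)
    for i, amp in enumerate(state):
        j = i ^ (1 << target) if (i >> control) & 1 else i
        out[j] += amp
    return out

# Start with everything in state 0: atom LEFT, sensor blank, human blank.
state = [0j] * (2 ** N_QUBITS)
state[0] = 1 + 0j
sizes = [len(state)]

state = hadamard(state, 0)   # atom in superposition of LEFT and RIGHT
sizes.append(len(state))
state = cnot(state, 0, 1)    # sensor becomes correlated with the atom
sizes.append(len(state))
state = cnot(state, 1, 2)    # human becomes correlated with the sensor
sizes.append(len(state))

occupied = [i for i, amp in enumerate(state) if abs(amp) > 1e-12]
print("vector length after each step:", sizes)
print("basis states with amplitude:", [format(i, "03b") for i in occupied])
```

The sketch says nothing about whether a vector of that fixed size is affordable for a realistic system, since the size is exponential in the number of subsystems from the start. It only shows that the size does not grow as the correlations spread.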

If you had a computer with as many cores as there are points in the phase space then the simulation would be very snappy. On the other hand, using the same massive computer to simulate a collapse theory would be very slow.

This is an answer to a question from another person’s thread. My question was “When an object leaves our Hubble volume does it cease to exist?” I’m still curious to hear your answer.

That’s an easy one—objective collapse QM predicts that with astronomically^astronomically^astronomically high probability objects large enough to be seen at that distance (or even objects merely the size of ourselves) don’t cease to exist. Like everything else they continue to follow the laws of objective collapse QM whether we observe them or not.

The hypo is radically different from believing in an infinitely expanding infinity of parallel “worlds”, none of which we have ever observed, either directly or indirectly, and none of which are necessary for a coherent and objective QM theory.

Then I can define a new hypothesis, call it objective collapse++, which is exactly your objective collapse hypothesis with the added assumption that objects cease to exist outside of our Hubble volume. Collapse++ has a slightly longer description length, but it has a greatly reduced runtime. If we care about runtime length, why would we not prefer Collapse++ over the original collapse hypothesis?

See my above comment about MWI having a fixed phase space that doesn’t actually increase in size over time. The idea of an increasing number of parallel universes is incorrect.

“MWI having a fixed phase space that doesn’t actually increase in size over time.”

(1) That assumes we are already simulating the entire universe from the Big Bang forward, which is already preposterously infeasible (not to mention that we don’t know the starting state).

(2) It doesn’t model the central events in QM, namely the nondeterministic events which in MWI are interpreted as which “world” we “find ourselves” in.

Of course in real QM work simulations are what they are, independently of interpretations, they evolve the wavefunction, or a computationally more efficient but less accurate version of same, to the desired elaboration (which is radically different for different applications). For output they often either graph the whole wavefunction (relying on the viewer of the graph to understand that such a graph corresponds to the results of a very large number of repeated experiments, not to a particular observable outcome) or do a Monte Carlo or Markov simulation of the nondeterministic events which are central to QM. But I’ve never seen a Monte Carlo or Markov simulation of QM that simulates the events that supposedly occur in “other worlds” that we can never observe—it would indeed be exponentially (at least) more wasteful in time and memory, yet utterly pointless, for the same reasons that the interpretation itself is wasteful and pointless. You’d think that a good interpretation, even if it can’t produce any novel experimental predictions, could at least provide ideas for more efficient modeling of the theory, but MWI suggests quite the opposite, gratuitously inefficient ways to simulate a theory that is already extraordinarily expensive to simulate.
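The kind of Monte Carlo output described here can be sketched in a few lines. The example assumes a two-outcome measurement with hypothetical amplitudes whose squared magnitudes are 0.3 and 0.7, and uses a fixed random seed so that the run is repeatable; it is a toy, not a description of how any particular simulation package works.

```python
import random

def born_probabilities(amplitudes):
    """Map each outcome label to |amplitude|^2, normalised to sum to 1."""
    weights = [abs(a) ** 2 for a in amplitudes.values()]
    total = sum(weights)
    return {k: w / total for k, w in zip(amplitudes, weights)}

def sample_outcomes(amplitudes, n, seed=0):
    """Draw n independent measurement outcomes using the Born probabilities."""
    rng = random.Random(seed)  # fixed seed: an assumption for repeatability
    probs = born_probabilities(amplitudes)
    labels = list(probs)
    return rng.choices(labels, weights=[probs[k] for k in labels], k=n)

# Hypothetical amplitudes; only their squared magnitudes matter here.
amplitudes = {"LEFT": complex(0.3 ** 0.5, 0.0),
              "RIGHT": complex(0.0, 0.7 ** 0.5)}

outcomes = sample_outcomes(amplitudes, 100_000)
freq_left = outcomes.count("LEFT") / len(outcomes)
print("observed frequency of LEFT:", freq_left)
```

Each run records one outcome per trial and discards the alternative, which is the pruning the comment describes. Whether that computational convenience says anything about what exists is the question the thread is arguing over.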

Objective collapse, OTOH, continually prunes the possibilities of the phase space and thus suggests exponential improvements in simulation time and memory usage. Indeed, some versions of objective collapse are bona fide new theories of QM, making experimental predictions that distinguish them from the model of perpetual elaboration of a wavefunction. Penrose, for example, bases his theory on a quantum gravity theory, and several experiments have been proposed to test it.

BTW, it’s MWI that adds extra postulates. In both MWI and collapse, parts of the wavefunction effectively disappear from the observable universe (or as MWI folks like to say “the world I find myself in.”) MWI adds the extra and completely gratuitous postulate that this portion of the wave function magically re-appears in another, imaginary, completely unobservable “world”, on top of the gratuitous extra postulate that these new worlds are magically created, and all of us magically cloned, such that the copy of myself I experience finds me in one “world” but not another. And all that just to explain why we observe a nondeterministic event, one random eigenstate out of the infinity of eigenstates derived from the wavefunction and operator, instead of observing all of them.

Why not just admit that quantum events are objectively nondeterministic and be done with it? What’s so hard about that?

This does not correspond to the MWI as promulgated by Eliezer Yudkowsky, which is more like, “In MWI, parts of the wavefunction effectively disappear from the observable universe—full stop.” My understanding is that EY’s view is that chunks of the wavefunction decohere from one another. The “worlds” of the MWI aren’t something extra imposed on QM; they’re just a useful metaphor for decoherence.

This leaves the Born probabilities totally unexplained. This is the major problem with EY’s MWI, and has been fully acknowledged by him in posts made in years past. It’s not unreasonable that you would be unaware of this, but until you’ve read EY’s MWI posts, I think you’ll be arguing past the other posters on LW.

Upvoted, although my understanding is that there is no difference between Eliezer’s MWI and canonical MWI as originally presented by Everett. Am I mistaken?

Since I’m not familiar with Everett’s original presentation, I don’t know if you’re mistaken. Certainly popular accounts of MWI do seem to talk about “worlds” as something extra on top of QM.

Popular accounts written by journalists who don’t really understand what they are talking about may treat “worlds” as something extra on top of QM, but after reading serious accounts of MWI by advocates for over two decades, I have yet to find any informed advocate who makes that mistake. I am positive that Everett did not make that mistake.

I think that’s just a common misunderstanding most people have of MWI, unfortunately. Visualizing a giant decohering phase space is much harder than imagining parallel universes splitting off. I’m fairly certain that Eliezer’s presentation of MWI is the standard one though (excepting his discussion of timeless physics perhaps).

Mainstream philosophy of science claims to have explained the Born probabilities; Eliezer and some others here disagree with the explanations, but it’s at least worth noting that the quoted claim is controversial among those who have thought deeply about the question.

Good to know.

Imagine a universe containing an infinite line of apples. You can see them getting smaller into the distance, until eventually it’s not possible to resolve individual apples. Do you want to say that we could never justify or regard-as-scientific a theory which said “this line of apples is infinite”?

My twin 10^29^29 ly away… if he picks up an astronomy atlas, will he find the exact same objects listed with the exact same coordinates digit for digit? That implies that space itself repeats, like tiles in a desktop pattern.

Is cyclicity a necessary consequence of filling an infinite space with things that can only have a finite number of configurations? If so, does that mean if I zoom in on a Mandelbrot set far enough I will see an exact replica of the image I started with and if I run a cellular automaton long enough I will get a regular pattern?

Probably not. Copies of bokov are pretty rare in the universe (one every 10^(10^29) meters), but copies of bokov together with a correct copy of the whole observable universe have got to be vastly rarer. Only one out of every (insanely huge number) would see the same night sky you do.

In general, you’re not guaranteed to see any particular pattern more than once, or for the pattern of repetition to be particularly neat. The pigeonhole principle says that with an infinite number of pigeons placed into a finite number of slots, at least one of the slots needs to contain more than one pigeon (in fact, one or more of them needs to contain an infinite number of pigeons). But it doesn’t say that all of the slots need to be filled more than once.

Eventually you have to return to a point you have been before, but the cycle need not encompass all the possibilities. For example an infinite string of the numbers 0-5 could go as follows 1, 2, 3, 4, 5, 0, 5, 0, 5, 0, …
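A minimal sketch of that point, assuming the sequence comes from a deterministic rule on a finite set of states. The `step` rule below is hypothetical, chosen only to reproduce the example string; `find_cycle` simply iterates until a state repeats and reports which states fall inside the loop.

```python
def find_cycle(step, start):
    """Iterate a deterministic rule until a state repeats.

    Returns (tail, cycle): the states visited before the loop begins,
    and the states that make up the loop itself.
    """
    seen = {}       # state -> index of its first visit
    history = []
    state = start
    while state not in seen:
        seen[state] = len(history)
        history.append(state)
        state = step(state)
    first = seen[state]
    return history[:first], history[first:]


def step(n):
    # Hypothetical rule: count up from 1 to 5, then bounce between 5 and 0.
    if n == 0:
        return 5
    if n == 5:
        return 0
    return n + 1


tail, cycle = find_cycle(step, 1)
print(tail, cycle)  # [1, 2, 3, 4] [5, 0]
```

States 1 through 4 are each visited once and never again; only 5 and 0 sit in the cycle, even though all six states are "possible".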

Not necessarily, because the number of “possible” images (a continuous infinity, equivalent to R^R) is not smaller than the number of possible “zoom” positions (a smaller continuous infinity equivalent to R).

You’re not guaranteed to see any particular pattern, as I said, and in fact Conway’s Game of Life has “Garden of Eden” configurations, which can’t be obtained by running the cellular automaton from any possible starting position. So you don’t even go through all the “theoretically possible” states before cycling.
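A toy illustration of unreachable states, under a loud assumption: it uses a 3x3 grid with wrap-around edges rather than the infinite plane, so the "orphans" it finds are only an analogue of true Garden of Eden patterns (which are much larger). The sketch enumerates all 512 grids, applies one Life step to each, and counts the grids that never appear as an output.

```python
from itertools import product

N = 3  # toy 3x3 torus (an assumption for illustration, not the infinite plane)


def life_step(grid):
    """Apply one Game of Life step to a tuple-of-tuples grid on an N x N torus."""
    new = []
    for r in range(N):
        row = []
        for c in range(N):
            neighbours = sum(
                grid[(r + dr) % N][(c + dc) % N]
                for dr in (-1, 0, 1)
                for dc in (-1, 0, 1)
                if (dr, dc) != (0, 0)
            )
            alive = grid[r][c] == 1
            # Standard rule: birth on 3 neighbours, survival on 2 or 3.
            row.append(1 if neighbours == 3 or (alive and neighbours == 2) else 0)
        new.append(tuple(row))
    return tuple(new)


def all_grids():
    """Yield every possible N x N grid of 0s and 1s."""
    for bits in product((0, 1), repeat=N * N):
        yield tuple(tuple(bits[r * N:(r + 1) * N]) for r in range(N))


states = list(all_grids())
reachable = {life_step(g) for g in states}          # grids with a predecessor
orphans = [g for g in states if g not in reachable]  # grids with none
print(len(states), len(reachable), len(orphans))
```

On this tiny torus most grids turn out to have no predecessor at all, so any run that starts elsewhere can never pass through them.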

But, in the case Eliezer mentioned, I think it’s fairly reasonable to assume that there is in fact a copy of you somewhere out there, assuming the world is infinite, because of things like quantum “randomness” and thermal noise that give each section of space an independent chance of spontaneously manifesting pretty much any configuration of matter, and those chances add up to “certainty” after an infinite number of chances.
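The "chances add up" step can be sketched numerically, assuming each region of space is an independent trial with the same success probability p (with 0 < p < 1). The value of `p` below is hypothetical and chosen only for illustration; the formula is the usual 1 - (1 - p)^n for at least one success in n trials.

```python
import math


def prob_at_least_one(p, n):
    """Probability that at least one of n independent trials succeeds,
    each with success probability p: 1 - (1 - p)**n.

    Uses log1p/expm1 so a tiny p and a huge n do not round off prematurely.
    """
    return -math.expm1(n * math.log1p(-p))


p = 1e-30  # hypothetical per-region chance, for illustration only
for n in (1e10, 1e30, 1e32):
    print(n, prob_at_least_one(p, n))
```

For any fixed p above zero the result climbs toward 1 as n grows, though it only reaches 1 in the limit; the conclusion leans entirely on the independence assumption.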

Hope I am not spamming you, but noticed something else.

Isn’t the space of spatial coordinates in the same sense a smaller infinity than the number of quantum configurations possible at any given set of spatial coordinates? So that would refute the assertion that twins of us must exist. At least in the sense of inhabiting our Everett branch somewhere far beyond our Hubble volume.

I.e. Boltzmann brains? That makes me realize another thing. If all of them have an identical configuration, why should some of them dissolve back into the thermal noise whence they came while others do not?

What if slots are not discrete and the contents of each location are a function of previous contents of all locations within its light-cone?

I must say, this twin thing sounds cool and all but I’m starting to think maybe it’s too strongly stated.

Since someone who has read that Tau Ceti is in a different location from the one I read about will no longer be identical to me, can I make myself unique by learning a lot of astronomy?

Or are most bokovs merely similar and only occupy identical configurations by mistake, e.g. by misreading information in the same way? But wouldn’t we each still be entangled with enormous numbers of objects that are entangled with the true locations of our respective stars called Tau Ceti?

If we restrict our attention to identical bokovs living on relatively Earth-like, or at least life-supporting, worlds, what generally happens is that on some planet an atom-by-atom identical copy of you suddenly spontaneously materializes (due to quantum/thermal noise). The first thing this copy will notice is that it seems to be somewhere other than it was before, since it has your memories: the last thing it remembers is sitting down reading this site. Then, if it’s nighttime, it will notice that the stars are in completely wrong places, and it will eventually become very different from the bokov here as it gets used to living on the alien world.

ETA: Yeah, as with Boltzmann brains, actually the majority of patterns identifiable as bokov will be manifested in very bad places to live, like in space, in stars or oceans. And they will immediately die, or be reduced to random particles again in the case of a bokov formed inside a star.

And if we don’t restrict our attention in this manner, this number is dwarfed by the number of bokovs being spawned inside gas giants and stars. Which are in turn dwarfed by random blobs of particles containing random bokov body parts spawning.

So your answer to the question of subjective expectation is “never mind red room or green room, you should expect to wake up boiling alive and/or freezing while gasping for air and clutching at the spot where your legs used to be”.

But by that logic, given that so far I have not been, I should assign this explanation a very low posterior probability.

Well, no. While the existence of the Boltzmann bokovs is intuitively obvious to me, I currently prefer to keep an open mind regarding if and how that matters for subjective expectation.

I can think of two answers that have fewer open ends, though I don’t know if they are valid physics; I am sadly lacking in that area.

The bigger a spontaneous blob is the more stable it is. The only reason it should vanish into thermal noise once it comes into existence would be the same as would cause any other object to disintegrate. So, a spontaneous copy of me? Dead in seconds. Earth? All observers die in a day or so, rock stays around indefinitely. Galaxy? Cluster? Possibly pretty stable if nothing else is nearby.

If reality is a superposition of all waveforms, would that perhaps repeat at some very low frequency?