The Friendly Telepath Problems

Companion to “The Hostile Telepaths Problem” (by Valentine)

Epistemic status: This is my own work, though I asked Valentine for feedback on an early draft. I’m confident that the mechanisms underlying the Hostile/Friendly Telepath dynamic are closely related. The friendly telepath problems themselves seem well-established. I am less confident, or at least can’t make as strong a claim, about the relation to selfhood/the self.

Valentine’s “Hostile Telepaths” is about what your mind will do when you have to deal with people who can read your emotions and intentions well enough to discover when you are not thinking what they want you to think, and you have to expect punishment for what they find. In such a scenario, your mind will make being-read less dangerous in one of several ways, for example by warping what you can discover in yourself and know about your own intentions.

If that doesn’t seem plausible to you, I recommend reading Valentine’s post first. Otherwise, this post mostly stands on its own and describes a different but sort of symmetric or opposite case.

“Telepathy,” or being legible to other people, isn’t only a danger. It also has benefits for collaboration. As in Valentine’s post, I use “telepath” to mean people who can partly tell whether you are being honest, whether you are afraid, or whether you will stick to your commitments, or who in other ways seem to know what you are thinking. I will show that such telepathy is part of a lot of everyday effective cooperation. Beyond that, I will ask: What if a lot of what we call “having a self” is built, developmentally and socially, because it makes cooperation possible by reducing complexity? I will also argue that Valentine’s Occlumency, i.e., hiding your intentions from others (and yourself), is not only a defensive hack. It can also function as a commitment device: it makes the conscious interaction between such telepaths trustworthy.

Two sides of one coin

In the hostile-telepath setting, the world contains someone who can infer your inner state and who uses that access against you.

That creates pressure in two directions: You can reduce legibility to them by using privacy, misdirection, strategic silence, or other such means. Or you can reduce legibility even to yourself when self-knowledge is itself dangerous. If I can’t know it, I can’t be punished for it.

Valentine’s post is mostly about the second move: the mind sometimes protects itself by making the truth harder to access.

But consider the flip side: Suppose the “telepath” is not hunting for reasons to punish you. Suppose they’re a caregiver, a teammate, a friend, a partner, or someone trying to coordinate with you. Then, being legible is valuable. Not because everything about you must be transparent, but because the other person needs a stable, simple, efficient way to predict and align with you:

Is he hungry or scared?

Does she mean yes?

Will he be there at 7 PM?

Is this a real preference or a polite reflex?

A big part of “becoming a person” is becoming the kind of being with whom other people can coordinate: first caregivers, then peers, then institutions.

The interface

If you squint, a self is an interface to a person. Here is a nice illustration by Kevin Simler that gives the idea:

But Kevin Simler is talking about the stable result of the socialization process (also discussed by Tomasello[1]): the roles or masks we wear as adults, whether we are aware of them or not. I want to talk about what comes before the mask. The mask is a standardized interface, but the pointy and gooey parts are also a lower-level interface.

The origin of the interface

I have a four-month-old baby. She can’t speak. She has needs, distress, and curiosity, but apart from signals of comfort or discomfort, she has no way to communicate, much less to negotiate.

I can’t coordinate with “the whole baby.” I can’t look into its mind. I can’t even remember all the details of its behavior. I can only go by behavior patterns that are readable: different types of crying, moving, and looking. Some of these behaviors are new; others I already know (maybe from its siblings).

Over time, the baby becomes more legible. And they are surprisingly effective at it[2]. But how? Not by exposing every internal detail, but by developing stable handles that I or others can learn:

A baby shows distinguishable signals: I can recognize different cries and movements when it is tired vs. hungry vs. in pain vs. bored (the last is especially common).

It develops increasingly consistent preferences. Not so much for toys yet, but it never took the pacifier, for example. This includes getting bored by or being interested in specific things.

Anticipation and collaboration begin: if I diaper it, it will be calm.

Eventually, children will be able to make and hold commitments: “I will do it.”

So I interpret its behaviors in a form that is legible to me. I have a limited number of noisy samples and can’t memorize all the details, so I compress them into something I can handle (“it likes bathing in the evening”), with all the issues that brings (“oops, it likes bathing when X, Y, but not Z,” and many of those conditions I don’t know).

Vygotsky[3] describes how adults’ interpretation of children’s behavior gives rise to the interface through mutual reinforcement.

From the outside, the baby starts to look like “a person.” From the inside, I imagine, it starts to feel like “me.”

Mandatory constraints

It is in the interest of the baby to be legible, and the same holds for people in general. If other people know our interface, we can cooperate more effectively. It also seems that more information and less noise would give a clearer reading and fewer errors when we compress each other’s communication. This may sound like an argument for radical transparency or radical honesty: if legibility is good, why not make everything transparent to others and also to yourself? But consider: what would happen if you could edit everything on demand? The interface would stop being informative. What makes coordination possible is constraint. A partner can treat a signal as evidence only if it isn’t infinitely plastic. Examples:

If you could instantly rewrite fear, then “I’m scared” becomes less diagnostic and more of a negotiating move.

If you could rewrite attachment on command, then “I care about you” is no longer a commitment.

If you can always generate the perfect emotion on cue, then sincerity becomes hard to distinguish from performance.

So some opacity doesn’t just hide—it stabilizes. It makes your actions real in the boring sense: they reflect constraints in the system, not only strategic choices.
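To make the claim that a signal is evidence only if it isn’t infinitely plastic concrete, here is a minimal Bayesian sketch. The helper name and all probabilities are hypothetical illustrations, not measurements. The sketch compares how much hearing “I’m scared” should update a partner in two cases. In the first, the statement is constrained by actual fear. In the second, it is produced equally often whether or not fear is present.

```python
def posterior_scared(prior, p_say_if_scared, p_say_if_not_scared):
    """Bayes' rule: P(scared | they say "I'm scared").

    Hypothetical helper for illustration; all inputs are assumed probabilities.
    """
    # Total probability of hearing the statement at all.
    p_say = prior * p_say_if_scared + (1 - prior) * p_say_if_not_scared
    return prior * p_say_if_scared / p_say


prior = 0.2  # assumed base rate of being scared in some situation

# Constrained signal: people mostly say it when it is true.
constrained = posterior_scared(prior, p_say_if_scared=0.9, p_say_if_not_scared=0.05)

# Fully plastic signal: said just as often whether or not fear is present.
plastic = posterior_scared(prior, p_say_if_scared=0.6, p_say_if_not_scared=0.6)

print(f"constrained: {constrained:.2f}")  # constrained: 0.82
print(f"plastic:     {plastic:.2f}")      # plastic:     0.20
```

When the ratio `p_say_if_scared / p_say_if_not_scared` is 1, the posterior equals the prior, so the statement carries no information. This is the sense in which an emotion you could rewrite at will stops being diagnostic.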

All things being equal, we prefer to associate with people who will never murder us, rather than people who will only murder us when it would be good to do so—because we personally calculate good with a term for our existence. People with an irrational, compelling commitment are more trustworthy than people compelled by rational or utilitarian concerns (Schelling’s Strategy of Conflict) -- Shockwave’s comment here (emphasis mine). See also Thomas C. Schelling’s “Strategy of Conflict”

So opacity to ourselves can function not only as a defence against hostile opponents but also as something that enables others to cooperate with us. As long as we don’t know why we consistently behave in a predictable way, we can’t systematically change it. Opacity enables commitment.

But not all types of opacity or transparency are equal. When people argue about “transparency,” they often conflate at least three things:

Opacity to others: Privacy, boundaries, and omission (strategic information hiding).

Opacity to self: You don’t fully know what’s driving you; the processes and inputs that give rise to your thoughts are at least partly hidden.

Opacity to will: You can’t rewrite your motivations on demand.

What is special about cooperation here is that you can want more legibility at the interface while still needing less legibility in the implementation.

A good team doesn’t need to see every desire. It needs reliable commitments and predictably named states.

Failure modes

Valentine discusses some ways self-deception can go wrong; for example, it can mislabel the problem as a “personal flaw” or show up as akrasia, mind fog, or distraction. We should also expect the reverse direction, the coordination interface, to have failure modes. Which ones can you think of? Here are four illustrations of common failure modes. Can you decode which ones they are before reading on?

Performative transparency

In A non-mystical explanation of insight meditation… Kaj writes:

I liked the idea of becoming “enlightened” and “letting go of my ego.” I believed I could learn to use my time and energy for the benefit of other people and put away my ‘selfish’ desires to help myself, and even thought this was desirable. This backfired as I became a people-pleaser, and still find it hard to put my needs ahead of other peoples to this day.

You become legible, but the legibility is optimized for being approved of, not for being true.

The tell is that your “self” changes with the audience, and you feel managed rather than coordinated.

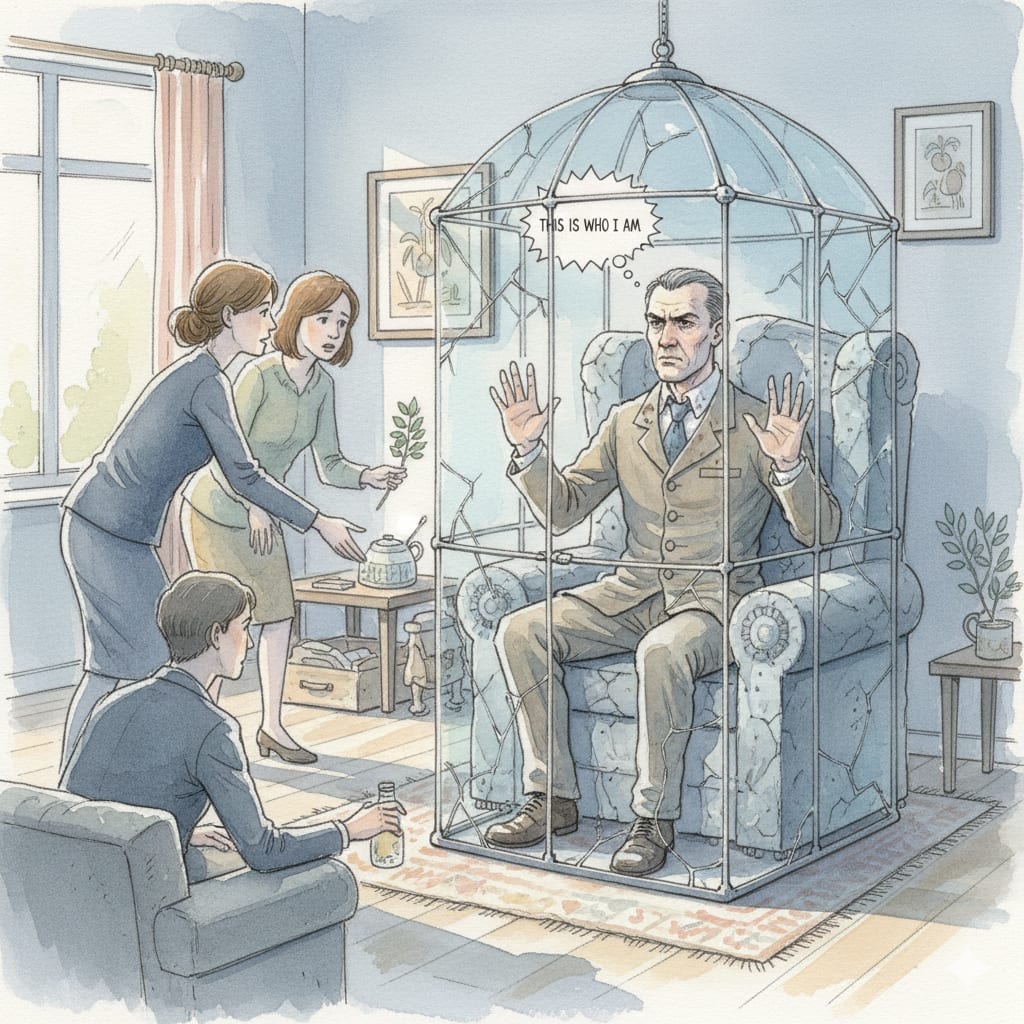

Rigidity dressed up as authenticity

In When Being Yourself Becomes an Excuse for Not Changing, Fiona writes:

a friend breezed into lunch nearly an hour late and, without a hint of remorse, announced, “I’m hopeless with time.” Her casual self-acceptance reminded me of my younger self. From my current vantage point, I see how easily “that’s just who I am” becomes a shield against any real effort to adapt.

Sometimes “this is just who I am” is a boundary. Sometimes it’s a refusal to update. Everybody has interacted with stubborn people; this is a common pattern. But you can tell whether it is adaptive by whether it leads to better cooperation. Does it make you more predictable and easier to cooperate with, or does it mainly shut down negotiations that might (!) overwhelm you?

The rule of equal and opposite advice applies here. There is such a thing as asserting too few boundaries. For a long time, I had difficulty asserting my boundaries. I was pretty good at avoiding trouble, but that didn’t give people a way to know my boundaries and include them in their calculations, and in many of the cases where I avoided people and situations, they would have happily adapted.

The internal hostile telepath

In Dealing with Awkwardness, Jonathan writes:

Permanently letting go of self-judgement is tricky. Many people have an inner critic in their heads, running ongoing commentary and judging their every move. People without inner voices can have a corresponding feeling about themselves—a habitual scepticism and derisiveness.

So you can develop an inner critic: an internalized role, maybe of a parent or teacher, that audits your feelings and demands that you “really mean it.”

Then you’re surveilled from the inside. I think this shows up as immature defenses[4], numbness or theatricality.

Dependency on mirrors

In What Universal Human Experiences are You Missing without Realizing it? Scott Alexander recounts:

Ozy: It took me a while to have enough of a sense of the food I like for “make a list of the food I like” to be a viable grocery-list-making strategy.

Scott: I’ve got to admit I’m confused and intrigued by your “don’t know my own preferences” thing.

Ozy: Hrm. Well, it’s sort of like… you know how sometimes you pretend to like something because it’s high-status, and if you do it well enough you _actually believe_ you like the thing? Unless I pay a lot of attention _all_ my preferences end up being not “what I actually enjoy” but like “what is high status” or “what will keep people from getting angry at me”

A benevolent friend can help you name what you feel. But there’s a trap: outsourcing selfhood and endorsed preferences.

Do you only know what you want after someone (or the generalized “other people”) wants it too?

If you want to balance the trade-offs between being legible and opaque, what would you do? Perhaps:

Be predictable without being exposed.

Be legible in commitments; be selectively private about details.

Prefer “I don’t know yet” over a confident story that doesn’t fit.

Reduce fear-based opacity, keep boundary-based opacity.

What does the latter mean?

Some contexts are structurally hostile; don’t try to win them with more openness.

Use rituals that don’t demand instant inner conformity. Sometimes “I’m sorry” is the appropriate thing to say, even if the emotion is lagging.

“I like Italian food” is legible but doesn’t help with choosing a restaurant. Sometimes you have to tell more. But often the details just add noise.

What I can’t answer is the deeper question: What is a way of having a “self as an interface” that stays stable under both coordination pressure (friendly telepaths) and adversarial pressure (hostile telepaths)? Bonus points if you can also preserve autonomy and truth-tracking.

[1]

how humans put their heads together with others in acts of so-called shared intentionality, or “we” intentionality. When individuals participate with others in collaborative activities, together they form joint goals and joint attention, which then create individual roles and individual perspectives that must be coordinated within them (Moll and Tomasello, 2007). Moreover, there is a deep continuity between such concrete manifestations of joint action and attention and more abstract cultural practices and products such as cultural institutions, which are structured, indeed created, by agreed-upon social conventions and norms (Tomasello, 2009). In general, humans are able to coordinate with others, in a way that other primates seemingly are not, to form a “we” that acts as a kind of plural agent to create everything from a collaborative hunting party to a cultural institution.

Tomasello (2014), A Natural History of Human Thinking, page 4

[2]

We propose that human communication is specifically adapted to allow the transmission of generic knowledge between individuals. Such a communication system, which we call ‘natural pedagogy’, enables fast and efficient social learning of cognitively opaque cultural knowledge that would be hard to acquire relying on purely observational learning mechanisms alone. We argue that human infants are prepared to be at the receptive side of natural pedagogy (i) by being sensitive to ostensive signals that indicate that they are being addressed by communication, (ii) by developing referential expectations in ostensive contexts and (iii) by being biased to interpret ostensive-referential communication as conveying information that is kind-relevant and generalizable.

Csibra & Gergely (2009), Natural Pedagogy, Trends in Cognitive Sciences, pages 148–153

[3]

We call the internal reconstruction of an external operation internalization. A good example of this process may be found in the development of pointing. Initially, this gesture is nothing more than an unsuccessful attempt to grasp something, a movement aimed at a certain object which designates forthcoming activity. The child attempts to grasp an object placed beyond his reach; his hands, stretched toward that object, remain poised in the air. His fingers make grasping movements. At this initial stage pointing is represented by the child’s movement, which seems to be pointing to an object, that and nothing more. When the mother comes to the child’s aid and realizes his movement indicates something, the situation changes fundamentally. Pointing becomes a gesture for others. The child’s unsuccessful attempt engenders a reaction not from the object he seeks but from another person. Consequently, the primary meaning of that unsuccessful grasping movement is established by others. Only later, when the child can link his unsuccessful grasping movements to the objective situation as a whole, does he begin to understand this movement as pointing. At this juncture there occurs a change in that movement’s function: from an object-oriented movement it becomes a movement aimed at another person, a means of establishing relations. The grasping movement changes to the act of pointing.

Vygotsky (1978), Mind in Society, page 56

[4]

See Defence mechanism. A writeup is in Understanding Level 2: Immature Psychological Defense Mechanisms. I first read about mature and immature defenses in Aging Well.

If I’m reading you right, one of your arguments is that we need opacity with respect to ourselves so that we can’t edit ourselves, which then allows us to have rigid commitments, which is good for coordination.

I want to push back against that argument a bit. (Apologies if that’s not your argument! In which case I’ll be over here punching a tangential practice dummy.)

It does seem very plausible to me that rigidity in commitments is key for coordination. I hadn’t had that thought before reading your article.

It also seems plausible to me that one way of making one’s commitments rigid is by being unable to consciously access them and/or to make them “read only” so to speak.

But I think that last strategy is just one possibility, and it incentivizes irrationality.

Here’s another one: I know I need to maintain a commitment rigidly in order to coordinate with others. So I have a commitment which in some abstract sense I could edit, but I don’t, at a policy level.

This point is akin to how in principle my roommate could kill me. But I know he won’t. Not because he’s literally incapable of it, and not because it happens to be convenient for his local incentives, but because he’s attending to more long-range incentives. Even if he could become very rich by killing me, I’m pretty darn confident he wouldn’t.

The difference here is that instead of it being some rigid rule that we pretend organic strategic critters can’t edit in themselves, it’s a lucid recognition of the actual incentives involved, including the fact that having something playing the role of rigid rules is useful.

I think that kind of setup makes more thoughts thinkable, which avoids making irrationality strategic.

That said, I do think it’s helpful to have a barrier between your social interfaces and your conscious processing, and I do think it’s helpful for coordination. It’s just that the mechanism I see goes through the fact that having that barrier lets you think more strategically. With enough clarity, you can rederive why prosocial commitment devices make sense, and integrate that truth into your strategies (instead of having a bunch of potentially useful thoughts being unthinkable in invisible ways).

At least that’s how I’m currently approaching it.

All that said, I like your analysis for highlighting a thing I think in fact happens! Even if it should turn out not to be the optimal strategy for rationalists (which it still might be!).

Yes, I meant that.

And most of the post is downstream of that. Thanks for addressing the central claim.

The alternate strategy makes sense to me. I agree that long-term planning is real.

The brain does long term credit assignment. And I think some of that happens via reflection on past and future experiences (“I don’t do X because I’m optimizing long-term Y.”). While not everyone does such a clean rationalist version, I think an approximation of that is often going on.

So my “read-only via opacity” is too simplistic. Let me do better.

I think a position I can defend more strongly is this: as your long-term reasoning (system 2) is iterated, practiced, and rewarded, it gets condensed into habitual responses by system 1. In the momentary situation of an interaction, the habitual action is selected (and in that moment you do not have access).

To explain, let me unpack your “I could edit, but I don’t, at a policy level.”

I would interpret “I could edit” as “I could spend some time reflecting on my commitments and the related habits. I could change (e.g., renege on) my commitments in the sense of thinking about such changes, practicing them in hypothetical settings, or otherwise seeing the changes play out. I would cache these thoughts such that in a concrete, immediate situation I would respond differently.” And then “I don’t, at a policy level” means that you do not go through these motions and leave your prosocial habits in place because you know or estimate that, in expectation, that’s better over many roll-outs of the habits/cached thoughts.

Let me know if that unpacking sounds reasonable to you.

The key thing is that in the moment of a concrete interaction, we actually can’t go through all these motions of self-editing. There is not enough time. The actions come out automatically, habitually, naturally. And the other party can see that.

The habit is the interface.

Would you say that the system 1 caching of thoughts is the barrier?

I agree that this barrier is not one of opacity, at least not in the sense of self-obscuring, nor in the sense that access is factually impossible. But the access that exists is too slow for an on-the-spot rewrite.

I do think that there is opacity involved in the process (see the opacity described in Parameters of Metacognition—The Anesthesia Patient), but its effect on the barrier and its role in enabling commitment are indirect, and seem more involved than I thought. Thank you for drilling down on the concept.

Tangent 1:

The rewriting process needs an entity that is doing the rewriting intentionally. As I tried to show in Between Entries, reflecting and condensing is a large part of how we build a self-model. So this strengthens the connection to the self as an interface.

Tangent 2:

I agree that this is useful knowledge to gain, especially if you can make it habitual. But “with enough clarity” does a lot of work here. Such commitment devices do not work in all environments. So even if you can derive them (which may require contexts most people never encounter), you might not benefit from them.

Agree. In the cached thought frame these useful thoughts would still be thinkable in principle. Whether a person does so or whether they are opaque to them is then a different question.

Just to make sure I understand your idea right: the friendly telepath problem is something like “How do I create a workable interface with someone who might be able to collaborate with me, given that I’m kind of transparent to that person’s ‘telepathy’?” Is that basically right?

And then you’re suggesting a bunch of quirks about how to solve it, like how maintaining opaque parts of your psyche is actually helpful for solving this problem. Yes?

Kinda. Collaborating with friendly telepaths is not normally a problem. It is not something you have to fight for or against. The friendly telepath is not your problem. But the normally workable interface can still go wrong! There are problems you may encounter, but they are more like special quirks to avoid, or at least to be aware of. That’s why the title is The Friendly Telepath Problems.

But overall yes, I say that the fact that our minds are at least partly opaque (at least in the refined sense in the reply to your other comment) is actually helpful for collaboration and commitment.

I’m sorry I’m being kind of thick here. Can you spell out plainly to me what exactly the problem your title is referring to is? “The friendly telepath problem” implies there’s some kind of problem that somehow involves friendly telepaths. You say I “kinda” described it correctly by saying it’s about how to create workable interfaces with friendly telepaths. Then you go on to say… um… I think to say that “the normally workable interface can still go wrong” as being the problem. Which I think is what I was saying? But you seem to say it as a correction to what I was trying to name.

So… what precisely is the friendly telepath problem?

I really shouldn’t have tried to mimic your title.

The post is about the problems you can have even with a friendly telepath. And the problems are (using your barrier phrasing):

Performative transparency: the barrier is doing PR for you instead of helping you coordinate

Rigidity dressed up as authenticity: the barrier becomes unmovable

The internal hostile telepath: the barrier served as protection from a hostile telepath, but the hostile telepath is gone, and the barrier never updated

Dependency on mirrors: the barrier allows you to use other friendly telepaths as an oracle for yourself

“Self as an interface” feels weird. For me, self has connotations with being unique, internal, and usually not intentionally morphed. Here I feel like you’re talking about intentionally shaping your external interface according to the environment (including who you are interacting with), which goes against the three connotations I have with the word self.

I don’t have a better word suggestion though. I have been thinking about roles as interfaces in a non-telepathic context, but “roles as interfaces” seem subtly different from your idea.

I wonder if the right term here is “persona” or “personality”. The mask your self wears to be a person in a social setting.

Roles strike me as different. I think of those as being about where you are and which character you’re playing in the social web. Your persona is more like how you’re playing whichever role you have.

Maybe I should have left the self-aspect out of this post. I will elaborate on the connection in a later post. It feels quite intuitive to me, though. The self sure feels unique and internal. But maybe you can agree that it does change, or at least that things associated with it change, in ways we can’t seem to control. That is the point I am making: it enables commitments.