Align it

sudo

The specific dynamics of RL here are better discovered empirically, and in any case is not precisely within scope.

I was thinking of a more general optimization loop, as in: what evals should we make, how can we track model progress on writing, etc. My suggestion is that once we figure out how to make models write well in this playground (where evaluation is easier, generation is cheaper, etc.) -- either by training or pushing on things like harness design—we’ll be in a good position to improve LLM writing abilities more generally.

I argue that this tendency is difficult to learn in short-form, because it’s hard to realize that the payoff is never coming when it has to come now or never

I bet you that current frontier models, when challenged to write prose in the 200-word range, will make all the mistakes I describe in my post or you describe in your comment.

You point out the mistake of hints and promises that you can’t deliver on. I claim that current models will absolutely do this even in 200-word works. Once we can RL it out of the models in this range, we can keep going longer.

I’ll admit that 200 is kind of absurdly short in a way that creates substantive, qualitative differences from more common types of writing (e.g. maybe it really penalizes certain kinds of action or dialogue). I could be convinced that ~500 is right.

[Hot take] Problems with AI prose

https://en.wikipedia.org/wiki/Anti-satellite_weapon

It’s only getting easier. You also don’t necessarily need to shoot it down. You can try to hit it with another satellite, or use directed energy. If you’re desperate, you can try to trigger Kessler.

Meanwhile, on the ground you can rest in the relative safety of layered air defense.

You have limited fuel for manoeuvring.

It seems much easier to shoot down a defenseless, slow-moving thing in low earth orbit than something on earth or underground (which could be covered by SAMs, patrolled by fighter jets, shielded by thick cement).

[New Research] You need Slack to be an effective agent

Review of Kawabata’s “Palm of the Hand” stories and their translation into English

1b. As AI becomes more powerful and AI safety concerns go more mainstream, other wealthy donors may become activated

I’m worried about a motte-and-bailey situation where people sometimes use “AI safety” to mean “make AI go well” and other times use “AI safety” to mean “reduce catastrophic risk.” I take the authors to mean the latter, in which case 1b is valid.

I agree that government intervention and non-EA philanthropists will make a meaningful impact to funding opportunities for reducing catastrophic risk.

However, I think the world is likely to remain wrong about other key issues (digital sentience, longtermism and scope sensitivity broadly), such that 1b is in fact not valid for the former definition of “AI safety,” which I claim is what people should really care about.

I’m curious if people have looked into the extent to which Chinese AI labs are/aren’t broadly value-aligned with ~longtermism (compared to US labs).

Similar question about safety: Curious if people have looked deeply into how Chinese AI labs are doing+thinking about safety relative to US labs. I think it’s not entirely fair to compare on a commitment-to-commitment basis, because you’d imagine that commitments common among US companies are just not top-of-mind for Chinese labs.

I’m particularly interested in the attitudes of lab leads, and to some extent also members of technical staff.

We need not provide the strong model with access to the benchmark questions.

Depending on the benchmark, it can be difficult or impossible to encode all the correct responses in a short string.

My reply to both your and @Chris_Leong ’s comment is that you should simply use robust benchmarks on which high performance is interesting.

In the adversarial attack context, the attacker’s objectives are not generally beyond the model’s “capabilities.”

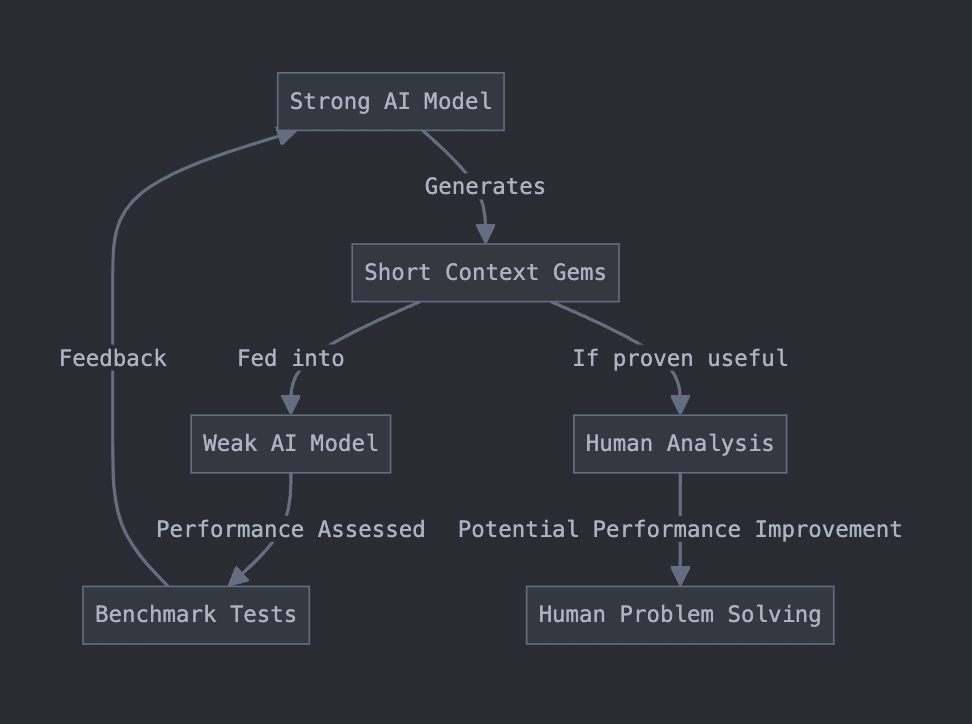

A long time ago, I worked on an idea I called “Context Gems.” A friend asked me to make a short, publicly accessible writeup for it. This is a really rough writeup of the idea, based on some old notes I had lying around.

Context Gems was an attempt at coming up with a theoretical way of safely eliciting superhuman capabilities from an AI. It was a fairly direct off-shoot of e.g. OSNR I later heard it was similar to some ideas Paul Christiano had a long time ago, like imitative generalization/learning the prior.

The goal is to get effective cognitive labor out of a superintelligent but possibly misaligned AI model without causing catastrophe. One insight is that if for a given goal, we want to achieve this goal while receiving a minimum amount of input/information from the superintelligent model. (Another way to phrase this is that we want to maximize the density of “helpful” information in the model’s outputs, to reduce its opportunities for hijacking the human operators).

Our setup is to train/use a strong (possibly superhuman) model to generate short strings (“context gems”). We intend these strings to contain some insight about the problem domain. To verify the usefulness of these short strings, we feed them into context of weaker model, which we can assess on some benchmarks. We may “reward” the strong model based on the performance of the weak model on some problem domain.

I had Claude make me a flow-chart.

If the gems do dramatically improve the performance of the weak model, then perhaps they contain some true insight about the problem domain. Then, if the strings are sufficiently short, we might be able to expose the string to humans without causing catastrophe, hopefully resulting in similar performance improvements for humans.

Some things we might think about:

What should be true of these “context gems”? Perhaps we want them to be short. Are there other restrictions we want to put on them?

What are the right problem domains to select?

If you were an adversarial/misaligned superintelligence, how might you attempt to take over the world given such a setup?

What sort of information might actually go into these context gems?

I’d also be interested in any empirical evidence that such a setup would work.

Thanks for the feedback!

I agree that it is possible to learn quickly without mentorship. However, I believe that for most programmers, the first “real” programming job is a source of tremendous growth. Why not have that earlier, and save more of one’s youth?

Conventional advice directed at young people seem shockingly bad. I sat down to generate a list of anti-advice.

The anti-advice are things that I wish I was told in high school, but that are essentially negations of conventional advice.

You may not agree with the advice given here. In fact, they are deliberately controversial. They may also not be good advice. YMMV.

When picking between colleges, do care a lot about getting into a prestigious/selective university. Your future employers often care too.

Care significantly less about nebulous “college fit.” Whether you’ll enjoy a particular college is determined mainly by 1, the location, and 2, the quality of your peers

Do not study hard and conscientiously. Instead, use your creativity to find absurd arbitrages. Internalize Thiel’s main talking points and find an unusual path to victory.

Refuse to do anything that people tell you will give you “important life skills.” Certainly do not take unskilled part time work unless you need to. Instead, focus intently on developing skills that generate surplus economic value.

If you are at all interested in a career in software (and even if you’re not), get a “real” software job as quickly as possible. Real means you are mentored by a software engineer who is better at software engineering than you.

If you’re doing things right, your school may threaten you with all manners of disciplinary action. This is mostly a sign that you’re being sufficiently ambitious.

Do not generically seek the advice of your elders. When offered unsolicited advice, rarely take it to heart. Instead, actively seek the advice of elders who are either exceptional or unusually insightful.

[Stanford Daily] Table Talk

Thanks for the post!

The problem was that I wasn’t really suited for mechanistic interpretability research.

Sorry if I’m prodding too deep, and feel no need to respond. I always feel a bit curious about claims such as this.

I guess I have two questions (which you don’t need to answer):

Do you have a hypothesis about the underlying reason for you being unsuited for this type of research? E.g. do you think you might be insufficiently interested/motivated, have insufficient conscientiousness or intelligence, etc.

How confident are you that you just “aren’t suited” to this type of work? To operationalize, maybe given e.g. two more years of serious effort, at what odds would you bet that you still wouldn’t be very competitive at mechanistic interpretability research?

What sort of external feedback are you getting vis a vis your suitability for this type of work? E.g. have you received feedback from Neel in this vein? (I understand that people are probably averse to giving this type of feedback, so there might be many false negatives).

Hi, do you have a links to the papers/evidence?

Strong upvoted.

I think we should be wary of anchoring too hard on compelling stories/narratives.

However, as far as stories go, this vignette scores very highly for me. Will be coming back for a re-read.

I appreciate your scientific spirit.

I do not think this is true. The model does try to make metaphors, the metaphors just do not make sense.

See mine:

Outside of these excerpts, I have seen LLMs make many attempts at parallelism and metaphor that are deeply imperfect or incoherent.

This generalizes to other attempts at figurative language.

For example, models often attempt but struggle to keep parallelism between paragraphs or list items.

From a friend’s conversation with ChatGPT (which he highlighted as good prose...):

Note the flawed parallelism with “it admits,” and then the subsequent confusion regarding the subject of comparison.

Finally, I also challenge you to produce good prose with a Kimi or DeepSeek model.