I’m a software developer by training with an interest in genetics. I am currently doing independent research on gene therapy with an emphasis on intelligence enhancement.

GeneSmith

OpenAI’s continued practice of publishing the blueprints allowing others to create more powerful models seems to undermine their claims that they are worried about “bad actors getting there first”.

If you were a scientist working on the Manhattan Project because you were worried about Hitler getting the atomic bomb first, you wouldn’t send your research on centrifuge design to German research scientists. Yet every company that claims it is more likely than other groups to create safe AGI continues to publish the blueprints for creating AGI on the open web.

Is there any actual justification for this other than “The prestige of getting published in top journals makes us look impressive?”

I think people underestimate the degree to which hardware improvements enable software improvements. If you look at AlphaGo, the DeepMind team tried something like 17 different configurations during training runs before finally getting something to work. If each one of those had been twice as expensive, they might not have even conducted the experiment.

I do think it’s true that if we wait long enough, hardware restrictions will not be enough.

It’s not clear whether that will mean the end of humanity in the sense of the systems we’ve created destroying us. It’s not clear if that’s the case, but it’s certainly conceivable. If not, it also just renders humanity a very small phenomenon compared to something else that is far more intelligent and will become incomprehensible to us, as incomprehensible to us as we are to cockroaches.

Q: That’s an interesting thought. [nervous laughter]

Hofstadter: Well, I don’t think it’s interesting. I think it’s terrifying. I hate it. I think about it practically all the time, every single day. [Q: Wow.] And it overwhelms me and depresses me in a way that I haven’t been depressed for a very long time.

I don’t think I’ve ever seen a better description of how I feel about the coming creation of artificial superintelligence. I find myself returning over and over again to that post by benkuhn, “Staring into the abyss as a core life skill.” I think that is going to become a necessary core life skill for almost everyone in the coming years.

It has been morbidly gratifying to see more and more people develop the same feelings about AI as I have had for about a year now. Like validation in the worst possible way. I think if people actually understood what was coming there would be a near total call to ban improvements in this technology and only allow advancement under very strict conditions. But almost no one has really thought through the consequences of making a general purpose replacement for human beings.

This reminds me a bit of my own hiring process. I wanted to work for a company doing polygenic embryo screening, but I didn’t fit any of the positions they were hiring for on their websites, and when I did apply my applications were ignored.

One day Scott Alexander posted “Welcome Polygenically Screened Babies”, profiling the first child to be born using those screening methods. I left a comment doing a long cost-effectiveness analysis of the technology, and it just so happened that the CEO of one of the companies read it and asked me if I’d like to collaborate with them.

The collaboration went well and they offered me a full-time position a month later.

All because of a comment I left on a blog.

Man, what a post!

My knowledge of alignment is somewhat limited, so keep in mind some of my questions may be a bit dumb simply because there are holes in my understanding.

It seems hard to scan a trained neural network and locate the AI’s learned “tree” abstraction. For very similar reasons, it seems intractable for the genome to scan a human brain and back out the “death” abstraction, which probably will not form at a predictable neural address. Therefore, we infer that the genome can’t directly make us afraid of death by e.g. specifying circuitry which detects when we think about death and then makes us afraid. In turn, this implies that there are a lot of values and biases which the genome cannot hardcode…

I basically agree with the last sentence of this statement, but I’m trying to figure out how to square it with my knowledge of genetics. Political attitudes, for example, are heritable. Yet I agree there are no hardcoded versions of “democrat” or “republican” in the brain.

This leaves us with a huge puzzle. If we can’t say “the hardwired circuitry down the street did it”, where do biases come from? How can the genome hook the human’s preferences into the human’s world model, when the genome doesn’t “know” what the world model will look like? Why do people usually navigate ontological shifts properly, why don’t people want to wirehead, why do people almost always care about other people if the genome can’t even write circuitry that detects and rewards thoughts about people?

This seems wrong to me. Twin studies, GCTA estimates, and actual genetic predictors all predict that a portion of the variance in human biases is “hardcoded” in the genome. So the genome is definitely playing a role in creating and shaping biases. I don’t know exactly how it does that, but we can observe that such biases are heritable, and we can actually point to specific base pairs in the genome that play a role.

Somehow, the plan has to be coherent, integrating several conflicting shards. We find it useful to view this integrative process as a kind of “bidding.” For example, when the juice-shard activates, the shard fires in a way which would have historically increased the probability of executing plans which led to juice pouches. We’ll say that the juice-shard is bidding for plans which involve juice consumption (according to the world model), and perhaps bidding against plans without juice consumption.

Wow. I’m not sure if you’re aware of this research, but shard theory sounds shockingly similar to Guyenet’s description in “The Hungry Brain” of how the lamprey, a parasitic fish, makes decisions. Let me just quote the whole section from Scott Alexander’s review of the book:

How does the lamprey decide what to do? Within the lamprey basal ganglia lies a key structure called the striatum, which is the portion of the basal ganglia that receives most of the incoming signals from other parts of the brain. The striatum receives “bids” from other brain regions, each of which represents a specific action. A little piece of the lamprey’s brain is whispering “mate” to the striatum, while another piece is shouting “flee the predator” and so on. It would be a very bad idea for these movements to occur simultaneously – because a lamprey can’t do all of them at the same time – so to prevent simultaneous activation of many different movements, all these regions are held in check by powerful inhibitory connections from the basal ganglia. This means that the basal ganglia keep all behaviors in “off” mode by default. Only once a specific action’s bid has been selected do the basal ganglia turn off this inhibitory control, allowing the behavior to occur. You can think of the basal ganglia as a bouncer that chooses which behavior gets access to the muscles and turns away the rest. This fulfills the first key property of a selector: it must be able to pick one option and allow it access to the muscles.

Spoiler: the pallium is the region that evolved into the cerebral cortex in higher animals.

Each little region of the pallium is responsible for a particular behavior, such as tracking prey, suctioning onto a rock, or fleeing predators. These regions are thought to have two basic functions. The first is to execute the behavior in which it specializes, once it has received permission from the basal ganglia. For example, the “track prey” region activates downstream pathways that contract the lamprey’s muscles in a pattern that causes the animal to track its prey. The second basic function of these regions is to collect relevant information about the lamprey’s surroundings and internal state, which determines how strong a bid it will put in to the striatum. For example, if there’s a predator nearby, the “flee predator” region will put in a very strong bid to the striatum, while the “build a nest” bid will be weak…

Each little region of the pallium is attempting to execute its specific behavior and competing against all other regions that are incompatible with it. The strength of each bid represents how valuable that specific behavior appears to the organism at that particular moment, and the striatum’s job is simple: select the strongest bid. This fulfills the second key property of a selector – that it must be able to choose the best option for a given situation…

With all this in mind, it’s helpful to think of each individual region of the lamprey pallium as an option generator that’s responsible for a specific behavior. Each option generator is constantly competing with all other incompatible option generators for access to the muscles, and the option generator with the strongest bid at any particular moment wins the competition.
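The striatum’s selection rule in the quote above is simple enough to sketch in a few lines. This is a minimal illustration, not a model from the book: `select_action` and its `threshold` parameter are hypothetical, and the bid strengths are made-up numbers. Every behavior is “off” by default, and only the single strongest bid gets released.

```python
from typing import Dict, Optional


def select_action(bids: Dict[str, float], threshold: float = 0.0) -> Optional[str]:
    """Winner-take-all selector: every behavior is inhibited by default,
    and only the single strongest bid is released to the muscles."""
    if not bids:
        return None  # nothing is bidding, so everything stays "off"
    winner = max(bids, key=lambda action: bids[action])
    # Hypothetical threshold: if even the strongest bid is too weak,
    # keep every behavior inhibited.
    if bids[winner] <= threshold:
        return None
    return winner


# Each pallium region bids according to the current situation,
# e.g. a predator is nearby, so "flee predator" bids strongly.
bids = {"flee predator": 0.9, "track prey": 0.4, "build nest": 0.1}
print(select_action(bids))  # flee predator
```

Shard theory’s “bidding” would presumably need something richer than a single max (shards bid on whole plans, not single motor actions), but the basic shape of the competition is the same.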

You can read the whole review here or the book here. It sounds like you may have independently rederived a theory of how the brain works that neuroscientists have known about for a while.

I think this independent corroboration of the basic outline of the theory makes it even more likely shard theory is broadly correct.

I hope someone can work on the mathematics of shard theory. It seems fairly obvious to me that shard theory or something similar to it is broadly correct, but for it to impact alignment, you’re probably going to need a more precise definition that can be operationalized and give specific predictions about the behavior we’re likely to see.

I assume that shards are composed of some group of neurons within a neural network, correct? If so, it would be useful if someone can actually map them out. Exactly how many neurons are in a shard? Does the number change over time? How often do neurons in a shard fire together? Do neurons ever get reassigned to another shard during training? In self-supervised learning environments, do we ever observe shards guiding behavior away from contexts in which other shards with opposing values would be activated?

Answers to all the above questions seem likely to be downstream of a mathematical description of shards.

Gene editing can fix major mutations, to nudge IQ back up to normal levels, but we don’t know of any single genes that can boost IQ above the normal range

This is not true. We know of enough IQ variants TODAY to raise IQ by about 30 points in embryos (and probably much less in adults). And we could push that number higher by simply collecting more data from people who have already been genotyped.

None of them individually have a huge effect, but that doesn’t matter much. It just means you need to perform more edits.
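Here is a toy sketch of that arithmetic, assuming effects are purely additive and every edit has the same size. Both assumptions are simplifications, and the +0.25 points per edit figure is hypothetical, not a real estimate; the function names are mine.

```python
import math
from typing import Sequence


def additive_shift(effect_sizes: Sequence[float]) -> float:
    """Predicted trait change under an additive model: each edited
    variant contributes its own small effect, and the effects sum."""
    return sum(effect_sizes)


def edits_needed(target_shift: float, effect_per_edit: float) -> int:
    """How many equal-sized edits it takes to reach a target shift."""
    return math.ceil(target_shift / effect_per_edit)


# Hypothetical effect size, for illustration only: +0.25 points per edit.
print(edits_needed(30, 0.25))        # 120
print(additive_shift([0.25] * 120))  # 30.0
```

Halving the effect size just doubles the number of edits; it doesn’t change whether the target is reachable.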

If we want safe AI, we have to slow AI development.

I agree this would help a lot.

EDIT: added a graph

I am not saying pleiotropy doesn’t exist. I’m saying it’s not as big of a deal as most people in the field assume it is.

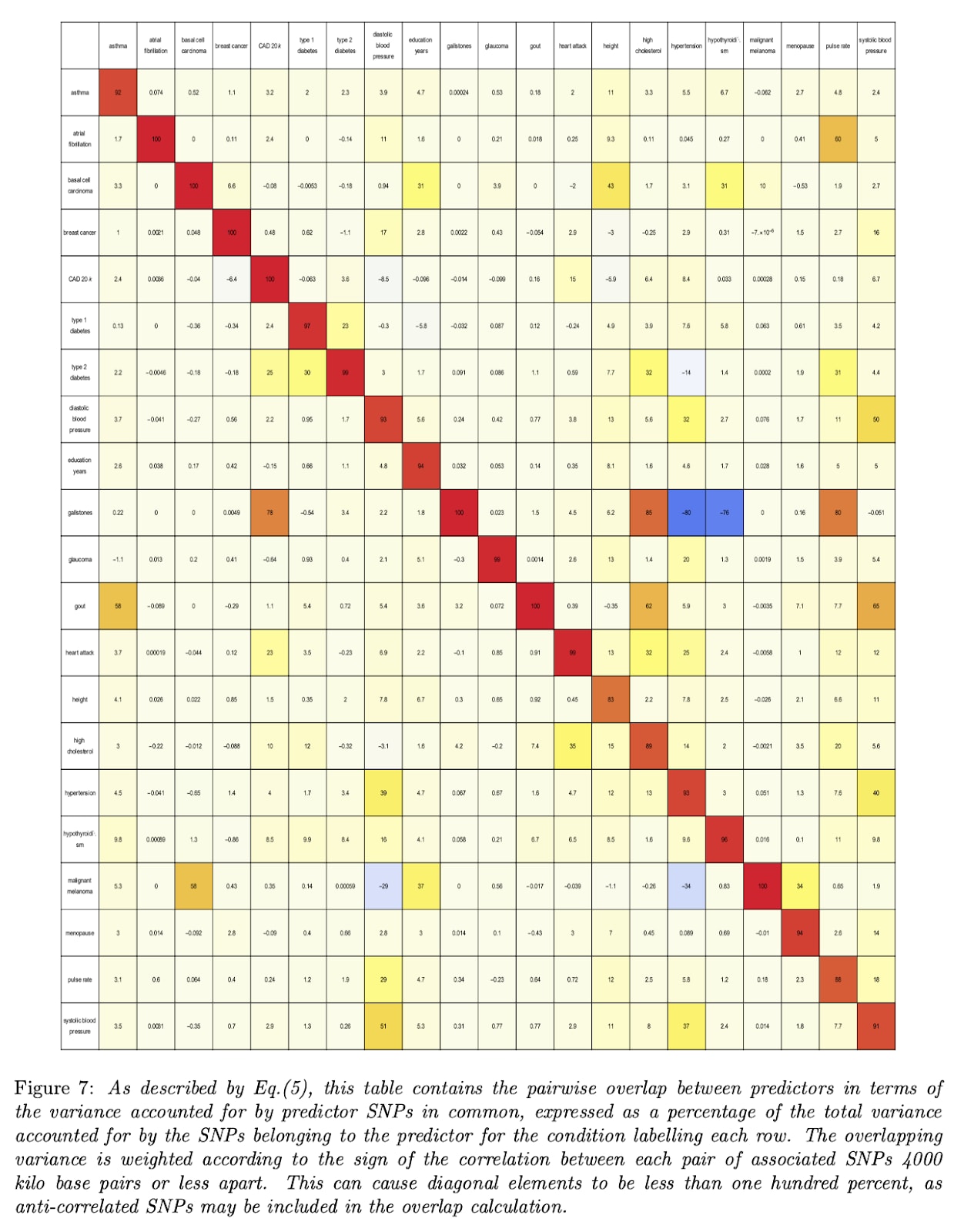

Take disease risk for example. Here’s a chart showing the genetic correlations between various conditions:

With a few notable exceptions, there is not very much correlation between different diseases.

And to the extent that pleiotropy does exist, it mostly works in your favor. That’s why most of the boxes are yellowish instead of bluish. Editing or selecting embryos to reduce the risk of one disease usually results in a tiny reduction of others.

Evolution succeeds by tinkering over many generations. It creates as many downsides as upsides. Who’s going to volunteer to be tinkered upon?

Evolution cannot simultaneously consider data from millions of people when deciding which genetic variants to give someone. We can.

None of these proposals deal with novel genetic variants. Every target variant we would introduce is already present in tens of thousands of individuals and is known to not cause any monogenic disorder.

as long as there’s a reason to think you’ll get more upside than downside, which limited theory will provide

I’m not quite sure what you’re getting at here. Do you believe it’s impossible to make advantageous genetic tradeoffs? Or that there is no way to genetically alter organisms in a way that results in a net benefit?

However, there’s no way the FDA is going to approve tinkering. You’d have to do this outside of US jurisdiction.

The FDA routinely approves clinical trials to treat fatal diseases with no effective treatments. There are many lethal brain disorders that satisfy this requirement: Alzheimer’s, dementia, ALS, Parkinson’s and others.

Would the FDA approve a treatment to enhance intelligence? Probably not, unless US citizens were flying out of the country to get it. But if you can treat a polygenic brain disorder like Alzheimer’s with gene therapy, you can quite easily repurpose the platform to target intelligence by simply swapping the guide RNAs.

It’s worth noting that most of the major news orgs passed on this story despite being offered the opportunity to cover it. We don’t know yet why they passed, but given that various orgs have covered the Snowden documents and other whistleblowers the government very much didn’t like, my guess is they passed for reasons related to the quality of the story rather than because government officials encouraged them not to cover it.

My priors against us having discovered alien tech are very high, though not literally infinite.

But I still don’t have a clear story for exactly what’s going on. Most of the videos of UFOs look pretty similar: silvery orbs flying around at very high speed. I haven’t yet heard an explanation of how this could be explained by camera artefacts, weather phenomena, or anything else.

Other videos like this one released by the Navy show non-spherical objects that even rotate while moving. I struggle to think of what could be causing this.

I’m too lazy to look into it right now, but at the very least there’s a scientific mystery here. Whether or not the explanation turns out to be interesting remains to be seen. There seems to be a big stigma against reporting UAP in the military, which some NASA officials think is hindering our understanding; with fewer recorded phenomena, it’s hard to figure out what’s going on.

Sometimes I read these posts and feel like I am standing on an island of sanity in a sea of insane people. That 88% support for the Johnson & Johnson vaccine pause just seems totally nuts.

If you’re looking for something useful to do, call your senators and congresspeople and ask them to send our AstraZeneca doses to hard-hit parts of India.

Yes, I think many in the field would share this viewpoint and that’s part of why we haven’t seen someone already attempt this.

I disagree for reasons I’ve shared in my post on “Black Box Biology”, but it’s worth reiterating my reasons here:

You don’t need to understand the causal mechanism of genes. Evolution has no clue what effects a gene is going to have, yet it can still optimize reproductive fitness. The entire field of machine learning works on black box optimization.

Most genetic variants (especially those that commonly vary among humans, which are the ones we would be targeting) have linear effects on a single trait. We don’t actually need to worry about gene-gene interactions that much.

To the degree pleiotropy does exist and is a concern, you can optimize your edit targeting criteria across multiple traits. For example, you could choose edits that reduce (or at the very least keep constant) the risk of schizophrenia and other mental disorders.

As stated in the post, a delivery vector that doesn’t induce an adaptive immune response can be administered in multiple rounds, with a relatively small number of edits made each time, further decreasing the risk of large side-effects.
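The point about optimizing edit targets across multiple traits can be sketched as a simple greedy selection. This is only an illustration under stated assumptions: the candidates and their effect sizes are hypothetical, `Candidate` and `select_edits` are names I made up, and a greedy pass is not guaranteed to find the best possible set. It takes the strongest edits for the target trait first, but skips any edit that would push the running schizophrenia total above zero.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Candidate:
    name: str
    target_effect: float  # predicted effect on the trait being improved
    scz_effect: float     # predicted effect on schizophrenia liability (higher = worse)


def select_edits(cands: List[Candidate], max_edits: int) -> List[Candidate]:
    """Greedy sketch: take the strongest edits for the target trait first,
    but skip any edit that would push the running schizophrenia total above zero."""
    chosen: List[Candidate] = []
    scz_total = 0.0
    for cand in sorted(cands, key=lambda x: x.target_effect, reverse=True):
        if len(chosen) == max_edits:
            break
        if cand.target_effect <= 0:
            continue  # never spend an edit that doesn't help the target trait
        if scz_total + cand.scz_effect <= 0:
            chosen.append(cand)
            scz_total += cand.scz_effect
    return chosen


# Hypothetical candidates, not real variants.
cands = [
    Candidate("A", 0.5, -0.1),
    Candidate("B", 0.4, 0.3),
    Candidate("C", 0.3, 0.0),
    Candidate("D", 0.2, 0.05),
]
print([c.name for c in select_edits(cands, max_edits=3)])  # ['A', 'C', 'D']
```

Edit B is skipped even though it is the second-strongest for the target trait, because it would push schizophrenia risk up.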

As far as regulation goes, we’ve already approved one CRISPR-based gene therapy in the US. I see no reason to expect that you couldn’t conduct a clinical trial to treat a polygenic brain disease like Alzheimer’s or treatment-resistant depression. That’s why in my roadmap I proposed clinical trials for treating a fatal brain disorder as a first step before we tackle intelligence.

I think my dislike of Bitcoin and the poor arguments I’ve heard on behalf of its value has clouded my judgement on this topic. I still don’t understand the actual value argument for Bitcoin. It doesn’t work as money both due to its volatility and the transaction rate limit. It’s basically digital gold.

But why is that valuable? I don’t want to hold gold unless the financial system is collapsing and inflation is spiraling out of control or something like that.

My current theory for what happened is that everyone bought into this delusion about the value of bitcoin, but that unlike other bubbles it didn’t burst because Bitcoin has a limited supply and there is literally nothing to anchor its value. So there’s no point where investors give up and sell because there is literally no point at which it’s overpriced.

Am I still missing something? I just have no idea how to apply lessons from Bitcoin to anything else. What lesson is there to draw from this other than “buy into things with fixed supplies and no intrinsic worth that are going up in price”?

Another of my biases that caused me to miss the train was my belief that Bitcoin is fundamentally bad for the world. The only way the price goes up is if everyone buys into the delusion that it has value. And once you’re bought in you have the incentive to pressure other people to buy into the same delusion. And what is the end-result? The only thing Bitcoin ever facilitated was Silk Road and other black market exchanges. And even for those use-cases it has been superseded by other crypto designed to actually function as money.

The end-result of Bitcoin getting more valuable is that a ton of talented people spend time building exchanges and building mining rigs and thinking about Bitcoin and consuming a shit ton of power to mine more Bitcoin. On net it’s just a huge loss for world productivity.

Furthermore, everyone I talked to or whose work I read on this subject made these really poor-sounding arguments as to why I should buy bitcoin that just set off all my epistemic alarm bells. I disliked these arguments so much that it made me start to actively dislike the idea of cryptocurrency.

What is your advice now? Do I just buy in anyway and give the proceeds (if there are any) to some effective charity? It seems like the only limit on the price of Bitcoin is the liquidity of the entire world economy. How do I think about when to sell?

Suddenly the Monty Python monks make so much more sense

Yes, I slightly oversimplified this point for the sake of keeping it short.

The effects ARE linear in the sense that you can predict diabetes risk with a function f(x_1 + x_2 + x_3 + ...), where each x_i is the effect of one diabetes-affecting variant. The actual shape of the curve is not a straight line, though. It’s more like an exponential.
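One common way to get “linear inside, exponential outside” is a log-additive model: sum the variant effects into a score, then exponentiate to get relative risk. This is a sketch under that assumption (logistic or liability-threshold links are other common choices), and the per-allele weights below are hypothetical.

```python
import math
from typing import Sequence


def polygenic_score(dosages: Sequence[int], weights: Sequence[float]) -> float:
    """The linear part: a weighted sum of risk-allele counts (0, 1 or 2)."""
    return sum(d * w for d, w in zip(dosages, weights))


def relative_risk(score: float) -> float:
    """The nonlinear part, assuming a log-additive model:
    risk relative to a score of zero grows exponentially with the score."""
    return math.exp(score)


weights = [0.1, 0.2, 0.3]  # hypothetical per-allele effects on the log scale
score = polygenic_score([2, 1, 0], weights)
print(round(score, 6), round(relative_risk(score), 3))  # 0.4 1.492
```

Under this model, removing a risk allele multiplies risk by the same factor no matter what else is in the genome, which is why the effects can be additive even though the risk curve isn’t a straight line.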

As far as pleiotropy goes (one gene having multiple effects), a better approach would be to create genetic predictors for a large number of traits and prioritize edits in proportion to their effect on the trait of interest, their probability of being causal, and the severity of the condition.
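A minimal sketch of that prioritization: multiply each candidate’s probability of being causal by the severity-weighted sum of its effects across every trait we have a predictor for. The scoring formula, the function names, and all numbers here are hypothetical illustrations, not a validated method. Effects are signed so that positive means the edit lowers risk.

```python
from typing import Dict, List, Tuple


def priority(p_causal: float, effects: Dict[str, float],
             severity: Dict[str, float]) -> float:
    """Expected benefit of one edit: probability the variant is causal,
    times the severity-weighted sum of its effects across every trait.
    Effects are signed so that positive means the edit lowers risk."""
    return p_causal * sum(effects[t] * severity[t] for t in effects)


def rank_edits(cands: Dict[str, Tuple[float, Dict[str, float]]],
               severity: Dict[str, float]) -> List[str]:
    """Candidate names sorted from highest to lowest priority."""
    scores = {name: priority(p, eff, severity) for name, (p, eff) in cands.items()}
    return sorted(scores, key=lambda name: scores[name], reverse=True)


# Hypothetical numbers for illustration only.
severity = {"diabetes": 1.0, "heart disease": 2.0}
cands = {
    "v1": (0.9, {"diabetes": 0.10, "heart disease": 0.00}),
    "v2": (0.5, {"diabetes": 0.10, "heart disease": 0.05}),
    "v3": (0.8, {"diabetes": 0.20, "heart disease": -0.10}),
}
print(rank_edits(cands, severity))  # ['v2', 'v1', 'v3']
```

v2 ranks first despite a lower causal probability because its pleiotropic effect works in your favor; v3’s benefit for diabetes is cancelled by its heart disease effect.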

But do I believe you could just do edits for one disease without a significant negative effect? Yes, so long as the resulting genome is within roughly the normal human range in terms of overall disease risk. There isn’t that much pleiotropy to begin with, and the pleiotropy that does exist tends to work in your favor; decreasing diabetes risk also tends to decrease risk of hypertension and heart disease.

A relevant tweet from Nate Silver on the methodology used to conduct the survey:

This is not a scientific way to do a survey. The biggest issue is that it involved personalized outreach based on a totally arbitrary set of criteria. That’s a huge no-no. It also, by design, had very few biosafety or biosecurity experts.

The tweet has some screenshots of relevant parts of the paper.

I was pleasantly surprised by how many people enjoyed this post about mountain climbing. I never expected it to gain so much traction, since it doesn’t relate that clearly to rationality or AI or any of the topics usually discussed on LessWrong.

But when I finished the book it was based on, I just felt an overwhelming urge to tell other people about it. The story was just that insane.

Looking back I think Gwern probably summarized what this story is about best: a world beyond the reach of god. The universe does not respect your desire for a coherent, meaningful story. If you make the wrong mistake at the wrong time, game over.

For the past couple of months I’ve actually been drafting a sequel of sorts to this post about a man named Nims Purja. I hope to post it before Christmas!

I can’t believe I’m about to write a comment about air conditioners on a thread about world-ending AI, but having bought one of these one-hose systems for my apartment during a particularly hot summer I can say I was pretty disappointed with its performance.

The main drawback to the one hose system is the cool air never makes it outside the room with the unit. I tried putting a bunch of fans to blow the air to the rest of the house, but as you can imagine that didn’t work very well.

I had no idea why until I zoned out one day while thinking about the air conditioner and realized it was sucking the cold air into the intake and blowing it out of the house. And I did indeed read a bunch of reviews from Costco customers before I bought the unit, none of which mentioned the problem.

Yes, I suspect most parents will probably feel like you. Having kids with a big group of people is just going to be too weird for most people.

You can see from Tsvi’s chart that the gains from selection among just two people are already pretty large: probably 1.5-2 standard deviations across a large panel of traits. So if someone manages to get the protocol working you can still benefit from it even if you only want to use it for yourself and your wife.

But it’s worth pointing out that this is not that much different than having grandkids or great-grandkids; you’ll share about the same amount of DNA with them as you would with children you have with 3-7 other people. So if you’re ok with the idea of grandkids this shouldn’t be THAT weird other than the “skipping generations” part.
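The arithmetic behind that comparison, as a quick sketch. It assumes a multi-parent child draws equally from every parent, and it only gives expected values; actual sharing varies because of recombination. The function names are just for illustration.

```python
from fractions import Fraction


def share_with_multiparent_child(n_coparents: int) -> Fraction:
    """Expected share of a child's genome that comes from you, assuming the
    child draws equally from you and n_coparents other people."""
    return Fraction(1, n_coparents + 1)


def share_with_descendant(generations: int) -> Fraction:
    """Expected share with a descendant under ordinary two-parent reproduction:
    it halves every generation (child 1/2, grandchild 1/4, ...)."""
    return Fraction(1, 2 ** generations)


for others in (3, 7):
    print(others, share_with_multiparent_child(others))   # 3 1/4, then 7 1/8
print(share_with_descendant(2), share_with_descendant(3))  # 1/4 1/8
```

So a child with 3 co-parents is genetically comparable to a grandchild, and one with 7 co-parents to a great-grandchild.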

Musk is strangely insightful about some things (electric cars becoming mainstream, reusable rockets being economically feasible), and strangely thoughtless about other things. If I’ve learned anything from digesting a few hundred blogs and podcasts on AI over the past 7 years, it’s that there is no single simple objective that captures what we actually want AI to do.

Curiosity is not going to cut it. Nor is “freedom”, which is what Musk was talking with Stuart Russell about maximizing a year or two ago. Human values are messy and context-dependent and not even internally consistent. If we actually want AI that fulfills the desires that humans would want after long reflection on the outcomes, it’s going to involve a lot of learning from human behavior, likely with design mistakes along the way which we will have to correct.

The hard part is not finding a single thing to maximize. The hard part is training an agent that is corrigible so that when we inevitably mess up, we don’t just die or enter a permanent dystopia on the first attempt.

I visited New York City for the first time in my life last week. It’s odd coming to the city after a lifetime of consuming media that references various locations within it. I almost feel like I know it even though I’ve never been. This is the place where it all happens, where everyone important lives. It’s THE reference point for everything. The heights of tall objects are compared to The Statue of Liberty. The blast radius of a nuclear bomb is compared to the size of Manhattan. Local news is reported as if it is in the national interest for people around the country to know.

The people were different than the ones I’m accustomed to. The drivers honk more and drive aggressively. The subway passengers wear thousand-dollar Balenciaga sneakers. They are taller, better looking, and better dressed than the people you’re used to.

And everywhere there is self-reference. In the cities I frequent, paraphernalia bearing the name of the city is confined to a handful of tourist shops in the downtown area (if it exists at all). In New York City, it is absolutely everywhere. Everywhere the implicit experience for sale is the same: I was there. I was part of it. I matter.

I felt this emotion everywhere I went. Manhattan truly feels like the center of the country. I found myself looking at the cost of renting an apartment in Chinatown or in Brooklyn, wondering if I could afford it, wondering who I might become friends with if I moved there, and what experiences I might have that I would otherwise miss.

I also felt periodic disgust with the excess, the self-importance, and the highly visible obsession with status that so many people seem to exhibit. I looked up at the empty $200 million apartments on Billionaires’ Row and thought about how badly large cities need a land value tax. I looked around at all the tourists in Times Square, smiling for the camera in front of large billboards, then frowning as they examined the photo to see whether it was good enough to post on Instagram. I wondered how many children we could cure of malaria if these people shifted 10% of their spending towards helping others.

This is the place where rich people go to compete in zero-sum status games. It breeds arrogant, out-of-touch elites. This is the place where talented young people go to pay half their income in rent and raise a small furry child simulator in place of the one they have forgotten to want. This is, as Isegoria so aptly put it, an IQ grinder, that disproportionately attracts smart and well educated people who reproduce at below replacement rate.

The huge disparities between rich and poor are omnipresent. I watched several dozen people (myself included) walk past a homeless diabetic with legs that were literally rotting away. I briefly wondered what was wrong with society that we allowed this to happen before walking away to board a bus out of the city.

I’m sure all these things have been said about New York City before, and I’m sure they will be said again. I’ll probably return for a longer visit someday.

I’ll give a quick TL;DR here since I know the post is long.

There are about 20,000 genes that affect intelligence. We can identify maybe 500 of them right now. With more data (which we could get from government biobanks or consumer genomics companies), we could identify far more.

If you could edit a significant number of IQ-decreasing genetic variants to their IQ-increasing counterparts, it would have a large impact on intelligence. We know this to be the case for embryos, but it is also probably the case (to a lesser extent) for adults.

So the idea is you inject trillions of these editing proteins into the bloodstream, encapsulated in a delivery vehicle like a lipid nanoparticle or adeno-associated virus. They make their way into the brain, then into the brain cells, and then make a large number of edits in each one.

This might sound impossible, but in fact we’ve done something a bit like this in mice already. In this paper, the authors used an adenovirus to deliver an editor to the brain. They were able to make the targeted edit in about 60% of the neurons in the mouse’s brain.

There are two gene editing tools created in the last 7 years which are very good candidates for our task, with a low chance of resulting in off-target edits or other errors. Those two tools are called base editors and prime editors. Both are based on CRISPR.

If you could do this, and give the average brain cell 50% of the desired edits, you could probably increase IQ by somewhere between 20 and 100 points.
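“50% of the desired edits” is an average over cells, so it helps to picture how that average might spread out. The small simulation below assumes (a big assumption) that every target site is edited independently with the same probability in every cell. In reality delivery probably varies a lot from cell to cell, which this sketch ignores. The function name, the 500 targets, and the cell count are hypothetical.

```python
import random
import statistics
from typing import List


def simulate_edit_fractions(n_targets: int, p_edit: float, n_cells: int,
                            seed: int = 0) -> List[float]:
    """Fraction of target sites edited in each simulated cell, assuming every
    site is edited independently with the same probability p_edit."""
    rng = random.Random(seed)
    fractions = []
    for _ in range(n_cells):
        edited = sum(rng.random() < p_edit for _ in range(n_targets))
        fractions.append(edited / n_targets)
    return fractions


fracs = simulate_edit_fractions(n_targets=500, p_edit=0.5, n_cells=1000)
print(statistics.mean(fracs), statistics.pstdev(fracs))
```

Under this independence assumption, the per-cell fraction is binomial, so its standard deviation is roughly sqrt(p(1 - p) / n), about 0.02 for 500 targets: almost every cell lands close to the average. Any real spread beyond that would come from uneven delivery.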

What makes this difficult

There are two tricky parts of this proposal: getting high editing efficiency, and getting the editors into the brain.

The first (editing efficiency) is what I plan to focus on if I can get a grant. The main issue is getting enough editors inside the cell and ensuring that they have high efficiency at relatively low doses. You can only put so many proteins inside a cell before it starts hurting the cell, so we have to make a large number of edits (at least a few hundred) with a fixed number of editor proteins.

The second challenge (delivery efficiency) is being worked on by several companies right now because they are trying to make effective therapies for monogenic brain diseases. If you plan to go through the bloodstream (likely the best approach), the three best candidates are lipid nanoparticles, engineered virus-like particles and adeno-associated viruses.

There are additional considerations like how to prevent a dangerous immune response, how to avoid off-target edits, how to ensure the gene we’re targeting is actually the right one, how to get this past the regulators, how to make sure the genes we target actually do something in adult brains, and others which I address in the post.

What I plan to do

I’m trying to get a grant to do research on multiplex editing. If I can, we will try to increase the number of edits that can be done at the same time in cell culture while minimizing off-targets, cytotoxicity, immune response, and other side-effects.

If that works, I’ll probably try to start a company to treat polygenic brain disorders like Alzheimer’s. If we make it through safety trials for such a condition, we can probably start a trial for intelligence enhancement.

If you know someone that might be interested in funding this work, or a biologist with CRISPR editor expertise, please send me a message!