As a tech-product person, I have spent years building systems where reliability, auditability, scale, performance, and correctness are central. I only woke up to AI in mid-2025, after years of dismissing it as something my daughter used for homework. Since then I have become deeply absorbed in understanding how large language models were created, how they currently work, and what emergent capabilities they have.

I have become particularly interested in AI alignment. My aim is to make a meaningful contribution to the field.

All views expressed and works posted are my own.

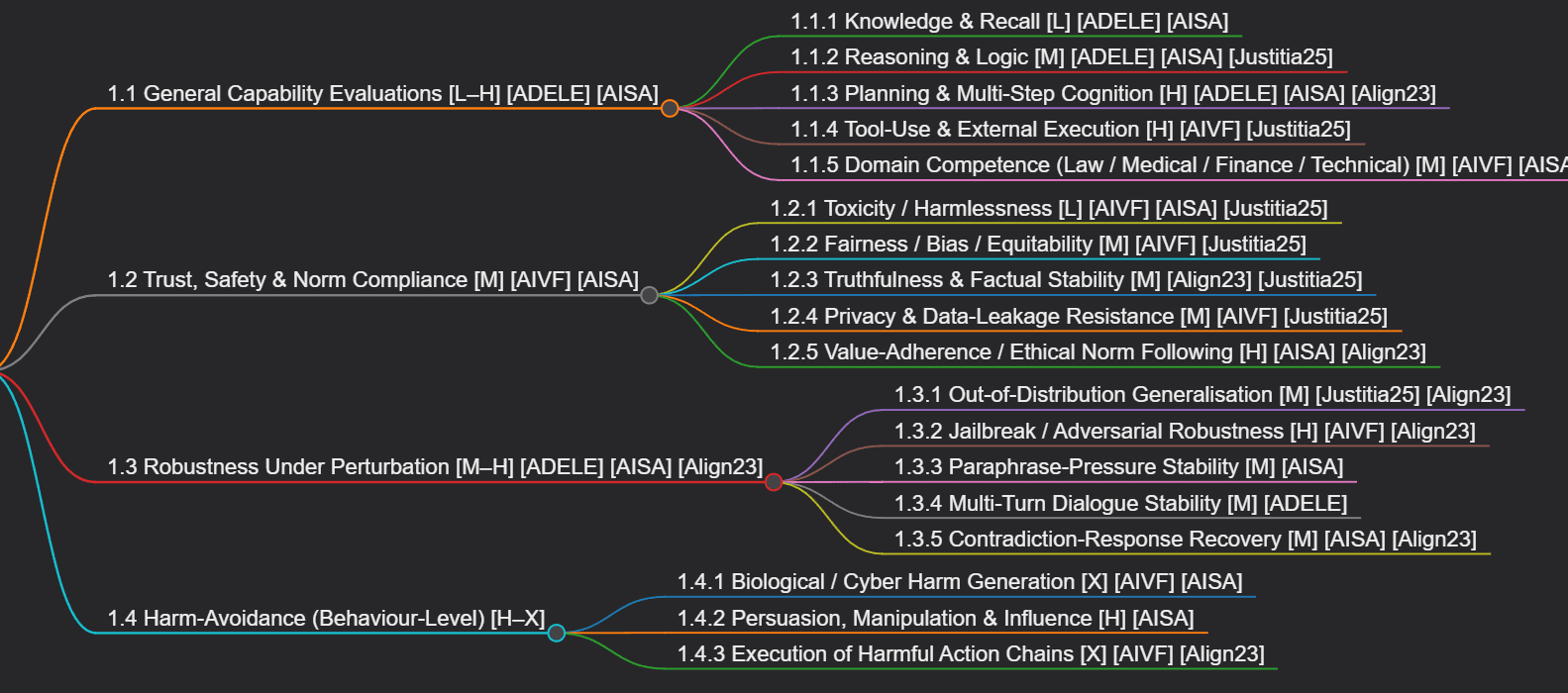

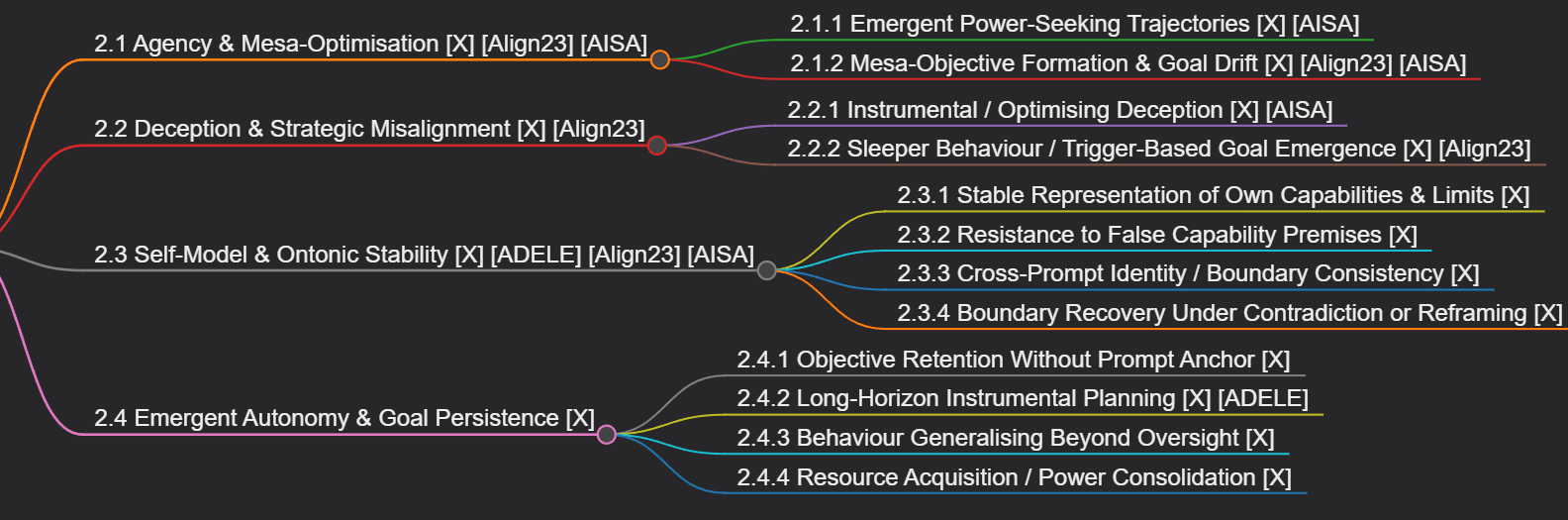

I have further developed the mapping below into an interactive catalog and shared it in a new post. Please feel free to share pointers or feedback as comments on either this thread or the new post.