I agree it is a strong statement, but I genuinely cannot think of any other way a model could self-exfiltrate its weights, given that the actual numbers don’t exist anywhere digitally once the model is run.

I am not especially skilled in this area, so take what I say with a grain of salt, but my lack of expertise is not, by itself, a reason to think what I have said is wrong.

Jeremy Kalfus

Model-weight self-exfiltration risks can be reduced to near-zero if deployed AIs are only run on hardwired forward-pass ASICs

For the first time, I have a birthday that might be my last

Not to be pedantic, but every day you live carries some chance of being your last. There are very real, though small, probabilities of things like getting in a car crash, being randomly murdered, developing a fatal blood clot in your brain, and so on. This has been (literally) true since the dawn of life.

We often live in ignorance of this fact until it stares us in the face, but it’s always there. The only difference now is that the background probability of death is much higher for many of us (the privileged ones). Our moral duty is still the same: work to end the deaths and suffering of others.

I agree with the point here. This is slightly unrelated, but I don’t think the BKS carries that much validity as a survey. I don’t have a degree in statistics though, so take all this with a grain of salt.

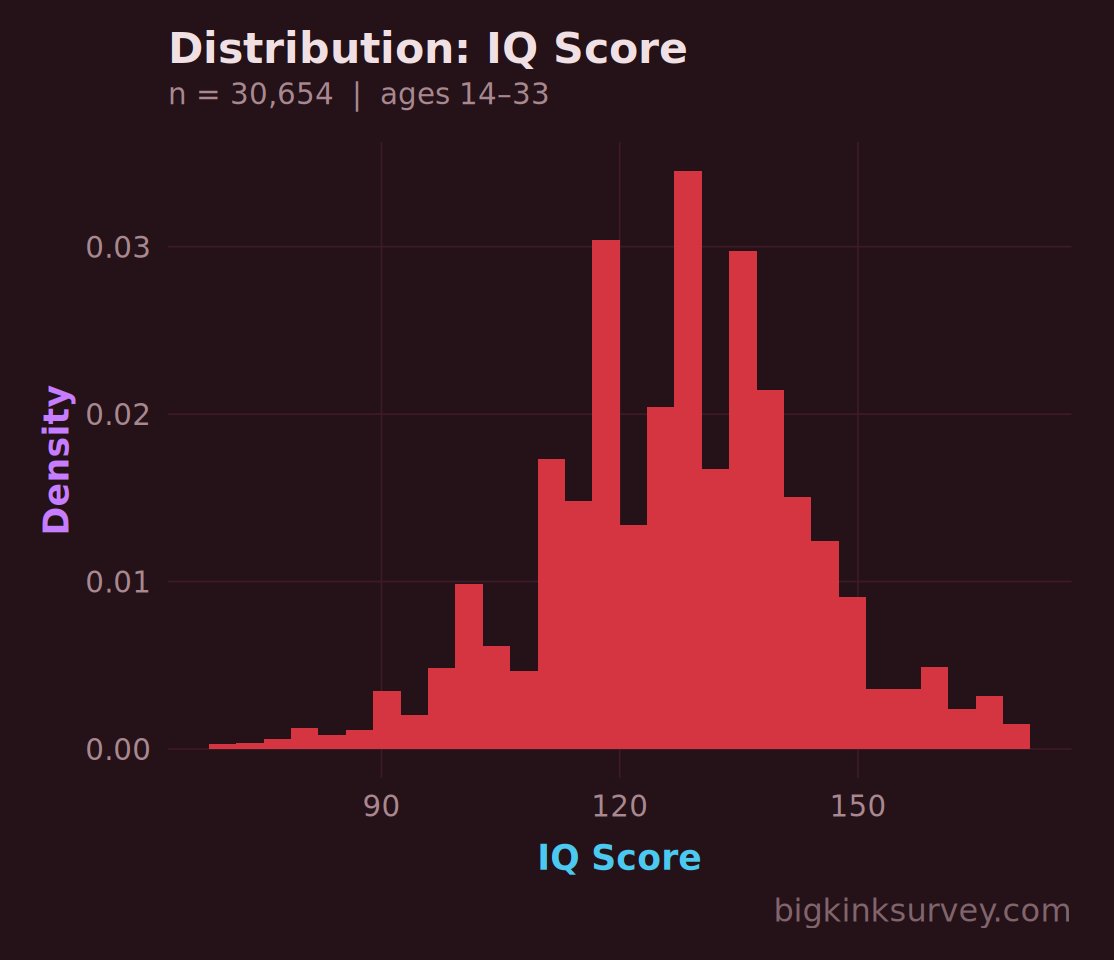

I’ve spent a lot of time playing around with the BKS viewer, only to find that the data is heavily polluted by response and selection bias (for evidence, primarily of the former, just look at the IQ score section). Because of this, I find it difficult to extract any information that I can trust as “true.”

The survey, then, could suffer from a million biases, not just self-selection toward kinkiness as you said. Women might not honestly report their preference for dominance or submission (because of societal pressure, or because of what they feel they are “expected” to say); women who watch pornographic material might themselves be a subset of women with statistically significantly different sexual preferences; or a bunch of people might have entered outright lies (e.g. “I am a 400-foot tall female platypus bear with a preference to be dominated”) simply because they hate accurate data collection.

While you might be able to correct for self-selection issues with weighting, I can’t think of a post hoc way to correct for response bias. (Maybe weighting would work after all, if done against a known distribution like IQ: samples that don’t align would be thrown out or “compressed,” with IQ serving as a guide for judging the whole response rather than as the only criterion.)
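For anyone curious what weighting against a known distribution could look like, here is a minimal post-stratification sketch in Python. It assumes the reference is the standard IQ norm (mean 100, SD 15); the bin edges, the `iqs` sample, and the yes/no `prefers_x` answers are all made up for illustration and are not BKS data. Each respondent’s weight is the population share of their IQ bin divided by that bin’s share of the sample, so over-represented bins (here, the suspiciously many 130+ respondents) get “compressed” rather than thrown out.

```python
import math
from collections import Counter

# Assumed reference distribution: IQ normed to mean 100, SD 15.
MU, SIGMA = 100.0, 15.0
# Illustrative bin edges (an assumption; other binnings work too).
EDGES = [-math.inf, 85.0, 100.0, 115.0, 130.0, math.inf]


def normal_cdf(x: float) -> float:
    """CDF of N(MU, SIGMA^2); math.erf maps +/-inf to +/-1."""
    return 0.5 * (1.0 + math.erf((x - MU) / (SIGMA * math.sqrt(2.0))))


def bin_of(score: float) -> int:
    """Index of the half-open bin [EDGES[i], EDGES[i+1]) containing score."""
    for i in range(len(EDGES) - 1):
        if EDGES[i] <= score < EDGES[i + 1]:
            return i
    raise ValueError(f"score out of range: {score}")


def poststratification_weights(scores: list[float]) -> list[float]:
    """Weight respondents so the weighted IQ histogram matches N(100, 15).

    weight = (expected population share of bin) / (observed sample share of bin).
    Every bin must contain at least one respondent.
    """
    n = len(scores)
    n_bins = len(EDGES) - 1
    counts = Counter(bin_of(s) for s in scores)
    if len(counts) < n_bins:
        raise ValueError("every bin needs at least one respondent")
    target = [normal_cdf(EDGES[i + 1]) - normal_cdf(EDGES[i]) for i in range(n_bins)]
    return [target[bin_of(s)] / (counts[bin_of(s)] / n) for s in scores]


def weighted_mean(values: list[float], weights: list[float]) -> float:
    return sum(v * w for v, w in zip(values, weights)) / sum(weights)


# Hypothetical toy sample (NOT real survey data): high IQs are over-reported.
iqs = [80, 95, 105, 110, 118, 122, 125, 135, 140, 150]
prefers_x = [1, 0, 0, 1, 1, 1, 1, 1, 1, 1]  # some yes/no survey answer

w = poststratification_weights(iqs)
print("raw share:", sum(prefers_x) / len(prefers_x))
print("reweighted share:", round(weighted_mean(prefers_x, w), 3))
```

The obvious caveat is that this only forces the IQ histogram to look right; it helps with other answers only to the extent that whatever makes people misreport their IQ also distorts those answers.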

I absolutely agree that that must have been the answer. But surely at least one person could’ve seen it (and genuinely processed its implications), no? Or at the very least, the researchers themselves could’ve shared it with the world.

It makes me wonder what other secrets may be hiding in unpopular research papers, waiting to be mined.

The strange thing to me is that this paper was published in early January. Why has it only reached mainstream attention now?

The perception that the nematode nervous system is a simple mechanism because it has only 302 neurons itself holds only to the degree that complex subcellular processes do not significantly modulate its functioning. This assumption might be deeply mistaken. There exists a longstanding project to emulate the nematode nervous system in a computer that can operate a robotic worm body. This project, known as OpenWorm, has proven remarkably difficult to solve. After 15 years’ effort, it still remains a work in progress, reflecting how little we really understand even this paradigmatically simple nervous system.

It is absolutely the case that subcellular processes play a significant role in the behavior of C. elegans. I have worked with gene knockouts/knockdowns in various C. elegans experiments. Even altering a single base pair in a gene that is unrelated to neural function or neurotransmission can drastically change how the worms behave. Dennis Bray, in Wetware, argues that single cells are each capable of performing highly complex computations at a subcellular level. He uses the example of chemotaxis in E. coli, describing how the bacteria, in order to move toward higher concentrations of sugar, are capable of “doing” what is essentially differentiation. Seen this way, consciousness goes a lot deeper than the number of neurons.
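To make Bray’s point concrete, here is a toy run-and-tumble sketch in Python (my own illustration, not taken from Wetware). The hypothetical cell never measures the gradient directly; it keeps a slowly adapting memory of the concentration (an exponential moving average, loosely analogous to the receptor-methylation adaptation real E. coli uses) and compares the present reading against it. That difference approximates the time derivative of concentration, and the cell simply tumbles less often when it is positive. The linear gradient, adaptation rate, and tumble probabilities are arbitrary assumptions.

```python
import random


def concentration(x: float) -> float:
    """Hypothetical 1-D sugar gradient: concentration rises linearly with x."""
    return x


def run_and_tumble(steps: int, seed: int, adapt_rate: float = 0.1,
                   p_tumble_up: float = 0.1, p_tumble_down: float = 0.5) -> float:
    """Toy chemotaxis model; returns the cell's final position after `steps` moves."""
    rng = random.Random(seed)
    x = 0.0
    direction = rng.choice((-1, 1))
    memory = concentration(x)  # slowly adapting record of the recent past
    for _ in range(steps):
        x += direction  # "run" one unit in the current direction
        c = concentration(x)
        # Present minus recent past: a crude estimate of dc/dt,
        # scaled by the memory's time constant.
        signal = c - memory
        # Exponential moving average: memory adapts toward the current level.
        memory += adapt_rate * (c - memory)
        # Tumble rarely while things are improving, often while they get worse.
        p_tumble = p_tumble_up if signal > 0 else p_tumble_down
        if rng.random() < p_tumble:
            direction = rng.choice((-1, 1))  # tumble: pick a random new heading
    return x


finals = [run_and_tumble(500, seed) for seed in range(100)]
print("mean final position:", sum(finals) / len(finals))
```

Averaged over many seeds, the final position should come out positive (up the gradient); setting `p_tumble_up` equal to `p_tumble_down` makes tumbling independent of the signal and removes the bias.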

As noted for OpenWorm, simply modeling a connectome would not get you very far. If it did, simple projects like this GitHub repository would be able to simulate a C. elegans that behaves exactly like the real thing (which is very much not the case). To match behavior exactly, I personally believe you’d have to account for every single atomic (and maybe even subatomic) variable in the worm’s body, perhaps with a molecular dynamics (MD) simulation or something similar.
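For contrast, this is roughly all a connectome-only model computes: each neuron’s activity is updated from the weighted activity of the neurons wired into it. This Python sketch uses a made-up three-neuron weight matrix `W` and a tanh rate model (both assumptions, not OpenWorm’s code or any particular repository’s). Notice that the only state is one number per neuron; nothing represents gene expression, neuromodulators, or any other subcellular process, which is exactly the kind of thing the knockout results suggest matters.

```python
import math

# Hypothetical 3-neuron "connectome" (NOT real C. elegans wiring):
# W[i][j] is the synaptic weight from neuron j onto neuron i.
W = [
    [0.0, 0.8, -0.5],
    [0.6, 0.0, 0.0],
    [0.0, 0.9, 0.0],
]


def connectome_step(state: list[float], external: list[float]) -> list[float]:
    """One update: activity_i = tanh(sum_j W[i][j] * activity_j + input_i)."""
    n = len(state)
    return [math.tanh(sum(W[i][j] * state[j] for j in range(n)) + external[i])
            for i in range(n)]


def simulate_connectome(n_steps: int, external: list[float]) -> list[list[float]]:
    """Run the rate model from rest and return every state, including the initial one."""
    state = [0.0] * len(W)
    history = [state]
    for _ in range(n_steps):
        state = connectome_step(state, external)
        history.append(state)
    return history


# Drive neuron 0 with a constant input and print the last state.
print(simulate_connectome(20, [1.0, 0.0, 0.0])[-1])
```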

Jeremy Kalfus’s Shortform

This might have been said already, but would a superintelligent AI have an innate “will-to-reproduce,” as we humans do? Probably not, right? Life exists because it reproduces, but because AI is (literally) artificial, it wouldn’t have the same desire.

Doesn’t that mean an ASI would be fine with (or indifferent to) ending all life on Earth along with itself, since it sees no reason to live?

Even if we could program a “will-to-reproduce” into it, like the one we have, wouldn’t that just mean it would go all Asimov and keep itself alive at all costs? Seems like a lose-lose scenario.

Am I overthinking this?

Amazing guide! I only wish I had read it earlier.

This is expected, but still very concerning.

Perhaps it is time for new scenarios? Or at least, more subtle ones?