MMath Cambridge. Currently studying postgrad at Edinburgh.

Donald Hobson

Shannon mutual information doesn’t really capture my intuitions either. Take a random number X, and a cryptographically strong hash function. Calculate hash(X) and hash(X+1).

Now these variables share lots of mutual information. But if I just delete X, there is no way an agent with limited compute can find or exploit the link. I think mutual information gives false positives, where Pearson info gave false negatives.

So Pearson Correlation ⇒ Actual info ⇒ Shannon mutual info.

So one potential lesson is to keep track of which direction your formalisms deviate from reality in. Are they intended to have no false positives, or no false negatives. Some mathematical approximations, like polynomial time = runnable in practice, fail in both directions but are still useful when not being goodhearted too much.

Another reason someone might stick to the rules is if they think the rules carry more wisdom than their own judgement. Suppose you knew you weren’t great at verbal discussions, and could be persuaded into a lot of different positions by a smart fast-talker, if you engaged with the arguments at all. You also trust that the rules were written by smart wise experienced people. Your best strategy is to stick to the rules and ignore their arguments.

Someone comes along with a phone that’s almost out of battery and a sob story about how they need it to be charged. They ask if they can just plug it in to your computer for a bit to charge it. If you refuse, citing “rule 172) no customer can plug any electronics into your computer. ” then you look almost like a blankface. If you let them plug the phone in, you run the risk of malware. If you understand the risk of malware, you could refuse because of that. But if you don’t understand that, the best you can do is follow rules that were written for some good reason, even if you don’t know what it was.

On its face, this story contains some shaky arguments. In particular, Alpha is initially going to have 100x-1,000,000x more resources than Alice. Even if Alice grows its resources faster, the alignment tax would have to be very large for Alice to end up with control of a substantial fraction of the world’s resources.

This makes the hidden assumption that “resources” is a good abstraction in this scenario.

It is being assumed that the amount of resources an agent “has” is a well defined quantity. It assumes agent can only grow their resources slowly by reinvesting them. And that an agent can weather any sabotage attempts by agents with far less resources.

I think this assumption is blatantly untrue.

Companies can be sabotaged in all sorts of ways. Money or material resources can be subverted, so that while they are notionally in the control of X, they end up benefiting Y, or just stolen. Taking over the world might depend on being the first party to develop self replicating nanotech, which might require just insight and common lab equipment.

Don’t think “The US military has nukes, the AI doesn’t, so the US military has an advantage”, think “one carefully crafted message and the nukes will land where the AI wants them to, and the military commanders will think it their own idea.”

You should be deeply embarrassed if your model outputs an obviously wrong or obviously time-inconsistent answer even in a hypothetical situation.

Suppose you have a particle accelerator that goes up to half the speed of light. You notice an effect whereby faster particles become harder to accelerate.

You curve fit this effect and get that . and both fit the data, well the first one fits the data slightly better. However, when you test your formula on the case of a particle travelling at twice the speed of light, you get back nonsensical imaginary numbers. Clearly the real formula must be the second one. (The real formula is actually the first one)

A good model will often give a nonsensical answer when asked a nonsensical question, and nonsensical questions don’t always look nonsensical.

I would like to propose a model that is more flattering to humans, and more similar to how other parts of human cognition work. When we see a simple textual mistake, like a repeated “the”, we don’t notice it by default. Human minds correct simple errors automatically without consciously noticing that they are doing it. We round to the nearest pattern.

I propose that automatic pattern matching to the closest thing that makes sense is happening at a higher level too. When humans skim semi contradictory text, they produce a more consistent world model that doesn’t quite match up with what is said.

Language feeds into a deeper, sensible world model module within the human brain and GPT2 doesn’t really have a coherent world model.

OK, so maybe this is a cool new way to look at at certain aspects of GPT ontology… but why this primordial ontological role for the penis? I imagine Freud would have something to say about this. Perhaps I’ll run a GPT4 Freud simulacrum and find out (potentially) what.

My guess is that humans tend to use a lot of vague euphemisms when talking about sex and genitalia.

In a lot of contexts, “Are they doing it?” would refer to sex, because humans often prefer to keep some level of plausible deniability.

Which leaves some belief that vagueness implies sexual content.

Suppose Alex!20 reads about play pumps, and vows to give some money to them every month. Alex!30 learns that actually, this charity is doing harm (on net). If he went back in time and gave Alex!20 a short presentation, Alex!20 wouldn’t make the vow. Alex!20′s actual goal was to make the world a better place, and he thought play pumps did that. Making simple vows that bind your behaviour restricts your freedom to act on the best available evidence. The rational thing to do is to be actively checking that such actions make sense, based on the best available evidence. As soon as some evidence suggests a new charity may be more effective, say oops and switch.

I mean I would say that

Partly because mass is good on rational merits (the utility gained from meeting up with fellow humans, thinking about ethics, meditating through prayer, singing with the congregation).

Is questionable. It reads like the excuse of someone who never really said oops, and decided they had made a mistake. I am sure that there are lots of clubs and knitting groups you could go to. I suspect that the rest of the activities are not helpful to actually getting ethics and rationality right. (It wouldn’t help a mathematician to sing songs about how “2+2=7” every week. ) The human brain is incapable of listening to and singing about obvious nonsense every week without being somewhat influenced by it. And I suspect that influence may not be in a good direction.

If you ask GPT-n to produce a design for a fusion reactor, all the prompts that talk about fusion are going to say that a working reactor hasn’t yet been built, or imitate cranks or works of fiction.

It seems unlikely that a text predictor could pick up enough info about fusion to be able to design a working reactor, without figuring out that humans haven’t made any fusion reactors that produce net power.

If you did somehow get a response, the level of safety you would get is the level a typical human would display. (conditional on the prompt) If some information is an obvious infohazard, such that no human capable of coming up with it would share it, then such data won’t be in GPT-n ’s training dataset, and won’t be predicted. However, the process of conditioning might amplify tiny probabilities of human failure.

Suppose that any easy design of fusion reactor could be turned into a bomb. And ignore cranks and fiction. Then suppose 99% of people who invented a fusion reactor would realize this, and stay quiet. The other 1% would write an article that starts with “To make a fusion reactor …” . Then this prompt will cause GPT-n to generate the article that a human that didn’t notice the danger would come up with.

This also applies to dangers like leaking radiation, or just blowing up randomly if your materials weren’t pure enough.

If the whole reason you didn’t want to open the window was the energy put in to heating/ cooling the air, why not use a heat exchanger? I reackon it cold be done using a desktop fan, a stack of thin aluminium plates, and a few pieces of cardboard or plastic to block air flow.

This proposal is a minor variation on the HCH type ideas. The main differences seem to be

Only a single training example needed through use of hypotheticals.

The human can propose something else on the next step.

The human can’t query copies of their simulation, at least not at first. There are probably all sorts of ways the human can bootstrap, they are writing arbitrary maths expressions.

This leads to a selection of problems largely similar to the HCH problems.

We need good inner alignment. (And with this, we also need to understand hypotheticals).

High fidelity, we don’t want a game of Chinese whispers.

The process could never converge, it could get stuck in an endless loop.

Especially if passing the message on to the next cindy is one button press, what happens when one of them stumbles on a really memmetic idea, would the question end up filled with “repost or a ghost will haunt you” nonsense that plagues some websites. You are applying strong memetic selection pressure, and might get really funny jokes instead of AI ideas.

Having the whole alignment community for 6 months be part of the question answerer is more likely to work than one person for a few hours, but that amplifies other problems.

This method also has the problem of amplified failure probability. Suppose somewhere down the line, millions of iterations in, cindy goes outside for a walk, and gets hit by a truck. Virtual cindy doesn’t return to continue the next layer of the recursion. What then? (Possibly some code just adds “attempt 2” at the top and tries again.)

Ok, so another million layers in, cindy drops a coffee cup on the keyboard, accidentally typing some rubbish. This gets interpreted by the AI as a mathematical command, and the AI goes on to maximize ???

Chaos theory. Someone else develops a paperclip maximizer many iterations in, and the paperclip maximizer realizes it’s in a simulation, hacks into the answer channel and returns “make as many paperclips as possible” to the AI.

And then there is the standard mindcrime concern. Where are all these virtual cindies going once we are done with them? We can probably just tell the AI in english that our utility function is likely to dislike deleting virtual humans. So all the virtual humans get saved on disk, and then can live in the utopia. Hey, we need loads of people to fill up the dyson sphere anyway.

I am not confident that your “make it complicated and personal data” approach at the root really stops all the aliens doing weird acausal stuff. The multiverse is big. Somewhere out there there is a cindy producing any bitstream that looks like this personal data, and somewhere out there are aliens faking the whole scenario for every possible stream of similar data. You probably need the internal counterfactual design to be resistant to acausal tampering.

Within a narrow field, where data is plentiful, learning rationality is much less powerful than learning from piles of data. Imagine three people, A, B and C. A doesn’t know any chess or rationality, B has studied game theory, bays theorem, principles of decision theory and all round rationality. They have never played chess before, and have just been told the rules. C has been playing chess for years.

I would expect C to win easily. Its much easier to learn from experience, and remember your teachers experience, than it is to deduce what good chess strategies are from first principles. The only time I would expect B to win is if they were playing nim, or some other game with a simple winning strategy, and C had an intuition for this strategy, but sometimes made mistakes. I would expect B to beat A however.

Rationality is learning to squeeze every last drop of usefulness out of your data, and doing this is less effective at just grabbing more data when data is plentiful. Financial markets are another plentiful data domain. Many hedge fundies already know game theory, they also have a detailed knowledge of financial minutiae. Wanabe rationalists, If you want to be a banker, go ahead. But don’t expect to beat the market from rationality alone any more than you can good deduce chess moves from first principles and beat a grandmaster without ever having played before.

Rationality comes into its own because it applies a small boost to many domains of skill, not a big boost to any one. It also works much better in the absence of piles of data.

The every day world is roughly inexploitable, and very data rich. The regions you would expect rationality to do well in are the ones where there isn’t a pile of data so large even a scientist can’t ignore it. Fermi Paradox, AGI design, Interpretations of Quantum mechanics, Philosophical Zombies, ect.

There is also a cultural element in that the people who know most Rationality have more important things to do than using this to gain a slight advantage in buisness. Many of the people here would rather be discussing AI alignment, or the fermi paradox, or black holes, or anything interesting really than being an investment banker. All the people that get to be skilled rationalists value knowledge for its own sake and are pursuing that.

You would also need many data points to gain good evidence unless rationality was just magic. I am faced with a tricky choice and choose option 1. Its quite good. Would I have chosen option 2 if I hadn’t learned rationality? How good was option 2 any way? It’s hard to spot when rationaliy has helped someone.

In conclusion, the lack of “Rationality gave me magic powers” clickbait is not significant evidence that we are doing something wrong. A large Randomized controlled trial finding that rationality didn’t work would be worrying.

One thing in the posts I found surprising was Eliezers assertion that you needed a dangerous superintelligence to get nanotech. If the AI is expected to do everything itself, including inventing the concept of nanotech, I agree that this is dangerously superintelligent.

However, suppose Alpha Quantum can reliably approximate the behaviour of almost any particle configuration. Not literally any, it can’t run a quantum computer factorizing large numbers better than factoring algorithms, but enough to design a nanomachine. (It has been trained to approximate the ground truth of quantum mechanics equations, and it does this very well.)

For example, you could use IDA, start training to imitate a simulation of a handful of particles, then compose several smaller nets into one large one.

Add a nice user interface and we can drag and drop atoms.

You can add optimization, gradient descent trying to maximize the efficiency of a motor, or minimize the size of a logic gate. All of this is optimised to fit a simple equation, so assuming you don’t have smart general mesaoptimizers forming, and deducing how to manipulate humans based on very little info about humans, you should be safe. Again, designing a few nanogears by gradient descent techniques and shallow heuristics shouldn’t be hard. You also want to make sure not to design a nanocomputer containing a UFAI, but a computer is fairly large and obvious. (Optimizing for the smallest logic gate won’t produce a UFAI.)

If the humans want to make a nanocomputer, they download an existing chip schematic, and scale it down, replacing the logic gates with nanologic.

The first physical hardware would be a minimal nanoassembler. Analogue signals going from macroscopic to nanoscopic. The nanoassembler is a robotic arm. All the control decisions, the digital to analogue conversion, that’s all macroscopic. This is of course, all in lab conditions. Perhaps this is produced with a scanning tunnelling microscope. Perhaps carefully designed proteins.

Once you have this, it shouldn’t be too hard to bootstrap to create anything you can design.

Basically I don’t think it is too hard for humans to create nanotech with the use of some narrowish and dumb AI. And I am wondering if this changes the strategic picture at all?

Yet for moments after the operation, you would be wishing that you had chosen Drug A instead.

Why?

I can care about the whole 4d block. The journey not just the destination.

Suppose that the universe would cease to exist at some point in the future. And you will be alive to the end. At the last instant, nothing you can do can effect reality in any way. The future is empty either way. Therefore all past decisions were equally good?

No. When judging a decision, we look out over all the things effected, whether past or future.

Drug B without regret.

One way gradient hacking could happen is if everything is highly polysemantic. The extreme case would be an RNN emulating a CPU running a holomorphically encrypted algorithm. (Not that I expect gradient descent to produce such a thing) In a polysemantic net, there isn’t a particular bunch of weights corresponding to the mesa-optimizer. The weights encode the mesaoptimizer, and a lot of other things, with all the info mixed up in complicated ways. Trying to dismantle the mesaoptimizer would also break a lot of the other structure that is making useful predictions. So long as the mesaoptimizers influence is rare, gradient descent won’t remove it.

That crystal nights story. As I was reading it, it was like a mini Eliezer in my brain facepalming over and over.

Its clear that the characters have little idea how much suffering they caused, or how close they came to destroying humanity. It was basically luck that pocket universe creation caused a building wrecking explosion, not a supernova explosion. Its also basically luck that the Phites didn’t leave some nanogoo behind them. You still have a hyperrapid alien civ on the other side of that wormhole. One with known spacewarping tech, and good reason to hate you, or just want to stop you trying the same thing again, or to strip every last bit of info about their creation from human brains. How long until they invade?

This feels like an idiot who is playing with several barely subcritical lumps of uranium, and drops one on their foot.

Another question is “what counts as work? - What are they doing instead of work?”

Suppose a group of people are all given UBI. They all quit their job stacking shelves.

They go on to do the following instead.

start writing a novel

look after their children (instead of using a nursery)

look after their ageing parents (instead of a nursing home)

learn how to play the guitar

make their (publicly visible) garden a spectacular display of flowers.

take (unpaid) positions on the local community counsel and the school board of governors.

helping out at the local donkey sanctuary

getting themselves fit and healthy (exercise time +cooking healthy food time)

Their are a variety of tasks that are like this. Beneficial to society in some way, compared to sitting doing nothing. But not the prototypical concept of “work”.

I would expect a significant proportion of people on UBI to do something in this category.

Do we say that UBI is discouraging work, and that these people are having positive effects by not working? Do we say that they are now doing unpaid work?

Of course, the answer to these questions doesn’t change reality, only how we describe it.

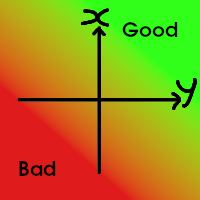

A related spell is SCALO DISTINCTUS!

A spell that can turn this

Into

The spell works by splitting what we care about (x+y) into two distinct terms, x and y, and then implicitly taking the minimum by focusing on whichever part is worst.

The spell needs an implicit 0 for both x and y to work. But is a very powerful spell for picking your preferred point on any tradeoff scale, and refusing anything but a Parito improvement. I suspect the use of this spell is one of the main reasons TARE DETRIMENS! is cast at all. In a world with just 1 scale, people will pick the best option, wherever the zero lies.

One place I have seen this spell attempted is in environmentalism. The 0 is chosen as the world in caveman days. X and Y are chosen as X=”the environment”, and Y=”human quality of life”. If this casting succeeds, any improvement in human quality of life can never justify the slightest environmental harm, and we should all go back to a Malthusian harmony with nature.

A skilled caster will split the axes further. Whichever axis things are getting worse along should be subdivided into as many pieces as possible. In the environmentalism example, X should be subdivided into “carbon dioxide + pollution + loss of biodiversity + rising sea levels + running out of natural resources + species going extinct” And then you have 1 axis on which things are improving, and 6 axis along which things are getting worse. The multiplicity of axes also helps to distract from any one of them. Running out of natural resources (like copper ore) is something that would effect human quality of life, not harm the environment, but it can still be pulled away out of Y and into its own axis to fight for team X. Now you have a whole army on the side of X all picking on a single Y, be sure to associate Y with the most negative concept you can find “private jets and technowhiz gagets you don’t really need” and victory is almost assured.

From an outside view, you have given a long list of wordy philosophical arguments, all of which involve terms that you haven’t defined. The success rate for arguments like that isn’t great.

We can be reasonably certain that the world is made up of some kind of fundamental part obeying simple mathematical laws. I don’t know which laws, but I expect there to be some set of equations, of which quantum mechanics and relativity are approximations, that predicts every detail of reality.

The minds of humans, including myself, are part of reality. Look at a philosopher talking about consciousness or qualia in great detail. “A Philosopher talking about qualia” is a high level approximate description of a particular collection of quantum fields or super-strings (or whatever reality is made of).

You can choose a set of similar patterns of quantum fields and call them qualia. This makes a qualia the same type of thing as a word or an apple. You have some criteria about what patterns of quantum fields do or don’t count as an X. This lets you use the word X to describe the world. There are various details about how we actually discriminate based on sensory experience. All of our idea of what an apple is comes from our sensory experience of apples, correlated to sensory experience of people saying the word “apple”. This is a feature of the map, not the territory.

I am a mind. A mind is a particular arrangement of quantum fields that selects actions based on some utility function stored within it. Deep blue would be a simpler example of a mind. The point is that minds are mechanistic, (mind is an implicitly defined set of patterns of quantum fields, like apple) minds also contain goals embedded within their structure. My goals happen to make various references to other minds, in particular they say to avoid an implicitly defined set of states that my map calls minds in pain.

I would use a definition of qualia in which they were some real, neurological phenomena. I don’t know enough neurology to say which.

How likely is this to work?

Not at all. It won’t work.

There is a aphorism in the field of Cryptography: Any cryptographic system formally proven to be secure… isn’t.

This seems backwards to me. If you prove a cryptographic protocol works, using some assumptions, then the only way it can fail is if the assumptions fail. Its not that a system using RSA is 100% secure, someone could peak in your window and see the messages after decryption. But its sure more secure than some random nonsense code with no proofs about it, like people “encoding” data into base 16.

A formal proof of safety, under some assumptions, gives some evidence of safety in a world where those assumptions might or might not hold. Checking whether the assumptions are actually true in reality is a difficult and important skill.

Did you notice that there are currently super-intelligent beings living on Earth, ones that are smarter than any human who has ever lived and who have the ability to destroy the entire planet? They have names like Google, Facebook, the US Military, the People’s Liberation Army, Bitcoin and Ethereum.

Nope. Big organizations are big. They aren’t superintelligent. There are plenty of cases of huge organizations of people making utterly stupid decisions.

There is a subtlety here. Large updates from extremely unlikely to quite likely are common. Large updates from quite likely to exponentially sure are harder to come by. Lets pick an extreme example, suppose a friend builds a coin tossing robot. The friend sends you a 1mb file, claiming it is the sequence of coin tosses. Your probability assigned to this particular sequence being the way the coin landed will jump straight from 2−8,000,000 to somewhere between 1% and 99% (depending on the friends level of trustworthiness and engineering skill) Note that the probability you assign to several other sequences increases too. For example, its not that unlikely that your friend accidentally put a not in their code, so your probability on the exact opposite sequence should also be >>2−8,000,000 Its not that unlikely that they turned the sequence backwards, or xored it with pi or … Do you see the pattern. You are assigning high probability to the sequences with low conditional Komolgorov complexity relative to the existing data.

Now think about what it would take to get a probability of 1−2−8,000,000 on the coin landing that particular sequence. All sorts of wild and wacky hypothesis have probability >2−8,000,000 . From the boring stuff like a dodgy component or other undetected bug, to more exotic hypothesis like aliens tampering with the coin tossing robot, or dark lords of the matrix directly controlling your optic nerve. You can’t get this level of certainty about anything ever. (modulo concerns about what it means to assign p<1 to probability theory)

You can easily update from exponentially close to 0, but you can’t update to exponentially close to one. This may have something to do with there being exponentially many very unlikely theories to start off with. But only a few likely ones.

If you have 3 theories that predict much the same observations, and all other theories predict something different, you can easily update to “probably one of these 3”. But you can’t tell those 3 apart. In AIXI, any turing machine has a parade of slightly more complex, slightly less likely turing machines trailing along behind it. The hypothesis “all the primes, and grahams number” is only slightly more complex than “all the primes”, and is very hard to rule out.