The Anti-Singularity

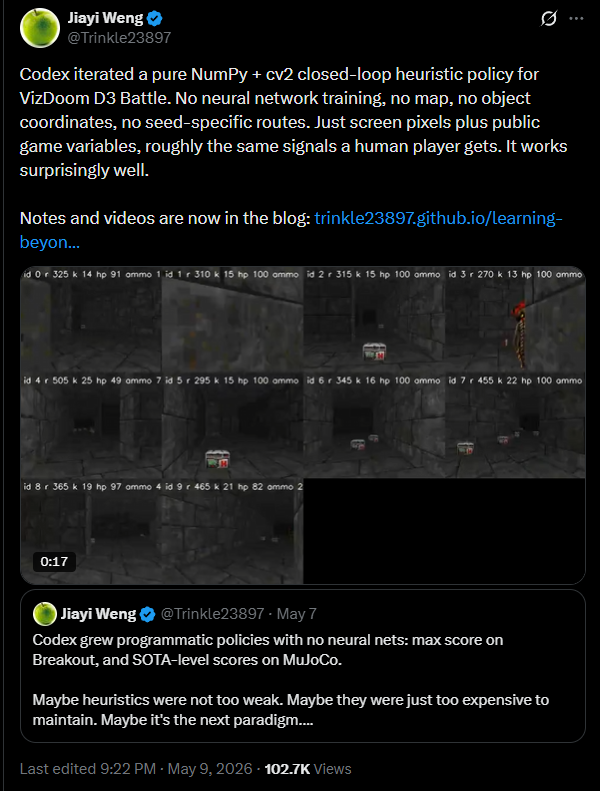

Heuristic solution to Doom generated by GPT-5.4

In his blog post, Jiayi Weng proposes “the next paradigm” for Machine Learning: rather than trying to find beautiful abstractions for general-purpose learning, we simply take advantage of LLMs’ ability to tirelessly iterate on complex designs to build heuristics that can solve whatever task we happen to be dealing with.

I do not know whether this next paradigm is the future (indeed, I hope not), but I think it is at least worth considering the ramifications if it is.

The Singularity

If you are reading this post, you are undoubtedly already familiar with the concept of the Singularity.

As computers become better at learning, they eventually reach a level known as General Purpose AI (GAI) where they are able to perform all of the intellectual tasks that humans can do, but faster and cheaper. This leads to Recursive Self-Improvement (RSI) where the AI improves itself, since one of the things humans are able to do is build GAI. Eventually RSI leads to Super-Intelligent AI (SAI), a single AI with godlike powers able to solve any conceivable problem and to truly defeat Moloch, ushering in an age of unprecedented wealth and prosperity: a sort of golden age for Humankind where, having completed our last invention, we can finally relax and enjoy the fruits of our labors.

While believers in the Singularity will frequently warn of its perils—if we don’t seed the SAI correctly it may turn us all into paperclips—belief in the Singularity is fundamentally a Utopian vision. Even a world turned into paperclips is perfectly turned into paperclips. Intelligence is a single, measurable concept and once it reaches its final form all will be arrayed beneath its command.

The Anti-Singularity

The anti-singularity exists in a future where the utopian visions of singularity theorists do not merely end badly (as with a paperclip maximizer) but where they cannot come to pass because they are based on shaky philosophical foundations.

In the anti-singularity, there is no such thing as a General Purpose Intelligence. Or, more precisely, the only GAI that is possible is the blind watchmaker of Darwin. In the world of the anti-singularity there is no deeper underlying theory of intelligence. Things just happen because they happen. The optimal form for surviving on Earth circa 1 mya, by some amount of luck, also happens to be able to make transistors and rocket ships, but it never reaches beyond that to the true depths of cosmic knowledge.

We don’t need to wonder what a world of anti-singularity will look like. We already have two examples at hand: biology and discrete mathematics.

Biology

Biology is a notoriously difficult field to work in. Despite the fact that we have a readily available corpus to learn from (all of nature) and gobsmackingly huge amounts of wealth available for whoever claims the prize (self-replicating solar farms, immortality, computation too cheap to meter), progress in the field has been slow and uneven.

The field of biology continues to be dominated by high-stakes trial-and-error

In a world where AI startups are now worth trillions of dollars, comparable biology startups are barely a blip on the radar. Drug trials can easily end in billion-dollar failures. We have yet to replicate even the simplest organisms digitally. The reason is that computers were designed by humans to be easy to understand and control. Biology, by contrast, is the result of billions of years of purposeless evolution. This slow accumulation of “what works” via tinkering results in systems that are incredibly resistant to the methods of modern science.

This is not to say there has been no progress (we have made great strides) but progress is hard-won, piece-by-piece and rarely generalizes.

Discrete Mathematics

Rule 30 is an example of a simple system with complex behavior

Nearly half a century ago, Stephen Wolfram described an astonishing phenomenon. Many of the patterns that we see in nature are the product of neither intentional design nor mere optimization. Rather, these patterns arise at a fundamental level from simple rules in the world of discrete mathematics. Wolfram predicted that this discovery would usher in a New Kind of Science. By understanding the basic patterns underlying all of nature, Wolfram claimed, we would be able to reduce all of science to computation based on a simple set of rules.

Simple rules give rise to computational irreducibility

Unfortunately, Wolfram’s views have yet to have the impact on science that he anticipated. Physics has not yet been reduced to a simple set of rules. More concerningly, for most questions of the type Wolfram is interested in, there is no efficient general-purpose solution. This is due to a phenomenon called computational irreducibility: as far as anyone knows, the only way to find out what such a system does after many steps is to actually run it for that many steps.
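To make this concrete, here is a minimal sketch of Rule 30 in Python. The function names (`rule30_step`, `run_rule30`) and the choice to treat cells beyond the edges as 0 are my own assumptions for illustration, not anything taken from Wolfram's writing. The whole update rule fits in one line (new cell = left XOR (center OR right)), yet no shortcut is known for predicting a distant row other than computing every row before it.

```python
def rule30_step(cells):
    """Apply one step of Rule 30 to a list of 0/1 cells.

    Assumption for this sketch: cells beyond the edges count as 0.
    """
    padded = [0] + cells + [0]
    # Rule 30: new cell = left XOR (center OR right)
    return [
        padded[i - 1] ^ (padded[i] | padded[i + 1])
        for i in range(1, len(padded) - 1)
    ]


def run_rule30(width=31, steps=15):
    """Start from a single live cell in the middle and return every row."""
    row = [0] * width
    row[width // 2] = 1
    rows = [row]
    for _ in range(steps):
        row = rule30_step(row)
        rows.append(row)
    return rows


if __name__ == "__main__":
    for row in run_rule30():
        print("".join("#" if c else "." for c in row))
```

Running the script prints the familiar chaotic triangle. Note that the only way this code "knows" what row 15 looks like is by computing rows 1 through 14 first, which is the trial-and-error situation in miniature.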

As with biology, there are simply too many details with no overarching structure and so the best we can do is simple trial-and-error to see what happens.

So what?

Suppose we live not in the world of the Singularity—where a single SAI brings order to the universe—but the world of the Anti-Singularity—where almost all systems are described by a complex set of interactions that can only be understood via trial-and-error. What does this mean?

It does not mean that AI will not be powerful. Indeed, if the best we can do is to try many possibilities, then the fact that AI can try millions of possibilities in the time it takes for a human to try one will make it exceedingly powerful.

It does mean that the shape of the AI-Alignment problem is quite different. Rather than building a single SAI and trusting our future—good or bad—to it, we instead will find ourselves tending to a diverse garden of different AIs, each optimized to a different environment. In this future, Humanity does not simply build a Last Invention and then enjoy a golden retirement. Rather, the future is filled with an endless series of new and unique challenges that we must adapt—and dare I say evolve—in response to.

The problem becomes less “we chose the wrong optimization function and now the SAI turned the whole universe into paperclips” and more “Agent58adc9862bd08b56284eadb6bede52c1a033b03306b4333105b07435e55b7339 is producing an anomalously high number of paperclips, somebody needs to go down and figure out what went wrong.” Humans become gardeners atop a new wild ecosystem of heuristic optimizers. These heuristic optimizers are less dangerous (because they are adapted to a particular local set of circumstances) but less predictable (because computational irreducibility says there’s no simple explanation of what goes wrong).

How Likely is this to happen?

I don’t know.

Personally, I am optimistic. I think p(good singularity) > p(anti-singularity) > p(bad singularity).

What do you think?

I’m worried, what should I do about this?

The good news is: there’s nothing you can do. Whether we live in the world of the Singularity or the Anti-Singularity is a fact about the base reality in which we live, not something that can be influenced by human actions.

There may be things that you can do to prepare yourself in case the anti-singularity comes to pass. For example, strategies like “I should spend all of my money before the singularity because, good or bad, money won’t matter after the singularity” might not apply in that world. By contrast, the unique set of heuristics that humans have accumulated via 3.5 billion years of evolution may prove more valuable. In the world of the anti-singularity, diversity, robustness and adaptability matter more than getting it right the first time.

Questions? Comments?

Questions I particularly would like answered: What metrics can we use to tell us ahead of time whether we are in the world of the Singularity or Anti-Singularity? What actions are beneficial in both futures? Only in one of them? Are there any AI-alignment techniques that are obviously applicable in one future but not the other? What does all of this have to do with the current mess in mathematics?

Why not both? We can have a general ASI and a world full of narrow heuristic intelligences used for specific purposes.

You’re asking: what if ASI and general intelligence aren’t possible? It’s looking like they almost certainly are. We are general intelligences. So is the AI that coded those heuristics for Doom. We’d have to hit a brick wall almost immediately now to avoid hitting human-level AGI. It’s not guaranteed, but you’d need to start with a much stronger argument for this to garner my interest.

I realize this isn’t a very complete argument. But it does seem like it’s getting more absurd to say things like “AI can do all the types of thinking I can, but it’s not as good as I am at some of them, so human-level AI might be impossible!” It’s technically true, but it seems increasingly improbable that the limit lies just between us and current systems.

I think maybe you missed the point? I’m not saying human-level intelligence isn’t possible (obviously it is). In the world of the anti-singularity Human Intelligence isn’t “General” intelligence (because there’s no such thing) and SAI is impossible.

I don’t understand why the previous sections in the post imply that. Here’s my understanding of the argument:

Intelligence is largely about understanding patterns that allow you to simplify underlying domains. However, many domains we care about have a tremendous amount of irreducible complexity—biological organisms evolved via a pseudorandom process, there’s no reason to expect them to obey clean laws of abstraction. There is no unified theory of biology because there is no simple set of rules that lead to emergent complexity. It’s just tons of weird stuff built on top of each other all the way down. Therefore, an ASI couldn’t just understand all of biology and then one-shot an immortality drug. Creating an immortality drug would necessarily involve a great deal of trial and error—an optimal approach might look something like a random sampling across a very large space of possibilities, which occasionally hits on something that lets you narrow down in the possibility space.

Thus, we can’t have powerful AI, and we won’t have a single ASI controlling everything. We’ll have narrow AIs optimised for different things instead, and humans will be the gardeners of these optimisers.

===

But why on earth does the second paragraph follow from the first? What’s stopping an ASI from figuring out the best way to navigate these heuristics and applying them itself, the same way humans manage to do both biology and discrete mathematics without having separate bio-humans and math-humans? If the best strategy to solve science is to have many different narrow AIs, the ASI is the thing that can create those narrow AIs. Why exactly is it that humans are the ones who “become gardeners atop a wild ecosystem of heuristic optimizers” when your own example of Doom from the start was about an AI doing this right now?

It seems like the “anti-singularity” just means “We have an ASI that can do everything, except the ASI also needs to invest an enormous amount of labor into solving computationally intractable domains. It will only be mildly more sample-efficient than humans and largely have to rely on being able to think faster and work 24/7 to have an advantage over people in these areas.” So, we don’t get an AI bootstrapping itself into godhood, but we still become obsolete eventually.

But that’s not what you seem to be saying. You seem to go straight from “Domains like biology have a large amount of computational irreducibility” to “Therefore, humans will always remain at the highest level of abstraction.” Why? An ASI doesn’t have to find a unified theory of biology to make us essentially obsolete. Seems like an anti-singularity just gets us to the same place slower.

I think this is an interesting way to frame this idea. Intelligent behaviour/learning in this frame is basically studying classes of particular phenomena closely as well as learning the general skill to simulate computational systems—but not in a way that leads to total dominance over all fields. In other words, just because you know how to operate a Turing machine does not automatically give you a clean and easy way to master writing quines, even if they are technically both “computational” activities.

Can we have a single high-level AI bureaucracy which regulates the creation and behavior of specialized task-specific AIs? From an external view it will look like a single AGI with some set of goals.

In the world of the anti-singularity the AI bureaucracy has all of the same problems modern bureaucracy has. It is confused, error-prone, has conflicting goals at various layers of management and is dramatically less efficient than a swarm of simpler heuristic agents acting on local information and trading information via markets.

And they will be Moloch eating human resources.