Nice connection! I’d totally overlooked this.

ParrotRobot

A simple “null hypothesis” mechanism for the steady exponential rise in METR task horizon: shorter-horizon failure modes outcompete longer-horizon failure modes for researcher attention.

That is, with each model release, researchers solve the biggest failure modes of the previous system. But longer-horizon failure modes are inherently rarer, so it is not rational to focus on them until shorter-horizon failure modes are fixed. If the distribution of horizon lengths of failures is steady, and every model release fixes the X% most common failures, you will see steady exponential progress.

It’s interesting to speculate about how the recent possible acceleration in progress could be explained under this framework. A simple formal model:

There is a sequence of error types e_1, e_2, e_3, etc.

The first s error types have already been solved, such that errorrate(e_1) = … = errorrate(e_s) = 0. The long tail of errors e_{s+1}, e_{s+2}, etc. has error frequencies decaying exponentially.

With each model release (t → t+1), researchers can afford to fix n error types.

METR time horizon is inversely proportional to the total error rate sum(errorrate(e_i) for all i)

Under this model, there are only two ways progress can speed up: the distribution becomes shorter-tailed (maybe AI systems have become inherently better at generalizing, such that solving the most frequent failure modes now generalizes to many more failures), or the time it takes to fix a failure mode has decreased (perhaps because RLVR offers a more systematic way to solve any reliably measurable failure mode).
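A minimal sketch of this model in Python, in case it helps to play with the numbers. The specific form errorrate(e_i) = decay^i, the proportionality constant `k`, and all function names are my own assumptions for illustration; the model above only says the tail decays exponentially.

```python
def total_error_rate(solved: int, decay: float) -> float:
    """Sum of errorrate(e_i) over the unsolved error types i > solved.

    Assumption: errorrate(e_i) = decay**i with 0 < decay < 1, so the sum
    is a geometric series with a closed form.
    """
    return decay ** (solved + 1) / (1.0 - decay)


def horizon(solved: int, decay: float, k: float = 1.0) -> float:
    """Time horizon, taken to be inversely proportional to total error rate."""
    return k / total_error_rate(solved, decay)


def simulate(releases: int, n_fixed: int, decay: float, solved0: int = 0) -> list:
    """Horizon at each release when every release fixes n_fixed more error types."""
    horizons = []
    solved = solved0
    for _ in range(releases + 1):
        horizons.append(horizon(solved, decay))
        solved += n_fixed
    return horizons


if __name__ == "__main__":
    hs = simulate(releases=5, n_fixed=3, decay=0.8)
    # Ratio of consecutive horizons; constant under these assumptions.
    print([b / a for a, b in zip(hs, hs[1:])])
```

Under these assumptions the horizon multiplies by decay^(-n_fixed) at every release, which is the steady exponential. The two speed-up channels correspond to the two knobs: a smaller `decay` (shorter tail) or a larger `n_fixed` (more failure modes fixed per release).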

(Based on a tweet I posted a few weeks ago)

A concrete suggestion for economists who want to avoid bad intuitions about AI but find themselves cringing at technologists’ beliefs about economics: learn about economic history.

It’s a powerful way to broaden one’s field of view with regard to what economic structures are possible, and the findings do not depend on speculation about the future, or taking Silicon Valley people seriously at all.

I tried my hand at this in this post, but I’m not an economist. A serious economist or economic historian can do much better.

Edited!

This is a consequence of decreasing returns to scale! Without decreasing returns to scale, humans could buy some small territory before their labor is obsolete, and they could run a non-automated economy just on that small territory, and the fact that the territory is small would be no problem, since there are no decreasing returns to scale.

Wow, I didn’t read. Their argument does make sense. And it’s intuitive. Arguably this is sort of happening with AI datacenter investment, where companies like Microsoft are reallocating their limited cash flow away from employees (i.e., laying people off) so they can afford to build AI data centers.

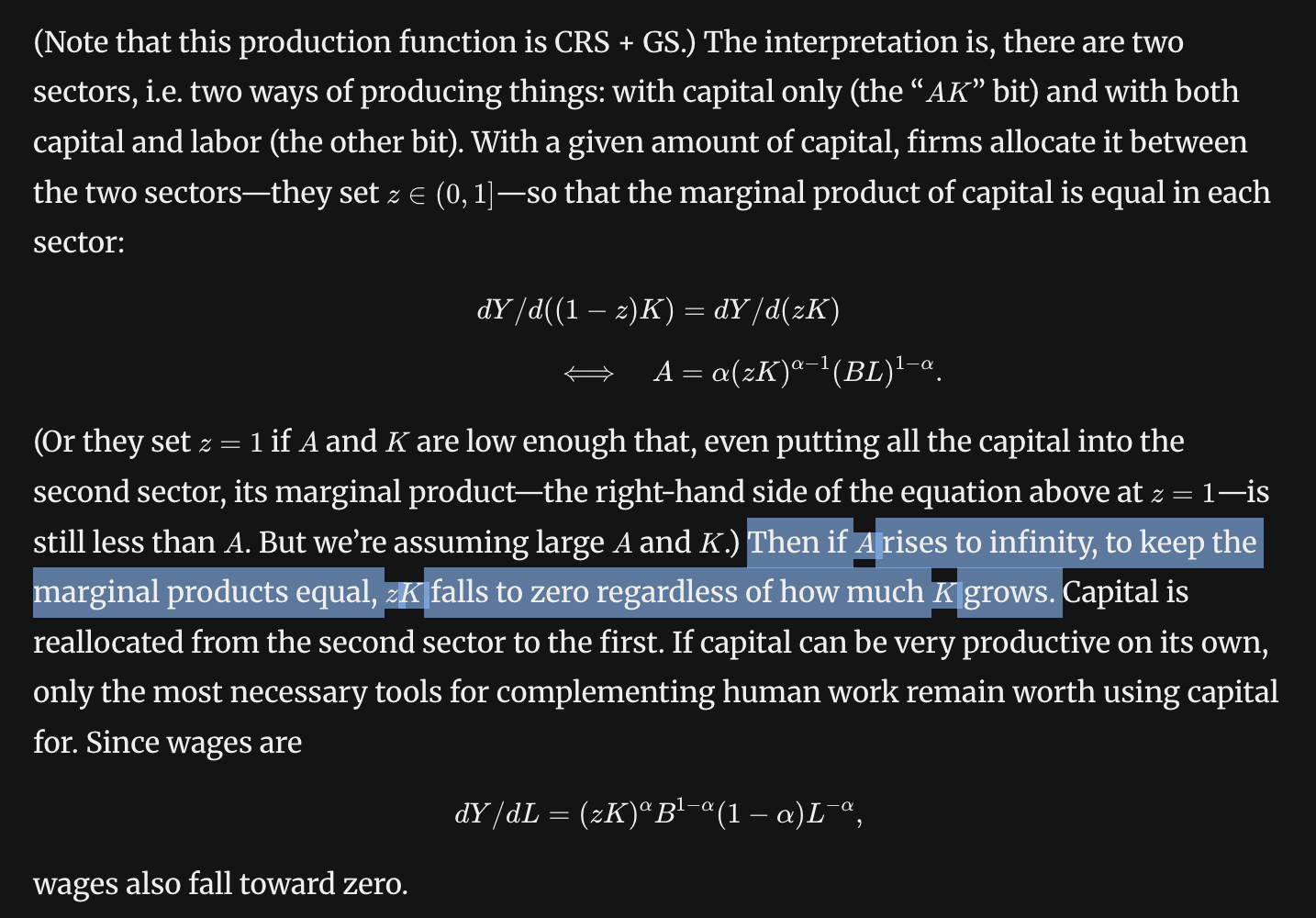

A funny thing about their example is that labor would be far better off if they “walled themselves off” in autarky. In their example, wages fall to zero because of capital flight: because there is an “AK sector” that can absorb an infinite amount of capital at high returns, it is impossible to invest in labor-complementary capital unless wages are zero. So my intuition that humans could “always just leave the automated society” still applies; their example just rules it out by assumption.

Here’s a random list of economic concepts that I wish tech people were more familiar with. I’ll focus on concepts, not findings, and on intuition rather than exposition.

The semi-endogenous growth model: There is a tug of war between diminishing returns to R&D and growth in R&D capacity from economic growth. For the rate of progress to even stay the same, R&D capacity must continually grow.

Domar aggregation: With few assumptions, overall productivity growth depends on sector-specific productivity growth in proportion to sectors’ revenues. If a sector is 11% of GDP, the economy is “11% bottlenecked” on it.

Why wages increase exponentially with education level: This is empirically observed to be roughly true (the Mincer equation), but why? A simple explanation: the opportunity cost of education is proportional to the wage you can earn with your current level of education. So to be worthwhile, obtaining one more year of education needs to increase your wage by a certain percentage, no matter your current level of education. Each year of education earning people 10% more will look like an exponential.

This is basically “P = MC”, but applied to human capital.

Automation only decreases wages if the economy becomes “decreasing returns to scale”. This post has a good explanation. Intuition: if humans don’t have to compete with automated actors for things that humans can’t produce (e.g., land or energy), humans could always just leave the automated society and build a 2025-like economy somewhere else.
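Two of the concepts in this list (Domar aggregation and the Mincer-style wage curve) reduce to one-line arithmetic, so here is a rough sketch. The function names and the example numbers are hypothetical, chosen only to match the 11% and 10% figures used above.

```python
def domar_aggregate_growth(sectors):
    """Aggregate productivity growth as a revenue-weighted sum.

    sectors: iterable of (sales_as_share_of_gdp, sector_productivity_growth).
    Each sector contributes in proportion to its revenue relative to GDP.
    """
    return sum(share * growth for share, growth in sectors)


def mincer_wage(base_wage, years_of_education, return_per_year=0.10):
    """Wage when each extra year of education raises the wage by a fixed percentage."""
    return base_wage * (1.0 + return_per_year) ** years_of_education


if __name__ == "__main__":
    # A sector worth 11% of GDP whose productivity grows 10% adds about
    # 1.1 percentage points to aggregate productivity growth.
    print(domar_aggregate_growth([(0.11, 0.10)]))
    # A constant percentage return per year compounds into an exponential.
    print([round(mincer_wage(100.0, y), 2) for y in range(5)])
```

The wage list grows by the same factor at every step, which is what “exponential in education” means here.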

Is it valuable for tech & AI people to try to learn economics? I very much enjoy doing so, but it certainly hasn’t led to direct benefits or directly relevant contributions. So what is the point? (I think there is a point.)

It’s good to know enough to not be tempted to jump to conclusions about AI impact. I’m a big fan of the kind of arguments that the Epoch & Mechanize founders post on Twitter. A quick “wait, really?” check can dispel assumptions that AI must immediately have a huge impact, or conversely that AI can’t have an unprecedentedly fast impact. This is good for sounding smart. But not directly useful (unless I’m talking to someone who is confused).

I also feel like economic knowledge helps give meaning to the things I personally work on. The most basic version of this is when I familiarize myself with quantitative metrics of impact of past technologies (“comparables”), and try to keep up with how the stuff I work on tracks. I think it’s the same joy that some people get by watching sports and trying to quantify how players and teams are performing.

In that world, I think people wouldn’t say “we have AGI”, right? Since it would be obvious to them that most of what humans do (what they do at that time, which is what they know about) is not yet doable by AI.

Your preferred definition would leave the term AGI open to a scenario where 50% of current tasks get automated gradually using technology similar to current technology (i.e., normal economic growth). It wouldn’t feel like “AGI arrived”, it would feel like “people gradually built more and more software over 50 years that could do more and more stuff”.

I’m worried that the recent AI exposure versus jobs papers (1, 2) are still quite a distance from an ideal identification strategy of finding “profession twins” that differ only in their exposure to AI. Occupations that are differently exposed to AI are different in countless other ways that are correlated with the impact of other recent macroeconomic shocks.

I was reminded of OpenAI’s definition of AGI, a technology that can “outperform humans at most economically valuable work”, and it triggered a pet peeve of mine: It’s tricky to define what it means for something to make up some percentage of “all work”, and OpenAI was painfully vague about what they meant.

More than 50% of people in England used to work in agriculture, and now it’s far less. But if you said that farming machines “can outperform humans at most economically valuable work” it just wouldn’t make sense. Farming machines obviously don’t perform 50% of current economic value.

The most natural definition, in my opinion, is that even after the economy adjusts, AI would still be able to perform >50% of the economically valuable work. Labor income would make up <<50% of GDP, and AI income would make up >50% of GDP. In a simple Acemoglu/Autor model of the economy with a continuum of tasks, this corresponds to AI doing more than 50% of the tasks.

I don’t think OpenAI’s definition is wrong, exactly, since it does have a reasonably natural interpretation. But I really wish they’d been more clear.

Does a need for broad automation really place a speed limit on economic growth?

I’ve been trying to better understand the assumptions behind people’s differing predictions of economic growth from AI, and what we can monitor — investment? employment? interest rates? — to narrow down what is actually happening.

I’m not an economist; I am an engineer who implements AI systems. I want to understand the potential impact of AI because it’s going to matter for my own career and for everyone I know.

In the spirit of “learning in public”, I’ll share what I’ve learned (which is a little) and what’s not making sense to me (which is a lot).

In Ege Erdil’s recent case for multi-decade AI timelines, he gives the following intuition for why a “software-only singularity” is unlikely:

The case for AI revenue growth not slowing down at all, or perhaps even accelerating, rests on the feedback loops that would be enabled by human-level or superhuman AI systems: short timelines advocates usually emphasize software R&D feedbacks more, while I think the relevant feedback loops are more based on broad automation and reinvestment of output into capital accumulation, chip production, productivity improvements, et cetera.

The implicit assumption is that chip production, energy buildouts, and general physical capital accumulation can only go so fast.

Certainly, today’s physical capital stock took a long time to accumulate. With today’s technology, it’s not feasible to scale physical capital formation by 10x, or to 10x the capital stock in 10 years; it would simply be far too expensive.

But economics does not place any theoretical limit on the productivity of new vintages of capital goods. If tomorrow’s technology was far more effective at producing capital goods, physical capital could grow at an unprecedented rate.

(In frontier economies today, the speed of physical capital growth is well below historical records. For reference, South Korea’s physical capital stock grew at ~13.2% per year at the peak of its growth miracle. And from 2004 to 2007, China’s electricity production grew at an average of ~14.2% per year.)
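To put the 10x-in-10-years figure next to the historical records quoted above, here is a small compound-growth sketch. The function names are mine; the only inputs are the growth rates already mentioned in the text.

```python
import math


def required_annual_growth(multiple: float, years: float) -> float:
    """Constant annual growth rate needed to multiply a stock by `multiple` in `years`."""
    return multiple ** (1.0 / years) - 1.0


def years_to_multiply(multiple: float, annual_growth: float) -> float:
    """Years needed to multiply a stock by `multiple` at a constant annual growth rate."""
    return math.log(multiple) / math.log(1.0 + annual_growth)


if __name__ == "__main__":
    # Growth rate implied by 10x-ing the capital stock in 10 years.
    print(required_annual_growth(10, 10))
    # Time to 10x at South Korea's peak capital growth (~13.2% per year).
    print(years_to_multiply(10, 0.132))
    # Time to 10x at China's 2004-2007 electricity growth (~14.2% per year).
    print(years_to_multiply(10, 0.142))
```

A 10x in 10 years needs roughly 26% per year, about double the record rates cited, so it is outside historical experience but not by orders of magnitude.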

Today’s physical capital has two unintuitive properties that create a potential for it to be produced at much lower cost, despite the intuition that “physical = slow to build”.

As income increases, consumption is “dematerialized”. Spending on physical capital increasingly goes toward high-value-to-weight items, such as semiconductors and medical devices. Explosive economic growth may not mainly be about building, say, 10 houses and airplanes per human (how would this even get used?) but rather building increasingly elaborate hospitals, medical equipment, and so on.

The barrier to creating these goods may lie more with R&D and design and coordination, rather than physical throughput limits. Some physical work is still required, but physical throughput is not necessarily the bottleneck.

High-value physical capital embeds a great deal of skilled labor. Capital equipment is produced using factors of production that can be recursively attributed to non-capital inputs — labor, energy, and natural resources. This is reflected, for instance, in BEA’s industry-level “KLEMS” (capital, labor, energy, materials, and services) accounts. The supply chain of a complex capital good generally has skilled labor as a major, or even dominant, portion of the value added in its production.

Example: MRI machines. Medical MRI machines are very expensive, often costing more than $1 million per unit. I asked o3 to recursively break down the cost; I’ll keep the inferences high-level, since I don’t trust o3’s precise claims. Essentially, a large portion of the cost of an MRI machine arises because the superconducting magnet requires a great deal of specialized equipment to make, and that equipment is complex and produced at low volume. Low-volume capital goods are expensive because they embody considerable skilled labor amortized over few units.

Physical constraints on the replication rate of capital goods do exist, but in at least one major case are far from binding. Solar panels, for example, require ~1 year to pay back their energy cost. If solar panels were the only source of electricity, GDP could not grow at >100% per year while remaining equally electricity intensive. But this is far, far, above any predicted rate of economic growth, even “explosive” growth, which Davidson and Erdil and Besiroglu define to start at 30% per year.
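A crude sketch of the solar bound, under assumptions I am adding for illustration: the panel stock only grows by spending panel-generated electricity on new panels, and output is reinvested once per year with no within-year compounding (continuous reinvestment would give a higher ceiling). The function name and the `reinvested_fraction` parameter are hypothetical.

```python
EXPLOSIVE_GROWTH_THRESHOLD = 0.30  # the 30% per year definition cited above


def max_growth_from_energy_payback(payback_years: float,
                                   reinvested_fraction: float = 1.0) -> float:
    """Rough ceiling on annual growth of the panel stock.

    Each panel produces the energy needed to build 1/payback_years new
    panels per year; only `reinvested_fraction` of that energy is assumed
    to go into building panels.
    """
    return reinvested_fraction / payback_years


if __name__ == "__main__":
    ceiling = max_growth_from_energy_payback(payback_years=1.0)
    print(ceiling, ceiling > EXPLOSIVE_GROWTH_THRESHOLD)
```

With a ~1 year payback the ceiling is about 100% per year, well above the 30% threshold, so in this toy accounting the energy payback constraint does not bind.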

I see a strong possibility that if human-level skilled labor were free, the capital stock could grow at an unprecedented rate.

Hmm, the math isn’t rendering. Here is a rendered version:

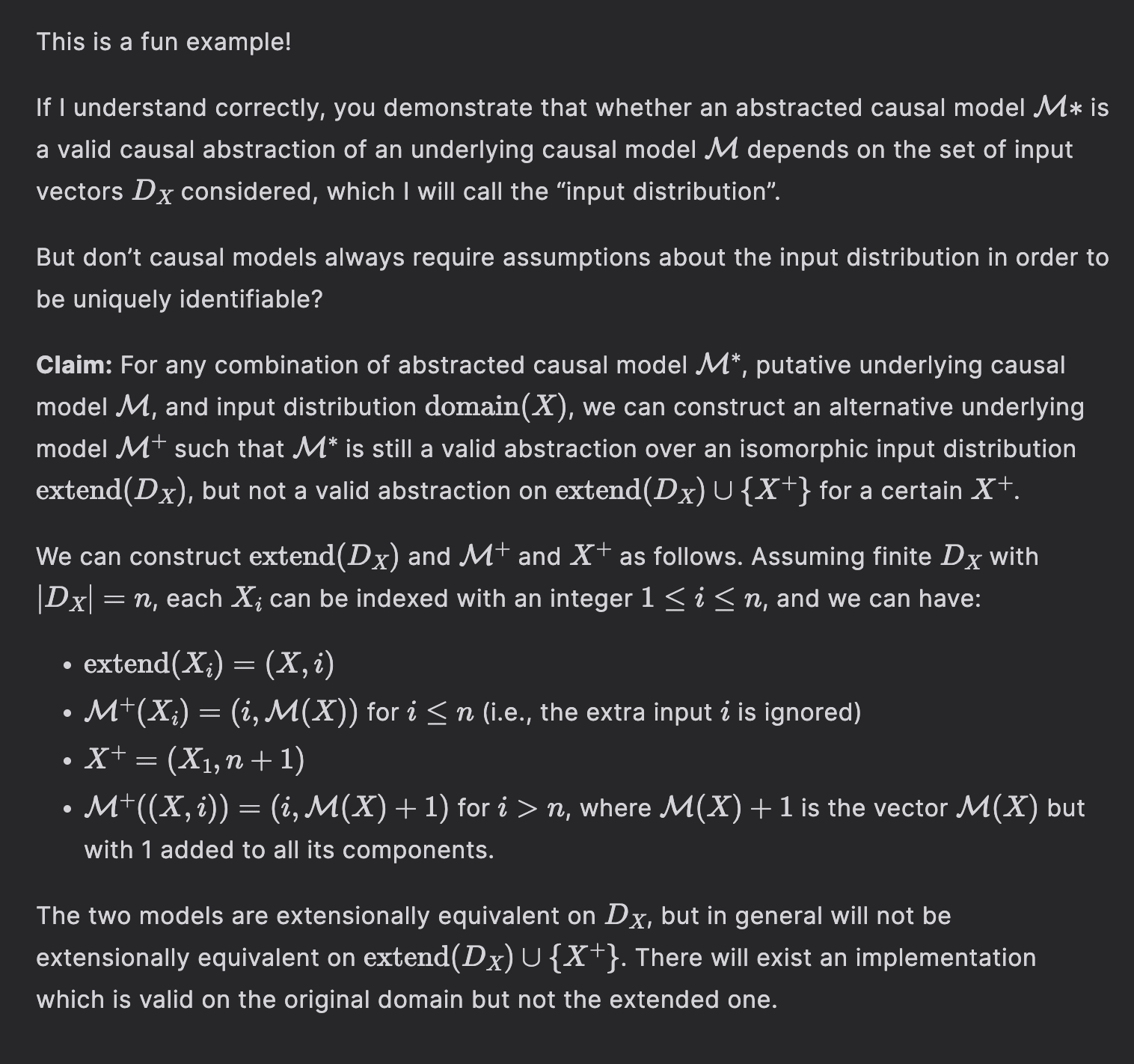

This is a fun example!

If I understand correctly, you demonstrate that whether an abstracted causal model $\mathcal{M}^*$ is a valid causal abstraction of an underlying causal model $\mathcal{M}$ depends on the set of input vectors $D_X$ considered, which I will call the “input distribution”.

But don’t causal models always require assumptions about the input distribution in order to be uniquely identifiable?

**Claim:** For any combination of abstracted causal model $\mathcal{M}^*$, putative underlying causal model $\mathcal{M}$, and input distribution $D_X$, we can construct an alternative underlying model $\mathcal{M}^+$ such that $\mathcal{M}^*$ is still a valid abstraction over an isomorphic input distribution $\mathrm{extend}(D_X)$, but not a valid abstraction on $\mathrm{extend}(D_X) \cup \{X^{+}\}$ for a certain $X^+$.

We can construct $\mathrm{extend}(D_X)$ and $\mathcal{M}^+$ and $X^+$ as follows. Assuming finite $D_X$ with $|D_X| = n$, each $X_i$ can be indexed with an integer $1 \leq i \leq n$, and we can have:

- $\mathrm{extend}(X_i) = (X_i, i)$

- $\mathcal{M}^+((X, i)) = (i, \mathcal{M}(X))$ for $i \leq n$ (i.e., the extra input $i$ is passed through and otherwise ignored)

- $X^+ = (X_1, n+1)$

- $\mathcal{M}^+((X, i)) = (i, \mathcal{M}(X) + 1)$ for $i > n$, where $\mathcal{M}(X) + 1$ is the vector $\mathcal{M}(X)$ but with 1 added to all its components.

The two models are extensionally equivalent on $\mathrm{extend}(D_X)$, but in general will not be extensionally equivalent on $\mathrm{extend}(D_X) \cup \{X^{+}\}$. There will exist an implementation which is valid on the original domain but not the extended one.
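The construction can be sketched in Python as follows. This only checks extensional agreement of outputs, not the full causal-abstraction condition, and `toy_model`, `make_extension`, and the example domain are hypothetical stand-ins rather than anything from the original post.

```python
from typing import Callable, Sequence, Tuple

Vector = Tuple[float, ...]


def make_extension(base_model: Callable[[Vector], Vector],
                   domain: Sequence[Vector]):
    """Build extend, M_plus and X_plus for a finite input domain D_X."""
    n = len(domain)

    def extend(index: int) -> Tuple[Vector, int]:
        # Tag the index-th input (1-based) with its own index.
        return (domain[index - 1], index)

    def model_plus(tagged: Tuple[Vector, int]) -> Tuple[int, Vector]:
        x, i = tagged
        out = base_model(x)
        if i <= n:
            # On the extended original domain the tag does not affect the output.
            return (i, out)
        # Off that domain, add 1 to every component of the output.
        return (i, tuple(v + 1 for v in out))

    x_plus = (domain[0], n + 1)
    return extend, model_plus, x_plus


def toy_model(x: Vector) -> Vector:
    """Hypothetical underlying model: a single output equal to the sum of inputs."""
    return (sum(x),)


if __name__ == "__main__":
    domain = [(0.0, 1.0), (2.0, 3.0)]
    extend, model_plus, x_plus = make_extension(toy_model, domain)
    for i in range(1, len(domain) + 1):
        print(model_plus(extend(i))[1] == toy_model(domain[i - 1]))
    print(model_plus(x_plus)[1], toy_model(domain[0]))
```

The per-input checks agree on every tagged input from the original domain, while the outputs differ on `x_plus`, which is the sense in which agreement on $D_X$ alone cannot pin down the underlying model.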

I did not appreciate until now how unique Less Wrong is as a community blogging platform.

It’s long-form and high-effort with high discoverability of new users’ content. It preserves the best features of the old-school Internet forums.

Substack isn’t nearly as tailored for people who are sharing passing thoughts.

My fear of equilibrium

Carlsmith’s series of posts does much to explore what it means to be in a position to shape future values, and how to do so in a way that is “humanist” rather than “tyrannical”. His color concepts have really stuck with me; I will be thinking in terms of green, black, etc. for a long time. But on the central question addressed in this post (how we should influence the future), there is a key assumption treated as given by both Lewis and Carlsmith that I found difficult to suspend disbelief for, given how I usually think about the future.

I understand Carlsmith’s key idea (as it is expressed in this post, ignoring the series as a whole) to be as follows:

Lewis worries that we will “condition” the values of future people in a tyrannical way.

But it is not really tyrannical to influence future values as long as an appropriate degree of care is taken.

I will call this kind of Carlsmith-endorsed conditioning “green conditioning”, borrowing his “green” idea from later in the series.

I certainly agree that we should not aggressively try to alter the future without care, in a non-attuned and non-green way. Doing so risks imposing a wholly inappropriate plan on the world. Think about the historical figures who have done the greatest damage with their grand plans: they obviously did not take appropriate care, and they egregiously violated the principles of “green”.

Ultimately, however, I found Carlsmith’s positivity about “green conditioning” difficult to wholeheartedly endorse.

The possibility of equilibrium

I felt that the concerns about “conditioning”, whether green or not, largely ignored the possibility of a force that I personally believe critically constrains (and may even dominate) the trajectory of the future: equilibrium. Defined broadly, this is the idea that the world is a system that experiences negative feedback: if we try to push on it, it will push back. Equilibrium occurs as a concept in many domains — most notably, in economics — but here, I am concerned about equilibrium in what values are held. A simple example to illustrate: some leaders might come to think that convicting people without trial has benefits, but if obviously unjust convictions start to be reported, they will face pressure to scale back or reverse their change.

I fear that if we pursue “conditioning” while neglecting equilibrium constraints, we risk trying to “condition” in ways that are doomed to fail. More subtly, we may train our sense of “green” on the wrong things, on things that are of little relevance for the success of our efforts.

When equilibrium is decisive, the greater risk in disregarding the Tao would then not be in tyranny, but in hubris. We are not actually free to create the future we dream of; if we do depart from the basin of equilibrium, we will fall back down, like Icarus did.

I won’t justify my personal belief in the decisive importance of equilibrium; there is evidently a “worldview gap” that I am on one particular side of. Instead, I will focus on why the “green conditioning” idea sits uncomfortably with this worldview.

Illustration of a system returning to equilibrium:

Control when the equilibrium state is unknown

For simplicity, I’ll focus on the possibility of “strong equilibrium”, where all important features of the future are foreordained: whatever course of action we choose, negative feedback from the system will cause our alterations to be fully erased with time. (As an illustration, consider the trajectory of modernization. Once modernization was apparent, many figures used the newfound leverage to produce huge effects, both positive and negative. But once modernization was apparent, the basic fact that modernization would completely displace the old order was already inevitable.)

If we are at an equilibrium, and will stay at that location forevermore, respect for equilibrium asks very little of us: we can simply do nothing. But suppose that the ultimate equilibrium is at a location that we are not yet able to see, is very far away from where we currently stand, and is narrow and sharply sloped. Then it would be all too easy for us to get lost, turn the wrong way, and fall. Merely keeping up our grip on the landscape could take everything we have.

What I find interesting about this picture is that versions of Carlsmith’s green and black show up naturally here, too. But the orientation toward the future that comes out of this perspective is less optimistically humanist than Carlsmith’s.

Without green, we risk misjudging the location of the ultimate equilibrium. The ultimate equilibrium is a holistic one; deduction from sparse data, rationalist-style, diverges more fatally the more distant the ultimate equilibrium is. The most precious knowledge is not what is, but what will change and what will not.

Without black, we will not have the energy to keep up with the movement of equilibrium. The future, in fact, will not be like the present. We will lose much of “what in fact is” (Le Guin). If we do not want much of this, we will fall behind, and the way down to equilibrium will look steeper and steeper.

There is no campfire, though. We stand on an equilibrium landscape, and this landscape is not about us. And if we try to act like it is, and spend our energy thinking about that, we will lose touch with equilibrium and fall.

Glossary of Carlsmith’s concepts (generated by Claude)

Green: One of the five colors in the Magic: The Gathering color wheel that Carlsmith uses as a philosophical typology. Green represents attunement, reverence, and receptivity — a stance of perceiving and honoring what exists rather than reshaping it. Associated with nature, tradition, and the wisdom of what has survived.

Black: The color of power, effectiveness, and instrumental rationality. Carlsmith describes it as “not fucking around” — actually caring about outcomes, having something to protect, and being willing to act. Can be destructive when aimed at domination, but valuable when aimed at genuine stakes.

The Tao: C.S. Lewis’s term (borrowed from Chinese philosophy) for an objective moral order — a natural law that transcends individual or cultural preference. Lewis argues that conditioning future values without the Tao leads to tyranny.

Conditioning: Deliberately shaping the values of future generations. Lewis worries that those with technological power become “conditioners” who mold others without any external standard to guide them.

The campfire: An image from Carlsmith’s essay “Loving a world you don’t trust.” Humanity as a small group huddled around a campfire in a vast, dark universe. Represents the humanist stance: we matter to each other even if the cosmos is indifferent.

“What in fact is”: From Ursula K. Le Guin’s lecture “A Non-Euclidean View of California as a Cold Place to Be,” quoted by Carlsmith: “To reconstruct the world, to rebuild or rationalize it, is to run the risk of losing or destroying what in fact is.” Carlsmith uses this to articulate what green cares about protecting.