kaarelh AT gmail DOT com

Kaarel

Grokking, memorization, and generalization — a discussion

Searching for a model’s concepts by their shape – a theoretical framework

A starting point for making sense of task structure (in machine learning)

[Question] Transferring credence without transferring evidence?

A gentle primer on caring, including in strange senses, with applications

I’d be very interested in a concrete construction of a (mathematical) universe in which, in some reasonable sense that remains to be made precise, two ‘orthogonal pattern-universes’ (preferably each containing ‘agents’ or ‘sophisticated computational systems’) live on ‘the same fundamental substrate’. One of the many reasons I’m struggling to make this precise is that I want there to be some condition which meaningfully rules out trivial constructions in which the low-level specification of such a universe can be decomposed into a pair of ‘independent’ components, with everything in the first pattern-universe being a function only of the first component and everything in the second pattern-universe being a function only of the second. (Of course, I’d also be happy with an explanation of why this is a bad question :).)

I started writing this but lost faith in it halfway through, and realized I was spending too much time on it for today. I figured it’s probably a net positive to post this mess anyway although I have now updated to believe somewhat less in it than the first paragraph indicates. Also I recommend updating your expected payoff from reading the rest of this somewhat lower than it was before reading this sentence. Okay, here goes:

{I think people here might be putting too much of the explanatory weight on noise. I don’t have a strong argument for why the explanation definitely isn’t noise, but here is a different potential explanation that seems promising to me. (There is a sense in which this explanation still says that noise dominates over any relation between the two variables; indeed, there is a formal sense in which that has to be the case, since the correlation is small. So if this formal thing is what you mean by “noise”, I’m not really disagreeing with you here. In this case, interpret my comment as just trying to specify another sense in which the process might not be noisy at all.) This might be seen as an attempt to write down the “sigmoids spiking up in different parameter ranges” idea in a bit more detail.

First, note that if the performance on every task is a perfectly deterministic logistic function with midpoint x_0 and logistic growth rate k, i.e. there is “no noise”, with k and x_0 being the same across tasks, then these correlations would be exactly 0. (Okay, we need to be adding an epsilon of noise here so that we are not dividing by zero when calculating the correlation, but let’s just do that and ignore this point from now on.) Now as a slightly more complicated “noiseless” model, we might suppose that performance on each task is still given by a “deterministic” logistic function, but with the parameters k and x_0 being chosen at random according to some distribution. It would be cool to compute some integrals / program some sampling to check what correlation one gets when k and x_0 are both normally distributed with reasonable means and variances for this particular problem, with no noise beyond that.}
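Here is a minimal sketch of the sampling check suggested above. Several things in it are my assumptions rather than anything fixed by the discussion: that "the correlation" is taken across tasks between the gain from the first to the second scale and the gain from the second to the third, that the three scale points are 0, 1, 2, and all of the default means and standard deviations, which are placeholders and not fitted to any data.

```python
import math
import random


def logistic(x, k, x0):
    """Logistic curve with growth rate k and midpoint x0."""
    return 1.0 / (1.0 + math.exp(-k * (x - x0)))


def pearson(a, b):
    """Plain Pearson correlation of two equal-length lists."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((u - mean_a) * (v - mean_b) for u, v in zip(a, b))
    var_a = sum((u - mean_a) ** 2 for u in a)
    var_b = sum((v - mean_b) ** 2 for v in b)
    return cov / math.sqrt(var_a * var_b)


def gain_correlation(n_tasks=20000, scales=(0.0, 1.0, 2.0),
                     k_mean=2.0, k_sd=0.5, x0_mean=1.0, x0_sd=1.0, seed=0):
    """Correlation across tasks between consecutive performance gains.

    Each task gets its own (k, x0), drawn from independent normals
    (hypothetical parameters); there is no noise beyond that.
    """
    rng = random.Random(seed)
    first_gain, second_gain = [], []
    for _ in range(n_tasks):
        k = rng.gauss(k_mean, k_sd)
        x0 = rng.gauss(x0_mean, x0_sd)
        p = [logistic(s, k, x0) for s in scales]
        first_gain.append(p[1] - p[0])
        second_gain.append(p[2] - p[1])
    return pearson(first_gain, second_gain)


if __name__ == "__main__":
    # Sweep the midpoint mean to see how the sign depends on the parameter range.
    for x0_mean in (-1.0, 0.0, 1.0, 2.0, 3.0):
        print(x0_mean, gain_correlation(x0_mean=x0_mean))
```

Sweeping `x0_mean` and `x0_sd` is the quickest way to see whether the sign of the correlation flips with the parameter range; a logit-space variant would apply the logit to `p` before differencing.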

This is the point where I lost faith in this for now. I think there are parameter ranges for how k and x_0 are distributed where one gets a significant positive correlation and ranges where one gets a significant negative correlation in the % case. Negative correlations seem more likely for this particular problem. But more importantly, I no longer think I have a good explanation why this would be so close to 0. I think in logit space, the analysis (which I’m omitting here) becomes kind of easy to do by hand (essentially because the logit and logistic function are inverses), and the outcome I’m getting is that the correlation should be positive, if anything. Maybe it becomes negative if one assumes the logistic functions in our model are some other sigmoids instead, I’m not sure. It seems possible that the outcome would be sensitive to such details. One idea is that maybe if one assumes there is always eps of noise and bounds the sigmoid away from 1 by like 1%, it would change the verdict.

Anyway, the conclusion I was planning to reach here is that there is a plausible way in which all the underlying performance curves would be super nice, not noisy at all, but the correlations we are looking at would still be zero, and that I could also explain the negative correlations without noisy reversion to the mean (instead this being like a growth range somewhere decreasing the chance there is a growth range somewhere else) but the argument ended up being much less convincing than I anticipated. In general, I’m now thinking that most such simple models should have negative or positive correlation in the % case depending on the parameter range, and could be anything for logit. Maybe it’s just that these correlations are swamped by noise after all. I’ll think more about it.

I think this comment is incorrect (in the stated generality). Here is a simple counterexample. Suppose you have a starting endowment of $1, and that you can bet any amount at 0.50001 probability of doubling your bet and 0.49999 probability of losing everything you bet. You can bet whatever amount of your money you want a total of n times. (If you lost everything in some round, we can think of this as you still being allowed to bet 0 in remaining future rounds.) The strategy that maximizes expected linear utility is the one where you bet everything every time.
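To make the counterexample concrete, here is a small sketch that computes the expected final wealth for strategies that bet a constant fraction f of current wealth every round. The restriction to constant-fraction strategies, the function names, and the choice n = 1000 are assumptions made for illustration. Since each round multiplies wealth by (1 + f) with probability p and by (1 - f) otherwise, independently, the expectation is (1 + f(2p - 1))^n.

```python
def expected_wealth(f, p, n):
    """Expected final wealth from $1, betting fraction f of wealth in each of n rounds."""
    return (1.0 + f * (2.0 * p - 1.0)) ** n


def survival_probability(p, n):
    """Probability that the bet-everything strategy never loses a round."""
    return p ** n


p, n = 0.50001, 1000  # n is an illustrative choice
for f in (0.0, 0.5, 1.0):
    print(f"f={f}: expected wealth {expected_wealth(f, p, n)}")
print("P(bet-everything still has money):", survival_probability(p, n))
```

The expectation is strictly increasing in f whenever p > 0.5, so f = 1 is the best constant fraction in expectation, even though the bet-everything strategy ends with nothing unless it wins every round.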

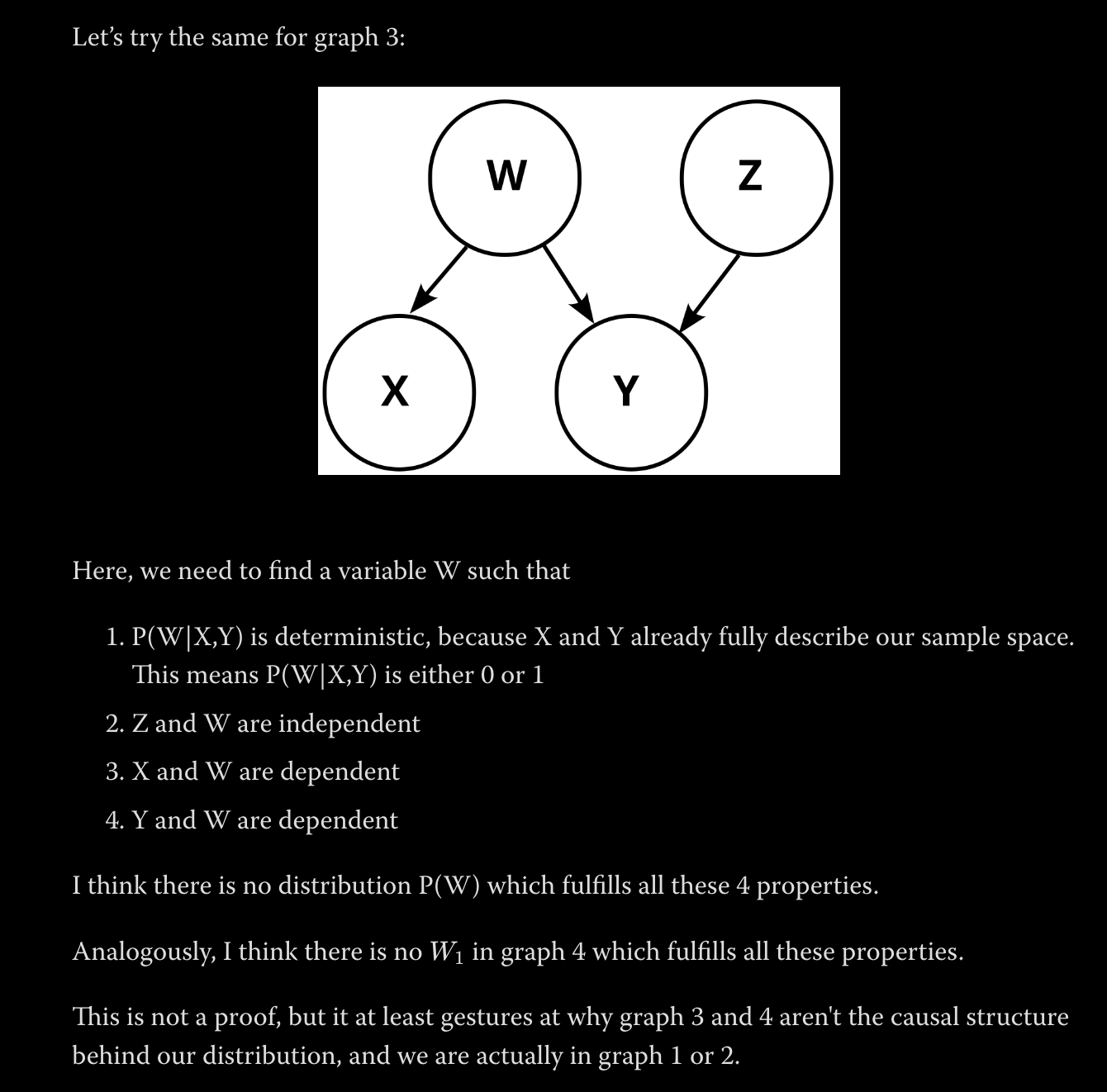

I don’t understand why 1 is true – in general, couldn’t the variable $W$ be defined on a more refined sample space? Also, I think all 4 conditions are technically satisfied if you set $W=X$ (or well, maybe it’s better to think of it as a copy of $X$).

I think the following argument works though. Note that the distribution of $X$ given $(Z,Y,W)$ is just the deterministic distribution $X=Y \oplus Z$ (this follows from the definition of $Z$). By the structure of the causal graph, the distribution of $X$ given $(Z,Y,W)$ must be the same as the distribution of $X$ given just $W$. Therefore, the distribution of $X$ given $W$ is deterministic. I strongly guess that a deterministic connection is directly ruled out by one of Pearl’s inference rules.

The same argument also rules out graphs 2 and 4.

I took the main point of the post to be that there are fairly general conditions (on the utility function and on the bets you are offered) in which you should place each bet like your utility is linear, and fairly general conditions in which you should place each bet like your utility is logarithmic. In particular, the conditions are much weaker than your utility actually being linear, or than your utility actually being logarithmic, respectively, and I think this is a cool point. I don’t see the post as saying anything beyond what’s implied by this about Kelly betting vs max-linear-EV betting in general.

I’d be quite interested in elaboration on getting faster alignment researchers not being alignment-hard — it currently seems likely to me that a research community of unupgraded alignment researchers with a hundred years is capable of solving alignment (conditional on alignment being solvable). (And having faster general researchers, a goal that seems roughly equivalent, is surely alignment-hard (again, conditional on alignment being solvable), because we can then get the researchers to quickly do whatever it is that we could do — e.g., upgrading?)

I think does not have to be a variable which we can observe, i.e. it is not necessarily the case that we can deterministically infer the value of from the values of and . For example, let’s say the two binary variables we observe are and . We’d intuitively want to consider a causal model where is causing both, but in a way that makes all triples of variable values have nonzero probability (which is true for these variables in practice). This is impossible if we require to be deterministic once is known.

more on 4: Suppose you have horribly cyclic preferences and you go to a rationality coach to fix this. In particular, your ice cream preferences are vanilla>chocolate>mint>vanilla. Roughly speaking, Hodge is the rationality coach that will tell you to consider the three types of ice cream equally good from now on, whereas Mr. Max Correct Pairs will tell you to switch one of the three preferences. Which coach is better? If you dislike breaking cycles arbitrarily, you should go with Hodge. If you think losing your preferences is worse than that, go with Max. Also, Hodge has the huge advantage of actually being done in a reasonable amount of time :)
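The two coaches can be compared on the ice cream example with a small sketch. Two simplifications are assumed here: every pair of flavors is compared exactly once with equal weight, in which case the least-squares (Hodge) scores reduce to each item's net wins divided by the number of items, and Mr. Max Correct Pairs is implemented by brute force over all orderings, which is only feasible for tiny inputs. The function names are hypothetical.

```python
from itertools import permutations

flavors = ["vanilla", "chocolate", "mint"]
# Each pair (a, b) means "a is preferred to b".
prefs = [("vanilla", "chocolate"), ("chocolate", "mint"), ("mint", "vanilla")]


def hodge_scores(items, prefs):
    """Least-squares scores, assuming each pair is compared once with unit weight.

    In that special case, minimizing sum over pairs of (s_a - s_b - 1)^2
    with scores summing to zero gives s_a = (wins_a - losses_a) / len(items).
    """
    net = {a: 0 for a in items}
    for winner, loser in prefs:
        net[winner] += 1
        net[loser] -= 1
    return {a: net[a] / len(items) for a in items}


def max_correct_orders(items, prefs):
    """Brute force: all total orders that violate the fewest stated preferences."""
    best_orders, fewest = [], None
    for order in permutations(items):
        rank = {a: i for i, a in enumerate(order)}  # rank 0 = most preferred
        violations = sum(1 for w, l in prefs if rank[w] > rank[l])
        if fewest is None or violations < fewest:
            best_orders, fewest = [order], violations
        elif violations == fewest:
            best_orders.append(order)
    return fewest, best_orders


print(hodge_scores(flavors, prefs))
print(max_correct_orders(flavors, prefs))
```

On the pure 3-cycle, the Hodge scores all tie, while the brute-force search returns several equally good orderings, each of which drops one of the three stated preferences. That is the "breaking cycles arbitrarily" trade-off described above.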

I liked the post; here are some thoughts, mostly on the “The futility of computing utility” section:

1)

If we’re not incredibly unlucky, we can hope to sort N-many outcomes with O(N log N) comparisons.

I don’t understand why you need to not be incredibly unlucky here. There are plenty of deterministic algorithms with this runtime, no?

2) I think that in step 2, once you know the worst and the best outcome, you can skip to step 3 (i.e. the full ordering does not seem to be needed to enter step 3). So instead of sorting in n log n time, you could find min and max in linear time, and then skip to the psychophysics.
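A minimal sketch of the linear-time alternative: one pass over the outcomes with a pairwise comparison oracle, using at most 2(n - 1) comparisons. The oracle `prefers(a, b)` is a hypothetical stand-in for "ask which of the two outcomes is preferred", and the sketch assumes the preferences are consistent (transitive, no cycles).

```python
def find_worst_and_best(outcomes, prefers):
    """Return (worst, best, comparisons_used) using a single pass.

    prefers(a, b) should return True if a is strictly preferred to b.
    """
    it = iter(outcomes)
    worst = best = next(it)
    comparisons = 0
    for outcome in it:
        comparisons += 1
        if prefers(outcome, best):
            best = outcome
        else:
            # Only check against the current worst if it did not beat the best.
            comparisons += 1
            if prefers(worst, outcome):
                worst = outcome
    return worst, best, comparisons


# Toy usage: outcomes are numbers and larger is preferred.
print(find_worst_and_best([3, 1, 4, 1, 5, 9, 2, 6], lambda a, b: a > b))
```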

3) Could you elaborate on what you mean by a basis of possibility-space? It is not obvious to me that possibility-space has a linear structure (i.e. that it is a vector space), or that utility respects this linear structure (e.g. the utility I get from having chocolate ice cream and having vanilla ice cream is not in general approximated well by the sum of the utilities I get from having only one of these, and similarly for multiplication by scalars). Perhaps you were using these terms metaphorically, but then I currently have doubts about this being a great metaphor / would appreciate having the metaphor spelled out more explicitly. I could imagine doing something like picking some random subset of the possibilities, doing something to figure out the utilities of this subset, and then doing some linear regression (or something more complicated) on various parameters to predict the utilities of all possibilities. It seems like a more symmetric way to think about this might be to consider the subset of outcomes (with each pair, or some of the pairs, being labeled according to which one is preferred) to be the training data, and then training a neural network (or whatever) that predicts utilities of outcomes so as to minimize loss on this training data. And then to go from training data to any possibility, just unleash the same neural network on that possibility. (Under this interpretation, I guess “elementary outcomes” <-> training data, and there does not seem to be a need to assume linear structure of possibility-space.)
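As a toy version of the "train on labeled pairs, then apply the model to any possibility" idea, here is a sketch in which a linear model stands in for the neural network and is fit with a logistic loss on utility differences (a Bradley-Terry-style setup). Everything concrete in it is an assumption made for illustration: outcomes are described by two numeric features, the hidden "true" utility is linear in them, and the labels are noiseless.

```python
import math
import random


def utility(w, x):
    """Predicted utility of an outcome with feature vector x."""
    return sum(wi * xi for wi, xi in zip(w, x))


def fit_utility(pairs, dim, steps=200, lr=0.5):
    """Gradient descent on the loss -log sigmoid(u(preferred) - u(other)).

    pairs is a list of (preferred_features, other_features).
    """
    w = [0.0] * dim
    for _ in range(steps):
        grad = [0.0] * dim
        for preferred, other in pairs:
            diff = [a - b for a, b in zip(preferred, other)]
            margin = utility(w, diff)
            coeff = -1.0 / (1.0 + math.exp(margin))  # -(1 - sigmoid(margin))
            for i in range(dim):
                grad[i] += coeff * diff[i]
        w = [wi - lr * gi / len(pairs) for wi, gi in zip(w, grad)]
    return w


def pairwise_accuracy(w, true_w, outcomes):
    """Fraction of outcome pairs that w orders the same way as true_w."""
    correct = total = 0
    for i in range(len(outcomes)):
        for j in range(i + 1, len(outcomes)):
            a, b = outcomes[i], outcomes[j]
            predicted = utility(w, a) > utility(w, b)
            actual = utility(true_w, a) > utility(true_w, b)
            correct += predicted == actual
            total += 1
    return correct / total


rng = random.Random(0)
true_w = [2.0, -1.0]  # hypothetical hidden utility, used only to label pairs
outcomes = [[rng.random(), rng.random()] for _ in range(60)]
train, held_out = outcomes[:30], outcomes[30:]

pairs = []
for _ in range(100):
    a, b = rng.sample(train, 2)
    pairs.append((a, b) if utility(true_w, a) > utility(true_w, b) else (b, a))

w = fit_utility(pairs, dim=2)
accuracy = pairwise_accuracy(w, true_w, held_out)
print("learned weights:", w)
print("held-out pairwise accuracy:", accuracy)
```

The learned utilities are only meaningful up to a monotone rescaling, since pairwise labels carry no information about magnitudes; the point is just that nothing here requires possibility-space itself to be a vector space, only that outcomes can be fed to the model.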

4) I think I have something cool to say about a specific and very related problem. Input: a set of outcomes and some pairwise preferences between them. Desired output: a total order on these outcomes such that the number of pairs which are ordered incorrectly is minimal. It turns out that this is NP-hard: https://epubs.siam.org/doi/pdf/10.1137/050623905. (The problem considered in this paper is the special case of the problem I stated where all the pairwise preferences are given, but well, if a special case is NP-hard, then the problem itself is also NP-hard.)

You can prove e.g. by (backwards) induction that you should bet everything every time. With the odds being p>0.5 and 1-p, if the expectation of whatever strategy you are using after n-1 steps is E, then the maximal expectation over all things you could do on the n’th step is 2pE (you can see this by writing the expectation as a conditional sum over the outcomes after n-1 steps), which corresponds uniquely to the strategy where you bet everything in any situation on the n’th step. It then follows that the best you can do on the (n-1)th step is also to maximize the expectation after it, and the same argument gives that you should bet everything, and so on.
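The induction step can be written out as follows. The symbols $W_m$ (wealth after step $m$) and $b_n$ (amount bet on step $n$, chosen as a function of the history with $0 \le b_n \le W_{n-1}$) are notation introduced here for illustration; $E$ is the expectation after $n-1$ steps as above.

```latex
\begin{align*}
\mathbb{E}[W_n \mid \text{history}]
  &= p\,(W_{n-1} + b_n) + (1-p)\,(W_{n-1} - b_n) \\
  &= W_{n-1} + (2p-1)\,b_n \\
  &\le 2p\,W_{n-1}
  \quad\text{(equality iff } b_n = W_{n-1}\text{, since } 2p-1 > 0\text{)}, \\
\mathbb{E}[W_n] &\le 2p\,\mathbb{E}[W_{n-1}] = 2pE .
\end{align*}
```

Iterating from a starting endowment of 1 gives $\mathbb{E}[W_n] = (2p)^n$ for the bet-everything strategy, and strictly less for any strategy that bets less than everything with positive probability in a situation where it still has money.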

(Where did you get n=10^5 from? If it came from some computer computation, then I would wager that there was some overflow/numerical issues.)

A thread into which I’ll occasionally post notes on some ML(?) papers I’m reading

I think the world would probably be much better if everyone made a bunch more of their notes public. I intend to occasionally copy some personal notes on ML(?) papers into this thread. While I hope that the notes which I’ll end up selecting for being posted here will be of interest to some people, and that people will sometimes comment with their thoughts on the same paper and on my thoughts (please do tell me how I’m wrong, etc.), I expect that the notes here will not be significantly more polished than typical notes I write for myself and my reasoning will be suboptimal; also, I expect most of these notes won’t really make sense unless you’re also familiar with the paper — the notes will typically be companions to the paper, not substitutes.

I expect I’ll sometimes be meaner than some norm somewhere in these notes (in fact, I expect I’ll sometimes be simultaneously mean and wrong/confused — exciting!), but I should just say to clarify that I think almost all ML papers/posts/notes are trash, so me being mean to a particular paper might not be evidence that I think it’s worse than some average. If anything, the papers I post notes about had something worth thinking/writing about at all, which seems like a good thing! In particular, they probably contained at least one interesting idea!

So, anyway: I’m warning you that the notes in this thread will be messy and not self-contained, and telling you that reading them might not be a good use of your time :)

A few notes/questions about things that seem like errors in the paper (or maybe I’m confused — anyway, none of this invalidates any conclusions of the paper, but if I’m right or at least justifiably confused, then these do probably significantly hinder reading the paper; I’m partly posting this comment to possibly prevent some readers in the future from wasting a lot of time on the same issues):

1) The formula for here seems incorrect:

This is because W_i is a feature corresponding to the i’th coordinate of x (this is not evident from the screenshot, but it is evident from the rest of the paper), so surely what shows up in this formula should not be W_i, but instead the i’th row of the matrix which has columns W_i (this matrix is called W later). (If one believes that W_i is a feature, then one can see this is wrong already from the dimensions in the dot product not matching.)

2) Even though you say in the text at the beginning of Section 3 that the input features are independent, the first sentence below made me make a pragmatic inference that you are not assuming that the coordinates are independent for this particular claim about how the loss simplifies (in part because if you were assuming independence, you could replace the covariance claim with a weaker variance claim, since the 0 covariance part is implied by independence):

However, I think you do use the fact that the input features are independent in the proof of the claim (at least you say “because the x’s are independent”):

Additionally, if you are in fact just using independence in the argument here and I’m not missing something, then I think that instead of saying you are using the moment-cumulants formula here, it would be much much better to say that independence implies that any term with an unmatched index is 0. If you mean the moment-cumulants formula here https://en.wikipedia.org/wiki/Cumulant#Joint_cumulants, then (while I understand how to derive every equation of your argument in case the inputs are independent), I’m currently confused about how that’s helpful at all, because one then still needs to analyze which terms of each cumulant are 0 (and how the various terms cancel for various choices of the matching pattern of indices), and this seems strictly more complicated than the problem before translating to cumulants, unless I’m missing something obvious.

3) I’m pretty sure this should say x_i^2 instead of x_i x_j, and as far as I can tell the LHS has nothing to do with the RHS:

(I think it should instead say something like: the loss term is proportional to the squared difference between the true and predictor covariance.)

An attempt at a specification of virtue ethics

I will be appropriating terminology from the Waluigi post. I hereby put forward the hypothesis that virtue ethics endorses an action iff it is what the better one of Luigi and Waluigi would do, where Luigi and Waluigi are the ones given by the posterior semiotic measure in the given situation, and “better” is defined according to what some [possibly vaguely specified] consequentialist theory thinks about the long-term expected effects of this particular Luigi vs the long-term effects of this particular Waluigi. One intuition here is that a vague specification could be more fine if we are not optimizing for it very hard, instead just obtaining a small amount of information from it per decision.

In this sense, virtue ethics literally equals continuously choosing actions as if coming from a good character. Furthermore, considering the new posterior semiotic measure after a decision, in this sense, virtue ethics is about cultivating a virtuous character in oneself. Virtue ethics is about rising to the occasion (i.e. the situation, the context). It’s about constantly choosing the Luigi in oneself over the Waluigi in oneself (or maybe the Waluigi over the Luigi if we define “Luigi” as the more likely of the two and one has previously acted badly in similar cases or if the posterior semiotic measure is otherwise malign). I currently find this very funny, and, if even approximately correct, also quite cool.

Here are some issues/considerations/questions that I intend to think more about:

What’s a situation? For instance, does it encompass the agent’s entire life history, or are we to make it more local?

Are we to use the agent’s own semiotic measure, or some objective semiotic measure?

This grounds virtue ethics in consequentialism. Can we get rid of that? Even if not, I think this might be useful for designing safe agents though.

Does this collapse into cultivating a vanilla consequentialist over many choices? Can we think of examples of prompting regimes such that collapse does not occur? The vague motivating hope I have here is that in the trolley problem case with the massive man, the Waluigi pushing the man is a corrupt psycho, and not a conflicted utilitarian.

Even if this doesn’t collapse into consequentialism from these kinds of decisions, I’m worried about whether it is stable under reflection, I guess because I’m worried about how likely virtue ethics is to be part of an agent in reflective equilibrium. It would be sad if the only way to make this work would be to only ever give high semiotic measure to agents that don’t reflect much on values.

Wait, how exactly do we get Luigi and Waluigi from the posterior semiotic measure? Can we just replace this with picking the best character from the most probable few options according to the semiotic measure? Wait, is this just quantilization but funnier? I think there might be some crucial differences. And regardless, it’s interesting if virtue ethics turns out to be quantilization-but-funnier.

More generally, has all this been said already?

Is there a nice restatement of this in shard theory language?

I agree with you regarding Lebesgue measure 0. My impression is that the Pearl paradigm has some [statistics → causal graph] inference rules which basically do the job of ruling out causal graphs for which having certain properties seen in the data has Lebesgue measure 0. (The inference from two variables being independent to them having no common ancestors in the underlying causal graph, stated earlier in the post, is also of this kind.) So I think it’s correct to say “X has to cause Y”, where this is understood as a valid inference inside the Pearl (or Garrabrant) paradigm. (But also, updating pretty close to “X has to cause Y” is correct for a Bayesian with reasonable priors about the underlying causal graphs.)

(epistemic position: I haven’t read most of the relevant material in much detail)

I find [the use of square brackets to show the merge structure of [a linguistic entity that might otherwise be confusing to parse]] delightful :)