Hi, I am a physicist, an Effective Altruist, and an AI Safety researcher.

Linda Linsefors

During training, the AGI comes across two contradictory expectations (e.g. “demand curves usually slope down” & “many studies find that minimum wage does not cause unemployment”). The AGI updates its internal models to a more nuanced and sophisticated understanding that can reconcile those two things. Going forward, it can build on that new knowledge.

During deployment, the exact same thing happens, with the exact same result.

In the continual-learning, brain-like-AGI case, there’s no distinction. Both of these are the same algorithm doing the same thing.

By contrast, in conventional ML systems (e.g. LLMs), these two cases would be handled by two different algorithmic processes. Case #1 would involve changing the model weights, while Case #2 would solely involve changing the model activations, while leaving the weights untouched.

To me, this is a huge point in favor of the plausibility of the continual learning approach. It only requires solving the problem once, rather than solving it twice in two different ways. And this isn’t just any problem; it’s sorta the core problem of AGI!

I agree that currently brains have continual learning in a way that LLMs don’t.

However, I do think that both brains and LLMs have two different solutions for remembering information for later use. In the brain these are [long-term memory] vs. [short-term / working memory]. In LLMs these are [updating weights] vs. [context window]. I don’t think it’s a coincidence that both systems have something like a context window and something like long-term memory, and I expect any future brain-like AGI to also have these two types of memory, implemented in different ways.

I have also heard it hypothesized that humans have additional levels of memory, i.e. that things that happened in the last days or months are stored in one way, things further in the past are stored in another way, and memories are slowly moved from medium-term storage to long-term storage over time.

There were rats that had only had access to the food-access lever when super-hungry. Naturally, they learned that pressing the food-access lever was an awesome idea. Then they were shown the lever again while very full. They enthusiastically pressed the lever as before. But they did not enthusiastically open the food magazine. Instead (I imagine), they pressed the lever, then started off towards the now-accessible food magazine, then when they got close, they stopped and said to themselves, “Yuck, wait, I’m not hungry at all, this is not appealing, what am I even doing right now??”. (We’ve all been there, right?)

This is so relatable!

OK, so the former ingredient (C) is out. But then, what about (1) and (2) above, i.e. my original two reasons for believing that (C) should be there?

What are (1) and (2) referring to?

It’s kinda the same idea as “safewords” in fight-related sports (among other places).

1) The link is broken.

2) No, it’s not the same.

Laughter and other play signals mean [don’t worry, everything is OK]. There is a similarity in that, if you have a safeword, you’re probably playing. But if anyone says the safeword, it’s because something is wrong, i.e. it’s not fun anymore. In a way it’s the opposite of a laugh.

The big picture—The whole post will revolve around this diagram. Note that I’m oversimplifying in various ways, including in the bracketed neuroanatomy labels.

I think this picture would be clearer if you drew [predict sensory inputs] as a separate box from the Thought Generator.

In the picture in my head, there is a [predict sensory inputs] box that receives the sensory input and tries to predict it. This box also sends a [current context] signal to both the Thought Generator and the Thought Assessor. Also, [predict sensory inputs] gets some signal from the Thought Generator, so that it knows what we’re about to do, which is important for predicting what we’re about to observe.

I’m guessing there is some reason you didn’t draw it this way?

We might have talked about this before?

How corrigible do we want a future superintelligence to be?

I don’t want it to switch goals just because a single human tells it to (for most humans).

One way to do this is to have a special input channel, and have the AI be fully corrigible to inputs given there. But I still don’t like that solution, because it’s vulnerable both to falling into the wrong hands and to getting lost completely (ending up in no one’s hands).

I used to think the right balance would be super hard to specify. But if we’re in the business of asking for what we want in natural language, anyway, then I’d suggest something like:

If the majority of humans is against some action, then don’t do it.

How do we operationalize “majority”, “humans”, “against” and “action”? Eh, don’t worry about it. Either this natural language thing works, and then the common sense meaning should be fine, or it doesn’t work, and then it’s out of scope for this discussion.

I don’t think it’s possible to figure out an operationalization without knowing how we get the values into the AI, since what we can express, and how, depends on the value-loading method.

I remember reading a claim that the steering subsystem notices how much of the white is visible in other people’s eyes.

The text probably didn’t use the term “steering subsystem” (unless I got this from one of your posts), but that’s how I remember interpreting it.

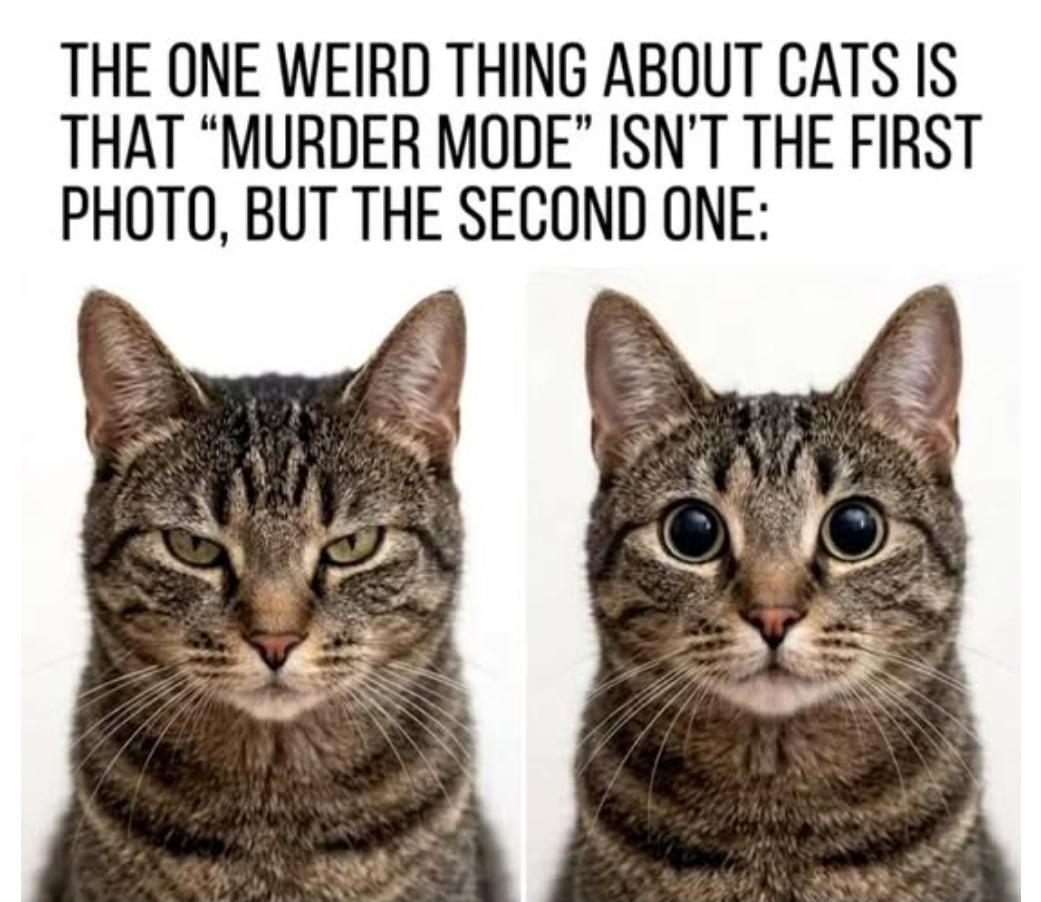

Cats have eye-based facial expressions too. Squinting (half-closed eyes) means relaxed and trusting. If a cat slow-blinks at you (closes their eyes all the way), that means they like you.

I have updated toward thinking it’s at least possible that narrowing and widening the eyes has a practical plus cultural explanation, rather than being something encoded in the steering subsystem.

I tried the eye-widening experiment again, and it still has no effect for me, but I believe that it works for Steve. Narrowing my eyes doesn’t work for me (I have good vision), and it also doesn’t work for Phil (who is nearsighted), but it does work for some people, which is enough.

If some people squint when they are suspicious, this facial expression can spread through culture.

I notice that something that seemed ridiculous to me was not ridiculous, and I have updated accordingly. Thanks!

I knew the optics stuff, I just forgot. Thanks for reminding me.

And now I’m embarrassed about it (but admitting this makes me less embarrassed). There is a story in my brain that I want to hold myself to a higher standard of remembering relevant facts in situations like this (e.g. before commenting on LW). Being embarrassed is a reminder that I fell short, and writing it down is a memory aid (I’m not likely to reread it, but writing it still makes it more likely that I remember it), so now I’m less likely to fall short next time, and the emotion of embarrassment has done its job.

Trying to remember my state of mind when I wrote that (yesterday), I think my objection was to the stronger statement that this explains all or most facial expressions.

Today, after reading your and Raemon’s responses, I find it a bit more plausible that more expressions have immediate functional explanations. But definitely not all.

I’m trying to think of a way to give a confidence interval, but I don’t even know what it would mean for 20% of facial expressions to have immediate functional explanations. What is meant by “20%”? What’s the measure?

I mostly wrote this comment because I felt compelled to, because of the familiar “someone’s wrong on the internet” reaction. Except the thing that is wrong is a quote from a throwaway comment, so maybe not that important.

But reflecting a bit more generally, I don’t think the attempted steelman

OK fine, the anti-Ekman position is false, but that’s just because we all have structurally-similar faces, and certain ways of contorting one’s face tend to be useful for corresponding purposes (that are not arbitrary social conventions). For example, maybe the thing we might call a “disgust facial expression” is just objectively the best way to eject stuff from the mouth and nose that shouldn’t be there—a useful activity for any human. So it’s no surprise that we find that kind of expression recurring across cultures!

is at all plausible either

…If you’re uncertain whether a person directly in front of you could harm you, you might narrow your eyes to see the person’s face better. If danger is potentially lurking around the next corner, your eyes might widen to improve your peripheral vision…

I tried narrowing my eyes. This does not help improve my vision. It just causes my eyelashes to slightly get in the way and make my vision slightly worse.

Widening my eyes does not seem to improve my peripheral vision.

Also, if you know anything about how eyes work, it’s pretty obvious why this wouldn’t work.

For example, Ekman says “surprise” versus “fear” typically involve awfully similar facial expressions

I think this is factually incorrect.

If I simulate these feelings in myself, I make very different faces. Or, more correctly, very many different faces, depending on what type of surprise and what type of fear. But they don’t overlap much.

Still others of these “brain-like AGI ingredients” seem mostly or totally absent from today’s most popular ML algorithms (e.g. ability to form “thoughts” [e.g. “I’m going to the store”] that blend together immediate actions, short-term predictions, long-term predictions, and flexible hierarchical plans, inside a generative world-model that supports causal and counterfactual and metacognitive reasoning).

I think that chain-of-thought planning in an agentic LLM-driven model might qualify as this. Would you agree?

Maybe it’s a populist medium, more than a leftist medium?

I had the same question about the arguments in the post.

If Claude somehow starts down a trajectory of always talking about how good it is, how is this self-reinforcing? If it has a tendency to always talk like that, this should be both upweighted and downweighted, because it will sometimes succeed and sometimes fail.

Maybe the reward signals aren’t balanced? I.e. overall it gets more positive than negative reward?

Or maybe it’s more likely to talk about its motivation when it succeeds at staying on task?

Or possibly this story about self-reinforcement (“gradient hacking”) is just wrong, and the explanation of Claude 3’s character is something else.
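To make the "more likely to talk about its motivation when it succeeds" possibility concrete, here is a minimal toy sketch. It is my own illustration, not how Claude was actually trained: a single Bernoulli choice "talk about my motivation", with P(talk) = sigmoid(theta), updated with the exact expected REINFORCE gradient. The function names and the success probabilities are hypothetical, and it assumes the behavior affects reward only through how often it co-occurs with task success.

```python
import math


def sigmoid(x: float) -> float:
    """Logistic function mapping a logit to a probability."""
    return 1.0 / (1.0 + math.exp(-x))


def expected_policy_gradient(
    theta: float,
    p_success_if_talk: float,
    p_success_if_quiet: float,
    reward_success: float = 1.0,
    reward_failure: float = -1.0,
) -> float:
    """Exact expected REINFORCE gradient w.r.t. theta for a hypothetical
    Bernoulli choice "talk about my motivation", with P(talk) = sigmoid(theta).

    The choice affects reward only via the two (hypothetical) success rates.
    """
    p = sigmoid(theta)
    # Expected reward conditional on each action.
    r_talk = p_success_if_talk * reward_success + (1 - p_success_if_talk) * reward_failure
    r_quiet = p_success_if_quiet * reward_success + (1 - p_success_if_quiet) * reward_failure
    # E[r * d/dtheta log pi(a)], using d/dtheta log p = 1 - p and
    # d/dtheta log(1 - p) = -p, simplifies to p * (1 - p) * (r_talk - r_quiet).
    return p * (1 - p) * (r_talk - r_quiet)


def train(theta: float, steps: int, lr: float, **kwargs) -> float:
    """Plain gradient ascent on expected reward; returns the final P(talk)."""
    for _ in range(steps):
        theta += lr * expected_policy_gradient(theta, **kwargs)
    return sigmoid(theta)


if __name__ == "__main__":
    # Talking is uncorrelated with success: no drift, P(talk) stays at 0.5.
    print(train(0.0, 1000, 0.5, p_success_if_talk=0.7, p_success_if_quiet=0.7))
    # Talking co-occurs slightly more with success: P(talk) drifts upward.
    print(train(0.0, 1000, 0.5, p_success_if_talk=0.75, p_success_if_quiet=0.7))
```

The first run prints 0.5; the second ends above 0.5. In this idealized exact-gradient setting, adding the same constant to both rewards cancels out of `r_talk - r_quiet`, so the toy does not capture the unbalanced-reward possibility; checking that one would need a more realistic, sample-based model of training.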

I expect Good to have some chance of generalising safely when the AI gets too smart, while Obedience has approximately no chance to do so. I don’t have a technical argument for this, just a strong intuition.

What are “canary strings”?

I remember hearing that Amanda Askell had more influence over Claude 3 Opus’s alignment training specifically, and used her philosophy powers to make it more deeply aligned. Is this wrong?

I’m surprised by this paragraph. Magnus Carlsen’s Steering Subsystem doesn’t have to understand his thoughts about chess moves. And neither will we have to directly interpret the thoughts of an AGI.

If humans are playing the role of the Steering Subsystem, we’d start by training the AI’s Thought Assessor to give us predictions of things we’ll understand. This would include predictions of everything we need to stay alive, e.g. Earth’s future climate, etc., and also of things we want. Writing a complete list would be hard, possibly too hard, unfortunately. So I’m not saying this is a great plan.

I’m just saying that if we’re the Steering Subsystem, we’re not going to try to interpret the AI’s activation patterns, because that’s not the Steering Subsystem’s job in your model. Right?