Philosophy PhD student, worked at AI Impacts, then Center on Long-Term Risk, then OpenAI. Quit OpenAI due to losing confidence that it would behave responsibly around the time of AGI. Not sure what I’ll do next yet. Views are my own & do not represent those of my current or former employer(s). I subscribe to Crocker’s Rules and am especially interested to hear unsolicited constructive criticism. http://sl4.org/crocker.html

Some of my favorite memes:

(by Rob Wiblin)

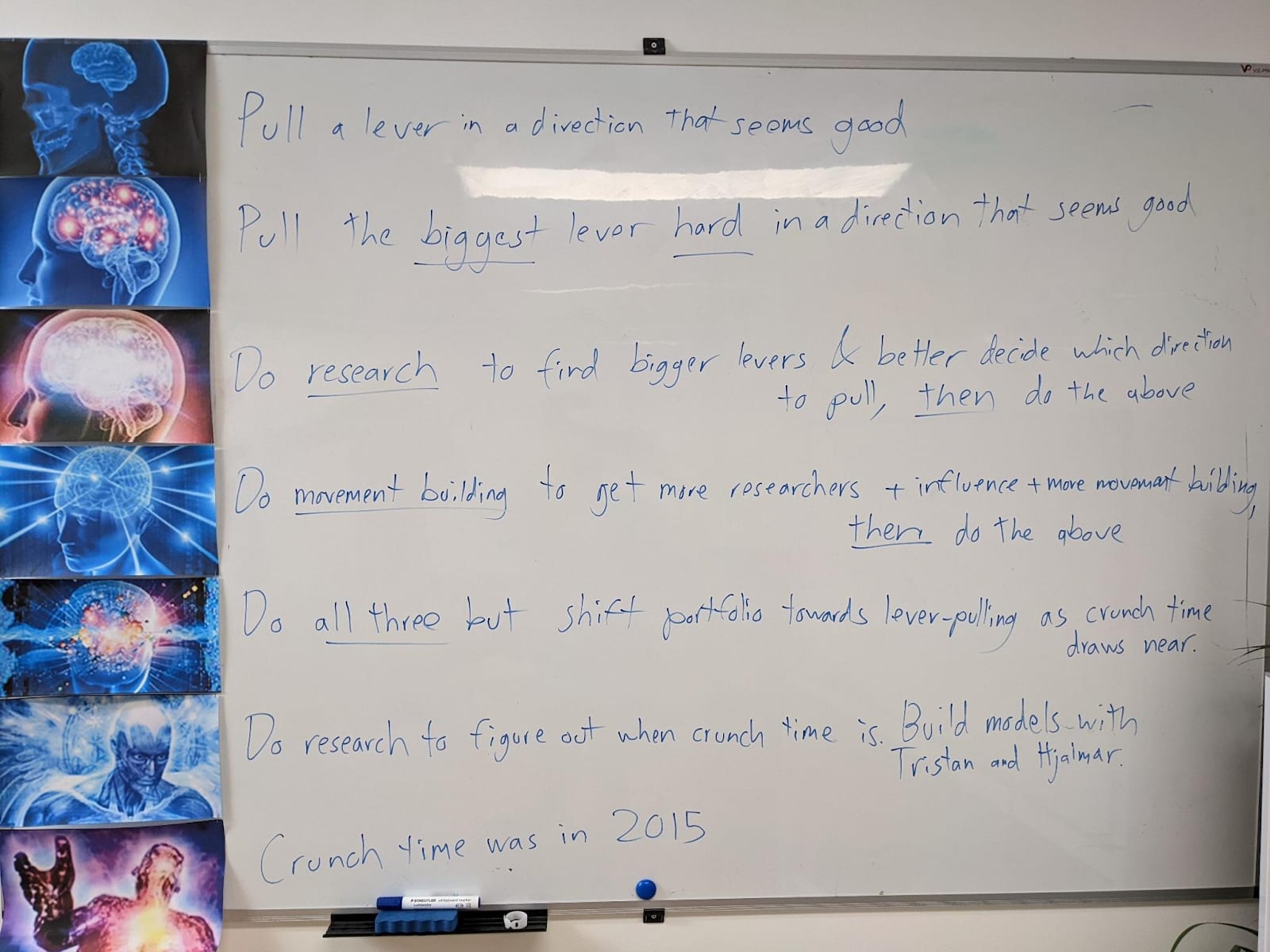

My EA Journey, depicted on the whiteboard at CLR:

(h/t Scott Alexander)

Huh, I have the opposite intuition. I was about to cite that exact same “Death with dignity” post as an argument for why you are wrong: it’s undignified for us to stop trying to solve the alignment problem, and to stop publicly discussing it with each other, out of fear that some of our ideas might accidentally percolate into OpenAI and cause them to go slightly faster, and that this speedup might make the difference between victory and defeat. The dignified thing to do is think and talk about the problem.