I’m an artist, writer, and human being.

To be a little more precise: I make video games, edit Wikipedia, and write here on LessWrong!

If that is the case, then I would very much like them to publicize the details for why they think other approaches are doomed. When Yudkowsky has talked about it in the past, it tends to be in the form of single-sentence statements pointing towards past writing on general cognitive fallacies. For him I’m sure that would be enough of a hint to clearly see why strategy x fits that fallacy and will therefore fail, but as a reader, it doesn’t give me much insight as to why such a project is doomed, rather than just potentially flawed. (Sorry if this doesn’t make sense btw, I’m really tired and am not sure I’m thinking straight atm)

Certainly for some people (including you!), yes. For others, I expect this post to be strongly demotivating. That doesn’t mean it shouldn’t have been written (I value honestly conveying personal beliefs and expressing diversity of opinion enough to outweigh the downsides), but we should realistically expect this post to cause psychological harm for some people, and it could also potentially make interaction and PR with those who don’t share Yudkowsky’s views harder. Despite some claims to the contrary, I believe (through personal experience in PR) that expressing radical honesty is not strongly valued outside the rationalist community, and that interaction with non-rationalists can be extremely important, even to potentially world-saving levels. Yudkowsky, for all of his incredible talent, is frankly terrible at PR (at least historically), and may not be giving proper weight to its value as a world-saving tool. I’m still thinking through the details of Yudkowsky’s claims, but expect me to write a post here in the near future giving my perspective in more detail.

that’s probably exactly what’s going on. The usernames were so frequent in the reddit comments dataset that the tokenizer, the part that breaks a paragraph up into word-ish-sized-chunks like ” test” or ” SolidGoldMagikarp” (the space is included in many tokens) so that the neural network doesn’t have to deal with each character, learned they were important words. But in a later stage of learning, comments without complex text were filtered out, resulting in your usernames getting their own words… but the neural network never seeing the words activate. It’s as if you had an extra eye facing the inside of your skull, and you’d never felt it activate, and then one day some researchers trying to understand your brain shined a bright light on your skin and the extra eye started sending you signals. Except, you’re a language model, so it’s more like each word is a separate finger, and you have tens of thousands of fingers, one on each word button. Uh, that got weird,

This is an incredible analogy

Could someone from MIRI step in here to explain why this is not being done? This seems like an extremely easy avenue for improvement.

May I ask why you guys decided to publish this now in particular? Totally fine if you can’t answer that question, of course.

Just wanted to provide some positive feedback that this post is really incredible, and I thank you for your work. I’ve been feeling a deep sort of low-level anxiety recently, and this is a nice starting point to try to work through some of that.

Please do this!!

Would it be fair to call this AGI, albeit not superintelligent yet?

Gato performs over 450 out of 604 tasks at over a 50% expert score threshold.

👀

I’ll tell you that one of my brothers (who I greatly respect) has decided not to be concerned about AGI risks specifically because he views EY as being a very respected “alarmist” in the field (which is basically correct), and also views EY as giving off extremely “culty” and “obviously wrong” vibes (with Roko’s Basilisk and EY’s privacy around the AI boxing results being the main examples given), leading him to conclude that it’s simply not worth engaging with the community (and their arguments) in the first place. I wouldn’t personally engage with what I believe to be a doomsday cult (even if they claim that the risk of ignoring them is astronomically high), so I really can’t blame him.

I’m also aware of an individual who has enormous cultural influence, and was interested in rationalism, but heard from an unnamed researcher at Google that the rationalist movement is associated with the alt-right, so they didn’t bother looking further. (Yes, that’s an incorrect statement, but it came from the widespread [possibly correct?] belief that Peter Thiel is both alt-right and has/had close ties with many prominent rationalists.) This indicates a general lack of control of the narrative surrounding the movement, and likely has directly led to needlessly antagonistic relationships.

This is the best counter-response I’ve read on the thread so far, and I’m really interested in what the responses will be. Commenting here so I can easily get back to this comment in the future.

For me, having listened to the guy talk is even stronger evidence since I think I’d notice it if he was lying, but that’s obviously not verifiable.

Going to quote from Astrid Wilde here (original source linked in post):

i felt this way about someone once too. in 2015 that person kidnapped me, trafficked me, and blackmailed me out of my life savings at the time of ~$45,000. i spent the next 3 years homeless.

sociopathic charisma is something i never would have believed in if i hadn’t experienced it first hand. but there really are people out there who spend their entire lives honing their social intelligence to gain wealth, power, and status.

most of them just don’t have enough smart but naive people around them to fake competency and reputation launder at scale. EA was the perfect political philosophy and community for this to scale....

I would really very strongly recommend not updating on an intuitive feeling of “I can trust this guy,” considering that in the counterfactual case (where you could not, in fact, trust the guy), you would be equally likely to have that exact feeling!

As for SBF being vegan as evidence, see my reply to you on the EA forum.

The first part of this post reads almost beat-for-beat like this post I wrote a while back: https://www.lesswrong.com/posts/jTQaFKL6s3pppSNx4/god-and-moses-have-a-chat Did you happen to read it before writing this, or are we just both thinking along the same lines?

The way this story is written would suggest that the solution to this particular future would simply be to spam the internet with plausible stories about a friendly AI takeoff which an AGI will identify with and be like “oh hey cool that’s me”

Random future reader (ten years in the future in fact) confirming that this post was indeed of utility to me.

VR hardware and software are in their infancy and you simply can’t have very crisp graphics at this stage

As an occasional video game developer, I’m going to strongly disagree with you there. To give a counter-example:

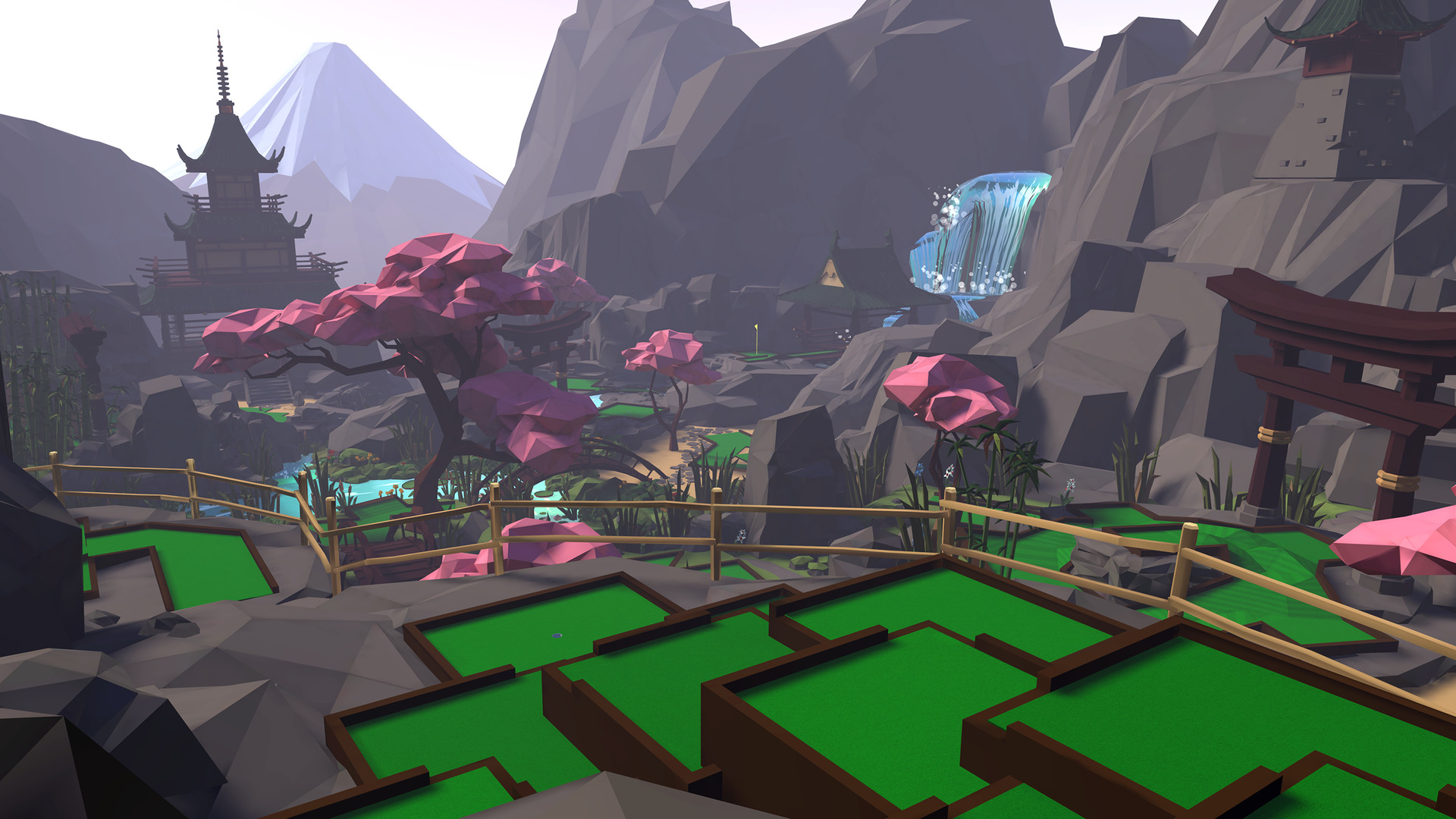

Walkabout Mini Golf is a VR game that runs on Oculus Quest, Rift, and Steam VR, made by this fairly small studio (and most of the people listed there didn’t even work on the game) [EDIT: I reached out to the studio on Twitter and it turns out the game was mainly developed by a single guy, Lucas Martell]. It looks like this:

I’ve played this game with a friend of mine (who shows up as a stylized floating head that looks pretty great), and it was crisp, clear, high frame-rate VR perfection. Even in multiplayer, everything works smoothly, and it serves as a really nice virtual social space.

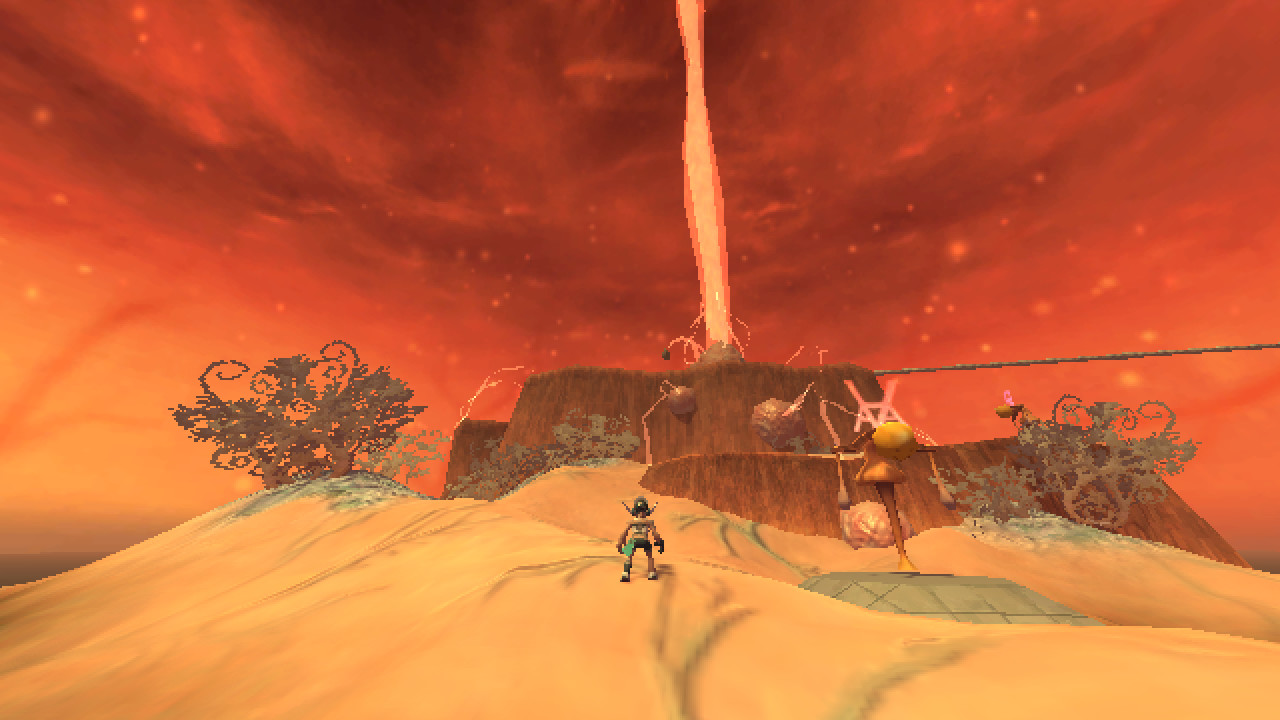

Having limited graphics capabilities does not place a significant limiting bound on aesthetics. As another example, Anodyne 2: Return to Dust is a jaw-droppingly beautiful game (developed by only two people!) deliberately made with PS1-era graphics:

Simplicity does not necessitate ugliness.

Rather, he is surrounded by employees and journalists whose primary complaint is that Horizon Worlds is not sterile enough.

This may very well be true (despite my personal distaste for that line of thought), but even if it is, being inoffensive and “bland” doesn’t mean you have to look bad! Nintendo’s oeuvre, for instance, shows that being friendly for all ages doesn’t require sacrificing aesthetic beauty. Meanwhile, in screenshots online and in the “selfie” Zuckerberg posted, model sizes are wildly inconsistent (look at the trees on the ground; or is that supposed to be grass?), the clothing of the avatars is almost surrealistically bad (why is Mark’s top button so far off to the left?), the shading is worse than what I could make in half a day with Unity when I was 12, and overall everything manages to look more slapped together than this notorious disaster of an asset flip.

I can’t help but feel that on some level this must be intentional, or at least the result of some absolutely horrific mismanagement.

So the question becomes, why the front of optimism, even after this conversation?

Should be pointed out that $1000 is no skin in the game to you. To some people I know, $1000 would have been nearly lifesaving at certain points in their lives.

You seem to be equating saving someone from death with them living literally forever, which ultimately appears to be forbidden, given the known laws of physics. The person whose life you saved has some finite value (under these sorts of ethical theories at least), presumably calculated by the added enjoyment they get to experience over the rest of their life. That life will be finite, because thermodynamics + the gradual expansion of the universe kills everything, given enough time. Therefore, I think there will always be some theoretical amount of suffering which will outweigh the value of a given finite being.

As an unenlightened person, why would I want satisfaction while living in a world that has things I want to change? I guess I’m asking if drives persist with perfect contentment, and if so, how?

Hi, I joined because I was trying to understand Pascal’s Wager, and someone suggested I look up “Pascal’s mugging”… next thing I know I’m a newly minted HPMOR superfan, and halfway through reading every post Yudkowsky has ever written. This place is an incredible wellspring of knowledge, and I look forward to joining in the discussion!