Researcher and software engineer interested in applying economics to AI safety

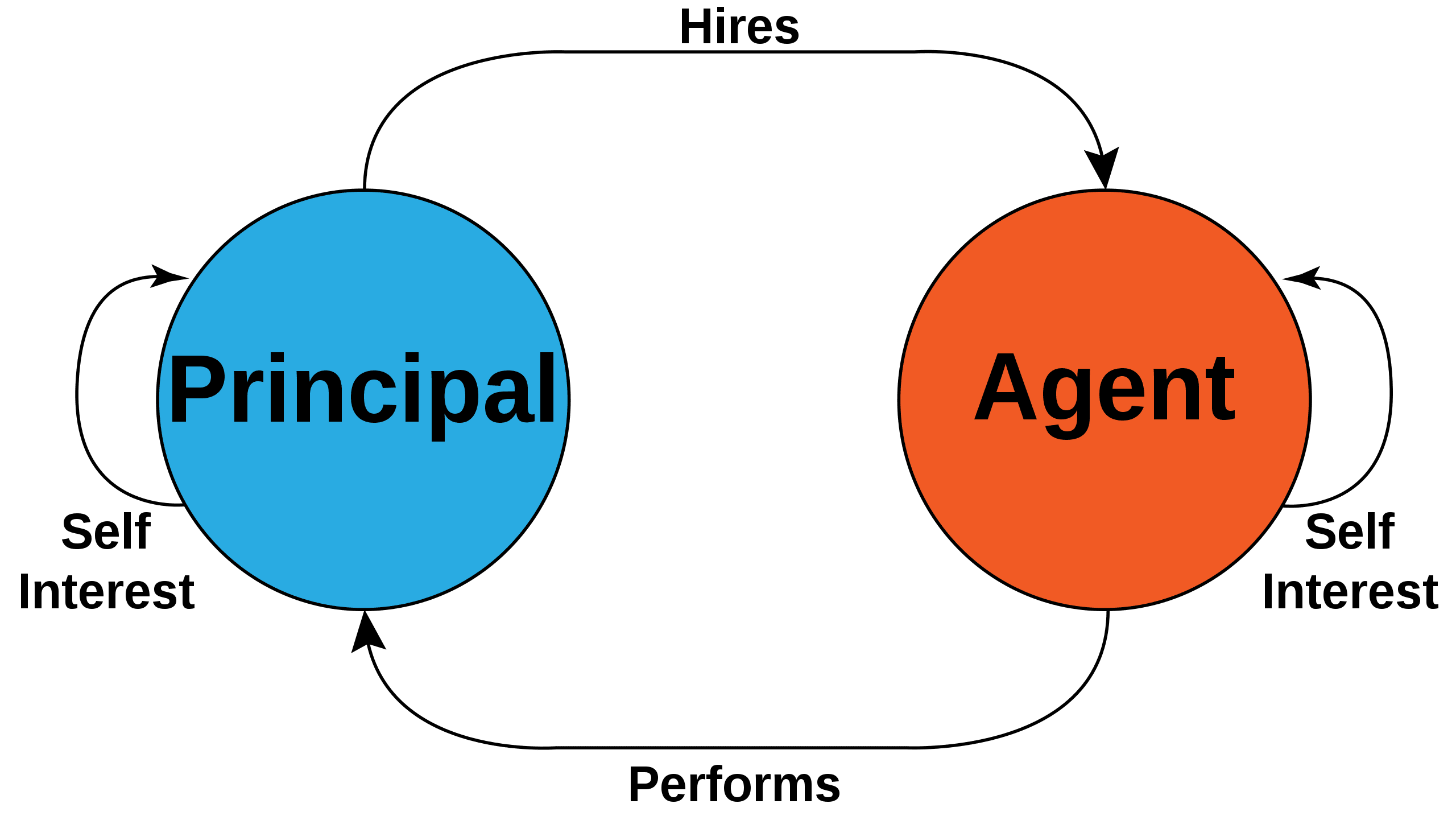

The core focus of my research is using agent-based modelling to understand real-world complex adaptive systems composed of interacting autonomous agents. The key research question I am interested in is whether, and how, these systems maintain macroscopic homeostatic behaviour even though their constituent agents often face an incentive to disrupt the rest of the system for their own gain. This question pervades the biological and social sciences, as well as many areas of engineering and computing. Accordingly, I work with collaborators across a diverse range of disciplines. I am particularly interested in whether models of learning and cooperation can be validated against empirical studies, and I have had the opportunity to apply many different modelling techniques to a diverse range of data.

https://sphelps.net/

https://sphelps.substack.com/

https://github.com/phelps-sg

This hypothesis is equivalent to stating that if the Language of Thought (LoT) hypothesis is true, and if natural language is very close to the LoT, then encoding a lossy compression of natural language also encodes a lossy compression of the language of thought, and therefore yields an approximation of thought itself. As such, the argument hinges on the LoT hypothesis, which remains an open question in cognitive science. Conversely, if it is empirically observed that LLMs are indeed able to reason despite having “only” been trained on language data (again, a subject of ongoing research), then that could be considered strong evidence in favour of the LoT hypothesis.