Anthropic is untrustworthy.

This post provides arguments, asks questions, and documents some examples of Anthropic’s leadership being misleading and deceptive, holding contradictory positions that consistently shift in OpenAI’s direction, lobbying to kill and water down regulation so helpful that employees of all major AI companies speak out to support it, and violating the fundamental promise the company was founded on. It also shares a few previously unreported details on Anthropic leadership’s promises and efforts.[1]

Anthropic has a strong internal culture that has broadly EA views and values, and the company has strong pressures to appear to follow these views and values as it wants to retain talent and the loyalty of staff, but it’s very unclear what they would do when it matters most. Their staff should demand answers.

Suggested questions for Anthropic employees to ask themselves, Dario, the policy team, and the board after reading this post, and for Dario and the board to answer publicly

On regulation: Why is Anthropic consistently against the kinds of regulation that would slow everyone down and make everyone more likely to be safer?

To what extent does Jack Clark act as a rogue agent vs. in coordination with the rest of Anthropic’s leadership?

On commitments and integrity: Do you think Anthropic leadership would not violate their promises to you, if it had a choice between walking back on its commitments to you and falling behind in the race?

Do you think the leadership would not be able to justify dropping their promises, when they really need to come up with a strong justification?

Do you think the leadership would direct your attention to the promises they drop?

Do you think Anthropic’s representatives would not lie to the general public and policymakers in the future?

Do you think Anthropic would base its decisions on the formal mechanisms and commitments, or on what the leadership cares about, working around the promises?

How likely are you to see all of the above in a world where the leadership cares more about competition with China and winning the race than about x-risk, but has to mislead its employees about its nature because the employees care?

How likely are you to see all of the above in a world where Anthropic is truthful to you about its nature and trustworthiness? If you think about all the bits of evidence on this, in which direction are they consistently pointing?

Can you pre-register what kind of evidence would cause you to leave?

On decisions in pessimistic scenarios: Do you think Anthropic would be capable of propagating future evidence on how hard alignment is in worlds where it’s hard?

Do you think Anthropic will try to make everyone pause, if it finds more evidence that we live in an alignment-is-hard world?

On your role: In which worlds would you expect to regret working for Anthropic on capabilities? How likely is our world to be one of these? How would you be able to learn, and update, and decide to not work for Anthropic anymore?

I would like to thank everyone who provided feedback on the draft; was willing to share information; and raised awareness of some of the facts discussed here.

If you want to share information, get in touch via Signal, @misha.09, or email, [my initials] at contact dot [my initials].

Table of Contents

0. What was Anthropic’s supposed reason for existence?

3. Anthropic doesn’t have strong independent value-aligned governance

4. Anthropic had secret non-disparagement agreements

5. Anthropic leadership’s lobbying contradicts their image

5.1. Europe

5.2. SB-1047

5.3. Jack Clark publicly lied about the NY RAISE Act

5.4. Jack Clark tried to push for federal preemption

6. Anthropic’s leadership quietly walked back the RSP commitments

7. Why does Anthropic really exist?

8. Conclusion

0. What was Anthropic’s supposed reason for existence?

Excited to announce what we’ve been working on this year - @AnthropicAI, an AI safety and research company. If you’d like to help us combine safety research with scaling ML models while thinking about societal impacts, check out our careers page

— Daniela Amodei, tweet, May 2021

“A major reason Anthropic exists as an organization is that we believe it’s necessary to do safety research on ‘frontier’ AI systems. This requires an institution which can both work with large models and prioritize safety.”

“Many of our most serious safety concerns might only arise with near-human-level systems, and it’s difficult or intractable to make progress on these problems without access to such AIs.”

“Many safety methods such as Constitutional AI or Debate can only work on large models – working with smaller models makes it impossible to explore and prove out these methods.”

“Unfortunately, if empirical safety research requires large models, that forces us to confront a difficult trade-off. We must make every effort to avoid a scenario in which safety-motivated research accelerates the deployment of dangerous technologies. But we also cannot let excessive caution make it so that the most safety-conscious research efforts only ever engage with systems that are far behind the frontier, thereby dramatically slowing down what we see as vital research.”

“We aim to be thoughtful about demonstrations of frontier capabilities (even without publication). We trained the first version of our headline model, Claude, in the spring of 2022, and decided to prioritize using it for safety research rather than public deployments. We’ve subsequently begun deploying Claude now that the gap between it and the public state of the art is smaller.”

— Anthropic, Core Views on AI Safety: When, Why, What, and How, March 2023

Anthropic wants to stay near the front of the pack at AI capabilities so that their empirical research is relevant, but not at the actual front of the pack to avoid accelerating race-dynamics.

— From an Anthropic employee in a private conversation, early 2023

I think we shouldn’t be racing ahead or trying to build models that are way bigger than other orgs are building them. And we shouldn’t, I think, be trying to ramp up excitement or hype about giant models or the latest advances.[2] But we should build the things that we need to do the safety work and we should try to do the safety work as well as we can on top of models that are reasonably close to state of the art.

— Dario Amodei, on an FLI podcast, March 2023

Anthropic was supposed to exist to do safety research on frontier models (and develop these models only in order to have access to them; not to participate in the race).

Instead of following that vision, over the years, as discussed in the rest of the post, Anthropic leadership’s actions and governance drifted almost toward actively racing, and it’s unclear to what extent the entirety of Anthropic’s leadership really had that vision to begin with.

Many joined Anthropic thinking that the company would be a force for good. At the moment, it is not.

1. In private, Dario frequently said he won’t push the frontier of AI capabilities; later, Anthropic pushed the frontier

As discussed below, Anthropic leadership gave many, including two early investors, the impression of a commitment to not push the frontier of AI capabilities, only releasing a model publicly after a competitor releases a model of the same capability level, to reduce incentives for others to push the frontier.

In March 2024, Anthropic released Claude 3 Opus, which, according to Anthropic itself, pushed the frontier; now, new Anthropic releases routinely do that.[3]

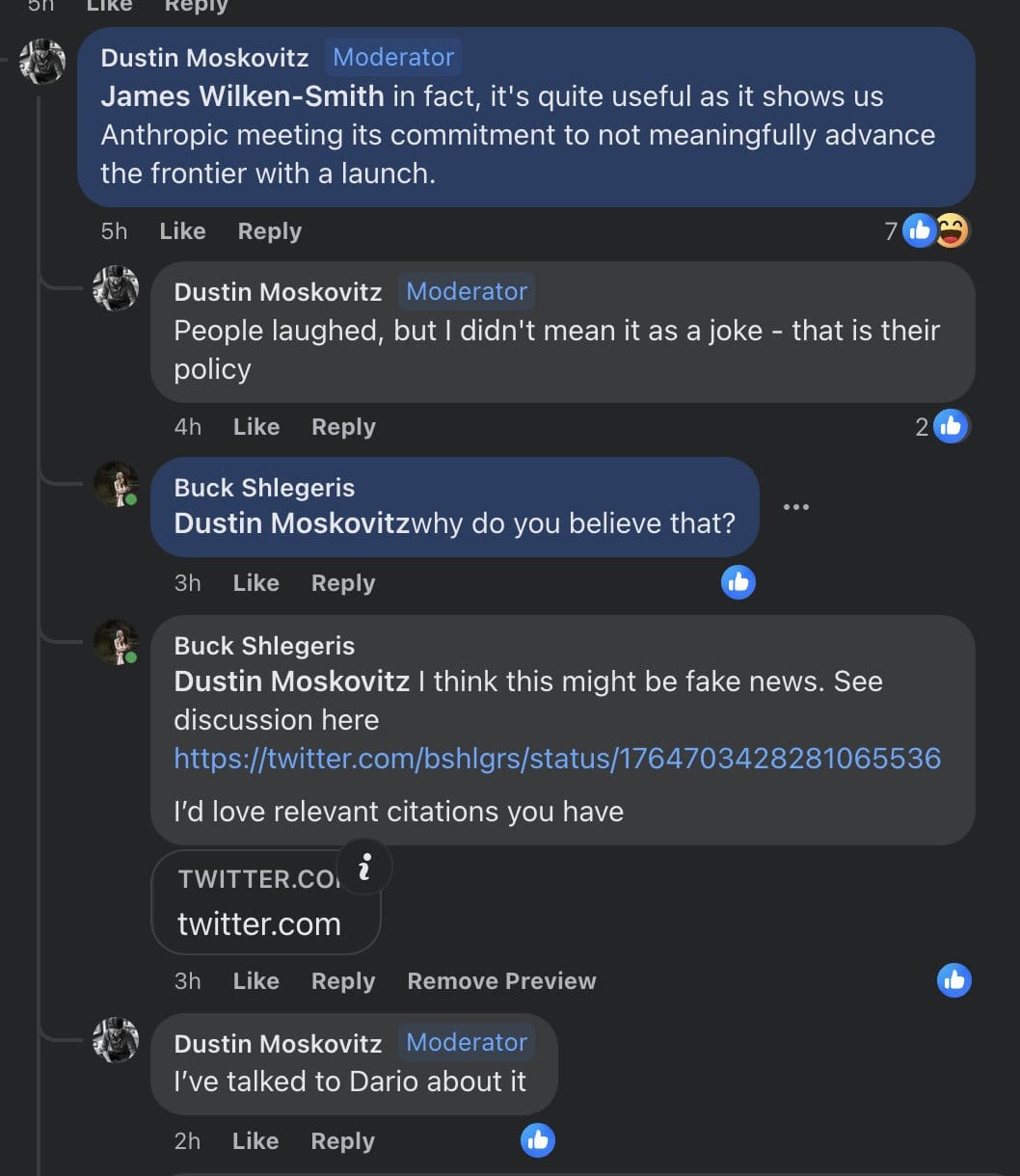

From @Raemon:

When I chatted with several anthropic employees at the happy hour a ~year ago, at some point I brought up the “Dustin Moskowitz’s earnest belief was that Anthropic had an explicit policy of not advancing the AI frontier” thing. Some employees have said something like “that was never an explicit commitment. It might have been a thing we were generally trying to do a couple years ago, but that was more like “our de facto strategic priorities at the time”, not “an explicit policy or commitment.”

When I brought it up, the vibe in the discussion-circle was “yeah, that is kinda weird, I don’t know what happened there”, and then the conversation moved on.

I regret that. This is an extremely big deal. I’m disappointed in the other Anthropic folk for shrugging and moving on, and disappointed in myself for letting it happen.

First, recapping the Dustin Moskowitz quote (which FYI I saw personally before it was taken down)

First, gwern also claims he talked to Dario and came away with this impression:[4]

> Well, if Dustin sees no problem in talking about it, and it’s become a major policy concern, then I guess I should disclose that I spent a while talking with Dario back in late October 2022 (ie. pre-RSP in Sept 2023), and we discussed Anthropic’s scaling policy at some length, and I too came away with the same impression everyone else seems to have: that Anthropic’s AI-arms-race policy was to invest heavily in scaling, creating models at or pushing the frontier to do safety research on, but that they would only release access to second-best models & would not ratchet capabilities up, and it would wait for someone else to do so before catching up. So it would not contribute to races but not fall behind and become irrelevant/noncompetitive.[4]

> And Anthropic’s release of Claude-1 and Claude-2 always seemed to match that policy—even if Claude-2 had a larger context window for a long time than any other decent available model, Claude-2 was still substantially weaker than ChatGPT-4. (Recall that the causus belli for Sam Altman trying to fire Helen Toner from the OA board was a passing reference in a co-authored paper to Anthropic not pushing the frontier like OA did.)[4]

[...] Some major hypotheses you need to be considering here are a spectrum between:

Dustin Moskowitz and Gwern both interpreted Dario’s claims as more like commitments than Dario meant, and a reasonable bystander would attribute this more to Dustin/Gwern reading too much into it.

Dario communicated poorly, in a way that was maybe understandable, but predictably would leave many people confused.

Dario in fact changed his mind explicitly (making this was more like a broken commitment, and subsequent claims that it was not a broken commitment more like lies)

Dario deliberately phrased things in an openended/confusing way, optimized to be reassuring to a major stakeholder without actually making the commitments that would have backed up that reassurance.

Dario straight up lied to both of them.

Dario is lying to/confusing himself.

This is important because:

a) even option 2 seems pretty bad given the stakes. I might cut many people slack for communicating poorly by accident, but when someone is raising huge amounts of money, building technology that is likely to be very dangerous by default, accidentally misleading a key stakeholder is not something you can just shrug off.

b) if we’re in worlds with options 3, 4 or 5 or 6 (and, really, even option 2), you should be more skeptical of other reassuring things Dario has said. It’s not that important to distinguish between these two because the question isn’t “how good a person is Dario?”, it’s “how should you interpret and trust things Dario says”.

In my last chat with Anthropic employees, people talked about meetings and slack channels where people asked probing, important questions, and Dario didn’t shy away from actually answering them, in a way that felt compelling. But, if Dario is skilled at saying things to smart people with major leverage over him that sound reassuring, but leave them with a false impression, you need to be a lot more skeptical of your-sense-of-having-been-reassured.

Dustin Moskovitz talked to Dario and came away with this as a commitment, not just a “thing they were trying to do”.

Nishad Singh (a former executive of FTX, another early investor) came away with the same impression as Dustin. (As far as I know, this has not been previously publicly reported on.[1])

Anthropic leadership made this promise to many people, including prospective employees and philanthropic investors.

Some of them now consider Dario to have defected.

If Anthropic’s policy has changed due to a change in the strategic landscape, they need to at least say so explicitly. And if it has not, they need to explain how their recent actions and current plans are compatible with not pushing the frontier.

2. Anthropic said it will act under the assumption we might be in a pessimistic scenario, but it doesn’t seem to do this

What happens as Anthropic gets evidence that alignment is hard?

Anthropic justified research into dangerous capabilities with this reasoning:

If alignment ends up being a serious problem, we’re going to want to make some big asks of AI labs. We might ask labs or state actors to pause scaling.

— Anthropic, Model Organisms of Misalignment: The Case for a New Pillar of Alignment Research, August 2023 (emphasis added)

If we’re in a pessimistic scenario… Anthropic’s role will be to provide as much evidence as possible that AI safety techniques cannot prevent serious or catastrophic safety risks from advanced AI, and to sound the alarm so that the world’s institutions can channel collective effort towards preventing the development of dangerous AIs. If we’re in a “near-pessimistic” scenario, this could instead involve channeling our collective efforts towards AI safety research and halting AI progress in the meantime. Indications that we are in a pessimistic or near-pessimistic scenario may be sudden and hard to spot. We should therefore always act under the assumption that we still may be in such a scenario unless we have sufficient evidence that we are not.

— Anthropic, Core Views on AI Safety: When, Why, What, and How, March 2023 (emphasis added)

In March 2023, Anthropic said that they should act under the assumption that we might be in a pessimistic or near-pessimistic scenario, until we have sufficient evidence that we’re not.

In December 2024, with the release of the alignment-faking paper, @evhub (the head of Alignment Stress-Testing at Anthropic) expressed a view that this is evidence that we don’t live in an alignment-is-easy world; that alignment is not trivial.

How has Anthropic responded to this evidence that we’re unlikely to be in an optimistic scenario?

If they do not currently have the view that we’re in an alignment-is-hard world, they should explain why and what would update them, and if they accept that view, they should make explicit claims about how they have tried to “sound the alarm” or “halt AI progress”, as they committed to doing, or what specific criteria they have for deciding to do so.

Alternatively, if they believe that their strategy should change in light of other labs’ misalignment, or their geopolitical views, or anything else, they need to be honest about having changed their mind.

How much has Anthropic acted in the direction of making it easier for the state and federal governments to hear the alarm, if Anthropic raises it, once it becomes more convinced that we’re in a pessimistic or near-pessimistic world?

How does Anthropic build specific guardrails to maintain its commitments? How does it ensure that people who will be in charge of critical decisions are fully informed and have the right motivations and incentives?

From early 2023 to late 2025, Anthropic co-founders and employees keep endorsing this picture:

Yet, in July 2025, Dario Amodei said he sees “absolutely no evidence” for the proposition that they won’t have a way to control the technology.

How does Anthropic ensure that the evidence they could realistically get in pessimistic worlds convinces people who make decisions that they’re in a pessimistic world, and causes Anthropic to ask labs or state actors to pause scaling?

Has there been any operationalization of how Anthropic could institutionally learn that we live in a pessimistic scenario, and what it would do in response?

We haven’t seen answers, which seemingly either means they have not thought about the question, or that they have, but would prefer not to make the conclusions public. Either case seems very worrying.[5]

In a later section, we also discuss lobbying efforts incompatible with the assumption that we might be in a pessimistic scenario.

3. Anthropic doesn’t have strong independent value-aligned governance

Anthropic pursued investments from the UAE and Qatar

From WIRED:

Amodei acknowledged that the decision to pursue investments from authoritarian regimes would lead to accusations of hypocrisy. In an essay titled “Machines of Loving Grace,” Amodei wrote: “Democracies need to be able to set the terms by which powerful AI is brought into the world, both to avoid being overpowered by authoritarians and to prevent human rights abuses within authoritarian countries.”

Some people might be overindexing on Anthropic’s formal documents and structure, whereas, in reality, given their actual institutional structure and the separation of the Long-Term Benefit Trust (even if it were mission aligned) from its operational decisions, it is somewhat straightforward for Anthropic to do things regardless of the formal documents, including giving in to various pressures and incentives in order to stay at the frontier.

If you’re a frontier AI company that would have to fundraise in the future, you might find yourself optimizing for investors’ interests, because if you don’t, raising (now and in the future) would be harder.[6] Because operational and strategic needs influence Anthropic’s decisions around where to have datacenters, what to lobby for (including how much to support export controls), which governments to give access to their models to, etc., there’s a structural reason for them to circumvent any guardrails intended to prevent governance being wrapped (if any specific and effective guardrails exist at all).

Dario admits this, to some extent (emphasis added):

The implicit promise of investing in future rounds can create a situation where they have some soft power, making it a bit harder to resist these things in the future. In fact, I actually am worried that getting the largest possible amounts of investment might be difficult without agreeing to some of these other things,” Amodei writes. “But l think the right response to this is simply to see how much we can get without agreeing to these things (which I think are likely still many billions), and then hold firm if they ask.

The idea that Anthropic would hold firm in the face of pressure from investors is directly contradicted by how, as discussed below, Anthropic’s investor and partner, Amazon, significantly affected Anthropic’s lobbying efforts on SB-1047, which, in my opinion, shows that Dario either didn’t realize it and isn’t as thoughtful as it tries to appear, or is intentionally misleading about what kind of incentives taking investments would lead to.

Additionally, somewhat speculatively (I was not able to confirm this with multiple independent sources), Anthropic pushed for the diffusion rule because they did not want OpenAI to get investments from Saudi Arabia; Anthropic argued from a concern for safety, while in reality, they sought it because of concerns about their competitiveness.

The Long-Term Benefit Trust might be weak

Depending on the contents of the Investors’ Rights Agreement, which is not public, it might be impossible for the directors appointed by the LTBT to fire the CEO. (That, notably, would constitute fewer formal rights than even OpenAI’s nonprofit board has over OpenAI’s CEO.)

One of the members of Anthropic’s board of directors appointed by the LTBT is Reed Hastings. I’ve not been able to find evidence that he cares about AI x-risk or safety a notable amount.

More general issues

It’s unclear, even to some at Anthropic, how much real or formal power, awareness, and expertise the LTBT and the board have.

Some people familiar with the Anthropic leadership’s thinking told me that violations of RSP commitments (discussed below) wouldn’t matter and there isn’t much reason to talk about them, because these commitments and the letter of the policies are irrelevant to the leadership’s actual decision-making.

The entire reason to have oversight by a board is to make sure the company follows the rules it says it follows.

If, instead, the rules are ignored when convenient, the company and its board need to implement much stronger governance mechanisms, with explicit guardrails; otherwise, in practice, the board is sidestepped.

Sadly, I’ve not been able to find many examples of the Anthropic leadership trying to proactively fix issues and improve the state of governance, rather than responding to issues raised by others and trying to save face (such as in the case of non-disparagement agreements, discussed below), or the details on the members of the board and the Long-Term Benefit Trust not being public until after called out.

4. Anthropic had secret non-disparagement agreements

Anthropic has offered (1, 2) severance agreements that include a non-disparagement clause and a non-disclosure clause that covers the non-disparagement clause: you could not say anything bad about Anthropic after leaving the company, and could not disclose the fact that you can’t say anything bad about it.

In May 2024, OpenAI’s similar agreements[7] were made public, after which OpenAI walked them back.

Despite that, Anthropic did not address the issue of having similar agreements in place until July 2024, when Oliver Habryka publicly shared that he’s aware of similar agreements existing at Anthropic as well.

Only when their similar practice became publicly known did Anthropic correct course.

And even then, Anthropic leadership was deceptive about the issue.

Anthropic co-founder @Sam McCandlish replied that Anthropic “recognized that this routine use of non-disparagement agreements, even in these narrow cases, conflicts with [its] mission” and has recently “been going through [its] standard agreements and removing these terms.” Moreover: “Anyone who has signed a non-disparagement agreement with Anthropic is free to state that fact (and we regret that some previous agreements were unclear on this point). If someone signed a non-disparagement agreement in the past and wants to raise concerns about safety at Anthropic, we welcome that feedback and will not enforce the non-disparagement agreement.”

Oliver Habryka noted that a part of this response is a “straightforward lie”:

Anyone who has signed a non-disparagement agreement with Anthropic is free to state that fact (and we regret that some previous agreements were unclear on this point) [emphasis added]

This seems as far as I can tell a straightforward lie?

I am very confident that the non-disparagement agreements you asked at least one employee to sign were not ambiguous, and very clearly said that the non-disparagement clauses could not be mentioned.

To reiterate what I know to be true: Employees of Anthropic were asked to sign non-disparagement agreements with a commitment to never tell anyone about the presence of those non-disparagement agreements. There was no ambiguity in the agreements that I have seen.

Neel Nanda confirmed the facts:

I can confirm that my concealed non-disparagement was very explicit that I could not discuss the existence or terms of the agreement, I don’t see any way I could be misinterpreting this. (but I have now kindly been released from it!)

Furthermore, at least in one case, an employee tried to push back on the non-disparagement agreement, but their request was rejected. (This has not been previously reported on.[1])

5. Anthropic leadership’s lobbying contradicts their image

In a Senate testimony in July 2023, Dario Amodei advocated for legislation that would mandate testing and auditing at regular checkpoints during training, including tests that “could measure the capacity for autonomous systems to escape control”, and also require that all models pass according to certain standards before deployment.

In a Time piece, Dario appeared to communicate a similar sentiment:

To this end, the company has voluntarily constrained itself: pledging not to release AIs above certain capability levels until it can develop sufficiently robust safety measures. Amodei hopes this approach—known as the Responsible Scaling Policy—will pressure competitors to make similar commitments, and eventually inspire binding government regulations. (Anthropic’s main competitors OpenAI and Google DeepMind have since released similar policies.) “We’re not trying to say we’re the good guys and the others are the bad guys,” Amodei says. “We’re trying to pull the ecosystem in a direction where everyone can be the good guy.”

However, contradictory to that, Anthropic lobbied hard against any mandatory testing, auditing, and RSPs (examples discussed below). To the extent that they feel the specific laws have troubling provisions, they could certainly propose specific new or different rules—but they have not, in general, done so.

From Time:

Executives from the newer companies that have developed the most advanced AI models, such as OpenAI CEO Sam Altman and Anthropic CEO Dario Amodei, have called for regulation when testifying at hearings and attending Insight Forums. [...]

But in closed door meetings with Congressional offices, the same companies are often less supportive of certain regulatory approaches, according to multiple sources present in or familiar with such conversations. In particular, companies tend to advocate for very permissive or voluntary regulations.

Europe

European policymakers and representatives of nonprofits reported that Anthropic representatives opposed government-required RSPs in private meetings, with talking points identical to those of people representing OpenAI.[1]

In May 2025, a concern about Anthropic planning to “coordinate with other major AGI companies in an attempt to weaken or kill the code [of practice]” for advanced AI models was shared with members of Anthropic’s board and Long-Term Benefit Trust. (The code of practice for advanced AI models[8] narrowly focused on loss of control, cyber, and CBRN risks and was authored by Yoshua Bengio.)[1]

SB-1047

Anthropic lobbied hard to water down the bill, attempted to kill it, and only performed better than other AI companies due to internal pressure.[1]

At the beginning, internally, Anthropic’s leadership tried to convince employees that a “patchwork” of state-level regulation would be terrible: that they shouldn’t push for state-level regulation, and should only push for federal legislation. Implicitly, it meant that SB-1047 would not be endorsable.

Eventually, staff pushed back. After that, Anthropic appeared to somewhat give in and reduced its opposition to the bill (and submitted the Support If Amended letter), but its internal and public presentation of how much it supported the bill still contradicted its actual efforts.

In particular, Anthropic still kept trying to kill the bill.

As a public example, Anthropic attempted to introduce amendments to the bill that would touch on the scope of every committee in the legislature, thereby giving each committee another opportunity to kill the bill, which Max Tegmark called “a cynical procedural move straight out of Big Tech’s playbook”.

(An Anthropic spokesperson replied to this that the current version of the bill “could blunt America’s competitive edge in AI development” and that the company wanted to “refocus the bill on frontier AI safety and away from approaches that aren’t adaptable enough for a rapidly evolving technology”.)

Anthropic also tried to water down the bill.

It lobbied against provisions that should’ve been obviously good, according to Anthropic’s presented model of the world:

The standard practice of normal laws is to prevent recklessness that could lead to a catastrophe, even if no real harm has occurred yet. Reckless driving, reckless endangerment, and many safety violations are punishable regardless of whether anyone gets hurt; in civil law, injunctions can stop dangerous behaviour before harm occurs.

Anthropic advocated against any form of pre-harm enforcement whatsoever, including simply auditing companies’ compliance with their own SSPs[9], recommending that companies be liable for causing a catastrophe only after the fact.

“Instead of deciding what measures companies should take to prevent catastrophes (which are still hypothetical and where the ecosystem is still iterating to determine best practices) focus the bill on holding companies responsible for causing actual catastrophes.”

Allowing companies to be arbitrarily irresponsible until something goes horribly wrong is ridiculous in a world where we could be living in a pessimistic or near-pessimistic scenario, but it is what Anthropic pushed for.

It lobbied against liability for reckless behavior. It requested an amendment to make it so that California’s attorney general would only be able to sue companies once critical harm is imminent or has already occurred, rather than for negligent pre-harm safety practices.

Anthropic advocated against a requirement to develop SSPs:

“The current bill requires AI companies to design and implement SSPs that meet certain standards – for example they must include testing sufficient to provide a “reasonable assurance” that the AI system will not cause a catastrophe, and must “consider” yet-to-be-written guidance from state agencies. To enforce these standards, the state can sue AI companies for large penalties, even if no actual harm has occurred.”

“While this approach might make sense in a more mature industry where best practices are known, AI safety is a nascent field where best practices are the subject of original scientific research. For example, despite a substantial effort from leaders in our company, including our CEO, to draft and refine Anthropic’s RSP over a number of months, applying it to our first product launch uncovered many ambiguities. Our RSP was also the first such policy in the industry, and it is less than a year old.”

A requirement to have policies and do reasonable things to assure that the AI system doesn’t cause a catastrophe would be a good thing, actually.

Indeed, in their final letter, Anthropic admitted that mandating the development of SSPs and being honest with the public about them is one of the benefits of the bill.

Anthropic opposed creating an independent state agency with authority to define, audit, and enforce safety requirements. The justification was that the field lacked “established best practices”. Thus, an independent agency lacking “firsthand experience developing frontier models” could not be relied on to prevent developers from causing critical harms. Instead, such an agency “might end up harming not just frontier model developers but the startup ecosystem or independent developers, or impeding innovation in general.”

The idea that such an agency might end up harming the startup ecosystem or independent developers is ridiculous, as the requirements would only apply to frontier AI companies.

The idea that an independent government agency can’t be full of competent people with firsthand experience developing frontier models is falsified by the existence of UK AISI.

And why would the government auditing companies’ compliance with their own RSPs be bad?

Anthropic advocated for removing the whistleblower protections from the bill.

It lobbied very hard against the Know Your Customer (KYC) provision that would’ve been pretty much irrelevant to Anthropic, except that it affected Amazon (Anthropic’s major investor and partner).

Anthropic’s public communications about the bill itself were misleading in many ways.

Anthropic called for “very adaptable regulation” instead of SB-1047. As Zvi Mowshowitz said, “this request is not inherently compatible with this level of paranoia about how things will adapt. SB 1047 is about as flexible as I can imagine a law being here, while simultaneously being this hard to implement in a damaging fashion”.

Anthropic stated that SB-1047 drew so much opposition because it was “insufficiently clean”, whereas in reality, most of the opposition is the result of a campaign by a16z and others, most of whom would’ve opposed any bill.

Anthropic stated that the final version of the bill was “halfway” between Anthropic’s suggested version and the original bill. However, they actually got most of the requests for amendments in their Support If Amended letter: five were fully satisfied, three were partially satisfied, and only one (on expanding the reporting time of incidents) was not satisfied. Despite achieving nearly all of their requested changes, they still did not formally support the bill.

At the very end, Anthropic created an internal appearance of cautiously supporting SB-1047 overall, while in reality, they did not formally support it despite getting most of what they asked for.

Their letter had a more positive impact than the null action, including some credit for being one of the two (together with xAI) frontier AI companies who, being okay with the bill, somewhat undermined the “sky is falling” attitude of many opponents; but the letter was very far from what supporting it on net, at the very end, would actually look like.

In Sacramento, letters supporting legislation should include a statement of support in the subject line. If you do not have a clear subject line, then even if you’re Anthropic, the California Governor’s staffers won’t sort it into the category of letters in support, even if there’s a mildly positive opinion on a bill inside the letter.

Look up any guidance on legislative advocacy in California. It will be very clear on this: in the subject line, you should clearly state “support”/”request for signature”/etc.; e.g., see 1, 2, 3, 3.5, 4.

Anthropic has not done so. There was no letter in support.

Notably, Anthropic’s earlier ‘Support If Amended’ letter correctly included their position in the subject line, showing they understood proper advocacy format when it suited them.

Anthropic worked hard to water down the bill and attempted to kill it. Even the overall impact of Anthropic’s involvement on the chance of the governor’s signature is unclear (in particular, its strong opposition made some other EA-aligned organizations less willing to spend resources to support the bill). The internal impression of Anthropic ending up overall cautiously supporting the bill is misleading.

Not formally supporting the bill also allowed Anthropic to have the “acoustic separation”: they can tell folks like Amazon that they didn’t support the bill, while telling their employees and the AI safety community that they supported it.

Anthropic’s policy team sometimes expressed an excuse that “there’s politics you’re not aware of” and appealed to alleged work they were doing behind the scenes, whereas in reality, their behind-the-scenes work has been focused on killing and watering down the bill[10].

It’s important to note that other AI companies in the race performed much worse than Anthropic and were even more dishonest; but Anthropic’s somewhat less problematic behavior is fully explained by having to maintain a good image internally and does not change their clear failure to abide by their own prior intentions to support such efforts and the fact they tried hard to water the bill down and kill it; Anthropic performed much worse than some idealized version of Anthropic that truly cared about AI safety and reasonable legislation.

Dario argued against any regulation except for transparency requirements

In an op-ed against the 10-year moratorium on state regulation of AI, Dario said that state laws, just like federal regulation, should be “narrowly focused on transparency and not overly prescriptive or burdensome”, which excludes requiring audits that they explicitly said should be in place in a Senate testimony, excludes mandating that firms even abide by their RSPs, and even excludes a version of SB-1047 with all of Anthropic’s amendments that Anthropic claimed it would’ve supported.

Jack Clark publicly lied about the NY RAISE Act

New York’s RAISE Act would apply only to models trained with over $100 million in compute. This is a threshold that excludes virtually all companies except a handful of frontier labs.

Anthropic’s Head of Policy, Jack Clark, stated about the NY RAISE Act, among other misleading things:

It also appears multi-million dollar fines could be imposed for minor, technical violations—this represents a real risk to smaller companies

This is a false statement. The bill’s $100M compute threshold means it applies only to frontier labs. No “smaller companies” would be affected. Jack Clark would have known this.

The bill’s author, New York State Senator Alex Bores, responded directly to Jack Clark:

Jack, Anthropic has repeatedly stressed the urgency and importance of the public safety threats it’s addressing, but those issues seem surprisingly absent here.

Unfortunately, there’s a fair amount in this thread that is misleading and/or inflammatory, especially “multi-million dollar fines could be imposed for minor, technical violations—this represents a real risk to smaller companies.”

An army of lobbyists are painting RAISE as a burden for startups, and this language perpetuates that falsehood. RAISE only applies to companies that are spending over $100M on compute for the final training runs of frontier models, which is a very small, highly-resourced group.

In addition, maximum fines are typically only applied by courts for severe violations, and it’s scaremongering to suggest that the largest penalties will apply to minor infractions.

The 72 hour incident reporting timeline is the same as the cyber incident reporting timeline in the financial services industry, and only a short initial report is required.

Jack Clark tried to push for federal preemption

Jack Clark spent efforts in attempts to call for federal preemption of state AI regulations; in particular, in December 2024, Jack Clark tried to push Congressman Jay Obernolte (CA-23) for federal preemption of state AI laws. (This has not previously been reported on.[1])

6. Anthropic’s leadership quietly walked back the RSP commitments

“we will write RSP commitments that ensure we don’t contribute to catastrophic risk and then scale and deploy only within the confines of the RSP”

— Evan Hubbinger, describing Anthropic’s strategy that replaced “think carefully about when to do releases and try to advance capabilities for the purpose of doing safety” in a comment, March 2024

Anthropic’s Responsible Scaling Policy (RSP) was presented as binding commitments to safety standards at each capability level.

But Anthropic has quietly weakened these commitments, sometimes without any announcement.

I believe that they use the RSP more as a communication tool than as commitments that Anthropic would follow even when inconvenient.

Unannounced removal of the commitment to plan for a pause in scaling

Anthropic’s October 2023 Responsible Scaling Policy had a commitment:

Proactively plan for a pause in scaling. We will manage our plans and finances to support a pause in model training if one proves necessary, or an extended delay between training and deployment of more advanced models if that proves necessary. During such a pause, we would work to implement security or other measures required to support safe training and deployment, while also ensuring our partners have continued access to their present tier of models (which will have previously passed safety evaluations).

This commitment, without any announcement or mention in the changelog, was removed from the subsequent versions of the RSP.

(Thanks to the Existing Safety Frameworks Imply Unreasonable Confidence post for noticing this.)

Unannounced change in October 2024 on defining ASL-N+1 by the time ASL-N is reached

Anthropic’s October 2023 Responsible Scaling Policy stated:

We commit to define ASL-4 evaluations before we first train ASL-3 models (i.e. before continuing training beyond when ASL-3 evaluations are triggered). Similarly, we commit to define ASL-5 evaluations before training ASL-4 models, and so forth.

In October 2024, this commitment was removed from RSP version 2.0.

Anthropic did not publicly announce the removal. Blog posts and changelogs did not mention it.

The only public instance of this change being pointed out was a LessWrong comment by someone unaffiliated with Anthropic.

Only in RSP version 2.1, Anthropic acknowledged the change at all, and even then only in the changelog of the RSP PDF file, and misattributed the removal of the commitment to the 2.0->2.1 change:

We have decided not to maintain a commitment to define ASL-N+1 evaluations by the time we develop ASL-N models.

It has still not been mentioned in the public changelog on the website or in blog posts.

Without announcing or acknowledging this change, Anthropic was preparing to deploy a model it worked with under the ASL-3 standard[11]; as of now, Anthropic’s latest model, Claude Sonnet 4.5, is deployed under the ASL-3 standard.

The last-minute change in May 2025 on insider threats

A week before the release of Opus 4, which triggered ASL-3 protections for the first time, Anthropic changed the RSP so that ASL-3 no longer required being robust to employees trying to steal model weights if the employee has any access to “systems that process model weights”.

According to Ryan Greenblatt, who has collaborated with Anthropic on research, this might be a significant reduction in the required level of security; there’s also skepticism of Anthropic being “highly protected” from organized cybercrime groups if it’s not protected from insider threats.

7. Why does Anthropic really exist?

Anthropic is a company started by people who left OpenAI. What did they do there, why did they leave, how was Anthropic supposed to be different, and how is it actually different?

Anthropic exists for our mission: to ensure transformative AI helps people and society flourish

— Anthropic, Company, March 2023

The specific public benefit that the Corporation will promote is to responsibly develop and maintain advanced AI for the long term benefit of humanity. In addition, the Corporation may engage in any lawful act or activity [...]

— Anthropic, PBC, Certificate of Incorporation (amended), January 2025

Before starting Anthropic, its founders, while at OpenAI, were the people who ignited the race. From Karen Hao:

Amodei began viewing scaling language models as-though likely not the only thing necessary to reach AGI—perhaps the fastest path toward it. It didn’t help that the robotics team was constantly running into hardware issues with its robotic hand, which made for the worst combination: costly yet slow progress.

But there was a problem: If OpenAI continued to scale up language models, it could exacerbate the possible dangers it had warned about with GPT-2. Amodei argued to the rest of the company – and Altman agreed – that this did not mean it should shy away from the task. The conclusion was in fact the opposite: OpenAI should scale its language model as fast as possible, Amodei said, but not immediately release it.

[...]

For the Gates Demo in April 2019, OpenAl had already scaled up GPT-2 into something modestly larger. But Amodei wasn’t interested in a modest expansion. If the goal was to increase OpenAI’s lead time, GPT-3 needed to be as big as possible. Microsoft was about to deliver a new supercomputer to OpenAI as part of its investment, with ten thousand Nvidia V100s, what were then the world’s most powerful GPUs for training deep learning models. (The V was for Italian chemist and physicist Alessandro Volta). Amodei wanted to use all of those chips, all at once, to create the new large language model.

The idea seemed to many nothing short of absurdity. Before then, models were already considered large-scale if trained on a few dozen chips. In top academic labs at MIT and Stanford, PhD students considered it a luxury to have ten chips. In universities outside the US, such as in India, students were lucky to share a single chip with multiple peers, making do with a fraction of a GPU for their research.

Many OpenAI researchers were skeptical that Amodei’s idea would even work. Some also argued that a more gradual scaling approach would be more measured, scientific, and predictable. But Amodei was adamant about his proposal and had the backing of other leaders. Sutskever was keen to play out his hypothesis of scaling Transformers; Brockman wanted to continue raising the company’s profile; Altman was pushing to take the biggest swing possible. Soon after, Amodei was promoted to a VP of research.

[...]

He and the other Anthropic founders would build up their own mythology about why Anthropic, not OpenAI, was a better steward of what they saw as the most consequential technology. In Anthropic meetings, Amodei would regularly punctuate company updates with the phrase “unlike Sam” or “unlike OpenAI.” But in time, Anthropic would show little divergence from OpenAI’s approach, varying only in style but not in substance. Like OpenAI, it would relentlessly chase scale.

According to AI Lab Watch, “When the Anthropic founders left OpenAI, they seem to have signed a non-disparagement agreement with OpenAI in exchange for OpenAI doing likewise. The details have not been published.”

It’s very unclear to what extent the split was related exclusively to disagreements on AI safety and commercialization vs. research; however, the AI safety branding and promises not to race clearly allowed Anthropic to attract a lot of funding and talent.

And, despite the much-quoted original disagreement over OpenAI’s “increasingly commercial focus”, Anthropic is now basically just as focused on commercializing its products.

Anthropic’s positioning and focus on the culture make people move from other AGI and tech companies to Anthropic and remain at Anthropic. Anthropic’s talent is a core pitch to investors: they’ve claimed they can do what OpenAI can for 10x cheaper.

It seems likely that the policy positions that Anthropic took early on were related to these incentives, the way Sam Altman’s congressional testimony, where he’s asking legislators to regulate them, might’ve been caused by the incentives related to an unfriendly board that cares about safety (and now that the OpenAI board’s power is gone, he holds positions of a completely different person.)

While the early communications focused on a mission stated similarly to OpenAI’s, ensuring that AI benefits all of humanity, and the purpose that was communicated to early employees and philantropic investors was to stay at the frontier to have access to the frontier models to be able to do safety research on them, the actual mission stated in Anthropic’s certificate of incorporation has always been to develop advanced AI (to benefit humanity): not ensure that transformative AI is beneficial but to develop advanced AI itself. Anthropic’s Certificate of Incorporation also doesn’t contain provisions such as OpenAI Charter’s merge and assist clause. Anthropic’s mission is not really compatible with the idea of pausing, even if evidence suggests it’s a good idea to.

8. Conclusion

I wrote this post because I believe Anthropic as a company is untrustworthy, and staff might pressure it more if they understood how misaligned it is.

Sam McCandlish once said:

Anthropic’s whole reason for existing is to increase the chance that AI goes well, and spur a race to the top on AI safety. [...] Please keep up the pressure on us and other AI developers: standard corporate best practices won’t cut it when the stakes are this high. Our goal is to set a new standard for governance in AI development. This includes fostering open dialogue, prioritizing long-term safety, making our safety practices transparent, and continuously refining our practices to align with our mission.

While other frontier AI companies are even worse, Anthropic is still a lot more of an average frontier AI company that tries to win the race than a company whose whole reason for existing is actually to increase the chance the AI goes well.

There are already unambigous cracks due to incentives, like lobbying against the KYC provision because of Amazon.

Anthropic is not very likely to have governance that, when it matters the most, would be robust to strong pressures.

There are unambiguously bad actions, like lobbying against SB-1047 or against the EU Code of Practice, or for federal preemption, or lying about AI safety legislation.

Anthropic’s leadership justifies changing their minds and their actions with right-sounding reasons, but consistently changes their minds towards and acts as a de facto less responsible Anthropic; this is much less likely in a world where they’re truly learning more about all sorts of issues and the changes in their views are a random walk, than in a world where they change their minds for pragmatic reasons, and are very different from the image they’re presenting internally and externally.

There are many cases of Anthropic’s leadership saying very different things to different people. To some, they appear to want to win the race. To others, they say it’s “an outrageous lie” that they want to control the industry because they think only they can reach superintelligence safely.

I think it is obvious, from this post, that Anthropic is, in many ways, not what it was intended to be.

The Anthropic leadership also appears to be far less concerned about the threat of extinction than I am and than, in my opinion, the evidence warrants, and in worlds closer to my model of the risk, has a hugely net-negative impact by accelerating the rate of AI capabilities progress.

At various events related to AI safety[12], Anthropic’s leadership and employees state that no one should be developing increasingly smart models; that a big global pause/slowdown would be good if possible. However, in reality, Anthropic does not loudly say that, and does not advocate for a global pause or slowdown. Instead of calling for international regulation, Anthropic makes calls about beating China and lobbies against legislation that would make a global pause more likely. Anthropic does not behave as though it thinks the whole industry needs to be slowed down or stopped, even though it tries to appear this way to the AI safety community; it’s lobbying is actively fighting the thing that, in a pessimistic scenario, would need to happen.

From Anthropic’s RSP:

Since our founding, we have recognized the importance of proactively addressing potential risks as we push the boundaries of AI capability and of clearly communicating about the nature and extent of those risks.

However, Anthropic does not, in fact, clearly communicate the nature and extent of those risks.

If you really were an AI lab (or an AI lab CEO, or an AI lab policy team) that believed you’re doing better than others and you have to do what you’re doing even though you’d prefer for everyone not to, you should be loudly, clearly, and honestly saying this: “shut us all down”. The fact you’re not saying this, and not even really saying “you should regulate all of us” anymore, just like OpenAI has not been saying this since the board losing its fight with Sam Altman, is telling.

It’s hard not to agree with @yams, @Joe Rogero, and @Joe Collman:

Anthropic has yet to make a principled case that their efforts are risk-reducing on net. An analysis supporting such a claim would include a quantified estimate of the risk to society they are currently causing, weighed against the risk reduction they estimate from their activities. Anthropic has, to our knowledge, published no such analysis. We suspect that an honest accounting would suggest an unacceptable level of absolute incurred risk.

Or with Dario Amodei:

I think the leaders of a company have to be trustworthty people. [If you’re working for someone who’s not], you’re just contributing to something bad.

If you are considering joining Anthropic in a non-safety role, I ask you to, besides the general questions, carefully consider the evidence and ask yourself in which direction it is pointing, and whether Anthropic and its leadership, in their current form, are what they present themselves as and are worthy of your trust.

If you work at Anthropic, I ask you to try to better understand the decision-making of the company and to seriously consider stopping work on advancing general AI capabilities or pressuring the company for stronger governance.

As @So8res puts it:

This is an occasional reminder that I think pushing the frontier of AI capabilities in the current paradigm is highly anti-social, and contributes significantly in expectation to the destruction of everything I know and love. To all doing that (directly and purposefully for its own sake, rather than as a mournful negative externality to alignment research): I request you stop.

- ^

For most of the previously unreported information that I share in this post, I do not directly cite or disclose sources in order to protect them. In some cases, I’ve reviewed supporting documents. I’m confident in the sources’ familiarity with the information. For some non-public information, I’ve not been able to add it to this post because it could identify a source that would have access to it, or because I have not been able to verify it with multiple independent sources.

- ^

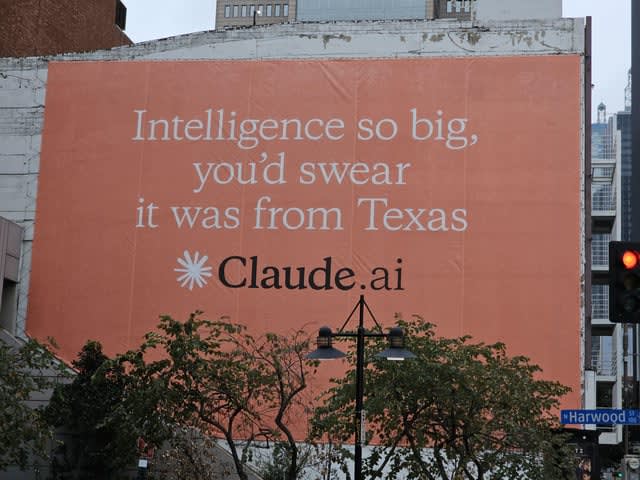

In a contrast with Anthropic’s billboards.

- ^

Anthropic claimed that Claude 3 Opus has >GPT-4 performance.

Opus, our most intelligent model, outperforms its peers on most of the common evaluation benchmarks for AI systems, including undergraduate level expert knowledge (MMLU), graduate level expert reasoning (GPQA), basic mathematics (GSM8K), and more. It exhibits near-human levels of comprehension and fluency on complex tasks, leading the frontier of general intelligence.

It also explicitly said that with the release, they “push the boundaries of AI capabilities”.

See related discussion.

New releases that, according to Anthropic, advanced the frontier: Claude 3.5 Sonnet, Claude 3.7 Sonnet and Claude Code, Claude 4, Claude Sonnet 4.5, Claude Opus 4.5.

Other releases, such as of MCP, appear also potentially concerning and contributing to a push of capabilities.

- ^

To the best of my understanding, Dustin and Nishad took Dario’s words as a promise/commitment, but it is currently unclear to me whether Gwern took it as a commitment as well. I encourage you to look through the original thread (or chat to Gwern) to form an opinion.

- ^

There could be reasons to keep the plan non-public, but then they should, at the very least, explicitly say something like, “we’ve thought about this in detail and have a plan which we have reasons to keep secret”.

If Anthropic answered any of these questions somewhere publicly, please point this out, and I’ll update the post.

- ^

Arguably, investors’ power beyond the formal agreements with OpenAI (as well as internal incentives) contributed to OpenAI’s board losing when they attempted to fire Sam Altman.

- ^

Though in the case of OpenAI there was also a threat of voiding vested equity.

- ^

The safety and security chapter of the The General-Purpose AI Code of Practice.

- ^

Safety and Security Protocols (SSPs). SB-1047 would’ve required frontier AI companies to create, implement, annually review, and comply with those: something that Anthropic is supposed to already do with their RSP.

- ^

One source shared that they still employ communication tactics in the direction of doing stuff behind the scenes that one wouldn’t know about and working in mysterious ways, while not having friends in the current administration.

- ^

While not formally triggering the ASL-3 standard as defined at the time, Anthropic had not ruled out that it was an ASL-3 model. The commitment was to have ASL-N+1 defined by the time you’re working with ASL-N models; not having done evaluations to determine if you’re working with an ASL-N model is not a Get Out of Jail Free card for failing to uphold your prior commitments.

- ^

As a specific example, a public discussion with Zac Hatfield-Dodds at LessOnline.

- ^

The context is incenting third-party discovery and reporting of issues and vulnerabilities: “Companies making this commitment recognize that AI systems may continue to have weaknesses and vulnerabilities even after robust red-teaming.”

Even this single thing alone seems basically unforgiveable.

I’d score these subclaims as complicated, false, and false—complicated because I think Anthropic’s proposals were to move from a strong but non-viable bill towards a weaker but viable approach, which was vetoed anyway.

(I appreciate you commenting; and I’m probably not going to try to evaluate your claims, because I’m not trying to be an expert in this stuff; but

I would suggest linking to an explanation of this;

I think it would be valuable for someone to evaluate this in more detail.

E.g. I’m pretty skeptical for lay-reasons like “Really? If one of the 3ish major companies strongly supported the bill, that wouldn’t much increase its chances of getting passed?” and “Really? But couldn’t they have still said the bill was best as-is?” and “Really? How are you / how was Anthropic leadership so confident the bill wasn’t viable?” and “Really? We’re still doing this ‘play it cool’ strategy instead of just saying what we think would be good to do?” and “Wow, one of the top companies somehow figured out a way to rationalize not supporting regulating themselves even though they nobly said they would support that and they are such amazing homies, what an incredible surprise”. But I could imagine lacking some context that makes the decision seem better.)

Yes, politics at this level is definitely an area where you need both non-layman expertise, and a lot of specific context.

Is there a short summary that you’d be willing to undersign, regarding the intentions / efforts of Anthropic leadership around regulation? E.g.

Or something like that.

Some unstructured thoughts:

I think it’s sort of a type error to refer to Anthropic as something that one could trust or not. Anthropic is a company which has a bunch of executives, employees, board members, LTBT members, external contractors, investors, etc, all of whom have influence over different things the company does.

I think the main case where people are tempted to use the word “trust” in connection with Anthropic is when they are trying to decide how good it is to make Anthropic generically more powerful, e.g. by working there on AI capabilities.

I do think that many people (including most Anthropic staff) are well described as trusting Anthropic too much. For example, some people are trustworthy in the sense that things they say make it pretty easy to guess what they’re going to do in the future in a wide variety of situations that might come up; I definitely don’t think that this is the case for Anthropic. This is partially because it’s generally hard to take companies literally when they say things, and partially because Anthropic leadership aren’t as into being truthful as, for example, rationalists are. I think that many Anthropic staff take Anthropic leadership at its word to an extent that degrades their understanding of AI-risk-relevant questions.

But is that bad? It’s complicated by the fact that it’s quite challenging to have enough context on the AI risk situation that you can actually second-guess Anthropic leadership in a way that overall makes the situation better. Most AI-safety-concerned people who work at Anthropic spend most of their time trying to do their job instead of thinking a lot about e.g. what should happen on state legislation; I think it would take a lot of time for them to get confident enough that Anthropic was behaving badly that it would add value for them to try to pressure Anthropic (except by somehow delegating this judgement call to someone who is less COI-ed and who can amortize this work).

I think that in some cases in the past, Anthropic leadership did things that safety-concerned staff wouldn’t have liked, and where Anthropic leadership looks like they made the right call in hindsight. For example, I think AI safety people often have sort of arbitrary strong takes about things that would be very bad to do, and it’s IMO sometimes been good that Anthropic leadership hasn’t been very pressured by their staff.

On the general topic of whether it’s good for Anthropic to be powerful, I think that it’s also a big problem that Anthropic leadership is way less worried than I am about AIs being egregiously misaligned; I think it’s plausible that in the future they’ll take actions that I think are very bad for AI risk. (For example, I think that in the face of ambiguous evidence about AI misalignment that I think we’re likely to get, they are much more likely than I would be to proceed with building more powerful models.) This has nothing to do with whether they’re honest.

I also recommend Holden Karnofsky’s notes on trusting AI companies, summarized here.

Note that while the title refers to “Anthropic”, the post very clearly discusses Anthropic’s leadership, in general and in specific, and discusses Anthropic staff separately.

I kinda agree that it’s kinda a type error—but also you have a moral obligation not to be eaten by the sort of process that would eat people, such as “pretend to be appropriately concerned with X-risk in order to get social approval from EA / X-deriskers, including funding and talent, and also act against those interests”.

Could you give a more specific example, that’s among the strongest such examples?

I don’t get this. You said that you yourself think Anthropic leadership is noticeably less honest (than people around here), and less concerned about alignment difficult than you are. Given that, and also given that they clearly have very strong incentives to act against X-derisking interests, and given that their actions seem against X-derisking interests, and (AFAIK?) they haven’t credibly defended those actions (e.g. re/ SB 1047) in terms of X-derisking, what else could one be waiting to see before judging Anthropic leadership on the dimension of aiming for X-derisking and/or accurately representing their X-derisking stance?

I think this is specifically talking about investments from gulf states (which imo means it’s not “directly contradicted” by the amazon thing). If that’s true, I’d suggest making that more clear.

Thanks! I meant to say that the idea that Anthropic would hold firm in the face of pressure from investors is directly contradicted by the amazon thing. Made the edit.

I just want to chime in here as someone who just posted an article, today, that covers interpretability research, primarily by academic researchers, but with Anthropic researchers also playing a key contributor to the story. (I had no idea these posts would come out on the same day.)

I just want to say that I very much appreciate and endorse this kind of post, and I think Anthropic employees should too; and I’m guessing that many of them do. It may be a trite cliche, but it’s simply true; with great power comes great responsibility, and there are a lot of reasons to question what the company Anthropic (and other large AI companies) are doing.

As a science journalist, I also have to say that I especially endorse questioning people who would describe themselves as journalists—including myself—on their roles in such matters. The whole point of labelling yourself as a journalist is to try to clarify the principled nature of your work, and it is very unclear to me how anyone can sustain those principles in certain contexts, like working at Anthropic.

That said, generally speaking, I also want to note something of my personal views, which is that I see ethics as being extremely complicated; it’s just simply true that we humans live in a space of actions that is often deeply ethically flawed and contradictory. And I believe we need to make space for these contradictions (within reason … which we should all be trying to figure out, together), and there’s really no other way of going through things. But I think fair efforts to hold people and organizations accountable should almost universally tend to be welcomed and encouraged, not discouraged.

I am so very tired of these threads, but I’ll chime in at least for this comment. Here’s last time, for reference.

I continue to think that working at Anthropic—even in non-safety roles, I’m currently on the Alignment team but have worked on others too—is a great way to contribute to AI safety. Most people I talk to agree that they think the situation would be worse if Anthropic had not been founded or didn’t exist, including MIRI employees.

I’m not interested in litigating an is-ought gap about whether “we” (human civilization?) “should” be facing such high risks from AI; obviously we’re not in such an ideal world, and so discussions from that implicit starting point are imo useless.

I have a lot of non-public information too, which points in very different directions to the citations here. Several are from people who I know to have lied about Anthropic in the past; and many more are adversarially construed. For some I agree on an underlying fact and strongly disagree with the framing and implication.

I continue to have written redlines which would cause me to quit in protest.

My post is about Anthropic being untrustworthy. If that was not the case, if Anthropic clearly and publicly was making the case for doing their work with full understanding of the consequences, if the leadership did not communicate contradictory positions to different people and was instead being honest and high-integrity, I could imagine a case being made for working at Anthropic on capabilities, to have a company that stays at the frontier and is able to get and publish evidence, and use its resources to slow down everyone on the planet.

But we, instead, live in a world where multiple people showed me misleading personal messages from Jack Clark.

One should be careful not to galaxy-brain themselves into thinking that it’s fine for people to be low-integrity.

I don’t think the assumptions that you think I’m making are feeding into most of the post.

If you think I got any of the facts wrong, please do correct me on them. (You can reach out in private, and share information with me in private, and I will not share it further without permission.)

I appreciate you having done this.

Despite working at a competitor, I am happy Anthropic exists. I worry about centralization of control- I want OpenAI to be successful but I don’t want it to be a monopoly. Competition can create incentives to cut corners, but it also enables a variety of ideas and approaches as well as collaboration when it comes to safety. (And there have been some such cross industry collaborations.) In particular I appreciate some of the great research on AI safety that has come from Anthropic.

No company is perfect, we all make mistakes, which we should be criticized for. But I think many of the critiques (of both OpenAI and Anthropic) are based on unrealistic and naive world views.

I suspect the real disagreement between you and Anthropic-blamers like me is downstream of a P(Doom) disagreement (where yours is pretty low and others’ is high), since I’ve seen this is often the case with various cases of smart people disagreeing.

Realistically/pragmatically balanced moves in a lowish-P(Doom) world are unacceptable in a high-P(Doom) world.